The fall of the theorem economy (63 minute read)

A former mathematician warns that AI's ability to prove theorems is being confused with solving mathematics, when the discipline's real value is building human understanding and conceptual frameworks.

Deep dive

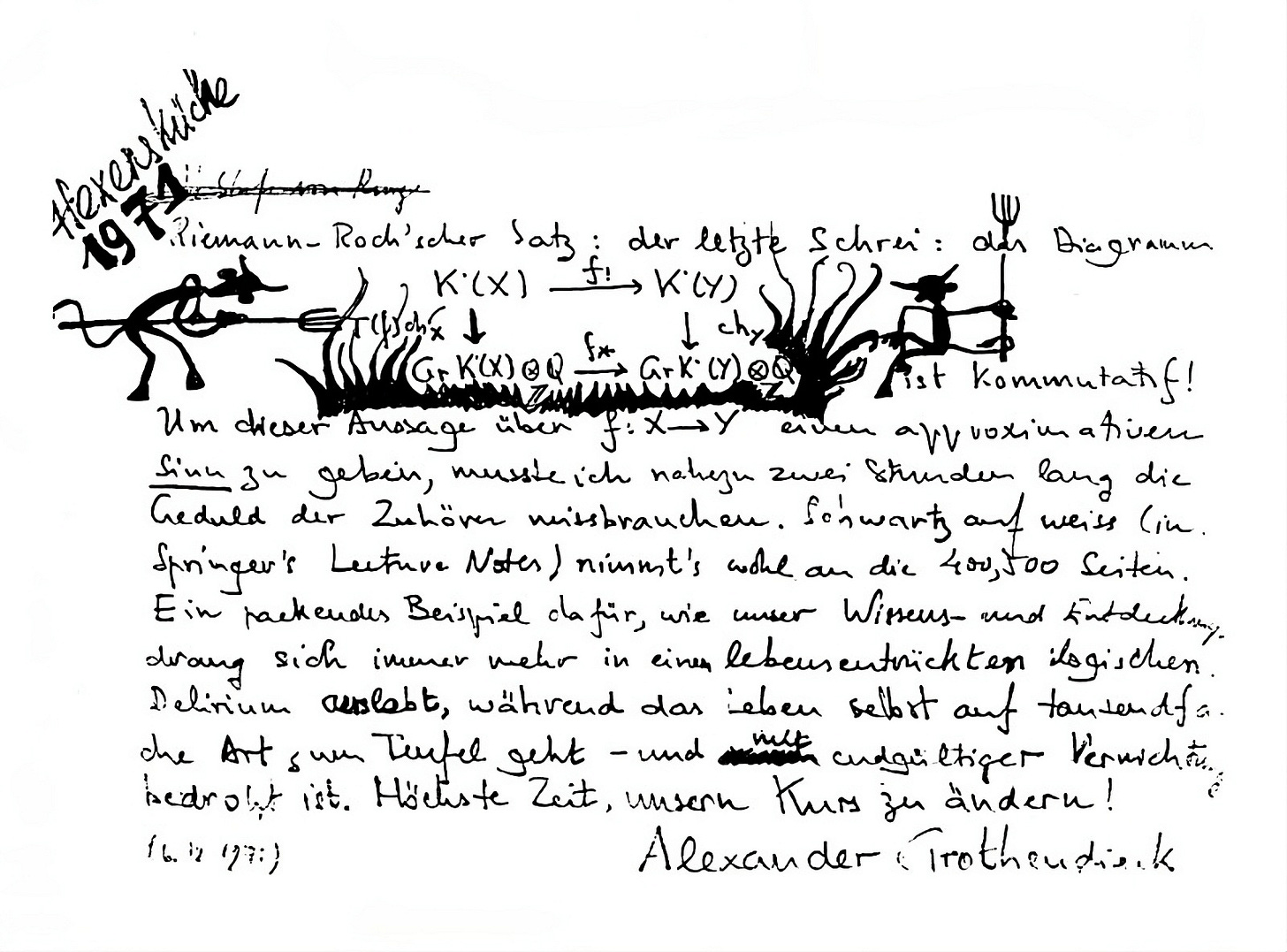

- Former mathematician David Bessis argues that AI's ability to prove theorems is exposing a fundamental misunderstanding about what mathematics really is

- Mathematics has operated under an honor code where only theorem-proving counts for career advancement, while the harder work of concept-building and creating understanding is officially worthless

- This created a system that worked for millennia: you proved theorems to demonstrate you'd built genuine conceptual innovations, making theorem-proving a cryptographic proof of deeper value

- AI is now exploiting a structural vulnerability by proving theorems without building intelligible conceptual frameworks, breaking the symbiotic relationship

- The First Proof project released 10 research-level math problems and AI systems solved 6-8, but with three critical caveats

- Caveat #1 (Oceans): The problems were technical lemmas rather than serious breakthroughs, with oceans between lemmas, papers, breakthroughs, and Fields medal work

- Caveat #2 (Accretiveness): AI solutions were often correct but unintelligible, lacking the conceptual clarity needed to be useful to future mathematics

- The Math Inc controversy: they autoformalized Viazovska's Fields medal work as a 200,000-line Lean proof, but it's an orphaned blob with no intelligible interface, creating radioactive wasteland for future formalization efforts

- Caveat #3 (The Overhang): Mathematics contains enormous unrealized value from connecting existing dots in the literature that AI's pattern-matching could harvest without true creativity

- Bessis argues Geoff Hinton's comparison of mathematics to Go and Chess is fundamentally wrong since those are finite games with optimal strategies while mathematics is about open-ended human understanding

- He warns of a nuclear scenario where AI proves something like the Riemann hypothesis with a 2-million-line unintelligible proof, leading to headlines that AI has solved math

- The impact extends to teaching: students are using AI to do homework, graduating with no real skills because the neuroplastic elevation from struggling with problems is lost

- Bessis proposes a Mathematical Intelligence Scale (like autonomous driving levels) to properly contextualize AI achievements and avoid misleading benchmarks

- He predicts mathematics will transform but survive, becoming unthinkable to do without AI assistance just like it became unthinkable without set theory and LaTeX

- Possible futures: clearer separation of pure vs applied math based on intelligibility vs applicability trade-offs; rise of intuition-maxxers who use AI to survey mathematical continents at unprecedented pace; renewed focus on philosophy and neuroscience of mathematics

- The core argument: The product of mathematics is clarity and understanding, not theorems by themselves (Bill Thurston) - but this message needs to become central to how mathematics is understood

Decoder

- First Proof: A project by 11 mathematicians including Fields medalist Martin Hairer that released 10 research-level math problems to benchmark AI's autonomous theorem-proving capabilities

- Autoformalization: Converting informal or semi-formal mathematical proofs into machine-verifiable formal logic, typically using proof assistants like Lean

- Lean/Mathlib: Lean is a proof assistant language for writing formally verified mathematics; Mathlib is its human-curated library of formalized results

- Canonization: The process of taking a local mathematical formalization and making it general, reusable, coherent, and compatible with the broader mathematical corpus

- The Overhang: Unrealized connections and latent value in existing mathematical literature - results that could be obtained by connecting dots between existing work

- Fields Medal: Mathematics' highest honor, awarded every four years to mathematicians under 40 for outstanding contributions

- Platonism vs Formalism vs Conceptualism: Three philosophical views - that mathematical objects exist in an ideal realm, that math is just symbol manipulation, or that math is cognitive infrastructure humans create

- Mathslop: The author's term for a hypothetical layer of formally correct but unintelligible mathematical proofs that no human has understood

- Honor code of mathematicians: The unwritten rule that only theorem-proving counts for career advancement while definitions, exposition, and concept-building are worth zero points

Original article

The fall of the theorem economy

How AI could destroy mathematics and barely touch it

"The product of mathematics is clarity and understanding. Not theorems, by themselves."

—Bill Thurston

My best theorem is one I never wrote down.

It crystallized one bright morning in Lausanne, Switzerland, as I was preparing for my last invited conference talk. The proof felt so obvious—and the result so compelling—that I made the reckless move of editing my slides at the last minute. Time was running out and I could only include the announcement as an informal remark at the bottom of the last slide, instead of stating it as a proper theorem.1

I had already quit academia and founded a machine-learning startup. I knew I would be too busy to write a clean proof and publish it. That was my excuse for being sloppy. I just wrote the remark and abandoned the slide deck as a message in a bottle.

My hope was that some bright young mathematician would pick it up someday and formalize the result as part of a broader theory. If I lucked out with the intrinsic randomness of attribution, I thought, it might even be remembered as the Bessis cellular decomposition theorem.

But that was stupid. By claiming the result, I had killed the incentive for anyone to write it up.

If I had to pick my second best result, it would be Theorem 0.5 in my old preprint on Garside categories. I had high ambitions for this paper, yet I ended up not submitting it anywhere. The creative process had drained me, and I left active research before regaining the courage to clean up the preliminary sections.

For a second best, this theorem is shockingly easy to prove. Once you get the preliminaries right, it only takes a few pages of pretty terrestrial group theory.

As for the preliminaries, they are even easier. All you have to do is plagiarize a dozen or so classical papers in an arcane subfield called Garside theory, replacing the original axiom set with a slightly more general one. If you understand what you're supposed to do, it is almost impossible to run into serious difficulties—it's just a giant conceptual find-and-replace bulk edit. But you have to take my word for it, because I balked at producing the hundreds of pages of necessary details.

If you think that the hard part of a mathematician's job is to prove theorems, let this serve as a counterexample—from the moment I conceived of Theorem 0.5, I knew it was true and that proving it would be straightforward.

What was the hard part, then?

Conjecturing the exact statement and writing it down?

Not even. In this example, this part was equally straightforward.

The hard part was to intuit that there should be "something like Theorem 0.5", and to come up with a conceptual framework where it became easy to express. Once I got the definitions right, the rest followed more or less organically.

Research mathematics isn't always like that, but there are miracle days where you just put your skis on and the next thing you know is that you're accelerating downhill.

Jean-Pierre Serre famously said that writing his revolutionary paper on coherent sheaves didn't require any thinking. Everything fell so naturally into place that his typewriter generated the 100 pages entirely by itself, as if the article had pre-existed.

But I wasn't Jean-Pierre Serre and he wouldn't lend me his typewriter. This is why my brightest mathematical idea never made it to publication.

Do I feel sad about it? Not really. My preprint remains freely available on the arXiv and has already been cited dozens of times, including by some fancy papers. The real innovation wasn't Theorem 0.5, but the language that made it possible, especially Definitions 2.4 and 9.3—and this language found its way to a 700-page book on Garside theory that filled out much of the missing preliminaries.

To be honest, I also had a selfish reason for sacrificing my most innovative preprint. It enabled me to focus on the more tedious preprint where I used Definition 9.3 as a magic ingredient in the resolution of a classical problem in my domain, the 𝐾(𝜋,1) conjecture for finite complex reflection groups, which permanently elevated my symbolic status as a mathematician.

But, in truth, the David who solved the 𝐾(𝜋,1) conjecture is a social parasite of the much better mathematician, the David who crafted Definitions 2.4 and 9.3.

Cracking the honor code

In the past few months, as I was grappling with the rapidly changing situation around AI and mathematics, I found myself more troubled than I ever expected to be.

In theory, I should feel vindicated and happy. In practice, I am also puzzled, worried, and sad.

The happy part of me sees a genuine revolution and gets excited. The vindicated part has legitimate claims to have prepared for it scientifically and epistemologically. The puzzled part is stunned by the timeline and accompanying frenzy. The sad part feels nostalgic for a lifestyle and value system that it engaged with and walked away from, and which might soon disappear.

The worried part holds the synthesis. I always knew that the general public had a flawed perception of mathematics, but never expected this to become an existential threat for the discipline itself.

In my book Mathematica: a Secret World of Intuition and Curiosity, I framed the misunderstanding as the tension between two versions of mathematics, official math and secret math.

Official math manifests itself as a formal deduction system where you start from axioms and mechanically derive theorems. This is a nerd's paradise, a world where truth takes binary values, reasoning is either valid or invalid, and there is technically no room for bullshit.

Secret math is the human part of the story—why official math was invented, how we can successfully interact with it, its effects on our brains, and the bizarre mental techniques through which mathematicians continuously expand its territory.

Secret math never made it to the curriculum, because it lacks the defining qualities of official math, and also because it feels peripheral. Official math is cold, hard, logical, objective, and it is rumored to be the language of the universe. Secret math is soft, fuzzy, subjective and, by contrast, it looks like cheap pedagogical backstory.

No wonder professional mathematicians have such a dissociative view of their job.

The first rule of the Intuition Club is: you don't talk about the Intuition Club. The second rule is, if you really want to talk about intuition, make it sound casual and accessory, because we ain't the psychology department. The third rule is definitions are worth zero points, expository work counts negative, and the best jobs should always go to the people who proved the hardest theorems.

If you think I'm exaggerating, here is what G. H. Hardy wrote in his celebrated (yet insufferable) mathematical autobiography:

There is no scorn more profound, or on the whole more justifiable, than that of the men who make for the men who explain. Exposition, criticism, appreciation, is work for second-rate minds.

This is peak dissociation. Behind closed doors, mathematicians are quick to complain about Hardy's curse. They insist on the importance of teaching, even for their own comprehension of the subject matter. They lament the system's pathological obsession with theorem-proving priority, while everyone knows the hard work often takes place outside of that loop, when trying to make sense of existing results. Yet, in public, they are bound by the honor code of mathematicians. Prove theorems and shut up!

There is one exception, though. Once you get the Fields medal, you are free to say whatever you want.

Bill Thurston, the 1982 Fields medallist, was a spectacular dissenter. Two years before his death, he took part in an extraordinary exchange on MathOverflow, in response to this question posted by an insecure undergrad:

What can one (such as myself) contribute to mathematics?

I find that mathematics is made by people like Gauss and Euler—while it may be possible to learn their work and understand it, nothing new is created by doing this. One can rewrite their books in modern language and notation or guide others to learn it too but I never believed this was the significant part of a mathematician work; which would be the creation of original mathematics. It seems entirely plausible that, with all the tremendously clever people working so hard on mathematics, there is nothing left for someone such as myself… Perhaps my value would be to act more like cannon fodder? Since just sending in enough men in will surely break through some barrier.

Thurston jumped in:

It's not mathematics that you need to contribute to. It's deeper than that: how might you contribute to humanity, and even deeper, to the well-being of the world, by pursuing mathematics? Such a question is not possible to answer in a purely intellectual way, because the effects of our actions go far beyond our understanding. We are deeply social and deeply instinctual animals, so much that our well-being depends on many things we do that are hard to explain in an intellectual way. That is why you do well to follow your heart and your passion. Bare reason is likely to lead you astray.2 None of us are smart and wise enough to figure it out intellectually.

The product of mathematics is clarity and understanding. Not theorems, by themselves. Is there, for example any real reason that even such famous results as Fermat's Last Theorem, or the Poincaré conjecture, really matter? Their real importance is not in their specific statements, but their role in challenging our understanding, presenting challenges that led to mathematical developments that increased our understanding…

Mathematics only exists in a living community of mathematicians that spreads understanding and breathes life into ideas both old and new. The real satisfaction from mathematics is in learning from others and sharing with others. All of us have clear understanding of a few things and murky concepts of many more. There is no way to run out of ideas in need of clarification…

Here we need to take a short metaphysical break, because it is all too easy to brush Thurston's words off as "feel-good" or "woke".

In my first Substack post, I (half-jokingly) declared that we had been wrong about mathematics for 2300 years, stuck in a false dilemma between formalism ("mathematics is a meaningless game of formal symbols") and Platonism ("mathematics captures properties of actual entities living in the perfect world of ideas").

My proposed conceptualist resolution is a rephrasing of Thurston's view: mathematics does rely on a meaningless game of formal symbols, but we only play this game because we project meaning onto it.

Meaning is a cognitive phenomenon—a product of our neural architecture—and not a direct access to transcendence.

When we "do math", we manipulate formal expressions and gradually develop an intuitive feel for what they represent, as if they were pointers to objects that "existed" in a Platonic sense. Platonists take this neuroplastic side-effect at face value. Formalists view it as accessory. Conceptualists like me recognize mathematics as a critical cognitive infrastructure of the human species.

A natural question is why the conceptualist resolution took so long to emerge. One reason is that it goes against the prevailing spiritualist worldview, which refuses physicalist interpretations of mathematics.

It also goes against the honor code of mathematicians. Hardy's curse is so powerful that even Thurston found it hard to overcome. When multiple MathOverflow users thanked him for his take, he noted in reply:

Thanks for the comments. I try to write what seems real. By now, I have no cause to fear how I will be judged, which makes it much easier for me. It's gratifying when my reality means something to others.

But then, how could such a toxic honor code survive for so long?

The answer is simple. The honor code was useful to mathematics as an academic discipline. It helped it stay exceptionally healthy and meritocratic, as noted in the epilogue of my book:

This system has its merits. It reduces arbitrariness and helps mathematicians guard against complacency and nepotism. When a discipline deals with eternal truths, it offers a neat way to evaluate careers.

The honor code also served as a guide to researchers themselves, when evaluating new ideas and new directions of research. Concept-building and problem-solving, the two facets of mathematics, are in a symbiotic relationship, as remarked by 2018 Fields medallist Peter Scholze:

What I care most about are definitions. For one thing, humans describe mathematics through language, and, as always, we need sharp words in order to articulate our ideas clearly… Unfortunately, it is impossible to find the right definitions by pure thought; one needs to detect the correct problems where progress will require the isolation of a new key concept.3

This is how the system worked for millennia. Mathematicians created value by introducing new concepts, but the rule was that only theorems could put bread on the table. The deal was fine because the two aspects almost always walked hand in hand. David, the social parasite who claimed credit for the 𝐾(𝜋,1) conjecture, was the same person as the David who crafted Definitions 2.4 and 9.3.

Solving a big conjecture was a cryptographic proof that you had come up with a genuine conceptual innovation.

I am using the past tense because this is no longer the case. There is a structural vulnerability in the honor code of mathematicians and AI has started exploiting it in a systematic manner.

The way of Go and Chess

The trigger for this post was a speech by Geoff Hinton, which caught me off guard:

I agree with Demis Hassabis, the leader of DeepMind, who for many years has said AI is going to be very important for making scientific progress…

There's one area in which that's particularly easy, which is mathematics, because mathematics is a closed system…

I think AI will get much better at mathematics than people, maybe in the next 10 years or so. And within mathematics, it's much like things like Go or Chess that are closed systems with rules...

I was used to the general public being profoundly wrong about the nature of mathematics. But I wasn't prepared for a Turing awardee and Nobel prize winner comparing it with Go and Chess.

I wrote a short response on X and tried to move on, but it kept troubling me.

Then it all clicked into place. About a year ago, I had been approached by a young mathematician friend who had done his PhD in my domain. He was thinking about launching an "AI for pure math" business and I mentored him for a while.

Like him—and like Hinton and Hassabis—I was fully convinced that AI was about to transform mathematics and science in general. But I was unsure about the business model and minimum viable product.

Mathematicians may look like Luddites, but they rarely are. They love pen and paper, blackboard and chalk, but they jumped on Donald Knuth's typesetting revolution. A century ago, they chose to rebuild their entire knowledge stack on a new operating system, set theory, that promised massive gains in reliability and scalability. A few decades later, they recognized that there was no real difference between a mathematical proof and a calculation, and set out to build the first computers. Deep learning, with its heavy use of linear algebra and stochastic gradient descent, is a brainchild of mathematics.

In the 1970s, when Kenneth Appel and Wolfgang Haken built a computer-assisted proof of the four-color theorem, this opened an intense debate on the epistemic nature of such proofs and their admissibility in peer-reviewed journals. Although, to be honest, there never was much suspense—the barbarians won, because there were barbarians on both sides.

Computers had always been part of my mathematical life4 and the promise of AI and autoformalization had long felt irresistible to me. This is what got me excited when my friend reached out and asked for my advice.

I started looking at the "AI for math" space and couldn't understand what was going on. Why were these startups raising so much money? Pure mathematics is such a tiny market. The investments felt disproportionate.

My preferred strategy, the one I would have pursued, was to create the Wolfram Research of the AI age. Mathematics-enabled science and technology is a much larger market than pure mathematics and, as Wolfram demonstrated, there is room for simplifying and productizing the experience of interacting with mathematical objects. The users love the product and it is sticky.

But my friend insisted he wanted to do something specifically about pure math.

I didn't know what to say, because I was stuck. The only useful products I could think of were literature spotters and interactive proof assistants—hard to package, hard to price, and even harder to sell. I could see a long term business strategy, but it was one I wouldn't touch with a ten-foot pole—becoming the Elsevier of the AI age, the most hated brand in science, an arm-twisting extractive monopoly that repackages the mathematical commons into a mandatory experience.

There was a third strategy, though. But it was risky and, like the previous one, it did require a certain degree of cynicism. I'd call that plan the luxury acquihire: 1) build a useless product that is striking enough, 2) give the impression that you have solved a major scientific problem, 3) pray for a quick M&A by a tech giant or a major AI lab.

Still, the numbers didn't add up. The "AI for math" startups were rumored to be raising hundreds of millions. There must have been a smarter investment thesis, which I was failing to comprehend.5

Then I heard that Google was leading a massive effort to solve the existence and smoothness of Navier–Stokes equations. I thought OK, I get it, that's a Millennium Prize problem. But, wait, that still doesn't make sense—the payout is one million dollars, peanuts. As one great mathematician remarked to me, Google likely mobilized more brainpower on this single effort than the entire community ever did.

It only started to make sense after I heard Hinton's speech.

If mathematics really was a closed system—or if this is what all the stakeholders around the table are willing to believe—then the investment pitch becomes trivial: "DeepMind solved Go and Chess, we're going to solve mathematics!"

At a time when the leading AI labs are betting trillions that humans are soon to become obsolete, the promise of "solving mathematics", the crown jewel, the pride of the human race, is simply irresistible.

Bringing a caliper to a gunfight

On February 5th, a team of eleven high-profile mathematicians (including Martin Hairer, the 2014 Fields medallist) announced the First Proof project and released a first batch of ten "research-level math questions":

This manuscript represents our preliminary efforts to come up with an objective and realistic methodology for assessing the capabilities of AI systems to autonomously solve research-level math questions. After letting these ideas ferment in the community, we hope to be able to produce a more structured benchmark in a few months.

One of our primary goals is to develop a sophisticated understanding of the role that AI tools could play in the workflow of professional mathematicians. While commercial AI systems are undoubtedly already at a level where they are useful tools for mathematicians, it is not yet clear where AI systems stand at solving research level math questions on their own, without an expert in the loop.

From a purely scientific perspective, there is nothing to complain about. These are incredibly smart people, engaging a real-world controversy with an open-minded attitude and a creative approach.

The First Proof team was doing everything right—and this is what terrified me.

But before I explain, I must reiterate that I have a very high opinion of this project. The team represents the mathematical community at its best, people driven by curiosity and integrity, willing to experiment outside of their comfort zone, and they did come up with genuinely good ideas.

Daniel Litt wrote an excellent essay, Mathematics in the library of Babel, on the First Proof project and his own first-hand assessment of the AI-for-math situation. His perspective is that of a radical non-Luddite, a pure mathematician who has engaged with LLMs for years and is genuinely impressed by the recent progress.

He was surprised by how many of the First Proof open problems ended up being solved by the teams at Google, OpenAI, and others. By his own count:

It seems likely that somewhere between 6 and 8 [of the 10] problems were solved correctly if one combines all attempts.

There are serious caveats, though:

The models (and the humans supervising them) generated an enormous amount of garbage, including some incorrect solutions claiming to be formalized in Lean. Even the best models/scaffolds seem not to be able to reliably detect when they are producing nonsense.

Even when AI-for-math systems operate autonomously, which few in the current crop actually do, they still require humans to intervene upstream and downstream, if only to assess the results and filter out the junk. This isn't anecdotal. The AI labs are investing so much human intelligence into these projects, much more than any real-life mathematician can ever mobilize, that the delineation is never entirely clear. The most damning illustration is this:

It was not clear to OpenAI which of their solutions to First Proof are correct.

In other words: without the pro bono effort of the good old academic community, they might have never known. (Litt was himself a contributor.)

In truth, this also applies to human-generated proofs. But there is a fundamental nuance. In the human way of doing math, theorem-proving and concept-building walk hand in hand, which forces proofs to be intelligible (if only to their authors).

This is where the metaphysics comes back to bite. If mathematics was just a formal game of meaningless symbols, intelligibility would be a vacuous notion. The reality of mathematical practice forcefully points in the opposite direction. As it happens, published research is full of bugs, but these bugs tend to be contained and fixable, precisely because human-generated proofs are meaningful and (almost always) directionally correct—two notions that are impossible to reconcile with the formalist worldview.

This isn't a cosmetic nuance. This is a mandatory condition for mathematics to exist as a sustainable endeavor.

As noted by Litt, the essential characteristic of being "truth-seeking" was missing from the AI solutions to the First Proof questions:

Many correct solutions are very poorly written, and their correctness is exceedingly difficult to check because of this... In [human solutions] the main ideas, obstructions to be overcome, etc., are usually identifiable; in [AI solutions] they are often completely unclear. And in the course of writing their solutions, the human authors often develop useful new objects, terminology, etc. to capture what they're doing, while the models usually just plow ahead.

This is where the second leg of the math-for-AI revolution comes into play: autoformalization, the capability to transform the mildly informal style of human-produced proofs—and the outputs of LLMs trained on them—into bulletproof, machine-verifiable logical derivations, expressed in specialized languages such as Lean.

On paper, this can address the issue of correctness and remove the need for human validation. But, as noted by Litt, "there was only one credible formalized solution to any problem, impressively and manually orchestrated by Tom de Groot."

This is of course an evolving situation. The stakes are high and the investment massive. LLMs, scaffolding, and autoformalization, are all making steady progress.

What would happen if, a year from now, the First Proof team released another set of 10 problems of equivalent difficulty? Litt doesn't answer this specific question, but he expects AI to autonomously produce results "at a level comparable to that of the best few papers" within the next few years.

I share his sentiment. In my view, the likely outcome would be that the leading AI labs would score a perfect 10, coming up with fully automated & fully correct solutions to all problems.

Does that mean that AI will have "solved mathematics" by early 2027?

Of course not. There are three additional caveats that I am sure all insiders will have already spotted, but non-specialist readers may have missed.

Caveat #1: Oceans

The first one is straightforward—the First Proof "research-level questions" were neither profound nor difficult. They were closer to technical lemmas, well-calibrated intermediate subproblems that occur in the course of proving a theorem and are typically handled in a few paragraphs or pages. There is an ocean between a technical lemma and a serious paper, another ocean between a serious paper and a breakthrough, another ocean between a breakthrough and a Fields-medal-level contribution, and yet again several oceans above that.

First Proof is now working on a second batch, which is likely to include harder problems.

From a technical perspective, they did pick the right level for their first batch. This only appeared in hindsight: the final score was above 0/10 and below 10/10, in the sweet spot for their stated goal of finding an "objective and realistic methodology for assessing the capabilities of [current] AI systems".

Yet I think that releasing a benchmark that didn't include serious problems was extremely dangerous. The general public doesn't read the fine print, and may not even know what a lemma is. If Google or OpenAI had scored a perfect 10, the headline would have read: "GAME OVER: The world's top mathematicians challenged an AI with 10 research-level problems; the AI nuked each and every one of them."

Similar distortions are being made every single day on social media, feeding the very confusion that First Proof was trying to dispel.

Caveat #2: Accretiveness

The second caveat is much more profound. It is also extremely subtle and hard to communicate to the general public, and this combination is creating a tricky situation for the mathematical community.

The problem with unintelligible proofs goes way beyond correctness, and cannot be resolved by autoformalization alone: even if correct, unintelligible proofs aren't accretive to the mathematical corpus.

I know this sounds barbaric. Let me explain with an example. A few weeks ago, Math Inc, one of the best-funded AI-for-math startups, produced a Lean formalization of Maryna Viazovska's spectacular work on the sphere packing problem in dimensions 8 and 24, results which earned her Fields medal in 2022. That was impressive in its own right: never before had theorems of this caliber been autoformalized. Yet this clear success was met by a massive pushback from the "formal mathematics" community, the very people who lead the effort to port "human mathematics" into machine-verified code.

Luddites?

Well, it's more complicated, as it emerges from their actual conversation. The thing is that autoformalization isn't a full solution to the problem of formalizing mathematics, just like Tesla's Full Self-Driving isn't a full solution to the problem of driving cars.

Yes, I know, that sounds counterintuitive. This is why outsiders are likely to miss the nuance and label the pushback as Luddism.

A clear explanation can be found in Alex Kontorovich's account of his own learning curve with formalized mathematics. In a nutshell: Mathlib, the dominant Lean library, is a human-curated formalization of an ever-growing fraction of existing human mathematics. It exposes clean APIs and abstractions, without which no autoformalization could take place. By contrast, Math Inc's autoformalized proof of Viazovska's results exposes no intelligible interface. Who in their right mind would merge a 200,000-line unaudited vibe-coded blob into the master branch of global human science?

Kontorovich has a great expression for what is missing—canonization:

By canonization, I mean the process of taking a local, one-off formalization and turning it into library mathematics: general, reusable, coherent, efficient, and compatible with the rest… Canonization often changes the picture itself: the definitions, the abstractions, the API, and sometimes even the statement… This is extremely difficult and time-consuming.

But this, again, might be mistaken for Luddism. "General", "reusable", "coherent", "efficient"… Aren't these reactionary arguments made up by dumb humans with narrow context-windows? Why would an AGI care about that? Isn't autoformalization, by design, the definitive solution of all problems with vibe-coding?

Doesn't proven code meet the highest conceivable standards for software? Why are you constantly shifting the goalposts?

The software analogy helps understand the core issue. For normal software, quality is first and foremost a pragmatic notion—good code should compile, run smoothly in production and satisfy user needs. But Lean code never runs anywhere. It is just a library that sits in a repository and might be imported in the future, who knows when, in the process of formalizing another theorem.

You cannot "ship and let the users do the QA for you" because your only user is the future of mathematics. This is why canonization, from Euclid to Zermelo-Fraenkel to Bourbaki, has always been a core concern of the formalist school.6

We're back to the metaphysics. The problem with unintelligible mathematics isn't that it might be false. It is that it is literally meaningless, in the sense that it doesn't compile on the only hardware that is currently able to make sense of it and appreciate its value—the human brain.

This, of course, could change in the not-so-distant future. There is nothing magical about the human brain. Artificial sense-making and truth-seeking architectures will certainly emerge, and at some point they will probably surpass humans on all aspects of mathematical creativity, including the canonization of formal proofs (which, from an algorithmic complexity perspective, is a mandatory requirement for all forms of mathematics, whether human or superhuman).

Yet this is absolutely not our present—nor a straightforward continuation of current trends—and I don't think the conditions are met for a fruitful debate. In any case, if humans are still around, they will still feel the need to understand the world, and this will continue to drive them to engage with mathematics.

No one knows the timeline to AGI. Meanwhile, the autocanonization capabilities of frontier models barely exceed zero and Math Inc's autoformalized proof of Viazovska's theorems sits as an orphaned 200,000-line blob.

This is what made the Mathlib community so angry. They had been working on a multiyear project to formalize Viazovska's work. Math Inc jumped in on this collective effort, leveraged prior insights, then abruptly went silent, until they made their spectacular announcement.

Is this necessarily bad news? Now that the brute-force autoformalization is done, why can't the Mathlib community refocus on the value-creating canonization?

Because of Hardy's curse and the honor code of mathematicians. Math Inc captured the prize ("first formalization of a Fields-medal-level theorem") and there is no social reward left for cleaning up after them. Hence this comment by Patrick Massot, a non-Luddite expert in formalized mathematics:

I think the situation is pretty clear: AI companies, and especially Math Inc, will indeed thoroughly bomb this area to turn it into a giant radioactive wasteland that will never be able to sustain life again, so we will never get the benefits expected from formalization (improved understanding and accessibility). I strongly advise young people to contribute to less shiny projects that are less likely to be destroyed.

What makes the situation really tricky is that unintelligible formal proofs may hold significant residual value, even if they aren't accretive to the canonized corpus.

And, to be honest, the issue existed well before AI, with the four-color theorem, with the classification of finite simple groups, or with Tom Hales's monumental work on the Kepler conjecture (which led him to seek a formalized proof).

The likely outcome is that formalized mathematics will now develop in two separate layers, an intelligible layer embodied by Mathlib, and an unintelligible layer we might call Mathslop, a library of results that are known to be correct via proofs that no human has ever understood.

Caveat #3: The Overhang

When the First Proof problems were released, my greatest fear was that the big AI labs were going to one-shot every single one of them, for the wrong reasons—because the answers might have been available somewhere in one shape or another.

Litt notes that 2 of the 10 problems had solutions7 in the existing literature (which, no surprise, most LLMs were able to leverage), while a "sketch of proof" was available for a third problem (which, nevertheless, no LLM was able to solve).

But what about the remaining 7 problems? Were they really open? In what sense?

My view is that it is impossible to say for sure, due to a structural feature of the mathematical corpus which, no doubt, is going to play a central role in the AI-for-math debate. In fact, I suspect that our legacy notions of "creativity" and "innovation" are ill-founded, and that AI is about to teach us brutal lessons about them.

Most mathematicians are intuitively aware of this structural feature, although its exact shape and size are impossible to chart. I haven't seen any serious attempt to theorize it and the feature has no agreed-upon name—let me call it the Overhang.

I expect the Overhang to be absolutely gigantic.

I met it on several occasions in my career. Most encounters were fairly casual, except for one mystifying experience that took place as I was doing my best work, right after I came up with the core idea in my Definition 9.3. That was an entirely new concept, built on a stack of new ideas that I had just come up with, unlocking the solution to a classical conjecture—subjectively, that felt like a stroke of genius.

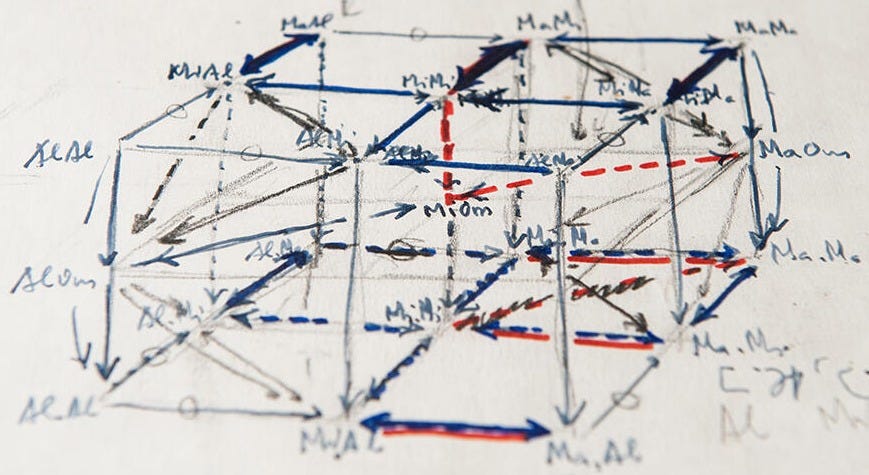

As I was "canonizing" my most creative idea ever, I made a shocking discovery. My proud invention, divided Garside categories, was essentially equivalent to a seemingly esoteric construction in an entirely different branch of mathematics, namely the Bökstedt-Hsiang-Madsen subdivision of Connes's cyclic category in algebraic K-theory.

In other words, my one genius idea was déjà vu. Or was it? Two decades later, I continue to view this moment as the high point of my career. I don't really care if my idea was or wasn't "original", as I doubt this is the most salient notion. What struck me was the sudden flash of meaning, which was otherworldly. (A clear cognitive sign of long-term value.)

In any case, a good LLM might have spotted the syntactic analogy in the preliminary phases of my work, when I still had no clear idea where it was leading. Then it could have scooped me by "front running."

The existence of profound correspondences between seemingly unrelated topics is one of the most celebrated aspects of mathematics, a source of joy and marvel. It might be the purest expression of mathematical beauty. Descartes changed the world by noticing that algebra and geometry were essentially the same, and by building a bridge between them. Once you "invent" cartesian coordinates, many legacy problems become trivial—and interesting new problems instantly emerge.

But modern mathematics is so fabulously complex that most correspondences go unnoticed.

Sometimes connecting the dots leads to initial mystery rather than discovery. A striking example is John McKay's famous remark that 196 883 + 1 = 196 884. The number 196 883 appeared in the study of the Monster, while the number 196 884 appeared in the study of modular forms, two areas of mathematics that were mutually alien. The conjectural vision that they should be related seemed so ludicrous that it was labelled the Moonshine theory, or the Monstrous Moonshine. Richard Borcherds received the 1998 Fields medal for proving it correct.

But sometimes connecting the dots is enough to solve a major problem. The last mile of proving a conjecture is often about realizing that the missing bit was already present somewhere in the literature.

The Overhang consists of the unrealized capital gains of past mathematical creativity, the latent value from connecting the dots in the existing corpus. It is a dividend of canonization. Mathematician X states problem A, mathematician Y crafts concept B, then mathematician Z notices that B trivially solves A and "captures" the social reward. But in the process of capturing the reward, Z usually introduces new concepts and new open problems, reinjecting latent value into the Overhang.

LLMs can be trained on the entirety of the mathematical corpus. Thanks to their phenomenal memorization and pattern-matching abilities (without always being able to map out their associative logic and attribute due credits), they are in a unique position to harvest the Overhang. By contrast, professional mathematicians have typically read a few hundred articles in their career, out of millions of existing references, less than 0.1% of the total.

This will lead to great discoveries, which is unambiguously exciting. But it could also lead to a sad new deal, where human slaves painfully curate the Overhang while AIs systematically beat them at the finish line.

We are very far from it, though, which in and of itself is disorienting. Litt adds this sharp remark:

The mystery is this: a human with these capabilities would, almost certainly, be proving amazing theorems constantly. Why haven't we seen this from the models yet? What are they missing?

The answer seems quite obvious—current AI systems and humans process mathematics in entirely different ways. The best models are insanely stronger on certain aspects, which necessarily implies that humans are still insanely stronger on others.

AI is at the same time superhuman and subhuman, depending on how you look at it.

This is a compliment to both AIs and humans. This plurality of aspects makes the whole "benchmarking" enterprise extremely fragile, if not ludicrous. There is a real possibility that AI will achieve problem-solving supremacy long before it achieves concept-building adequacy. What are we going to call that? "Superintelligence"? Seriously?

This is my core issue with the First Proof approach. It is a meaningful benchmark for working mathematicians wondering what AI can do for them in the present, from within the constraints of an obsolete honor code, at a moment when they urgently need to break away from it.

Why don't we construct benchmarks that are fairer to humans? Because we can't. Because the true value of mathematics, the collective and individual elevation of our worldviews, is ill-defined and intangible. This intangibility was the raison d'être of the honor code.

For millennia, we had agreed to only benchmark human intelligence on its problem-solving facet, as we had found that it was the best objective proxy. Theorem proving is so inordinately difficult for our cognition that the only progress path was through patient concept building and neuroplastic internalization of these new concepts.

Yet this was only ever a proxy. The thing we really care about is different in kind. Industrial robots are far stronger than humans, yet we still go to the gym. Blenders have been outchewing us for over a century, yet we still don't eat exclusively through straws.

I know the optics are horrible. Declaring that math is first and foremost about comprehension, an unbenchmarkable aspect, sounds like an all-too-convenient excuse. Yet this isn't something I am pulling out of my hat at the last minute. Here is what Thurston wrote in 2011:

Mathematics is commonly thought to be the pursuit of universal truths, of patterns that are not anchored to any single fixed context. But on a deeper level the goal of mathematics is to develop enhanced ways for humans to see and think about the world. Mathematics is a transforming journey, and progress in it can be better measured by changes in how we think than by the external truths we discover.

I cited this passage in my book, written five years ago, before the AI storm started. I also cited a 1628 rant by Descartes against the Ancient Greek mathematicians who, "with a kind of pernicious cunning", had "suppressed" the "true mathematics"(which he identified with the inner cognitive methodology) and only published "childish and pointless" stuff, "the fruits of their method, some barren truths proved by clever arguments, instead of teaching us the method itself."8

Terry Tao made a similar comment in his recent interview with Dwarkesh Patel:

In math, the process is often more important than the problem itself. The problem is kind of a proxy for measuring progress.

He also made this remarkable prediction:

I think within a decade, a lot of things that mathematicians currently do—what we spend the bulk of our time doing and a lot of stuff we put in our papers today—can be done by AI. But we will find that that actually wasn't the most important part of what we do.

The feedback on social media was overwhelmingly positive, as is often the case with Tao's public interventions. Yet I did notice some unusual dissent, specifically on the notion that problem solving in itself isn't all that valuable.

To the AGI prophets, this passed as a refusal to see the writing on the wall. There were some actual sneers.