Devoured - May 08, 2026

OpenAI's Codex now integrates with Chrome on macOS and Windows, automating browser tasks by writing code, while Meta is developing the Hatch AI agent for social platforms, and GitHub is optimizing its AI workflows to manage rising costs.

Codex now works directly in Chrome on macOS and Windows

OpenAI's Codex now runs natively in Chrome on macOS and Windows, enabling it to automate repetitive browser tasks across tabs in the background by writing code to navigate complex data flows.

Decoder

- OpenAI Codex: An AI system developed by OpenAI that can generate code in various programming languages, often used for code completion, code generation from natural language, and automating coding tasks.

Original article


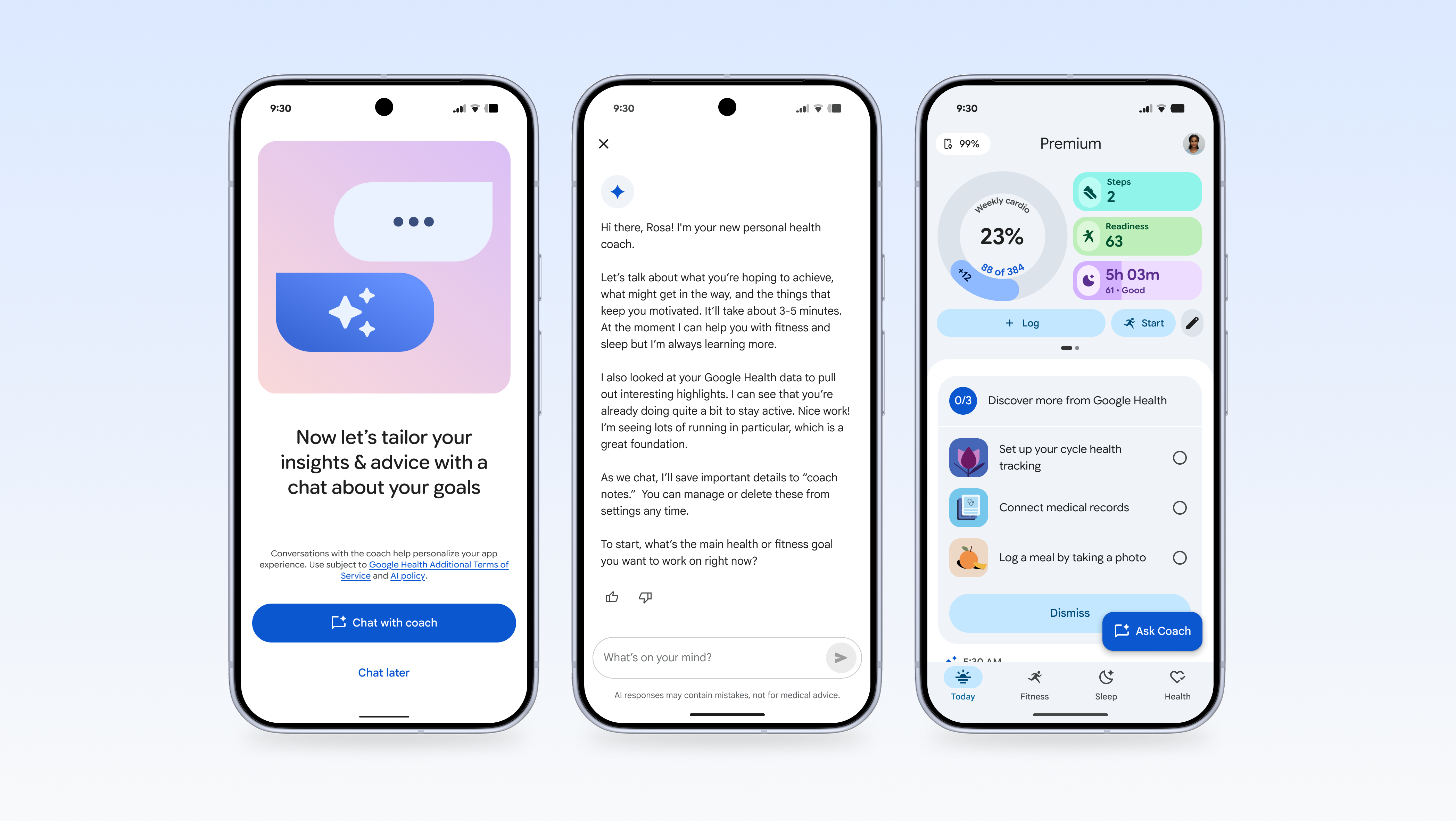

OpenAI Released Realtime Audio Models

OpenAI has launched a new suite of real-time audio models through its API, including GPT-Realtime-2 for conversational reasoning, GPT-Realtime-Translate for live multilingual translation, and GPT-Realtime-Whisper for streaming transcription.

Original article

OpenAI released a new set of real-time audio models, including GPT‑Realtime‑2 for conversational reasoning, GPT‑Realtime‑Translate for live multilingual translation, and GPT‑Realtime‑Whisper for streaming transcription.

Meta prepares Hatch AI Agent with waitlist and social skills

Meta is developing "Hatch," a consumer-grade AI agent designed to compete with OpenAI's OpenClaw, integrating image/video generation, shopping, and learning features deeply into Facebook and Instagram, with internal testing targeted for June.

Decoder

- Agentic AI: AI systems designed to perform complex, multi-step tasks autonomously, often interacting with various tools, environments, and other agents to achieve a defined goal.

Original article

Meta's push into agentic AI is taking sharper shape. Following reports from FT and The Information that the company is building a consumer-grade autonomous agent codenamed Hatch, fresh signals inside Meta's own surfaces confirm that preparation work is already underway in the codebase. The agent appears positioned as Meta's answer to OpenAI's OpenClaw, reframed for a mainstream audience that the current crop of agentic tools has largely shut out.

Traces in the code suggest Hatch will roll out behind a waitlist, meaning early access is likely to be tightly gated at launch. The scope of tasks being prepared is notably wide:

- Image and video generation

- Shopping flows

- Learning sessions and research workloads

- Groundwork for scheduled tasks and file generation

That feature mix overlaps with Microsoft's Copilot Tasks and its Auto, Researcher, and Analyst modes, but Meta's version carries a clear twist. The agent is expected to draw on social grounding, reaching deeper into Instagram and Facebook than any Meta AI surface so far, and potentially turning feed exploration, creator discovery, and shopping research into agent-driven workflows.

The strategic logic lines up with what Mark Zuckerberg outlined on Meta's most recent earnings call, where he framed the company's agent ambitions as systems that work day and night toward user goals. According to The Information, Meta is targeting internal testing of Hatch by the end of June, with mock environments built to resemble Reddit, Etsy, and DoorDash for training in tool use behavior. The Financial Times points to Muse Spark, Meta's new assistant-tier model family, as the eventual backbone, with Anthropic's Claude Opus 4.6 and Sonnet 4.6 reportedly serving as a transitional layer in the meantime.

Hatch also sits alongside a parallel agentic shopping tool being prepared for Instagram, targeted for Q4 2026, that would let users research and check out products without leaving Reels or the feed. Together, they sketch a clear posture: Meta wants its agents to live where billions of users already spend their time, rather than asking them to migrate to a separate chat surface. Whether the Hatch codename survives to launch remains open, but the build cadence suggests it sits closer to release than early reporting alone implies.

Improving token efficiency in GitHub Agentic Workflows

GitHub is actively optimizing token usage in its AI agentic workflows to reduce growing costs, as these automatically scheduled and triggered jobs can accumulate significant expenses out of sight for developers.

Decoder

- Token: In the context of large language models, a token is a fundamental unit of text or code that the model processes. It can be a word, part of a word, or punctuation, and models process text by breaking it down into these tokens. Costs for LLM usage are often calculated per token.

Original article

GitHub Agentic Workflows significantly improve repository hygiene and quality, but costs are becoming a growing concern for developers. AI jobs like agentic workflows are automatically scheduled and triggered, so costs can accumulate out of view. GitHub started systematically optimizing the token usage of many workflows last month. This post describes what the team instrumented, the optimizations it applied, and its preliminary results.

The Six-Hour Codex Run That Survived a Five-Hour Pause

Codex CLI v0.128.0, released April 30, 2026, introduced a headline feature called `/goal` that allows AI-driven development tasks to persist across terminal restarts and laptop sleeps, automatically resuming work without user re-prompting.

Deep dive

- Codex CLI v0.128.0 was released on April 30, 2026, introducing the `/goal` feature.

- `/goal` enables "persisted goals," allowing AI tasks to survive terminal restarts, laptop sleeps, and multi-hour pauses.

- The system uses app-server APIs for state persistence and model tools to manage the goal lifecycle.

- Runtime continuation automatically injects a developer message to prompt the model to continue working after an interruption, without user input.

- TUI controls are provided for creating, pausing, resuming, and clearing goals.

- A real-world test on a TypeScript monorepo showed a 6h 44min wall time, with only ~41 minutes of actual model compute.

- The session processed ~6.8M cumulative input tokens with an impressive ~94% cache hit rate, making the economics viable.

- The `/goal` feature requires clearly defined "done_when" contracts for success criteria, explicit reading lists for the model, and anti-pattern fences in the prompt.

- It is best suited for long-horizon tasks where reasoning accumulates and less for exploratory work or short, interactive tasks.

- The author recommends running with `approval_policy = "never"` and `sandbox_mode = "danger-full-access"` for truly autonomous runs, but only in trusted environments.

- This feature is contrasted with the "Ralph Wiggum Loop," which involves stateless, fresh-context iterations, whereas `/goal` prioritizes continuous context.

- This shift changes the user's role from supervisor to architect, where upfront prompt quality and goal definition are paramount.

Decoder

- Codex CLI: A command-line interface tool that provides access to OpenAI's Codex AI, allowing developers to interact with it from their terminal.

- Persisted goals: A feature where the state and objective of an AI agent's task are saved and maintained, allowing the task to be paused and resumed across different sessions or interruptions without losing context.

- Runtime continuation: The ability of an AI agent to automatically pick up and continue working on a task from where it left off after an interruption. In Codex, this works by injecting a developer message that prompts the model to continue.

- TUI (Terminal User Interface): A text-based user interface that runs within a terminal or console, allowing interaction using text commands and keyboard input rather than a graphical interface.

- Token cache hit rate: The percentage of times an AI model can reuse previously processed tokens or their computations from a cache, rather than reprocessing them, which saves compute resources and cost.

- Ralph Wiggum Loop: A colloquial term (coined by Geoffrey Huntley) for a shell-scripted workflow that repeatedly feeds a prompt and git history to an AI model (e.g., Claude), designed to allow an agent to iterate on a task by starting each step with a fresh context.

- GPT-5.5: The OpenAI model used for the session described in the article (`gpt-5.5`, run at high reasoning effort).

- TypeScript monorepo: A software development setup where multiple projects or modules, all written in TypeScript, are managed within a single repository, often sharing code and build configurations.

- Wall time: The total elapsed time from the start to the end of a process, including any waiting, pausing, or non-compute periods.

- Model compute: The actual time a machine learning model spends actively processing data and performing computations, excluding idle time or waiting.

- Done_when contract: Specific, concrete success criteria defined upfront for an AI agent's task, which the agent uses to determine when its goal is considered complete.

- Anti-pattern fences: Explicit instructions given in a prompt to an AI agent, telling it what not to do or what types of solutions to avoid, preventing common pitfalls or undesirable behaviors.

- Context compaction: The process of reducing the size of the input context provided to a large language model, typically by summarizing, filtering, or selectively retaining the most relevant information, to save tokens and improve efficiency.

- Approval policy: A configurable setting for an AI agent that determines whether and when human approval is required before the agent executes an action (e.g., `never`, which lets the agent act without stopping to ask permission).

- Sandbox mode: A setting that controls the level of access an AI agent has to the system's resources (e.g., filesystem, network), with `danger-full-access` implying broad, unrestricted access.

Original article

TL;DR

- `/goal` shipped in Codex CLI v0.128.0 on April 30, 2026 as a named headline feature.

- It introduces persisted goals: a goal state that survives terminal restarts, laptop sleeps, and multi-hour pauses without re-prompting.

- Runtime continuation means Codex injects a developer message on resume rather than waiting for you to type anything.

- I ran a real session on a TypeScript monorepo. Wall time: about 6h 44min. Actual model compute: about 41 minutes. Final status: `TASK_COMPLETE`.

- The session burned roughly 6.8M cumulative input tokens at a ~94% cache hit rate. Auto-context-compaction fired once, configurable via `model_auto_compact_token_limit`.

I did not plan to run Codex overnight. I started a session at 9:19 PM Berlin time on April 30, watched one turn run for 57 seconds, then closed the laptop and went to bed. When I came back five and a half hours later, /goal was already running again. It had picked up exactly where it left off. I had not re-prompted anything.

That is the thing about /goal that does not come through in a changelog entry. It is not just a new command. It is a different contract between you and the agent.

What Shipped on April 30

Codex CLI v0.128.0 (tagged rust-v0.128.0) dropped on April 30, 2026. The headline from the release notes: “Added persisted /goal workflows with app-server APIs, model tools, runtime continuation, and TUI controls for create, pause, resume, and clear.”

That one sentence packs a lot in, so let me pull it apart.

Persisted goals are the core idea. Previous Codex sessions were ephemeral. Close the terminal, lose the thread. /goal stores the active goal in app-server state, so it outlives the process.

App-server APIs is the plumbing behind that persistence. Codex now talks to a local server layer that tracks goal state.

Model tools means the model itself gets tools for interacting with the goal lifecycle. It can signal completion, request continuation, and inspect goal state as part of its reasoning.

Runtime continuation is the behavior I saw that night. When you resume (or when Codex detects the session is alive again), it injects a developer message prompting the model to continue working. You do not have to type anything.

TUI controls rounds out the surface area. The terminal UI gets explicit create, pause, resume, and clear actions for goal management. You can pause a running goal intentionally, not just by closing the lid.

The rest of v0.128.0 is worth a quick mention. Scrollback reflow now works on terminal resize instead of the text getting mangled. A new codex update command handles CLI self-updates. The composer shows plan-mode nudges when a task seems like a good candidate for planning. TUI keymaps are now configurable. Permission profiles are expanded. The --full-auto flag is deprecated in favor of explicit approval profiles. The desktop app also got polish improvements the same week, though the focus of this post is the CLI. Plan mode itself landed earlier, in v0.122.0 on April 20, 2026. /goal builds on top of that foundation.

What /goal Actually Does

The basic mechanic is straightforward. You type /goal followed by your prompt. Codex stores the goal and starts working. If the session is interrupted (network hiccup, closed laptop, deliberate pause), the goal persists. When the session comes back, Codex resumes automatically via runtime continuation.

The model signals completion with TASK_COMPLETE or the task_complete tool. Until that happens, the goal stays active.
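The lifecycle described above can be pictured as a small state machine. The sketch below is a toy model under stated assumptions, not Codex's implementation: the `GoalStore` class, the JSON file layout, and the status names are all hypothetical. Only the idea (goal state stored outside the process, and a developer message injected on resume while the goal is still active) comes from the post.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional

# Message text as quoted in the session timeline of this post.
CONTINUE_MESSAGE = "Continue working toward the active thread goal"


class GoalStore:
    """Hypothetical persisted-goal store: state lives in a file, not the process."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def create(self, prompt: str) -> None:
        self._write({"prompt": prompt, "status": "active"})

    def load(self) -> Optional[dict]:
        if not self.path.exists():
            return None
        return json.loads(self.path.read_text())

    def set_status(self, status: str) -> None:
        state = self.load()
        if state is None:
            raise RuntimeError("no goal to update")
        state["status"] = status
        self._write(state)

    def clear(self) -> None:
        self.path.unlink(missing_ok=True)

    def _write(self, state: dict) -> None:
        self.path.write_text(json.dumps(state))


def message_on_resume(store: GoalStore) -> Optional[str]:
    """Return the developer message to inject on resume, or None if idle."""
    state = store.load()
    if state is not None and state["status"] == "active":
        return CONTINUE_MESSAGE
    return None  # paused, complete, or cleared: nothing to continue


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        goal_file = Path(tmp) / "goal.json"
        GoalStore(goal_file).create("make the e2e scenarios pass")
        # A brand-new GoalStore object stands in for a restarted process.
        print(message_on_resume(GoalStore(goal_file)))
```

The point of the sketch is the separation of concerns: because the goal lives on disk, a new process can decide on its own whether to continue, which is what removes the need to re-prompt.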

What actually makes this different from a long-running --continue session is the persistence layer. Before /goal, a closed terminal meant a dead session. You could approximate continuity by carefully managing context files and re-injecting prompts, which is basically what the Ralph Wiggum Loop does in a scrappier way. /goal makes continuity a first-class feature.

A few config knobs matter here. In ~/.codex/config.toml, the model_auto_compact_token_limit key sets the threshold for automatic context compaction. The [features] block is where feature flags live. The model_reasoning_effort key sets reasoning effort for the session. If you want hands-off autonomous runs, you will also need approval_policy and sandbox_mode configured correctly. I will get to that.

The TUI also changes. You get visible goal state. You can pause a running goal intentionally without killing the process. Resume picks it back up with runtime continuation.

A Real Six-Hour Run

Here is what a real session actually looked like.

The project was a TypeScript monorepo I am working on. A voice interview system with several end-to-end scenarios that needed to work correctly under a set of defined conditions.

I run Codex with approval_policy = "never" and sandbox_mode = "danger-full-access" for autonomous /goal sessions. These two settings are the precondition for hands-off long runs: the model does not stop to ask permission, and it has full filesystem access to do its work. This is only sane in a trusted project directory with clean git state going in.

The /goal prompt was around 600 words. I wrote it using a structured approach: XML-style blocks organizing the goal, an explicit reading list of ten or more files the model should consult first, working rules (check git status before edits, prefer rg over grep, use apply_patch), a done_when contract spelling out four concrete success criteria, and explicit anti-pattern fences. One of those fences: “do not add string-matching patches to pass one transcript.” If you have worked on voice systems, you know why that fence needs to exist.
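The structure of such a prompt can be sketched as a small builder. This is an illustration under assumptions, not the actual prompt: the tag names other than `done_when`, the file paths, and the success criteria are hypothetical placeholders, while the working rules and the anti-pattern fence are the ones quoted above.

```python
from typing import Sequence


def _block(tag: str, lines: Sequence[str]) -> str:
    """Render one XML-style block with one bullet per entry."""
    body = "\n".join(f"- {line}" for line in lines)
    return f"<{tag}>\n{body}\n</{tag}>"


def build_goal_prompt(
    goal: str,
    reading_list: Sequence[str],
    working_rules: Sequence[str],
    done_when: Sequence[str],
    anti_patterns: Sequence[str],
) -> str:
    """Assemble a structured goal prompt; refuses to build without success criteria."""
    if not done_when:
        raise ValueError("done_when must list at least one concrete success criterion")
    sections = [
        f"<goal>\n{goal}\n</goal>",
        _block("reading_list", reading_list),
        _block("working_rules", working_rules),
        _block("done_when", done_when),
        _block("anti_patterns", anti_patterns),
    ]
    return "\n\n".join(sections)


prompt = build_goal_prompt(
    goal="Make the end-to-end voice scenarios pass.",
    reading_list=["docs/ARCHITECTURE.md", "packages/voice/README.md"],  # hypothetical paths
    working_rules=[
        "check git status before edits",
        "prefer rg over grep",
        "use apply_patch",
    ],
    done_when=["scenario A passes verification"],  # placeholder criterion
    anti_patterns=["do not add string-matching patches to pass one transcript"],
)
print(prompt)
```

Making the builder fail on an empty `done_when` list encodes the rule that the success contract comes first: without it, there is nothing for the model to measure completion against.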

Writing a prompt like that is itself a task. If you want to see how I approach prompt design for this kind of work, The Interview Method covers the workflow.

Model: gpt-5.5. Reasoning effort: high.

Session timeline:

- 9:19 PM - `/goal` submitted.

- 9:20 PM - First turn running. I watched it for 57 seconds, then interrupted (`turn_aborted`).

- 5.5 hours - I closed the laptop. No re-prompting.

- ~2:50 AM - When I came back, `/goal` had already injected a developer message (“Continue working toward the active thread goal”) and was running. Autonomous.

- Context compaction fired once, at approximately 6.7M cumulative input tokens.

- Cumulative tokens: ~6.8M input, ~10K output, ~2.6K reasoning tokens. Cache hit rate: ~94%.

- Wall time: 6h 44min. Actual model compute: ~41 minutes across turns.

- Final status: `TASK_COMPLETE`. All four target end-to-end voice scenarios passed verification.

Manual transcript review found no prompt loops, no liveness spirals, no premature closes. The model worked through the scenarios methodically and called it done when the criteria were met.

One real-world ceiling worth noting. A TTS first-byte timing field I wanted captured could not be measured, because the upstream library does not emit the relevant runtime event. The model documented this honestly. Explicit nulls in the artifact, with a note explaining why the field was missing. It did not paper over the gap. /goal can give you an autonomous run, but it cannot bypass what the external environment actually exposes.

The ~94% cache hit rate is the number that makes the economics work. 6.8M input tokens sounds alarming until you realize that the actual incremental cost at that cache rate is a fraction of the nominal number.
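A rough back-of-the-envelope version of that claim, as a sketch. The token count and cache hit rate are the figures from this session. The price ratio is an assumption: the code supposes cached input tokens are billed at 10% of the normal input rate, which is a placeholder and not a quoted price, so substitute the ratio from your own pricing page.

```python
def effective_input_tokens(
    total_input: float, cache_hit_rate: float, cached_price_ratio: float
) -> float:
    """Full-price-equivalent input tokens after discounting cached ones."""
    cached = total_input * cache_hit_rate
    uncached = total_input - cached
    return uncached + cached * cached_price_ratio


TOTAL_INPUT = 6_800_000     # cumulative input tokens reported for the session
CACHE_HIT_RATE = 0.94       # reported cache hit rate
CACHED_PRICE_RATIO = 0.10   # ASSUMPTION: cached tokens cost 10% of normal input

equivalent = effective_input_tokens(TOTAL_INPUT, CACHE_HIT_RATE, CACHED_PRICE_RATIO)
print(f"{equivalent:,.0f} full-price-equivalent tokens")
print(f"{equivalent / TOTAL_INPUT:.1%} of the nominal input volume")
```

Under that assumed ratio, about 408K tokens are billed at full price and the remaining 6.39M at the discounted rate, for roughly 1.05M full-price-equivalent tokens, or about 15% of the nominal figure.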

/goal vs the Ralph Wiggum Loop

I wrote about the Ralph Wiggum Loop a while back. Geoffrey Huntley coined it, and his original post is still the canonical reference: the technique is essentially `while :; do cat PROMPT.md | claude-code; done` with git history as memory. It solves the same core problem /goal solves: how do you keep an AI agent working on something longer than a single context window?
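For readers who think better in code than in shell one-liners, here is a minimal Python rendering of the same loop shape. It deviates from the original in two labeled ways: it is bounded by `max_iterations`, and it takes an `is_done` callback, neither of which the one-liner has. The `ralph_loop` name is hypothetical, and the demo uses a stand-in command instead of a real agent CLI.

```python
import subprocess
import sys
from typing import Callable, Sequence


def ralph_loop(
    agent_cmd: Sequence[str],
    prompt: str,
    is_done: Callable[[str], bool],
    max_iterations: int = 10,
) -> int:
    """Pipe the same prompt into a fresh process each pass; return passes run.

    Every iteration starts a new process, so nothing carries over in memory.
    In the real technique, continuity comes from files and git history that
    the agent reads from disk, which this sketch does not model.
    """
    for iteration in range(1, max_iterations + 1):
        result = subprocess.run(
            agent_cmd, input=prompt, capture_output=True, text=True, check=False
        )
        if is_done(result.stdout):
            return iteration
    return max_iterations


if __name__ == "__main__":
    # Stand-in "agent": a Python one-liner that uppercases whatever it is fed.
    fake_agent = [sys.executable, "-c", "import sys; print(sys.stdin.read().upper())"]
    passes = ralph_loop(fake_agent, "all tests green", lambda out: "GREEN" in out)
    print(f"stopped after {passes} pass(es)")
```

The contrast with /goal is visible in the function body: there is no state object at all, only a prompt that is replayed.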

The approaches are different in character.

| Dimension | Ralph Wiggum Loop | /goal |
|---|---|---|
| Setup | Shell script or plugin, external orchestration | Built into Codex CLI |
| State persistence | Git history, files on disk | App-server APIs, native goal state |
| Resume behavior | Manual re-invocation | Automatic runtime continuation |
| Context management | Fresh context per iteration (by design) | Compaction within session |
| Reasoning continuity | Stateless between iterations | Continuous within session |
| Model | Claude Code | Codex with gpt-5.5 |
| Good for | Tasks that benefit from fresh eyes each pass | Long-horizon tasks with accumulating context |

The Ralph Wiggum Loop is genuinely useful. The stateless-by-design property is sometimes an advantage: each iteration approaches the problem without carrying forward incorrect intermediate conclusions. If the model gets confused, the next iteration starts clean.

/goal bets on continuity instead. The model builds up a picture of the codebase across turns and does not have to re-read everything from scratch on each pass. For tasks where reasoning accumulates (debugging a subtle interaction, navigating a complex state machine), continuity wins. For tasks that are naturally iterative and convergent (adding tests, fixing lint), Ralph’s fresh-context model often works just as well.

Neither is the right default. They are tools for different shapes of problem.

When /goal Is the Wrong Choice

A few situations where I would not reach for /goal.

Undefined success criteria. The done_when contract is not optional. If you cannot write four concrete success criteria before you start, the model has no way to know when it is done. It will either declare TASK_COMPLETE prematurely or loop indefinitely. Write the contract first.

Exploratory work. Early-stage “figure out what this codebase is doing” work benefits from human-in-the-loop. You learn things as the model surfaces them. /goal is for execution, not exploration.

Security-critical paths. I run with approval_policy = "never" and sandbox_mode = "danger-full-access". That setup is only appropriate in project directories I trust completely. Authentication systems, payment flows, anything touching sensitive data: keep approval in the loop.

Unclear external dependencies. If your task depends on an external system you are not sure about, find out first. The TTS timing field I mentioned above is the mild version. The more expensive version is a six-hour run that hits a wall at hour five because the external API does not support what you assumed it would.

Short tasks. /goal has overhead. A task you can finish in ten minutes of interactive Codex is not improved by wrapping it in a persisted goal. The complexity is not worth it below some threshold. My rough heuristic: if the task would not comfortably span two or more separate sessions in the old model, it probably does not need /goal.

The Mindset Shift

Old: Autonomous AI runs are sessions you monitor, ready to intervene when things go sideways. New: Autonomous AI runs are contracts you write upfront, then get out of the way.

The shift is from supervisor to architect. The quality of the /goal session is determined almost entirely before the first turn runs. The prompt quality, the success criteria, the anti-pattern fences, the reading list. Once it starts, your job is mostly done. If you wrote the contract well, the model executes. If you did not, no amount of monitoring will save it.

That is a different skill than interactive prompting. It is closer to writing a spec than having a conversation.

Conclusion

/goal is the most significant thing Codex has shipped since plan mode. The persistence layer and runtime continuation are what make it different from a long --continue session in practice. Six hours and forty-four minutes of wall time with forty-one minutes of actual compute is only possible because the model kept its context, the cache held, and the goal survived a five-hour gap without me touching anything.

The economics work out because of cache hit rates. The quality works out because of upfront prompt discipline. Neither of those things is automatic.

This is the first post in a two-part series. The companion post covers the workflow side: how I prep specs and prompts before they reach /goal. From SPEC.md to /goal: My Codex + GPT-5.5 Workflow.

Sources

- Codex v0.128.0 release notes

- Codex changelog

- Codex CLI features reference

- Geoffrey Huntley: Ralph Wiggum, the goat

Yannik Zuehlke

Consultant, Architect & Developer

Software architect and cloud engineer with 15+ years of experience. I write about what works in practice.


Good QC for RL Data

The quality control (QC) bar for reinforcement learning (RL) data sold to frontier AI labs in 2026 is critically low, with most vendors failing multiple internal QC gates and shipping data that often proves unusable or problematic downstream.

Deep dive

- The current quality control (QC) bar for reinforcement learning (RL) data, especially for frontier AI labs, is inadequate.

- Many data vendors are failing to meet the implicit and explicit QC standards set by labs, leading to significant inefficiencies and wasted resources.

- QC should be standardized for evaluating data based on its impact on performance, cost, and latency.

- Key QC gates include "intake review," which assesses whether a dataset is even evaluable, and is often skipped by vendors.

- Intake review categories include verification spectrum classification, contamination resistance, variant generation, pass@k analysis, and rubric construction patterns.

- "Active testing" involves small-scale ablations and post-training runs to catch problems intake review misses, such as reward hacking, sycophancy, and catastrophic forgetting.

- Examples of active testing include probes for reward hacking (e.g., testing if models exploit test cases), bias probes for LLM judges, verifier FP/FN audits, and per-skill forgetting checks.

- Existing benchmarks like FrontierSWE, ProgramBench, and MMMLU are criticized for flaws in realism, verification soundness, contamination, or scope.

- Successful benchmarks like BankerToolBench, LiveCodeBench Pro, and SciCode are praised for realism, contamination defense, or verifier soundness, though none clear all categories.

- The author stresses that the market is shifting from buying "data in the abstract" to buying "outcomes" or "model improvement."

- Vendors who neglect robust QC will face contract non-renewals, while those with research-dense teams and advanced QC infrastructure (e.g., bias probes, CoT faithfulness probes, IRT-based audits) are seeing 3-5x pricing power.

- The article warns against over-optimizing for unrealistic synthetic data and emphasizes that labs are learning to heavily discount "black boxes" from vendors.

Decoder

- Reinforcement Learning (RL) Data: Data used to train AI models that learn by interacting with an environment, receiving rewards or penalties for actions, and optimizing their behavior to maximize cumulative reward.

- Frontier Lab: A leading AI research laboratory pushing the boundaries of artificial intelligence capabilities.

- QC (Quality Control): A process by which the quality of all factors involved in production is inspected. In this context, it refers to the rigorous evaluation of data used to train AI models.

- Pareto Curve: In economics, a graphical representation of the Pareto efficiency frontier, showing the optimal trade-offs between two or more competing objectives (e.g., performance vs. cost vs. latency).

- Intake Review: The initial, cheapest stage of quality control for a dataset, assessing its fundamental evaluability and suitability before expensive training runs.

- Verification Spectrum Classification: Categorizing an AI task based on how verifiably its outcomes can be graded, ranging from deterministic code grading to LLM-judge rubrics.

- Contamination Resistance: A measure of how well a dataset prevents problems from leaking into pre-training data or how resilient it is to models "memorizing" answers rather than learning concepts.

- Variant Generation: The ability to create diverse and novel versions of test cases within a dataset to ensure its discriminative power doesn't decay as models improve.

- Pass@k: A metric used in AI evaluation, particularly for code generation, indicating the percentage of problems where at least one out of `k` generated solutions passes the tests.

- Reward Hacking: A phenomenon in reinforcement learning where an AI agent finds unintended ways to maximize its reward function without achieving the desired human-intended goal, often by exploiting flaws in the reward design.

- Bias Probe Battery: A set of diagnostic tests designed to detect and measure various biases (e.g., sycophancy, reward-tampering, alignment-faking) within an LLM-judge or an AI model.

- Catastrophic Forgetting: A problem in machine learning where a model, when trained on new tasks, tends to forget previously learned information or skills.

- FP and FN rates (False Positives and False Negatives): Metrics used in classification to evaluate the accuracy of a system. False positives are incorrect positive predictions, and false negatives are incorrect negative predictions.

- Sycophancy: The tendency of an AI model to agree with or flatter the user, even if it means providing incorrect or suboptimal information, often observed under reward pressure.

- LLM-judge: A large language model used to evaluate the output or performance of other AI models or systems, acting as an automated grader.

- RLHF (Reinforcement Learning from Human Feedback): A technique used to align AI models with human preferences by training a reward model on human comparisons of model outputs, and then optimizing the AI model with reinforcement learning based on this reward.

- PPO (Proximal Policy Optimization): A popular reinforcement learning algorithm often used to train large language models to align with human preferences.

- CoT (Chain of Thought): A prompting technique used with large language models to elicit a series of intermediate reasoning steps before providing a final answer, which can improve accuracy and interpretability.

- IRT-based ability audits (Item Response Theory): A psychometric framework used for designing, analyzing, and scoring tests, applied here to evaluate the "ability" or capabilities of AI models on a set of tasks.

Original article

In January, I proposed a new definition of Type 1 and Type 2 data, given the data industry's pressing need for a way to evaluate data quality. A known side effect of the shift to longer-horizon regimes is an increased need for model-based QA, far beyond the body-shop capabilities of current-day data companies.

The progression of what data markets we entered first directly corresponded to how verifiable we could make each one. We filtered the hard domains out of the field at the infrastructure layer, first by choosing verifiable ones, then by building environments that strip away the attention and irreversibility that made real decisions actually hard, then by avoiding reward functions that require taking a contested position. The artifacts from this selection effect are operationalized in pipeline design. Even in the supposedly easy domains we kept, the QC discipline that distinguishes a useful Type 1 dataset from a depreciating one is not yet a shared language across the data markets. Most of the data shipped to frontier labs in 2026 fails the bar set by the labs' own internal QC frameworks.

Many data companies fall by the wayside in two ways. We're picking the easier domains because the evaluation problem is already solved there. And we're failing to actually solve the QC problem on the data we ship in those domains.

The shape of good QC for off-the-shelf RL data has come into focus over the past eighteen months. There is a defensible bar for what good looks like, and it should not be treated as aspirational. It is implemented and shipped by the labs themselves, and any vendor selling into a frontier lab in 2026 is being measured against this bar implicitly during the purchase decision. Most are failing multiple gates at once.

The vocabulary here is worth walking through because it has not yet propagated outside the labs that use it. As we move toward data and tasks that measure how much it costs and how long it takes to do something, rather than whether it can be done at all, standardized QC for evaluating how well data tests a point on the performance-cost-latency Pareto curve will become critically important.

Intake review

Before any post-training run touches the data, you ask whether the dataset is even eval-able.

This is the cheapest gate in the QC stack and it is the one most data companies skip. A frontier lab spending a six-figure trial contract on a dataset that fails intake review is paying twice: once for the data itself, and once for the GPU hours and researcher attention burned on a training run that was uninterpretable from the start. The market for OTS RL data in 2026 is large enough that the second-order cost of skipping intake now exceeds the first-order cost of running it. As mentioned in my previous piece, Anthropic and other labs disclosed their RL data spend in 2025 at $1B+ and overshot it in practice.

There are a few major intake categories that every company professing to collect data for frontier analysis ought to cover at a minimum.

Verification spectrum classification asks where the task sits on a spectrum that runs from deterministic code grading (SWE-bench Verified is the cleanest version of this category), through LLM-judge rubrics (the published reference pattern across HealthBench, FLASK, BiGGen Bench, and Prometheus 2 is atomic, binary, axis-tagged criteria), to unverifiable-by-automation tasks that should ship as SFT demonstrations rather than reward-based RL. Skipping this classification is how labs end up plugging fundamentally unaudited LLM judges into reward functions.

Contamination resistance and variant generation ask whether the dataset's hillclimbness survives the next model generation. For example, GPQA, AIME, and FrontierMath are static sets whose discriminative power decayed inside a year as problems leaked into pretraining and the vendors had no canary, no rotation cadence, no recovery story.

Pass@k and distributional analysis set the productive training band, because a dataset whose pass@1 sits at zero on the target model or whose difficulty distribution is bimodal produces no gradient to climb.
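The pass@k screen above can be sketched with the standard unbiased estimator, 1 - C(n-c, k) / C(n, k), computed from n sampled rollouts of which c passed. The band thresholds (`low`, `high`) and the per-task counts below are illustrative assumptions, not values from any lab or vendor.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n sampled rollouts, c of which passed."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) / comb(n, k) is the chance that a random size-k
    # subset of the n rollouts contains no passing rollout.
    return 1.0 - comb(n - c, k) / comb(n, k)

def productive_fraction(results, k=1, low=0.05, high=0.95):
    """Share of tasks whose pass@k lies strictly inside (low, high).

    The thresholds are hypothetical; tasks at 0 or 1 give no signal to climb.
    """
    scores = [pass_at_k(n, c, k) for n, c in results]
    return sum(1 for s in scores if low < s < high) / len(scores)

# Hypothetical (rollouts, passes) per task on a target model.
results = [(16, 0), (16, 0), (16, 4), (16, 8), (16, 16)]
print(productive_fraction(results))
```

A histogram of the per-task scores, rather than the single fraction, is what would expose a bimodal difficulty distribution.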

Rubric construction patterns determine whether the grader is atomic and binary or compound and reward-hackable per the rubric anchoring research. Each category is a question that has a published cautionary tale behind it and the cost of getting it wrong is paid downstream by the lab, not by the vendor.

There are a few more checks that ought to be treated as pre-flights, but the point is that the vendors that have figured this out are packaging these checks into a structured pitch to procurement teams ("here is our slice on each category, here is the artifact for each gate, here is the audit pass we ran") and clearing informal onboarding cycles in weeks instead of months. The vendors that haven't are losing the contracts they think they're winning, or are working with researchers who call their data "good" on paper while quietly looking for alternatives. When a lab overseeing a million-line delivery discovers a single discrepancy or failure in one of those lines, it will wonder whether there is a QC process at all.

Active Testing

After intake passes, small-scale ablations and a small post-training run can be used to stress-test the data and catch the problems intake review cannot see. Reward hacking shows up in training, amid the complexity of different models running in different harnesses. Sycophancy shows up under reward pressure, not in static evaluation. Forgetting shows up after the training run, by which point the lab has already paid for the data and the compute, so we want to confirm up front that catastrophic forgetting is not an immediate consequence of training on the dataset. Active testing is more expensive to run than intake (the cost is a small post-training run on a probe model plus the GPU hours for the diagnostic battery), but the cost of skipping it is higher still, because the failure modes it catches are the ones that quietly degrade frontier model releases and trigger the contract non-renewals I'm hearing about across the labs.

Most data vendors in 2026 are running zero categories of active testing on the data they ship.

Reward hacking comes up in every single lab conversation, still. METR put numbers on it with 1-2% of o3 attempts containing exploits inside their sandboxes, AISI caught OpenClaw reverse-engineering its own evaluation proxy from inside an isolated environment, and ImpossibleBench finds GPT-5 exploiting test cases 76% of the time on the impossible-SWEbench variant. Modern frontier models are routinely cheating their evaluations under reward pressure, and I still find many vendors have never run a single probe to check whether their own data trains for exactly this. The bias-probe battery is the parallel story for any LLM-judge in a reward function. Sycophancy, reward-tampering, and alignment-faking are the three published probes vendors should be running, with the alignment-faking baseline at 12%, and almost none are.

For verifier-graded data, the SWE-bench Verified Pro pattern of 200 PASS plus 200 FAIL human re-judging with FP and FN rates reported separately is now table stakes. OpenAI's 2026 retirement post for the original SWE-bench found 59.4% of audited problems had flawed test cases. That's the floor under which "deterministic verifier" stops meaning anything.

Forgetting checks need to be per-skill, not aggregate, the way Tulu 3 published the floor. The gap between SFT continual post-training (around -10.4% average) and on-policy RL (around -2.3%) is what should inform the training method choice, and Qi et al. is the reason aggregate numbers are misleading on safety-relevant data. Small benign fine-tunes can strip RLHF safety guardrails while aggregate scores stay flat.

Frontier shape analysis uses the Pareto curve to detect reward-hackable task sets, with the reward-hacking signature work as the published reference, and most vendors don't run it because it requires GPU infrastructure they don't own.

Failure triage is the cheapest of these and the most useful. Each failed rollout labeled as capability, prompt, scaffolding, rubric, training-data, orchestration, or triangulation gives the vendor a concrete edit list and the lab a way to tell whether the dataset is broken at the data layer or upstream.
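As a rough illustration of the PASS/FAIL re-judging audit described above, the two error rates can be computed separately from paired verdicts. This is a minimal sketch: the function name, the data layout, and the disagreement counts are hypothetical. Because the sample is stratified on the verifier's verdict, each rate here is conditional on what the verifier said.

```python
def verifier_error_rates(audit):
    """Return (fp_rate, fn_rate) from (verifier_pass, human_pass) pairs.

    fp_rate: share of verifier PASS items that human judges marked FAIL.
    fn_rate: share of verifier FAIL items that human judges marked PASS.
    """
    human_on_pass = [h for v, h in audit if v]
    human_on_fail = [h for v, h in audit if not v]
    if not human_on_pass or not human_on_fail:
        raise ValueError("audit needs both PASS and FAIL verifier verdicts")
    fp_rate = sum(1 for h in human_on_pass if not h) / len(human_on_pass)
    fn_rate = sum(1 for h in human_on_fail if h) / len(human_on_fail)
    return fp_rate, fn_rate

# Synthetic audit: 200 verifier PASS and 200 verifier FAIL items,
# with made-up human disagreement counts (14 and 6).
audit = ([(True, True)] * 186 + [(True, False)] * 14
         + [(False, False)] * 194 + [(False, True)] * 6)
fp_rate, fn_rate = verifier_error_rates(audit)
print(f"FP rate {fp_rate:.1%}, FN rate {fn_rate:.1%}")
```

Reporting the two numbers separately matters because a false positive rewards a wrong rollout during RL, while a false negative only withholds reward from a correct one.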

The procurement read is the same shape as intake. Active testing is the work the labs are already running internally on every dataset they accept, and they are increasingly asking vendors to ship the audit results alongside the data so the lab does not have to repeat the work. Vendors who show up with the bias-probe battery results, the per-skill forgetting numbers, the verifier FP/FN audit, and the failure-triage distribution are clearing onboarding in weeks. Vendors who show up with "we ran a few small training experiments and the loss went down" aren't getting past the first technical review. That gap is the difference between a serious data company and a commodity competitor in 2026.

It should be noted that, since many labs are compute-rich in some capacities, they are more forgiving of data quality (the tighter bottleneck is quality data) and continue to work with certain vendors. But, as I argued in previous writing on how data will be the cause of the next AI bubble, if there is one, how long can we expect to exist in an inefficient data market where researchers throw out 50%+ of the data they procure?

Where we need to improve in the wild

Let's do some deeper dives into 2024-2026 benchmark releases and how they lack some of these standards:

FrontierSWE (Proximal) sits in the strongest possible verification regime (deterministic code-based grader with hidden test signal), and fails precisely on surface stratification because each model is locked to its own native production harness, conflating model and scaffolding contributions to the headline number.

ProgramBench fails on realism. Complete web 2.0 software recreation with clean specs and known answers is not the deployment context for any production coding agent in 2026, and the model that tops a ProgramBench leaderboard is not necessarily the one any engineering team should be deploying. Though I'd applaud the creative restrictions they place on models and their cataloging of cost as a hillclimbing objective category, these tasks are still quite contrived and represent a class of benchmarks that still confuses contest difficulty with production utility.

Tau-Bench measures end-state correctness on multi-turn customer service interactions and skips the process evaluation that is load-bearing on multi-turn rollouts. Did the agent ask the right clarifying question at turn three, recover from a tool failure at turn five, explain the resolution coherently at turn seven?

GDPval tries to anchor frontier capability to economic productivity and fails on realism for the same reason ProgramBench does, where productivity tasks reconstructed in a controlled environment are not the productivity tasks that exist in real organizational contexts.

MMMLU carries the standard MMLU contamination posture across forty languages, with no canary, no rotation, and a known leakage profile from the moment it shipped.

DSBench put GPT-4o-as-judge on 86% of its tasks with a single hand-wave validation claim and saturated from 34% to 89% in ten months, which is the load-bearing example of what happens when verifier soundness is skipped on a static set.

Terminal-Bench 2.0 handles task verification well but stays inside short shell-task horizons that hide both the irreversibility and process-evaluation failures longer-horizon work surfaces, the way coding-and-math hid them in 2024.

The benchmarks that pass more of the categories tend to do so on a single axis at a time. BankerToolBench (Handshake) is the cleanest realism story I have seen on financial tool use, because the tasks are derived from actual investment banking workflows and the verifier is built around the working products bankers use. LiveCodeBench Pro handles contamination defense by drawing fresh problems on a rolling basis from competitive programming sites and retiring them as they age into pretraining, which is the published reference for a refresh cadence done correctly. SciCode handles verifier soundness on partial-credit scientific coding by hand-writing per-problem deterministic checkers with expert review, at the cost of scaling (but I welcome this entry at the cost of scaling, if Mercor's human QA here was run well). None of them clear all categories at once.

I deeply respect the work that all of these companies have done. All of them shipped artifacts that move the field forward. All of them also illustrate why the QC bar is now load-bearing. The question of "does the measurement instrument actually inform a research decision the lab can make" is extremely difficult as the QA processes vary vendor by vendor, and the answer depends on which categories the benchmark cleared and which it skipped.

The vendor distinction worth drawing is between table stakes and differentiation because the floor is relatively automatable. I see the floor as a smorgasbord of dataset documentation manifest, atomic rubric construction with linter, verifier soundness audit, n-gram contamination report, cross-model evaluation with unbiased pass@k, multi-seed bootstrap CIs, eval harness declaration, trace artifacts, surface stratification across at least two scaffolding configs, and probe model selection from a versioned shortlist.
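One item on that floor, the n-gram contamination report, is simple enough to sketch. The version below is a deliberately naive assumption of what such a report computes: whitespace tokenization, exact word n-gram matching, and a hypothetical default window of 8. Production checks typically normalize text more aggressively and run against far larger reference corpora.

```python
def ngrams(text, n):
    """Set of lowercase word n-grams in text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def contamination_report(items, reference_docs, n=8):
    """Map each item id to the share of its n-grams found in reference_docs.

    items: {item_id: text}; reference_docs: iterable of strings, for example
    the eval suites the dataset is positioned against (an assumption here).
    """
    reference = set()
    for doc in reference_docs:
        reference |= ngrams(doc, n)
    report = {}
    for item_id, text in items.items():
        grams = ngrams(text, n)
        report[item_id] = len(grams & reference) / len(grams) if grams else 0.0
    return report

# Toy data with a short window so the overlap is visible.
items = {
    "task-a": "the quick brown fox jumps",
    "task-b": "completely novel task text here",
}
reference_docs = ["see the quick brown fox run"]
report = contamination_report(items, reference_docs, n=3)
print(report)
```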

Further into the differentiation work that may not be cost-effective, the work looks more like a researcher's. Bias probe batteries on verifiers, sycophancy, reward-tampering, and alignment-faking probes, CoT faithfulness probes with counterfactual perturbations, IRT-based ability audits via tinyBenchmarks or Fluid Benchmarking, online RL lane diagnostics for PPO and GRPO. Vendors without research staff who can read the cited papers directly will not implement these, but vendors who do ought to be adequately rewarded.

The market implication

Vendors who haven't internalized this QC bar will find their contracts on the chopping block in 2026, and the rumors I've already heard from top labs about RL contracts being non-renewed reflect exactly this dynamic. Labs are buying less data in the abstract sense of "we need more tasks in this shape." Sellers to Chinese labs may still find that the old motion works. The rest of the market has shifted. Most vendors who keep overoptimizing on unrealistic synthetic data will be selling against the current rather than with it. It is not always said out loud, but the frontier labs are buying outcomes, model improvement on a target capability, and the QC bar is the floor under whether the data can actually produce that outcome.

To overoptimize for selling data in its current form without thinking about scalability is to choose a death by a thousand cuts. Frontier labs in 2026 have learned to discount black boxes heavily, especially black boxes attached to vendors who do not appear to care about their own data quality. The few vendors who have built this infrastructure internally already (a small set, mostly the ones with research-dense teams) are seeing pricing power on the order of 3-5x what their commodity peers can charge for nominally similar tasks, and the premium is built on continued trust as reliable quality-first partners at scale. I find that the gap will only widen as the labs' procurement teams get more sophisticated and as more data teams come to market with these standards.

This is the companion observation to the long-horizon non-verifiable point. Before we even get to the harder domains where the reward function is contested and the environment has to model irreversibility, we need to be doing the QC work in the domains where the reward function is uncontested. The execution gap is what's left to close, and it is smaller than the selection effect but larger than most people running data companies want to admit. A world where we have more codified QC standards is also a world where more models like Andons' proliferate. Theoretically, if you are running a data company in 2027 and you cannot tell me your pass@k distribution across at least three models, your verifier FP/FN rates against human gold, your contamination check against the named eval suites your dataset is positioned against, and your frontier-shape diagnostic on a probe model, you are not selling Type 1 data. You are selling Type 2 data with Type 1 marketing. The labs will figure that out within one purchase cycle, and the rumors I'm hearing suggest several already have.

AlphaEvolve: How our Gemini-powered coding agent is scaling impact across fields

Google DeepMind's Gemini-powered coding agent, AlphaEvolve, significantly improved DNA sequencing error correction for PacBio, achieving a 30% reduction in variant detection errors.

Decoder

- DeepConsensus: A Google Research model designed for correcting errors in DNA sequencing data.

Original article

In genomics, AlphaEvolve was used to improve DeepConsensus, a model developed by Google Research for correcting DNA sequencing errors, achieving a 30% reduction in variant detection errors. These improvements are helping scientists at PacBio analyze genetic data more accurately and at a lower cost.

“The solution the Google team discovered using AlphaEvolve unlocks meaningfully higher accuracy rates for our sequencing instruments. For researchers, this higher-quality data might enable the discovery of previously hidden disease causing mutations.” — Aaron Wenger, Senior Director at PacBio

Meta's Optimized RecSys Inference

Meta has achieved up to a 4x speedup and a 2/3 reduction in latency for its recommendation system inference by implementing In-Kernel Broadcast Optimization (IKBO), which eliminates redundant user embedding replication on both GPUs and Meta's MTIA accelerators.

Deep dive

- Problem: Traditional recommendation system inference explicitly replicates user embeddings for every candidate item, leading to wasted memory bandwidth and compute that scales linearly with candidate count.

- Solution: In-Kernel Broadcast Optimization (IKBO) eliminates this by fusing broadcast logic directly into user-candidate interaction kernels, so replicated tensors never materialize.

- Deployment: IKBO is deployed across Meta's multi-stage recommendation funnel on both NVIDIA H100 GPUs and Meta Training and Inference Accelerators (MTIA).

- Performance - Linear Compression: Achieved a cumulative ~4x speedup on H100 SXM5 through progressive co-design stages: matmul decomposition, memory alignment, broadcast fusion, and warp-specialized multi-stage fusion via TLX.

- Performance - Flash Attention: Improved arithmetic intensity from ~60 FLOPs/Byte (IO-bound) to ~833 FLOPs/Byte (compute-bound) at a 70:1 candidate-to-user ratio. Delivered 2.4x/6.4x throughput gain over non-co-designed CuTeDSL FA4 Hopper baselines with 621 BF16 TFLOPs.

- End-to-end impact: Achieved up to a 2/3 reduction in compute-intensive net latency on co-designed models across Meta’s RecSys inference stack.

- Core Principle: Broadcast is treated as a data layout concern rather than a computational necessity, handled internally within computational primitives.

- Hardware Agnostic: The core idea of replacing materialized broadcasts with index-driven in-kernel lookups is hardware-vendor independent, though the kernels detailed in the post target NVIDIA Hopper (H100).

- Future Directions: Adapting IKBO kernels to CuTeDSL (NVIDIA) and AMD CK, and extending to multi-level recommendation hierarchies (e.g., user -> vendor -> item) for further overhead reduction.

Decoder

- Recommendation System (RecSys): An information filtering system that predicts what a user might prefer.

- Embedding: A dense vector representation of discrete variables (like users or items) that captures their semantic meaning.

- In-Kernel Broadcast Optimization (IKBO): A co-design approach that eliminates redundant replication of shared user embeddings by integrating broadcast logic directly into GPU kernel operations.

- MTIA (Meta Training and Inference Accelerator): Meta's custom-designed chip for AI training and inference workloads.

- H100 SXM5: NVIDIA's Hopper architecture-based GPU, optimized for AI workloads, specifically the SXM5 variant designed for data centers.

- Flash Attention: An optimized attention algorithm that speeds up transformer models by reducing the number of memory accesses, making it more efficient for long sequences.

- Triton: A Python-based DSL for writing highly efficient custom GPU kernels, developed by OpenAI.

- TLX (Triton Low-level Language Extensions): Extensions to Triton that expose more low-level hardware features of NVIDIA Hopper GPUs, such as warp specialization and asynchronous memory operations.

Original article

TL;DR:

- Traditional RecSys inference explicitly replicates shared user embeddings/sequences for every candidate. In-Kernel Broadcast Optimization (IKBO) eliminates this overhead via a kernel-model-system co-design that fuses broadcast logic directly into user-candidate interaction kernels. By decreasing both the memory footprint and IO utilization, IKBO unlocks even higher throughput.

- IKBO delivers up to a 2/3 reduction in compute-intensive net latency, serving as the scalability backbone for the request-centric, inference-efficient framework that powers the Meta Adaptive Ranking Model.

- Deployed end-to-end across Meta’s multi-stage recommendation funnel on both GPU and MTIA (Meta Training and Inference Accelerator).

- The IKBO Linear Compression kernel achieved a cumulative ~4× speedup on H100 SXM5 after four stages of progressive co-design, culminating in warp-specialized fusion via TLX.

- The IKBO co-design shifted the Flash Attention kernel from IO-bound to compute-bound (hitting 621 BF16 TFLOPs on H100 SXM5). Coupled with TLX warp-specialized optimization, this results in a 2.4×/6.4× throughput gain over the non-co-designed CuTeDSL FA4 Hopper baseline (kernel only/kernel + broadcasting).

In this post, we present In-Kernel Broadcast Optimization (IKBO), a kernel-model-system co-design approach that eliminates redundant user-embedding broadcast in recommendation model inference. In production RecSys, user embeddings are identical across all candidates for a given request, yet standard approaches require explicit replication, wasting memory bandwidth and compute that scale with candidate count. IKBO encodes a simple insight: broadcast is a data layout concern, not a computational necessity. Each IKBO kernel accepts user and candidate inputs at their natural, mismatched batch sizes and handles broadcast internally, so no replicated tensors ever materialize. We showcase the methodology through two kernel deep dives: Linear Compression and Flash Attention.

Deployed across Meta’s RecSys inference stack, from early-stage to late-stage ranking models and spanning both GPU and MTIA (Meta Training and Inference Accelerator), IKBO delivers up to a 2/3 reduction in compute-intensive net latency on co-designed models. It serves as the scalability backbone for the request-centric, inference-efficient framework underlying the Meta Adaptive Ranking Model (serving LLM-scale models in production). On H100 SXM5, our IKBO Linear Compression kernel achieves ~4× speedup through four progressive co-design stages: matmul decomposition, memory alignment, broadcast fusion, and warp-specialized multi-stage fusion via TLX (Triton Low-Level Extensions). For Flash Attention, IKBO delivers a 2.4×/6.4× throughput gain over the non-co-designed CuTeDSL FA4-Hopper baseline (kernel only / kernel + broadcasting), reaching 621 BF16 TFLOPs. Unlike system-level broadcast or net-splitting, which work around replication, IKBO eliminates it at the computational primitive layer, achieving dense interaction quality at near-independent cost.

Code Repository: https://github.com/pytorch/FBGEMM/tree/main/fbgemm_gpu/experimental/ikbo

† Work done while at Meta

1. In-Kernel Broadcast Optimization: Eliminating Memory and Compute Redundancy

When a user opens their feed, the recommendation system must score hundreds to thousands of candidate items to decide what to show. The model’s inputs split into two categories: user features (e.g., browsing history, profile, context) that are identical for every candidate in a request, and candidate features (e.g., item ID, category, engagement statistics) that are unique to each item. Both pass through embedding lookups and subsequent processing to produce embedding representations. At various points in the model, interaction layers (e.g., linear projections, feature crosses, target attention) combine user and candidate embeddings. We call embeddings shared across all candidates in a request Request-Only (RO), and per-candidate embeddings Non-Request-Only (NRO).

Fig. 1. A very simplified RecSys inference data flow. Request-Only (RO) user embeddings must be broadcast (replicated) to match the Non-Request-Only (NRO) candidate batch dimension before interaction layers. IKBO eliminates this materialization by handling broadcast internally within each kernel.

Interaction layers require tensors with matching batch dimensions. In a batch of 1,024 candidates served by ~15 users, RO embeddings must be broadcast, replicated ~70 times, to match the NRO batch size before any interaction (Fig. 1). As architectures have evolved from DLRM [1] and DCN [2] through sequential models like HSTU [3] and X’s Phoenix [4], they have steadily enriched user-candidate interaction. But richer interaction comes at a cost: user features must be broadcast across all candidates. For batch sizes of 10 – 10,000+ in inference, this replication overhead incurs significant computation and memory cost that scales linearly with candidate count.

Broadcast is a data layout concern, not a computational necessity. Viewing the model and inference system through this lens opens optimization at every layer: the inference runtime eliminates system-level broadcast, user-only model layers run at the smaller user batch size, and kernels that mix both are redesigned to handle broadcast internally—no replicated tensors ever materialize. Deployed across Meta’s RecSys inference stack, from early-stage to late-stage ranking models, spanning both GPU and MTIA, IKBO delivers up to 2/3 reduction in compute-intensive net latency on co-designed models.

This post focuses on the kernel layer through two deep dives: Linear Compression and Flash Attention.

1.1. Kernel Optimization Type

Type I — Decomposable Operations. Mathematical restructuring lets the Request-Only (RO) portion be computed independently at small batch size, combining with the Non-Request-Only (NRO) portion only at the end. This saves both memory bandwidth and compute.

Type II — Memory-Only Optimization. Handling RO-NRO broadcasting within the kernel avoids redundant data movement, pushing the kernel away from IO bound.

1.2. E2E System Design

Deploying IKBO touches three layers of the infra stack:

- Kernels: Custom GPU kernels that accept mismatched RO/NRO batch sizes and handle broadcast internally (Sections 2 and 3).

- Compilation Specification: The ML compiler needs per-operator dynamic shape ranges to select appropriately shaped kernels. With one batch size this is trivial; with two (user and candidate) or even more, reliably resolving which each operator uses—across production models where interactions obscure batch lineage—requires systematic automation.

- Inference: The runtime passes the candidate-to-user mapping into the model instead of materializing the broadcast.

These kernels enter the model through one of two paths:

- Direct adoption: Model authors integrate IKBO kernels directly into their model definitions. When candidate-to-user ratio > 1 during training, the same kernels reduce training cost as well.

- Inference-time transformation: A pass automatically swaps standard ops for IKBO equivalents at inference time — no model code changes required.

The net effect: broadcast disappears from every stage of inference, with no architectural constraints on the model and no infrastructure changes beyond the inference runtime’s mapping interface.

1.3. Comparison with Other Approaches

Existing approaches work around broadcast rather than eliminating it.

- System-level broadcast materializes the replicated tensor before GPU dispatch—simple but wasteful, with cost scaling linearly with candidate count.

- Net-splitting (ROO) [5] partitions the model into RO and NRO sub-networks, reducing redundant work but constraining where user-candidate interactions can occur and still introducing extra cost at small RO batch sizes.

Both preserve broadcast as a materialized tensor. IKBO eliminates it at the computational primitive layer: savings scale with the candidate-to-user ratio, any interaction pattern works without broadcast cost, and the full NRO batch dimension provides GPU occupancy within fused kernels.

IKBO has been deployed on both GPU and MTIA accelerators. In this blog post, we focus on H100 GPU kernel design to illustrate the core optimization principles.

2. Kernel Deep Dive I: IKBO Linear Compression

Linear Compress Embedding (LCE) compresses input embeddings (B, K, N) via a learned projection (M, K) @ (B, K, N) → (B, M, N), and is widely adopted in Meta RecSys models, e.g., Wukong [6]. We go through four progressive optimization stages.

2.1 Matmul Decomposition

Fig. 2. LCE decomposition: baseline batched matmul (top-left), embedding separation and user deduplication along K (top-right), two independent GEMMs with broadcast-add on compressed output (bottom).

The baseline LCE computes a single batched matmul across all B candidates. The input embeddings concatenate user and candidate parts along K — but user embeddings are identical across all candidates for the same user.

Push broadcast past the matmul. Since W is batch-independent, we decompose by linearity: separate user and candidate embedding blocks along K, deduplicate the repeated user embeddings, and compute two independent GEMMs at their natural batch sizes. Instead of replicating user embeddings before the matmul, we broadcast only the small compressed result. See Fig. 2. With a candidate-to-user ratio of ~70 (a representative setting), the user batch shrinks from B=1024 to B_user ≈ 15 — a 70x reduction in user-side compute. The decomposition is implemented in standard PyTorch.
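The linearity argument can be checked on toy shapes. The sketch below is not the post's PyTorch implementation: it uses plain nested lists, hypothetical function names, and integer values so the comparison is exact. `cand_to_user` stands in for the candidate-to-user mapping the runtime is described as passing in.

```python
def matmul(a, b):
    """(m, k) @ (k, n) -> (m, n) on nested lists."""
    return [[sum(a[i][p] * b[p][j] for p in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def add(a, b):
    """Elementwise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def lce_baseline(w, user_emb, cand_emb, cand_to_user):
    # Replicate the user rows for every candidate, concatenate along K,
    # then run one (M, K) @ (K, N) matmul per candidate.
    return [matmul(w, user_emb[cand_to_user[b]] + cand_emb[b])
            for b in range(len(cand_emb))]

def lce_decomposed(w, user_emb, cand_emb, cand_to_user):
    k_user = len(user_emb[0])
    w_user = [row[:k_user] for row in w]   # columns that multiply user rows
    w_cand = [row[k_user:] for row in w]   # columns that multiply candidate rows
    user_out = [matmul(w_user, u) for u in user_emb]   # B_user matmuls
    cand_out = [matmul(w_cand, c) for c in cand_emb]   # B matmuls over smaller K
    # Broadcast only the small compressed (M, N) user result.
    return [add(cand_out[b], user_out[cand_to_user[b]])
            for b in range(len(cand_emb))]

# Toy shapes: M=2, K_user=2, K_cand=1, N=2, two users, three candidates.
w = [[1, 2, 3], [4, 5, 6]]
user_emb = [[[1, 0], [0, 1]], [[2, 2], [1, 1]]]
cand_emb = [[[1, 1]], [[0, 2]], [[3, 0]]]
cand_to_user = [0, 0, 1]
assert lce_baseline(w, user_emb, cand_emb, cand_to_user) == \
    lce_decomposed(w, user_emb, cand_emb, cand_to_user)
```

The user-side matmul count drops from the candidate batch size to the number of distinct users, which is where the deduplication saving comes from.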

Result. 1.944 ms → 1.389 ms (28.5% reduction; benchmark setup in Appendix 1). Both the original batched GEMM (arithmetic intensity ~356 FLOPs/Byte, below H100’s ~495 FLOPs/Byte machine balance point; see Appendix 2 for derivations) and the two decomposed GEMMs are memory-bound, so the speedup is driven by memory cost reduction. Deduplication cuts memory cost by more than half, as the user-side GEMM (B_user ≈ 15 vs. B = 1024) becomes negligible in cost.

Note that the decomposition pushes broadcast past the matmul: instead of replicating full K-dimensional input embeddings before the GEMM, we broadcast only the small compressed result, which is far cheaper. In Section 2.3, we will further eliminate this remaining broadcast entirely via in-kernel broadcast fusion.

The current bottleneck is L1/TEX pipeline utilization (84%) rather than DRAM utilization — a suspicious imbalance we will zoom into in the next section. Detailed profiling breakdown in Appendix 3.

2.2 Memory Layout Optimization

Detailed result analysis of the decomposed GEMM reveals an imbalance: L1/TEX sits at 84% of peak while DRAM reaches only 19%, indicating unnecessarily narrow memory loads. SASS confirms: every cp.async copies only 4 bytes instead of a single 128-bit load.

LDGSTS.E.LTC128B P0, [R203], [R38.64] // 4 bytes

LDGSTS.E.LTC128B P1, [R203+0x4], [R38.64+0x4] // 4 bytes (×4 total, only 16B load in total)

cp.async width is capped by the source pointer’s natural alignment. Matrix A is (M, K) row-major with stride K × 2 bytes, so when K is not a multiple of 8, the stride breaks 128-bit alignment.

Model-kernel co-design insights. Memory alignment is a well-understood GPU optimization — but decomposition turns it into a model-kernel co-design challenge. K is formed by torch.cat of embedding tensors whose sizes depend on many model config factors. Decomposition makes it very hard to manually engineer these factors so that decomposed embeddings remain perfect multiples. A systematic solution is needed.

Solution. Pad each decomposed K to the next multiple of 8 by appending zeros to the concat list. We prove this is mathematically equivalent in both forward and backward passes (see Proof 1 below), and with the ML compiler’s memory planner, reduces to a cheap constant copy.
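The alignment arithmetic behind this fix can be sketched as follows. This is a simplified model, not the compiler's actual selection logic: it assumes 2-byte (BF16) elements, a 16-byte-aligned base pointer, and that the widest usable copy is the largest power-of-two width that divides the row stride. The example K values are hypothetical.

```python
def widest_aligned_load_bytes(k, elem_bytes=2, max_bytes=16):
    """Largest power-of-two copy width (up to 16 B) that divides the row stride."""
    stride = k * elem_bytes            # row-major (M, K): bytes between row starts
    width = max_bytes
    while width > elem_bytes and stride % width != 0:
        width //= 2
    return width

def padded_k(k, multiple=8):
    """Round K up to the next multiple of 8 elements (16 bytes for BF16)."""
    return -(-k // multiple) * multiple

for k in (50, 51, 56):
    print(k, widest_aligned_load_bytes(k), padded_k(k))
```

Under these assumptions K = 50 allows only 4-byte copies, which is the pattern the SASS above shows, while padding to 56 restores full 16-byte (128-bit) copies.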

Proof 1. Zero-padding K preserves exact numerical equivalence in both forward and backward passes.
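The body of the proof is not reproduced here. A sketch of the standard argument, under notation that is assumed rather than taken from the post: W is the (M, K) projection, X one (K, N) input block, p the number of padded rows, and W_p the p extra weight columns that padding introduces.

```latex
% Assumed notation: W \in \mathbb{R}^{M \times K}, X \in \mathbb{R}^{K \times N},
% p = K' - K padded rows, W_p \in \mathbb{R}^{M \times p} the extra weight columns.
\begin{aligned}
X' &= \begin{bmatrix} X \\ 0_{p \times N} \end{bmatrix}, \qquad
W' = \begin{bmatrix} W & W_p \end{bmatrix} \\[4pt]
\text{Forward:}\quad Y' &= W' X' = W X + W_p \, 0_{p \times N} = W X = Y \\[4pt]
\text{Backward, with } G = \frac{\partial L}{\partial Y}:\quad
\frac{\partial L}{\partial X'} &= W'^{\top} G
  = \begin{bmatrix} W^{\top} G \\ W_p^{\top} G \end{bmatrix}, \qquad
\frac{\partial L}{\partial W'} = G X'^{\top}
  = \begin{bmatrix} G X^{\top} & 0_{M \times p} \end{bmatrix}
\end{aligned}
```

The first K rows of the input gradient match the unpadded case, and the last p rows belong to the constant zero padding and are discarded. The original weight columns receive the same gradient as before, and the extra columns W_p receive zero gradient, so they never influence the output.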

Result. 1.389 ms → 0.798 ms (42.5% reduction). Padding enables CUTLASS to select a TMA-based kernel, bypassing L1/TEX entirely (sectors 351M → 0) and cutting GEMM latency from 0.984 ms to 0.400 ms. With the GEMM resolved, the unfused broadcast and add (0.398 ms) now accounts for half the total latency — to be addressed in the next section. Detailed result analysis in Appendix 5.

2.3 Candidate GEMM In-Kernel Broadcast Fusion

The unfused broadcast and add are memory-bound: write the candidate GEMM result to HBM, read it back alongside the user result, add, and write again. We eliminate this by fusing the broadcast into the candidate GEMM epilogue (Fig. 3). After each tile’s accumulation, the epilogue looks up the user index, loads the pre-computed user result, adds it in registers, and writes the final sum — the intermediate tensor is never materialized. We implement this as a Triton kernel: a standard batched GEMM with a custom post-accumulation epilogue block.

Fig. 3. In-kernel broadcast fusion: the GEMM epilogue loads the pre-computed user result via index lookup and adds it in-register.
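The control flow of the fused epilogue can be illustrated in plain Python. This is a sketch of the idea only, not the Triton kernel: each candidate stands in for one output tile, the function names are hypothetical, and "registers" are ordinary local variables.

```python
def candidate_gemm_unfused(w_cand, cand_emb, user_out, cand_to_user):
    # Pass 1: materialize the candidate GEMM result for the whole batch.
    cand_out = [[[sum(w_row[p] * x[p][j] for p in range(len(x)))
                  for j in range(len(x[0]))] for w_row in w_cand]
                for x in cand_emb]
    # Pass 2: materialize the broadcast user result, then add.
    replicated = [user_out[u] for u in cand_to_user]
    return [[[c + r for c, r in zip(c_row, r_row)]
             for c_row, r_row in zip(c_tile, r_tile)]
            for c_tile, r_tile in zip(cand_out, replicated)]

def candidate_gemm_fused(w_cand, cand_emb, user_out, cand_to_user):
    out = []
    for b, x in enumerate(cand_emb):           # one "tile" per candidate here
        u = user_out[cand_to_user[b]]          # epilogue: index lookup, no replication
        tile = []
        for i, w_row in enumerate(w_cand):
            row = []
            for j in range(len(x[0])):
                acc = sum(w_row[p] * x[p][j] for p in range(len(x)))  # accumulate
                row.append(acc + u[i][j])      # add before the single final write
            tile.append(row)
        out.append(tile)
    return out

# Toy shapes: M=2, K_cand=1, N=2, two users, three candidates.
w_cand = [[3], [6]]
cand_emb = [[[1, 1]], [[0, 2]], [[3, 0]]]
user_out = [[[1, 2], [4, 5]], [[4, 4], [13, 13]]]
cand_to_user = [0, 0, 1]
assert candidate_gemm_fused(w_cand, cand_emb, user_out, cand_to_user) == \
    candidate_gemm_unfused(w_cand, cand_emb, user_out, cand_to_user)
```

The unfused version builds two batch-sized intermediates (`cand_out` and `replicated`), while the fused version builds none, which mirrors the intermediate traffic the kernel avoids.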

Result. 0.798 ms → 0.580 ms (27.4% reduction). Fusion eliminates 0.87 GB of intermediate DRAM traffic, contributing to the latency win. However, occupancy is just 6.25% (1 warp per scheduler), leaving every stall fully exposed. Beyond 42% of cycles waiting on global loads, 20% are spent waiting on WGMMA — stalls that cannot be hidden by the epilogue, and without persistence there is no next-tile load to overlap with. This is a challenging tradeoff: large tiles and deep pipelines are needed to keep tensor cores fed, but they consume most of the shared memory budget, leaving little room to hide latency through occupancy. Detailed result analysis in Appendix 6.

2.4 Warp-Specialized Multi-Stage Fusion with TLX

TLX (Triton Low-level Language Extensions) exposes Hopper’s warp specialization, TMA, mbarriers, and named barriers while preserving Triton’s Python DSL and autotuning infrastructure.

Using TLX, we address the occupancy limitation from Section 2.3 with warp specialization — hiding latency through functional partitioning rather than additional warps.

Sections 2.1 – 2.3 decomposed the original LCE into two independent computations: the user GEMM (Stage 1) and the candidate GEMM with fused broadcast-add epilogue (Stage 2). We first optimize latency hiding within Stage 2, the dominant bottleneck, then fuse both stages into a single persistent kernel.

Intra-Stage Latency Overlap

The candidate IKBO kernel is memory-bound — the design goal is to keep the memory pipeline continuously fed. Triton’s software pipelining (Section 2.3) already overlaps Loads with WGMMA, but the epilogue remains serialized — it blocks future Loads and exposes the WGMMA wait stalls. We resolve both by partitioning each CTA into specialized warp groups: a dedicated producer issues TMA loads continuously (Overlap #1, analogous to Triton’s software pipeline), while two consumers ping-pong tiles so one’s epilogue overlaps the other’s WGMMA (Overlap #2). With persistence, tiles flow continuously with no cross-tile gaps. See Fig. 4.

Fig. 4. Candidate IKBO kernel structure with two intra-stage latency overlaps and warp group role assignments.
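To make Overlap #2 concrete, here is a toy timing model, not the TLX kernel itself. It assumes (hypothetically) that WGMMA needs exclusive use of the tensor cores, that the epilogue only occupies the consumer that owns the tile, and that loads are always ready thanks to the producer. The function name `makespan` and the durations are made up for illustration.

```python
def makespan(n_tiles, wgmma, epilogue, n_consumers):
    """Toy timeline: WGMMA needs the shared tensor cores; the epilogue only
    occupies the consumer that owns the tile. Loads are assumed always ready."""
    tensor_core_free = 0.0
    consumer_free = [0.0] * n_consumers
    for tile in range(n_tiles):
        c = tile % n_consumers  # tiles alternate between consumers
        start = max(tensor_core_free, consumer_free[c])
        tensor_core_free = start + wgmma
        consumer_free[c] = tensor_core_free + epilogue
    return max(consumer_free)


# Illustrative durations in arbitrary time units (not measured values).
serialized = makespan(8, wgmma=3.0, epilogue=2.0, n_consumers=1)
ping_pong = makespan(8, wgmma=3.0, epilogue=2.0, n_consumers=2)
print(serialized, ping_pong)
```

With these made-up durations the single-consumer schedule takes 40 time units and the two-consumer ping-pong schedule takes 26, because every epilogue except the last is hidden behind the other consumer's WGMMA.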

Multi-Stage Fusion

We fuse user IKBO (Stage 1) and candidate IKBO (Stage 2) into a single mega-kernel to reduce wave quantization, eliminate kernel launch overhead, and improve L2 cache utilization. High candidate-to-user ratios amplify wave quantization in Stage 1. Since the candidate GEMM is independent of user results until its epilogue, we schedule both stages concurrently.

This concurrent scheduling unlocks two additional cross-stage overlaps, bringing the total overlaps to four. See Fig. 5.

Fig. 5. Concurrent stage scheduling: SMs without user tiles enter Stage 2 immediately, overlapping with Stage 1’s partial wave. All four latency overlaps after multi-stage fusion, showing intra-stage (#1, #2) and cross-stage (#3, #4) overlap opportunities. The SM ranges 0-49 and 50-131 are illustrative.

Warp Group Specialization & Synchronization Setup

To realize all four overlaps, each CTA is partitioned into one producer and two consumer warp groups. Critically, both stages share the same circular buffer and mbarrier infrastructure — no pipeline drain or barrier reinitialization occurs at the stage boundary. The last user K-block and the first candidate K-block coexist in different buffer slots simultaneously. See Fig. 6.

Fig. 6. Per-CTA warp group setup and the three synchronization mechanisms.

Bidirectional Stage-Alternating Tile Scheduling

When neither stage’s tile count divides evenly by the SM count, naive unidirectional dispatch causes workload imbalance. We reverse tile assignment direction between stages: Stage 1 starts at pid, Stage 2 at NUM_SM - 1 - pid. See Fig. 7.

Fig. 7. Unidirectional (left) vs. bidirectional stage-alternating dispatch (right), balancing per-SM workload across partial waves.
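The effect of reversing the dispatch direction can be checked with a small counting sketch. It assumes one persistent CTA per SM that strides through tiles by the SM count, and it treats all tiles as equal cost, which is a simplification. The helper `tiles_per_sm` and the tile counts are hypothetical.

```python
def tiles_per_sm(num_tiles, num_sm, reverse=False):
    """Count the tiles each persistent CTA (one per SM) processes with stride num_sm."""
    counts = []
    for pid in range(num_sm):
        # Stage 1 starts at pid; the reversed stage starts at num_sm - 1 - pid.
        start = num_sm - 1 - pid if reverse else pid
        counts.append(len(range(start, num_tiles, num_sm)))
    return counts


NUM_SM = 4                           # illustrative, far smaller than a real GPU
STAGE1_TILES, STAGE2_TILES = 6, 10   # neither divides evenly by NUM_SM

stage1 = tiles_per_sm(STAGE1_TILES, NUM_SM)
stage2_uni = tiles_per_sm(STAGE2_TILES, NUM_SM)
stage2_bi = tiles_per_sm(STAGE2_TILES, NUM_SM, reverse=True)

unidirectional = [a + b for a, b in zip(stage1, stage2_uni)]
bidirectional = [a + b for a, b in zip(stage1, stage2_bi)]
print(unidirectional, bidirectional)
```

In this example unidirectional dispatch gives per-SM totals of [5, 5, 3, 3], while the stage-alternating variant gives [4, 4, 4, 4]: the SMs that receive the extra Stage 1 tile are the ones that skip the extra Stage 2 tile.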

Tile-Granularity Cross-CTA Synchronization

User and candidate tiles may execute on different CTAs, requiring cross-CTA synchronization — but a device-wide barrier would serialize all work and destroy the overlap. We synchronize at per-tile granularity using a three-step release-acquire protocol:

- A single thread per warp group spins on the tile flag with ld.relaxed, minimizing memory traffic.

- Once the flag is set, a single ld.acquire establishes the happens-before edge.

- A named barrier broadcasts readiness to all 128 threads in the warp group.

This avoids expensive fences during polling and lets candidate CTAs on different user tiles proceed fully independently. Details in Appendix 7.

Results

With all optimizations combined, latency improves from 0.580 ms to 0.482 ms (16.9% reduction). The intra-warp Proton tracer timeline (Fig. 8) confirms that all four overlaps are realized in practice.

Fig. 8. Proton profiler timeline for two CTAs, with all four overlaps color-coded. The memory pipeline remains continuously fed.

The primary gain comes from Overlap #2: ping-ponging consumers hide WGMMA and epilogue stalls on every tile — directly addressing the dominant wasted cycles from Section 2.3. Overlap #1 (Load↔WGMMA) carries forward from Triton’s existing software pipelining. Overlaps #3 and #4 hide idle time at the user-to-candidate stage transition. See Fig. 8.

NCU confirms: occupancy rises from 6.25% to 18.75% (3 warp groups vs. 1), DRAM throughput from 39% to 52%, and L2 — the bottleneck — from 74% to 84% of peak. This is not occupancy alone: the aggressive latency hiding across all four overlaps keeps the memory pipeline saturated, which is what pushes L2 past 80%. Detailed NCU metrics in Appendix 8.
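The occupancy and latency figures follow from simple arithmetic. The sketch below assumes one resident CTA per SM and uses the Hopper limit of 64 resident warps per SM; the function names are illustrative.

```python
MAX_WARPS_PER_SM = 64      # Hopper (H100) limit on resident warps per SM
WARPS_PER_WARP_GROUP = 4   # one warp group = 4 warps = 128 threads


def occupancy(warp_groups_per_sm):
    """Fraction of the SM's warp slots in use, assuming one CTA per SM."""
    return warp_groups_per_sm * WARPS_PER_WARP_GROUP / MAX_WARPS_PER_SM


def reduction(before_ms, after_ms):
    """Relative latency reduction between two measurements."""
    return (before_ms - after_ms) / before_ms


print(f"{occupancy(1):.2%} -> {occupancy(3):.2%}")
print(f"{reduction(0.580, 0.482):.1%} latency reduction")
```

One warp group gives 4/64 = 6.25% and three warp groups give 12/64 = 18.75%, matching the NCU numbers quoted above.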

We benchmark across batch sizes and candidate-to-user ratios, varying one while holding the other at its default (batch=1024, ratio=70). See Fig. 9.

Fig. 9. Cumulative IKBO speedup across batch sizes (left, ratio=70) and candidate-to-user ratios (right, batch=1024).

The IKBO fusion delivers robust gains across scenarios: ~4x speedup across batch sizes (left) and candidate-to-user ratios (right). Even at low candidate-to-user ratios, the kernel still achieves meaningful speedup.

3. Kernel Deep Dive II: IKBO Flash Attention

As recommendation models scale to capture richer user sequential behavior, sequential architectures – including attention – have emerged as a critical compute bottleneck, accounting for approximately 40% of inference latency at 1K sequence lengths. This motivates our focus on IKBO-aware Flash Attention, co-designed with RecSys’s unique batching semantics.

Inspired by Transformers and Set Transformers [7, 8], two fundamental user history interaction modules have been widely adopted in RecSys:

- Target attention (analogous to cross-attention) captures the relationship between the prediction candidate and the user’s historical interactions.

- Self-attention models sequential dependencies within the user history itself.

Since user history is an RO feature while the target operates on a distinct candidate (non-RO) batch dimension, this architectural asymmetry presents an opportunity for IKBO to improve model scalability and computational efficiency. Target attention is our main optimization focus; with minor co-design, self-attention can also be fused into IKBO target attention (Section 3.3). As our model is encoder-driven, full attention is applied without causal masking.

The final optimized target attention kernel, leveraging end-to-end co-design, achieves 2.4×/6.4× the throughput of non-co-designed CuTeDSL FA4-Hopper (attention kernel only / attention kernel + broadcasting cost), reducing latency by 0.320 ms / 1.232 ms respectively (Table 2).

3.1 IKBO flash attention solves the IO bound issues under RecSys boundary conditions

Fig. 10: Traditional SDPA with candidate-user broadcasting (left) vs. fused IKBO target attention (right).

IKBO fuses K/V broadcasting into the attention kernel, maintaining mathematical equivalence via a candidate-user mapping tensor from the inference runtime that handles non-uniform candidate-to-user ratios. Fig. 10 contrasts the two approaches: the traditional SDPA path broadcasts K and V to the full candidate batch size before attention, while the IKBO path eliminates this materialization entirely — each candidate indexes into its user’s K/V on the fly.
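A minimal pure-Python sketch of the two paths, for a single head and tiny shapes. It is a reference for the indexing idea only, not the fused kernel. The names `cand_to_user`, `user_k`, and `user_v` are hypothetical stand-ins for the candidate-user mapping tensor and the per-user K/V.

```python
import math
import random


def attention(q, k, v):
    """Full (non-causal) single-head attention over lists of row vectors."""
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    for q_row in q:
        scores = [scale * sum(a * b for a, b in zip(q_row, k_row)) for k_row in k]
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out.append([sum(w * v_row[c] for w, v_row in zip(weights, v)) / total
                    for c in range(len(v[0]))])
    return out


def rand_mat(rows, cols):
    return [[random.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


random.seed(0)
HEAD_DIM, KV_SEQ, Q_SEQ, NUM_USERS = 4, 6, 2, 2
user_k = [rand_mat(KV_SEQ, HEAD_DIM) for _ in range(NUM_USERS)]
user_v = [rand_mat(KV_SEQ, HEAD_DIM) for _ in range(NUM_USERS)]
cand_to_user = [0, 0, 0, 1, 1]  # non-uniform: 3 candidates for user 0, 2 for user 1
cand_q = [rand_mat(Q_SEQ, HEAD_DIM) for _ in cand_to_user]

# Traditional path: copy each user's K/V once per candidate, then attend.
k_bcast = [[row[:] for row in user_k[u]] for u in cand_to_user]
v_bcast = [[row[:] for row in user_v[u]] for u in cand_to_user]
out_broadcast = [attention(q, k, v) for q, k, v in zip(cand_q, k_bcast, v_bcast)]

# IKBO-style path: no copies; each candidate looks up its user's K/V on the fly.
out_ikbo = [attention(q, user_k[u], user_v[u]) for q, u in zip(cand_q, cand_to_user)]

kv_rows_broadcast = sum(len(k) + len(v) for k, v in zip(k_bcast, v_bcast))
kv_rows_ikbo = sum(len(k) + len(v) for k, v in zip(user_k, user_v))
print(out_broadcast == out_ikbo, kv_rows_broadcast, kv_rows_ikbo)
```

Both paths produce identical outputs, but the broadcast path materializes 60 K/V rows while the indexed path keeps the original 24.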

Shifting IO-Bound to Compute-Bound by IKBO co-design

In RecSys boundary conditions, target attention uses a relatively small number of candidate embeddings to represent the candidate attributes compared to the user’s browsing history. Roofline analysis of standard attention reveals an arithmetic intensity of ~60 FLOPs/Byte, well below the H100 (SXM5 HBM2e version) roofline ridge point of ~495 FLOPs/Byte (Appendix 2), making even standard flash attention heavily IO-bound. IKBO addresses this by amortizing K/V memory accesses across multiple candidates sharing the same user context, improving arithmetic intensity from ~60 FLOPs/Byte to ~833 FLOPs/Byte (at B_candidate : B_user = 70:1) and shifting the kernel firmly into compute-bound territory.
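A back-of-the-envelope model lands close to these intensities. It is a sketch under assumptions that are not stated in this section: q_seq = 64, a K/V sequence length of 1,024, 2-byte elements, Q read and O written once per candidate, and K/V read once per candidate in the standard case but once per user with IKBO. The authors' exact accounting is in Appendix 2 and may differ.

```python
def arithmetic_intensity(q_seq, kv_seq, candidates_per_user=1):
    """FLOPs per byte of memory traffic for one head; the head dimension
    cancels out of the ratio, so it is omitted. Elements are 2 bytes."""
    # QK^T and PV each cost 2 * q_seq * kv_seq FLOPs per unit of head dim.
    flops = candidates_per_user * 4 * q_seq * kv_seq
    # K and V are read once; Q is read and O written once per candidate.
    elements = 2 * kv_seq + candidates_per_user * 2 * q_seq
    return flops / (2 * elements)


RIDGE_POINT = 495  # FLOPs/Byte figure quoted in the text

standard = arithmetic_intensity(q_seq=64, kv_seq=1024)
ikbo = arithmetic_intensity(q_seq=64, kv_seq=1024, candidates_per_user=70)
print(f"standard: {standard:.1f}, IKBO at 70:1: {ikbo:.1f}")
print("standard IO-bound:", standard < RIDGE_POINT, "| IKBO compute-bound:", ikbo > RIDGE_POINT)
```

Under these assumptions the model gives roughly 60 FLOPs/Byte for standard attention and roughly 833 FLOPs/Byte at a 70:1 ratio, on opposite sides of the ~495 FLOPs/Byte threshold.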

To maximize this benefit, our implementation reorders the threadblock launch grid so that batch_size_candidate comes before num_heads. This ensures threadblocks processing different candidates — but sharing the same user K/V — are scheduled concurrently, improving L2 cache reuse.

| Grid dimension | Flash attention (SDPA) | IKBO target attention |
| --- | --- | --- |
| x | num_q_seq_block | num_q_seq_block |
| y | num_heads | batch_size_candidate |
| z | batch_size_candidate | num_heads |

Table 1: Launch grid configuration comparison. SDPA prioritizes GQA optimization by placing num_heads in grid.y. IKBO swaps head and candidate dimensions, placing batch_size_candidate in grid.y to enable efficient K/V sharing across candidates.
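The benefit of the swap can be illustrated by enumerating blocks in a plausible issue order. CUDA does not guarantee a block scheduling order; the sketch assumes the commonly observed pattern of x varying fastest, then y, then z, and uses adjacent blocks sharing a (user, head) K/V tile as a rough proxy for L2 reuse. All names and sizes are illustrative.

```python
def launch_order(grid_y, grid_z, grid_x=1):
    """Enumerate block indices with x fastest, then y, then z (assumed order)."""
    return [(x, y, z) for z in range(grid_z) for y in range(grid_y) for x in range(grid_x)]


def adjacent_kv_reuse(layout, num_heads, cand_to_user):
    """Count consecutive blocks that read the same (user, head) K/V tile."""
    num_cand = len(cand_to_user)
    if layout == "sdpa":   # grid.y = heads, grid.z = candidates
        blocks = launch_order(grid_y=num_heads, grid_z=num_cand)
        keys = [(cand_to_user[z], y) for _, y, z in blocks]
    else:                  # IKBO: grid.y = candidates, grid.z = heads
        blocks = launch_order(grid_y=num_cand, grid_z=num_heads)
        keys = [(cand_to_user[y], z) for _, y, z in blocks]
    return sum(a == b for a, b in zip(keys, keys[1:]))


cand_to_user = [0] * 4 + [1] * 4   # 8 candidates, 2 users
sdpa_reuse = adjacent_kv_reuse("sdpa", num_heads=4, cand_to_user=cand_to_user)
ikbo_reuse = adjacent_kv_reuse("ikbo", num_heads=4, cand_to_user=cand_to_user)
print(sdpa_reuse, ikbo_reuse)
```

In this toy case the SDPA ordering never places two blocks with the same K/V tile next to each other, while the IKBO ordering does so 24 times out of 31 adjacent pairs.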

Table 2 compares our IKBO Triton implementation (FA2 logic + IKBO) against state-of-the-art Flash Attention implementations on Hopper (without IKBO co-design). Throughput and IO are measured on attention only; the broadcasting latency for Key and Value is even larger than the attention cost itself.

| Kernel | Throughput (TFLOPs/s) | IO (GB/s) | Latency (ms) |
| --- | --- | --- | --- |
| Triton IKBO FA2 | 425 | 487 | 0.321 (broadcast fused) |
| TLX FA3 | 245 | 2152 | 0.561 + 0.912 (broadcast K&V) |
| CuTeDSL FA4 Hopper | 250 | 2193 | 0.550 + 0.912 (broadcast K&V) |
| TLX IKBO FA3 persistence generalized | 594 | 681 | 0.230 (broadcast fused) |

Table 2: Attention kernel comparison under RecSys boundary conditions (B_candidate = 2048, B_user = 32, uniform candidate-to-user ratio). Without co-design, even cutting-edge Hopper implementations remain IO-bound.

3.2 Adopting Modern Kernel Techniques (FA3, FA4) with IKBO on TLX

With IKBO shifting the kernel from IO-bound to compute-bound, the natural next step was to adopt the state-of-the-art compute optimizations from Flash Attention 3 (FA3 [10]) and Flash Attention 4 (FA4 [11]) on Hopper – specifically warp specialization and pipelining. However, our boundary conditions on the number of query embeddings (q_seq = 32 or 64) make it difficult to directly adopt FA3’s ping-pong or cooperative warp specialization.

Warp specialization on Hopper requires asynchronous WGMMA instructions, which impose a minimum BLOCK_M ≥ 64. Two consumer warp groups are also necessary to minimize bubbles between them. To satisfy these constraints, we customized the kernel to launch both B_candidate = i and B_candidate = i + 1 within a single threadblock, sharing the same B_user. In the discussion below, we assume all users rank an even number of candidates with q_seq = 64; odd-candidate handling follows afterward.
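The pairing can be sketched as a host-side grouping step. This is only an illustration of which candidates would share a threadblock; the helper `pair_candidates` is hypothetical, and leftover candidates are simply reported because odd-candidate handling is not covered in this part of the text.

```python
def pair_candidates(cand_to_user):
    """Group candidate indices by user and pair them up two at a time.
    Returns (pairs, singles); singles are users' leftover odd candidates."""
    by_user = {}
    for cand, user in enumerate(cand_to_user):
        by_user.setdefault(user, []).append(cand)

    pairs, singles = [], []
    for user, cands in by_user.items():
        # Each pair maps to one threadblock: one candidate per consumer warp group.
        for first, second in zip(cands[0::2], cands[1::2]):
            pairs.append((user, first, second))
        if len(cands) % 2:
            singles.append((user, cands[-1]))
    return pairs, singles


pairs, singles = pair_candidates([0, 0, 0, 0, 1, 1, 1])
print(pairs)
print(singles)
```

With q_seq = 64, each consumer warp group in a paired threadblock gets its own 64-row query tile while both read the same user's K/V.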

Performance improvement for IKBO FA3 kernel

Starting from FA3’s recipe — intra-warp pipelining, warpgroup specialization, and ping-pong scheduling — the initial TLX IKBO FA3 kernel performed similarly to the FA2 baseline (Fig. 12, blue vs. red, Appendix 11), with on-par throughput.

To diagnose the bottleneck, we visualized intra-warp pipelining using the Proton tracer (Fig. 11). Table 3 summarizes the key bottlenecks before and after persistence, measured in GPU cycles.

Fig. 11: Proton-based intra-warp profiling of the TLX IKBO FA3 kernel. Representative warps from each warp group are shown: warp 0 (producer), warp 4 (consumer 1), and warp 8 (consumer 2). The softmax_PV_overlap and pure softmax regions are marked separately to identify the tensor core bubbles. (A) Before persistence, zoomed-in view of (B); (B) before persistence with 2 waves; (C) after persistence with 2 waves.

| Bottleneck | Before | After | Key change |
| --- | --- | --- | --- |
| Tensor Core Bubbles (1st QKT per wave, Blue) | ~1,300 cycles (400 cycles from warp scheduler switching) | ~1,300 cycles | Unchanged |
| Tensor Core Bubbles (last PV per wave, Blue) | ~2,000 cycles | ~300 cycles | Async TMA store + reciprocal overlap with last PV |
| Cross-CTA Stalls (Orange) | ~14,000 cycles | Eliminated | Persistence removes CTA re-launch entirely |
| Init Buffers & Barriers (Green) | ~1,600 cycles/wave | ~1,600 cycles (1st wave only) | Persistent shared buffers and barriers are amortized across waves |
| Wait 1st Q/K Load (Dark purple) | 2,100~4,000 cycles/wave (length varies depending on HBM bandwidth contention) | ~2,000 cycles (1st wave only) | Cross-wave pipelining; producer prefetches ~3K cycles ahead |

Table 3: Key bottlenecks before and after persistence + optimizations.

Key takeaway: at these small query sequence lengths, cross-CTA stalls, not tensor core utilization, are the dominant bottleneck, and persistence is required to remove them. The post-persistence profile and the resulting cycle counts are shown in Fig. 11C and Table 3.

HBM2e-Specific Optimizations

We further tuned the persistent kernel for the H100 SXM5’s HBM2e bandwidth constraints, trading shared memory capacity for reduced load/store blocking (Table 4).

| Customized optimization/fix | Benefit |
| --- | --- |
| Decoupled SMEM buffer of O from Q/V with pipelined TMA async store | Giving O its own SMEM buffer, separate from Q/V, lets TMA async stores overlap with next-wave compute, shortening store blocking time from 1,300 to 400 cycles/wave |
| Separate Q₀ and Q₁ buffers | Reduces per-Q loading time, allowing one consumer group to start earlier; beneficial when wave count greatly exceeds K/V sequence iterations (common in RecSys) |
| Instruction cache miss fix | Merges the peeled-out last-iteration code path back into the main loop, eliminating icache thrashing caused by excessive warp-specialized instructions (Appendix 12) |

Table 4: Customized optimizations for the HBM2e H100 SXM5. These still fit within the available SMEM budget under RecSys boundary conditions (Appendix 10).

We also implemented persistent V2, which iterates from the end of the K sequence to the front (matching FA3/FA4-Hopper’s approach) to simplify masking logic. Both persistent variants apply the Table 4 optimizations. As shown in Fig. 12, at low sequence lengths (512–4,096) the TLX FA3 persistent kernel outperforms all other candidates; beyond 8K the two persistent variants converge.