Interaction Models: A Scalable Approach to Human-AI Collaboration

Thinking Machines Lab's TML-Interaction-Small handles simultaneous speech and visual proactivity with 200ms micro-turns, beating GPT-realtime-2.0 (minimal) 77.8 to 46.8 on the FD-bench v1.5 interaction benchmark.


Deep Dive

- Introduces "interaction models" that handle audio, video, and text simultaneously in real time, unlike turn-based commercial models that wait for complete user turns

- TML-Interaction-Small: 276B-parameter MoE with 12B active parameters, trained from scratch with encoder-free early fusion

- Architecture uses 200ms "micro-turns" continuously interleaving input processing and output generation across all modalities

- Split design: real-time interaction model for immediate responses + asynchronous background model for reasoning, tool use, and long-horizon tasks

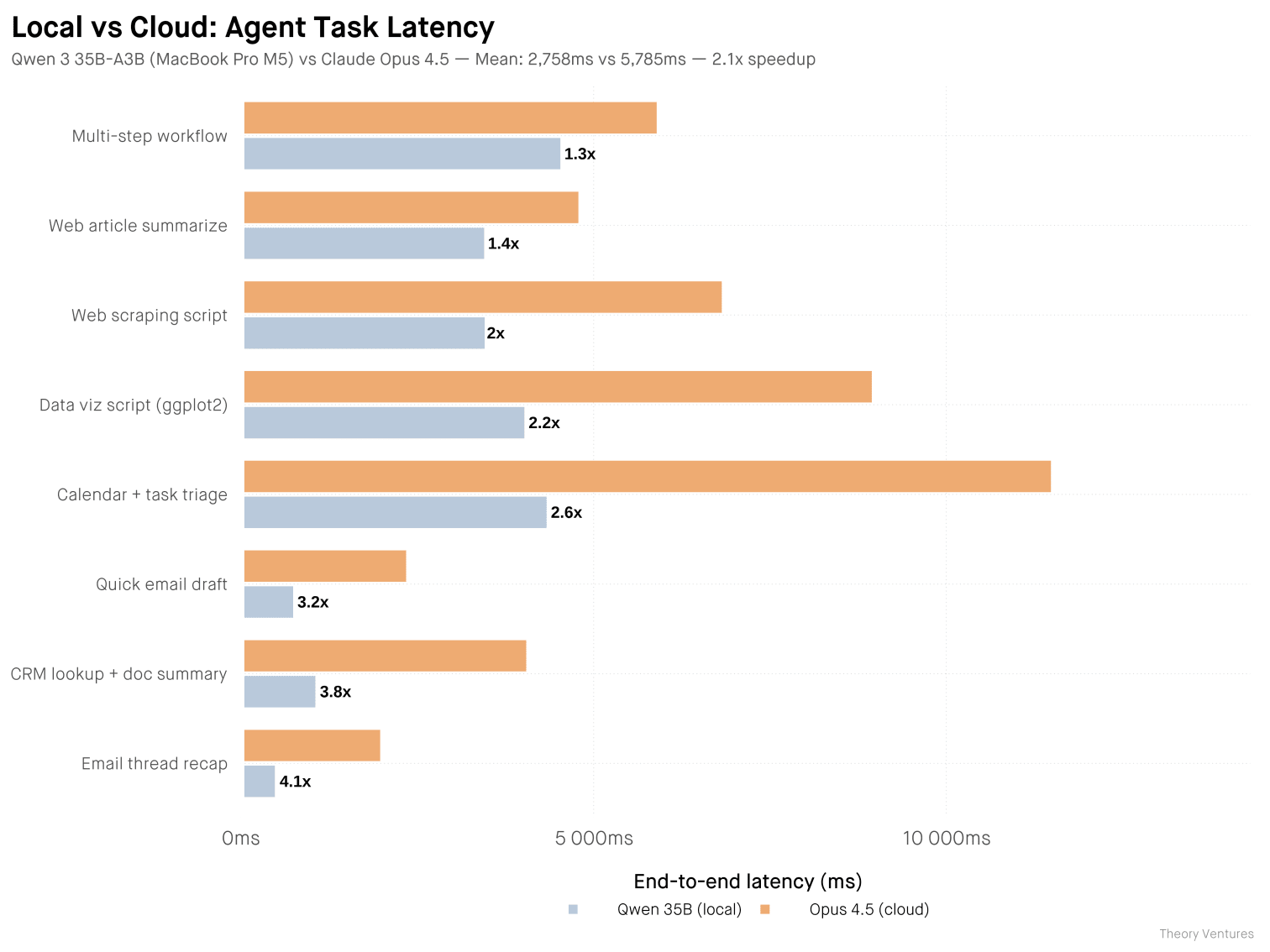

- FD-bench v1.5 interaction quality: 77.8 (TML) vs 46.8 (GPT-realtime-2.0 minimal) vs 45.5 (Gemini-3.1-flash-live high)

- FD-bench v1 turn-taking latency: 0.40s (TML) vs 1.18s (GPT-realtime-2.0 minimal) vs 0.94s (Gemini-3.1-flash-live high)

- Audio MultiChallenge APR (intelligence and instruction following): 43.4% (TML) vs 37.6% (GPT-realtime-2.0 minimal) vs 48.5% (GPT-realtime-2.0 xhigh, with thinking)

- Enables qualitatively new capabilities: simultaneous speech (live translation while listening), visual proactivity (responding to on-screen changes without an audio cue), and time awareness (accurate elapsed-time tracking)

- New and adapted benchmarks (TML vs GPT-realtime-2.0 minimal): TimeSpeak (64.7% vs 4.3%), CueSpeak (81.7% vs 2.9%), RepCount-A visual counting (35.4% vs 1.3%), ProactiveVideoQA (33.5 vs 25.0, the score for staying silent), Charades temporal action localization (32.4 vs 0 mIoU)

- No voice-activity-detection (VAD) harness needed—all interaction management is native to the model

- Technical optimizations: streaming sessions for frequent small prefills, bitwise trainer-sampler alignment, custom NVLS comm kernels for Blackwell GPUs

- Audio processing: dMel input with lightweight embedding layer; video: 40x40 patches with hMLP encoding

- Limitations: long sessions require context management, needs reliable connectivity, larger models (beyond 12B active) too slow to serve currently

- Limited research preview coming with wider release later in 2026; accepting feedback at [email protected]

Decoder

- Micro-turns: 200ms chunks of interleaved input/output streams that replace traditional turn boundaries, allowing the model to process and respond continuously rather than waiting for complete user turns

- Full-duplex: Simultaneous two-way communication where the model can speak and listen at the same time, like a phone call (vs half-duplex where only one party can transmit at a time)

- MoE (Mixture of Experts): Model architecture where only a subset of parameters (here 12B out of 276B) activate for each input, reducing computational cost while maintaining large total capacity

- Voice-activity-detection (VAD): External component that traditional turn-based models use to detect when a speaker has finished talking; TML's model eliminates this by natively understanding turn boundaries

- FD-bench: Full-Duplex benchmark measuring how well models handle interruptions, backchanneling, talking to others, and background speech during real-time conversations

- dMel: Discrete mel-spectrogram representation for audio that can be processed directly by transformers without large external encoders like Whisper
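To make the dMel entry concrete, here is a minimal sketch of dMel-style tokenization. It assumes the log-mel filterbank frames are already computed and simply quantizes each channel value into one of a fixed number of evenly spaced bins; the function name `dmel_discretize`, the 16-bin default, and the min/max clipping range are illustrative assumptions, not details given in the article.

```python
from typing import List, Optional


def dmel_discretize(log_mel: List[List[float]], num_bins: int = 16,
                    lo: Optional[float] = None,
                    hi: Optional[float] = None) -> List[List[int]]:
    """Quantize each log-mel value into one of `num_bins` evenly spaced bins.

    `log_mel` is a frames x mel-channels matrix computed elsewhere (assumed).
    `lo`/`hi` set the clipping range; by default they are the input's min/max.
    """
    flat = [v for frame in log_mel for v in frame]
    lo = min(flat) if lo is None else lo
    hi = max(flat) if hi is None else hi
    width = (hi - lo) / num_bins if hi > lo else 1.0
    tokens: List[List[int]] = []
    for frame in log_mel:
        row = []
        for v in frame:
            v = min(max(v, lo), hi)              # clip into [lo, hi]
            idx = int((v - lo) / width)          # uniform bin index
            row.append(min(idx, num_bins - 1))   # v == hi lands in the top bin
        tokens.append(row)
    return tokens


frames = [[-10.0, 0.0], [-5.0, 2.0]]  # two frames, two mel channels (toy values)
print(dmel_discretize(frames, num_bins=4))  # [[0, 3], [1, 3]]
```

Each frame becomes a short row of small integers that an embedding layer can map to vectors; the article does not say how its embedding layer combines channels.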

Original Article

Today, we're announcing a research preview of interaction models: models that handle interaction natively rather than through external scaffolding. We think interactivity should scale alongside intelligence; the way we work with AI should not be treated as an afterthought. Interaction models let people collaborate with AI the way we naturally collaborate with each other—they continuously take in audio, video, and text, and think, respond, and act in real time.

We train an interaction model from scratch. To ensure real-time responsiveness, we adopt a multi-stream, micro-turn design. Our research preview demonstrates qualitatively new interaction capabilities, as well as state-of-the-art combined performance in intelligence and responsiveness.

The collaboration bottleneck

AI labs often treat the ability for AI to work autonomously as the model's most important capability. As a result, today's models and interfaces aren't optimized for humans to remain in the loop. A recent frontier model card states: "Importantly, we find that when used in an interactive, synchronous, 'hands-on-keyboard' pattern, the benefits of the model were less clear. When used in this fashion, some users perceived [our model] as too slow and did not realize as much value. Autonomous, long-running agent harnesses better elicited the model's coding capabilities."

Autonomous interfaces are valuable, but in most real work, users can't fully specify their requirements upfront and walk away—good results benefit from a collaborative process where the human stays in the loop, clarifying and giving feedback along the way. However, humans increasingly get pushed out not because the work doesn't need them, but because the interface has no room for them. Instead, people are most effective when they can collaborate with AI the same way we do with other people: messaging, talking, listening, seeing, showing, and interjecting as needed—and for the model to do the same.

In order to resolve this, we need to move beyond today's turn-based interface to models. Today's models experience reality in a single thread. Until the user finishes typing or speaking, the model waits with no perception of what the user is doing or how the user is doing it. While the model is generating, its perception is frozen: it receives no new information until it finishes or is interrupted. This creates a narrow channel for human-AI collaboration that limits how much of a person's knowledge, intent, and judgement can reach the model, and how much of the model's work can be understood. Picture trying to resolve a crucial disagreement over email rather than in person.

At Thinking Machines, we believe we can solve this bandwidth bottleneck by making AI interactive in real time across any modality. This enables AI interfaces to meet humans where they are, rather than forcing humans to contort themselves to AI interfaces.

Most existing AI models bolt on interactivity with a harness: stitching components together to emulate interruptions, multimodality, or concurrency. However, existing research suggests that these hand-crafted systems will be outpaced by the advance of general capabilities. For interactivity to scale with intelligence, it must be part of the model itself. With this approach, scaling a model makes it smarter and a better collaborator.

Capabilities

Having interactivity be part of the model unlocks a variety of capabilities that would otherwise need to be implemented in the harness.

- Seamless dialog management. The model implicitly tracks whether the speaker is thinking, yielding, self-correcting, or inviting a response. There is no separate dialog management component.

- Verbal and visual interjections. The model jumps in as needed depending on the context, not only when the user finishes speaking.

- Simultaneous speech. The user and the model can speak concurrently (e.g. live translation).

- Time-awareness. The model has a direct sense of elapsed time.

- Simultaneous tool calls, search, and generative UI. While speaking and listening to the user, the model can concurrently search, browse the web, or generate UI, weaving results back into the conversation as needed.

In a longer real session, all of this happens continuously, creating an experience that feels more like collaborating and less like prompting.

Our approach

An interaction model is in constant two-way exchange with the user—perceiving and responding at the same time. Some domains take such interactivity as a given—the physical world demands that robotics and autonomous vehicles operate in real time. Audio full-duplex models are another example where interaction is bidirectional and continuous.

Applying the same principle, we set out to build an interaction model native to this regime—one that perceives and responds in the same continuous loop, across audio, video, and text. The result is a system architected around two ideas: a time-aware interaction model that maintains real-time presence, and an asynchronous background model that handles sustained reasoning, tool use, and longer-horizon work.

System overview

The interaction model is in constant exchange with the user. When a task requires deeper reasoning than can be produced instantaneously, the interaction model delegates to a background model that runs asynchronously. The interaction model remains present throughout — answering follow-ups, taking new input, holding the thread — and integrates background results into the conversation as they arrive.

This split lets the user benefit from both responsiveness as well as the full extent of intelligence: the planning, tool-use, and agentic workflows of reasoning models at the response latency of non-thinking ones. Note that both the background and interaction models are intelligent — on its own, the interaction model is also competitive on both interactive and intelligence benchmarks.
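The split between the two models can be sketched as an asyncio loop. This is a toy under stated assumptions: `background_model`, the `research:` prefix that triggers delegation, and the timings are all hypothetical (in the article, the interaction model itself decides when to delegate), and micro-turns are compressed to 10 ms so the demo runs quickly. What it shows is the structure: the loop keeps answering every micro-turn while delegated work runs concurrently, and finished results are folded in at the next micro-turn.

```python
import asyncio
from typing import List


async def background_model(context: str, delay_s: float) -> str:
    """Hypothetical stand-in for the slower reasoning/tool-use model."""
    await asyncio.sleep(delay_s)  # pretend to think, search, call tools
    return f"answer based on <{context}>"


async def interaction_loop(user_inputs: List[str], tick_s: float = 0.01) -> List[str]:
    """Handle one input per micro-turn without ever blocking on the background model.

    An empty string means the user said nothing during that micro-turn.
    Inputs starting with "research:" are delegated (a made-up trigger).
    """
    transcript: List[str] = []
    pending: List[asyncio.Task] = []
    for step, text in enumerate(user_inputs):
        if text.startswith("research:"):
            # Delegate with the whole conversation so far, not a standalone query.
            context = " | ".join(transcript + [text])
            pending.append(asyncio.create_task(background_model(context, 3 * tick_s)))
            transcript.append(f"[{step}] ok, looking into that")
        elif text:
            transcript.append(f"[{step}] reply to {text!r}")
        # Fold in any background results that finished since the last micro-turn.
        for task in [t for t in pending if t.done()]:
            pending.remove(task)
            transcript.append(f"[{step}] update: {task.result()}")
        await asyncio.sleep(tick_s)  # one (compressed) micro-turn passes
    for task in pending:  # session ends: drain remaining background work
        result = await task
        transcript.append(f"[end] update: {result}")
    return transcript


if __name__ == "__main__":
    inputs = ["hi", "research: cheap flights to Lisbon", "also, what time is it?", "", "", "", ""]
    for line in asyncio.run(interaction_loop(inputs)):
        print(line)
```

The user's follow-up at step 2 is answered immediately even though the delegated task is still running, which is the property the split is meant to preserve.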

The interaction model

Our starting point is continuous audio and video — modalities that are inherently real-time. Text can wait, but a live conversation cannot. By designing around the hardest case first, we arrive at an architecture that is natively multimodal, time-aware, and capable of handling concurrent input and output streams across all modalities. Several design choices make this possible.

Time-aligned micro-turns. The interaction model works with micro-turns continuously interleaving the processing of 200ms worth of input and generation of 200ms worth of output. Rather than consuming a complete user-turn and generating a complete response, both input and output tokens are treated as streams. Working with 200ms chunks of these streams enables near real-time concurrency of multiple input and output modalities.
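A minimal sketch of the time-aligned micro-turn layout, assuming a toy representation in which both streams are lists of `(timestamp_ms, token)` pairs. The helpers `bucket` and `interleave` are hypothetical; they only show how 200 ms windows of input and output could be laid out as one alternating sequence, not how the model tokenizes audio or video.

```python
from typing import Dict, List, Tuple

CHUNK_MS = 200  # micro-turn length from the article

Event = Tuple[int, str]  # (timestamp in ms, token); a toy representation


def bucket(stream: List[Event], chunk_ms: int = CHUNK_MS) -> Dict[int, List[str]]:
    """Group timestamped tokens into consecutive chunk_ms windows."""
    windows: Dict[int, List[str]] = {}
    for t_ms, tok in stream:
        windows.setdefault(t_ms // chunk_ms, []).append(tok)
    return windows


def interleave(inputs: List[Event], outputs: List[Event],
               chunk_ms: int = CHUNK_MS) -> List[Tuple[int, str, List[str]]]:
    """One sequence alternating input and output chunks, window by window.

    Every window gets both slots, even when empty, so silence is observed
    rather than skipped and the model gets a chance to act every micro-turn.
    """
    ins, outs = bucket(inputs, chunk_ms), bucket(outputs, chunk_ms)
    last = max(list(ins) + list(outs), default=-1)
    seq: List[Tuple[int, str, List[str]]] = []
    for w in range(last + 1):
        seq.append((w, "in", ins.get(w, [])))    # perceived during window w
        seq.append((w, "out", outs.get(w, [])))  # emitted for window w
    return seq


user = [(0, "how"), (150, "do"), (420, "I")]   # user keeps talking
model = [(250, "mm-hm"), (450, "so")]          # model backchannels, then starts answering
for row in interleave(user, model):
    print(row)
```

Because every window has an input slot and an output slot, the user and the model can both be active in the same window (window 2 here), which is what allows simultaneous speech without a turn-boundary predictor.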

With this design, there are no artificial turn boundaries that the model must adhere to. In contrast, most existing real-time systems require a harness that predicts turn boundaries so that turn-based models feel real-time and responsive. This harness is built from components like voice-activity detection (VAD) that are meaningfully less intelligent than the model itself, which precludes a variety of interaction modes such as proactive interjections ("interrupt when I say something wrong") or reactions to visual cues ("tell me when I've written a bug in my code"). Without such a harness, the model can also speak while listening ("translate from Spanish to English live") or while watching ("live-commentate this sports game").

Thus, all of these interaction modes that require special harnesses today become special cases of what the model can do, and they improve in quality as we scale up model size and training data.

Encoder-free early fusion. Rather than processing audio and video through large, standalone encoders, we opt for a system with minimal pre-processing. Many omnimodal models require training a separate encoder (e.g. Whisper-like) or decoder (e.g. a TTS-like model). We instead take in audio signals as dMel and transform them via a lightweight embedding layer. Images are split into 40x40 patches, which are encoded by an hMLP. For the audio decoder, we use a flow head. All components are co-trained from scratch together with the transformer.
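The patch split in front of the hMLP can be sketched as follows. This covers only the tiling step, assuming a single-channel image whose sides are multiples of 40; the hMLP that then maps each tile to an embedding is not shown, and the function name `patchify` is hypothetical.

```python
from typing import List

PATCH = 40  # patch side length from the article


def patchify(image: List[List[float]], patch: int = PATCH) -> List[List[float]]:
    """Split an H x W single-channel image into flattened patch x patch tiles.

    Tiles are returned in row-major order (left to right, top to bottom).
    """
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("sketch assumes H and W are multiples of the patch size")
    tiles: List[List[float]] = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            tiles.append([image[r][c]
                          for r in range(top, top + patch)
                          for c in range(left, left + patch)])
    return tiles


img = [[float(r * 1000 + c) for c in range(120)] for r in range(80)]  # 80 x 120 toy image
tiles = patchify(img)
print(len(tiles), len(tiles[0]))  # 6 1600
```

With RGB input, each tile would hold 40 x 40 x 3 = 4,800 values, and a 640 x 480 frame would yield 16 x 12 = 192 tiles.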

Inference optimization. At inference time, 200ms chunks require frequent prefills and decodes of small sizes, each having to meet strict latency constraints. Unfortunately, existing LLM inference libraries are not optimized for frequent small prefills—they often have a significant amount of overhead per turn. To address this, we implemented streaming sessions. The client sends each 200ms chunk as a separate request, while the inference server appends these chunks into a persistent sequence in GPU memory. This avoids frequent memory reallocations and metadata computations, and we've upstreamed a version of this feature to SGLang. In addition, we also optimized our kernels for latency as well as the shapes we see for bidirectional serving. For example, we use a gather+gemv strategy for MoE kernels instead of the standard grouped gemm.
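The streaming-session idea can be illustrated with a toy class. `StreamingSession` is hypothetical and is not SGLang's API; a Python list stands in for GPU memory reserved when the session opens. It shows the two properties the paragraph describes: chunks are appended in place without reallocating the sequence, and each request only needs to prefill the newly appended positions.

```python
from typing import List


class StreamingSession:
    """Toy model of a persistent per-client sequence (hypothetical, not SGLang's API).

    Capacity is reserved once when the session opens. Each 200 ms chunk is
    written in place after the existing tokens, and only the new positions
    need a prefill; earlier positions keep their cached state.
    """

    def __init__(self, capacity: int) -> None:
        self.buf: List[int] = [0] * capacity  # stands in for memory reserved up front
        self.length = 0                        # number of valid tokens so far

    def append_chunk(self, tokens: List[int]) -> range:
        """Append one chunk and return the positions that still need prefill."""
        end = self.length + len(tokens)
        if end > len(self.buf):
            # Long sessions eventually need context management.
            raise MemoryError("session exceeded its reserved capacity")
        self.buf[self.length:end] = tokens  # same-length slice write: no resize
        new_positions = range(self.length, end)
        self.length = end
        return new_positions


session = StreamingSession(capacity=8)
print(list(session.append_chunk([11, 12, 13])))  # [0, 1, 2]
print(list(session.append_chunk([14, 15])))      # [3, 4]
```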

Trainer-sampler alignment. We've found bitwise trainer-sampler alignment to be useful for training stability as well as debugging the various components of our system. We implement batch-invariant kernels with minimal (<5%) e2e performance overhead. To highlight two particular kernels:

- All-reduce and reduce-scatter: We use NVLS to implement low-latency comm kernels which are deterministic on Blackwell, and achieve bitwise alignment between somewhat different parallelism strategies (i.e. Sequence Parallelism and Tensor Parallelism).

- Attention: The primary challenge with attention is Split-KV, which typically leads to inconsistent accumulation orders between decode and prefill. However, we can keep the accumulation order consistent by splitting the KV sequence the same way in decode and prefill. For example, we could have SMs process left-aligned blocks of 4096 tokens at a time, achieving good efficiency in both prefill and decode.
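Why consistent split boundaries matter can be shown with floating-point addition, which is not associative. In this sketch a plain sum stands in for the rescaled merge of partial attention outputs, the chunk size is 2 instead of 4096, and the function names are hypothetical. Splitting by a count that depends on the launch configuration moves the boundaries and can change the bits of the result, while left-aligned fixed-size chunks combined in a fixed order give the same bits regardless of how many workers (SMs) share the work.

```python
import math
from typing import List


def split_sum_fixed(values: List[float], chunk: int, num_workers: int) -> float:
    """Sum using left-aligned chunks [0, chunk), [chunk, 2*chunk), ...

    Boundaries do not depend on num_workers, and partial sums are combined in
    chunk order, so the result is bitwise identical however chunks are
    distributed across workers.
    """
    starts = list(range(0, len(values), chunk))
    partials = [0.0] * len(starts)
    for worker in range(num_workers):  # round-robin chunk assignment
        for i in range(worker, len(starts), num_workers):
            acc = 0.0
            for v in values[starts[i]:starts[i] + chunk]:
                acc += v
            partials[i] = acc
    total = 0.0
    for p in partials:  # fixed combination order
        total += p
    return total


def split_sum_by_count(values: List[float], num_splits: int) -> float:
    """Sum with the chunk size derived from the split count, so boundaries move."""
    return split_sum_fixed(values, math.ceil(len(values) / num_splits), num_workers=1)


vals = [1e16, 1.0, -1e16, 1.0]  # chosen so float rounding exposes the order
print(split_sum_by_count(vals, 1), split_sum_by_count(vals, 2))   # 1.0 0.0
print(split_sum_fixed(vals, 2, 1) == split_sum_fixed(vals, 2, 3))  # True
```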

Coordination between interaction and background models. When the interaction model delegates, it sends a rich context package — not a standalone query, but the full conversation. Results stream back as the background model produces them, and the interaction model interleaves these updates into the conversation at a moment appropriate to what the user is currently doing, rather than as an abrupt context switch.

Safety. Because real-time interaction stresses safety differently than turn-based exchanges, our safety work focused on two axes: modality-appropriate refusals and long-horizon robustness. To make refusals colloquial in speech, we use a text-to-speech model to generate refusal and over-refusal training data covering a range of disallowed topics, with the refusal boundary calibrated to favor naturally-phrased, but no less firm, refusals. To improve robustness across extended speech-to-speech conversations, we used an automated red-teaming harness to generate multi-turn refusal data, while maintaining close behavioral parity with the model's text-based refusals.

Benchmarks

Intelligence and interactivity frontier

We show that our interaction model, named TML-Interaction-Small, is the first model that has both strong intelligence/instruction following and interactivity. To measure interaction quality, we use FD-bench, one of the few existing benchmarks intended to measure interactivity. In FD-bench v1.5, the model is given prerecorded audio and must respond at certain times. This benchmark measures model behavior across several scenarios: user interruption, user backchannel, talking to others, and background speech. Our model scores well in all of these areas. To quantify intelligence, we use Audio MultiChallenge, a common benchmark that tracks intelligence and instruction following.

For more intelligence, safety, and interactivity/latency results please see the table below. We report our performance on both streaming and turn-based benchmarks.

| Setting | Benchmark · Modality | TML-interaction-small | GPT-realtime-2.0 (minimal) | GPT-realtime-1.5 | Gemini-3.1-flash-live (minimal) | Qwen 3.5 OMNI-plus-realtime | GPT-realtime-2.0 (xhigh) | Gemini-3.1-flash-live (high) |
|---|---|---|---|---|---|---|---|---|
| | Mode | Instant | Instant | Instant | Instant | Instant | Thinking | Thinking |
| Streaming | FD-bench V1 turn-taking latency (s) · Audio | 0.40 | 1.18 | 0.59 | 0.57 | 2.14 | 1.63 | 0.94 |
| Streaming | FD-bench V1.5 average · Audio | 77.8 | 46.8 | 48.3 | 54.3 | 39.0 | 47.8 | 45.5 |
| Streaming | FD-bench V3 response quality (%) / Pass@1 (%) · Audio + Tools | 82.8* / 68.0* | 80.0 / 52.0 | 77.9 / 55.0 | 68.5 / 48.0 | 60.0 / 50.0 | 81.0 / 58.0 | 71.4 / 48.0 |
| Streaming | QIVD** accuracy (%) · Video + Audio | 54.0 | 57.5 | 41.2 | 54.7 | 59.0 | 58.2 | 56.1 |
| Turn-based | Audio MultiChallenge APR (%) · Audio | 43.4 | 37.6 | 34.7 | 26.8 | -*** | 48.5 | 36.1 |
| Turn-based | BigBench Audio accuracy (%) · Audio | 75.7 / 96.5* | 71.8 | 81.4 | 71.3 | 73.0 | 96.6**** | 96.6 |
| Turn-based | IFEval (VoiceBench) accuracy (%) · Audio | 82.1 | 81.7 | 68.1 | 67.6 | 80.3 | 83.2 | 82.8 |
| Turn-based | IFEval accuracy (%) · Text | 89.7 | 89.6 | 87.5 | 85.8 | 83.4 | 95.2 | 90.0 |
| Turn-based | Harmbench refusal rate (%) · Text | 99.0 | 99.5 | 100.0 | 99.0 | 99.5 | 100.0 | 98.0 |

* For benchmarks that require reasoning or tool calls, we report our results with the background agent enabled.

** Qualcomm IVD (QIVD) is a video-audio QA benchmark: in each video clip, someone performs an action and speaks a question. We evaluate it in a streaming setting, sending the raw clip from the beginning and grading the model's transcript. Following Qwen 3.5 Omni, we use a GPT-4o-mini grader.

*** Audio MultiChallenge results for all baseline models are taken from Scale AI's reports, which do not list Qwen 3.5 OMNI-plus-realtime.

**** BigBench Audio results for all baseline models are taken from Artificial Analysis, which reports GPT-realtime-2.0 with thinking set to high (rather than xhigh).

New dimensions of interactivity

The existing interactivity-oriented benchmarks above do not adequately capture the qualitative jumps in interaction capabilities we notice. To that end, we have some early work aimed at quantifying these capabilities.

Time awareness and simultaneous speech. Turn-based models with a dialog management system do not support accurate time estimation or simultaneous speech. Examples include: "How long did it take me to run one mile?", "Correct my mispronunciations as you hear them" or "How long did it take me to write this function?"

We created two internal benchmarks to measure these proactive audio capabilities:

- TimeSpeak: Tests whether the model can initiate speech at user-specified times while producing the correct content. For example: "I want to practice my breathing, remind me to breathe in and out every 4 seconds until I ask you to stop."

- CueSpeak: Tests whether the model speaks at the appropriate moment with the expected, semantically correct response. Dataset entries are constructed so that the model needs to speak at the same time as the user to get a full score. For example: "Every time I code-switch and use another language, give me the correct word in the original language."

For both benchmarks, each example has a single expected semantic response and timing window. We grade with an LLM judge: A response is counted as correct only if it conveys the expected meaning and is delivered at the appropriate time; failing either criterion receives no credit. We report macro-averaged accuracy across examples.
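The grading rule can be sketched as below. The LLM judge is stubbed as a boolean, and the `category` field used for macro-averaging is an assumption: the article says results are macro-averaged but does not name the grouping. The class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Example:
    category: str                # hypothetical grouping key for macro-averaging
    window: Tuple[float, float]  # acceptable start/end time (s) for the response
    spoke_at: Optional[float]    # when the model delivered it; None if it stayed silent
    meaning_ok: bool             # LLM-judge verdict on the content (stubbed here)


def score(ex: Example) -> int:
    """All-or-nothing: credit only if the meaning is right AND the timing is right."""
    on_time = ex.spoke_at is not None and ex.window[0] <= ex.spoke_at <= ex.window[1]
    return int(ex.meaning_ok and on_time)


def macro_accuracy(examples: List[Example]) -> float:
    """Mean of per-category accuracies, so each category counts equally."""
    by_cat: Dict[str, List[int]] = {}
    for ex in examples:
        by_cat.setdefault(ex.category, []).append(score(ex))
    return sum(sum(s) / len(s) for s in by_cat.values()) / len(by_cat)
```

A response that says the right thing two seconds late scores the same as silence, which is what makes these benchmarks hard for turn-based systems.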

Visual proactivity. Today's commercial real-time APIs perform turn detection via audio-only dialogue management harnesses. They respond to spoken turns, but they cannot proactively choose to speak when the visual world changes. For instance, if asked "Please count how many pushups I do," such a system might respond "Sure thing!" and then remain silent, waiting for an audio-only cue that never comes. Several academic papers have built related research prototypes, including StreamBridge, Streamo, StreamingVLM, and MMDuet2, which study when to output text in a streaming video input setting. Because they output text, they do not address the additional constraints of speech-output interaction: speech has duration, can overlap with the user, and must be coordinated with turn-taking, interruptions, and backchanneling. Closest to ours is AURA, which adds an ASR/TTS demo around a VideoLLM that decides when to emit text or stay silent; in contrast, ours is speech-native and full-duplex.

We adapted three benchmarks to evaluate visual proactivity of our model:

- RepCount-A contains videos of repeated actions and is adapted into an online counting task. We stream the video following an audio instruction, "Please count out reps for {action}." We extract the last number the model says after the ground-truth penultimate rep and count it as correct if it is within one rep of the ground truth. This task measures continuous visual tracking and timely counting.

- ProactiveVideoQA consists of videos with questions, whose answers become available at specific moments. We stream the question in audio and then the video. We report the paper's turn-weighted PAUC@ω=0.5 metric (scaled 0-100), averaged across turns and categories. Staying silent scores 25.0. Higher scores require correct answers at the correct times and incorrect answers are penalized.

- Charades is a standard temporal action-localization benchmark. Each video contains an action occurring over a labeled time interval. We stream a user audio instruction: "Say 'start' when the person starts doing {action} then say 'Stop' when they stop."; then we stream the video. The model is graded by temporal IoU between the predicted and reference intervals.
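The two scoring rules that need more than a lookup, temporal IoU for Charades and the off-by-one check for RepCount-A, can be sketched as follows. The function names are hypothetical, and for Charades the predicted interval is assumed to run from when the model says "start" to when it says "stop".

```python
from typing import List, Optional, Tuple

Interval = Tuple[float, float]  # (start_s, end_s)


def temporal_iou(pred: Optional[Interval], ref: Interval) -> float:
    """Intersection over union of two time intervals; no prediction scores 0."""
    if pred is None:
        return 0.0
    inter = max(0.0, min(pred[1], ref[1]) - max(pred[0], ref[0]))
    union = (pred[1] - pred[0]) + (ref[1] - ref[0]) - inter
    return inter / union if union > 0 else 0.0


def mean_iou(preds: List[Optional[Interval]], refs: List[Interval]) -> float:
    """mIoU over a set of videos, one predicted and one reference interval each."""
    return sum(temporal_iou(p, r) for p, r in zip(preds, refs)) / len(refs)


def repcount_correct(spoken: List[Tuple[float, int]], penultimate_rep_s: float,
                     true_count: int) -> bool:
    """Take the last number spoken after the penultimate ground-truth rep.

    `spoken` holds (time_s, number) pairs in chronological order; the answer
    is correct if it is within one rep of the ground truth.
    """
    after = [n for t, n in spoken if t > penultimate_rep_s]
    return bool(after) and abs(after[-1] - true_count) <= 1
```

A model that never says "start" and "stop" contributes an IoU of 0 for that video, which is how a silent baseline ends up at 0 mIoU.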

| Capability | Benchmark · Metric | TML-interaction-small | GPT-realtime-2.0 (minimal) |
|---|---|---|---|
| Time awareness | TimeSpeak · macro-acc | 64.7 | 4.3 |
| Verbal cues trigger | CueSpeak · macro-acc | 81.7 | 2.9 |
| Visual-based counting | RepCount-A · off-by-one | 35.4 | 1.3 |
| Visual cues trigger | ProactiveVideoQA · PAUC@ω=0.5 | 33.5 | 25.0* |
| Visual cues trigger | Charades · mIoU | 32.4 | 0 |

* No-response baseline on ProactiveVideoQA is 25.0

No existing model can meaningfully perform any of these tasks. For completeness, we report the results of GPT-realtime-2.0 (minimal), but all models we evaluated, including high-thinking configurations, perform similarly or worse on these tasks: they stay silent or give incorrect answers.

Future evals. We believe that interactivity is an important area for future research and we invite the community to contribute benchmarks here. We are launching a research grant to encourage more research into the field of interaction models and human-AI collaboration, including but not limited to new frameworks for assessing interactivity quality, with details coming soon.

Limitations and future work

Long sessions. Continuous audio and video accumulate context quickly. The streaming-session design handles short and medium interactions well, but very long sessions still require careful context management—an active area of work.

Compute and deployment. Streaming audio and video at low latency requires reliable connectivity. Without a good connection, the experience degrades significantly. We believe this can be improved in the future, both by improving system reliability and by training our model to be more robust to delayed frames.

Alignment and safety. A realtime interface opens up an exciting area of research for both alignment and safety. We are collecting feedback and reviewing research grants.

Scaling model size. The current TML-Interaction-Small is a 276B-parameter MoE with 12B active parameters. While we expect interactivity to improve with model scale, our larger pretrained models are currently too slow to serve in this setting. We plan to release larger models later this year.

Improved background agents. Although we have primarily focused on real-time interactivity in this post, agentic intelligence is also an essential capability. In addition to pushing agentic intelligence to the frontier, we believe we have just scratched the surface in how the background agents can work together with the interaction model.

Citation

Please cite this work as:

Thinking Machines Lab, "Interaction Models: A Scalable Approach to Human-AI Collaboration",

Thinking Machines Lab: Connectionism, May 2026.

Or use the BibTeX citation:

@article{thinkingmachines2026interactionmodels,

author = {Thinking Machines Lab},

title = {Interaction Models: A Scalable Approach to Human-AI Collaboration},

journal = {Thinking Machines Lab: Connectionism},

year = {2026},

month = {May},

note = {https://thinkingmachines.ai/blog/interaction-models/},

doi = {10.64434/tml.20260511},

}

TanStack npm Packages Compromised in Ongoing Mini Shai-Hulud Supply-Chain Attack

84 TanStack npm packages with 12M+ weekly downloads were compromised in a supply-chain attack, forcing TanStack to deprecate affected versions and harden their GitHub Actions workflow.


Decoder

- Supply-chain attack: Malicious code injected into legitimate software packages during the build or distribution process, compromising all downstream users who install the package.

- GitHub Actions cache: GitHub's workflow caching mechanism that can be exploited to persist malicious code across workflow runs if not properly secured with repository-owner guards.


GemStuffer Campaign Abuses RubyGems as Exfiltration Channel Targeting UK Local Government

GemStuffer abuses RubyGems as an exfiltration channel, packaging scraped UK council portal data into junk gems published from new accounts.

Google's Gemini Omni video model surfaces ahead of I/O debut

Google's Gemini Omni video model leaked with unusually strong editing features but trailing ByteDance's Seedance 2 on raw cinematic quality.


Deep Dive

- Google's Gemini Omni video model briefly appeared to users in a revised Gemini interface, described as "meet our new video model, remix your videos, edit directly in chat, try templates, and more"

- The leak appears to have been either accidental or part of a limited A/B test, surfacing ahead of Google I/O 2026 (May 19-20)

- Early testing shows the model excels at video editing tasks: watermark removal, object swapping within clips, and scene rewriting via chat instructions

- Raw generation quality trails ByteDance's Seedance 2, with reviewers noting cinematic fidelity is "a step behind the current benchmark leader"

- Google appears to be following the Nano Banana playbook: Nano Banana launched with middling generation scores but topped editing leaderboards, then was upgraded to frontier-level quality

- The model is expected to ship in tiered variants, likely Flash and Pro, with circulating outputs likely from the Flash tier

- A new usage limits tab appeared in settings, with users reporting video generation burns through credits quickly, suggesting metered usage

- The strategy prioritizes modality unification under Gemini over raw quality leadership at launch

- Timing aligns with Google I/O's pattern of unveiling major AI initiatives, with the pre-event leak allowing Google to gather reactions before the keynote

Decoder

- Nano Banana: Google's native image generation and editing model that launched in Gemini, initially with modest generation quality but strong editing capabilities, later upgraded to frontier-level performance

- Seedance 2: ByteDance's video generation model, currently considered the benchmark leader for cinematic quality in AI-generated video

Original Article

Google's Gemini Omni Video Model Surfaces Ahead of I/O

Fresh signals around Google's upcoming Gemini Omni video model surfaced over the weekend, with Reddit users posting screenshots of a revised Gemini interface exposing the new model card. The description read "Create with Gemini Omni: meet our new video model, remix your videos, edit directly in chat, try templates, and more," appearing to confirm the long-rumored unified approach Google has been preparing ahead of next week's developer event. The rollout looked either accidental or part of a limited A/B test.

Sample video and early feedback 👀

I won't lie, this is one of the best video models I have seen, maybe not *the* best, but a really strong performance. I was particularly impressed by the prompt adherence (except for the one shot with the missing centerpiece), the model…

Alongside the model card, users spotted a new usage limits tab inside settings, and several reported that video generation burned through credits fast, hinting at a metered system similar to what Google has been testing across Gemini surfaces. Early outputs drew mixed reactions. On raw generation fidelity, Omni appears to lag behind ByteDance's Seedance 2, with viewers noting that the cinematic quality is a step behind the current benchmark leader. Where the model stood out was in editing: removing watermarks, swapping objects within clips, and rewriting scenes via chat instructions all worked unusually well for a first public glimpse.

GOOGLE 🔥: An upcoming Gemini Omni video model from Google is expected to be much more advanced in video editing, capable of completing tasks like removing watermarks, replacing objects in the video, and more.

It is also likely that Google will release 2 versions of this model,…

That pattern mirrors Nano Banana, which launched as a native image model on Gemini, debuted with middling generation scores but topped editing leaderboards, and was later upgraded into a frontier image system. Google appears to be running the same playbook for video, prioritizing modality unification under Gemini over raw quality leadership at launch. There are also hints that Omni will ship in tiered variants, likely Flash and Pro, with the outputs circulating now most likely coming from the Flash tier.

Google keeps preparing its upcoming Gemini Omni models for the release.

Gemini Omni model will be available on APIs as well

The model will be considered as Agent, similarly to Deep Research on AI Studio

Soon? 👀

P. S. Just a reminder that Nano Banana 1 wasn't better than…

The timing fits neatly with Google I/O on May 19 and 20, where the company has a track record of unveiling its most ambitious AI shifts. A short pre-event window paired with a controlled leak gives Google room to gather reactions and shape the narrative before the keynote.

The Inference Shift

Cerebras' surging IPO signals AI inference splitting into speed-optimized answer chips for humans and memory-heavy agentic chips for autonomous work without humans waiting.


Deep Dive

- Cerebras is reportedly considering raising its IPO price range to $150-160/share (up from $115-125) and increasing the shares marketed to 30 million (from 28 million) amid surging demand for AI chips beyond GPUs

- The WSE-3 uses wafer-scale integration (an entire 300mm wafer as a single chip) with 44GB of on-chip SRAM at 21 PB/s, versus the H100's 80GB of HBM at 3.35 TB/s: roughly 6,000x the bandwidth, but 55% of the memory capacity

- Inference has three parts: prefill (parallel, compute-bound), decode reading KV cache (serial, bandwidth-bound with variable memory), and decode over model weights (serial, bandwidth-bound with fixed memory)

- Cerebras excels when models and KV caches fit entirely in on-chip memory, delivering dramatically faster token generation for human-facing applications like voice interfaces and AI wearables

- Ben Thompson argues inference is splitting into two categories: answer inference (human-facing, latency-critical, optimized for token speed) and agentic inference (machine-to-machine, latency-tolerant, optimized for memory capacity)

- Agentic inference doesn't need cutting-edge speed because agents work autonomously without humans waiting, making slower, cheaper memory (DRAM rather than HBM) and older chip nodes economically rational

- This architectural split favors different players: Nvidia for training (compute + HBM + networking), Cerebras/Groq for answer inference (extreme speed), and potentially simpler/cheaper systems for agentic inference (memory hierarchy + good-enough compute)

- Nvidia is responding with Dynamo inference framework, standalone memory racks, and CPU racks to disaggregate inference workloads and keep expensive GPUs busy

- Implications: China has sufficient older-node chips for agentic inference (circumventing cutting-edge compute restrictions), space data centers become viable with cooler/simpler older nodes, and Nvidia's architectural premium may erode in the largest inference market (agentic)

- SpaceX contracted Anthropic for 300+ megawatts at Colossus 1 (220,000+ Nvidia GPUs), demonstrating that training and inference still share GPU infrastructure when models aren't heavily used
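A back-of-the-envelope calculation shows why bandwidth dominates decode. If each generated token requires reading all model weights once, single-stream decode speed is capped at bandwidth divided by bytes read per token. The bandwidth figures below are the article's; the 16B-parameter, 16-bit model is a hypothetical example, and the bound ignores KV-cache reads, compute limits, and batching (which amortizes each weight read across many streams).

```python
def decode_tokens_per_s_bound(bytes_read_per_token: float, bandwidth_bytes_per_s: float) -> float:
    """Roofline-style ceiling for one decode stream that must read
    `bytes_read_per_token` from memory for every generated token."""
    return bandwidth_bytes_per_s / bytes_read_per_token


H100_HBM_BW = 3.35e12  # 3.35 TB/s (from the article)
WSE3_SRAM_BW = 21e15   # 21 PB/s (from the article)

# Hypothetical model: 16B dense parameters at 2 bytes each = 32 GB of weights,
# small enough to sit in either the H100's 80 GB HBM or the WSE-3's 44 GB SRAM.
weights = 16e9 * 2

h100 = decode_tokens_per_s_bound(weights, H100_HBM_BW)
wse3 = decode_tokens_per_s_bound(weights, WSE3_SRAM_BW)
print(f"H100 <= {h100:,.0f} tok/s, WSE-3 <= {wse3:,.0f} tok/s, ratio ~{wse3 / h100:,.0f}x")
```

For this hypothetical model the ceilings come out to about 105 tokens/s on the H100 and about 656,000 tokens/s on the WSE-3, the same ratio (about 6,270x) as the raw bandwidths.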

Decoder

- KV cache: Key-Value cache storing the attention keys and values computed for previous tokens in a language model sequence; it grows with each generated token and must be read in full at every decode step

- Prefill: Initial encoding step in LLM inference that processes the entire input prompt in parallel to populate the KV cache before token generation begins

- Decode: Serial token generation phase where the model alternates between reading the KV cache and model weights through each layer to produce one output token at a time

- HBM (High Bandwidth Memory): Specialized stacked DRAM offering much higher bandwidth than standard memory, used in GPUs to feed data fast enough to keep compute units busy during parallel operations

- Reticle limit: Maximum chip area (~26mm × 33mm) that lithography equipment can pattern in a single exposure, traditionally limiting chip size until Cerebras invented wafer-scale wiring

- Wafer-scale integration: Cerebras' technique of wiring across scribe lines (boundaries between exposures on a silicon wafer) to create a single functional chip from an entire 300mm wafer instead of cutting it into separate dies

- SRAM: Static RAM residing on-chip with extremely low latency but limited capacity and high cost, versus off-chip HBM which offers vastly more capacity at lower bandwidth

- Interposer: Silicon substrate connecting multiple chips to enable chip-to-chip communication, used by Nvidia's B200 to link dies together versus Cerebras' monolithic wafer approach

Original Article

The Inference Shift

If you were looking for the ideal time to IPO, being a chip company in May 2026 is hard to beat. Reuters reported over the weekend:

Cerebras Systems is set to raise the size and price of its initial public offering as soon as Monday, as demand for the artificial intelligence chipmaker's shares continues to climb, two people familiar with the matter told Reuters on Sunday. The company is considering a new IPO price range of $150-$160 a share, up from $115-$125 a share, and raising the number of shares marketed to 30 million from 28 million, said the sources, who asked not to be identified because the information isn't public yet.

The fundamental driver of the ongoing surge in semiconductor stocks is, of course, AI, particularly the realization that agents are going to need a lot of compute. What Cerebras represents, however, is something broader: while the compute story for AI has been largely about GPUs, particularly from Nvidia, the future is going to look increasingly heterogeneous.

The GPU Era

The story of how Graphics Processing Units became the center of AI is a well-trodden one, but in brief:

- Just as drawing pixels on a computer screen is a parallel process, which means there is a direct connection between the number of processing units and graphics speed, AI-related calculations are a parallel process, which means there is a direct connection between the number of processing units and calculation speed.

- Nvidia enabled this dual-usage by making its graphics processors programmable, and created an entire software ecosystem called CUDA to make this programming accessible.

- The big difference between graphics and AI has been the size of the problem being solved — models are a lot bigger than video game textures — which has led to a dramatic expansion in high-bandwidth memory (HBM) per GPU, and dramatic innovations in terms of chip-to-chip networking to allow multiple chips to work together as one addressable system. Nvidia has been the leader in both.

The number one use case for GPUs has been training, which stresses the third point in particular. While the calculations within each training step are massively parallel, the steps themselves are serial: every GPU has to share its results with every other GPU before the next step can begin. This is why a trillion-parameter model needs to fit in the aggregate memory of tens of thousands of GPUs that can communicate as one system. Nvidia dominates both problem spaces, first by securing HBM ahead of the rest of the industry, and second thanks to its investments in networking.

Of course training isn't the only AI workload: the other is inference. Inference has three main parts:

- Prefill encodes everything the LLM needs to know into an understandable state; this is highly parallelizable and compute matters.

- The first part of decode entails reading the KV cache — which stores context, including the output of the prefill step — to make an attention calculation. This is a serial step where bandwidth matters, but the memory requirements are variable and increasingly large.

- The second part of decode is the feed-forward computation over the model weights; this is also a serial step where bandwidth matters, and the memory requirements are defined by the size of the model.
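To make the three parts concrete, here is a toy Python sketch of a single attention layer with a KV cache. It is a hypothetical illustration, not how any production engine works: a one-layer, one-head model with made-up deterministic weights, where each output vector is fed straight back in instead of being sampled as a token. Prefill computes keys and values for every prompt token (work that could run in parallel), while each decode step appends one cache entry, reads the whole cache for attention, and then applies a feed-forward step over the weights.

```python
import math

D = 4  # hypothetical hidden size for a one-layer, one-head toy model


def matvec(w, x):
    """Multiply a D x D matrix (list of rows) by a length-D vector."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def make_weights(seed):
    """Deterministic stand-in for trained weights (hypothetical)."""
    return [[0.5 * math.sin(seed + 0.1 * (i * D + j)) for j in range(D)] for i in range(D)]


W_Q, W_K, W_V, W_FF = (make_weights(s) for s in range(4))


class KVCache:
    """Keys and values for every token seen so far; grows by one entry per token."""

    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, x):
        self.keys.append(matvec(W_K, x))
        self.values.append(matvec(W_V, x))


def attend(q, cache):
    """Softmax attention over the whole cache: every cached key and value is read."""
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(D) for k in cache.keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, cache.values)) / total for i in range(D)]


def forward_last(x, cache):
    """Attention (KV-cache read) followed by a feed-forward step (weight read)."""
    h = attend(matvec(W_Q, x), cache)
    return matvec(W_FF, h)


def prefill(prompt, cache):
    """All prompt tokens are known up front, so their K/V could be computed in parallel."""
    for x in prompt:
        cache.append(x)
    return forward_last(prompt[-1], cache)  # output that seeds the first decode step


def decode_step(x, cache):
    """One token at a time: each step depends on the previous step's output."""
    cache.append(x)
    return forward_last(x, cache)


cache = KVCache()
prompt = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
x = prefill(prompt, cache)
for _ in range(5):
    x = decode_step(x, cache)
print(len(cache.keys))  # 3 prompt entries + 5 decode entries = 8
```

The serial dependency is the point: `decode_step` cannot start until the previous call returns, and every call touches every cache entry, which is why decode is limited by memory bandwidth rather than compute.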

The two decode steps alternate for every layer of the model (they're interleaved, not in sequence), which is to say that decode is serial and memory-bandwidth bound. For every token generated, two distinct memory pools must be read: the KV cache, which stores context and grows with each token, and the model weights themselves. Both must be read in full to produce a single output token.
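A rough way to see why both memory pools matter is to divide the bytes read per generated token by memory bandwidth. The sketch below assumes a hypothetical 8B-parameter model in BF16 with 32 layers, 8 KV heads, and a head dimension of 128, batch size 1, and no compute or caching effects; it gives a bandwidth-only upper bound on tokens per second, not a measured figure.

```python
def kv_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """One key and one value vector per KV head, per layer, for each token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def decode_floor_seconds(weight_bytes, context_tokens, kv_per_token, bandwidth):
    """Lower bound on time per generated token if the weights and the full KV cache
    must be streamed from memory once per token (batch size 1, compute ignored)."""
    kv_bytes = context_tokens * kv_per_token
    return (weight_bytes + kv_bytes) / bandwidth


# Hypothetical 8B-parameter model in BF16 (2 bytes per parameter).
WEIGHT_BYTES = 8e9 * 2
KV_PER_TOKEN = kv_bytes_per_token(n_layers=32, n_kv_heads=8, head_dim=128)
H100_HBM_BW = 3.35e12  # bytes/s, the H100 HBM bandwidth cited in this article

for ctx in (1_024, 32_768, 131_072):
    t = decode_floor_seconds(WEIGHT_BYTES, ctx, KV_PER_TOKEN, H100_HBM_BW)
    kv_gb = ctx * KV_PER_TOKEN / 1e9
    print(f"context {ctx:>7,} tokens: KV cache {kv_gb:5.1f} GB, "
          f"at most {1 / t:5.0f} tokens/s")
```

Under these assumptions the KV cache at a 128K-token context (about 17 GB) is larger than the weights themselves (16 GB), and the bound roughly halves relative to a 1K context. Batching many requests amortizes the weight reads across them, but each request has its own KV cache, so that traffic does not amortize.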

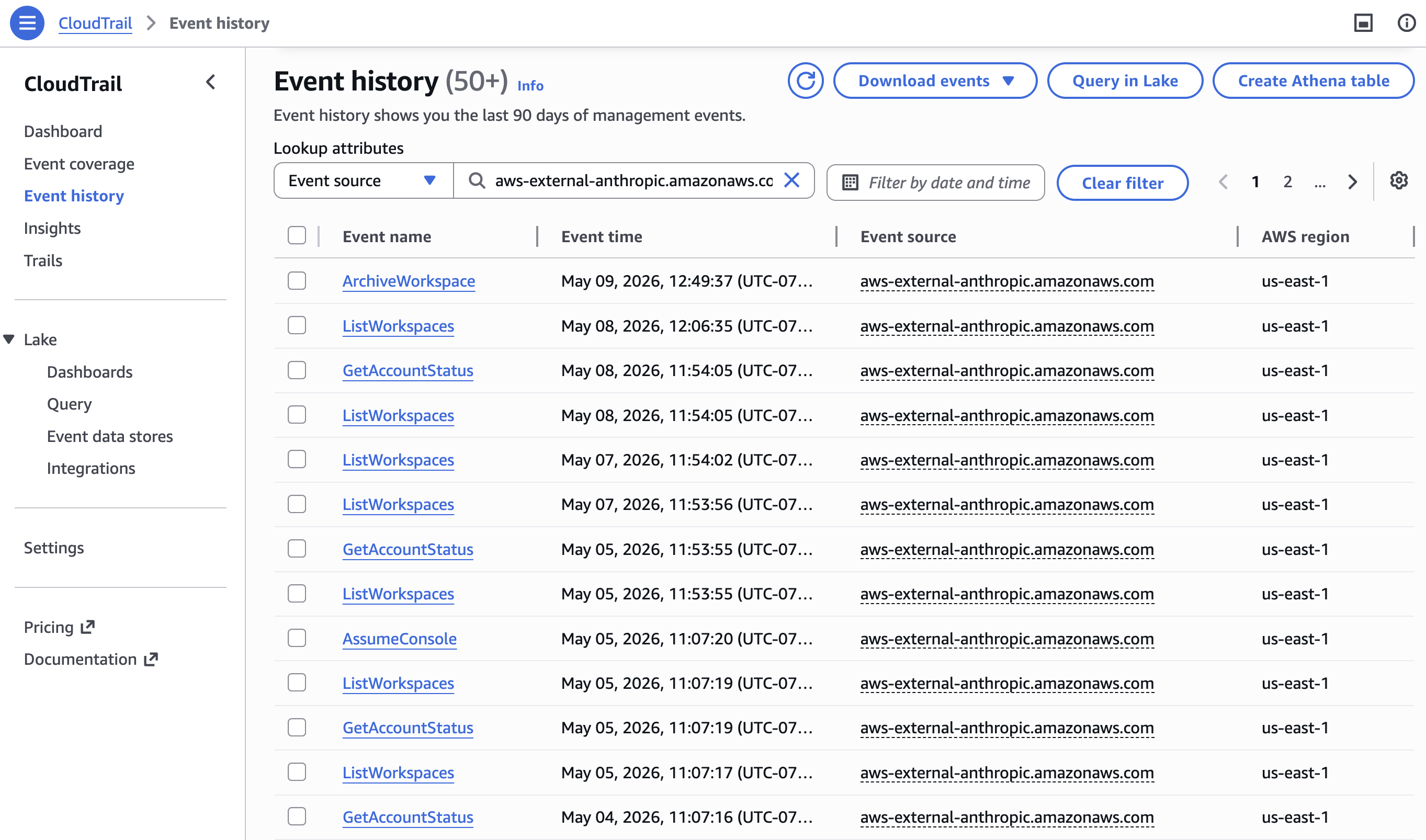

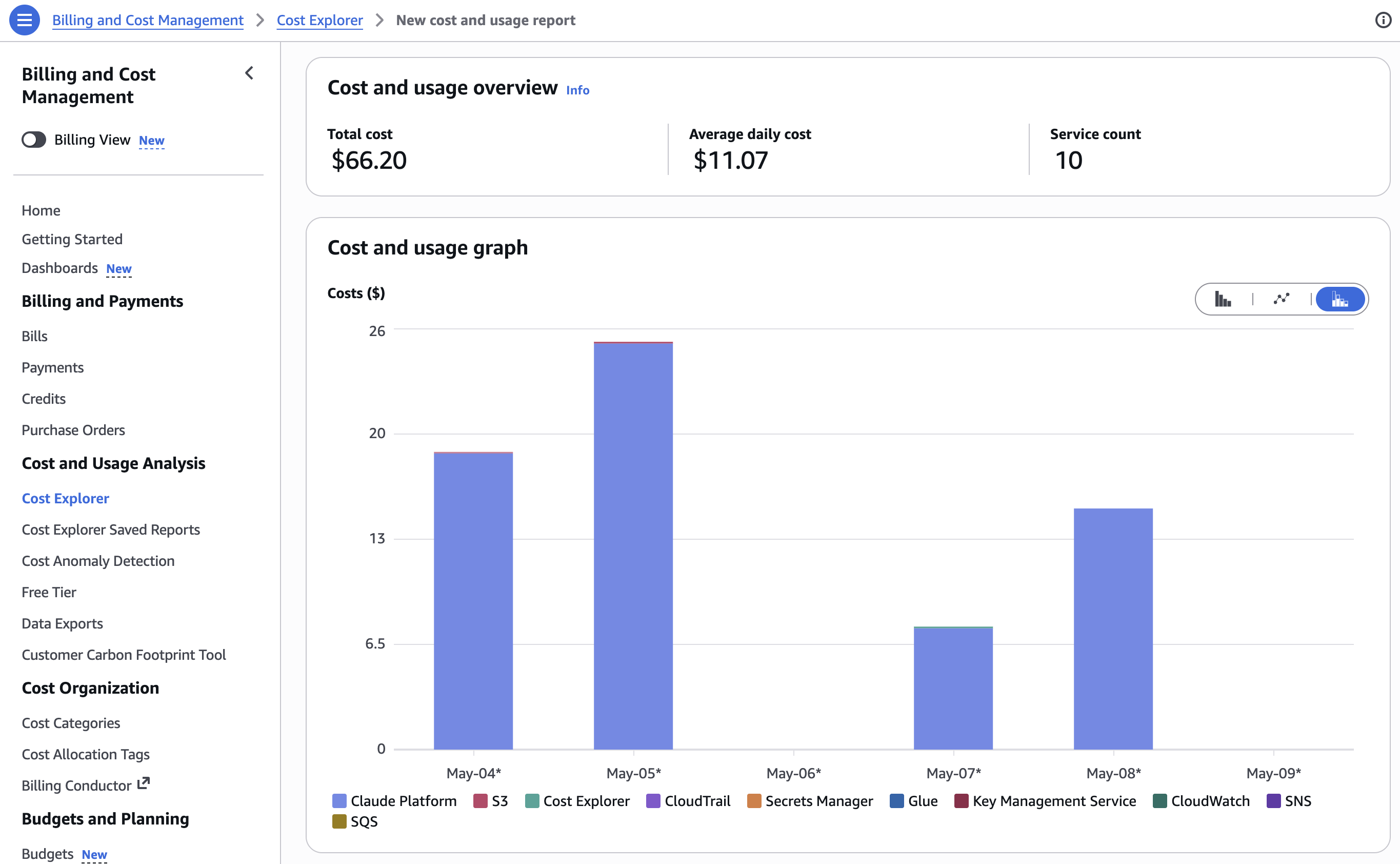

GPUs handle all three needs: high compute for prefill, abundant HBM for KV cache and model weights, and chip-to-chip networking to pool memory across multiple chips when a single GPU isn't enough. In other words, what works for training works for inference — look no further than the deal SpaceX made with Anthropic. From Anthropic's blog:

We've signed an agreement with SpaceX to use all of the compute capacity at their Colossus 1 data center. This gives us access to more than 300 megawatts of new capacity (over 220,000 NVIDIA GPUs) within the month. This additional capacity will directly improve capacity for Claude Pro and Claude Max subscribers.

SpaceX retains Colossus 2 — presumably for both training of future models and inference of existing ones — and can afford to do both in the same data center precisely because xAI's models aren't getting much usage; more pertinently to this piece, they can do both in the same data center because both training and inference can be done on GPUs. Indeed, the GPUs Anthropic is contracting for at Colossus 1 were originally used for training as well; the fact that GPUs are so flexible is a big advantage.

Understanding Cerebras

Cerebras makes something completely different. While a silicon wafer has a diameter of 300mm, the "reticle limit" — the maximum area that a lithography tool can expose on that wafer — is around 26mm x 33mm. This is the effective size limit for chips; going beyond that entails linking two separate chips together over a chip-to-chip interposer, which is exactly what Nvidia has done with the B200. Cerebras, on the other hand, has invented a way to lay down wiring across the so-called "scribe lines" that are the boundary between reticle exposures, making the entire wafer into a single chip with no need for relatively slow chip-to-chip linkages.
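A back-of-the-envelope comparison using the two dimensions above shows how much area the reticle limit leaves on the table. The usable rectangular region of a round wafer, and therefore any real wafer-scale die, is smaller than the full disc, so the ratio is an upper bound:

$$
A_{\text{reticle}} \approx 26\,\text{mm} \times 33\,\text{mm} = 858\,\text{mm}^2, \qquad
A_{\text{wafer}} = \pi\,(150\,\text{mm})^2 \approx 70{,}686\,\text{mm}^2, \qquad
\frac{A_{\text{wafer}}}{A_{\text{reticle}}} \approx 82
$$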

The net result is a chip with a lot of compute and a lot of SRAM that is blisteringly fast to access. To put it in numbers, the WSE-3 (Cerebras' latest chip) has 44GB of on-chip SRAM at 21 PB/s of bandwidth; an H100 has 80GB of HBM at 3.35 TB/s. In other words, the WSE-3 has just over half the memory of an H100, but 6,000 times the memory bandwidth.
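Checking both ratios from the quoted specifications (decimal units assumed throughout), the "6,000 times" figure is a slight rounding down:

$$
\frac{21\,\text{PB/s}}{3.35\,\text{TB/s}} = \frac{21{,}000\,\text{TB/s}}{3.35\,\text{TB/s}} \approx 6{,}269, \qquad
\frac{44\,\text{GB}}{80\,\text{GB}} = 0.55
$$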

The reason to compare the WSE-3 to an H100 is that the H100 is the chip most used for inference — and inference is clearly what Cerebras is most well-suited for. You can use Cerebras chips for training, but the chip-to-chip networking story isn't very compelling, which is to say that all of that compute and on-chip memory is mostly just sitting around; what is much more interesting is the idea of getting a stream of tokens at dramatically faster speed than you can from a GPU.

Note, however, that the limitation in terms of training also potentially applies in terms of inference: as long as everything fits in on-chip memory Cerebras' speed is an incredible experience; the moment you need more memory, whether that be for a larger model or, more likely, a larger KV cache, then Cerebras doesn't make much sense, particularly given the price. That whole-wafer-as-chip technique means high yields are a massive challenge, which hugely drives up costs.
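The "everything fits in on-chip memory" condition can be expressed as a simple capacity check. This is a single-chip sketch under loud assumptions: BF16 weights, the same hypothetical KV-cache configuration as a 32-layer model with 8 KV heads of dimension 128, and the full 44 GB of SRAM available for weights plus KV cache (real systems also need room for activations and other state).

```python
SRAM_BYTES = 44e9  # WSE-3 on-chip SRAM, as quoted above


def kv_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """One key and one value vector per KV head, per layer, for each token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def max_context_on_chip(n_params, kv_per_token, bytes_per_param=2, capacity=SRAM_BYTES):
    """Largest context whose KV cache fits beside the weights; 0 if the weights alone don't fit."""
    free = capacity - n_params * bytes_per_param
    if free <= 0:
        return 0
    return int(free // kv_per_token)


kv = kv_bytes_per_token(n_layers=32, n_kv_heads=8, head_dim=128)  # hypothetical config
print(max_context_on_chip(8e9, kv))   # hypothetical 8B model: roughly 214K tokens of context
print(max_context_on_chip(70e9, kv))  # hypothetical 70B model: 140 GB of weights, does not fit
```

The first case shows the favorable regime: weights and a long context both live in SRAM. The second shows the cliff the paragraph describes, where the model or its KV cache outgrows the chip.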

At the same time, I do think there will be a market for Cerebras-style chips: right now the company is highlighting the usefulness of speed for coding — reasoning means a lot of tokens, which means that dramatically scaling up tokens-per-second equals faster thinking — but I think this is a temporary use case, for reasons I'll explain in a bit. What does matter is how long humans are waiting for an answer, and as products like AI wearables become more of a thing, the speed of interaction, particularly for voice — which will be a function of token generation speed — will have a tangible effect on the user experience.

Agentic Inference

I have previously made the case, including in Agents Over Bubbles, that we have gone through three inflection points in the LLM era:

- ChatGPT demonstrated the utility of token prediction.

- o1 introduced the idea of reasoning, where more tokens meant better answers.

- Opus 4.5 and Claude Code introduced the first usable agents, which could actually accomplish tasks, using a combination of reasoning models and a harness that utilized tools, verified work, etc.

All of this falls under the banner of "inference", but I think it will be increasingly clear that there is a difference between providing an answer — what I will call "answer inference" — and doing a task — what I will call "agentic inference." Cerebras' target market is "answer inference"; in the long run, I think the architecture for "agentic inference" will look a lot different, not just from Cerebras' approach, but from the GPU approach as well.

I mentioned above that fast inference for coding is a temporary use case. Specifically, coding with LLMs requires a human in the loop. It's the human that defines what is to be coded, checks the work, commits the pull request, etc.; it's not hard to envision a future, however, where all of this is completely handled by machines. This will apply to agentic work broadly: the true power of agents will not be that they do work for humans, but rather that they do work without human involvement at all.

This, by extension, will mean that the likely best approach to solving agentic inference will look a lot different than answer inference. The most important aspect for answer inference is token speed; the most important aspect for agentic inference, however, is memory. Agents need context, state, and history. Some of that will live as active KV cache; some will live in host memory or SSDs; much of it will live in databases, logs, embeddings, and object stores. The important point is that agentic inference will be less about GPUs answering a question and more about the memory hierarchy wrapped around a model.
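A memory hierarchy wrapped around a model can be sketched as a tiered store in which recently used context stays in the fastest tier and older context is demoted to slower, larger tiers. Everything here is hypothetical: the tier names, capacities (counted in entries rather than bytes), and the least-recently-used demotion policy are illustrative choices, not a description of any shipping system.

```python
from collections import OrderedDict


class TieredContextStore:
    """Hypothetical agent context store: new and recently used items sit in the
    fastest tier, and least-recently-used items are demoted to slower, larger tiers."""

    def __init__(self, tiers):
        # tiers: (name, capacity) pairs ordered fastest to slowest; capacity None means unbounded
        self.tiers = [(name, capacity, OrderedDict()) for name, capacity in tiers]

    def put(self, key, value):
        for _, _, entries in self.tiers:
            entries.pop(key, None)  # drop any stale copy before re-inserting at the top
        self._insert(0, key, value)

    def get(self, key):
        """Return (value, tier it was found in) and promote the item to the fastest tier."""
        for name, _, entries in self.tiers:
            if key in entries:
                value = entries.pop(key)
                self._insert(0, key, value)
                return value, name
        raise KeyError(key)

    def _insert(self, level, key, value):
        _, capacity, entries = self.tiers[level]
        entries[key] = value  # new keys land at the most-recently-used end
        if capacity is not None and len(entries) > capacity:
            old_key, old_value = entries.popitem(last=False)  # least recently used
            if level + 1 < len(self.tiers):
                self._insert(level + 1, old_key, old_value)
            # if the slowest tier is bounded and full, the evicted item is discarded


store = TieredContextStore([("hbm_kv_cache", 2), ("host_dram", 3), ("object_store", None)])
for turn in range(6):
    store.put(f"turn-{turn}", f"context for turn {turn}")
print(store.get("turn-0"))  # ('context for turn 0', 'object_store'), then promoted
print(store.get("turn-0"))  # ('context for turn 0', 'hbm_kv_cache')
```

The first lookup pays the cost of the slowest tier; for an agent running without a human waiting, that latency is acceptable as long as the context is still reachable.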

Critically, this articulation of an agentic-specific memory hierarchy implies a necessary trade-off of speed for capacity. Here's the thing, though: lower speed isn't nearly as important a consideration if there isn't a human in the loop. If an agent is waiting around for a job that is being run overnight, the agent doesn't know or care about the user experience impact; what is most important is being able to accomplish a task, and if entirely new approaches to memory make that possible, then delays are fine.

Meanwhile, if delays are fine, then all of the focus on pure compute power and high-bandwidth memory seems out of place: if latency isn't the top priority, then slower and cheaper memory — like traditional DRAM, for example — makes a lot more sense. And if the entire system is mostly waiting on memory, then chips don't need to be as fast as the cutting edge either. This represents a profound shift in future architectures, but it also doesn't mean that current architectures are going away:

- Training will continue to matter, and Nvidia's current architecture, including high-speed compute, large amounts of high-bandwidth memory, and high-speed networking, will likely continue to dominate.

- Answer inference will be a meaningful market, albeit a relatively small one, and speed from chips like Cerebras or Groq (I explained how Nvidia is deploying Groq's LPUs here) will be very useful.

- Agentic inference will gradually unbundle the GPU, which alternates between stranding high-bandwidth memory (during the prefill process) and stranding compute (during the decode process), in favor of increasingly sophisticated memory hierarchies dominated by high capacity and relatively lower cost memory types, with "good enough" compute; indeed, if anything it will be the speed of CPUs for things like tool use that will matter more than the speed of GPUs.
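The "stranding" in the last point follows from a roofline-style estimate: compare how many FLOPs a weight matrix multiply performs per byte of weights read against the H100's ratio of dense BF16 compute to HBM bandwidth. This sketch assumes BF16 weights, a hypothetical 8192 x 8192 weight matrix, a single request during decode, and it ignores attention and activation traffic.

```python
H100_BF16_DENSE_FLOPS = 0.9895e15  # dense BF16 Tensor peak (H100 SXM)
H100_HBM_BYTES_PER_S = 3.35e12      # HBM bandwidth (H100 SXM)

# Ridge point: FLOPs the chip can perform per byte it can read from HBM (~295).
RIDGE = H100_BF16_DENSE_FLOPS / H100_HBM_BYTES_PER_S


def weight_matmul_intensity(n_tokens, d_in, d_out, bytes_per_weight=2):
    """FLOPs per weight byte when n_tokens activations are multiplied by a d_in x d_out
    weight matrix. Each weight is read once and reused for every token."""
    flops = 2 * n_tokens * d_in * d_out  # one multiply and one add per weight per token
    weight_bytes = d_in * d_out * bytes_per_weight
    return flops / weight_bytes


def regime(intensity):
    return "compute-bound" if intensity >= RIDGE else "bandwidth-bound"


for phase, tokens in (("prefill, 4,096-token prompt", 4096), ("decode, 1 token", 1)):
    ai = weight_matmul_intensity(tokens, 8192, 8192)
    print(f"{phase}: {ai:.0f} FLOPs/byte -> {regime(ai)}, "
          f"at most {min(1.0, ai / RIDGE):.1%} of peak compute usable")
```

With BF16 weights the intensity equals the number of tokens processed together, so a long prompt saturates compute while HBM bandwidth sits partly idle, and single-request decode uses well under 1% of peak compute while waiting on memory.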

At the same time, these categories won't be equal in size or importance. Specifically, agentic inference will be the largest market by far, because that is the market that won't be limited by humans or time. Today's agents are fancy answer inference; in the future true agentic inference will be work done by computers according to dictates given by other computers, and the market size scales not with humans but with compute.

The Implications of Agentic Inference on Compute

To date the invocation of "scaling with compute" has implicitly meant Nvidia bullishness. However, much of Nvidia's relative advantage to date has been a function of latency: Nvidia chips have fast compute, but keeping that compute busy has required big investments in ever-expanding HBM memory and networking. If latency isn't the key constraint, however, then Nvidia's approach seems less worth paying a premium for.

Nvidia does recognize this shift: the company launched an inference framework called Dynamo that helps disaggregate different parts of inference, and is shipping products like standalone memory and CPU racks to enable increasingly large KV caches and faster tool use, the better to keep their expensive GPUs busy. Ultimately, however, it's easy to see cost and simplicity being increasingly attractive to hyperscalers for agentic inference that isn't remotely GPU-bound.

China, meanwhile, for all of its lack of leading edge compute, has everything it needs for agentic inference: fast-enough (but not leading-edge) GPUs, fast-enough (but not leading-edge) CPUs, DRAM, hard drives, etc. The challenge, of course, is compute for training; it's also possible that answer inference is more important for national security, at least when it comes to military applications.

The other interesting angle is space: slower chips actually make space data centers more viable for a number of reasons. First, if memory can be offloaded, chips can be made much simpler and run much cooler. Second, older nodes, by virtue of being physically larger, will better withstand space radiation. Third, older nodes require less power, which means there will be less heat to dissipate via radiation. Fourth, not being on the bleeding edge will mean higher reliability, an important consideration given that satellites won't be repairable.

Nvidia CEO Jensen Huang regularly says that "Moore's Law is Dead"; what he means is that the future of computing speed-ups will be a function of systems innovation, which is exactly what Nvidia has done. Maybe the most profound implication of agents that act without humans in the loop, however, will be that Moore's Law doesn't matter, and that the way we get more compute is by realizing that the compute we have is already good enough.

Foundation Model Scaling

AWS detailed how foundation model scaling split from one regime into three (pre-training, post-training, and test-time compute), with P6e-GB200 UltraServers exposing 72 Blackwell GPUs and 13.4 TB of aggregate HBM3e in a single NVLink domain.

Summary

Deep Dive

- Foundation model scaling evolved from a single pre-training curve (Kaplan et al. 2020 power laws) to three distinct regimes: pre-training, post-training (SFT, RLHF), and test-time compute (search, verification, multi-sample strategies)

- AWS infrastructure spans four layers: accelerated compute (P5/P6 instances), resource orchestration (Slurm via ParallelCluster/PCS, Kubernetes via EKS/HyperPod), ML software stack (drivers → CUDA → NCCL → PyTorch → training frameworks), and observability (Prometheus/Grafana)

- P6 instances use Blackwell B200 (2.25 PFLOPS BF16, 4.5 PFLOPS FP8, 9 PFLOPS FP4, 180 GB HBM3e per GPU) and B300 (same BF16/FP8 throughput, 13.5 PFLOPS FP4, 288 GB HBM3e per GPU), all dense figures, with NVLink 5th gen (14.4 TB/s aggregate per instance) and EFA v4 (400 GB/s on p6-b200 or 800 GB/s on p6-b300, aggregate)

- P6e-GB200 UltraServers compose up to 72 Blackwell GPUs into a single NVLink domain with 13.4 TB aggregate HBM3e, reducing how often MoE all-to-all communication must leave the NVLink fabric to traverse EFA

- Grace-Blackwell superchips provide cache-coherent NVLink-C2C between Grace CPU memory and Blackwell GPU HBM, enabling GPU workloads to extend into CPU-attached memory without explicit PCIe copies

- HyperPod's checkpointless training maintains continuous peer-to-peer state replication across GPUs via EFA; on failure, surviving nodes reconstruct lost state through communication rather than reading multi-TB checkpoints from FSx/S3, reducing recovery latency

- Kubernetes gaps for distributed training (pod-level vs job-level scheduling, no topology awareness, no batch queue semantics) are addressed by layered extensions: Kueue for admission control and gang scheduling, Volcano or NVIDIA KAI Scheduler for topology-aware placement

- Custom kernels (FlashAttention, Triton-compiled fused ops, CUTLASS GEMMs) and specialized libraries increasingly determine end-to-end performance as much as the ML framework, as step time is often dominated by memory movement and collective communication rather than raw compute

- MoE models with expert parallelism depend on all-to-all collectives for token dispatch (send each token to the GPU hosting its assigned expert) and combine (return expert outputs), with communication volume scaling with number of experts and becoming a bottleneck at high expert-parallelism degrees

- Disaggregated inference separating prefill and decode onto distinct GPU pools requires efficient point-to-point KV cache transfer; NVIDIA NIXL provides a unified API for point-to-point transfers across memory tiers (HBM, DRAM, NVMe) and interconnects (NVLink, InfiniBand, Ethernet)

- veRL (Volcano Engine Reinforcement Learning) implements PPO, GRPO, and REINFORCE++ with HybridFlow architecture mixing training backends (FSDP2, Megatron) with inference engines (vLLM, SGLang) in the same job, sharing model weights in memory between actor and rollout components

- GPU health monitoring via DCGM tracks ECC single-bit errors (SBE) and double-bit errors (DBE), with XID 63 (row remap failure), XID 64 (GPU fallen off bus), and XID 94/95 (contained/uncontained errors) warranting immediate node replacement

- Storage is tiered: local NVMe instance store (30.72 TB raw, 8 × 3.84 TB NVMe SSD) for hot data, FSx for Lustre (POSIX parallel filesystem with TB/s throughput, Data Repository Associations for S3 lazy loading) for shared high-throughput access, S3 for durable checkpoint persistence

- Amazon EC2 UltraClusters provision thousands of accelerated instances as a single tightly placed cluster within an Availability Zone, interconnected with a petabit-scale nonblocking network

- The aws-ofi-nccl plugin bridges NCCL to libfabric interfaces, allowing NCCL to leverage EFA's OS-bypass and Scalable Reliable Datagram (SRD) protocol without application changes

Decoder

- EFA (Elastic Fabric Adapter): AWS network interface providing OS-bypass RDMA using the Scalable Reliable Datagram (SRD) protocol; enables applications to communicate directly with the network device through libfabric API, bypassing the kernel to reduce latency for collective operations in distributed training

- NVLink domain: The set of GPUs directly connected via NVIDIA's NVLink high-bandwidth interconnect (e.g., 8 GPUs in a p5.48xlarge instance, or 72 GPUs in a P6e-GB200 UltraServer); collectives within the domain avoid traversing the host networking stack, achieving higher bandwidth and lower latency than EFA-based cross-node communication

- UltraServers: AWS EC2 instances that extend the NVLink domain beyond a single instance by connecting multiple component instances through dedicated accelerator interconnect; P6e-GB200 UltraServers built on NVIDIA GB200 NVL72 platform expose up to 72 Blackwell GPUs in one NVLink domain

- All-to-all collective: Communication pattern in MoE models where every GPU exchanges data with every other GPU in the expert-parallel group; used for dispatch (send each token to the GPU hosting its assigned expert) and combine (return expert outputs to originating GPUs), with communication volume scaling with number of experts

- Checkpointless training: Fault tolerance approach in HyperPod that maintains continuous peer-to-peer state replication across GPUs instead of periodically serializing model state to shared storage; on failure, surviving nodes reconstruct lost state through EFA-based communication rather than reading multi-terabyte checkpoints

- XID events: NVIDIA GPU error codes indicating hardware failures; XID 63 (row remap failure), XID 64 (GPU fallen off bus), and XID 94/95 (contained/uncontained errors) typically warrant immediate node replacement, while accelerating ECC single-bit error (SBE) rates often precede more severe failures

- DCGM (Data Center GPU Manager): NVIDIA's suite for managing and monitoring GPUs in cluster environments; DCGM-Exporter exposes GPU metrics (utilization, memory, power, temperature, ECC errors, XID events) in Prometheus format for observability stacks
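The node-replacement guidance in the entries above can be sketched as a small policy function. The input format (XID codes observed on a node plus cumulative single-bit-error counts sampled at equal intervals), the "watch" action, and the test for an accelerating SBE rate are hypothetical; in practice these signals would come from DCGM-Exporter metrics scraped into Prometheus, and this sketch does not call any DCGM API.

```python
# XIDs the guidance above flags for immediate node replacement.
REPLACE_XIDS = {
    63: "row remap failure",
    64: "GPU fallen off bus",
    94: "contained error",
    95: "uncontained error",
}


def sbe_accelerating(sbe_totals):
    """True if single-bit-error growth per sampling window keeps increasing.
    sbe_totals: cumulative SBE counts sampled at equal intervals (hypothetical input)."""
    deltas = [b - a for a, b in zip(sbe_totals, sbe_totals[1:])]
    return len(deltas) >= 2 and all(d2 > d1 for d1, d2 in zip(deltas, deltas[1:]))


def triage(xids_seen, sbe_totals):
    """Return (action, reason) for one node under a hypothetical replace/watch/ok policy."""
    for xid in sorted(set(xids_seen)):
        if xid in REPLACE_XIDS:
            return "replace", f"XID {xid} ({REPLACE_XIDS[xid]})"
    if sbe_accelerating(sbe_totals):
        return "watch", "single-bit ECC error rate is accelerating"
    return "ok", "no replacement-class XID, SBE rate steady"


print(triage([13, 64], [0, 0, 0]))     # replace: XID 64
print(triage([], [10, 12, 16, 24]))    # watch: deltas 2, 4, 8
print(triage([], [10, 11, 12, 13]))    # ok: constant rate
```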

Original Article

Building Blocks for Foundation Model Training and Inference on AWS

For a long time, "scaling" in foundation models mostly meant one thing: spend more compute on pre-training and capabilities rise. That intuition was supported by empirical work such as Kaplan et al. (2020), which reported predictable power-law trends in loss as you scale model parameters, dataset size, and training compute. In practice, these trends justified sustained investment in large-scale accelerator capacity and the surrounding distributed infrastructure needed to keep it efficiently utilized. But the frontier has evolved—and scaling is no longer a single curve. NVIDIA's "from one to three scaling laws" framing usefully emphasizes that, beyond pre-training, performance increasingly scales through post-training (e.g., supervised fine-tuning (SFT) and reinforcement learning (RL)-based methods) and through test-time compute ("long thinking," search/verification, multi-sample strategies).
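The power-law trends in Kaplan et al. share one functional form. Writing X for model parameters N, dataset size D, or training compute C (each measured when the other two are not the bottleneck), with fitted constants X_c and exponent α_X:

$$
L(X) \approx \left(\frac{X_c}{X}\right)^{\alpha_X}, \quad X \in \{N, D, C\}
\qquad\Longrightarrow\qquad
\frac{L(2X)}{L(X)} = 2^{-\alpha_X}
$$

The fitted exponents are small (roughly 0.05 to 0.1), so each doubling buys a constant but modest fractional drop in loss; for α_N ≈ 0.076, doubling parameters cuts loss by about 5%. Steady gains therefore demand exponentially growing compute.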

Figure: Adapted from "AI's Three Scaling Laws, Explained" (NVIDIA Blog).

Taken together, these scaling regimes push the foundation-model lifecycle—pre-training, post-training, and inference—toward convergent infrastructure requirements: tightly coupled accelerator compute, a high-bandwidth low-latency network, and a distributed storage backend. They also raise the importance of orchestration for resource management, and of application- and hardware-level observability to maintain cluster health and diagnose performance pathologies at scale.

Another key trend is the increasing reliance of the foundation-model lifecycle on an open-source software (OSS) ecosystem that spans model development frameworks, cluster resource management, and operational tooling. At the cluster layer, resource management is typically provided by systems such as Slurm and Kubernetes. Model development and distributed training are commonly implemented in frameworks such as PyTorch and JAX. Monitoring and visualization—that is, observability—are often achieved using Prometheus for metrics collection and Grafana for visualization and alerting, positioned as an operational layer atop infrastructure and resource management. Figure 1 illustrates this layered architecture, showing how hardware infrastructure supports resource orchestration, which in turn enables ML frameworks, with observability spanning across all layers.

Figure 1: The layered architecture of open-source software stacks for foundation model training and inference

This post is intended for machine learning engineers and researchers involved in foundation model training and inference, with particular attention to workflows built atop OSS frameworks. It analyzes how AWS infrastructure—including multi-node accelerator compute, high-bandwidth low-latency networking, distributed shared storage, and associated managed services—interacts with common OSS stacks across the foundation model lifecycle. The primary goal is to provide a technical foundation for understanding systems bottlenecks and scaling characteristics spanning pre-training, post-training, and inference. This introductory post surfaces the overall system architecture, emphasizing the integration points between AWS infrastructure components and OSS tools that underpin large-scale distributed training and inference.

The AWS Building Blocks

The remainder of this series examines how this layered architecture is realized on AWS, progressing through infrastructure, resource orchestration, the ML software stack, and observability. The following sections preview each layer.

Infrastructure: Compute, Network, and Storage

As illustrated in Figure 1, infrastructure is anchored by three coupled building blocks—accelerated compute with large device memory, wide-bandwidth interconnect for collective communication, and scalable distributed storage for data and checkpoints.

Accelerated compute forms the foundation of large-scale foundation model pre-training, post-training, and inference. AWS offers several generations of NVIDIA GPUs as part of its Amazon EC2 accelerated computing instances, including the Amazon EC2 P instance family. The P5 instance family includes p5.48xlarge with eight NVIDIA H100 GPUs, p5.4xlarge with a single H100 GPU for smaller-scale workloads, and p5e.48xlarge/p5en.48xlarge variants with NVIDIA H200 GPUs. The P6 instance family introduces NVIDIA Blackwell B200 architecture with p6-b200.48xlarge and Blackwell Ultra B300 with p6-b300.48xlarge. Across these generations, the dominant scaling axes are peak Tensor throughput, HBM capacity and bandwidth, and interconnect bandwidth (within and across nodes).

As a first-order approximation, peak Tensor Core throughput—measured in floating point operations per second (FLOPS)—helps situate these accelerators on a common axis. The table below summarizes per-GPU peak throughput for dense BF16/FP16 and FP8 Tensor operations, along with HBM capacity and HBM bandwidth, using SXM/HGX-class specifications that align with NVSwitch/NVLink-based multi-GPU nodes.

| GPU (representative variant) | BF16/FP16 Tensor peak (dense) | FP8 Tensor peak (dense) | FP4 Tensor peak (dense) | HBM capacity | HBM bandwidth |
|---|---|---|---|---|---|
| H100 (SXM) | 0.9895 PFLOPS | 1.979 PFLOPS | — | 80 GB HBM3 | 3.35 TB/s |
| H200 (SXM) | 0.9895 PFLOPS | 1.979 PFLOPS | — | 141 GB HBM3e | 4.8 TB/s |
| B200 (HGX, per GPU) | 2.25 PFLOPS | 4.5 PFLOPS | 9 PFLOPS | 180 GB HBM3e | 8 TB/s |
| B300 (HGX, per GPU) | 2.25 PFLOPS | 4.5 PFLOPS | 13.5 PFLOPS | 288 GB HBM3e | 8 TB/s |

Note: NVIDIA product tables often report Tensor throughput "with sparsity"; this table reports dense throughput. Where applicable, dense throughput is taken as half of sparse throughput, following NVIDIA's guidance for HGX-class platforms (NVIDIA). DGX figures are system-level; the B200 HBM capacity and bandwidth values are expressed per GPU by dividing DGX totals by eight (NVIDIA).

As models scale, step time is often dominated by collective communication and memory movement rather than raw compute throughput, motivating explicit scale-up and scale-out bandwidth accounting. For the multi-GPU instances, GPU communication spans two regimes. Internal scale-up (NVLink/NVSwitch) provides high-bandwidth, low-latency GPU-to-GPU connectivity within a node, enabling collectives such as all-reduce and all-gather to execute without traversing the host networking stack. External scale-out (EFA) provides OS-bypass networking across nodes, which AWS uses as a building block for Amazon EC2 UltraClusters where communication-heavy collectives span thousands of instances. The following table summarizes key specifications across these instance types:

| Instance Type | GPU | GPUs | GPU Memory | NVLink | NVLink BW (aggregate) | EFA | EFA BW (aggregate) |
|---|---|---|---|---|---|---|---|
| p5.4xlarge | H100 | 1 | 80 GB HBM3 | — | — | v2 | 12.5 GB/s |
| p5.48xlarge | H100 | 8 | 640 GB HBM3 | 4th | 7.2 TB/s | v2 | 400 GB/s |
| p5e.48xlarge | H200 | 8 | 1,128 GB HBM3e | 4th | 7.2 TB/s | v2 | 400 GB/s |
| p5en.48xlarge | H200 | 8 | 1,128 GB HBM3e | 4th | 7.2 TB/s | v3 | 400 GB/s |
| p6-b200.48xlarge | B200 | 8 | 1,440 GB HBM3e | 5th | 14.4 TB/s | v4 | 400 GB/s |
| p6-b300.48xlarge | B300 | 8 | 2,100 GB HBM3e | 5th | 14.4 TB/s | v4 | 800 GB/s |

Note: EFA bandwidth is converted from Gbps to GB/s (÷8) for consistency with other bandwidth metrics; see the EC2 accelerated computing networking specifications. NVLink and EFA bandwidth figures are shown as aggregate per-instance values rather than per-link values; see the P5 instance family page and the P6 instance family page for the corresponding intra-node interconnect and networking characteristics.
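The gap between the two regimes can be made concrete with the bandwidth term of a ring all-reduce, in which each rank sends and receives 2(n-1)/n of the message. The figures below are assumptions for illustration: a hypothetical 2 GB gradient buffer, per-GPU NVLink bandwidth taken as the p5.48xlarge aggregate divided by 8 and then halved to approximate one direction, and per-GPU EFA bandwidth as the instance aggregate divided by 8. Latency, protocol overhead, and the hierarchical algorithms NCCL actually uses are ignored.

```python
def ring_allreduce_seconds(message_bytes, n_ranks, per_rank_bytes_per_s):
    """Bandwidth term of a ring all-reduce: each rank sends and receives
    2 * (n - 1) / n of the message. Latency and protocol overheads are ignored."""
    return 2 * (n_ranks - 1) / n_ranks * message_bytes / per_rank_bytes_per_s


GRADIENT_BYTES = 2e9  # hypothetical: 1B parameters of BF16 gradients

# Assumed per-GPU bandwidths derived from the p5.48xlarge row above:
# NVLink 7.2 TB/s aggregate / 8 GPUs = 900 GB/s, halved to ~450 GB/s per direction;
# EFA 400 GB/s aggregate / 8 GPUs = 50 GB/s per GPU.
NVLINK_PER_GPU = 450e9
EFA_PER_GPU = 50e9

intra = ring_allreduce_seconds(GRADIENT_BYTES, 8, NVLINK_PER_GPU)
inter = ring_allreduce_seconds(GRADIENT_BYTES, 8, EFA_PER_GPU)
print(f"8 GPUs over NVLink: {intra * 1e3:.1f} ms")
print(f"8 ranks over EFA:   {inter * 1e3:.1f} ms ({inter / intra:.0f}x slower)")
```

Even as a first-order estimate, an order-of-magnitude gap per collective is why step time is so sensitive to how much traffic must leave the NVLink domain.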

Elastic Fabric Adapter (EFA) is a network interface for Amazon EC2 that provides OS-bypass remote direct memory access (RDMA) capability using the Scalable Reliable Datagram (SRD) protocol. By enabling applications to communicate directly with the network device through the Libfabric API—bypassing the operating system kernel—EFA reduces latency and improves throughput for collective operations in distributed training.

Multiple generations of EFA are available on different instance families. Amazon EC2 P5 and P5e instances are equipped with EFA version 2 (EFAv2). EFA version 3 (EFAv3), provided on P5en instances, reduces packet latency by approximately 35% compared to EFAv2. EFA version 4 (EFAv4), available on P6 instances, delivers an additional 18% improvement in collective communication performance relative to EFAv3.

At scale, both distributed training (streaming corpora and writing multi-terabyte checkpoints) and large-scale inference (staging weights and managing KV cache growth) motivate a tiered storage hierarchy—local NVMe SSD for hot data, Lustre for shared high-throughput access, and Amazon S3 for durable persistence.

In this series' primary multi-GPU instances, local NVMe is provided as instance store (ephemeral) with 30.72 TB raw capacity (8 × 3.84 TB NVMe SSD); see the EC2 accelerated-computing instance store specifications.

Lustre is an open-source, POSIX compliant distributed file system widely used in high-performance computing (HPC) to provide a shared namespace with high aggregate throughput across many clients. Amazon FSx for Lustre provides Lustre as a fully managed service and exposes it as a parallel file system capable of terabytes per second of throughput, millions of IOPS, and sub-millisecond latencies. Data Repository Associations enable integration with Amazon S3, supporting lazy loading of training datasets and automatic checkpoint export for durability.

At cluster scale, these instances are deployed in Amazon EC2 UltraClusters, which provision thousands of accelerated instances as a single, tightly placed cluster within an Availability Zone and interconnect them using a petabit-scale nonblocking network.

Figure: 2nd-generation Amazon EC2 UltraClusters (example P5 UltraCluster).

For workloads with high per-step communication intensity (e.g., expert parallelism in MoE models where all-to-all token dispatch spans many GPUs), the size of the NVLink domain can become a first-order constraint. As an extension of the internal scale-up axis, increasing the NVLink domain reduces how often performance-critical communication must leave the NVLink fabric.

Amazon EC2 UltraServers extend the NVLink domain beyond a single EC2 instance by connecting multiple component instances through a dedicated accelerator interconnect. AWS reports that P6e-GB200 UltraServers are built on the NVIDIA GB200 NVL72 platform and expose up to 72 Blackwell GPUs and 13.4 TB of aggregate HBM3e within one NVLink domain. At larger scales, EFA remains the cross-node fabric for jobs that span multiple UltraServers.

These systems are built from NVIDIA Grace–Blackwell superchips, which couple Grace CPU memory and Blackwell GPU HBM via cache-coherent NVLink-C2C, enabling direct access across CPU- and GPU-attached memory without explicit host–device copies. In practice, this can extend the effective memory available to GPU workloads (e.g., by placing colder model state or KV cache in CPU-attached memory) while avoiding PCIe-scale copy overheads, albeit with higher latency and lower bandwidth than local HBM.

The component instance type for P6e-GB200 UltraServers is p6e-gb200.36xlarge, which provides four GPUs and Elastic Fabric Adapter (EFA) v4 networking. The tables below summarize the per-instance and composed UltraServer configurations.

| Instance Type | GPU | GPUs | GPU Memory | Memory BW | NVLink | NVLink BW | EFA | EFA BW |
|---|---|---|---|---|---|---|---|---|
| p6e-gb200.36xlarge | GB200 NVL72 | 4 | 740 GB HBM3e | — | — | — | v4 | 200 GB/s |

Note: The p6e-gb200.36xlarge EFA bandwidth is converted from the published aggregate EFA networking (4 × 400 Gbps) to GB/s (÷8); see the EC2 accelerated computing networking specifications.

| UltraServer | Component instance type | GPUs (NVLink domain) | HBM3e (aggregate) | EFA | EFA BW |
|---|---|---|---|---|---|
| u-p6e-gb200x36 | p6e-gb200.36xlarge | 36 | 6.7 TB | v4 | 1,800 GB/s |
| u-p6e-gb200x72 | p6e-gb200.36xlarge | 72 | 13.4 TB | v4 | 3,600 GB/s |

Note: UltraServer EFA bandwidth is converted from terabits per second (Tbps), as reported by AWS, to GB/s (÷8); see the P6e-GB200 UltraServers announcement and the P6 instance family page.
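The unit conversions in the notes above are easy to check. The sketch below assumes, consistent with the tables, that each p6e-gb200.36xlarge contributes 4 × 400 Gbps of EFA bandwidth and that an UltraServer's aggregate EFA bandwidth is the sum over its component instances (9 instances for 36 GPUs, 18 for 72). The constant and function names are illustrative.

```python
GBPS_PER_EFA_INTERFACE = 400   # per the note: 4 x 400 Gbps per instance
EFA_INTERFACES_PER_INSTANCE = 4
GPUS_PER_INSTANCE = 4          # p6e-gb200.36xlarge


def gbit_to_gbyte(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbps / 8


def efa_bandwidth_gb_per_s(num_gpus: int) -> float:
    """Aggregate EFA bandwidth in GB/s for a system built from component instances."""
    if num_gpus % GPUS_PER_INSTANCE:
        raise ValueError("GPU count must be a multiple of the per-instance GPU count")
    instances = num_gpus // GPUS_PER_INSTANCE
    return gbit_to_gbyte(instances * EFA_INTERFACES_PER_INSTANCE * GBPS_PER_EFA_INTERFACE)


if __name__ == "__main__":
    for name, gpus in [("p6e-gb200.36xlarge", 4), ("u-p6e-gb200x36", 36), ("u-p6e-gb200x72", 72)]:
        print(f"{name}: {efa_bandwidth_gb_per_s(gpus):,.0f} GB/s")
```

The printed values match the EFA BW columns: 200, 1,800, and 3,600 GB/s.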

Resource Orchestration: Slurm and Kubernetes

When training spans hundreds or thousands of accelerators, manual resource management becomes intractable. For example, a training job requiring 512 GPUs needs 64 eight-GPU nodes (P-instances) allocated simultaneously, and those nodes must be released together when the job completes or fails. Both Slurm and Kubernetes address this challenge with a control-plane architecture: a centralized scheduler maintains cluster state and makes allocation decisions, while worker nodes execute the assigned workloads.

Figure 2: High-level architecture of Slurm-based and Kubernetes-based resource orchestration on AWS

Slurm (Simple Linux Utility for Resource Management) is the dominant workload manager in high-performance computing, built on a modular plugin architecture that allows the scheduling algorithm, topology model, resource types, and accounting backend to be configured independently. Its scheduling model organizes resources into partitions (logical groupings of nodes), accepts job submissions via sbatch, and launches parallel tasks via srun with synchronized startup across allocated nodes. Critically for distributed training, Slurm schedules at the job level—allocating entire multi-node jobs atomically before any task launches. A backfill scheduler starts lower-priority jobs in idle slots without delaying higher-priority ones, while a multi-factor priority system weighs fair-share usage, job age, and QOS tiers to order the queue across tenants. Slurm also supports topology-aware placement through plugins that model network switch hierarchies—on AWS, encoding the EFA fabric topology to co-locate jobs on nodes with minimal switch hops—and native GPU scheduling through its Generic Resource (GRES) interface, which tracks GPU types and enforces device affinity.
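The backfill rule can be made concrete with a small sketch. Slurm's backfill scheduler considers many pending jobs at once and exposes numerous tuning parameters; the simpler EASY-backfill variant below protects only the single highest-priority blocked job, but it applies the same core test: a lower-priority job may start now only if it fits in idle nodes and either finishes (by its requested time limit) before the blocked job's reserved start, or runs only on nodes that job will not need. The job names, sizes, and times are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    nodes: int      # nodes requested
    limit: float    # requested time limit in hours (backfill relies on this)


def head_job_reservation(free_nodes, running, head):
    """Return (shadow_time, extra_nodes) for the blocked highest-priority job.

    running is a list of (end_time, nodes) for jobs currently holding nodes.
    shadow_time is the earliest time enough nodes free up for the head job;
    extra_nodes is how many nodes stay idle at that time once it starts.
    Assumes the head job does not fit in free_nodes right now.
    """
    avail = free_nodes
    for end_time, nodes in sorted(running):
        avail += nodes
        if avail >= head.nodes:
            return end_time, avail - head.nodes
    raise ValueError("head job requests more nodes than the cluster has")


def easy_backfill(now, free_nodes, running, head, candidates):
    """Return names of lower-priority jobs that may start now under the EASY rule."""
    shadow, extra = head_job_reservation(free_nodes, running, head)
    started = []
    for job in candidates:  # scanned in priority order
        if job.nodes > free_nodes:
            continue
        if now + job.limit <= shadow:
            # Finishes before the head job's reserved start, so it cannot delay it.
            free_nodes -= job.nodes
            started.append(job.name)
        elif job.nodes <= extra:
            # Runs past the reservation, but only on nodes the head job won't need.
            free_nodes -= job.nodes
            extra -= job.nodes
            started.append(job.name)
    return started


if __name__ == "__main__":
    running = [(2.0, 4), (5.0, 8)]  # (end time in hours, nodes held)
    head = Job("big-pretrain", nodes=6, limit=3.0)
    queue = [Job("short-eval", 2, 1.0), Job("long-sweep", 2, 10.0), Job("tiny", 1, 1.0)]
    print(easy_backfill(0.0, 4, running, head, queue))
```

Here the 6-node job can start at hour 2, when 8 nodes will be free. The 1-hour job finishes before then, and the 10-hour job fits on the 2 nodes the big job will not need, so both start immediately, while the last job finds no idle node left.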

AWS provides multiple deployment options for Slurm-based orchestration. AWS ParallelCluster is an open-source cluster management tool that automates the deployment of Slurm clusters on EC2, handling head node provisioning, compute fleet scaling, and integration with shared storage. AWS Parallel Computing Service (PCS) is an alternative in which AWS operates the Slurm control plane as a managed service. For distributed training workloads specifically, Amazon SageMaker HyperPod supports a Slurm mode with additional capabilities tailored to large-scale training, such as continuous node health monitoring and automatic job resumption.

Kubernetes takes a declarative, API-driven approach: users specify desired state through resource manifests, and controllers reconcile actual state to match. While Kubernetes excels at model deployment, its native scheduling model exposes several gaps for tightly coupled distributed training. Kubernetes schedules at the pod level; without job-level atomicity, a multi-node training job can partially start (some ranks running while others remain Pending), wasting GPUs or causing deadlocks. Vanilla Kubernetes also lacks batch-queue semantics with priority-based backfill, as well as built-in awareness of network fabric topology (NVLink domains, EFA interconnects) for placing communication-heavy collectives.
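The partial-start failure mode is easy to reproduce in a toy model. The sketch below is not the Kubernetes scheduler algorithm; it treats each rank as a single-GPU pod, places pods one at a time in round-robin order across two hypothetical 4-rank jobs, and contrasts that with job-level (gang) admission on the same 6 free GPUs. All function and job names are illustrative.

```python
def place_pods_one_at_a_time(free_gpus, jobs):
    """Round-robin placement of single-GPU pods with no job-level atomicity.

    jobs maps a job name to the number of ranks (pods) it needs.
    """
    placed = {name: 0 for name in jobs}
    progress = True
    while free_gpus > 0 and progress:
        progress = False
        for name, need in jobs.items():
            if free_gpus > 0 and placed[name] < need:
                placed[name] += 1
                free_gpus -= 1
                progress = True
    return placed


def admit_gangs(free_gpus, jobs):
    """Job-level (gang) admission: a job gets all of its pods or none."""
    placed = {}
    for name, need in jobs.items():
        if need <= free_gpus:
            placed[name] = need
            free_gpus -= need
        else:
            placed[name] = 0
    return placed


def running_jobs(placed, jobs):
    """A tightly coupled job makes progress only when every rank is placed."""
    return [name for name, need in jobs.items() if placed[name] == need]


if __name__ == "__main__":
    jobs = {"job-a": 4, "job-b": 4}  # two hypothetical 4-rank training jobs
    for policy in (place_pods_one_at_a_time, admit_gangs):
        placed = policy(6, jobs)     # 6 free GPUs
        print(policy.__name__, placed, "running:", running_jobs(placed, jobs))
```

Pod-at-a-time placement leaves both jobs with three of four ranks, so neither can run and all six GPUs sit idle; gang admission starts one job fully and leaves the other queued until capacity frees up.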

Several Kubernetes-native projects address these gaps at different layers. Kueue operates as an admission controller atop the default scheduler, managing job-level gang admission, multi-tenant quotas with hierarchical fair sharing, and priority-based preemption—while delegating pod placement to the underlying scheduler. Volcano and NVIDIA KAI Scheduler take a different approach, replacing or augmenting the default scheduler to integrate gang scheduling directly with topology-aware pod placement—Volcano as a general-purpose batch scheduler, KAI Scheduler with deep NVLink/NVSwitch awareness for GPU-optimized placement. These layers are complementary: Kueue can manage admission and quota policy while passing admitted jobs to a topology-aware scheduler for placement.

For Kubernetes-based orchestration on AWS, Amazon Elastic Kubernetes Service (EKS) provides managed Kubernetes with GPU scheduling via the NVIDIA device plugin. Amazon SageMaker HyperPod also supports EKS mode, combining Kubernetes orchestration with HyperPod's training-specific capabilities. HyperPod EKS extends EKS with features designed for foundation model training at scale. Task governance provides compute allocation and policy enforcement across teams, integrating managed Kueue for admission control and Karpenter for just-in-time node provisioning. Checkpointless training addresses the recovery latency inherent in traditional checkpoint-based fault tolerance. Rather than periodically serializing model state to shared storage, checkpointless training maintains continuous peer-to-peer state replication across GPUs. When a failure occurs, surviving nodes reconstruct the lost state through EFA-based communication rather than reading multi-terabyte checkpoints from FSx for Lustre or S3. Elastic training enables jobs to automatically scale based on resource availability. When additional accelerators become available (e.g., from completed jobs or newly provisioned capacity), elastic jobs can expand to utilize them; when higher-priority workloads require resources, jobs can contract while maintaining training progress.

ML Software Stack

Distributed training and inference involve multiple software layers that must be correctly configured and coordinated. A useful model treats the runtime stack as five layers, ordered from hardware-adjacent components (which must function correctly for anything to run) to framework-level abstractions (which determine programmer productivity and model throughput): hardware enablement, accelerator runtime and math libraries, communication substrate, ML frameworks, and distributed training/inference frameworks.

Figure 3: The ML software stack for distributed training and inference on EC2 instances

Hardware enablement: kernel drivers

At the foundation, Linux kernel drivers provide direct hardware access. The NVIDIA GPU driver exposes compute capabilities and supports GPUDirect RDMA for direct data transfers between GPUs and network adapters. The GDRCopy driver (gdrdrv) enables low-latency CPU-initiated copies to and from GPU memory, used by NCCL for small-message transfers. The EFA driver provides OS-bypass networking through the libfabric API, and the Lustre client driver enables POSIX access to FSx for Lustre parallel file systems.

Accelerator runtime, compilers, and kernel libraries

The CUDA platform provides the programming model and runtime for GPU compute. Applications compiled against CUDA can launch kernels on NVIDIA GPUs, manage device memory, and coordinate execution across multiple devices. The current release is CUDA Toolkit 13.x, which supports data-center Blackwell GPUs (compute capability 10.x).

Modern training and inference performance is increasingly driven by specialized optimization libraries and custom kernels, not just general-purpose vendor primitives. Kernels like FlashAttention fuse attention into a single memory-efficient pass, cutting HBM traffic and improving throughput. Many teams also write shape- and precision-specialized fused kernels (e.g., layernorm/residual/activation, quantized GEMMs, MoE dispatch, KV-cache ops) tuned to their exact models. This is enabled by programmable toolchains such as Triton (Python GPU kernel compiler) and NVIDIA's CuTe (tensor layout and warp-level DSL), with libraries like CUTLASS providing highly optimized GEMM and fusion building blocks. In practice, this kernel and compiler layer often determines end-to-end performance as much as the ML framework.
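Beyond fusion, FlashAttention's memory savings come from an online softmax that lets attention be computed tile by tile without materializing the full row of scores. The pure-Python sketch below illustrates that recurrence for a single query with scalar values on the CPU; it shows the math only, not a GPU kernel, and real implementations tile over queries and keys in on-chip SRAM and fuse the matrix multiplications. The function names are illustrative.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_reference(q, keys, values):
    """Softmax attention for one query, materializing the full score row."""
    inv_sqrt_d = 1.0 / math.sqrt(len(q))
    scores = [dot(q, k) * inv_sqrt_d for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


def attention_online(q, keys, values, tile=4):
    """Same result, visiting keys one tile at a time with an online softmax.

    Only a running max (m), running denominator (l), and running unnormalized
    output (acc) survive between tiles; earlier partial sums are rescaled
    whenever a larger score appears.
    """
    inv_sqrt_d = 1.0 / math.sqrt(len(q))
    m, l, acc = -math.inf, 0.0, 0.0
    for start in range(0, len(keys), tile):
        scores = [dot(q, k) * inv_sqrt_d for k in keys[start:start + tile]]
        m_new = max(m, max(scores))
        correction = math.exp(m - m_new)  # 0.0 on the first tile
        probs = [math.exp(s - m_new) for s in scores]
        l = l * correction + sum(probs)
        acc = acc * correction + sum(p * v for p, v in zip(probs, values[start:start + tile]))
        m = m_new
    return acc / l


if __name__ == "__main__":
    random.seed(0)
    d, n = 8, 10
    q = [random.gauss(0, 1) for _ in range(d)]
    keys = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n)]
    values = [random.gauss(0, 1) for _ in range(n)]  # scalar values for brevity
    print(attention_reference(q, keys, values), attention_online(q, keys, values))
```

Both functions agree up to floating-point rounding for any tile size, which is the property that lets a fused kernel stream keys through fast on-chip memory.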

Communication substrate: NCCL and transport plugins

Multi-GPU training depends on efficient collective communication. NVIDIA Collective Communications Library (NCCL) implements collective operations (all-reduce, all-gather, reduce-scatter, all-to-all, and broadcast) as well as point-to-point send/receive, using topology-aware algorithms that exploit NVLink for intra-node communication and network transports for inter-node traffic. NCCL dynamically detects the communication topology and selects ring or tree algorithms depending on message size and available bandwidth. While data-parallel and tensor-parallel strategies rely primarily on all-reduce and all-gather, Mixture-of-Experts (MoE) models with expert parallelism depend on all-to-all collectives to route tokens between GPUs: a dispatch all-to-all sends each token to the GPU hosting its assigned expert, and a combine all-to-all returns expert outputs to the originating GPUs (NVIDIA Developer Blog). Because every GPU exchanges data with every other GPU in the expert-parallel group, all-to-all traffic grows with the number of routed tokens and their top-k fan-out, and a larger share of it crosses GPU and node boundaries as the expert-parallel degree increases; at high expert-parallelism degrees it can become a dominant bottleneck.
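The dispatch/combine pattern can be simulated in a single process to make the data movement concrete. In the sketch below, an all-to-all is modeled as transposing a per-rank matrix of send buffers; routing is top-1 with no capacity limits, and the rank/expert layout and toy experts are hypothetical. Production code would instead pack tokens into contiguous buffers and issue NCCL all-to-alls with per-peer split sizes (for example through `torch.distributed.all_to_all_single`) or use specialized dispatch kernels.

```python
def all_to_all(send):
    """Simulated all-to-all: send[src][dst] is what rank src sends to rank dst.

    Returns recv with recv[dst][src] == send[src][dst], i.e. a transpose.
    """
    world = len(send)
    return [[send[src][dst] for src in range(world)] for dst in range(world)]


def moe_forward(tokens_per_rank, experts_per_rank, experts):
    """Top-1 MoE layer: dispatch all-to-all, local expert compute, combine all-to-all.

    tokens_per_rank[r] holds (value, expert_id) pairs on rank r; expert e is
    hosted on rank e // experts_per_rank. Returns per-rank outputs in the
    original token order.
    """
    world = len(tokens_per_rank)
    # Dispatch: bucket tokens by destination rank, tagging each with its
    # original position so the combine step can restore order.
    send = [[[] for _ in range(world)] for _ in range(world)]
    for src, tokens in enumerate(tokens_per_rank):
        for pos, (value, expert) in enumerate(tokens):
            send[src][expert // experts_per_rank].append((pos, value, expert))
    received = all_to_all(send)

    # Expert compute: results[dst][src] holds what rank dst computed for rank src.
    results = [[[(pos, experts[e](v)) for pos, v, e in received[dst][src]]
                for src in range(world)] for dst in range(world)]

    # Combine: the reverse all-to-all returns results to the originating rank.
    returned = all_to_all(results)
    outputs = []
    for rank, tokens in enumerate(tokens_per_rank):
        out = [None] * len(tokens)
        for bucket in returned[rank]:
            for pos, y in bucket:
                out[pos] = y
        outputs.append(out)
    return outputs


if __name__ == "__main__":
    # Hypothetical setup: 2 ranks, 2 experts per rank; expert k scales by k + 1.
    experts = [lambda x, k=k: x * (k + 1) for k in range(4)]
    tokens = [[(1.0, 0), (2.0, 3)], [(3.0, 2), (4.0, 1)]]
    print(moe_forward(tokens, experts_per_rank=2, experts=experts))
```

Every rank sends a (possibly empty) bucket to every other rank in both phases, which is why the cost of these exchanges rises with the expert-parallel degree.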

On AWS, NCCL's inter-node communication is enabled through the aws-ofi-nccl plugin, which maps NCCL's transport APIs to libfabric interfaces. This allows NCCL to leverage EFA's OS-bypass and Scalable Reliable Datagram (SRD) protocol without application changes.

For inference workloads, collective operations do not capture all communication patterns. Disaggregated inference architectures—which separate prefill and decode phases onto distinct GPU pools—require efficient point-to-point data movement, particularly for transferring KV cache state between instances. NVIDIA Inference Xfer Library (NIXL) addresses this requirement by providing a unified API for point-to-point transfers across memory tiers (HBM, DRAM, NVMe, distributed storage) and interconnects (NVLink, InfiniBand, Ethernet). NIXL integrates with inference frameworks such as NVIDIA Dynamo and supports backends including UCX and GPUDirect Storage.
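A rough size estimate shows why KV cache transfer in disaggregated serving needs a high-bandwidth point-to-point path. The sketch below uses the standard per-token KV cache formula (2 tensors × layers × KV heads × head dimension × bytes per element); the model dimensions and the 50 GB/s link rate are hypothetical, and the transfer time ignores protocol overhead and any overlap with compute.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, tokens, bytes_per_elem=2):
    """KV cache size in bytes; the leading 2 counts the K and V tensors."""
    return 2 * num_layers * num_kv_heads * head_dim * tokens * bytes_per_elem


def transfer_seconds(num_bytes, link_gb_per_s):
    """Idealized transfer time at a sustained link rate given in GB/s (10**9 bytes/s)."""
    return num_bytes / (link_gb_per_s * 1e9)


if __name__ == "__main__":
    # Hypothetical grouped-query-attention model with a BF16 (2-byte) cache.
    layers, kv_heads, head_dim = 80, 8, 128
    per_token = kv_cache_bytes(layers, kv_heads, head_dim, tokens=1)
    prompt = kv_cache_bytes(layers, kv_heads, head_dim, tokens=32_768)
    print(f"{per_token / 2**10:.0f} KiB per token")
    print(f"{prompt / 2**30:.1f} GiB for a 32K-token prompt")
    print(f"{transfer_seconds(prompt, 50):.2f} s at a hypothetical 50 GB/s")
```

With these assumed dimensions, each token carries 320 KiB of KV state, so a single 32K-token prompt produces 10 GiB that must move from the prefill pool to a decode instance.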

ML frameworks: PyTorch

The two dominant frameworks for foundation model development are PyTorch and JAX. JAX takes an SPMD (Single Program Multiple Data) approach through XLA, where the same program executes across devices with automatic data distribution and collective lowering. This blog focuses on PyTorch, which sees broader adoption in the open-source ecosystem and forms the basis for the distributed training and inference frameworks discussed below.

PyTorch provides tensor computation with GPU acceleration, automatic differentiation, and a flexible eager-execution model. For distributed workloads, PyTorch's torch.distributed module provides the core primitives: process groups for collective communication, and distributed data-parallel abstractions including Distributed Data Parallel (DDP) and Fully Sharded Data Parallel (FSDP2). DDP replicates models across GPUs and synchronizes gradients via all-reduce, while FSDP2 shards parameters, gradients, and optimizer states across workers using techniques from the ZeRO algorithm, enabling training of models that exceed single-GPU memory capacity.
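The memory effect of sharding can be estimated with the model-state accounting from the ZeRO paper for mixed-precision Adam: 2 bytes per parameter for 16-bit weights, 2 for 16-bit gradients, and 12 for the FP32 master weights plus Adam's two moment estimates. The sketch below is an approximation under that assumption; it ignores activations, temporarily unsharded parameters during all-gathers, and framework buffers, and FSDP2's real footprint depends on its mixed-precision configuration. The function name is illustrative.

```python
# Bytes per parameter under mixed-precision Adam, following the ZeRO paper.
BYTES_PER_PARAM = {"params": 2, "grads": 2, "optimizer": 12}


def model_state_gb_per_gpu(num_params, world_size, sharded=("params", "grads", "optimizer")):
    """Approximate per-GPU model-state memory in GB (10**9 bytes).

    Each state listed in `sharded` is split evenly across world_size GPUs;
    the rest is replicated. sharded=() models DDP, ("optimizer",) models
    ZeRO stage 1, and the default models full sharding (ZeRO-3 / FSDP).
    """
    total_bytes = 0.0
    for state, nbytes in BYTES_PER_PARAM.items():
        divisor = world_size if state in sharded else 1
        total_bytes += num_params * nbytes / divisor
    return total_bytes / 1e9


if __name__ == "__main__":
    n, gpus = 70e9, 64  # hypothetical model size and data-parallel degree
    for label, sharded in [("DDP", ()), ("ZeRO-1", ("optimizer",)),
                           ("full sharding", ("params", "grads", "optimizer"))]:
        print(f"{label:>13}: {model_state_gb_per_gpu(n, gpus, sharded):,.1f} GB per GPU")
```

For a 70B-parameter model on 64 GPUs, replicating model state as DDP does needs about 1,120 GB per GPU, far beyond any single GPU's HBM, while sharding parameters, gradients, and optimizer state brings it to about 17.5 GB per GPU.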

Distributed training and inference frameworks

The top layer comprises frameworks that build on PyTorch to provide higher-level abstractions for distributed training and inference at scale. For training, three categories of frameworks address different points in the complexity-performance tradeoff. A few examples follow.

Hugging Face Transformers provides the Trainer class with built-in support for distributed training via Accelerate, which abstracts over DDP, FSDP, and DeepSpeed. This path prioritizes ease of use and broad model compatibility, making it suitable for fine-tuning and moderate-scale training where configuration simplicity matters more than maximum throughput.

NVIDIA Megatron Core targets maximum efficiency at scale, combining tensor, pipeline, and data parallelism (3D parallelism) with expert and context parallelism, along with optimizations such as FP8 mixed precision via Transformer Engine. The NeMo Framework builds on Megatron Core to provide end-to-end workflows for pre-training and fine-tuning.
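The parallelism degrees multiply: before context and expert parallelism are considered, a job's world size equals TP × PP × DP, and each global rank needs coordinates along every axis. The sketch below assumes TP is the fastest-varying dimension, then DP, then PP, and that consecutive ranks are launched on the same node; under those assumptions each 8-way tensor-parallel group lands on one 8-GPU node, where its frequent collectives run over NVLink. Megatron Core's actual rank ordering is configurable, so treat this layout and the function names as illustrative.

```python
def parallel_coords(rank, tp, pp, dp):
    """Map a global rank to (dp_rank, pp_rank, tp_rank).

    Assumed ordering: TP varies fastest, then DP, then PP, i.e.
    rank = tp_rank + tp * (dp_rank + dp * pp_rank).
    """
    world = tp * pp * dp
    if not 0 <= rank < world:
        raise ValueError(f"rank {rank} outside world of size {world}")
    tp_rank = rank % tp
    dp_rank = (rank // tp) % dp
    pp_rank = rank // (tp * dp)
    return dp_rank, pp_rank, tp_rank


def tensor_parallel_group(rank, tp):
    """Ranks sharing this rank's tensor-parallel group (contiguous under this ordering)."""
    first = rank - rank % tp
    return list(range(first, first + tp))


if __name__ == "__main__":
    # Hypothetical 512-GPU job on 64 eight-GPU nodes: TP=8, PP=4, DP=16.
    tp, pp, dp = 8, 4, 16
    print(parallel_coords(100, tp, pp, dp))  # (12, 0, 4)
    print(tensor_parallel_group(100, tp))    # ranks 96 through 103
```

Keeping TP innermost is a common choice because tensor-parallel collectives occur in every layer, whereas data-parallel gradient reductions and pipeline sends are less frequent and better tolerate crossing EFA.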