How to achieve truly serverless GPUs

Modal cut GPU inference server boot time 40x by snapshotting CUDA contexts directly into memory, taking replica spin-up from 2000 seconds to 50 seconds across 15 million production deployments.

Summary

Deep Dive

- Modal reduced GPU inference server replica spin-up from 2000+ seconds to ~50 seconds through four architectural optimizations spanning the entire stack

- Cloud buffers: Maintain idle, health-checked GPUs ready for immediate scheduling, removing tens of minutes of instance allocation latency from the hot path; GPU failure rates are significant enough to require active boot checks and weekly dcgmi diagnostics

- Custom filesystem (ImageFS): Built with libfuse to lazily load container images from a content-addressed, multi-tier cache (page cache → SSD → AZ cache → CDN → blob storage); most container files are never read, so lazy loading with metadata-first start cuts boot from minutes to ~100ms

- Disabled gzip compression (single-threaded DEFLATE bottlenecks at ~100 MB/s), tuned read_ahead_kb to 32 MB, achieving multi-GB/s throughput from cache

- CPU checkpoint/restore: Using gVisor's runsc runtime (emulates Linux kernel in userspace), they serialize container state to disk and restore 10x faster than cold start; snapshots are host-sensitive (e.g., AWS g6.12xlarge lacks pclmulqdq instruction), requiring multiple snapshots per deployment

- GPU memory snapshotting: Recent Nvidia drivers checkpoint device memory into host memory, which then gets checkpointed to disk, enabling full device state restoration including CUDA graphs and Torch compiler artifacts

- Typical speedups are 4-10x for GPU workloads — vLLM boot latency dropped from 95.7s to 13.8s mean, SGLang from 83.7s to 17.5s for 1 GiB models (Qwen 3 0.6B) across tens of thousands of cold starts

- GPU snapshots require adjustments (weight offloading before checkpoint, KV cache recreation) and are currently limited to single-GPU workloads (multi-GPU NCCL programs deadlock on pause)

- Weight loading bottleneck: billions to trillions of bytes at a few GB/s takes seconds to hundreds of seconds; could be >3x faster with an AZ weight server, >10x with RDMA over RoCE/InfiniBand

- Production usage Feb-April 2026: ~35M CPU snapshot replicas (>5M GPU-hours), ~15M CPU+GPU snapshot replicas (>2M GPU-hours) across hundreds of organizations

- Reducto case study: Document processing platform (known for indexing Jeffrey Epstein's JMail correspondence) scales to thousands of GPUs on-demand for enterprise jobs with tens-of-minutes deadlines

- System aggregates capacity from multiple cloud providers for low cost and high availability, requiring snapshot compatibility across heterogeneous instance types

- GPU Allocation Utilization (GPU-seconds running code ÷ GPU-seconds paid for) is distinct from nvidia-smi metrics and is the critical bottleneck for inference economics — industry average is <70% at peak, often 10-20% in practice

- Spiky demand from external user behavior creates high peak-to-average ratios that wreck economics with fixed allocations but are profitable with fast auto-scaling
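The allocation-utilization ratio and the weight-loading estimate in the list above both reduce to simple division. A minimal sketch follows; the replica timings, the 16 GB weight size, and the 2 GB/s bandwidth are illustrative placeholders, not figures from the article.

```python
def allocation_utilization(busy_gpu_seconds: float, paid_gpu_seconds: float) -> float:
    """GPU-seconds running application code divided by GPU-seconds paid for."""
    if paid_gpu_seconds <= 0:
        raise ValueError("paid_gpu_seconds must be positive")
    return busy_gpu_seconds / paid_gpu_seconds


def weight_load_seconds(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on load time: bytes to move divided by sustained bandwidth."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth_bytes_per_s must be positive")
    return weight_bytes / bandwidth_bytes_per_s


# Hypothetical replica: paid for one hour, ran application code for 12 minutes.
util = allocation_utilization(busy_gpu_seconds=12 * 60, paid_gpu_seconds=60 * 60)
print(f"allocation utilization: {util:.0%}")

# Hypothetical 16 GB of weights at an assumed 2 GB/s of sustained bandwidth.
load_s = weight_load_seconds(16e9, 2e9)
print(f"weight load time: {load_s:.1f} s")
```

Because boot time counts as paid but not busy GPU-seconds, shortening it raises this ratio directly for short-lived replicas.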

Decoder

- GPU Allocation Utilization: GPU-seconds spent running application code divided by GPU-seconds paid for — distinct from nvidia-smi "utilization" (fraction of time kernel code runs) and Model FLOP/s Utilization (algorithmic operations divided by arithmetic bandwidth)

- CRIU (Checkpoint/Restore In Userspace): Linux transparent checkpoint/restore system that serializes running process state (heap, threads, file descriptors) to recreate processes from storage faster than re-executing

- gVisor/runsc: Google container runtime that emulates the Linux kernel in userspace for security isolation, enabling checkpoint/restore without host kernel cooperation; its nvproxy component handles GPU driver communication

- libfuse: Library for writing Linux filesystems in userspace with kernel intermediation, simpler than kernel modules but with double context switches

- CUDA graphs: GPU execution graphs made up of pointers to tensors and kernels with no native serialization, expensive to recreate (tens of seconds to minutes) during inference engine startup

- Content-addressed cache: Cache indexed by file content hash rather than path, enables reuse when same files appear in different container layers or images

- vLLM/SGLang: Popular LLM inference servers that perform model loading, CUDA graph capture, and Torch compilation during startup
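The content-addressed, multi-tier cache described above can be sketched in a few lines. This is an illustration of the idea only, not Modal's ImageFS: in-memory dictionaries stand in for the real tiers, and the class and function names are hypothetical.

```python
import hashlib
from typing import Dict, List, Optional


def content_key(data: bytes) -> str:
    """Address a blob by the SHA-256 of its contents, not by its path."""
    return hashlib.sha256(data).hexdigest()


class TieredCache:
    """Looks a blob up tier by tier, fastest first, and fills faster tiers on a hit."""

    def __init__(self, tier_names: List[str]) -> None:
        self.tier_names = tier_names
        self.tiers: List[Dict[str, bytes]] = [{} for _ in tier_names]

    def put(self, data: bytes, tier: int) -> str:
        key = content_key(data)
        self.tiers[tier][key] = data
        return key

    def get(self, key: str) -> Optional[bytes]:
        for i, tier in enumerate(self.tiers):
            if key in tier:
                data = tier[key]
                # Promote into every faster tier so the next read is cheaper.
                for faster in self.tiers[:i]:
                    faster[key] = data
                return data
        return None


cache = TieredCache(["page cache", "SSD", "AZ cache", "CDN", "blob storage"])
key_a = cache.put(b"shared library bytes", tier=4)   # stored only in the slowest tier
key_b = content_key(b"shared library bytes")         # same bytes seen in another image
first_read = cache.get(key_a)                        # walks down to blob storage
```

Identical bytes hash to the same key regardless of which image or layer they came from, which is what lets one cached copy serve many containers.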

Original Article

Full article content is not available for inline reading.

Fast mode for Claude Opus 4.7

Anthropic launches Claude Opus 4.7 fast mode in research preview for API and seven developer tools, opt-in now but set to become default.

Summary

Original Article

Full article content is not available for inline reading.

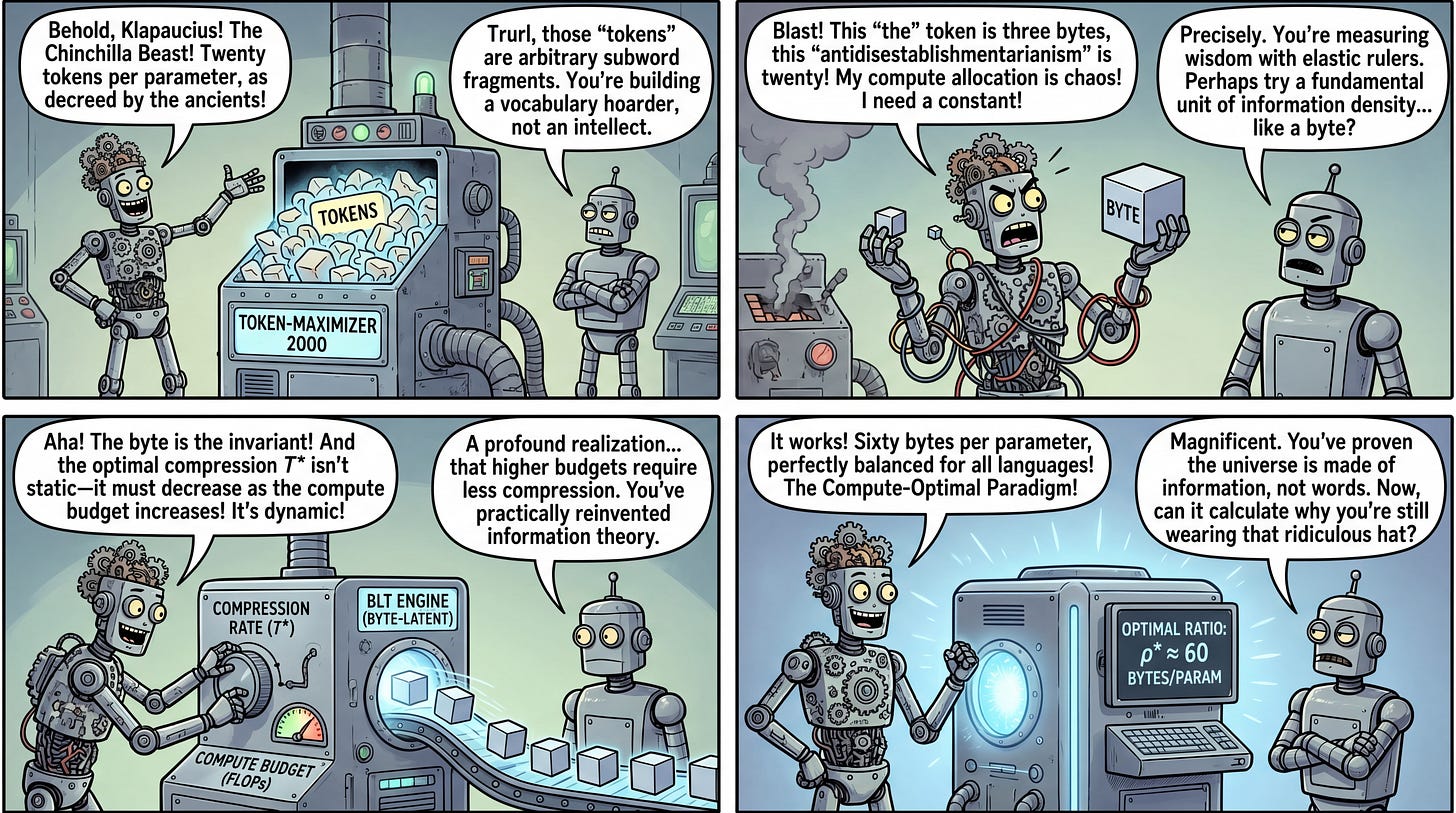

Compute Optimal Tokenization

Training 1,300 models revealed the industry-standard 20 tokens per parameter scaling law is a BPE tokenizer artifact, not a universal constant.

Summary

Deep Dive

- The authors trained 1,300 models across varying model sizes and compression rates (bytes per token) to systematically derive tokenizer-agnostic scaling laws

- The widely-cited Chinchilla law (20 tokens per parameter) is shown to be an artifact of specific BPE tokenizers, not a fundamental property of neural scaling

- Training data should scale proportionally to model parameters in bytes, not tokens, to account for variable information density across languages and tokenizers

- Optimal compression rate is compute-dependent: as FLOP budgets increase, lower compression (fewer bytes per token) becomes more efficient

- This framework provides a path to more efficient multilingual foundation models by optimizing tokenization as a dynamic scaling variable rather than a static preprocessing choice

- Project code and interactive tools available at co-tok.github.io for exploring compression-compute tradeoffs

Decoder

- Byte-Pair Encoding (BPE): A tokenization algorithm that iteratively merges the most frequent pairs of bytes/characters into single tokens. Used by most LLMs including GPT and LLaMA.

- Chinchilla scaling laws: Research from DeepMind establishing that optimal LLM training uses roughly 20 tokens per model parameter. Named after their 70B parameter Chinchilla model.

- Compression rate: In this context, the information density of tokens measured in bytes per token. Higher compression means each token encodes more raw information.

- FLOP budgets: Total floating-point operations allocated for training. Determines the compute cost/scale of a training run.

Original Article

Compute Optimal Tokenization

Authors: Tomasz Limisiewicz, Artidoro Pagnoni, Srini Iyer, Mike Lewis, Sachin Mehta, Alisa Liu, Margaret Li, Gargi Ghosh, Luke Zettlemoyer

Paper: https://arxiv.org/abs/2605.01188v1

Code: https://co-tok.github.io

TL;DR

WHAT was done? The authors systematically derived compression-aware neural scaling laws by training nearly 1,300 models to determine how information granularity (bytes per token) impacts optimal compute allocation.

WHY it matters? This work proves that the widely accepted heuristic of scaling models by 20 tokens per parameter is an artifact of specific subword tokenizers. Establishing a tokenizer-agnostic scaling law based on bytes provides a robust framework for maximizing compute efficiency across diverse languages and modalities.

Executive summary: For research teams optimizing large-scale pre-training runs, the tokenization scheme is often treated as a static preprocessing step. This paper reframes tokenization as a dynamic scaling variable. By optimizing the "compression rate" (information density), the authors demonstrate that training data should scale proportionally to model parameters in bytes, not tokens. Furthermore, they reveal that the optimal compression rate is compute-dependent, requiring lower compression as FLOP budgets scale up, thus offering a new blueprint for training highly efficient, massively multilingual foundation models.

Details

The Tokenization Artifact Bottleneck in Neural Scaling

Foundation model scaling is largely governed by established scaling laws, most notably the heuristic derived in Training Compute-Optimal Large Language Models (Chinchilla), which posits an optimal ratio of approximately 20 training tokens per model parameter. However, a critical blind spot in this heuristic is its reliance on a fixed tokenization scheme. Expressing data volume strictly in tokens ignores the variable information density that each token represents, essentially binding fundamental scaling behavior to the arbitrary mechanics of Byte-Pair Encoding (BPE) tokenizers. This study isolates the token as a variable to identify the true invariant in scaling behavior, exposing the extent to which popular tokenizers inherently skew compute allocation.
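A little arithmetic shows why a tokens-per-parameter rule depends on the tokenizer. The sketch below assumes two hypothetical compression rates (3.0 and 4.5 bytes per token) and a hypothetical budget of 90 bytes per parameter; none of these values come from the paper.

```python
def training_bytes(params: float, tokens_per_param: float, bytes_per_token: float) -> float:
    """Raw data volume implied by a tokens-per-parameter budget."""
    return params * tokens_per_param * bytes_per_token


def tokens_per_param_for(bytes_per_param: float, bytes_per_token: float) -> float:
    """Tokens-per-parameter ratio that delivers a fixed bytes-per-parameter budget."""
    return bytes_per_param / bytes_per_token


PARAMS = 1e9  # hypothetical 1B-parameter model

# The same "20 tokens per parameter" rule under two hypothetical tokenizers.
for bpt in (3.0, 4.5):
    gigabytes = training_bytes(PARAMS, 20, bpt) / 1e9
    print(f"{bpt} bytes/token -> {gigabytes:.0f} GB of raw text")

# Holding bytes per parameter fixed instead makes the token ratio vary.
for bpt in (3.0, 4.5):
    ratio = tokens_per_param_for(90, bpt)
    print(f"{bpt} bytes/token -> {ratio:.0f} tokens per parameter")
```

Under these assumed numbers, the fixed 20-token rule feeds the model 50% more raw text with the higher-compression tokenizer, which is the kind of tokenizer dependence the byte-based formulation is meant to remove.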

Reinforcing Recursive Language Models

RL fine-tuning closes the gap: Daniel Kim and Rehaan Ahmad's 4B Qwen matches Claude Sonnet 4.6 on evidence selection, 8x faster.

Summary

Deep Dive

- Uses GRPO (Group Relative Policy Optimization) where child RLM rollouts inherit the advantage of parent rollouts, enabling single-policy training instead of separate parent/child models

- Training pipeline requires cold-start SFT on teacher traces from Qwen3.5-397B-A17B before RL because small models cannot bootstrap RLM behavior from prompting alone (0 pass@16 without SFT)

- Step-wise training treats each turn as a separate sample since the RLM scaffold rewrites the user prompt per turn rather than accumulating chat history, so an N-turn rollout produces N training samples

- Rubric-based LLM judges for reward assignment proved more robust than F1 span-overlap metrics, which were too noisy when multiple valid answer spans exist for the same question

- REPL environment exposes built-in functions (list_papers, search, extract_section, get_paper_abstract) and RLM-specific calls (FINAL, rlm_query, rlm_query_batched for parallel sub-agent spawning)

- Evidence selection task: given a question and up to 10 arXiv papers, return variable-length verbatim text spans that answer the question (RAG unsuitable due to dynamic span requirements)

- Training objective includes normalization term (1/k_g) when summing child contributions to prevent gradient domination when many children spawned, keeping contributions balanced across RLM tree depth

- Dataset consists of 1000 synthetically generated queries over paper groups, with a frontier model generating questions and selecting relevant paragraphs as ground truth from semantically similar high-upvoted papers

- Models see noisy PDF-parsed text at test time (not clean OCR) to mimic production settings where running OCR on every new document is prohibitively expensive

- Ablation with reduced prompt (200 vs 1500 tokens describing task strategy) converges slightly lower and less stably, suggesting current models still need explicit strategy guidance but future RLM-native models could discover strategies autonomously

- Training performed on single 8xH200 node with batch size 16 and 8 samples per prompt, supporting up to 512 concurrent rollouts across parent and child RLMs

- Recursive extension supports arbitrary RLM depth using recursive subtree loss formulation where each node contributes its own loss plus averaged contributions from all children
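The recursive subtree loss in the last bullet, together with the 1/k_g normalization mentioned earlier in the list, can be sketched as a tree recursion. This reads the summary literally (own loss plus the mean of the children's subtree losses); the authors' exact weighting may differ, and the `RolloutNode` type and its `loss` field are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RolloutNode:
    """One RLM rollout; `loss` stands in for its own policy-gradient loss term."""
    loss: float
    children: List["RolloutNode"] = field(default_factory=list)


def subtree_loss(node: RolloutNode) -> float:
    """Own loss plus the mean of the children's subtree losses (the 1/k factor)."""
    if not node.children:
        return node.loss
    k = len(node.children)
    return node.loss + sum(subtree_loss(child) for child in node.children) / k


# A parent that spawned three sub-agents, one of which spawned two more.
tree = RolloutNode(1.0, [
    RolloutNode(0.5),
    RolloutNode(0.5, [RolloutNode(2.0), RolloutNode(4.0)]),
    RolloutNode(2.0),
])
total = subtree_loss(tree)
print(total)
```

Dividing by the number of children keeps a rollout that spawns many sub-agents from dominating the gradient relative to one that spawns few.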

Decoder

- RLM (Recursive Language Model): Inference strategy where a language model operates in a programmatic environment (typically a REPL) and can spawn copies of itself as sub-agents to decompose long-context or complex tasks, recursively calling itself with different prompts and contexts

- REPL (Read-Eval-Print-Loop): In RLM context, a Python environment where the model writes code each turn, the system executes it, and results are returned as the next user message—code becomes the primary interface for inspecting and transforming data, not just another tool

- GRPO (Group Relative Policy Optimization): RL algorithm that computes advantages for each rollout relative to a group of sampled rollouts (group statistics) rather than using a learned value function baseline

- Advantage inheritance: Training technique where child RLM rollouts inherit the sequence-level advantage computed for their parent rollout, enabling single-policy training across the entire RLM tree depth without separate reward signals for each child trajectory

- Step-wise training: Each turn in a multi-turn RLM rollout becomes a separate training sample (necessary when turns don't share prefixes because user prompt is rewritten per turn), with the final turn's advantage broadcast backward to previous turns
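The three training ideas defined above (group-relative advantages, advantage inheritance, and step-wise broadcasting) can be illustrated with a small sketch. The reward values are made up, and the choice of population standard deviation with a small epsilon is an assumption rather than a detail from the article.

```python
from statistics import mean, pstdev
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Advantage of each rollout relative to its sampling group: (r - mean) / (std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def inherit_advantage(parent_advantage: float, num_children: int) -> List[float]:
    """Child rollouts reuse the sequence-level advantage of their parent."""
    return [parent_advantage] * num_children


def broadcast_to_turns(advantage: float, num_turns: int) -> List[float]:
    """Step-wise training: each turn is its own sample and carries the rollout's advantage."""
    return [advantage] * num_turns


rewards = [1.0, 0.0, 0.0, 1.0]                 # hypothetical judge scores for one prompt
advantages = group_relative_advantages(rewards)
child_advantages = inherit_advantage(advantages[0], num_children=3)
turn_advantages = broadcast_to_turns(advantages[1], num_turns=4)
```

No learned value function appears anywhere: the baseline is just the mean reward of the group sampled for the same prompt.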

Original Article

Full article content is not available for inline reading.

Cactus Needle (GitHub Repo)

Cactus Compute distilled Gemini 3.1 into a 26M-parameter model that outperforms Qwen-0.6B at single-shot function calling and runs at 6,000 tokens/sec prefill on Cactus.

Summary

Deep Dive

- 26M-parameter Simple Attention Network distilled from Gemini 3.1 using encoder-decoder architecture (12 encoder, 8 decoder layers)

- Encoder uses self-attention with GQA and RoPE but no feedforward network; decoder adds cross-attention and tool calling head

- Pretrained on 200B tokens over 27 hours on 16 TPU v6e, then post-trained on 2B tokens of function call data in 45 minutes

- Runs on Cactus at 6,000 tokens/sec prefill and 1,200 tokens/sec decode; weights fully open at Cactus-Compute/needle

- Beats FunctionGemma-270m, Qwen-0.6B, Granite-350m, and LFM2.5-350m on single-shot function calling for personal AI

- Includes web playground UI for testing and finetuning on custom tools at the click of a button

- Designed for consumer devices (phones, watches, glasses) prioritizing local inference over conversational scope

- Architecture uses ZCRMSNorm, gated residuals, tied embeddings, d=512, 8 heads/4 KV heads, 8192 BPE vocab

- CLI supports finetuning on custom JSONL data, data generation via Gemini, evaluation, and TPU management

- Authors acknowledge small models can be "finicky" and recommend testing on own tools before deployment

Decoder

- Simple Attention Network: Novel architecture class designed for efficient inference on consumer devices, using encoder-decoder structure without traditional feedforward networks in the encoder

- GQA (Grouped Query Attention): Memory-efficient attention mechanism that groups multiple query heads to share key-value pairs, reducing KV cache size

- RoPE (Rotary Position Embeddings): Position encoding technique that applies rotation matrices to queries and keys, enabling better length extrapolation

- ZCRMSNorm: Zero-centered Root Mean Square normalization, a normalization layer variant
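To make the GQA entry concrete, the sketch below works through the head arithmetic for the stated configuration (d=512, 8 query heads, 4 KV heads). It assumes each head has width d divided by the number of query heads, and that consecutive query heads share a KV head; both are common conventions, not details confirmed by the Needle repo.

```python
D_MODEL = 512      # from the summary: d=512
N_Q_HEADS = 8      # 8 query heads
N_KV_HEADS = 4     # 4 key/value heads

head_dim = D_MODEL // N_Q_HEADS          # assumed width of each attention head
group_size = N_Q_HEADS // N_KV_HEADS     # query heads sharing one KV head


def kv_head_for(query_head: int) -> int:
    """Index of the KV head that a given query head reads from."""
    return query_head // group_size


def kv_values_per_token(n_kv_heads: int, dim: int) -> int:
    """Keys plus values cached per token, per layer."""
    return 2 * n_kv_heads * dim


mapping = {q: kv_head_for(q) for q in range(N_Q_HEADS)}
gqa_cache = kv_values_per_token(N_KV_HEADS, head_dim)
mha_cache = kv_values_per_token(N_Q_HEADS, head_dim)   # same model without grouping

print(mapping)
print(f"cached values per token per layer: GQA={gqa_cache}, MHA={mha_cache}")
```

With four KV heads instead of eight, the per-token cache is half the size of the ungrouped equivalent.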

Original Article

Needle

We distilled Gemini 3.1 into a 26m parameter "Simple Attention Network" that you can even finetune locally on your Mac/PC. In production, Needle runs on Cactus at 6000 toks/sec prefill and 1200 decode speed. Weights are fully open on Cactus-Compute/needle, as well as the dataset generation.

d=512, 8H/4KV, BPE=8192

                                     ┌──────────────┐
                                     │  Tool Call   │
                                     └──────┬───────┘
                                           ┌┴──────────┐
                                           │  Softmax  │
                                           └─────┬─────┘
                                           ┌─────┴─────┐
                                           │ Linear (T)│ ← tied
                                           └─────┬─────┘
                                           ┌─────┴─────┐
                                           │ ZCRMSNorm │
                                           └─────┬─────┘
                                        ┌────────┴────────┐
                                        │   Decoder x 8   │
                                        │┌───────────────┐│
                                        ││ ZCRMSNorm     ││
                                        ││ Masked Self   ││
                                        ││ Attn + RoPE   ││
                                        ││ Gated Residual││
                                        │├───────────────┤│
    ┌──────────────┐                    ││ ZCRMSNorm     ││
    │ Encoder x 12 │──────────────────────▶Cross Attn    ││
    │              │                    ││ Gated Residual││
    │ ┌──────────┐ │                    │└───────────────┘│
    │ │ZCRMSNorm │ │                    └────────┬────────┘
    │ │Self Attn │ │                       ┌─────┴─────┐
    │ │ GQA+RoPE │ │                       │ Embedding │ ← shared
    │ │Gated Res │ │                       └─────┬─────┘
    │ │          │ │                     ┌───────┴────────┐
    │ │ (no FFN) │ │                     │[EOS]<tool_call>│
    │ └──────────┘ │                     │ + answer       │
    │              │                     └────────────────┘
    └──────┬───────┘
           │
      ┌────┴──────┐
      │ Embedding │
      └────┬──────┘
           │
      ┌────┴──────┐
      │   Text    │
      │   query   │
      └───────────┘

- Pretrained on 16 TPU v6e for 200B tokens (27hrs).

- Post-trained on 2B tokens of single-shot function call dataset (45mins).

Needle is an experimental run for Simple Attention Networks, geared at redefining tiny AI for consumer devices (phones, watches, glasses...). So while it beats FunctionGemma-270m, Qwen-0.6B, Granite-350m, and LFM2.5-350m on single-shot function calling for personal AI, those models have more scope/capacity and excel in conversational settings. Also, small models can be finicky. Please use the UI in the next section to test on your own tools, and finetune accordingly, at the click of a button.

Quickstart

    git clone https://github.com/cactus-compute/needle.git
    cd needle && source ./setup
    needle playground

Opens a web UI at http://127.0.0.1:7860 where you can test and finetune on your own tools. Weights are auto-downloaded.

Usage (Python)

    from needle import SimpleAttentionNetwork, load_checkpoint, generate, get_tokenizer

    params, config = load_checkpoint("checkpoints/needle.pkl")
    model = SimpleAttentionNetwork(config)
    tokenizer = get_tokenizer()

    result = generate(
        model, params, tokenizer,
        query="What's the weather in San Francisco?",
        tools='[{"name":"get_weather","parameters":{"location":"string"}}]',
        stream=False,
    )
    print(result)
    # [{"name":"get_weather","arguments":{"location":"San Francisco"}}]

Finetuning

    # Playground (generates data via Gemini, trains, evaluates, bundles result)
    needle playground

    # CLI (auto-downloads weights if not local)
    needle finetune data.jsonl

CLI

    needle playground                 Test and finetune via web UI
    needle finetune <data.jsonl>      Finetune on your own data
    needle run --query "..." --tools  Single inference
    needle train                      Full training run
    needle pretrain                   Pretrain on PleIAs/SYNTH
    needle eval --checkpoint <path>   Evaluate a checkpoint
    needle tokenize                   Tokenize dataset
    needle generate-data              Synthesize training data via Gemini
    needle tpu <action>               TPU management (see docs/tpu.md)

    @misc{ndubuaku2026needle,
      title={Needle},
      author={Henry Ndubuaku, Jakub Mroz, Karen Mosoyan, Roman Shemet, Parkirat Sandhu, Satyajit Kumar, Noah Cylich, Justin H. Lee},
      year={2026},
      url={https://github.com/cactus-compute/needle}
    }

Building Self-Repairing Agent Loops

OpenAI demonstrates agent loops that automatically fix stale code examples through structured review, repair, and validation cycles until tests pass.

Summary

Deep Dive

- Three-phase loop architecture: review inspects without editing and returns structured findings; repair applies focused changes using findings plus latest validation feedback; validate runs checks and reports what still needs work

- Uses Codex CLI (@openai/codex npm package version 0.130.0) in headless mode so repair steps run from Python cells instead of interactive chat UI

- Structured outputs with JSON schemas at each phase: findings schema (artifact, issue_type, severity, description, suggested_fix_direction), fix schema (changes_made, unresolved_items, updated_artifact_path), validation schema (overall_passed, cases array, remaining_delta)

- Business rules define what good looks like: current API patterns (client.responses.create not chat.completions.create, modern tools schema not legacy function-calling, qdrant.query_points not qdrant.search, current Evals API not oaieval CLI), runnable local setup, preserved teaching goals

- Three example notebooks with increasing repair depth: one-pass (modernize Qdrant query path), two-pass (modernize Evals flow then remove result-log brittleness using validation feedback), three-pass (modernize model/API shape, tighten runnable setup, restore full retrieval teaching flow)

- Validation executes each repaired notebook end-to-end; failures become structured feedback for next iteration rather than terminal errors

- Models: defaults to gpt-5.4-mini for speed (configurable via REPAIR_MODEL env var), uses gpt-5.5 for COOKBOOK_CHAT_MODEL, supports REPAIR_REASONING_EFFORT setting

- Separation of concerns keeps each phase focused: review doesn't edit files, repair doesn't validate, validate doesn't prescribe fixes

- Working Python code loads sample notebooks, extracts metadata (target_iteration, repair_depth), runs review/repair/validate cycle tracking iterations until validation passes or limit reached

- Setup: npm install -g @openai/codex, set OPENAI_API_KEY, download companion data/docs folder with pre-repair sample notebooks

- Pattern is domain-agnostic: substitute notebook execution with your validation method (unit test suite, schema validator, policy checker, simulation harness, approval workflow)

- Structured handoffs between phases make the loop debuggable, rerunnable, and adaptable to other artifact types beyond notebooks
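The review, repair, and validate cycle described above can be sketched as a plain loop over three callables. This illustrates the control flow only, not the cookbook's code: it does not invoke Codex CLI, the `Validation` fields mirror just two of the schema fields named in the summary, and the toy phase functions are hypothetical stand-ins.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Validation:
    overall_passed: bool
    remaining_delta: List[str] = field(default_factory=list)


def repair_loop(
    artifact: str,
    review: Callable[[str], List[str]],
    repair: Callable[[str, List[str], List[str]], str],
    validate: Callable[[str], Validation],
    max_iterations: int = 3,
) -> Tuple[str, int, bool]:
    """Cycle review -> repair -> validate until checks pass or the limit is reached."""
    feedback: List[str] = []
    for iteration in range(1, max_iterations + 1):
        findings = review(artifact)                       # inspects, never edits
        artifact = repair(artifact, findings, feedback)   # edits, never validates
        result = validate(artifact)                       # checks, never prescribes fixes
        if result.overall_passed:
            return artifact, iteration, True
        feedback = result.remaining_delta                 # failures feed the next pass
    return artifact, max_iterations, False


# Toy phases operating on a string instead of a notebook.
def toy_review(text: str) -> List[str]:
    return ["legacy chat.completions.create call"] if "chat.completions.create" in text else []


def toy_repair(text: str, findings: List[str], feedback: List[str]) -> str:
    return text.replace("chat.completions.create", "responses.create") if findings else text


def toy_validate(text: str) -> Validation:
    passed = "chat.completions.create" not in text
    return Validation(passed, [] if passed else ["legacy call still present"])


fixed, passes, ok = repair_loop(
    "client.chat.completions.create(...)", toy_review, toy_repair, toy_validate
)
print(fixed, passes, ok)
```

Swapping `validate` for a unit test suite or schema validator changes the domain without touching the loop, which is the adaptability the list above points to.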

Decoder

- Codex CLI: OpenAI's agent orchestration tool (npm package @openai/codex), distinct from the deprecated Codex code-generation model. Runs agent workflows programmatically with structured JSON schemas for input/output rather than free-form chat, enabling headless automation.

Original Article

Full article content is not available for inline reading.

AI for the Real World: A conversation with Yann LeCun

Yann LeCun argues LLMs will never reach human-level intelligence because language represents only a tiny fraction of human understanding.

Summary

Decoder

- World models: AI systems that learn abstract representations of how the physical world works (physics, causality, cause-and-effect) rather than just predicting text sequences. Enables AI to simulate outcomes and plan actions in real environments.

Original Article

Full article content is not available for inline reading.

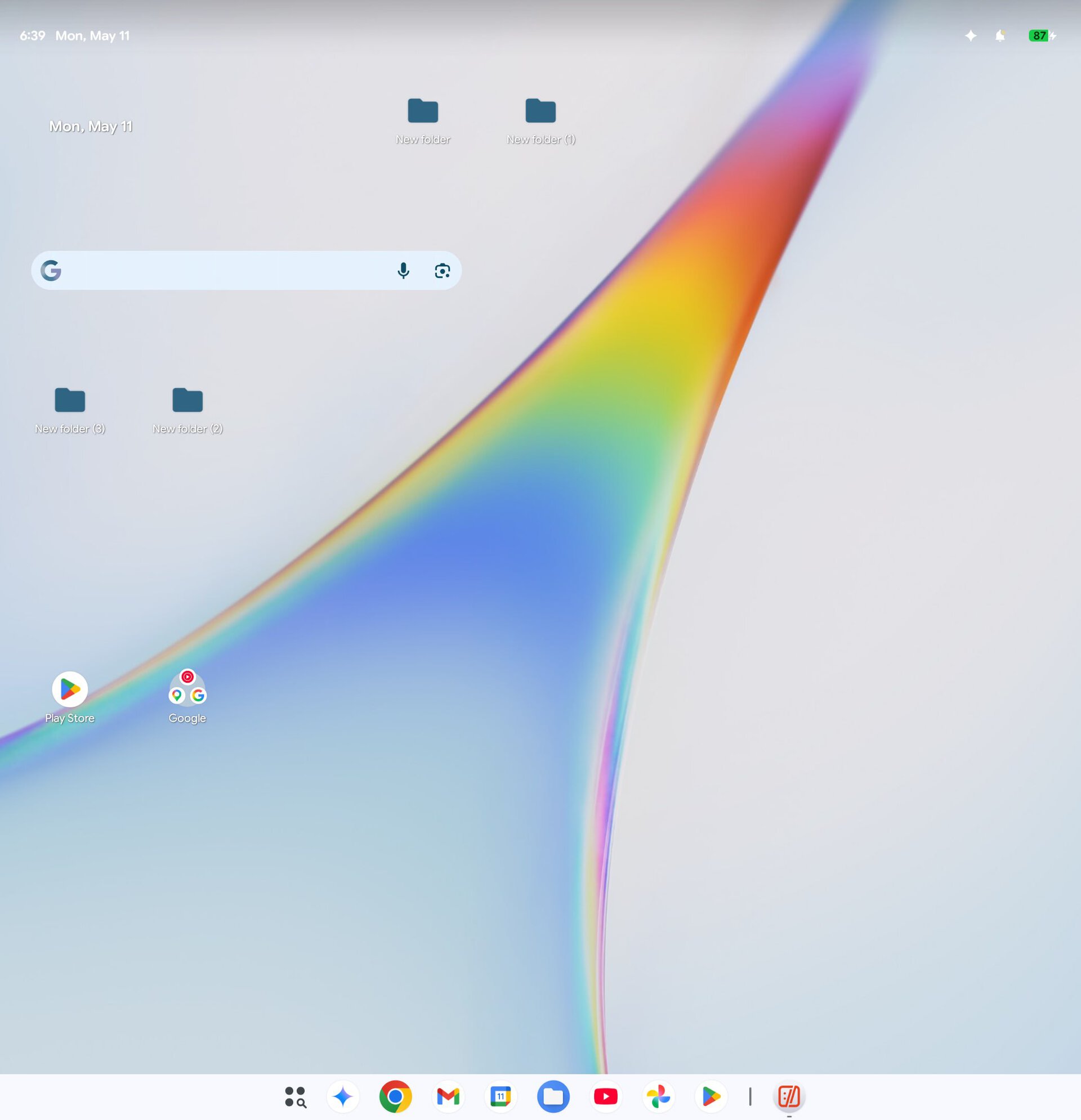

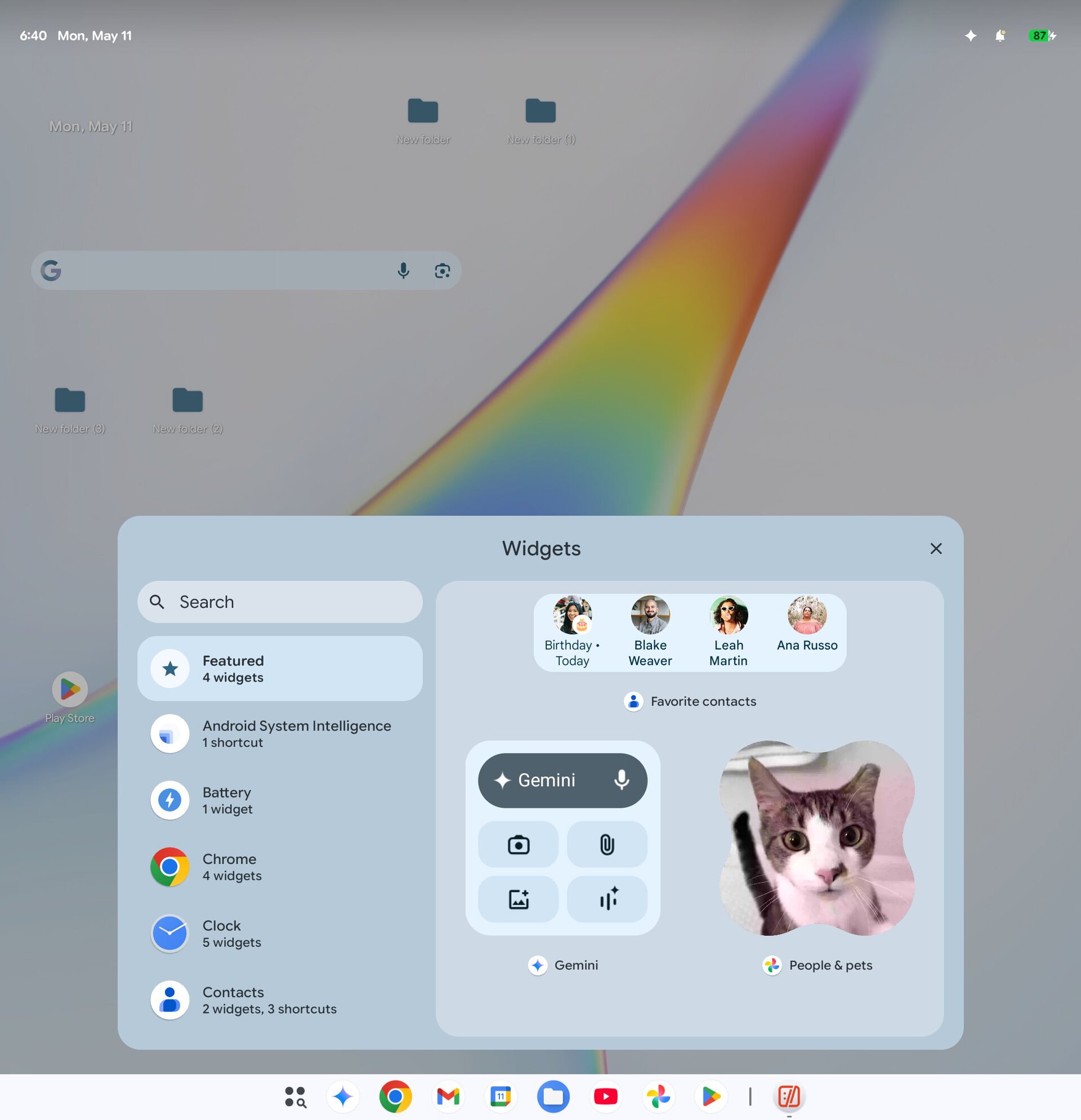

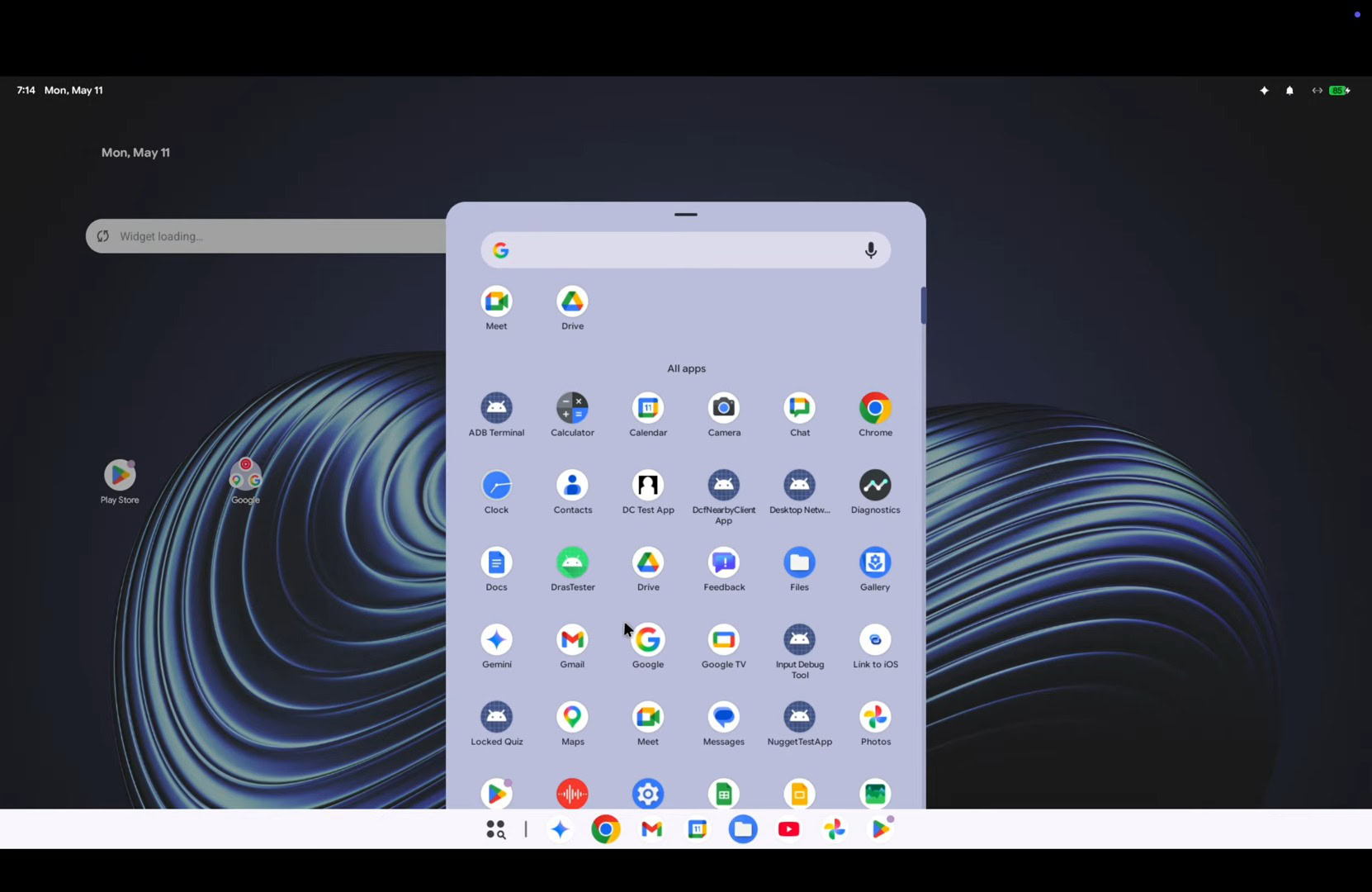

Gemini Intelligence Comes to Android

Google's Gemini Intelligence brings autonomous task agents to Android that shop across apps, browse the web, and create widgets from natural language.

Summary

Deep Dive

- Google unveiled Gemini Intelligence at Android Show: I/O Edition with autonomous AI features that perform multi-step tasks across Android apps

- Cross-app automation example: press power button, describe task like "copy my grocery list and add items to my shopping cart," Gemini completes it with confirmation before checkout

- Auto-browse feature (experimentally introduced in January) now rolling out to Android for booking appointments and completing web-based tasks

- Gemini in Chrome coming to Android in late June for page summarization and Q&A, matching desktop functionality

- Personal Intelligence form autofill learns user details and fills forms automatically (opt-in, can be disabled in settings)

- Rambler feature in Gboard uses multimodal AI for dictation that transcribes speech, removes filler words, and formats text

- Natural language widget creation lets users build custom Android widgets by describing them (e.g., "Suggest three high-protein meal prep recipes every week")

- Widget creation similar to tool released by hardware startup Nothing last year

- All features follow the Material 3 Expressive design language and launch first on the latest Samsung Galaxy and Google Pixel devices in summer 2026

- Broader Android device rollout scheduled for later in 2026

Decoder

- Agentic AI: AI systems that autonomously complete multi-step tasks across applications without constant user guidance, acting as agents on the user's behalf (e.g., booking appointments, managing shopping workflows).

- Vibe-coding: Creating software, UI components, or widgets by describing them to an AI model in natural language rather than writing the code by hand.

Original Article

Google announced a number of new Gemini Intelligence-branded AI features at its Android Show: I/O Edition event on Tuesday. These include the ability for AI to complete tasks across apps, browse the web, fill out forms, dictate speech, and even allow you to vibe-code your own Android widgets.

Gemini gets more powerful

The company had already introduced some agentic capabilities, such as ordering food or booking a ride, to Gemini at the Samsung Galaxy S26 launch earlier this year. There, Google announced that Gemini would soon be able to perform more complex tasks, like booking a front-row bike for a spin class, finding a class syllabus in Gmail, and then searching for books related to that topic.

Now Google's AI assistant will be able to handle a multistep process, like copying a grocery list from your notes app, then adding items to the cart in your shopping app. To use this feature, you'll press the phone's power button and describe the task. Meanwhile, the content on the phone's screen acts as the context for the assistant. Google noted that Gemini will wait for your final confirmation to complete the checkout.

In addition, a feature first introduced in January had allowed Gemini to browse the web for you and complete tasks like booking an appointment, as part of an experimental rollout. Today, Google said this auto-browse feature is making its way to Android, too.

In late June, Android devices will also get Gemini in Chrome, an AI feature that will help users summarize content or ask questions about what is on the web page, similar to how Gemini in Chrome works on the desktop.

Another small but useful addition is that Gemini will be able to fill out forms on your behalf after learning details about you through Personal Intelligence. (Google said this feature is opt-in, and you can turn it off via settings anytime.)

Plus, Gemini will come to Android's Gboard keyboard. Google is using Gemini's multimodal capabilities by introducing a feature called Rambler in Gboard, which is similar to those found in other AI-powered dictation apps. The feature will let you speak in your own tone, transcribe the speech, and format it by removing filler words.

Vibe-code your own Android widgets

Vibe-coding apps are picking up pace, and Google wants to give Android users a taste of this, too.

The company is introducing a way for users to build Android widgets by describing them in natural language. For example, users can build a meal-planning widget using query text like, "Suggest three high-protein meal prep recipes every week."

The idea of creating a widget is not novel to Gemini. Notably, the hardware startup Nothing also released a similar tool last year.

Google said that Gemini Intelligence will follow the company's Material 3 expressive design language in its features.

The company said that these AI-powered features will first make their way to the latest Samsung Galaxy and Google Pixel devices this summer and will be available across other Android devices later this year.

Claude for Legal (GitHub Repo)

Anthropic released Claude for Legal on GitHub with 12 practice-area plugins, 100+ workflow agents, and a security-reviewed marketplace for community legal skills.

Summary

Deep Dive

- 12 practice-area plugins each include a cold-start interview that learns your playbook and writes a practice profile (CLAUDE.md) that every skill in that plugin reads from

- Named workflow agents include Vendor Agreement Reviewer, DSAR Responder, Claim Chart Builder, Termination Reviewer, NDA Triager, Board Consent Drafter, and 100+ others

- MCP connectors wire Claude to legal systems: Thomson Reuters CoCounsel (Westlaw Deep Research), Ironclad (contract lifecycle management), DocuSign, iManage (document management), Everlaw (e-discovery), CourtListener (federal dockets)

- Dual deployment: install as interactive Claude Cowork/Code plugin OR deploy via Managed Agents API for scheduled/headless workflows (renewal watcher, docket watcher, regulatory feed monitor)

- Built-in guardrails reflect attorney-review requirement: source attribution on every citation, conservative defaults on privilege and subjective legal calls, jurisdiction assumptions surfaced, explicit gates before anything is filed or sent

- Microsoft 365 integration: contract review skills output Word tracked changes preserving styles and numbering, diligence skills output Excel workbooks with citation columns

- legal-builder-hub provides a trust layer for community skills: injection detection, hidden-content scans, source allowlists, license gates (personal/firm/commercial), freshness tracking for bundled regulatory content, install audit logs

- Scheduled agents run on cron cadence: renewal watcher scans contract registers for cancel-by deadlines, docket watcher monitors court filings, reg-change monitor polls regulatory feeds and writes Monday digests

- All skills are markdown files with YAML frontmatter, no build step — customize by editing practice profiles or forking skills directly

- Example workflows: tabular M&A diligence (one row per document, every cell cited to source), element-by-element patent claim charts, privacy triage (PIA vs mandatory DPIA vs proceed), AI impact assessments across regulatory regimes

Decoder

- DSAR: Data Subject Access Request — GDPR right for individuals to request a copy of their personal data a company holds

- PIA: Privacy Impact Assessment — internal review of how a project affects user privacy

- DPIA: Data Protection Impact Assessment — mandatory GDPR assessment required for high-risk data processing activities

- DPA: Data Processing Agreement — contract between data controller and processor defining how personal data is handled under GDPR

- FTO: Freedom To Operate — patent clearance analysis to determine if launching a product would infringe existing patents

- VDR: Virtual Data Room — secure online repository for sharing confidential documents during M&A due diligence

- MCP: Model Context Protocol — Anthropic's standard for connecting Claude to external data sources and tools

Original Article

Full article content is not available for inline reading.

Qwen-Image-2.0 Technical Report

Qwen-Image-2.0 generates slides, posters, and comics from 1K-token prompts with accurate multilingual typography and photorealistic rendering in a unified model.

Summary

Deep Dive

- Qwen-Image-2.0 unifies high-fidelity image generation and precise editing in a single framework, addressing previous model limitations

- Architecture couples Qwen3-VL (vision-language model) as condition encoder with a Multimodal Diffusion Transformer for joint condition-target modeling

- Supports instruction prompts up to 1,000 tokens, enabling complex compositional requirements beyond what previous models handled

- Generates text-rich content including presentation slides, marketing posters, data infographics, and comic panels with accurate embedded text rendering

- Significantly improves multilingual text fidelity and typography across different scripts and languages, a persistent challenge in image generation

- Enhances photorealistic generation with richer details, more realistic textures, and coherent lighting compared to prior Qwen-Image models

- Training uses large-scale data curation and a customized multi-stage pipeline to balance multimodal understanding with flexible generation and editing capabilities

- Addresses key limitations: ultra-long text rendering, multilingual typography, high-resolution photorealism, robust instruction following, and efficient deployment

- Human evaluations show substantial improvements over previous Qwen-Image models in both generation and editing tasks across diverse scenarios

- Handles compositionally complex scenarios better than existing models, particularly in text-rich and multilingual contexts

- 57 co-authors from the Qwen team contributed to the technical report

- Submitted to arXiv on May 11, 2026 as cs.CV (Computer Vision and Pattern Recognition)

Decoder

- Diffusion Transformer: Neural architecture combining diffusion models (which generate images by iteratively denoising random noise) with transformer attention mechanisms for better quality and control

- Condition encoder: Component that processes input instructions or reference images into embeddings that guide the generation process

- Qwen3-VL: Qwen team's vision-language model that understands both images and text, used here to interpret user prompts and reference images

Original Article

Qwen-Image-2.0 Technical Report

We present Qwen-Image-2.0, an omni-capable image generation foundation model that unifies high-fidelity generation and precise image editing within a single framework. Despite recent progress, existing models still struggle with ultra-long text rendering, multilingual typography, high-resolution photorealism, robust instruction following, and efficient deployment, especially in text-rich and compositionally complex scenarios. Qwen-Image-2.0 addresses these challenges by coupling Qwen3-VL as the condition encoder with a Multimodal Diffusion Transformer for joint condition-target modeling, supported by large-scale data curation and a customized multi-stage training pipeline. This enables strong multimodal understanding while preserving flexible generation and editing capabilities. The model supports instructions of up to 1K tokens for generating text-rich content such as slides, posters, infographics, and comics, while significantly improving multilingual text fidelity and typography. It also enhances photorealistic generation with richer details, more realistic textures, and coherent lighting, and follows complex prompts more reliably across diverse styles. Extensive human evaluations show that Qwen-Image-2.0 substantially outperforms previous Qwen-Image models in both generation and editing, marking a step toward more general, reliable, and practical image generation foundation models.

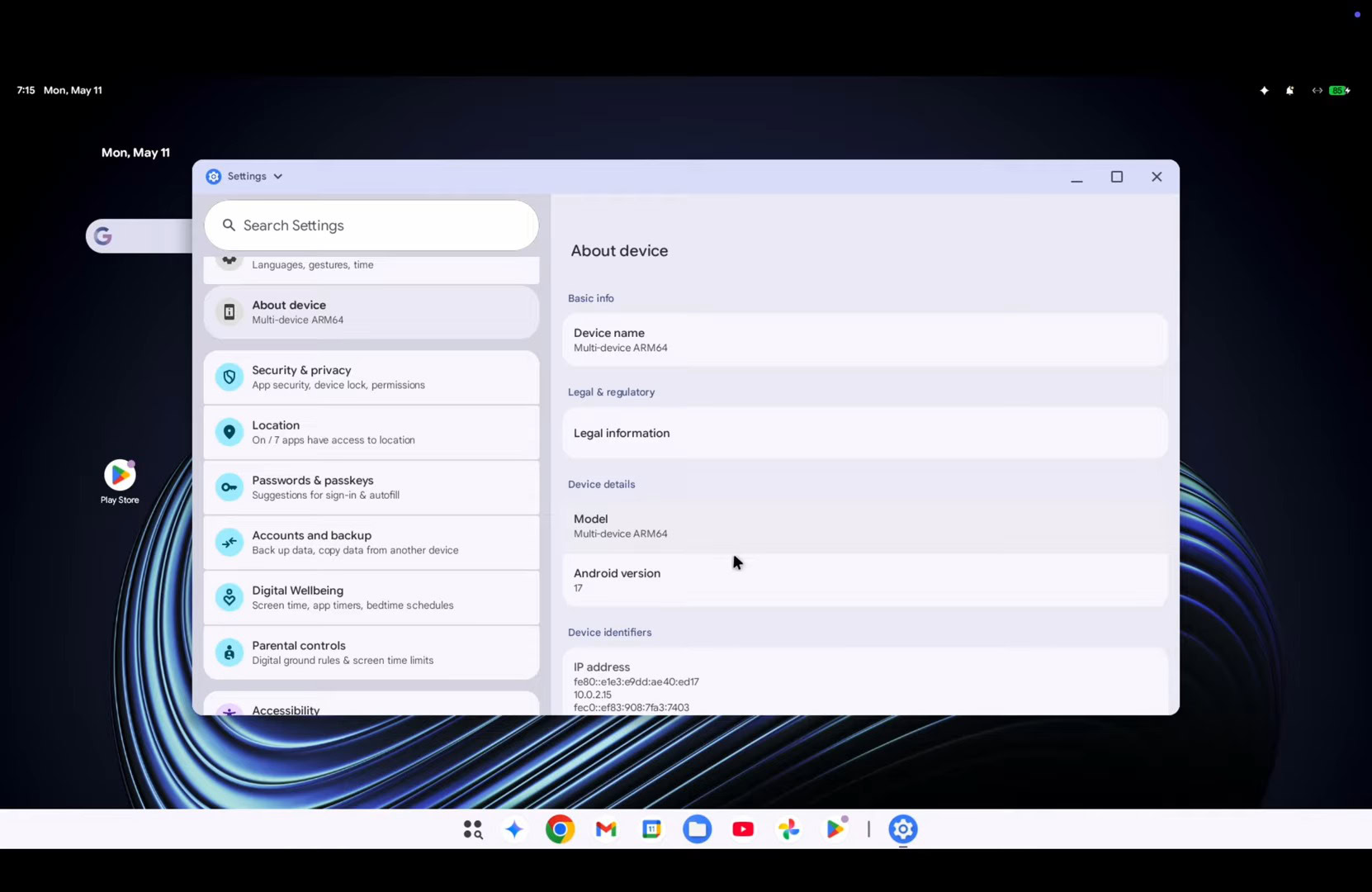

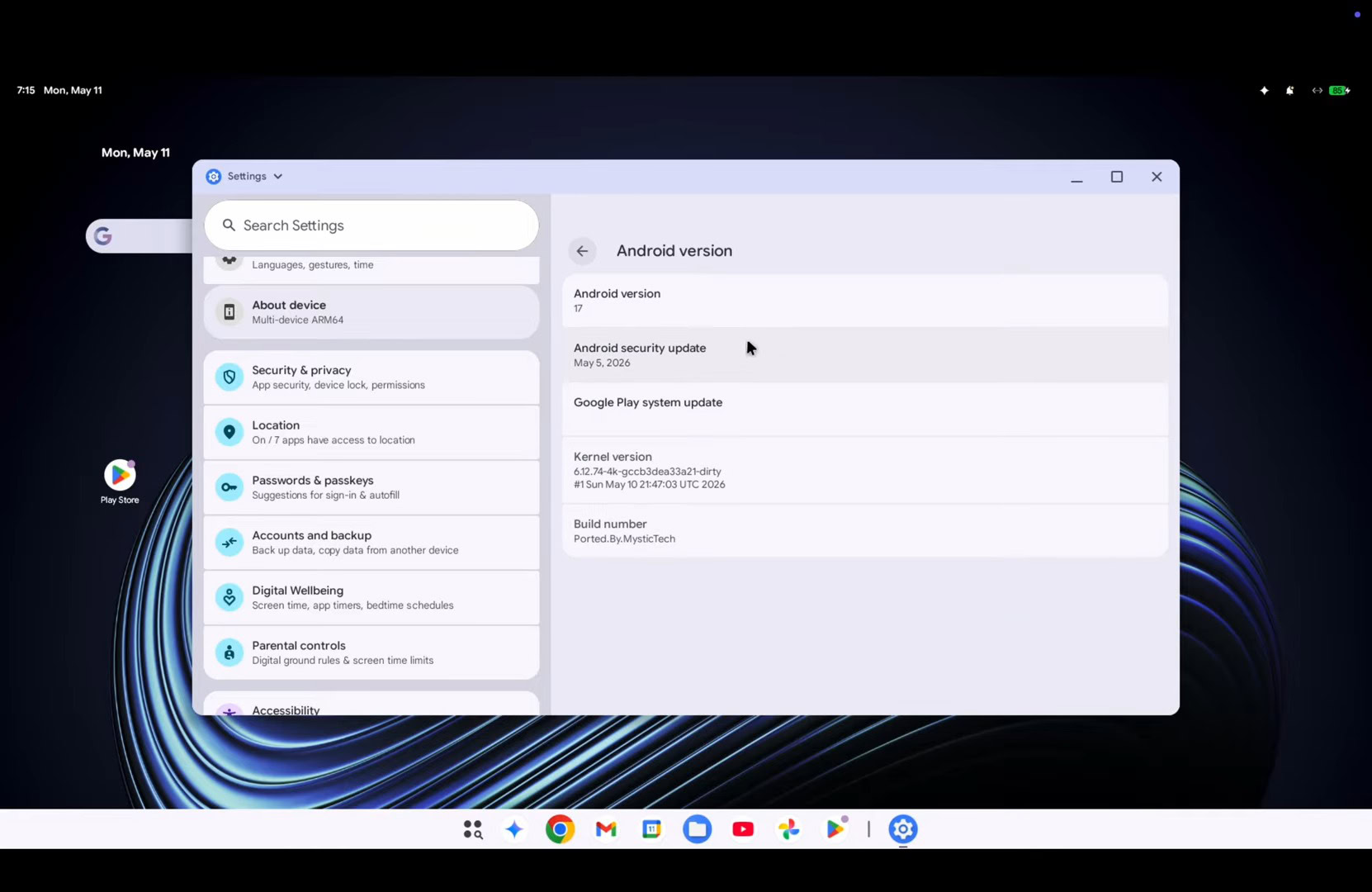

Android is getting a big AI overhaul in 2026

Google bypasses Android 17 for its AI features, shipping app automation, Gemini widgets, and Auto Browse through Play Services and app updates instead.

Summary

Deep Dive

- Google announcing major Android AI features ahead of I/O 2026, most shipping through Play Services rather than Android 17 OS release

- App automation expanding beyond initial DoorDash/Uber test (launched earlier 2026), now handling multi-step cross-app tasks like extracting course books from Gmail syllabus and adding to shopping cart

- Chrome Auto Browse (launched on desktop months ago) coming to Android 12+ in late June, allows AI to navigate mobile web pages for multi-step tasks

- Autofill with Google getting Gemini integration to handle more complex data like license plates beyond standard name/address fields

- "Create My Widget" lets users generate custom widgets via prompts (e.g., meal plan schedule, event countdown with weather data), fully Material themed

- Rambler voice input feature in Gboard cleans up spoken text, removing "ums" and "uhs" while preserving context and tone

- Android Auto getting adaptive display support for non-standard screen shapes, Material 3 Expressive themes, Immersive Navigation, and home screen widgets

- Video playback coming to Android Auto (parked only, YouTube initially) in select vehicles from BMW, Ford, Genesis, Hyundai, Kia, Mercedes-Benz, Renault, Škoda, Volvo, and others

- Android 17 (June launch) features mostly limited to camera improvements for flagship devices, Instagram integration with Ultra HDR and Night Mode, enhanced lost device security requiring PIN plus biometric, session-only precise location access with new indicator, and Pause Point 10-second cooldown for distracting apps

- New 3D emoji design rolling out to Pixel devices this summer, other Android 17 devices later this year

- Most new Android features bypassing OS updates entirely, shipping via Play Services and app updates to reach broader device base including Android 12+

Decoder

- Auto Browse: Chrome feature where Gemini AI autonomously navigates web pages to complete multi-step tasks, operating visibly or in background until requiring user authorization for sensitive actions

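- Immersive Navigation: New navigation experience for Android Auto that Google announced earlier in 2026 and says is almost ready to roll out. The camera-based lane guidance is a separate feature: in cars with Google built in, the vehicle's cameras plug into Maps to provide more accurate lane guidance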

- Create My Widget: Gemini-powered Android feature allowing users to generate custom home screen widgets via text prompts, combining data sources like weather, countdowns, and meal plans in Material-themed layouts

- Play Services: Google's proprietary background service layer for Android that enables feature updates independent of OS version, allowing new capabilities to reach devices without waiting for manufacturer OS updates

Original Article

Google's I/O conference is next week, and we expect to hear a lot about the company's AI endeavors. The company says there's so much to talk about that it's spilling the Android beans a little early, and yes, a lot of AI is involved. In the coming months, Google will roll out more smartphone AI features under the Gemini Intelligence banner, bringing more automation and customization to your phone.

App automation will be a major element of Android going forward, Google says. Automation for apps is expanding after Google began testing it earlier in 2026 with DoorDash and Uber on Pixel and Samsung phones. It was a very frustrating experience at launch, but Google says it has spent the intervening months fine-tuning the system.

Google promises that Android will be able to handle more complex automations across apps. For example, the robot could find a course syllabus in Gmail and then hop to a shopping app to add the necessary books to your shopping cart. Google also suggests taking a picture of a travel brochure and telling Gemini to book something similar in the Expedia app.

This could theoretically reduce busy work, but that's only true if it works and your task takes advantage of the right apps. Android won't just automate any old app on your phone. The automation will only work in select apps, mostly limited to food and grocery ordering and ride-hailing. For everything else, there's Chrome.

The Gemini-powered Auto Browse feature that debuted on desktop Chrome several months ago will launch on Android toward the end of June for all Android 12 and higher devices. This feature uses powerful cloud-based Gemini models to parse webpages and handle multi-step tasks for you.

We were not overly impressed with the speed or accuracy of Auto Browse in desktop Chrome, but simpler, mobile-optimized pages might be a little more usable for the robot. Like the desktop version, you'll be able to watch the AI navigate the web for you or let it do its thing in the background until your authorization is needed for something sensitive.

Similarly, the Autofill system in Android is getting an AI automation upgrade. Google says Autofill with Google will soon plug into Gemini's Personal Intelligence, allowing it to fill in more information when you encounter an online form. It can still handle your name, address, and other established personal details, but it may also be able to add data like your car's license plate. Google says the feature is opt-in, so you can keep the traditional autofill experience.

Gemini is all ears

Gemini Intelligence is also powering some new convenience features in Android, including those rumored AI-generated widgets. Google calls that "Create My Widget," but don't expect miracles here. These widgets appear mostly to be about displaying data from your account or around the web.

For example, Google says you might want a widget that recommends meal plans on a set schedule or sets a countdown to an important event. You can do that with Create My Widget. You can even mix and match the kinds of included data. Android will offer suggested recipes for new AI widgets, but you can also simply enter a prompt describing what you're looking for. Perhaps you want to see a countdown widget with specific weather metrics—that should be possible with Gemini-powered widgets. No matter how you make them, the widgets are fully Material themed and resizable.

Google is also bringing more AI to voice input with a feature called Rambler, which is integrated with Gboard. Plenty of people already use AI to polish text in emails or other documents before sending them, and this is essentially the same thing for voice input. With Rambler, you can just start talking—or rambling, if you will—and the AI will get the gist of what you say. All the "ums" and "uhs" will go away, and the final result will essentially become a summary of what you said.

The company claims that Rambler will understand the context and nuance of what you're saying, so the end product still sounds like you. There will be a prominent indicator when Rambler is enabled, and Google promises that no audio or text will be retained.

Android in cars

Plugging your Android phone into a car that supports Android Auto will be different soon, too. For starters, Google says Android Auto will now adapt to varying display sizes and shapes. So even if your car has a weird polygon screen, Android should fill it completely.

What you see on that screen will be different, too. Android Auto is getting a makeover with greater support for Material 3 Expressive themes and a new navigation experience. Yep, Immersive Navigation, which Google announced earlier this year, is almost ready to actually roll out to users. Accessing data from other apps in the car will be easier as well due to the addition of widgets. Google says there will be widgets for contacts, weather, and select third-party apps. For cars with Google built in, the vehicle's cameras will plug into Maps to provide more accurate lane guidance. Gemini will also be able to answer questions about the vehicle's status, including warning lights and cargo capacity.

Android Auto media apps have hardly evolved over the years, but 2026 will bring some notable changes. Popular apps like YouTube Music and Spotify are getting design overhauls that make them easier to use in the car. Video playback is also coming to Android Auto for the first time. Naturally, this will only work when you're parked and using a supported app like YouTube.

Google says Android Auto will switch seamlessly to audio-only mode when you start driving, but this requires buy-in from automakers for safety and technical reasons. Video will only be available in supported cars from BMW, Ford, Genesis, Hyundai, Kia, Mahindra, Mercedes-Benz, Renault, Škoda, Tata, and Volvo. More vehicles may come later.

What about Android 17?

Google has announced all these new Android features while barely mentioning Android 17, which is slated to launch in June. Almost everything new in Android will arrive via Play Services, app updates, or on specific devices (like Pixel and Samsung Galaxy) through partnerships.

There are a few tidbits for Android 17 itself. Google says flagship Android 17 devices will see some changes to the camera experience, including better video quality in social media apps like Instagram and "screen reaction" overlays in video. There will also be native support for Ultra HDR, native stabilization, and Night Mode in the Instagram Edits app on Android 17 devices.

On the security front, Google will enhance lost device features to require both a PIN and biometric unlock to better prevent bad actors from using your device. This will disable quick settings and block new Wi-Fi and Bluetooth connections. Android 17 will also get a new option for location access, allowing you to share your precise location with an app only for the current session. A new location indicator, similar to the ones for the camera and microphone, will make it clear when an app is accessing your location.

There's also Pause Point, which lets you add a 10-second cooldown timer to apps you've labeled as distracting. This will be bundled into the existing Digital Wellbeing suite.

Lastly, Google has redesigned its emoji yet again. No, the blobs aren't coming back. Emoji now have a more detailed 3D appearance, but you'll see them first on Pixel devices over the summer. Other Android 17 devices will have to wait until "later this year." Most device makers craft their own emoji, so you may never see Google's new smileys outside of apps like YouTube and Gmail.

Redis and the Cost of Ambition

Redis Inc's 2024 licensing betrayal and decade of feature bloat spawned Valkey, a fork that wins by ignoring AI hype and optimizing what made 2011 Redis indispensable.

Summary

Deep Dive

- Redis succeeded in 2011 as a "memcached but way better" remote dictionary server with tasteful design: single-threaded for atomic operations, event-driven with non-blocking I/O, a simple RESP protocol codeable in an hour, and well-chosen primitives (lists, hashes, sets, sorted-sets) suited to a web application's most common needs

- Over the next decade Redis chased every database trend: JSON documents (vs MongoDB), full-text search (vs ElasticSearch), graph databases, event streaming (vs Kafka), strong consistency (vs etcd), time-series (vs InfluxDB), and now vector storage for AI

- The author identifies this as ignoring two realities: (1) the simplicity and orthogonality made Redis indispensable, (2) anyone serious about search/streaming/strong-consistency wants the real thing, not a half-baked Redis module inheriting all of Redis's limitations

- Redis Inc (originally Garantia Data, a generic NoSQL hosting service) signed antirez to legitimize themselves, took over trademark rights, then dropped BSD licensing in 2024. When this blew up they offered tri-licensing with AGPLv3 as the lone OSI option, but the damage was done

- RESP3 protocol breaks fundamental RESP2 assumption of request/reply, adds client-side caching (Redis the cache now needs caching), represents classic second-system failure per Brooks

- Disque (2015) exemplified the problem: antirez built it in "astronaut mode" without a real use case, admitting it was a response to what he saw people doing rather than his own need. Predicted outcome: abandonment. Disque sat at 8K GitHub stars but became abandonware almost immediately; it was later rewritten as a Redis module, which has also sat abandoned for the last 7-8 years

- Aphyr's Redis-Raft analysis found 21 issues in initial build including unavailability, eight crashes, stale reads, lost updates, infinite loops, corrupt responses. "Essentially unusable" - illustrates the disconnect between Redis as cache/data-structure server vs. Redis as strongly-consistent distributed database

- Valkey's existence and rapid adoption is the market's verdict. Instead of chasing features, Valkey invested in unglamorous work: multi-threaded performance, memory efficiency, cluster reliability and throughput. Performance benchmarks target the 80% who want 2011 Redis features

- Current Redis positioning (2026): "The Real-Time Context Engine for AI Apps" with "Try Redis for Free" and "Get a Demo" buttons signals full enterprise sales transformation

- Recent antirez PR adding array type to Redis (despite having hashes, lists, streams, JSON arrays, time-series, sorted-sets) shows continued feature accumulation while project is "in a bit of a crisis"

- Author clarifies this isn't criticism of antirez's talent/taste but of ambition disconnected from what made Redis successful: developer ambition to solve complex problems, ambition to be everything to everyone, landlord ambition to extract maximum revenue before AWS/GCP finish them off

Decoder

- RESP/RESP2/RESP3: REdis Serialization Protocol, the wire protocol Redis clients use to communicate with the server. RESP2 was simple request/reply. RESP3 added complexity like push messages and client-side caching support.

- Valkey: Open source fork of Redis created after Redis Inc's 2024 licensing change, now developed under Linux Foundation. Focuses on performance improvements rather than new features.

- antirez: Salvatore Sanfilippo, original creator of Redis who worked from 2009-2020. Known for elegant system design and transparent communication.

- astronaut mode: Building software without a concrete use case, driven by what seems intellectually interesting rather than solving real problems. Term implies over-engineering.

- Disque: Abandoned distributed message broker antirez created in 2015, later rewritten as Redis module also abandoned. Example of feature built without real need.
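To make the RESP entry above concrete, here is a minimal sketch of RESP2 framing in Python. It is an illustration, not a reference implementation: the names `encode_command`, `parse_reply`, and `RespError` are hypothetical, and the parser assumes the whole reply is already in one buffer (no partial reads, no RESP3 types). It relies only on the five RESP2 type prefixes: `+` simple string, `-` error, `:` integer, `$` bulk string, and `*` array.

```python
from typing import Any, Tuple

CRLF = b"\r\n"


class RespError(Exception):
    """Error reply sent by the server (a line starting with '-')."""


def encode_command(*args: str) -> bytes:
    """Encode a command as a RESP array of bulk strings."""
    parts = [b"*%d\r\n" % len(args)]
    for arg in args:
        data = arg.encode("utf-8")
        parts.append(b"$%d\r\n" % len(data))  # length prefix in bytes
        parts.append(data + CRLF)
    return b"".join(parts)


def parse_reply(buf: bytes, pos: int = 0) -> Tuple[Any, int]:
    """Parse one RESP2 value starting at pos; return (value, next_pos)."""
    end = buf.index(CRLF, pos)
    kind, line = buf[pos:pos + 1], buf[pos + 1:end]
    pos = end + 2
    if kind == b"+":                      # simple string
        return line.decode("utf-8"), pos
    if kind == b"-":                      # error, returned as a value here
        return RespError(line.decode("utf-8")), pos
    if kind == b":":                      # integer
        return int(line), pos
    if kind == b"$":                      # bulk string, -1 length means null
        length = int(line)
        if length == -1:
            return None, pos
        return buf[pos:pos + length], pos + length + 2
    if kind == b"*":                      # array of nested values
        count = int(line)
        if count == -1:
            return None, pos
        items = []
        for _ in range(count):
            item, pos = parse_reply(buf, pos)
            items.append(item)
        return items, pos
    raise ValueError("unknown RESP2 type prefix: %r" % kind)
```

For example, `encode_command("SET", "k", "v")` produces `*3\r\n$3\r\nSET\r\n$1\r\nk\r\n$1\r\nv\r\n`, and a `+OK\r\n` reply parses to the string `"OK"`. Every value announces its type and length up front, which is why a client can be written quickly.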

Original Article

Redis and the Cost of Ambition

And they said, Go to, let us build us a city and a tower, whose top may reach unto heaven; and let us make us a name, lest we be scattered abroad upon the face of the whole earth.

I recently skimmed antirez's patch for adding an array type to Redis. The patch itself is not particularly noteworthy except as an example of how AI-assisted tooling can augment the abilities of a talented and tasteful systems engineer. What got me thinking was antirez's prose at the top of the pull request, explaining the rationale for an array type:

Hashes give you random lookups, but you have to store an index as a key, and have no range visibility. Lists give you appending and trimming, but what is in the middle remains hard to access. Streams give you append-only events, which is another (useful, indeed) beast.

He could have also mentioned Redis' other array-ish faculties like JSON which has arrays natively, time-series, and sorted-sets, which can be made to behave like an array in some situations. Here we are in 2026, Redis is in a bit of a crisis, and yet is sitting with a massive PR to add a new array type. What is going on?

Let us make us a name

Redis has been through a lot over the last decade, driven partly by enterprise DBaaS dynamics, partly by second-system effects:

- Licensing: Redis Inc waged a scorched-earth campaign against its users by dropping BSD in 2024. When this blew up in its face, a strategic retreat was called and a parley offered: tri-licensing choose your own adventure with AGPLv3 as the lone OSI option (AGPL allows Redis Inc to claim being open source, but it's very different than BSD). The VC-backed company behind Redis is an interesting story in itself. Originally named Garantia Data, they were basically another NoSQL cloud hosting service. They got into Redis hosting, started calling themselves Redis Something-or-other, and eventually signed antirez to legitimize themselves. After a couple years they took over the trademark rights, which set the stage for the rugpull later. This post and the comment replies aged about as well as you might expect.

- Bloat and lock-in: Redis began with a handful of useful data-types. Over time the feature-set has grown (and grown) to include exotic data-structures, complex stateful systems (streams), semi-proprietary-ish modules (depending on what version you run). I was amazed when I pulled up Redis' landing page today and read that their positioning in 2026 is The Real-Time Context Engine for AI Apps. Additionally notable are the "Try Redis for Free" and "Get a Demo" buttons in this screenshot. I'm not sure which is more surprising - "for Free" or the enterprise sales-coded "demo" CTA.

- The "How many times do we have to tell you we are a web-scale database" dynamic. This is exemplified by the story around Sentinel, Cluster, Redis-Raft, and enterprise features like active-active geo-distribution®, Redis Flex®, Redis-on-Flash®, and whatever else.

- Protocol: RESP3 has a lot of sharp edges and breaks the fundamental assumption in RESP2 of request/reply. The new protocol is in my opinion a classic second system failure mode straight out of Brooks.

- First-class client-side caching support. In a kind of reductio-ad-absurdum, Redis (lately the cache), now needs a new protocol to support caching on the client side as well.

What happened to dear old Redis, I wondered. And the more I thought about it, a satisfying explanation started to coalesce which explains all the above phenomena. To me, the picture that emerges is that of a solution that lost its identity through ambition.

The noble Brutus

Hath told you Caesar was ambitious.

If it were so, it was a grievous fault,

And grievously hath Caesar answered it.

Vernal delight and joy

I would put it some time around 2011...that time of excitement when so many new ideas were coming in vogue among web and web-adjacent developer circles. When NoSQL was blasting off into its hype cycle, web scale was not used ironically, and the Bigtable and Dynamo papers were still widely read and discussed. Alongside this were Ruby on Rails, elegance (a desirable property for CRUD apps), web 2.0, REST and JSON. Redis perfectly captured the zeitgeist, and found its way into everyone's stack practically overnight. A capture from late 2011 has Redis describing itself as an advanced key-value store and data structure server. Notably absent is the word database.

Prior to Redis, memcached was that one indispensable piece of infrastructure running quietly on most web servers. In every deployment I've ever seen, memcached typically handles a variety of ad-hoc usages in addition to just caching, like locks, counters, rate-limits, stuff like that. So when Redis landed, the story at that time was something like "memcached but way better". Redis' name, Remote Dictionary Server, emphasized that it was a fast in-memory dictionary that could be used by all your services. In addition to blobs of bytes, Redis operated on a handful of tastefully-chosen data-structures (linked-list, hash-table, set, sorted-set), which vastly expanded the kinds of ad-hoc use-cases such a service could provide.

I want to call attention to some of the specific design considerations that I think Redis nailed perfectly, and which were instrumental in its initial success:

- Protocol: The beauty of Redis' wire protocol is that it is simple enough that it can be understood and coded in an hour, while being expressive enough that it can represent a number of rich data-types. Building a client library is simple and clean, and the protocol felt right. Anecdotally, my most popular blog post is a write your own Redis tutorial written nearly 10 years ago, which walks through building the protocol and a simple server.

- Single-threaded, event-driven, in-memory: these go together because they combine in a really purposeful way. By being single-threaded, all operations are guaranteed atomic, full-stop. This eliminates a huge class of complexity and makes Redis easy to reason about. In order to make single-threaded work, the server needed to be implemented using non-blocking I/O. Operations on the data itself needed to be fast as hell. Put it together and you have a fast key/value store that can handle tons of clients and do it all from a single thread.

- Data structures: the primitives were chosen well and were suited to a web application's most common needs. Cache? Just use strings and an expiry. Queue? Use a list. Structured data? Use a hash. Locks, counters, rate-limiting, liveness, monitoring, leaderboards, whatever - all easy with the builtin data types.
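The design points above can be illustrated with a toy, in-process model. This is a sketch under stated assumptions, not how Redis is implemented: `ToyStore`, its command table, and the injectable `clock` are hypothetical names, there is no networking, and expiry is checked lazily on access. The point is only that when one thread runs each command to completion before starting the next, every command is atomic without any locks.

```python
import time
from collections import deque
from typing import Any, Callable, Dict, Optional


class ToyStore:
    """Hypothetical model of a single-threaded data-structure server."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._data: Dict[str, Any] = {}
        self._expires: Dict[str, float] = {}
        self._clock = clock
        # Explicit command table: only these names are callable by clients.
        self._commands: Dict[str, Callable[..., Any]] = {
            "SET": self._set, "GET": self._get, "INCR": self._incr,
            "LPUSH": self._lpush, "RPOP": self._rpop,
        }

    def execute(self, name: str, *args: Any) -> Any:
        """Run one command to completion; nothing else runs in between."""
        return self._commands[name.upper()](*args)

    def _live(self, key: str) -> Any:
        """Drop the key if its deadline has passed, then return its value."""
        deadline = self._expires.get(key)
        if deadline is not None and self._clock() >= deadline:
            self._data.pop(key, None)
            self._expires.pop(key, None)
        return self._data.get(key)

    def _set(self, key: str, value: Any, ttl: Optional[float] = None) -> str:
        self._data[key] = value
        if ttl is None:
            self._expires.pop(key, None)
        else:
            self._expires[key] = self._clock() + ttl
        return "OK"

    def _get(self, key: str) -> Any:
        return self._live(key)

    def _incr(self, key: str) -> int:
        # Read-modify-write is safe because no other command can interleave.
        value = int(self._live(key) or 0) + 1
        self._data[key] = value
        return value

    def _lpush(self, key: str, item: Any) -> int:
        items = self._live(key)
        if items is None:
            items = self._data[key] = deque()
        items.appendleft(item)
        return len(items)

    def _rpop(self, key: str) -> Any:
        items = self._live(key)
        return items.pop() if items else None
```

A cache is `execute("SET", "page", html, 60)` followed by `execute("GET", "page")`, a counter is repeated `execute("INCR", "hits")`, and a FIFO queue is `LPUSH` on one side with `RPOP` on the other. Passing a fake `clock` makes the expiry behaviour easy to exercise without sleeping.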

Adoption grew incredibly quickly and the project was, deservingly, a huge success. At some point along the way, the ambitions of the project changed. Redis took up the mantle of being a database:

Ambition

With diadem and sceptre high advanced,

The lower still I fall; only supreme

In misery; such joy ambition finds.

Some features have been genuinely useful additions, such as BZPOPMIN added in 5.0, which allows a blocking-pop to be performed on a sorted-set (very nice when using sorted-sets as a priority queue). Others struck me as being extremely un-Redis-y, like ACLs. But mostly, there seems to be a desire to make Redis be everything for everyone. The addition of these features closely tracks the "latest cool thing" developers are talking about on HN over the last decade:

- MongoDB stores JSON, Redis should be a document database

- ElasticSearch does full-text search, Redis should be a search engine

- Graph databases are cool, Redis should be a graph database... (actually, maybe not).

- Kafka is generating a lot of buzz, let's be an event streaming platform

- ZooKeeper and other strongly-consistent databases are important. Let's ship it. (Aphyr's analysis should be required reading).

- InfluxDB is cool. Let's be a time series database

- And if we don't have an AI story we'll be on the wrong side of history. Sales wants this done yesterday.

The problems with this mindset are two-fold. First, it ignores the factors that made Redis an indispensable part of everyone's stack in the first place. Redis was simple, the commands were orthogonal and tightly scoped, the protocol was clean, and it was conceptually coherent. Second, it ignores the fact that anyone who is serious about integrating full-text search / event stream processing / strong-consistency kv / time-series / vector storage is going to want the real thing, not some half-baked Redis module that inherits all of Redis' restrictions. Because, at the end of the day, the HA story on Redis is complicated. The persistence story is nuanced and there are important tradeoffs. The protocol pain and client fragmentation are a real hurdle. Redis does not aim to replace Postgres in your stack, and I would argue that ElasticSearch / RabbitMQ / etc / etc are similarly foundational pillars of any system.

Here is a quote from Aphyr's analysis of the initial development build of Redis-Raft:

...we found twenty-one issues, including long-lasting unavailability in healthy clusters, eight crashes, three cases of stale reads, one case of aborted reads, five bugs resulting in the loss of committed updates, one infinite loop, and two cases where logically corrupt responses could be sent to clients. The first version we tested (1b3fbf6) was essentially unusable...

Redis the cache and data-structure server is a fundamentally different proposition from "Redis the etcd" or any of the other databases named above. This is the disconnect.

He heard the sound of the trumpet, and took not warning

When antirez announced Disque back in 2015, I wrote a short piece explaining why I won't use it. My reasoning hinged on this comment by antirez:

Disque was designed a bit in astronaut mode, not triggered by an actual use case of mine, but more in response to what I was seeing people doing with Redis as a message queue and with other message queues.

I read that admission as being predictive of one outcome: abandonment. Projects developed in "astronaut mode", as personal challenges, as learning exercises are wonderful. Without a solid use-case, though, will the maintainer retain interest and focus in order to solve the long-tail of hard problems that crop up as soon as people start using it? While also maintaining Redis? HA message delivery is legitimately difficult to solve well, and whatever side of the CAP theorem you optimize for, you will be forced to make tradeoffs and solve some difficult problems.

Furthermore, I believed nobody would adopt. There were many mature message brokers in 2015. People used Redis as a message broker because they were already using Redis and it was good enough and simple. The need wasn't for a new message broker, nor was the need for Redis to become a more complex message broker. The project misread why people use Redis as a broker in the first place. People use Redis as a message broker specifically because they don't want to use something else.

I believe my predictions held true - Disque became abandonware almost as soon as it was announced, despite sitting at 8K stars on GitHub. Some time later it was rewritten as a Redis module, but that project is also sitting abandoned for the last 7 or 8 years.

I want to be clear that none of this discussion should be taken as overt or implied criticism of antirez. I have enormous respect for his talent and his taste. The main force I see at work in the development of Redis is, as I mentioned in the beginning, ambition. The ambition of a developer to solve complex problems, the ambition to be everything to everyone, the ambition of Redis' landlords to find a way to extract maximum revenue before AWS and GCP finish them off for good. There is nothing inherently wrong with ambition. The problem is when the ambition leads you to lose sight of what made you successful in the first place.

Valkey's existence and adoption is the wider market's final verdict on this dynamic. Rather than chase features and bullet points, Valkey has invested in the un-glamorous work of improving multi-threaded performance, memory efficiency, cluster reliability and throughput. Valkey's performance benchmarks are impressive and aimed squarely at the 80% of Redis users who just want the same features Redis shipped with back in 2011. There's no need for a new array type in Valkey's world.

Amazon employees are “tokenmaxxing” due to pressure to use AI tools

Amazon employees are automating busywork with MeshClaw AI agents just to hit token consumption targets tracked on internal leaderboards.

Summary

Deep Dive

- Amazon's MeshClaw is an internal AI agent platform inspired by OpenClaw (which went viral in February 2026)

- The tool allows employees to create agents that connect to workplace software and act autonomously

- Capabilities include initiating code deployments, triaging emails, monitoring deployments during meetings, and interacting with Slack

- Amazon introduced targets requiring more than 80% of developers to use AI tools each week

- The company tracks AI token consumption on internal leaderboards; it recently limited access so that only individual employees and their managers can view the stats

- Some employees are "tokenmaxxing" by using MeshClaw to automate unnecessary tasks purely to increase their token usage statistics

- Amazon claims token statistics won't be used in performance evaluations, but multiple employees say managers are monitoring the data

- More than three dozen Amazon employees worked on building MeshClaw, according to internal documents

- Internal docs describe the bot as dreaming overnight to consolidate learning and working autonomously

- Multiple employees raised security concerns about granting AI agents permission to act on their behalf, citing risks of errors or unintended actions

- Meta employees have engaged in similar "tokenmaxxing" behavior on their internal leaderboards

- Amazon's 2026 capex is expected to reach $200 billion, with the vast majority going to AI and data center infrastructure

Decoder

- Token: Units of data processed by AI language models, roughly equivalent to 3-4 characters of text. Used to measure and bill API usage.

- Tokenmaxxing: Gaming AI usage metrics by generating unnecessary AI activity to increase token consumption statistics, similar to how gamers "max out" stats.

- MeshClaw: Amazon's internal AI agent platform that allows employees to create autonomous agents connected to workplace tools.

- OpenClaw: Open-source AI agent framework that went viral in February 2026, allows running agents locally on personal hardware.

Original Article

Amazon employees are using an internal AI tool to automate non-essential tasks in a bid to show managers they are using the technology more frequently.

The Seattle-based group has started to widely deploy its in-house "MeshClaw" product in recent weeks, allowing employees to create AI agents that can connect to workplace software and carry out tasks on a user's behalf, according to three people familiar with the matter.

Some employees said colleagues were using the software to automate additional, unnecessary AI activity to increase their consumption of tokens—units of data processed by models.

They said the move reflected pressure to adopt the technology after Amazon introduced targets for more than 80 percent of developers to use AI each week, and earlier this year began tracking AI token consumption on internal leader boards.

"There is just so much pressure to use these tools," one Amazon employee told the FT. "Some people are just using MeshClaw to maximize their token usage."

Amazon has told employees that the AI token statistics would not be used in performance evaluations. But several staff members said they believed managers were monitoring the data.

"Managers are looking at it," said another current employee. "When they track usage it creates perverse incentives and some people are very competitive about it."

Silicon Valley groups are pushing to increase adoption of generative AI tools, as companies seek to demonstrate returns on vast spending commitments to AI infrastructure and embed the technology more deeply into day-to-day work.

Amazon this year is expected to spend $200 billion in capital expenditure, the vast majority of which will go toward AI and data center infrastructure.

The e-commerce group had posted team-wide statistics on AI usage by its staff, but recently limited access so that only employees themselves and managers can view their stats. Managers are discouraged from using token use to measure performance, according to a person familiar with the matter.

Meta employees have similarly engaged in so-called "tokenmaxxing" to improve their standing on internal leader boards.

The MeshClaw tool that some employees have used to increase their statistics was inspired by OpenClaw, which became a viral sensation in February. OpenClaw allows users to run agents locally on their own hardware, including computers and laptops.

Amazon's MeshClaw can initiate code deployments, triage emails, and interact with apps such as Slack, according to people familiar with the matter.

The company said in a statement that the tool enabled "thousands of Amazonians to automate repetitive tasks each day" and was one example of the group "empowering teams" to experiment and adopt AI tools.

"We're committed to the safe, secure, and responsible development and deployment of generative AI for our customers," it added.

More than three dozen Amazon employees worked on the in-house tool, according to internal documents. One recent memo describing the bot said: "It dreams overnight to consolidate what it learned, monitors your deployments while you're in meetings, and triages your email before you wake up."

Multiple Amazon employees said they were concerned about the security risks of an AI tool that was granted permission to act on a user's behalf. This risks situations where the agent may make errors or undertake unintended actions.

"The default security posture terrifies me," one employee said. "I'm not about to let it go off and just do its own thing."

What Is Code?

Code's value shifts from machine instructions to conceptual models as LLMs generate syntax but risk accumulating 'cognitive debt' without understanding.

Summary

Deep Dive

- Code serves two purposes: machine instructions (being commoditized by LLMs) and conceptual models for human understanding (increasingly valuable)

- Coding is fundamentally vocabulary building—mapping domain concepts (retail, banking) to technical constructs (web, infrastructure) using programming language features

- Frameworks like Spring are codified vocabularies for technical domains, but business domains require locally-discovered abstractions within bounded contexts

- Domain-Driven Design's bounded contexts define where particular vocabularies are valid; ubiquitous language emerges from developer-domain expert collaboration

- Programming languages (Go's channels, Rust's ownership, Java's OOP) are thinking tools that shape design discovery through their constraints

- LLMs work best with clear vocabulary because training teaches them relationships between names, APIs, and patterns—vague prompts force guessing

- Cognitive debt accumulates when LLMs generate plausible code with vocabulary faster than teams build conceptual understanding

- Writing code actively (not passively reviewing) is necessary for thinking deeply about design—generation without engagement loses this benefit

- Well-designed foundational code with clear abstractions acts as harness and context that constrains LLMs and makes output reliable

- Good abstractions enable DSLs and natural-language interfaces (example: PlantUML) that LLMs can map to reliably with executable behavior as guardrails

- TDD helps discover right vocabulary iteratively by forcing continuous feedback between model and behavior

- Formal specifications like TLA+ can clarify thinking when natural language is vague and code is too verbose

- The future of coding is building better conceptual models, vocabularies, and foundations for both humans and LLMs to work on

Decoder

- Cognitive debt: The gap that accumulates when LLMs generate code with technical vocabulary (controllers, repositories, factories) faster than developers build conceptual understanding of what those structures mean and why they exist.

- TLA+: Temporal Logic of Actions Plus, a formal specification language for designing and verifying concurrent and distributed systems with precise mathematical constraints.

Original Article

What Is Code?

What is code? At a high level, the answer to this question seems obvious. Code is what developers write: instructions expressed in a programming language that tell machines what to do. For years, writing code meant typing it out, word by word. Progress was measured by how efficiently code could be produced, compiled, tested, and deployed. With modern LLMs, we no longer need to type every word to produce code. Large amounts of executable code can now be generated from high-level descriptions. This forces a deeper question: If producing code becomes cheaper, what remains valuable about code?

Two Aspects of Code

Code has always served two distinct but intertwined purposes.

First, code is a set of instructions to a machine. It directs computation, moves data, interacts with storage, and coordinates execution. In the era of LLMs, this is the part being commoditized.

Second, code is a conceptual model of the problem domain. This is the "design" aspect. A well-designed codebase does not only contain instructions for the machine; it also contains concepts for humans and tools to reason with.

The activity we call coding is where these two aspects meet. We are shaping the concepts, names, boundaries, and relationships through which the system is understood.

Conceptual Models and Vocabulary

Making the conceptual model explicit is the deeper aspect of coding, driven by the domain and the use cases the system is meant to address. Every domain comes with established processes, practices, and more importantly a shared vocabulary. That vocabulary is where the conceptual model becomes visible.

Vocabulary is usually understood as the set of words used in a particular language or subject. I am able to write this article because I know the vocabulary of English. The reader can read it because they share that vocabulary with me.

But to understand this article, knowing English alone is not enough. This article is about software development. Software development is a broad, mature field with its own technical vocabulary. When I use a word like abstraction, I am not merely using an English word. I am referring to a specific software development concept, with its own meaning, history, and implications. A reader unfamiliar with software development may understand the word at the surface level, but miss its deeper meaning in this context. Mature areas like this, with their own established vocabulary, are called domains.

This is true of all serious domains. Communication depends on shared vocabulary. Whether we are communicating with a person, a framework, or an LLM, the words we use must map to concepts that the receiver can understand and act upon.

Vocabulary in Code

A well-designed codebase is a representation of a certain vocabulary. Where does this vocabulary in code come from? This is where the unique nature of software development truly shines. Software development works at the intersection of various domains. At one end we have domains such as banking, finance, retail, inventory, healthcare, and insurance. At the other we have domains like web, infrastructure, AI, and data engineering.

Someone doing web development needs to have a strong grasp of web architecture, the semantics of web methods, the universal caching potential of GET, and the implications of those semantics. Someone who does not know that will not architect complex systems well. The same is true in other domains. Vocabulary is not just a collection of labels. It carries meaning, constraints, and design consequences.

Consider a retail domain. When we write code for retail, we talk about customers, products, orders, shipments, payments, and so on. When we are doing web development for the retail domain, the code contains concepts that map the retail domain to the web domain. For example, a Catalog is a resource, and we can use the GET, POST, PUT, and DELETE HTTP methods to perform operations on it. Someone writing code needs to be familiar with both vocabularies.
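As a rough illustration of that mapping, here is a minimal sketch in plain Java with no web framework involved. The names `HttpMethod`, `CatalogResource`, and `handle`, and the choice to address products by SKU, are assumptions made for this example and do not come from the article.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Optional;

// Web vocabulary: the uniform interface of HTTP.
enum HttpMethod { GET, POST, PUT, DELETE }

// Retail vocabulary mapped onto web vocabulary: the catalog is a resource
// whose entries are addressed by SKU. All names here are hypothetical.
final class CatalogResource {
    private final Map<String, String> productsBySku = new LinkedHashMap<>();

    // Returns the product name stored after the operation, or empty if none.
    Optional<String> handle(HttpMethod method, String sku, String name) {
        switch (method) {
            case GET:    // safe read: never changes the catalog, so it can be cached
                return Optional.ofNullable(productsBySku.get(sku));
            case POST:   // create: adds the product only if the SKU is not listed yet
                productsBySku.putIfAbsent(sku, name);
                return Optional.ofNullable(productsBySku.get(sku));
            case PUT:    // idempotent replace: repeating it leaves the same state
                productsBySku.put(sku, name);
                return Optional.ofNullable(name);
            case DELETE: // idempotent removal
                productsBySku.remove(sku);
                return Optional.empty();
            default:
                throw new IllegalArgumentException("unsupported method");
        }
    }
}
```

The comments carry the web semantics (safe, cacheable, idempotent) while the class and field names carry the retail semantics, which is the sense in which the developer needs both vocabularies at once.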

Coding for a domain is fundamentally an act of translation. The developer maps the domain vocabulary onto the vocabulary of technical domains. In doing so, a new vocabulary is also built using the constructs provided by a programming language. There are concepts like logs, repositories, quorums, transactions, and specific concepts like money. Concepts become types, relationships become interfaces, rules become invariants, and workflows become compositions.
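To make the last sentence concrete, here is a small sketch of what "concepts become types, relationships become interfaces, rules become invariants, and workflows become compositions" could look like. Everything in it is hypothetical: the `Money`, `PaymentGateway`, and `Checkout` names and the specific rules (non-negative amounts, same-currency addition) are assumptions chosen for illustration, not taken from the article.

```java
import java.math.BigDecimal;
import java.util.Objects;

// Concept as type: money is not a bare number (hypothetical model).
record Money(BigDecimal amount, String currency) {
    // Rule as invariant: an amount always has a currency and is never negative.
    Money {
        Objects.requireNonNull(amount, "amount");
        Objects.requireNonNull(currency, "currency");
        if (amount.signum() < 0) {
            throw new IllegalArgumentException("amount must not be negative");
        }
    }

    // Rule as invariant: only amounts in the same currency can be added.
    Money plus(Money other) {
        if (!currency.equals(other.currency)) {
            throw new IllegalArgumentException("currency mismatch");
        }
        return new Money(amount.add(other.amount), currency);
    }
}

// Relationship as interface: checkout depends on something that takes payment.
interface PaymentGateway {
    boolean charge(Money total);
}

// Workflow as composition: checkout combines totalling and charging.
final class Checkout {
    private final PaymentGateway gateway;

    Checkout(PaymentGateway gateway) {
        this.gateway = gateway;
    }

    boolean pay(Money itemsTotal, Money shipping) {
        return gateway.charge(itemsTotal.plus(shipping));
    }
}
```

Because the invariants live in the `Money` type, any code that receives a `Money` value, whether written by hand or generated, can rely on them without rechecking.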

The precise names of variables, the boundaries of methods, and the hierarchy of classes are discovered step by step. The right abstraction often is not obvious upfront; it reveals itself only as you continually mold and refactor the code against real-world constraints. When used well, the code slowly becomes a readable, highly specific representation of the domain itself.

For the technical domains we typically find frameworks and libraries which provide the base implementation patterns. Frameworks and libraries are codified vocabularies. They capture the most common patterns of usage. That is why ecosystems such as the Spring Framework exist for building enterprise applications involving the web, integration, and related concerns. Different programming languages bring their own flavor, along with specific design constraints that get reflected and codified in their frameworks and libraries.

Bounded Contexts and Local Vocabularies

Frameworks work well when a domain has stable, recurring structures with broadly shared semantics. But something like "online retail" or "stock exchange" is different. Those are not just technical stacks. The main reason there is no universal high-level framework for them is that the vocabulary is not stable enough across all instances of the domain. Attempts to find universal abstractions become either too generic to be useful or too opinionated to be widely applicable. The closer you get to the core business model, the more the abstractions must be discovered locally. This is why the idea of a bounded context in Domain-Driven Design is so important. A bounded context marks the boundary within which a particular vocabulary and model are valid. The same word may mean different things in different contexts, and each context needs its own abstractions, rules, and language.
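A minimal sketch of "the same word may mean different things in different contexts" might look like the following. The two contexts, their fields, and the `fromCatalog` translation method are all assumptions invented for this example. Outer classes stand in for what would normally be separate packages or modules, so that the sketch fits in one file.

```java
import java.math.BigDecimal;

// Hypothetical catalog context: a Product is something customers browse and buy.
final class CatalogContext {
    record Product(String sku, String title, BigDecimal listPrice) {}
}

// Hypothetical shipping context: a Product is something that must fit in a parcel.
final class ShippingContext {
    record Product(String sku, double weightKg, boolean fragile) {}

    // Explicit translation at the boundary: only the shared identifier crosses
    // over; shipping-specific facts come from the shipping side.
    static Product fromCatalog(CatalogContext.Product listed, double weightKg, boolean fragile) {
        return new Product(listed.sku(), weightKg, fragile);
    }
}
```

Neither `Product` is more correct than the other. Each is valid only inside its own boundary, and the translation method makes the crossing point visible in code.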

How do we build these local abstractions and vocabulary? A lot of this vocabulary is built through iterative sessions where we write code and reflect on it. Techniques like TDD are excellent for this iterative development of the vocabulary. They help us discover the right names, the right abstractions, and the right boundaries by forcing continuous feedback between the model and its behavior.

Coding cannot happen in isolation. There must be close collaboration between domain experts, users, and developers. This collaboration is necessary to build these local abstractions and vocabulary.

This connects directly to the lessons of agile software development. The emphasis on individuals and interactions, customer collaboration, working software, and responding to change is not just process advice. It is a way of discovering and refining vocabulary through feedback.

Domain-Driven Design makes this more explicit through the idea of a ubiquitous language: a shared language developed by developers and domain experts and tested continuously against working software.

Programming Languages As Thinking Tools

Building vocabulary through code requires active engagement in writing and reshaping code, not just passive review of generated code. The very act of thinking deeply about code often happens only when we are actively engaged in writing it. Programming languages, with their constructs and constraints, themselves become thinking tools. The design constraints provided by different programming languages help shape our thinking. The channels and lightweight threads of Go, the object-oriented model of Java, or the ownership model of Rust all push us to see structure, boundaries, and trade-offs in particular ways. In that sense, programming languages do not just help us express a design. They also help us discover it.

I recently had to design a custom Future implementation for the asynchronous programming examples. One of the important aspects of a future API is designing its compositions so that they can express a sequence of actions.

var future1 = action1();

future1.thenCompose(val1 -> action2(val1))
       .thenCompose(val2 -> action3(val2));

Knowing the concepts and vocabulary of functional programming is crucial to implementing this API well. Not knowing those concepts results in awkward implementation and usage.
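To show where the functional vocabulary comes in, here is a minimal sketch of a future whose `thenCompose` is a monadic bind (often called `flatMap`). This is not the author's implementation. `SimpleFuture`, its methods, and the `action1`, `action2`, and `action3` bodies are hypothetical, and error handling, cancellation, and executors are deliberately left out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Function;

// Hypothetical minimal future, written only to illustrate thenCompose.
final class SimpleFuture<T> {
    private T value;
    private boolean done;
    private final List<Consumer<T>> callbacks = new ArrayList<>();

    static <T> SimpleFuture<T> completed(T value) {
        SimpleFuture<T> future = new SimpleFuture<>();
        future.complete(value);
        return future;
    }

    // Completes the future once and notifies the callbacks registered so far.
    void complete(T result) {
        List<Consumer<T>> toRun;
        synchronized (this) {
            if (done) return;
            value = result;
            done = true;
            toRun = new ArrayList<>(callbacks);
            callbacks.clear();
        }
        toRun.forEach(callback -> callback.accept(result));
    }

    // Runs the callback now if the value is ready, otherwise when it arrives.
    private void onComplete(Consumer<T> callback) {
        synchronized (this) {
            if (!done) {
                callbacks.add(callback);
                return;
            }
        }
        callback.accept(value);
    }

    // Bind / flatMap: 'next' itself returns a future, and the result is
    // flattened so callers never see a SimpleFuture<SimpleFuture<U>>.
    <U> SimpleFuture<U> thenCompose(Function<? super T, SimpleFuture<U>> next) {
        SimpleFuture<U> result = new SimpleFuture<>();
        onComplete(v -> next.apply(v).onComplete(result::complete));
        return result;
    }

    synchronized boolean isDone() { return done; }

    synchronized T get() {
        if (!done) throw new IllegalStateException("not completed yet");
        return value;
    }
}

final class FutureDemo {
    static SimpleFuture<Integer> action1() { return SimpleFuture.completed(1); }
    static SimpleFuture<Integer> action2(int v) { return SimpleFuture.completed(v + 1); }
    static SimpleFuture<String> action3(int v) { return SimpleFuture.completed("result=" + v); }

    public static void main(String[] args) {
        var future1 = action1();
        var future3 = future1.thenCompose(val1 -> action2(val1))
                             .thenCompose(val2 -> action3(val2));
        System.out.println(future3.get()); // prints "result=2"
    }
}
```

Seeing `thenCompose` as a flattening bind is what keeps the chain flat. Without that concept, the natural first attempt is a `map`-style method that returns nested futures, which makes sequencing awkward for callers.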