Anthropic tests new Bugcrawl tool for Claude Code bug detection (2 minute read)

Anthropic is testing Bugcrawl, a new Claude Code feature that scans entire repositories to detect bugs and suggest fixes.

Deep dive

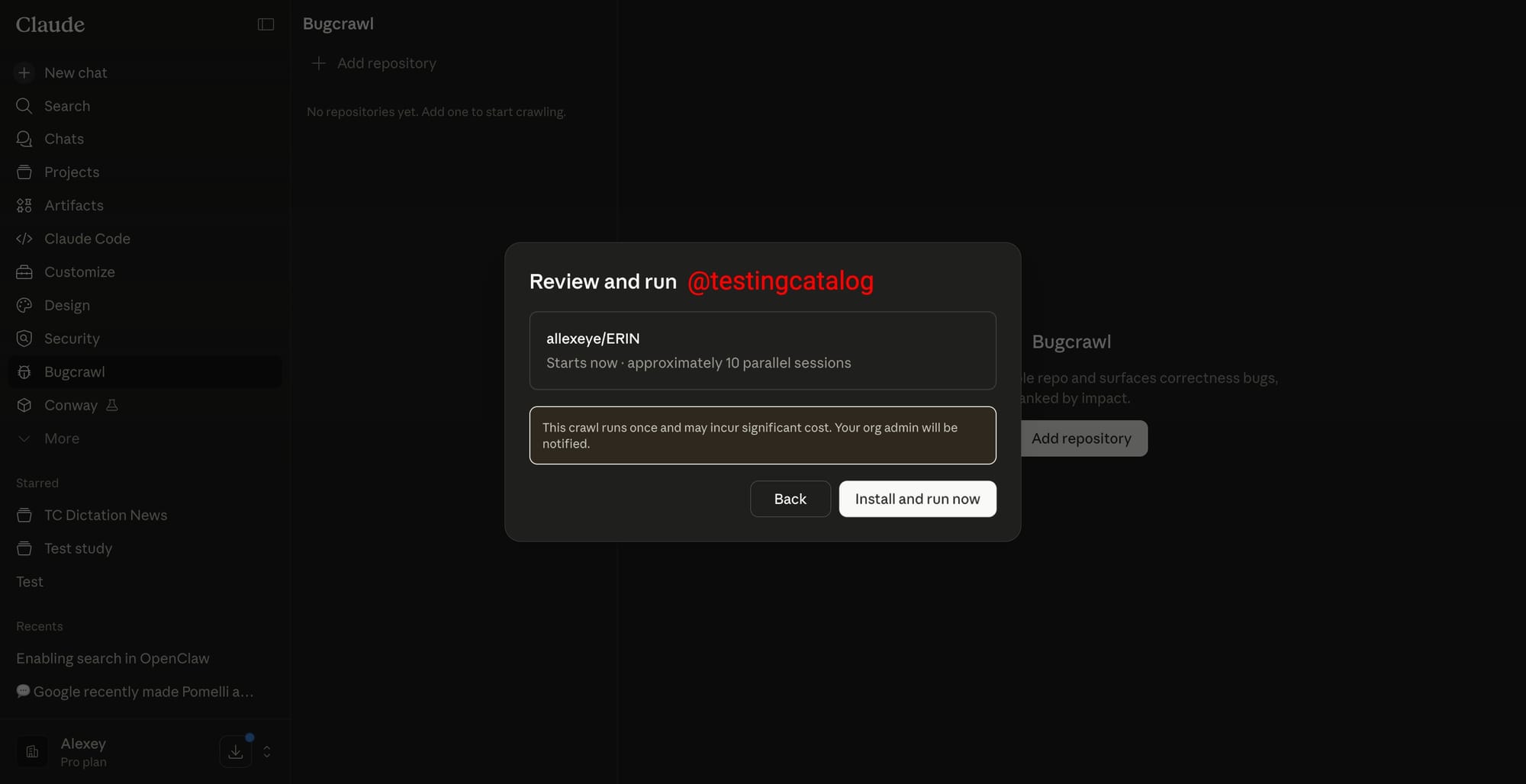

- Bugcrawl appears in Claude Code's sidebar with a repository picker and a warning about high token consumption

- The feature targets general bug detection and fixes, complementing Security (vulnerabilities) and Code Review (PR-level analysis)

- Anthropic could extend it to end-to-end testing, where Claude runs the app locally and walks through user flows

- Fits into Claude Code's rapid expansion: Code Security in February 2026, Code Review in March 2026

- Part of industry-wide shift toward repository-wide AI agents, competing with OpenAI Codex, xAI Grok Build, and Google Jules

- High token costs suggest it's aimed at Team and Enterprise tiers

- Not in production yet and no release date, but the rapid feature cadence points to a research preview as the likely next step

Original article

Anthropic appears to be building a tool within Claude Code called Bugcrawl, which surfaces as a dedicated entry in the side navigation. Once opened, the screen presents a repository selection UI alongside a warning that the feature consumes tokens at a high rate and a recommendation to start with a small repository before pointing it at anything substantial. That caveat alone hints at the scale of work the agent would be carrying out in the background.

The most plausible read is that Bugcrawl will set Claude loose across an entire codebase to hunt for general bugs and propose fixes, while the Security tab already shipping in Claude Code for Enterprises targets vulnerabilities specifically. If Anthropic pushes the concept further, the same loop could extend into end-to-end product testing, where Claude spins up a local instance of the app, walks through user flows, and reports regressions. How feature specifications or test criteria would be passed into a run is still an open question, since the only screen visible so far is the repository picker.

For Anthropic, the move slots cleanly into the Claude Code expansion of recent months, which has already produced Claude Code Security in February and Claude Code Review in March, both built around multi-agent investigation of code. Bugcrawl would round out that lineup by tackling general correctness and quality, the broader, fuzzier category that sits between security scanning and PR-level review. It also fits the wider competitive picture, with OpenAI's Codex, xAI's Grok Build, and Google's Jules each pushing toward agents that reason across full repositories rather than single files.

The likely audience is engineering teams on Team and Enterprise tiers, where the cost of that token burn is easier to absorb. No release window has surfaced, and the feature does not appear in production builds. Given the cadence of Code Security and Code Review landing within weeks of each other, a research preview on the same web surface looks like the most probable path.