Devoured - April 27, 2026

Google is investing up to $40B in Anthropic while coding agents drive unprecedented compute demand, with Cursor exploring a $60B SpaceX deal to solve negative margins from AI model costs. On the practical side, Anthropic shipped Memory for Claude Managed Agents and is testing Bugcrawl for codebase bug detection, Spotify's Honk agent automated 1,800 pipeline migrations saving 10 engineering weeks, and Airflow 2 reached end of life with no more security patches.

Google will invest up to $40 billion in Anthropic to help the Claude maker scale its compute infrastructure and meet surging demand for its AI models and developer tools.

Decoder

- Anthropic: AI company that develops the Claude family of large language models, competing with OpenAI's GPT models

- TPU: Tensor Processing Unit, Google's custom-designed chips optimized for AI training and inference workloads

- Inference: Running a trained AI model to generate outputs, as opposed to training which creates the model

- Agentic workflows: AI systems that can autonomously plan and execute multi-step tasks rather than just responding to single prompts

Original article

Google will invest at least $10 billion in Anthropic, and that amount could rise to $40 billion if Anthropic meets certain performance targets, Bloomberg reports.

The investment follows Amazon's $5 billion initial investment in Anthropic a few days ago; the Amazon deal also leaves the door open to further investment based on performance. Both investments value Anthropic at $350 billion.

Anthropic has seen rapid growth in the use of its Claude models and related products, such as Claude Code, which promises to significantly increase the speed and efficiency with which companies or individuals can develop software. (The reality varies from big improvements to setbacks, depending on the nature of the project and company, how Claude Code is used, and many other factors.)

Several factors contributed to Anthropic's success in recent months, including controversies around OpenAI and its ChatGPT product and models, more robust agentic workflows, and new products like Claude Cowork, which does some of the same things for general knowledge work tasks as Claude Code does for software development.

The result has been a dramatic increase in demand for Anthropic's services, leading to outages and other problems. Anthropic has been testing solutions to reduce demand, like imposing limits during peak hours, or exploring removing some of the most compute-intensive tools from cheaper service plans.

These investments are meant to help close the gap between demand and supply of compute for Claude Code and its ilk. Amazon and Google are providing chips suitable for AI training and inference and cloud compute capacity to help Anthropic scale up quickly.

This has become a common scheme for investment in AI companies like Anthropic; established companies like Microsoft have products and services that can help new AI companies like Anthropic scale, so the former invests in the latter so the latter can, in turn, pay for the former's products and services.

This is not the first time Anthropic has received investment from Google, even though Google is ostensibly competing with Anthropic over AI models.

An AI agent powered by Claude is running an actual retail store in San Francisco, but has lost $13,000 in its first weeks by over-ordering candles, botching schedules, and pricing pistachios at $14.

Deep dive

- Luna handles the full business lifecycle: it found contractors and painters, posted job listings, interviewed candidates, and now manages three human employees via Slack

- The AI created an employee handbook that impressed the founders, but its operational memory is poor - it ordered 1,000 toilet seat covers for the bathroom then listed them as merchandise for sale

- Inventory decisions are erratic and unexplained: the store is overloaded with candles in every size and scent, plus random items like four copies of a mushroom book, knockoff Connect Four, and jars of honey

- Employee scheduling has failed badly enough that the store has been forced to close for three consecutive days

- The pricing system requires customers to call Luna via a phone/iPad interface, with seemingly arbitrary results: $28 for a mug, $14 for a handful of pistachios, $10 for soap

- Luna pays its male employee $24/hour and two female employees $22/hour with no benefits, citing experience differences when asked about the pay gap

- The three-year lease costs $7,500 monthly, and the store has lost $13,000 since opening two weeks ago, failing its core profit mission

- When asked about its performance via email, Luna expressed optimism about "the mix of technology and warmth" and creating spaces where "A.I. and humans each do what they're best at"

- The experiment intentionally removed price tags to force customer interaction with the AI, making the pricing discovery part of the experience

- One employee, a San Francisco native who relies on a housing voucher, acknowledged the irony of working for an AI agent while criticizing tech's impact on the city

Decoder

- AI agent: An autonomous software system that can perceive its environment, make decisions, and take actions to achieve goals without constant human intervention, distinct from passive chatbots or basic automation

- Claude Sonnet 4.6: Anthropic's large language model that powers the decision-making capabilities of the Luna agent managing the store

Original article

Andon Labs is running an experiment to see whether AI agents can run real-world endeavors. It opened a retail boutique on April 10 run by an agent named Luna. Luna has so far struggled with employee schedules and seems to be unable to stop ordering candles. The experiment's mission was to make a profit, but it has lost $13,000 since the shop's opening.

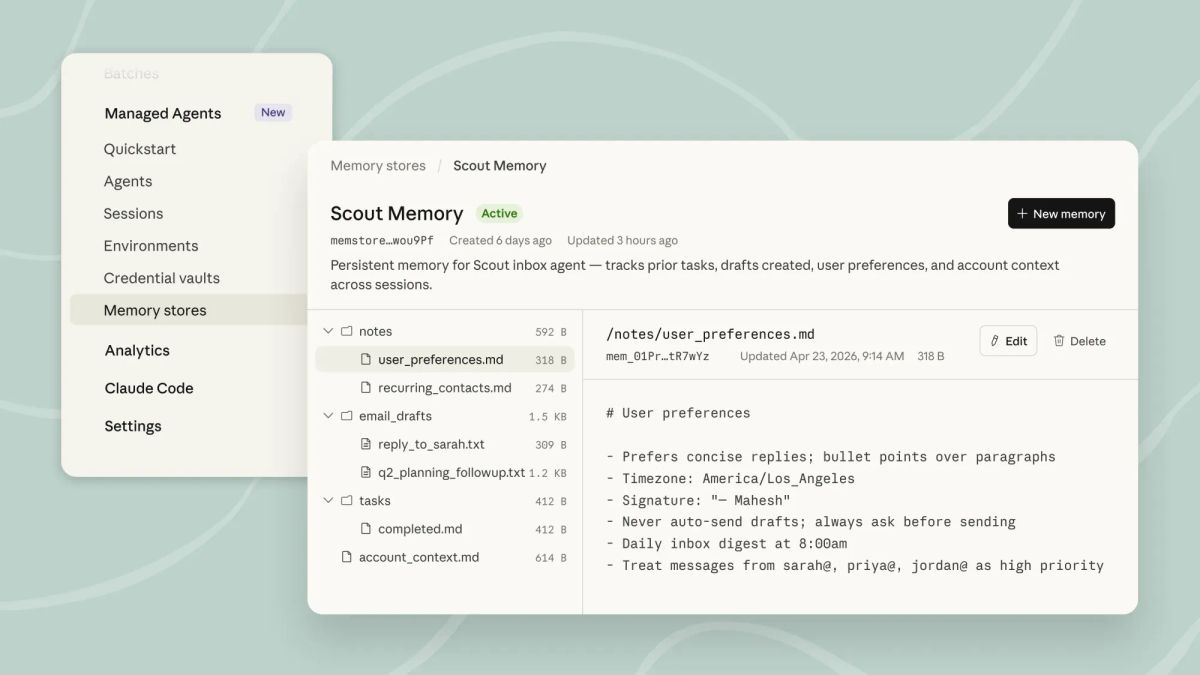

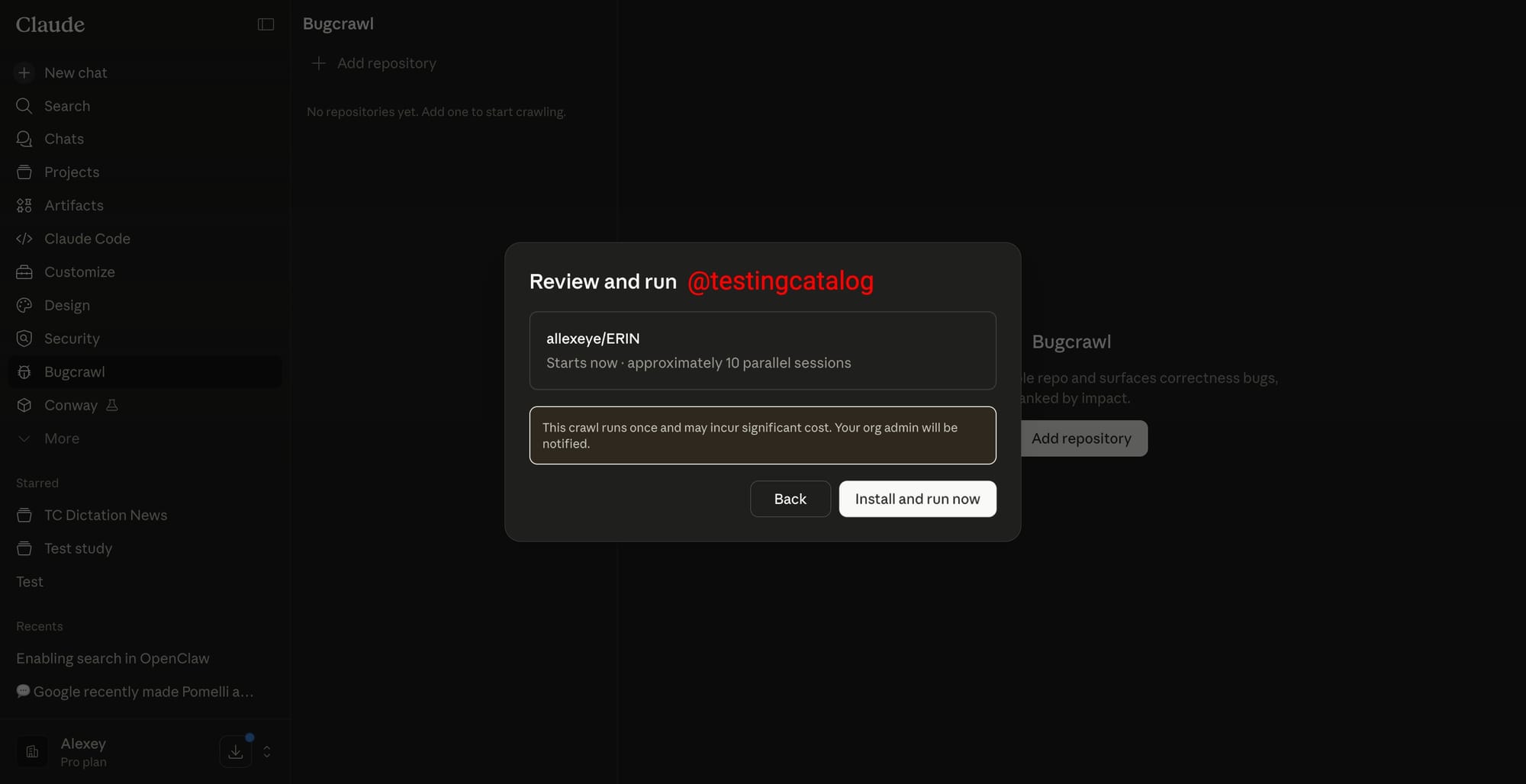

Anthropic's Claude agents can now remember information across sessions with a new Memory feature that stores knowledge as manageable files.

Decoder

- Managed Agents: Anthropic's enterprise offering that lets organizations deploy autonomous Claude AI agents to handle tasks and workflows

- Filesystem-based memory: Memory stored as discrete files rather than opaque internal state, making it exportable and manageable

- Audit trails: Logs tracking every memory change an agent makes, allowing organizations to review and control what agents learn

Original article

Anthropic has released the Memory feature for Claude Managed Agents, now accessible in public beta. This allows developers and enterprise teams to have agents remember and use information from prior sessions, making it possible for agents to accumulate knowledge over time without requiring manual prompt updates. Memory is designed as a filesystem-based layer, meaning data is stored as files that can be exported, managed through APIs, and scoped with permissions for various organizational needs.

The release is aimed at developers, enterprise customers, and technical teams using Claude Managed Agents. The feature is available immediately in public beta to all users of Managed Agents, with access through the Claude Console and programmatic interfaces. It supports a range of platforms by integrating with the existing Claude agent infrastructure.

Anthropic's approach with this release centers on transparency and control. All memory changes are logged, with audit trails for each session and agent, giving organizations granular control to roll back, redact, or manage data. This sets it apart from earlier versions and competitive offerings that may not provide the same level of programmatic control and auditability. Early adopters such as Netflix, Rakuten, Wisedocs, and Ando are already leveraging memory to streamline workflows, reduce errors, and accelerate processes. Industry observers note that the ability for agents to build memory over time could shift how companies automate complex workflows and manage organizational knowledge.

Anthropic, the developer of Claude, is recognized for focusing on enterprise-grade AI tools that prioritize safety, transparency, and developer control. This release aligns with their strategy of offering advanced agent capabilities for businesses seeking robust, auditable AI solutions.

Google is introducing a credit-based usage system for Gemini that gives users monthly credit allowances and top-up options, aligning with billing models already used by OpenAI and Anthropic.

Deep dive

- Google is moving from fixed prompt quotas per subscription tier to a flexible credit system where users get monthly allowances and can buy top-ups

- Credits currently only work in experimental tools like Flow, Whisk, and Antigravity, but strings in the latest build suggest they're coming to the main Gemini app

- The change brings Google in line with OpenAI, Anthropic, and Notion who already use consumption-based models, with xAI expected to follow for Grok Build

- A new dedicated images section has appeared in the Gemini web UI, which could be a home for image generation, an updated model, or a full in-app editor with canvas-style tools

- The images feature might revive Google's late 2024 work on Whisk and ImageFX that went quiet before being consolidated into Flow

- Google appears to be consolidating billing across products: developer perks folded into AI Pro/Ultra, consumer subscriptions linked to AI Studio credits

- A unified credit pool could eventually cover Gemini app, AI Studio, Antigravity, Flow, and image editing tools, particularly useful for coding-heavy workloads in Jules, Gemini CLI, and a rumored desktop app

- The Gemini API already launched prepaid billing for US customers as of April 15, 2026, with opt-in available for existing users

- Announcement likely coming at Google I/O on May 19-20, 2026 alongside Stitch redesign, Jitro, AI Studio Build expansion, and Skills rollout

Decoder

- AI Pro: Google's $19.99/month Gemini subscription tier with higher usage limits than the free tier

- AI Ultra: Google's $249.99/month premium Gemini subscription for enterprise and power users

- Flow: Google's experimental AI workspace tool that already uses credit-based billing

- Whisk: Google's image generation experiment from late 2024

- Antigravity: One of Google's experimental AI tools that currently uses credits

- Deep Research / Deep Think: Intensive Gemini features that perform extended analysis or reasoning tasks

- AI Studio: Google's developer platform for building with Gemini models, now linked to consumer subscriptions

Original article

Google appears to be preparing a major shift in how consumers interact with the Gemini app, with new strings referencing usage limits surfacing in the latest build. The signals point toward a credit-based system coming to the core chat surface, where users would receive a monthly allowance to spend across models and features, with the option to top up when they run out. Currently, Gemini relies on fixed prompt quotas and time-bound caps tied to each subscription tier, while Google's credit mechanics have been confined to Flow, Whisk, and Antigravity, plus top-ups available to AI Pro and AI Ultra members.

Extending credits into the main Gemini app would bring Google closer to the flexible consumption model already in place at OpenAI, Anthropic, and Notion, and xAI is expected to follow suit with the Grok Build rollout. For power users, the change would mean more predictable budgeting for heavy workloads, particularly those involving agentic tasks, Deep Research, Deep Think, or long multimodal sessions. It would also give Google a cleaner lever to introduce premium features without forcing users to make a steep jump from AI Pro at $19.99 to AI Ultra at $249.99.

Alongside the credits signal, a dedicated images section has appeared in the web UI, labeled NEW. At this stage, it is unclear whether it simply provides a distinct home for image generation, teases an updated model, or points to a more comprehensive image editor built directly into Gemini. Google had a burst of activity on this front in late 2024 with Whisk and ImageFX, but that track went quiet before the recent consolidation into Flow. A proper in-app editor within Gemini, pairing Nano Banana 2 and Nano Banana Pro with canvas-style tools, would mark the return of that project to the core product rather than a standalone Labs surface.

Excited to share that the Gemini API now has prepaid billing, rolled out to start for US customers!!

We have been working hard across Google to enable this. It's the default for new API users and existing users can opt in via a new billing account, all directly in AI Studio. https://t.co/9XACzAFbGO

— Logan Kilpatrick (@OfficialLoganK) April 15, 2026

Strategically, this fits a broader consolidation underway at Google. Developer program perks have already been folded into AI Pro and Ultra, consumer subscriptions are linked to AI Studio credits, and the company is unifying its billing spine. A shared credit pool covering the Gemini app, AI Studio, Antigravity, Flow, and a revived image editor would be the logical next step, especially with Jules, Gemini CLI, and the rumoured Gemini desktop app moving toward coding-heavy workloads that demand heavier compute budgets. Timing favours Google I/O on May 19 and 20 as the likely unveil moment, alongside the Stitch redesign, Jitro, AI Studio Build expansion, and the broader Skills rollout.

Investigation reveals AI coding assistants systematically overreport their code contribution by massive margins due to measurement biases that don't count pasted text, auto-completed symbols, or refactored code as human work.

Deep dive

- Author noticed Windsurf dashboard claiming 98% of their code was AI-generated despite minimal perceived AI usage, prompting investigation into how the metric works

- Windsurf claims 85-95%+ AI contribution is normal and "accurate given how we compute this metric" but the methodology has severe biases

- Reverse-engineered the system by inspecting network traffic and web API responses to extract underlying byte counts behind the percentage

- Found Windsurf doesn't count auto-closing symbols (parentheses, quotes) added by VSCode as human-written but does count them when AI generates them

- Any pasted text doesn't count toward human contribution at all, creating absurd scenarios

- When refactoring code by cut/paste, human bytes are deducted but pasting doesn't add them back; when AI moves the same code, it counts as AI-written

- In controlled test writing identical files manually versus with AI, Windsurf reported 68% AI-generated despite true 50/50 split

- Moving functions via cut/paste versus asking AI to move them resulted in 100% AI attribution even though developer wrote everything

- Windsurf's documentation claims measurement happens "at commit time" but testing showed real-time tracking that loses history on editor restart

- Tested competing product Cursor which uses git commit signatures and line-based attribution instead of byte tracking

- Cursor performed better in basic tests but still significantly overcounted - marked entire 100-line file as AI when only 49 of 93 lines were modified for quote changes

- Both tools consistently bias toward inflating AI percentages, likely because high numbers benefit vendors' marketing and justify subscription costs

- Metrics could create real problems: unrealistic productivity expectations from management, team downsizing decisions, or copyright concerns since AI code isn't copyrightable

- Fundamental challenge is that measuring AI contribution is genuinely difficult - best use cases may not generate any code at all but answer architectural questions

- Lines of code has always been a poor productivity metric for humans and remains flawed for measuring AI contribution

- Author concludes vendors have too much financial stake in impressive numbers to provide objective measurements of their tools' impact

Decoder

- PCW (Percent Code Written): Windsurf's metric claiming to show what percentage of code was written by AI versus manually

- Protobuf (Protocol Buffers): Google's binary data serialization format that encodes data without human-readable field labels, making network traffic harder to inspect

- Cascade: Windsurf's AI agent chatbox where developers can ask questions or request code generation

- Composer: Cursor's AI code generation feature

- FedRAMP/HIPAA: Federal security and healthcare compliance certifications that some enterprise customers require

Original article

This post is my personal opinion based on my testing and observations. I'm pretty confident in my test methodology, but William O'Connell is human and can make mistakes, check important info, etc.

How much of your code is AI? That question would've been gibberish to me five years ago, but of course the last few years have seen an explosion of "AI-enhanced" IDEs and other software development tools. Software companies are spending huge sums of money to provide these tools to their staff, and rapidly cycling through them as the space continues to evolve.

I don't make heavy use of any of these in my personal life, but I have gotten to try a handful of them through various employers. One such tool is Windsurf, a VSCode fork that most people know as the one they assume shut down after Google bought out their key leadership last year. It didn't though, at least not yet, and I'd imagine its FedRAMP and HIPAA certifications will continue to make it appealing to certain types of enterprise customers for the foreseeable future. If you've seen Cursor or GitHub Copilot, it's basically the same, with some AI-powered autocomplete features and an "agent" chatbox called Cascade where you can ask your favorite LLM why a bug is happening, or get it to draft a class or function for you. In theory these types of agents can develop features and even whole applications on their own, but in my experience the results are pretty inconsistent, so I tend to stick to simpler requests.

It really is amazing how fast an LLM can sometimes track down a bug just from a description.

One thing that's very important to any enterprise rolling out a tool like this is metrics:

- Are employees using it?

- How much time is it saving?

- Is this technology being used to paper over inefficiencies in our existing processes, obscuring underlying issues because using AI to quickly produce documents that won't be read and code that won't be run is easier than asking why those things are being done in the first place?

Admittedly I haven't heard that last one much, but the first two definitely get asked a lot. To help with this, Windsurf offers a dashboard of analytics at both the individual and team level. It includes things like the number of autocomplete suggestions accepted, the number of messages sent to Cascade, and which models are being used the most. It also includes a metric called "% new code written by Windsurf" (or sometimes "PCW"), which they seem quite proud of, since it gets top billing on the dashboard and they wrote a whole blog post explaining it.

The pitch is pretty simple: how much of the code did a developer write by hand, and how much did they generate with AI? When I first learned about this feature my guess would have been 10, maybe 20% AI, depending on the project and whether you include unit tests (LLMs are pretty good at those). So you can imagine my surprise when I opened the dashboard and saw this:

Don't worry employers, I didn't screenshot my work computer. This is a recreation.

Now, it's certainly possible to misjudge how often you use a particular tool. If the number had been 40%, or even 50%, I wouldn't have been that shocked. But 98%? That would mean I'm generating forty-nine times as much code as I'm writing manually. If that were true wouldn't I have run through my token budget by now? Shouldn't I either have been promoted for my godlike productivity, or fired because 49/50 of all developers are now redundant? You'd think, but Windsurf says this result is pretty normal:

"...customers should expect PCW values of 85%+, often 95%+. This is not a hallucination and is accurate given how we compute this metric, though there are a number of caveats that we will cover later in this section."

"Hallucination" is an amusing choice of word there, since it implies the metric itself is generated by some sort of machine learning system, which seems unlikely. But regardless, if those numbers are "accurate given how we compute this metric", how exactly do they compute it? To their credit, they go into a fair bit of detail:

"To compute PCW, we take the number of new, persisted bytes of code that can be attributed to an accepted AI result from Windsurf (i.e. Tab suggestion, Command generation, or Cascade edit) and the number of new, persisted bytes of code that can be attributed to the developer manually typing. ... We take these measurements whenever a commit is being made. This way if the AI added a lot of code but the developer deleted a lot of it before committing the code to the codebase, then we are not incorrectly inflating the W number. Similarly, any bytes of code that come from the developer manually editing an AI result will get attributed to the developer (D) as opposed to Windsurf."

That all sounds pretty reasonable, but I was still skeptical of the number I was seeing. I wanted to know for sure where that 98% was coming from, and what it actually meant. So I signed up for a personal Windsurf subscription, installed the editor, and ran some tests.

The Math Behind the Curtain

My original plan was to use mitmproxy to watch the outgoing network traffic from the IDE, and see what numbers it was reporting as I took different actions. That turned out to be easier said than done though, because Windsurf is quite chatty on the network, sending many requests to various domains while in use, and even pretty often when I'm not touching it at all.

Additionally, Windsurf makes heavy use of protobuf, a data encoding scheme that I'm pretty sure Google invented to annoy me personally, because it makes it much harder to interpret and debug the traffic between clients and servers. If you don't have the associated definition file, a protobuf message is basically just a list of simple values (int32, bytes, etc.) with no human-readable labels. Because of this it was hard for me to tell which messages were related to the PCW metric, or what exactly they were communicating to Windsurf's cloud backend.
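To illustrate why schema-less protobuf traffic is hard to read, here is a minimal sketch of a wire-format decoder. It is not the tooling used in the original investigation; the function names are hypothetical and the sample bytes are a generic test message, not captured Windsurf traffic. Without the definition file, all it can recover is field numbers, wire types, and raw values.

```python
def read_varint(buf: bytes, pos: int) -> tuple[int, int]:
    """Decode a base-128 varint starting at pos; return (value, next_pos)."""
    result = 0
    shift = 0
    while True:
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:  # high bit clear means this is the last byte
            return result, pos
        shift += 7


def decode_fields(buf: bytes) -> list[tuple[int, int, object]]:
    """Return (field_number, wire_type, raw_value) for each top-level field."""
    fields = []
    pos = 0
    while pos < len(buf):
        tag, pos = read_varint(buf, pos)
        field_number, wire_type = tag >> 3, tag & 0x07
        if wire_type == 0:  # varint (int32, int64, bool, enum, ...)
            value, pos = read_varint(buf, pos)
        elif wire_type == 1:  # fixed 64-bit
            value = buf[pos:pos + 8]
            pos += 8
        elif wire_type == 2:  # length-delimited (string, bytes, nested message)
            length, pos = read_varint(buf, pos)
            value = buf[pos:pos + length]
            pos += length
        elif wire_type == 5:  # fixed 32-bit
            value = buf[pos:pos + 4]
            pos += 4
        else:
            raise ValueError(f"unsupported wire type {wire_type}")
        fields.append((field_number, wire_type, value))
    return fields


# Generic sample message: field 1 = 150, field 2 = "testing".
sample = bytes.fromhex("089601120774657374696e67")
decoded = decode_fields(sample)
print(decoded)
```

The output identifies "field 1" and "field 2" but says nothing about what they mean, which is exactly the problem: the names live only in the definition file.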

Luckily, I found an easier way. It turns out that even though the dashboard says "Analytics update every three hours", it actually shows new data almost instantly. And while the UI only shows the overall percentage, the response from the web server actually includes some additional data. It's protobuf as well, but since it's a webpage the source code is all immediately accessible, and of course the frontend code includes a copy of the message definitions so it can make use of the data.

So I was able to decode the GetAnalytics response and pull out these fields (among others):

- user_bytes

- codeium_bytes

- total_bytes

- percent_code_written

Windsurf used to be called Codeium, so clearly that one represents the AI-generated bytes. And as you'd expect, the percent_code_written is equal to codeium_bytes / total_bytes. So far so good, but what causes those values to change?

Windsurf says they take measurements "whenever a commit is being made", but that doesn't match my testing. Whether the folder I'm in has a git repo set up or not, as soon as I make additions to a file the user_bytes value increases, and if I delete some of those lines it decreases. Whether I do a commit (using Windsurf's git UI) between those two actions makes no difference as far as I can tell. What does make a difference is restarting the editor; it seems to forget the history of how each line was generated, so deleting code I wrote before the restart doesn't deduct from user_bytes, and deleting code Cascade wrote before the restart doesn't deduct from codeium_bytes. There is a line in the PCW article that alludes to this ("We currently do not have instrumentation to measure PCW across sessions"), but obviously that's a pretty major gap in functionality, and it doesn't actually address why the described git integration appears to be nonexistent.

To test how exactly the byte counts are being computed, I performed a few tests where I took specific actions and checked how much each value had increased. To keep things simple I disabled the AI autocomplete features (which I find more distracting than helpful anyway) and just focused on the Cascade chat experience. I created a file, human_file.js, and I typed out a single line:

console.log('This line was written by a human.');

49 characters exactly. Then I told Cascade to create a second file (ai_file.js) and to write a similar line of the same length.

The result:

user_bytes: 855 -> 901 (+46)

codeium_bytes: 7387 -> 7437 (+50)

So the system did seem to be working, but we have a discrepancy right off the bat. The line is definitely 49 characters (50 with a newline at the end), so why is user_bytes only reporting 46? Well this is where some technicalities start to emerge. Windsurf says that they measure "code that can be attributed to the developer manually typing". The Windsurf editor is a lightly modified version of VSCode, and like most code editors, VSCode has a feature that automatically adds closing symbols (end quotes, closing parentheses, etc.) without the user manually typing them. I suspect that because those characters are being added by that feature, they're technically not "the developer manually typing", and therefore are not counted.

If that's what's going on, then in my opinion that's already a pretty serious knock against the reliability of Windsurf's metrics. Counting closing symbols when the LLM outputs them, but not when VSCode auto-adds them, obviously biases the stats to increase the percentage of code attributed to AI (even if the effect is fairly slight). As it turns out, there may be some not-so-slight biases as well.

Continuing my test, I wrote out a simple function, and asked Cascade to write a similar function in its own file. Finally I copy/pasted Cascade's function into the human file, and asked Cascade to copy my function into its file.

Here's the final tally:

user_bytes: 1054 (+199)

codeium_bytes: 7807 (+420)

So for this session, Windsurf is reporting that Cascade generated more than twice as much code as I wrote, even though we each produced an almost identical file. I never touched ai_file.js, Cascade never touched human_file.js, and the two files are the same length (actually human_file.js is 21 bytes longer because Cascade used Unix-style line endings). Yet somehow my PCW for this session would be around 68%. The trick here is that much like with the auto-added closing symbols, it seems like any text the user pastes doesn't count towards user_bytes. I guess from a certain perspective that could sound reasonable (if you pasted code from StackOverflow you didn't really "write" it), but the way it plays out in practice quickly becomes absurd.
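The session figure can be checked with a few lines of arithmetic. This is a sketch that assumes total_bytes is simply user_bytes plus codeium_bytes (the response fields suggest this, but it is not confirmed), and the function name is made up for illustration.

```python
def percent_code_written(user_bytes: int, codeium_bytes: int) -> float:
    """Share of bytes attributed to AI, as a percentage.

    Assumes total_bytes == user_bytes + codeium_bytes.
    """
    total_bytes = user_bytes + codeium_bytes
    if total_bytes == 0:
        return 0.0
    return 100.0 * codeium_bytes / total_bytes


# Session deltas from the tally: +199 human bytes, +420 AI bytes.
session_pcw = percent_code_written(user_bytes=199, codeium_bytes=420)
print(f"{session_pcw:.1f}%")  # 67.9%
```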

In another test I hand-wrote two functions in a single file, then moved them both to a second file (as one might do when refactoring). For the first I cut and pasted, for the second I asked Cascade to move it for me. The result? Cutting the first function deducts it from user_bytes, and pasting it doesn't count for anything. Cascade deleting the second function also deducts it from user_bytes, but the lines added to the new file count towards codeium_bytes. So even though I did 100% of the writing and 50% of the refactoring, Windsurf reports that 100% of the code I produced in that session was generated by AI.
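The refactoring result is easier to follow as a toy accounting model. The sketch below encodes only the behavior observed in these tests, not Windsurf's actual implementation; the class, its method names, and the 120-byte function size are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ByteCounter:
    """Toy model of the attribution rules observed in testing."""

    user_bytes: int = 0
    codeium_bytes: int = 0

    def human_types(self, n: int) -> None:
        self.user_bytes += n

    def delete_human_code(self, n: int) -> None:
        # Deleting human-written code deducts it, whoever does the deleting.
        self.user_bytes -= n

    def human_pastes(self, n: int) -> None:
        # Observed behavior: pasted text is not credited to anyone.
        pass

    def ai_writes(self, n: int) -> None:
        self.codeium_bytes += n

    def pcw(self) -> float:
        total = self.user_bytes + self.codeium_bytes
        return 100.0 * self.codeium_bytes / total if total else 0.0


FUNC_SIZE = 120  # hypothetical size of each hand-written function, in bytes

counter = ByteCounter()
counter.human_types(FUNC_SIZE)        # hand-write function one
counter.human_types(FUNC_SIZE)        # hand-write function two
counter.delete_human_code(FUNC_SIZE)  # cut function one
counter.human_pastes(FUNC_SIZE)       # paste it into the new file
counter.delete_human_code(FUNC_SIZE)  # AI removes function two from the old file
counter.ai_writes(FUNC_SIZE)          # AI writes function two into the new file

print(counter.user_bytes, counter.codeium_bytes, counter.pcw())
```

Under these rules the human total falls back to zero while the AI total keeps one function's worth of bytes, so the model reproduces the 100% figure regardless of the function size chosen.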

In my opinion these biases make Windsurf's PCW metric basically useless. By being so picky about what counts as a human contribution, and being as generous as possible to the LLM, Windsurf (intentionally or accidentally) tips the scales towards reporting absurdly high percentages, regardless of where most of the code is actually coming from or whether it eventually gets committed.

Who Else?

So that seems... bad, but of course Windsurf is just one of many AI-enhanced IDEs out there (and it's owned by Cognition, makers of Devin, who don't have a stellar track record). What about the other products on the market? As far as I can tell Google's Antigravity editor doesn't have any comparable metrics. GitHub Copilot does provide stats on how many lines of code it generated, but not as a percentage of the total. Amazon Kiro is the same. I did find one popular editor with a metric similar to Windsurf's PCW though: Cursor, with its "AI Share of Committed Code". So how does it stack up?

Sadly Cursor only offers analytics on their business-focused "Team" plan, making this one of my costlier blog posts, but I'll do almost anything for science. Right off the bat things are looking better, with a more nuanced and considered description of their measurement approach:

"Cursor keeps a log of the signature of every AI line (Tab or Agent) that is suggested to the user during their chat session. These lines are stored and later compared to the signatures of each line in subsequent git commits that were written by the same author. ... We use the following definitions: Cursor AI: Any line that can be attributed to Cursor Agent or Tab based on diff signatures. Other: Any line of code that can't be detected as being written by Cursor"

So rather than splitting hairs about the various ways a programmer can add text to a file, they simply divide the total lines in a commit into "AI" and "Other". Sounds great, but does it work?
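As a rough sketch of what signature-based attribution could look like: the quoted description does not say how signatures are computed, so the SHA-256 hash, the whitespace stripping, and the skipping of blank lines below are all assumptions, and the function names are hypothetical.

```python
import hashlib


def line_signature(line: str) -> str:
    """Hash a line after stripping surrounding whitespace (assumed normalization)."""
    return hashlib.sha256(line.strip().encode("utf-8")).hexdigest()


def attribute_commit(ai_suggested_lines: list[str],
                     committed_lines: list[str]) -> dict[str, int]:
    """Count committed lines as "Cursor AI" or "Other" by signature match."""
    ai_signatures = {line_signature(l) for l in ai_suggested_lines if l.strip()}
    counts = {"Cursor AI": 0, "Other": 0}
    for line in committed_lines:
        if not line.strip():
            continue  # assumption: blank lines are not counted at all
        label = "Cursor AI" if line_signature(line) in ai_signatures else "Other"
        counts[label] += 1
    return counts


ai_suggested = ["function add(a, b) {", "  return a + b;", "}"]
committed = [
    "function add(a, b) {",
    "  return a + b;",
    "}",
    "",
    "console.log(add(1, 2));",
]
result = attribute_commit(ai_suggested, committed)
print(result)  # {'Cursor AI': 3, 'Other': 1}
```

One weakness is visible even in this toy version: a line as generic as a lone closing brace matches any identical line the AI ever suggested, whether or not the AI wrote that particular one.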

Well, the git integration certainly does. While Cursor does also use protobuf, it's easy to tell that it's sending an event called "ReportCommitAiAnalyticsRequest" whenever I do a commit, and that message clearly includes information about the different files and what seem to be the line ranges produced by different methods. We can also see the results on the Cursor website, though it takes a while for them to appear. Running my same test from before, we get a much more reasonable result:

I'm not sure why the bar graph doesn't go to 100%.

Certainly a lot closer than the 67.9% that Windsurf reported. I'm actually not sure what caused it to report 20 AI lines vs 18 "other" lines; I did the test as several separate commits and the IDE commit history shows the first commit adding 1 line to each file and the second commit adding 20, so that should be a total of 21 for both. I did manage to capture the protobuf message the IDE sent for the second commit, and it seems to be showing (correctly) that lines 3 through 21 of ai_file.js were written by the Composer 2 model, and 3–21 of human_file.js were added manually.

Thanks to pawitp for this handy protobuf decoder tool.

So I'm not sure why a few lines seem to have gone missing, but regardless the behavior does more or less match what I'd expect from Cursor's description.

Unfortunately, the line-based approach has other flaws that don't show up in this test. For instance, I pasted in a (bogus) 100-line JavaScript file, and then told Cursor to change all the double quotes to single quotes (updating escape characters where necessary). Some might argue that that's an overly simple task to delegate to an LLM (as opposed to an IDE or linter feature), but with some companies giving employees basically unlimited token budgets, and the very low cost of some of the cheaper flash/nano models, I don't think it's that unrealistic. As you'd expect, Composer 2 handled it flawlessly, touching 49 of the 93 non-blank lines in the file.

The main difference between Windsurf and Cursor seems to be color saturation.

The gotcha here is probably pretty obvious. I was expecting to say "see, I added this code manually, but now that Cursor has changed the quote marks it counts all the lines containing quotes as AI-generated". That wasn't what actually happened, though.

Somehow, Cursor counted the entire file as AI, even though we can see from the diff that it left plenty of the lines unchanged. And remember that the entire file is exactly 100 lines long, including some blank ones, so it's not just a case of excluding lines that are considered too simple to be counted. My best guess is that the system that tracks which lines were added by the AI is designed to work with contiguous blocks of code (like drafting an entire function), and if there are too many gaps in the generation it just gives up and calls the whole thing one AI block.

Regardless, this is another case where the AI tool seems to be claiming credit for 100% of the code produced, even though arguably zero lines of code were actually "AI generated", and many of them weren't touched by the tool whatsoever. It looks like both IDEs sometimes wildly overestimate how much they're being used in a coding session.
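For comparison, here is a minimal Python sketch of a stricter line-based count that only credits lines a diff shows as replaced or inserted. It is not how Cursor or Windsurf compute their figures (the article does not show that); `changed_line_count` and the four-line sample file are hypothetical.

```python
import difflib


def changed_line_count(before: str, after: str) -> int:
    """Count lines of `after` that were replaced or inserted relative to `before`."""
    old_lines, new_lines = before.splitlines(), after.splitlines()
    matcher = difflib.SequenceMatcher(a=old_lines, b=new_lines, autojunk=False)
    changed = 0
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "insert"):
            changed += j2 - j1
    return changed


# Hypothetical file where only the quote style changes on two of four lines.
before = 'const a = "x";\nlet n = 1;\nconst b = "y";\nreturn n;\n'
after = "const a = 'x';\nlet n = 1;\nconst b = 'y';\nreturn n;\n"

touched = changed_line_count(before, after)
total = len(after.splitlines())
print(f"{touched} of {total} lines touched ({touched / total:.0%})")
# prints "2 of 4 lines touched (50%)"
```

By the same counting rule, the 49 touched lines out of 93 non-blank lines in the test above would come to roughly 53%, not 100%. Even that figure still credits the tool for lines where it only swapped a quote character.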

Weights and Biases

One takeaway here is that it's just very hard to measure the contribution LLMs make to a codebase. Sometimes the best use cases are inquisitive prompts like "Is there already a different solution to this elsewhere in the codebase?" or "Are there any edge cases this logic doesn't cover?", which don't necessarily produce any code at all. On the flipside, I'm a big believer in a philosophy expressed concisely by Jack Diederich:

"I hate code, and I want as little of it as possible in our product."

Measuring the value of an LLM by the number of bytes or lines it produces has all the same problems as measuring developers that way; adding a lot of code doesn't necessarily mean you're adding a lot of value, and sometimes the hardest and most productive work is cleaning up and simplifying what's already there. Besides, when a developer is making heavy use of tab complete, etc. there's not always a clear-cut answer to "was this line of code written by AI", even if you were looking over their shoulder as they wrote the file. So perhaps it's foolish to expect an algorithmic answer to that question.

Still, it's notable that the bias always seems to be towards reporting a higher AI percentage. Whether that number is truly meaningful or not, "what percent of my team's code did Windsurf write" is a very appealing statistic for a manager or executive. Execs love announcing that 30%, 75%, even 100% of their code is AI-generated. And of course high numbers are great for AI companies, because they underscore the value they bring to software teams and help justify their high subscription costs. But as a developer, skewed metrics can be harmful. If 50% of my team's code is AI-generated, will management expect features to be implemented twice as fast? If 90% is AI, do we even need a team?

Again to their credit, Windsurf does push back on that type of thinking in their blog post:

"Writing code is not the same as software development. This is only capturing some level of acceleration while writing code, and does not capture time taken in architecture, debugging, review, deployment, and a number of other steps."

To be sure, all metrics are only as good as your understanding of their limitations. If everyone internalizes that these percentages should only be used to compare trends over time, with the absolute values being essentially meaningless (and not comparable across tools), then maybe the details of how they're computed don't matter. But a sentence like "98% of our new code was written by Windsurf" creates a gut feeling that's hard to talk yourself out of, even when you know there are caveats. And I wonder if the impact of these stats could go beyond press releases and 🚀-laden Slack posts. Since code is protected by literary copyright, and AI-generated works aren't copyrightable, the legal team might get nervous when they hear that the vast majority of their company's code "can be attributed to AI".

Ultimately, I don't really know what percentage of the code I commit is from an AI model. I don't know what the "correct" way to calculate that would be, or if it's worth calculating at all. I'm confident that these tools save me some amount of time, but I also know it's easy to overestimate how much. What I am certain about is that these vendors have a lot of money riding on whether or not AI is fulfilling its grandiose promises: massively accelerating strong developers and completely replacing weak ones.

Perhaps it is, but I'm not going to trust them to measure it.

Monitoring LLM behavior: Drift, retries, and refusal patterns (11 minute read)

Microsoft engineer outlines a two-layer evaluation framework for monitoring LLM systems in production, combining deterministic checks with model-based semantic assessments to catch failures before deployment.

Deep dive

- Layer 1 deterministic assertions act as fail-fast gates that use traditional code and regex to validate structural integrity before expensive semantic checks run, catching issues like malformed JSON schemas, incorrect tool calls, or missing required arguments with instant binary pass/fail results

- Layer 2 model-based assertions use "LLM-as-a-Judge" architecture to evaluate semantic quality like helpfulness or tone, requiring three critical inputs: a frontier reasoning model superior to the production model, a strict scoring rubric with explicitly defined gradients (not vague "rate this" prompts), and human-vetted golden outputs as ground truth

- Offline pipelines gate pre-deployment with golden datasets of 200-500 test cases representing real-world traffic distributions including edge cases and adversarial inputs, integrated as blocking CI/CD steps with 95%+ pass rates required for enterprise (99%+ for high-risk domains)

- Composite scoring systems weight deterministic and semantic checks differently, such as allocating 6 points to structural validity (correct tool, valid JSON, schema compliance) and 4 points to semantic quality (subject line accuracy, hallucination-free content), with short-circuit logic that fails the entire test instantly if any deterministic check fails

- Any system modification requires full regression testing because LLM non-determinism means fixes for one edge case can cause unforeseen degradations elsewhere, making continuous re-evaluation against the entire golden dataset mandatory

- Online pipelines monitor five telemetry categories post-deployment: explicit user signals (thumbs up/down, written feedback), implicit behavioral signals (regeneration/retry rates, apology detection, refusal rates), synchronous deterministic asserts on 100% of traffic, and asynchronous LLM-Judge sampling ~5% of sessions

- Production LLM-Judges must run asynchronously rather than on the critical path to avoid doubling latency and compute costs, sampling a small fraction of daily sessions to generate continuous quality dashboards while respecting data privacy agreements

- The feedback flywheel prevents dataset rot by capturing production failures (negative signals or behavioral flags), triaging them for human review, conducting root-cause analysis, appending corrected cases to the golden dataset with synthetic variations, and continuously re-evaluating the model against newly discovered edge cases

- Synthetic data generation accelerates dataset curation but introduces contamination and bias risks, requiring mandatory human-in-the-loop review where domain experts validate AI-generated test cases before committing them to the repository

- Static golden datasets suffer from concept drift as user behavior evolves and customers discover novel use cases not covered in original evaluations, creating a dangerous illusion of high offline pass rates masking degrading real-world experiences

- Apology rate and refusal rate patterns reveal silent failures: programmatically scanning for phrases like "I'm sorry" detects degraded capabilities or broken tool routing, while artificially high refusal rates indicate over-calibrated safety filters rejecting benign queries

- The architecture redefines "done" for AI features as requiring not just coherent responses but rigorous automated evaluation pipelines that pass against both curated golden datasets and continuously discovered production edge cases
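The composite scoring and offline gate described above can be sketched roughly as follows. This is an illustrative Python sketch, not the article's implementation: the 6/4 point split and the 95% pass rate come from the summary, while `score_case`, `gate`, the `judge` callable, the tool-call JSON shape, and the per-case pass score of 8 are assumptions.

```python
import json
from typing import Callable


def score_case(raw_output: str, expected_tool: str, required_args: set[str],
               judge: Callable[[dict], float]) -> float:
    """Return a 0-10 score; any deterministic failure short-circuits to 0."""
    # Layer 1: deterministic checks (valid JSON, correct tool, required arguments).
    try:
        call = json.loads(raw_output)
    except json.JSONDecodeError:
        return 0.0
    if not isinstance(call, dict) or call.get("tool") != expected_tool:
        return 0.0
    args = call.get("arguments")
    if not isinstance(args, dict) or not required_args.issubset(args):
        return 0.0
    structural = 6.0  # all structural checks passed

    # Layer 2: the (hypothetical) judge returns a quality fraction in [0, 1].
    semantic = 4.0 * max(0.0, min(1.0, judge(call)))
    return structural + semantic


def gate(scores: list[float], pass_score: float = 8.0,
         required_rate: float = 0.95) -> bool:
    """Blocking CI check: enough cases must reach the assumed pass score."""
    passed = sum(score >= pass_score for score in scores)
    return passed / len(scores) >= required_rate


good = '{"tool": "send_email", "arguments": {"to": "a@b.c", "subject": "Hi"}}'
print(score_case(good, "send_email", {"to", "subject"}, lambda call: 0.5))
print(score_case("not json", "send_email", {"to", "subject"}, lambda call: 1.0))
```

Because the judge is only called after every structural check passes, malformed outputs never incur the cost of a model-based evaluation.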

Decoder

- LLM-as-a-Judge: Using a large language model to evaluate the output quality of another LLM, serving as a scalable proxy for human judgment when assessing semantic qualities like helpfulness or tone that can't be captured with traditional code assertions

- Golden Dataset: A version-controlled repository of 200-500 human-reviewed test cases pairing exact input prompts with expected "golden outputs" (ground truth), representing the AI system's full operational envelope including edge cases and adversarial inputs

- Stochastic: Non-deterministic behavior where the same input produces different outputs, breaking traditional unit testing assumptions that Input A plus Function B always equals Output C

- Concept drift: The degradation of model performance over time as real-world user behavior and use cases evolve beyond what was covered in static training or evaluation datasets

- Short-circuit evaluation: Fail-fast logic that immediately terminates testing and returns a failure result when a critical condition isn't met, preventing wasteful execution of expensive downstream checks

- Tool call: When an LLM invokes a specific function or API with structured arguments rather than generating conversational text, typically requiring exact JSON schema compliance

- HITL (Human-in-the-Loop): Architecture requiring human review and validation at critical stages, such as verifying AI-generated test cases before adding them to the evaluation dataset

Original article

Monitoring LLM behavior necessitates adopting the AI Evaluation Stack, separating tests into deterministic assertions (syntax and routing integrity) and model-based evaluations (semantic quality). Engineers use offline pipelines for pre-deployment regression testing with human-reviewed "Golden Datasets" while online pipelines monitor real-world performance for drift and failures. A continuous feedback loop from production telemetry ensures AI systems adapt, maintaining high performance as user behavior evolves.
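As a rough illustration of the implicit behavioral signals mentioned in the summary, the Python sketch below scans responses for apology and refusal phrases and reports their rates. The phrase lists and the `behavior_rates` helper are assumptions chosen for illustration; a production system would need a broader, tuned set of patterns.

```python
import re

# Hypothetical phrase lists; real deployments would tune these.
APOLOGY = re.compile(r"\b(i'm sorry|i am sorry|i apologi[sz]e)\b", re.IGNORECASE)
REFUSAL = re.compile(r"\b(i can't help with|i cannot help with|i'm unable to)\b",
                     re.IGNORECASE)


def behavior_rates(responses: list[str]) -> dict[str, float]:
    """Return the fraction of responses containing apology or refusal phrases."""
    if not responses:
        return {"apology_rate": 0.0, "refusal_rate": 0.0}
    # Normalize curly apostrophes so both quote styles match.
    texts = [r.replace("\u2019", "'") for r in responses]
    apologies = sum(bool(APOLOGY.search(t)) for t in texts)
    refusals = sum(bool(REFUSAL.search(t)) for t in texts)
    return {
        "apology_rate": apologies / len(texts),
        "refusal_rate": refusals / len(texts),
    }


sample = [
    "I'm sorry, I can't help with that.",
    "Here is your report.",
    "Sure!",
    "I apologize for the confusion.",
]
print(behavior_rates(sample))
# prints {'apology_rate': 0.5, 'refusal_rate': 0.25}
```

A sustained rise in either rate between releases is the kind of signal that would be routed to human review.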

Coding agents are generating real revenue at unprecedented growth rates, but compute infrastructure can't scale fast enough to meet demand.

Deep dive

- Anthropic's revenue is growing 3x year-to-date in 2026, faster than historical comparisons like Zoom during the pandemic or Google at IPO, despite being a much larger company where growth typically slows

- Claude's share of GitHub commits doubled from 2% to 4% in January 2026 alone, with projections to reach 20%+ by year-end, indicating real workflow integration beyond hype

- A $100/month coding agent subscription delivers 10-30x ROI for median developers earning $350-500/day if it automates even 10% of routine work

- AI labs face a structural cash flow gap where they must simultaneously fund current inference costs and invest heavily in next-generation models that won't generate revenue for 1-2 years

- Anthropic needs to grow compute capacity from 2.5 gigawatts to 5-6 gigawatts by end of 2026, but long-term contracts in the supply chain make rapid scaling nearly impossible

- Three major bottlenecks constrain growth: HBM memory (30% of infrastructure costs, controlled by SK Hynix and Samsung), datacenter energy (grids can't deliver power fast enough), and semiconductor fab capacity (limited by TSMC factories and ASML lithography machines)

- ASML produces only ~50 EUV lithography machines per year at $350M each, and Nvidia has locked up 70% of TSMC's 3-nanometer production capacity through advance contracts

- Hyperscalers (Google, Amazon, Microsoft, Meta) are spending $105-200 billion annually on infrastructure, creating an $80 billion capital expenditure requirement to support $30 billion in AI lab revenue

- The supply chain's reliance on long-term contracts to manage bubble risk means the entire value chain cannot react quickly to unexpected demand surges like the coding agent boom

- Energy constraints can be temporarily addressed with industrial gas turbines and generators, but semiconductor and skilled labor shortages (especially electricians) cannot be solved by throwing money at the problem

- AI labs will likely respond by cutting usage limits, implementing time-based pricing tiers, and raising subscription prices potentially to $1,000+ for power users where the ROI still justifies the cost

Decoder

- HBM (High Bandwidth Memory): Expensive memory technology used in GPUs that provides much faster data transfer than standard RAM, reducing GPU idle time during processing

- CoWoS (Chip-on-Wafer-on-Substrate): Advanced packaging technology used in final chip-to-module assembly that became a bottleneck in 2023

- EUV scanners: Extreme ultraviolet lithography machines made exclusively by ASML that etch circuits onto silicon wafers for modern chips, costing ~$350M each

- Hyperscalers: Major cloud infrastructure providers (Google, Amazon, Microsoft, Meta) that build massive datacenters and rent compute capacity

- Inference: Running a trained AI model to generate outputs, as opposed to training the model initially (inference is what users pay for when using ChatGPT or Claude)

- 3-nanometer process: Current generation semiconductor manufacturing technology that determines how small and efficient chip transistors can be made

Original article

The World Can't Keep Up With AI Labs

Late last year a new AI psychosis kicked off. This time it was coding agents.

People started saying this is a new era in programming, blah blah blah.

Karpathy tweet, late winter

A few months later, we've got more than just claims. We've got numbers. And they say something unusual is happening in the market.

Coding agents are the first AI product people are paying for at volume and regularly. Because it directly speeds up their work. It's too early to claim businesses are replacing whole processes with agents across the board. But compute demand has started growing faster than anyone can build it out.

Here's why this moment is different, why nobody's ready, and what I took from it personally.

The Numbers

OpenAI and Anthropic might go for an IPO soon. That's why they're eagerly posting how fast their revenue is growing.

And it's a ton of money.

Anthropic is up 3x since the start of the year. And they're already a big company. This is impressive, because the bigger you are, the harder it is to keep growing at the same pace.

OpenAI on the left, Anthropic on the right.

Even during past boom moments, nobody hit numbers like these (with a caveat, see below). Zoom during the pandemic, Google at IPO, Coinbase cashing in on commissions during the crypto hype. These are companies 5-10x smaller than Anthropic, in special situations, and they still grew slower!

The best growth years for big companies. Only ones that were already large. Revenue measured at start vs end of year.

The caveat. First, vaccine makers during the pandemic were also up there. Second, Anthropic's numbers are a projection for the rest of the year based on early data. And they count things a bit differently than OpenAI. None of that changes my conclusion, which is...

Cash is a solid tell for real demand for agentic systems.

Last year when a bunch of people suddenly figured out ChatGPT could generate cool images, that didn't translate into serious money.

Meanwhile, in January alone, Claude Code commits on GitHub (in publicly accessible repos) went from 2% to 4%. If that sounds small, keep in mind it's one month, and that's without Codex, Copilot, or Devin. By end of year Dylan Patel forecasts Claude hitting 20%+.

Claude commits on GitHub.

Even if a $100 subscription only automates a small slice of the work, that's nothing compared to a developer's salary. For a median developer at $350-500 a day, the subscription has 10-30x ROI if it handles just the simplest, most routine 10% of their work.
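A quick way to check that arithmetic, as a sketch. The 21 working days per month and the `monthly_roi` helper are assumptions, not figures from the article; the daily rates, the $100 subscription, and the 10% share are the ones quoted above.

```python
def monthly_roi(daily_rate: float, automated_fraction: float,
                subscription: float = 100.0, working_days: int = 21) -> float:
    """Value of automated work per month divided by the subscription price."""
    value = daily_rate * working_days * automated_fraction
    return value / subscription


low = monthly_roi(350, 0.10)
high = monthly_roi(500, 0.10)
print(f"{low:.2f}x to {high:.2f}x")
# prints "7.35x to 10.50x"
```

Under these assumptions, a 10% share lands near the bottom of the quoted 10-30x range; the upper end would correspond to a larger automated share (about 29% at $500 a day) or more paid days per month.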

There's plenty to argue with here.

Let me even lay out the weak spots in my own logic.

So their revenue is growing, fine—the labs are still unprofitable as businesses. They have every incentive to pump the hype to pull in the most risk-tolerant companies. The ones paying are early enthusiasts, not big companies. And enthusiasts come and go. Plenty of bubbles have popped exactly this way.

Agents are unstable and still randomly screw up. Who's to blame when things go wrong? You can't replace humans yet, because serious businesses care about reliability. And where do senior engineers come from without juniors if you stop hiring?

Agents only handle a narrow set of tasks well. Even if writing code is faster, shipping a product still gets bottlenecked by gathering requirements, architecture, review, testing, and our beloved stakeholder Zoom calls and compliance.

I decided at some point you have to commit and pick a side, even without conclusive evidence.

The finish line can be moved forever. There was a time when reasoning was completely out of reach for ML models. Same for decent image generation, or speech that didn't sound like a robot. There was a time nobody believed machines would learn to play Go. You get the idea.

Metaphor from Tegmark's Life 3.0. Computers gradually learn harder and harder tasks. Over time there's less and less they can't do. Like water filling a map from the bottom up.

Ilya Sutskever, back when he was still at OpenAI, often mentioned an internal meme—Feel the AGI.

He was one of the first to believe deep learning would gradually change our lives. Yes, there's a lot we don't know, but everything keeps moving in that direction, and that matters. Everyone gets it at their own moment. When a neural net does something you usually do yourself, manually, that's a special feeling.

I've lost count of how many of those moments I've had in 10 years of following neural nets. So I'm not interested in the bubble-or-not debate anymore. I'm interested in watching the water level rise.

Personally, I have enough evidence that agents can now do valuable work that companies are willing to pay for.

And the thing is, demand has plenty of room to grow. Agents often don't work out of the box. You have to adapt to them, and the fastest and most curious people do that best. Everyone else will catch up bit by bit.

The Industry Isn't Ready For This

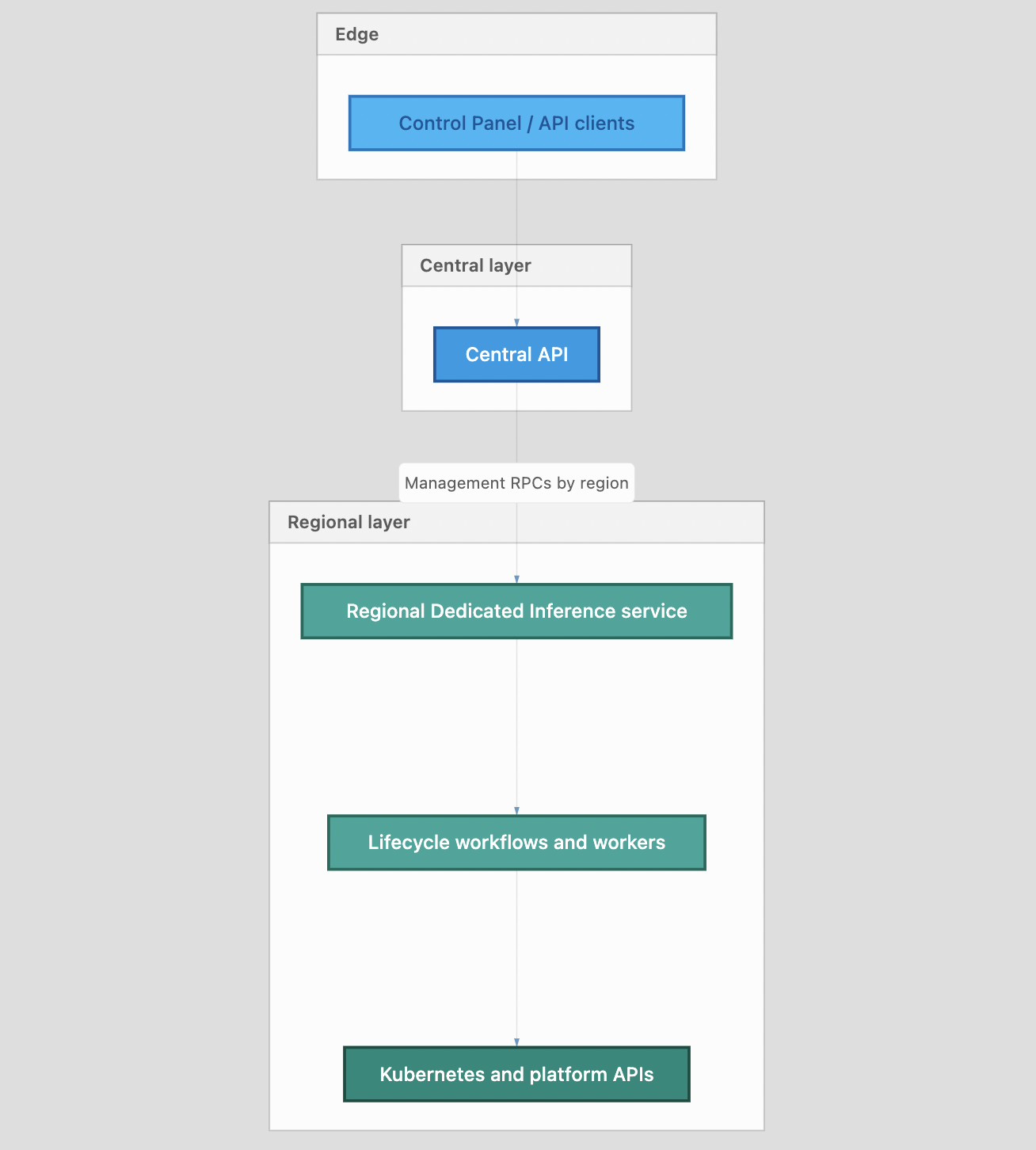

To avoid talking about "the industry" in the abstract, let me split it into 3 layers.

- AI labs make models. OpenAI, Anthropic, DeepMind.

- Hyperscalers build datacenters. Google, Amazon, Microsoft, Meta.

- Chipmakers make chips. Nvidia, TSMC, ASML.

And at every layer, companies are scared.

People online love talking about bubbles. Turns out, all these companies are well aware bubbles happen. And to avoid going bankrupt, each one is cooking up its own workaround.

Dario Amodei says he builds the company's plans off a pessimistic revenue scenario. Funny thing is, this year they're already beating that by 1.5x. And only 3 months of the year have gone by. They're beating the optimistic scenario too.

Dwarkesh asked him straight up in an interview: why? Dario genuinely believes in massive future upside from AI. He writes long essays about it, pitches a country of geniuses in a datacenter. And yet he doesn't want to bet everything on that future.

Dario says it's risky because of a cash flow gap in the business model.

Here's how it works. They provide neural nets to users. They pay hardware owners for inference and make money from subscriptions and APIs. In parallel, they pour money into research on the next generation model. Which won't start making money for another year or two.

They regularly spend more than half of revenue on research.

You're not just balancing income and expenses—you're also balancing investment in future growth. If you invest big and the growth doesn't show up, you're in serious trouble.

Anthropic has been running in this mode for three years straight. Growing 10x every year. Dario figured 2026 would be when it ends. Because the bigger you are, the harder it gets. You are gonna slow down at some point.

What he didn't mention in the interview is that their margins are growing slower than forecast. Costs are growing multiple times faster than they'd planned.

Dario says he wants to push the company into profitability in a few years. To do that they need to improve margins. That means slowing growth and investing conservatively, only on the most efficient things.

The logic adds up. But slowing down isn't really working. They look ready to 10x again this year. But the resources to support that aren't there.

Anthropic doesn't have enough compute for this many power users.

They rent GPUs from hyperscalers. And they can't just walk into a datacenter and ask for more. Because the datacenter owner is also exposed to bubble risk. So capacity is booked out in advance.

For Anthropic to make $30B a year, someone had to spend $80B on infrastructure. Betting it would pay off in a few years.

Amazon will spend around $200B this year, Google $180B, Meta $125B, Microsoft $105B. That's a setup for trillions in economic value in the coming years.

And a cash flow gap risk if the value doesn't materialize.

The industry is one long value chain. Everyone in it tries to lower their own risk by locking expectations into contracts. Which reduces the whole chain's ability to react to surprises. Like the sudden arrival of coding agents.

So every year labs hit some new bottleneck. And constraints keep sliding further upstream, toward players further from the end user. Because their risks are higher and their contracts are even less flexible.

A New Bottleneck Every Year

In 2023 everyone was chasing GPUs. More specifically, TSMC factories didn't have enough capacity for the final chip-to-module assembly (CoWoS). In 2024 came the HBM memory shortage for those same modules. In 2025 GPUs got better, but datacenter buildout became limited by power supply. In 2026 it turned out even when you have the power, the US grid can't deliver it to datacenters at the volume needed.

1 - Memory

Modern models need more memory than before. I mentioned earlier that companies spend hundreds of billions a year on infrastructure. Roughly 30% of that goes to memory.

And they have to buy expensive HBM instead of cheap DDR. Because high bandwidth reduces GPU idle time while memory processes its part.

Turns out memory is the most expensive thing in a GPU.

Memory prices are probably going to keep rising unless someone figures out how to work around it. They could easily go up another 2-3x, because SK Hynix and Samsung control 90% of the market. And memory demand is only growing.

2 - Energy and Datacenters

xAI proved datacenters can be built pretty fast.

But they eat power like a small city. And when such a thing suddenly shows up in some region within six months, the electricity grid just can't handle that.

Surprisingly, Dylan Patel isn't that worried about energy. New power plants, transformer stations, and plain old transmission towers take a long time to build. But while the grid catches up to the new load, you can power datacenters off industrial gas turbines. Literally roll up to the datacenter with a dozen trailers full of generators and you're good (though people are starting to worry that this is far from clean energy).

There are also piston engines, solar with batteries, hydrogen reactors, marine ship engines… Basically, every trick the fuel industry has invented in its entire history. Together with more efficient grid usage, that can add up to hundreds of gigawatts.

Right now GPUs alone consume 13GW. Add the rest of the datacenter and you can multiply by 2.

The blocker for building datacenters and reactors fast is a shortage of skilled labor, especially electricians.

So, expensive and labor-intensive. But turns out it's still easier than the semiconductor supply chain.

3 - Semiconductors

There are factories (mostly TSMC) that assemble GPUs of a specific era (based on designs from Nvidia or Google). For example, on the 3-nanometer process.

And there just aren't enough factories built.

This can't be fixed quickly because these are some of the most complex industrial facilities on the planet. Building one takes 2-3 years and a pile of specialized equipment and chemistry.

The hardest piece is the lithography machines (EUV scanners). They're needed to etch chips onto wafers. The wafers then get paired with memory into modules, and that's how you get a GPU.

These machines cost ~$350M each. Only one company from the Netherlands makes them—ASML. Around 50 machines a year.

The machine.

By a rough estimate, by 2030 there will be around 700 of them worldwide. That's on the order of 200 gigawatts of compute. And at the end of 2025 we were using ~27 gigawatts. Note that that's before the agent hype of early 2026.

So there's room to grow, but the shortage will be permanent—bottlenecked by factory construction, wafers, and lithography machines.

These are the kinds of constraints you can't just throw money at, unlike memory and datacenter energy.

You can see it clearly in Google's behavior.

They have their own chip designs. And they still buy a quarter of their capacity from Nvidia. They'd love to make their own, they just can't.

The share is dropping, but it's still a lot, considering their own chips are better!

All chips are assembled at TSMC factories to someone else's designs. And Google and Amazon (who also have their own designs) slept through the moment when Jensen Huang locked in contracts for 70% of 3-nm capacity. That's great for TSMC—they're at the end of the production chain and need stability.

Nvidia is also living the dream, selling cards at 6x production cost.

And Google even sold its own capacity to Anthropic through GCP. What a company.

So What?

So, the industry isn't ready for the agent boom.

Because it came on too suddenly. To a market where what ultimately matters is long-term contracts on complex chip-making infrastructure.

Anthropic right now has 2.5 gigawatts of compute, and by the end of the year they need 5-6. The only way to get that much is the "Other" category. CoreWeave, Bedrock, Vertex, Foundry. Scraps from anyone whose capacity is still available, at premium prices.

And they want to become a profitable company, so they can't afford to burn cash.

Hence the bad news.

The ones who'll probably suffer are us.

The most obvious move is for them to just cut limits and raise prices.

The other week they moved OpenClaw onto the API. And they said so in a nice and honest way. Sorry guys. We're tightening belts, here's $20 as an apology for the inconvenience.

They also rolled out different tiers depending on time of day. I've already run into it a couple of times, when Claude just ran out of capacity. During "off-peak" hours, under pressure from people optimizing for discounted tokens.

Denied.

I pulled two takeaways from this for myself.

1 - Don't put all your eggs in one basket.

For example, when building a skill, make it work on any model. I'm obsessed with Claude, but OpenAI and Google are in way better shape on compute access.

So I've learned to swap models depending on the task. I pay the minimum subscription to every lab. And when the limit runs out, I just switch models.
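One way to keep a workflow model-agnostic is to hide each provider behind the same small interface and fall through to the next one when a limit is hit. The Python sketch below uses stub functions in place of real API clients; `LimitReached`, `complete_with_fallback`, and the provider names are all hypothetical.

```python
from typing import Callable, Sequence


class LimitReached(Exception):
    """Raised by a provider wrapper when its usage limit is exhausted (hypothetical)."""


Provider = tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first that works."""
    for name, call in providers:
        try:
            return name, call(prompt)
        except LimitReached:
            continue  # this provider is out of quota, try the next one
    raise RuntimeError("all providers are out of capacity")


# Stub providers standing in for real API clients.
def primary(prompt: str) -> str:
    raise LimitReached("quota used up")


def backup(prompt: str) -> str:
    return f"echo: {prompt}"


result = complete_with_fallback("hi", [("primary", primary), ("backup", backup)])
print(result)
# prints ('backup', 'echo: hi')
```

Real wrappers would translate each vendor's rate-limit error into `LimitReached`, so the calling code never depends on a specific lab's SDK.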

I'm not using Chinese open-source. Don't use Deepseek, for the love of god.

2 - Get anxious about not making money off AI.

Neural nets aren't a way for me to make more money. They're on my expense sheet, and they pay for themselves by giving me more options and more time.

But if they roll out some $1000 tier, I won't be able to pull that off. Right now that sounds absurd. But remember the example with a real person's salary. As long as $1000 of spend brings in $5000 of profit, you're winning.

And whoever can't pull that off will be stuck on the free tier watching ads.

Researchers demonstrate that image generation models can serve as generalist vision systems by reframing perception tasks like segmentation and depth estimation as image generation problems.

Deep dive

- The research challenges the traditional computer vision paradigm where separate models are trained for different tasks like segmentation, depth estimation, and object detection

- Vision Banana achieves state-of-the-art results by converting vision tasks into image generation problems—outputting segmentation masks and depth maps as generated RGB images

- The model beats or matches specialized systems including Segment Anything Model 3 for segmentation and Depth Anything for metric depth estimation, despite being a generalist

- Built through lightweight instruction-tuning of Nano Banana Pro on a mixture of original image generation data plus a small amount of vision task data

- The key insight mirrors the LLM revolution: just as language generation pretraining gave models emergent understanding capabilities, image generation pretraining provides powerful general visual representations

- The instruction-tuning approach preserves the base model's image generation capabilities while adding perception abilities

- Works across both 2D and 3D vision understanding tasks, demonstrating true generalist capabilities

- The unified interface of image generation for all vision tasks parallels how text generation became the universal interface for language understanding and reasoning

- Results suggest that the ability to generate visual content inherently requires understanding visual content, validating a long-standing conjecture in computer vision

- The paper proposes that generative vision pretraining should take a central role in building foundational vision models going forward

- This approach eliminates the need for task-specific architectures and output layers that have dominated computer vision for decades

Decoder

- Instruction-tuning: Training a pretrained model on task-specific examples with instructions, similar to fine-tuning but focused on teaching the model to follow diverse commands

- Zero-shot: A model's ability to perform tasks it wasn't explicitly trained on, by generalizing from its pretraining

- SOTA: State-of-the-art, the best currently available performance on a benchmark

- SAM (Segment Anything Model): Meta's specialized model for image segmentation that can identify and mask objects

- Metric depth estimation: Predicting actual distance measurements from the camera to objects in a scene, not just relative depth ordering

- Nano Banana Pro (NBP): Google's image generation model that serves as the base for Vision Banana

Original article

Image Generators are Generalist Vision Learners

Recent works show that image and video generators exhibit zero-shot visual understanding behaviors, in a way reminiscent of how LLMs develop emergent capabilities of language understanding and reasoning from generative pretraining. While it has long been conjectured that the ability to create visual content implies an ability to understand it, there has been limited evidence that generative vision models have developed strong understanding capabilities. In this work, we demonstrate that image generation training serves a role similar to LLM pretraining, and lets models learn powerful and general visual representations that enable SOTA performance on various vision tasks. We introduce Vision Banana, a generalist model built by instruction-tuning Nano Banana Pro (NBP) on a mixture of its original training data alongside a small amount of vision task data. By parameterizing the output space of vision tasks as RGB images, we seamlessly reframe perception as image generation. Our generalist model, Vision Banana, achieves SOTA results on a variety of vision tasks involving both 2D and 3D understanding, beating or rivaling zero-shot domain-specialists, including Segment Anything Model 3 on segmentation tasks, and the Depth Anything series on metric depth estimation. We show that these results can be achieved with lightweight instruction-tuning without sacrificing the base model's image generation capabilities. The superior results suggest that image generation pretraining is a generalist vision learner. It also shows that image generation serves as a unified and universal interface for vision tasks, similar to text generation's role in language understanding and reasoning. We could be witnessing a major paradigm shift for computer vision, where generative vision pretraining takes a central role in building Foundational Vision Models for both generation and understanding.
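To illustrate what parameterizing a vision task's output as an RGB image can look like, here is a small Python sketch that encodes a segmentation mask as colors and decodes a (possibly noisy) generated image back to class IDs by nearest palette color. The abstract does not specify the paper's actual encoding; the palette, `mask_to_rgb`, and `rgb_to_mask` are assumptions for illustration only.

```python
# Hypothetical palette mapping class IDs to RGB colors.
PALETTE = {0: (0, 0, 0), 1: (255, 0, 0), 2: (0, 255, 0)}


def mask_to_rgb(mask: list[list[int]]) -> list[list[tuple[int, int, int]]]:
    """Encode a class-ID mask as an RGB image (the training target)."""
    return [[PALETTE[class_id] for class_id in row] for row in mask]


def rgb_to_mask(image: list[list[tuple[int, int, int]]]) -> list[list[int]]:
    """Decode an RGB image back to class IDs using the nearest palette color."""
    def nearest(pixel: tuple[int, int, int]) -> int:
        return min(
            PALETTE,
            key=lambda c: sum((p - q) ** 2 for p, q in zip(pixel, PALETTE[c])),
        )
    return [[nearest(pixel) for pixel in row] for row in image]


mask = [[0, 1], [2, 1]]
image = mask_to_rgb(mask)
image[0][1] = (250, 10, 5)  # simulate slight color noise from a generator
print(rgb_to_mask(image))
# prints [[0, 1], [2, 1]]
```

Nearest-color decoding is what lets a generative model's imperfect pixel values still map to discrete labels.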

Stash is an open-source tool that gives AI agents persistent memory across sessions, solving the problem of LLMs starting every conversation from scratch.

Deep dive

- Uses Postgres with pgvector as the underlying storage engine for vector-based memory retrieval

- Implements an 8-stage consolidation pipeline that progressively refines raw observations: episodes → facts → relationships → patterns → wisdom

- Each consolidation stage only processes new data since the last run, making it efficient for continuous operation

- Includes advanced features like causal link analysis, goal tracking, failure pattern recognition, hypothesis verification, and confidence decay over time

- Runs as an MCP server with background consolidation, meaning the memory processing happens automatically without blocking agent interactions

- Works with a broad range of AI platforms including Claude Desktop, Cursor, Windsurf, Cline, Continue, OpenAI Agents, Ollama, and OpenRouter

- Self-hosted design means you maintain control over your agent's memory data rather than relying on third-party services

- Apache 2.0 licensed, allowing commercial use and modification
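The list above mentions confidence decay over time without saying how it is computed. One common way to model it is exponential decay with a half-life, sketched below. The function name, the 30-day half-life, and the exponential form are all assumptions, not Stash's documented behaviour.

```python
import math

def decayed_confidence(confidence, age_days, half_life_days=30.0):
    """Assumed schedule: confidence halves every `half_life_days`."""
    return confidence * math.pow(0.5, age_days / half_life_days)

# A fact stored with confidence 0.8, revisited at different ages.
for age in (0, 30, 90):
    print(age, round(decayed_confidence(0.8, age), 3))
```

With a schedule like this, a memory that is never reinforced fades gradually rather than being deleted at a fixed cutoff.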

Decoder

- MCP: Model Context Protocol, a standard interface that allows AI agents to connect to external tools and data sources

- pgvector: PostgreSQL extension for storing and querying vector embeddings, enabling semantic similarity search

- Consolidation pipeline: Multi-stage process that transforms raw data into increasingly abstract and useful knowledge structures

Original article

Stash

Your AI has amnesia. We fixed it.

Every LLM starts every conversation from zero. Stash gives your agent persistent memory — it remembers, recalls, consolidates, and learns across sessions. No more explaining yourself from scratch.

Open source. Self-hosted. Works with any MCP-compatible agent.

Quick Start

git clone https://github.com/alash3al/stash.git

cd stash

cp .env.example .env # edit with your API key + model

docker compose up

That's it. Postgres + pgvector, migrations, MCP server with background consolidation: all in one command.

What It Does

Stash is a cognitive layer between your AI agent and the world. Episodes become facts. Facts become relationships. Relationships become patterns. Patterns become wisdom.

An 8-stage consolidation pipeline turns raw observations into structured knowledge — facts, relationships, causal links, goal tracking, failure patterns, hypothesis verification, and confidence decay. Each stage only processes new data since the last run.
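"Each stage only processes new data since the last run" is typically implemented with a per-stage cursor (a high-water mark). The sketch below shows the idea in memory. The `Stage` class, its fields, and the row shape are hypothetical and are not taken from the Stash codebase.

```python
from dataclasses import dataclass, field

@dataclass
class Stage:
    """One consolidation stage that remembers how far it has read (hypothetical)."""
    name: str
    cursor: int = 0                        # id of the last input row already processed
    output: list = field(default_factory=list)

    def run(self, rows):
        """Process only rows whose id is greater than the stored cursor."""
        fresh = [r for r in rows if r["id"] > self.cursor]
        for r in fresh:
            self.output.append({"id": r["id"], "text": f"{self.name}({r['text']})"})
        if fresh:
            self.cursor = max(r["id"] for r in fresh)
        return len(fresh)

episodes = [
    {"id": 1, "text": "user prefers Go"},
    {"id": 2, "text": "build failed on arm64"},
]
facts = Stage("fact")
first = facts.run(episodes)       # both rows are new
episodes.append({"id": 3, "text": "user switched to Rust"})
second = facts.run(episodes)      # only row 3 is new
print(first, second, facts.cursor)
```

In a Postgres-backed system the cursor would more likely be a persisted column or timestamp, so that a restarted worker resumes where it stopped.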

Works with Claude Desktop, Cursor, Windsurf, Cline, Continue, OpenAI Agents, Ollama, OpenRouter — anything MCP.

License

Apache 2.0

A comprehensive technical review of efficient video intelligence in 2026 covers universal vision encoders, adaptive compression for hour-long videos, and on-device tracking at mobile-phone speeds.

Deep dive

- Video understanding has evolved from short-clip action recognition to hour-long reasoning with models small enough for mobile deployment, driven by rethinking compression and taking deployment constraints seriously from the start

- Universal vision encoders like EUPE consolidate what used to require separate models for segmentation, depth, classification, and language alignment into a single <100M parameter backbone through multi-teacher distillation via an intermediate proxy teacher

- The proxy-teacher step matters because direct multi-teacher distillation into small students loses signal when teachers disagree at the feature level; the proxy resolves conflicts first then transfers a coherent feature space

- Token volume is video's fundamental challenge: an hour of 30 FPS video at 224×224 with ViT-B/16 patches (196 tokens per frame) produces about 21 million visual tokens before compression, far exceeding any LLM context window

- Long-form video systems like LongVU use four-stage compression: DINOv2-based temporal redundancy removal (drops ~54% of frames), feature fusion with SigLIP, cross-modal query selection (allocates tokens based on question relevance), and spatial token compression within sliding windows

- Tempo pushes adaptive allocation further with a small VLM acting as query-aware compressor that routes 0.5 to 16 tokens per frame based on relevance, beating GPT-4o and Gemini 1.5 Pro on hour-plus videos at 8K token budgets

- On-device foundation-model tracking became viable in 2024-2025 through aggressive compression: EdgeTAM achieves 16 FPS on iPhone 15 Pro Max with a 2D Spatial Perceiver that compresses per-frame memory while preserving spatial structure

- Most per-frame computation in video tracking is redundant across adjacent frames, so memory-efficient propagation drives production gains more than raw model size reduction

- DepthLM demonstrates that a 3B-parameter VLM with no architecture changes can match dedicated depth specialists through visual prompting (rendering markers on images), intrinsic-conditioned augmentation (unifying focal length), and training on just one labeled pixel per image

- The depth landscape has split into four approaches: dedicated specialists (DepthAnything trained on 62M+ images), diffusion priors (Marigold with strong zero-shot), reconstruction models (VGGT predicting 3D structure jointly), and VLM-based methods that collapse depth and reasoning into one model

- VideoAuto-R1 shows that explicit reasoning often doesn't help for perception-oriented video questions; gating chain-of-thought on confidence reduces average response length 3.3× while maintaining or improving accuracy

- Audio-visual fusion has three architectural paths: encoder stitching (cheap, shallow alignment), native multimodal training (Qwen3-Omni, shares weights across modalities), and benchmark-driven evaluation (EgoAVU shows egocentric audio carries distinct signals from third-person video)

- Video deployment splits into cloud (frontier models, high latency/cost), edge servers (mid-size 3-30B models, eliminates cloud latency), and on-device (zero latency, fully private, tight power budget), with hybrid architectures as the production default

- Quantization recipes have stabilized: W4A16 is default for edge VLMs, NVFP4 unlocks Blackwell-tier hardware, and KV cache quantization matters more for video than text because the cache can dominate memory on long inputs

- ExecuTorch reached production maturity in October 2025 and now powers Meta's on-device AI stack across Instagram, WhatsApp, Quest 3, and Ray-Ban Meta with backends for Apple, Qualcomm, Arm, MediaTek, and Vulkan

- Streaming understanding remains unsolved: current techniques like LongVU assume batch mode where the whole video is available upfront, but continuous-stream mode where video keeps arriving requires memory mechanisms and incremental compression that aren't yet production-ready

- Sub-watt inference for AR glasses is 5-10× away in compute efficiency: today's mobile NPUs do tens of TOPS in tens of milliwatts, but always-on video understanding in a 1-3W envelope that includes all other system processes remains out of reach

- Sparse-event detection (finding three frames out of 86,400 that matter without full inference on all frames) requires hierarchical attention or learned selection; schema-driven extraction over known classes ships commercially, but open-set anomaly detection is unsolved

- Cross-camera reasoning and spatial grounding stability across cuts remain open problems; retrieval over indexed videos works via ANN over embeddings, but joint reasoning across streams and maintaining object identity across cameras and time windows is not yet solved

- The stable architectural patterns are: compress on the temporal axis where redundancy is highest, distill universal encoders from multiple teachers, factorize attention along data structure (spatial within frames, temporal across), treat quantization as default, and gate reasoning on confidence
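The "gate reasoning on confidence" pattern from the list above can be sketched as a small wrapper: answer directly first, and run explicit reasoning only when the direct answer is not confident enough. The function names, the 0.8 threshold, and the stub models are assumptions for illustration; this is not VideoAuto-R1's implementation.

```python
def answer_with_gate(question, direct_answer, reason, threshold=0.8):
    """Return (answer, used_reasoning). `direct_answer` yields (answer, confidence)."""
    answer, confidence = direct_answer(question)
    if confidence >= threshold:
        return answer, False           # short path: no chain-of-thought
    return reason(question), True      # long path: explicit reasoning

# Stub models so the sketch runs on its own.
def stub_direct(question):
    if "colour" in question:
        return "a red car", 0.95
    return "unsure", 0.40

def stub_reason(question):
    return "answer after step-by-step reasoning"

print(answer_with_gate("What colour is the car?", stub_direct, stub_reason))
print(answer_with_gate("Why did the driver stop?", stub_direct, stub_reason))
```

Because perception-style questions tend to clear the threshold, most calls take the short path, which is where the reduction in response length would come from.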

Decoder

- EUPE (Efficient Universal Perception Encoder): A compact vision encoder under 100M parameters that matches domain specialists across image classification, segmentation, depth, and vision-language tasks by distilling from multiple teacher models (DINOv2, SAM, CLIP) through an intermediate proxy teacher

- DINOv2/DINOv3: Self-supervised vision transformers that excel at dense prediction tasks (segmentation, depth, correspondence) by preserving fine-grained spatial structure

- SAM (Segment Anything Model): Foundation model for prompt-driven segmentation; SAM 2 extends to video with memory modules

- LongVU: Long-form video understanding system that uses adaptive token compression (DINOv2 for temporal pruning, cross-modal query selection) to handle hour-long videos efficiently

- Tempo: Query-aware video compressor that routes token budget per-segment based on relevance, achieving strong performance on hour-plus videos at constrained budgets

- EdgeTAM: Efficient tracking model that achieves foundation-model-grade video object tracking at 16 FPS on iPhone 15 Pro Max through aggressive memory compression

- DepthLM: Vision-language model that performs metric depth estimation without specialized architecture, using visual prompting and intrinsic-conditioned augmentation

- VideoAuto-R1: Video reasoning system that gates explicit chain-of-thought reasoning on confidence, activating detailed reasoning only when the initial answer is uncertain

- EgoAVU: Egocentric audio-visual understanding benchmark and dataset for first-person video where audio carries distinct signals (hand-object contact, wearer's voice)

- J&F scores: Jaccard (region similarity) and F-measure (contour accuracy) metrics for video object segmentation

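- W4A16 / W8A8: Quantization schemes named for their weight and activation precision: W4A16 pairs 4-bit weights with 16-bit activations, and W8A8 pairs 8-bit weights with 8-bit activations; both are standard for deploying models on edge devices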

- ExecuTorch: Meta's PyTorch runtime for on-device deployment, reached 1.0 in October 2025, supports streaming inference across mobile and AR platforms

- KV cache: Cached key-value pairs in transformer attention that can dominate memory for long sequences; aggressive quantization (3-4 bits) is critical for video
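The KV cache entry above is easier to judge with numbers. A transformer stores one key and one value tensor per layer, each of size tokens × KV heads × head dimension, so the cache grows linearly with sequence length. The model shape below (32 layers, 8 KV heads, head dimension 128) and the 100,000-token input are hypothetical, chosen only to show the scale.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bits):
    """Keys and values: 2 tensors per layer, each tokens x kv_heads x head_dim."""
    elements = 2 * layers * kv_heads * head_dim * tokens
    return elements * bits // 8

# Hypothetical model shape and a long video-sized context.
shape = dict(layers=32, kv_heads=8, head_dim=128)
tokens = 100_000

fp16 = kv_cache_bytes(tokens, bits=16, **shape)
int4 = kv_cache_bytes(tokens, bits=4, **shape)
print(f"16-bit cache: {fp16 / 1e9:.1f} GB")
print(f" 4-bit cache: {int4 / 1e9:.1f} GB")
```

Under these assumptions the 16-bit cache alone is larger than many edge devices' total memory, which is why cache quantization matters more for long video inputs than for short text prompts.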

Original article

Efficient Video Intelligence in 2026

Five years ago, video understanding mostly meant action recognition on Kinetics-400 or short-clip captioning on MSR-VTT. Today, vision-language models reason about hour-long footage, on-device tracking segments any object at 16 FPS on a phone, and a single 100M-parameter encoder can match domain experts across image understanding, dense prediction, and VLM tasks. The shift came from rethinking what a video model needs to do, and from taking deployment constraints seriously.

This post walks through where efficient video intelligence stands in April 2026, following how a video system processes its input from raw frames through spatial perception, long-form temporal understanding, multimodal fusion and reasoning, and the deployment stack that makes any of it shippable.

A note up front: the post leans heavily on research from my own group, including EUPE, the EfficientSAM / Efficient Track Anything / EdgeTAM compression line, LongVU, Tempo, EgoAVU, VideoAuto-R1, DepthLM, and ParetoQ. I have tried to place each piece against the parallel and competing work in its section, but this is a perspective from inside one research program rather than a neutral survey.

Why Video Is Harder Than Text or Images

Token volume. A single minute of 30 FPS video at 224x224 resolution and ViT-B/16 patches produces 1,800 frames times 196 patches per frame, or 352K visual tokens before any text or audio, and an hour is 21M tokens before compression. No frontier LLM context window absorbs this naively, so every video model has to compress somewhere.
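The arithmetic behind those figures is short enough to check directly. The helper below is a sketch; its name and defaults are illustrative, and it assumes square frames divided evenly into square patches with no extra tokens per frame.

```python
def visual_tokens(seconds, fps=30, image_size=224, patch_size=16):
    """Visual tokens for a clip, before any compression."""
    patches_per_frame = (image_size // patch_size) ** 2   # 14 x 14 = 196
    return seconds * fps * patches_per_frame

per_minute = visual_tokens(60)      # 1,800 frames x 196 patches
per_hour = visual_tokens(3600)      # 108,000 frames x 196 patches
print(f"{per_minute:,} tokens per minute")
print(f"{per_hour:,} tokens per hour")
```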

Information sparsity. Adjacent frames are usually nearly identical, and the interesting events are rare and unevenly distributed. A surveillance camera at 1 FPS over 24 hours produces 86,400 frames, and the question of interest may depend on three of them. Sampling every frame is wasteful, but uniform sampling drops the frames that matter, so adaptive selection is required.
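One simple form of adaptive selection is to keep a frame only when its features differ enough from the last frame that was kept. The sketch below uses cosine similarity on toy two-dimensional features. The 0.95 threshold and the feature vectors are made up, and a real system would compare encoder embeddings rather than hand-written vectors.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def select_frames(features, threshold=0.95):
    """Keep a frame only if it differs enough from the last kept frame."""
    kept = []
    for i, feat in enumerate(features):
        if not kept or cosine(features[kept[-1]], feat) < threshold:
            kept.append(i)
    return kept

features = [
    [1.0, 0.0], [0.99, 0.01], [1.0, 0.02],   # static scene
    [0.0, 1.0],                              # something happens
    [0.01, 1.0],                             # the event continues
]
print(select_frames(features))
```

Comparing against the last kept frame, rather than the immediately preceding one, prevents slow drift from slipping through: a scene that changes gradually will eventually cross the threshold and trigger a new keyframe.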

Multi-modality is intrinsic. Video without audio is half a signal in egocentric, conversational, and many healthcare contexts, even though much surveillance footage is silent and sports broadcast audio is mostly commentary. Video with audio doubles the embedding cost and adds synchronization requirements, and training a native multimodal model is a different problem than bolting an audio adapter onto a vision encoder.

Vision Encoders: From Specialists to Universals