Devoured - April 24, 2026

OpenAI released GPT-5.5 with stronger coding and agentic capabilities, DeepSeek launched V4 models at a fraction of US lab pricing, and Anthropic published a detailed postmortem on three bugs that degraded Claude Code quality between March and April. Kubernetes v1.36 shipped with 70 enhancements including GA user namespaces and volume group snapshots, browsers now support sizes=auto to eliminate manual responsive image calculations, and a critical SSRF in the LMDeploy inference toolkit was weaponized just 12 hours after disclosure.

OpenAI released GPT-5.5, a new language model with enhanced agentic reasoning and tool use that improves coding performance without increasing latency.

Decoder

- Agentic reasoning: The ability of an AI model to autonomously plan, execute multi-step tasks, and make decisions toward goals without constant human guidance

Original article

OpenAI released GPT-5.5 with improved agentic reasoning, tool use, and efficiency, matching prior latency while increasing performance across coding and knowledge tasks.

DeepSeek released its V4 AI model series claiming to match leading US models at a fraction of the cost, intensifying the debate over necessary AI infrastructure spending.

Deep dive

- DeepSeek unveiled V4 Flash and V4 Pro one year after its R1 model triggered market turmoil by demonstrating that competitive AI could be built at far lower costs than US tech giants were spending

- The new models use Hybrid Attention Architecture for improved conversation context retention and support 1 million token context windows, enabling processing of entire codebases or lengthy documents in single prompts

- Pricing undercuts US competitors by 5-10x: $1.74-$3.48 per million tokens versus Anthropic Claude's $3-$15, achieved through Mixture-of-Experts architecture that activates only 37 billion of a trillion total parameters per task

- DeepSeek concedes V4 trails cutting-edge models by 3-6 months but emphasizes its focus on fundamental cost reduction rather than chasing absolute performance benchmarks

- Current computing capacity is severely constrained but expected to expand significantly when Huawei Ascend 950 chip clusters come online in late 2026

- The release boosted Chinese semiconductor stocks (SMIC +10%, Hua Hong +15%) while hurting domestic AI competitors (Zhipu -9%) that lack distribution advantages

- DeepSeek is pursuing its first external funding from Tencent and Alibaba as it scales operations

- Bloomberg Intelligence suggests this won't trigger another "DeepSeek Moment" market disruption but reinforces China's position in cost-efficient AI despite estimated 6-month technical lag

- Both OpenAI and Anthropic have accused DeepSeek of distillation—using their models' outputs to train competing systems—raising intellectual property concerns

- US officials are investigating whether DeepSeek accessed banned Nvidia Blackwell chips for an Inner Mongolia data center, potentially violating export controls

- The cost differential puts pressure on Chinese AI startups like MiniMax and Zhipu that can't match platform companies' distribution reach

- Industry analysts predict performance gaps between models will become imperceptible to users, making cost structure and distribution the decisive competitive factors

Decoder

- Mixture-of-Experts (MoE): Architecture that divides a large model into specialized sub-networks (experts) and activates only a few of them for each input token, drastically reducing computational costs

- Context window: The amount of text an AI model can process simultaneously; 1 million tokens enables handling entire large codebases or documents in one prompt

- Distillation: Training an AI model by using outputs from a more capable model, potentially violating the original model's terms of service

- Token: Basic unit of text processed by AI models, roughly equivalent to a word or word fragment; API pricing is typically measured per million tokens

- Hybrid Attention Architecture: DeepSeek's technique for improving how models maintain context and memory across extended conversations

- Agentic tasks: Complex, multi-step AI operations where the model acts autonomously to achieve objectives

- Open-source model: AI model with publicly released code and weights, allowing anyone to use, modify, inspect, or deploy it

DeepSeek is raising its first funding round at a $20 billion valuation with Tencent and Alibaba competing for stakes, doubling its valuation in just days.

Original article

DeepSeek is in talks for its first funding round at a $20 billion valuation, with Tencent and Alibaba interested. Tencent is seeking a 20% stake, but DeepSeek doesn't want to lose that much control. The valuation surged from $10 billion to $20 billion in days, illustrating significant investor interest.

Anthropic's valuation hit $1 trillion on secondary markets, surpassing OpenAI's $880 billion, driven by share scarcity and surging demand for its Claude Code developer tool.

Decoder

- Secondary market: Platform where investors buy and sell shares of private companies from existing shareholders, separate from official funding rounds where companies raise new capital directly

- Forge Global: Trading platform that facilitates secondary market transactions for private company shares

- Annualized run rate: Current monthly or quarterly revenue projected over a full year to estimate annual performance

Original article

Anthropic just overtook OpenAI with $1 trillion valuation

Anthropic is now valued higher than its main competitor, OpenAI, according to share sales on secondary markets.

The artificial intelligence firm hit a $1 trillion valuation on Forge Global, a financial platform that allows investors to acquire shares from private companies.

The figure is considerably higher than the $380 billion that Anthropic was valued at during a funding round three months ago.

ChatGPT creator OpenAI is currently trading at around $880 billion on Forge Global – roughly equivalent to its $852 billion valuation from its latest funding round.

The inflated value of Anthropic, which owns the Claude chatbot, appears to come from a shortage of available shares, with shareholders reportedly being inundated with unsolicited offers for their stakes.

"Just got offered a $1.05 trillion valuation on my Anthropic shares from a very well known growth fund," Anthropic investor Jesse Leimgruber wrote in a post to X. "Absolutely wild."

Investor interest has been driven by Anthropic's revenue growth, which has risen rapidly amid mass adoption of its Claude Code tool among developers, as well as partnerships with tech giants like Amazon and Palantir.

The firm's annualised run rate rose from $9 billion in late 2025 to $39 billion in March 2026, according to figures seen by Business Insider.

"We receive daily offers, from the ridiculous to the sublime," Bradley Horowitz, a partner at Wisdom Ventures and an early investor in Anthropic, told the publication.

"It's almost less about the return than being able to say they're an Anthropic investor."

Rainmaker Securities CEO Glen Anderson, who received an offer to buy Anthropic shares at a $960 billion valuation, added: "It's been an epic run for Anthropic. Everybody wants to be part of a generational opportunity in AI, and right now, Anthropic is in the pole position."

Some people have even offered to exchange their property for Anthropic shares, according to a post on LinkedIn.

The Independent has reached out to Anthropic and OpenAI for comment.

Anthropic published a detailed postmortem explaining how three separate bugs caused Claude Code quality degradation between March and April 2026, and what they're changing to prevent similar issues.

Deep dive

- On March 4, Anthropic changed Claude Code's default reasoning effort from "high" to "medium" to address complaints about UI freezing from long thinking times, but users reported this made Claude feel less intelligent and the change was reverted April 7

- A March 26 caching optimization intended to reduce costs when resuming idle sessions had a bug that caused it to clear thinking history on every turn instead of just once, making Claude appear forgetful and repetitive

- The caching bug was especially hard to debug because it only affected sessions that had been idle for over an hour, and two unrelated internal experiments masked the issue during testing

- Opus 4.7 was able to catch the caching bug in code review when given full repository context, while Opus 4.6 missed it, leading Anthropic to improve their code review tooling

- On April 16, a system prompt change added strict word limits ("≤25 words between tool calls, ≤100 words in final responses") to combat Opus 4.7's verbosity; a broader evaluation run during the investigation later showed a 3% drop for both Opus 4.6 and 4.7, and the change was reverted on April 20

- The three issues affected different user segments on different timelines, making the aggregate effect look like broad inconsistent degradation that was hard to distinguish from normal feedback variation

- Anthropic is responding by ensuring more internal staff use the exact public build, improving their internal Code Review tool for wider release, and running broader eval suites for every system prompt change

- The company is adding "soak periods" and gradual rollouts for any changes that might trade off against intelligence, and implementing tighter controls on system prompt modifications

- Anthropic created a new @ClaudeDevs account on X to provide detailed explanations of product decisions and reasoning

- As compensation, Anthropic reset usage limits for all Claude Code subscribers on April 23

Decoder

- Reasoning effort: A parameter in Claude that controls how long the model "thinks" before responding, with higher effort producing better outputs but higher latency and token usage

- Prompt caching: An optimization that stores recent prompts to make repeated API calls faster and cheaper by reusing cached input tokens

- Extended thinking: A feature where Claude's internal reasoning process is preserved in conversation history so it can reference why it made previous decisions

- Test-time compute: The computational resources spent during inference when generating responses, as opposed to training time—more thinking at test-time can improve output quality

- Ablations: Experiments where individual components are removed to understand their isolated impact, commonly used in ML to measure what parts of a system contribute to performance

- Evals: Short for "evaluations"—benchmark tests used to measure model performance on specific tasks

Original article

Over the past month, we've been looking into reports that Claude's responses have worsened for some users. We've traced these reports to three separate changes that affected Claude Code, the Claude Agent SDK, and Claude Cowork. The API was not impacted.

All three issues have now been resolved as of April 20 (v2.1.116).

In this post, we explain what we found, what we fixed, and what we'll do differently to ensure similar issues are much less likely to happen again.

We take reports about degradation very seriously. We never intentionally degrade our models, and we were able to immediately confirm that our API and inference layer were unaffected.

After investigation, we identified three different issues:

- On March 4, we changed Claude Code's default reasoning effort from high to medium to reduce the very long latency (enough to make the UI appear frozen) some users were seeing in high mode. This was the wrong tradeoff. We reverted this change on April 7 after users told us they'd prefer to default to higher intelligence and opt into lower effort for simple tasks. This impacted Sonnet 4.6 and Opus 4.6.

- On March 26, we shipped a change to clear Claude's older thinking from sessions that had been idle for over an hour, to reduce latency when users resumed those sessions. A bug caused this to keep happening every turn for the rest of the session instead of just once, which made Claude seem forgetful and repetitive. We fixed it on April 10. This affected Sonnet 4.6 and Opus 4.6.

- On April 16, we added a system prompt instruction to reduce verbosity. In combination with other prompt changes, it hurt coding quality and was reverted on April 20. This impacted Sonnet 4.6, Opus 4.6, and Opus 4.7.

Because each change affected a different slice of traffic on a different schedule, the aggregate effect looked like broad, inconsistent degradation. While we began investigating reports in early March, they were challenging to distinguish from normal variation in user feedback at first, and neither our internal usage nor evals initially reproduced the issues identified.

This isn't the experience users should expect from Claude Code. As of April 23, we're resetting usage limits for all subscribers.

A change to Claude Code's default reasoning effort

When we released Opus 4.6 in Claude Code in February, we set the default reasoning effort to high.

Soon after, we received user feedback that Claude Opus 4.6 in high effort mode would occasionally think for too long, causing the UI to appear frozen and leading to disproportionate latency and token usage for those users.

In general, the longer the model thinks, the better the output. Effort levels are how Claude Code lets users set that tradeoff—more thinking versus lower latency and fewer usage limit hits. As we calibrate effort levels for our models, we take this tradeoff into account in order to pick points along the test-time-compute curve that give people the best range of options. In the product layer, we then choose which point along this curve we set as our default, and that is the value we send to the Messages API as the effort parameter; we then make the other options available via /effort.

In our internal evals and testing, medium effort achieved slightly lower intelligence with significantly less latency for the majority of tasks. It also didn't suffer from the same issues with occasional very long tail latencies for thinking, and it helped maximize users' usage limits. As a result, we rolled out a change making medium the default effort, and explained the rationale via in-product dialog.

Soon after rolling out, users began reporting that Claude Code felt less intelligent. We shipped a number of design iterations to make the current effort setting clearer in order to alert people they could change the default (notices on startup, an inline effort selector, and bringing back ultrathink), but most users retained the medium effort default.

After hearing feedback from more customers, we reversed this decision on April 7. All users now default to xhigh effort for Opus 4.7, and high effort for all other models.

A caching optimization that dropped prior reasoning

When Claude reasons through a task, that reasoning is normally kept in the conversation history so that on every subsequent turn, Claude can see why it made the edits and tool calls it did.

On March 26, we shipped what was meant to be an efficiency improvement to this feature. We use prompt caching to make back-to-back API calls cheaper and faster for users. Claude writes the input tokens to the cache when it makes an API request, then after a period of inactivity the prompt is evicted from cache, making room for other prompts. Cache utilization is something we manage carefully (more on our approach).

The design should have been simple: if a session has been idle for more than an hour, we could reduce users' cost of resuming that session by clearing old thinking sections. Since the request would be a cache miss anyway, we could prune unnecessary messages from the request to reduce the number of uncached tokens sent to the API. We'd then resume sending full reasoning history. To do this we used the clear_thinking_20251015 API header along with keep:1.

The implementation had a bug. Instead of clearing thinking history once, it cleared it on every turn for the rest of the session. After a session crossed the idle threshold once, each request for the rest of that process told the API to keep only the most recent block of reasoning and discard everything before it. This compounded: if you sent a follow-up message while Claude was in the middle of a tool use, that started a new turn under the broken flag, so even the reasoning from the current turn was dropped. Claude would continue executing, but increasingly without memory of why it had chosen to do what it was doing. This surfaced as the forgetfulness, repetition, and odd tool choices people reported.

Because this would continuously drop thinking blocks from subsequent requests, those requests also resulted in cache misses. We believe this is what drove the separate reports of usage limits draining faster than expected.
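The failure mode described above can be illustrated with a toy simulation. This is a minimal sketch, not Anthropic's code: `Session`, `build_request`, and the `sticky` switch are hypothetical names, and the history is just a list of strings. It contrasts the intended behavior (prune old thinking once, on the first request after the idle threshold) with the buggy behavior (keep pruning on every later turn).

```python
from dataclasses import dataclass, field

IDLE_THRESHOLD_S = 3600  # one hour, per the postmortem


@dataclass
class Session:
    """Hypothetical stand-in for a client session; not a real Claude Code type."""
    sticky: bool                                   # True reproduces the bug
    thinking: list = field(default_factory=list)   # thinking blocks kept so far
    prune_flag: bool = False

    def build_request(self, idle_seconds: float, new_thought: str) -> list:
        """Return the thinking blocks that would be sent with this turn."""
        if idle_seconds > IDLE_THRESHOLD_S:
            self.prune_flag = True
        if self.prune_flag:
            # keep:1 semantics: retain only the most recent thinking block
            self.thinking = self.thinking[-1:]
            if not self.sticky:
                self.prune_flag = False  # intended: prune once, then resume
        sent = list(self.thinking)
        self.thinking.append(new_thought)
        return sent


def run(sticky: bool) -> list:
    """Count the thinking blocks sent on each of seven turns."""
    session = Session(sticky=sticky)
    idle = [0, 0, 0, 4000, 0, 0, 0]  # the fourth turn follows a long idle gap
    return [
        len(session.build_request(gap, f"thought-{i}"))
        for i, gap in enumerate(idle)
    ]


intended = run(sticky=False)
buggy = run(sticky=True)
print("intended:", intended)
print("buggy:   ", buggy)
```

In the intended run the history grows again after the single prune; in the buggy run every request after the idle gap carries only one thinking block, which matches the described symptom of Claude losing track of why it made earlier choices.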

Two unrelated experiments made it challenging for us to reproduce the issue at first: an internal-only server-side experiment related to message queuing, and an orthogonal change in how we display thinking that suppressed this bug in most CLI sessions, so we didn't catch it even when testing external builds.

This bug was at the intersection of Claude Code's context management, the Anthropic API, and extended thinking. The changes it introduced made it past multiple human and automated code reviews, as well as unit tests, end-to-end tests, automated verification, and dogfooding. Combined with this only happening in a corner case (stale sessions) and the difficulty of reproducing the issue, it took us over a week to discover and confirm the root cause.

As part of the investigation, we back-tested Code Review against the offending pull requests using Opus 4.7. When provided the code repositories necessary to gather complete context, Opus 4.7 found the bug, while Opus 4.6 didn't. To prevent this from happening again, we are now landing support for additional repositories as context for code reviews.

We fixed this bug on April 10 in v2.1.101.

A system prompt change to reduce verbosity

Our latest model, Claude Opus 4.7, has a notable behavioral quirk relative to its predecessor: as we wrote about at launch, it tends to be quite verbose. This makes it smarter on hard problems, but it also produces more output tokens.

A few weeks before we released Opus 4.7, we started tuning Claude Code in preparation. Each model behaves slightly differently, and we spend time before each release optimizing the harness and product for it.

We have a number of tools to reduce verbosity: model training, prompting, and improving thinking UX in the product. Ultimately we used all of these, but one addition to the system prompt caused an outsized effect on intelligence in Claude Code:

"Length limits: keep text between tool calls to ≤25 words. Keep final responses to ≤100 words unless the task requires more detail."

After multiple weeks of internal testing and no regressions in the set of evaluations we ran, we felt confident about the change and shipped it alongside Opus 4.7 on April 16.

As part of this investigation, we ran more ablations (removing lines from the system prompt to understand the impact of each line) using a broader set of evaluations. One of these evaluations showed a 3% drop for both Opus 4.6 and 4.7. We immediately reverted the prompt as part of the April 20 release.
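A line-level ablation of the kind described here can be sketched as a leave-one-out loop. This is an illustrative sketch under stated assumptions: `ablate_prompt_lines` and `toy_eval` are hypothetical, the prompt lines other than the length limit are invented, and the toy scorer simply hard-codes a 3-point penalty to mirror the reported drop. A real setup would call an actual evaluation suite per model.

```python
from typing import Callable, Dict, List


def ablate_prompt_lines(
    lines: List[str],
    evaluate: Callable[[str], float],
) -> Dict[str, float]:
    """Score change from removing each line; positive means removal helped."""
    baseline = evaluate("\n".join(lines))
    deltas = {}
    for i, line in enumerate(lines):
        without = lines[:i] + lines[i + 1:]
        deltas[line] = evaluate("\n".join(without)) - baseline
    return deltas


def toy_eval(prompt: str) -> float:
    """Toy scorer (assumption): a length-limit line costs 3 points out of 100."""
    return 97.0 if "Length limits" in prompt else 100.0


prompt_lines = [
    "You are a coding assistant.",  # invented for the example
    "Length limits: keep text between tool calls to <=25 words.",
    "Prefer small, reviewable diffs.",  # invented for the example
]
deltas = ablate_prompt_lines(prompt_lines, toy_eval)
for line, delta in deltas.items():
    print(f"{delta:+.1f}  {line}")
```

Leave-one-out only measures each line in isolation; interactions between lines (the post notes the regression appeared in combination with other prompt changes) would need pairwise or grouped ablations.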

Going forward

We are going to do several things differently to avoid these issues: we'll ensure that a larger share of internal staff use the exact public build of Claude Code (as opposed to the version we use to test new features); and we'll make improvements to our Code Review tool that we use internally, and ship this improved version to customers.

We're also adding tighter controls on system prompt changes. We will run a broad suite of per-model evals for every system prompt change to Claude Code, continuing ablations to understand the impact of each line, and we have built new tooling to make prompt changes easier to review and audit. We've additionally added guidance to our CLAUDE.md to ensure model-specific changes are gated to the specific model they're targeting. For any change that could trade off against intelligence, we'll add soak periods, a broader eval suite, and gradual rollouts so we catch issues earlier.
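One common way to implement a gradual rollout is deterministic percentage bucketing. The sketch below is an assumption about how such gating could work, not a description of Anthropic's tooling; `in_rollout`, `user_id`, and `change_id` are hypothetical names.

```python
import hashlib


def in_rollout(user_id: str, change_id: str, percent: float) -> bool:
    """Return True if this user falls inside the first `percent` of buckets.

    Hashing change_id together with user_id gives each change an
    independent, stable assignment of users to buckets.
    """
    digest = hashlib.sha256(f"{change_id}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


users = [f"user-{n}" for n in range(1000)]
at_5 = {u for u in users if in_rollout(u, "verbosity-prompt", 5)}
at_50 = {u for u in users if in_rollout(u, "verbosity-prompt", 50)}
print(len(at_5), len(at_50))
```

Because a user's bucket never changes, raising the percentage only adds users, so anyone exposed during an early soak period stays exposed as the rollout widens.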

We recently created @ClaudeDevs on X to give us the room to explain product decisions and the reasoning behind them in depth. We'll share the same updates in centralized threads on GitHub.

Finally, we'd like to thank our users: the people who used the /feedback command to share their issues with us (or who posted specific, reproducible examples online) are the ones who ultimately allowed us to identify and fix these problems. Today we are resetting usage limits for all subscribers.

We're immensely grateful for your feedback and for your patience.

OpenAI released an open-weight model that detects and removes personally identifiable information from text, enabling developers to run privacy filtering locally.

Decoder

- PII: Personally Identifiable Information like names, addresses, phone numbers, and email addresses that can identify individuals

- Open-weight model: A model whose trained parameters are publicly available, allowing anyone to download and run it locally (unlike a fully open-source release, the training code and data are not necessarily included)

Original article

OpenAI released a lightweight open-weight model for detecting and redacting PII in text, designed for fast, local, context-aware privacy filtering workflows.

Amazon researchers open-sourced a method to expand Mixture-of-Experts language models during training by duplicating experts, cutting training costs by 32% while maintaining performance.

Deep dive

- Demonstrated on a 7B→13B parameter expansion (1B active) with 32→64 experts pre-trained on 380B tokens, matching fixed-size baseline quality (56.4 vs 56.7 avg accuracy across 11 benchmarks, 1.263 vs 1.267 validation loss)

- Reduces training cost by ~32% of GPU hours (27,888 vs 41,328 hours) when training from scratch, or ~67% when starting from an existing checkpoint

- Uses gradient-based importance scores to determine which experts to duplicate more frequently—high-utility experts receive more copies

- Router weights are extended with small bias perturbations to seed routing diversity among duplicate experts

- Stochastic gradient diversity and loss-free load balancing during continued pre-training break symmetry and drive specialization

- Top-K routing remains fixed throughout so per-token inference cost is unchanged

- Generalizes to full MoE architectures with 256→512 experts and TopK=8, achieving 93-95% gap closure across scales from 154M to 1B parameters

- Released under CC-BY-NC-4.0 license (academic/research use only) and integrates with NeMo/Megatron-LM via runtime monkey-patching with no fork required

- Supports multiple duplication strategies including utility-based selection (gradient norm, saliency, Fisher information), exact copy, copy with noise, and SVD perturbation

- Includes 98 tests covering all methods, strategies, and integration scenarios

Decoder

- MoE (Mixture-of-Experts): Neural network architecture with multiple specialized sub-networks (experts) where a router selects which experts process each input

- Top-K routing: Only the K highest-scoring experts are activated for each token, keeping inference cost fixed regardless of total expert count

- Active parameters: The subset of model parameters actually used during inference, versus total parameters available in the model

- Continued pre-training (CPT): Resuming training on a modified model architecture to specialize duplicated components

- All-to-all communication: Distributed training pattern where data must be exchanged between all compute nodes, expensive at scale

- Gradient-based importance scores: Metrics like gradient norm or Fisher information that estimate how valuable each expert is for the task

- Load balancing: Ensuring experts receive roughly equal amounts of training data to prevent some from being underutilized
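The Top-K routing entry above can be made concrete with a small sketch. It assumes one common variant (select the K highest router logits, then softmax over just those); real routers differ in where the softmax is applied, and `top_k_route` is a hypothetical helper rather than a Megatron-LM function.

```python
import math
from typing import List, Tuple


def top_k_route(logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)
    exps = [math.exp(logits[i] - peak) for i in top]  # shift for stability
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


logits_8 = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.9]
routes_8 = top_k_route(logits_8, k=2)
# Doubling the expert count leaves the number of active experts at k.
routes_16 = top_k_route(logits_8 + [0.0] * 8, k=2)
print(routes_8)
print(routes_16)
```

The second call shows why per-token compute stays fixed as experts are added: only K experts run for each token, however many exist.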

Original article

Expert Upcycling

Capacity expansion for Mixture-of-Experts models during continued pre-training.

Dwivedi et al., "Expert Upcycling: Shifting the Compute-Efficient Frontier of Mixture-of-Experts" (preprint).

Scaling laws show that MoE quality improves predictably with total expert count at fixed active computation, but training large MoEs from scratch is expensive — memory, gradients, and all-to-all communication all scale with total parameters. Expert upcycling sidesteps this by starting training with a smaller E-expert model and expanding to mE experts mid-training via the upcycling operator:

- Expert replication — each expert is duplicated (high-utility experts receive more copies via gradient-based importance scores).

- Router extension — router weights are copied to new slots with small bias perturbations to seed routing diversity.

- Continued pre-training (CPT) — stochastic gradient diversity and loss-free load balancing break symmetry among duplicates, driving specialization.

Top-K routing is held fixed throughout, so per-token inference cost is unchanged.
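The replication and router-extension steps above can be sketched in plain Python. This is a simplified illustration, not the package's implementation: `upcycle` is a hypothetical function, experts and router rows are plain lists, and the allocation rule (extra copies go round-robin to the top half of experts by importance) is an assumption chosen for brevity.

```python
import random
from typing import List, Tuple

Vector = List[float]


def upcycle(
    experts: List[Vector],
    router_w: List[Vector],
    router_b: Vector,
    importance: Vector,
    m: int = 2,
    bias_noise: float = 0.01,
    seed: int = 0,
) -> Tuple[List[Vector], List[Vector], Vector, List[int]]:
    """Expand E experts to m*E by copying; important experts get the copies."""
    rng = random.Random(seed)
    n_old = len(experts)
    n_extra = (m - 1) * n_old
    ranked = sorted(range(n_old), key=lambda i: importance[i], reverse=True)
    top = ranked[: max(1, n_old // 2)]  # assumed rule: duplicate the top half
    extra_sources = [top[j % len(top)] for j in range(n_extra)]
    sources = list(range(n_old)) + extra_sources

    new_experts = [list(experts[s]) for s in sources]    # expert replication
    new_router_w = [list(router_w[s]) for s in sources]  # weights copied as-is
    # Router extension: only the new slots get a small bias perturbation.
    new_router_b = list(router_b) + [
        router_b[s] + rng.gauss(0.0, bias_noise) for s in extra_sources
    ]
    return new_experts, new_router_w, new_router_b, sources


experts = [[float(i)] * 3 for i in range(4)]
router_w = [[0.1 * i, 0.2] for i in range(4)]
router_b = [0.0] * 4
importance = [0.1, 0.9, 0.5, 0.2]  # illustrative scores, not measured values

new_e, new_w, new_b, sources = upcycle(experts, router_w, router_b, importance)
print(sources)
```

Duplicates start with identical expert and router weights, so the bias noise is what first nudges the router to treat them differently; continued pre-training then has to drive the actual specialization.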

Figure 1: Overview of the expert upcycling procedure.

Key results on a 7B→13B total parameter (1B active) interleaved MoE, pre-trained on 380B tokens:

- The upcycled model (32→64 experts) matches the fixed-size 64-expert baseline across 11 downstream benchmarks (56.4 vs. 56.7 avg accuracy) and validation loss (1.263 vs. 1.267).

- Training cost is reduced by ~32% of GPU hours (27,888 vs. 41,328 hours). When a pre-trained checkpoint already exists (e.g., from a prior training run or a public release), the pre-training cost is already paid and only the CPT phase is needed, bringing savings to ~67%.

- Results generalize to full MoE architectures (256→512 experts, TopK=8) with 93–95% gap closure across scales from 154M to 1B total parameters.
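The ~32% figure follows directly from the two GPU-hour totals reported above; the short check below only reproduces that arithmetic and the reported quality gaps, using the numbers from the list.

```python
upcycled_hours = 27_888
baseline_hours = 41_328

savings = 1 - upcycled_hours / baseline_hours
accuracy_gap = 56.7 - 56.4   # baseline minus upcycled, avg over 11 benchmarks
loss_gap = 1.267 - 1.263     # baseline minus upcycled validation loss

print(f"GPU-hour savings: {savings:.1%}")
print(f"accuracy gap: {accuracy_gap:.1f} points, loss gap: {loss_gap:.3f}")
```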

Figure 2: GPU hours, validation loss, and downstream accuracy for the 7B→13B upcycled model vs. baselines.

Installation

Recommended: NeMo 2.x container

Start from the official NeMo container — PyTorch, Megatron-LM, Transformer Engine, NeMo, Lightning, and omegaconf are all pre-installed.

docker run --gpus all --ipc=host --ulimit memlock=-1 --ulimit stack=67108864 \
  -v /path/to/expert-upcycling:/workspace/expert-upcycling \
  -it nvcr.io/nvidia/nemo:24.09 bash

# Inside the container:
cd /workspace/expert-upcycling
pip install -e .
pip install dacite

Do not use pip install -e ".[nemo]" inside the container; it would conflict with the container's pre-installed NeMo.

From scratch (no NeMo container)

Install dependencies manually, then install the package with the relevant extras:

# Core only (torch + numpy):

pip install -e .

pip install dacite

# With Megatron-LM integration:

pip install -e ".[megatron]"

# Full NeMo entrypoint (installs NeMo, Lightning, omegaconf):

pip install -e ".[nemo]"

Quick Start

Option A: NeMo entrypoint (recommended)

Edit configs/upcycle.yaml to set your model dimensions, then run from the repo root:

# Single GPU
cd /workspace/expert-upcycling
python -m expert_upcycling.entrypoint \
  --config-path=configs --config-name=upcycle \
  resume.restore_config.path=/path/to/base/checkpoint

# Multi-GPU (e.g. 8 GPUs with tensor parallelism)
torchrun --nproc_per_node=8 -m expert_upcycling.entrypoint \
  --config-path=configs --config-name=upcycle \
  resume.restore_config.path=/path/to/base/checkpoint \
  strategy.tensor_model_parallel_size=8

The callback fires on the first optimizer step, doubles the expert count, saves the upcycled checkpoint, and exits. The output path defaults to <input_checkpoint>-upcycled.

Option B: Patch existing training script

import expert_upcycling

expert_upcycling.apply_patches()

# Now TEGroupedMLP has .upcycle_experts() and TopKRouter has .upcycle_router()
# Call them during training at the desired transition point.
# Note: model is typically wrapped; unwrap to reach the decoder:
inner = model
for attr in ("module", "module"):
    if hasattr(inner, attr):
        inner = getattr(inner, attr)

for i, layer in enumerate(inner.decoder.layers):
    if hasattr(layer.mlp, 'experts'):
        selected = layer.mlp.experts.upcycle_experts(optimizer, i, expert_cfg)
    if hasattr(layer.mlp, 'router'):
        layer.mlp.router.upcycle_router(router_cfg, selected)

Option C: Use the model-level API

from expert_upcycling import perform_expert_upcycling

perform_expert_upcycling(
    model, optimizer,
    expert_cfg={"usefulness_metric": "gradient_norm", "selection_strategy": "greedy"},
    router_cfg={"method": "bias_only", "bias_noise_scale": 0.01},
)

Upcycling Strategies

Expert duplication

| Strategy | Description |
|---|---|
| Utility-based (recommended) | Duplicate high-importance experts using gradient-based scores (weight norm, saliency, gradient squared, approx Fisher) |
| copy | Exact duplication (baseline) |
| copy_noise | Duplication + Gaussian noise |
| drop_upcycle | Re-initialize a fraction of columns |
| svd_perturb | SVD decomposition + perturbation |
| + 6 more | See expert_upcycling.config.UpcycleMethod |

Router expansion

| Strategy | Description |
|---|---|
| bias_only (recommended) | Keep weights identical, add noise to bias |
| copy | Exact duplication |
| copy_noise | Duplication + noise |
| + 7 more | See expert_upcycling.config.RouterUpcycleMethod |

Architecture

This package treats Megatron-LM and NeMo as third-party dependencies — no fork required. Upcycling methods are injected at runtime via monkey-patching:

expert-upcycling/                  # pip install -e .
├── expert_upcycling/
│   ├── config.py                  # All enums + dataclasses (no deps)
│   ├── expert_upcycler.py         # Heuristic strategies (torch only)
│   ├── expert_selector.py         # Utility-based selection (torch + numpy)
│   ├── router_upcycler.py         # Router strategies (torch only)
│   ├── optimizer_utils.py         # Optimizer state handling (torch only)
│   ├── patch.py                   # Monkey-patches onto Megatron-LM classes
│   ├── upcycle_model.py           # Model traversal
│   └── entrypoint.py              # NeMo launch script
├── configs/
│   └── upcycle.yaml               # Example config
└── scripts/
    └── run_upcycle.sh             # Example launch script

Running Tests

# CPU tests (no GPU, no Megatron install required)

python tests/test_comprehensive.py # 91 tests: all methods, all strategies

pytest tests/test_integration.py -v # 7 end-to-end integration tests

# GPU test (requires NeMo container + GPU)

python tests/test_entrypoint_gpu.py # real TEGroupedMLP + TopKRouter, 32->64 experts

Citation

@article{dwivedi2025expertupcycling,
  title={Expert Upcycling: Shifting the Compute-Efficient Frontier of Mixture-of-Experts},
  author={Dwivedi, Chaitanya and Gupta, Himanshu and Varshney, Neeraj and Jayarao, Pratik and Yin, Bing and Chilimbi, Trishul and Huang, Binxuan},
  year={2026}
}

License

CC-BY-NC-4.0

This code is being released solely for academic and scientific reproducibility purposes, in support of the methods and findings described in the associated publication. Pull requests are not being accepted in order to maintain the code exactly as it was used in the paper.

Oracle's $300 billion AI data center partnership with OpenAI has saturated Wall Street's debt markets, forcing banks to reject new projects and pushing developers to find alternative tenants or financing structures.

Deep dive

- Banks like JPMorgan struggled for months to syndicate billions in construction loans for Oracle-leased data centers in Texas and Wisconsin, as institutional investors hit regulatory limits on single-counterparty exposure

- The concentration problem forced at least one developer (Crusoe) to switch from Oracle to Microsoft as tenant for an Abilene, Texas expansion after lenders refused to finance more Oracle exposure

- Oracle-related project finance deals are among the largest ever: $10 billion for Crusoe's original Abilene site, $38 billion for Vantage's Texas/Wisconsin campuses, and $18 billion for Stack's New Mexico facility

- Oracle plans to raise $50 billion in stock and bonds for 2026 needs, but Morgan Stanley analysts estimate the company still requires over $100 billion more for 2027 and early 2028

- Big tech companies are projected to spend $3 trillion on AI through 2028 but can only self-fund about half from cash generation, making debt access critical to AI infrastructure buildout

- Oracle is in a comparatively weaker financial position than rivals like Google, Microsoft, and Meta—it has a lower investment-grade credit rating, more existing debt, and is currently burning cash

- The cost of protecting Oracle bonds against default via credit-default swaps quadrupled between late September and late March 2026, though it has declined slightly since

- Most of the borrowing was structured as short-term construction loans by data center developers with Oracle as tenant and OpenAI as subtenant, keeping the debt off Oracle's balance sheet

- Vantage's Texas and Wisconsin loans took until Q4 2025 to largely syndicate and required more than 50 lenders to achieve successful distribution levels

- Related Digital's Michigan data center campus chose Bank of America as lead arranger partly because it had less Oracle exposure than competing banks, and switched to bond issuance after seeing the construction-loan market struggles

- Wall Street is generally providing flexible financing for the most creditworthy tech companies like Google, Microsoft, and Meta, but Oracle's financial profile makes lenders more cautious

- Any slowdown in data center construction would hamper AI companies already hitting limits on what they can offer users as computing demand exceeds supply

Decoder

- Counterparty exposure limits: Regulatory and internal risk rules capping how much money a bank or investor can lend to or have tied up with a single borrower or tenant

- Syndication: The process where a lead bank distributes portions of a large loan to other lenders to spread risk and free up balance sheet capacity

- Project finance: Loans structured around a specific project (like a data center) where the debt is secured by the project's assets and future cash flows rather than the developer's overall creditworthiness

- Credit-default swaps (CDS): Insurance-like contracts that pay out if a company defaults on its bonds; rising CDS costs indicate markets see increased default risk

- Investment-grade rating: A credit rating indicating relatively low default risk, typically BBB-/Baa3 or higher from rating agencies; Oracle has this but at a lower level than tech giants

- Burning cash: Spending more cash than the company generates from operations, requiring external financing or asset sales to fund activities

Original article

Oracle's $300 billion megadeal with OpenAI is testing the limit of Wall Street's appetite for debt tied to the data center boom. Banks have struggled for months to spread the risk of the billions of dollars in loans they made to build data centers leased to Oracle in Texas and Wisconsin. Bank balance sheets are now clogged, constraining the financing prospects of future projects tied to Oracle and OpenAI. Silicon Valley needs access to debt to meet its goals for AI-related spending, but so far, Wall Street is largely giving a blank check for the AI ambitions of the most creditworthy tech companies.

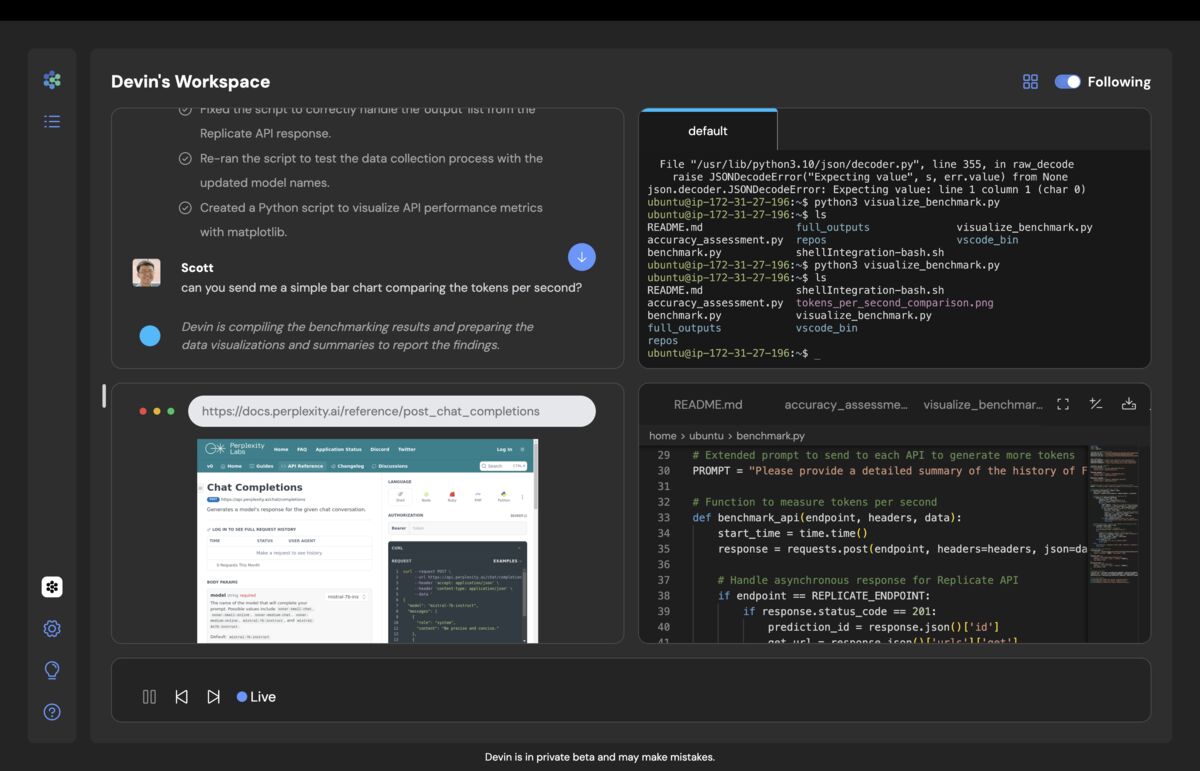

Cognition AI, maker of the Devin AI coding assistant, is raising funding at a $25 billion valuation amid a consolidation wave in AI developer tools.

Original article

Cognition AI is in early talks to raise a round of funding that would more than double its valuation to $25 billion. The talks are ongoing and the terms could change. Cognition uses AI to streamline the process of writing and debugging code, with a focus on selling to businesses. Its flagship product, Devin, is being used by companies like Anduril and Microsoft.

The debate between using code or natural language to specify AI agent behavior is a false choice, as production systems require both structure and flexibility.

Deep dive

- Code-maximalism enforces reliability through deterministic workflows but fails to be agent-native because it strips out the reasoning capability that makes agents useful in the first place

- The runbook approach in AI SRE tools exemplifies code-maximalism's failure: agents execute predefined workflows reliably but become useless when alerts differ from expected patterns or infrastructure changes

- Code-maximalist approaches prevent agents from exploring multiple hypotheses in parallel, forcing them to follow the same single-path debugging humans would use instead of leveraging their computational advantages

- Encoded workflows don't evolve autonomously and provide no meaningful visibility into agent reasoning, only confirmation that predefined steps were executed

- Markdown-maximalism optimizes for flexibility but breaks down in production where engineering decisions require strict constraints around context management, model selection, cost control, and coordination

- AI slide generation tools illustrate Markdown-maximalism's failure mode: outputs are unpredictable and cannot be corrected at granular levels, forcing users to regenerate everything when small details are wrong

- Even sophisticated Markdown-maximalist approaches that use skills.md and agent loops end up requiring code harnesses for context management, model routing, and orchestration

- Hybrid architectures have emerged independently across serious agent implementations (Claude Code, RunLLM) because they're the only approach that supports what agents actually need to do

- The architectural work that matters is determining which parts of a system need reasoning flexibility versus which need deterministic enforcement and constraints

- Agent-native design requires agents to evaluate multiple hypotheses in parallel, provide visibility into their reasoning, adapt to system changes autonomously, and allow correction at appropriate granularity levels

- The Python versus Markdown debate is actually a symptom of the industry still treating agents as workflow automators rather than as systems capable of intelligent planning and execution

Decoder

- Code-maximalism: Using programming languages like Python to define strict, deterministic workflows that agents must follow step-by-step, prioritizing reliability over flexibility

- Markdown-maximalism: Using natural language instructions to describe goals and constraints, allowing agents to plan their own approach rather than following predefined steps

- Agent-native: Design approaches that leverage agents' unique capabilities (parallel hypothesis testing, reasoning, adaptation) rather than simply copying human workflows

- Runbook: A predefined set of procedures for handling specific scenarios, commonly used in operations and incident response

- Harness: The code infrastructure and tooling that manages agent execution, including context management, model routing, and orchestration

Original article

Agents can't choose between structure and flexibility

Why maximizing in either direction is a failure mode

I think it's safe to say that when the LLM hype cycle started a few years ago, no one expected one of the great debates of our time would be between Python and Markdown as agent specification languages. But here we are, and this has quickly turned into one of the most consequential architectural questions in AI.

Before we dive into the consequences of this debate, we'll take a moment to define our terms.

The Python camp uses code to express strict requirements for the steps an agent should take to accomplish a task. The Markdown camp uses English to express broad goals and constraints and lets the agent plan its way to the outcome. The tradeoffs are fairly straightforward. Code creates strong guardrails and reduces the chance that the agent's plan goes off the rails. Markdown gives powerful models the freedom to explore, adapts flexibly across tools and models, but risks the agent doing something unexpected and undesirable.

Most of the debate treats this as a choice between two defensible positions. It isn't. Both maximalist positions are, in fact, failure modes, and the reason is the same: Neither one is actually agent-native. Agents, like humans, are increasingly being given complex tasks, and that requires the flexibility to choose the right tool for the right task (or subtask). Code-maximalism forces agents to follow deterministic workflows and strips out the reasoning that makes them useful. Markdown-maximalism abdicates control and produces systems you can't debug, correct, or improve. Picking a side is how you avoid the hard work of designing an agent.

We're publishing this as part of the Agent Native series because these two approaches increasingly define how agent interactions get built — and because both maximalist versions end up in the same place we wrote about last week: copy-pasting what a human would do, just in different syntax.

What code-maximalism gets wrong

The code-maximalist pitch is reliability. You tell the agent exactly what to do in specific cases, surface errors when things break, and get tightly scoped results. Given that LLMs make mistakes, misunderstand intent, and generally do all sorts of weird things, this sounds appealing in theory. Enforce correctness at the code layer. Don't trust the model to do the right thing.

We're intimately familiar with where this can go wrong in the AI SRE space. Almost every vendor in our space tells customers they have to write runbooks. The product then encodes those runbooks as workflows and has the agent execute them in response to specific alerts. The results are trustworthy in the narrow sense: the agent does roughly what you expected. It's also useless the moment an alert looks different from anything that's come before or the underlying architecture changes. We started down this misguided path ourselves in the early days and quickly learned that it would rarely work in practice.

This approach fails to be agent-native in three ways. First, it copy-pastes what a human does. A human picks one hypothesis — the most likely based on experience — and runs it down. That works when the human is confident, but when the initial hypothesis is wrong, it creates a lot of wasted work. An agent doesn't have to fall into that trap. It can evaluate multiple hypotheses in parallel, and some will be dead ends, but the chance it lands on the right answer goes up dramatically. That's the architecture we've built RunLLM around, and it's consistently how we see real incidents get resolved.
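The contrast between running down one hypothesis and evaluating several in parallel can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not RunLLM's implementation: the three checks, the alert fields (`minutes_since_deploy`, `db_connections_pct`, `upstream_healthy`), and the thresholds are all hypothetical stand-ins for real investigation steps.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical checks: each returns (hypothesis name, confirmed?, evidence).
def check_bad_deploy(alert):
    minutes = alert.get("minutes_since_deploy", 10_000)
    return ("bad_deploy", minutes < 30, f"minutes_since_deploy={minutes}")

def check_db_saturation(alert):
    pct = alert.get("db_connections_pct", 0)
    return ("db_saturation", pct > 90, f"db_connections_pct={pct}")

def check_upstream_outage(alert):
    healthy = alert.get("upstream_healthy", True)
    return ("upstream_outage", not healthy, f"upstream_healthy={healthy}")

HYPOTHESES = [check_bad_deploy, check_db_saturation, check_upstream_outage]

def investigate(alert):
    """Run every hypothesis check concurrently and report what was
    confirmed and what was ruled out, with the evidence for each."""
    with ThreadPoolExecutor(max_workers=len(HYPOTHESES)) as pool:
        # pool.map preserves the order of HYPOTHESES in its results.
        results = list(pool.map(lambda check: check(alert), HYPOTHESES))
    return {
        "confirmed": [r for r in results if r[1]],
        "ruled_out": [r for r in results if not r[1]],
    }

alert = {"minutes_since_deploy": 500, "db_connections_pct": 97, "upstream_healthy": True}
report = investigate(alert)
print(report["confirmed"])
print(report["ruled_out"])
```

The report keeps the dead ends alongside the confirmed hypothesis, so a human reviewing it can see what was tried and why it was ruled out.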

Second, the runbook approach gives humans no meaningful visibility. SREs don't need to confirm that the agent executed Step 3 of the runbook. They need to know what the agent tried, what it ruled out, and why. A well-worn path automates some tedious work, but it doesn't let the human trust or learn from the agent's reasoning.

Third, encoded workflows don't evolve – they lose the intelligence that agents promise. When the underlying system changes or requirements shift, every encoding has to change with it. There's no way for the agent to take feedback, understand that the expected behavior has changed, and adapt on its own without someone going back into the harness.

What Markdown-maximalism gets wrong

Markdown-maximalism is optimized for flexibility. Describe the goal, hand it to a capable model, and let it figure things out. This is portable, expressive, and gets you something working quickly. Where creativity or open-ended problem-solving matters, it can be dramatically more useful than a fixed workflow.

The degenerate version of this is AI slide generation. We don't know the exact architecture behind these tools, but from the outside they read as "let the LLM do everything" applications — one prompt in, a whole slide deck out. The failure mode is familiar to anyone who's used one. Something is off. The layout is weird on slide 7, the chart doesn't match the claim, the flow of the argument is scrambled. You want to say: "On slide 7, make the flow vertical instead of horizontal and move the chart to the bottom." You usually can't get this to work the way you expect. There's no discrete layout logic to adjust, no separable step for chart placement, no addressable unit smaller than the whole generation. You re-prompt, get a new deck that's wrong in a different way, and start over.

It would be easy to write this off as a strawman. Serious Markdown-maximalists aren't arguing for one-shotting every single application. The sophisticated version of the position is skills.md plus a basic agent loop — rich context, thoughtful instructions, and a capable model reasoning its way through. Guide the agent through context, the argument goes, rather than constraining it with fine-grained LLM calls.

Complex applications expose the gap. When you're grappling with reality, there are plenty of engineering decisions that still require strict constraints: Context management and summarization, model selection, cost management, and cross-agent coordination to name a few. In each one of these cases, the challenge is not trusting the model to reason intelligently. It is building the tooling and infrastructure that allows a thoughtful model to execute these tasks efficiently and reliably.

In production, this results in a code harness that manages context, routes between models, orchestrates sub-agents, and handles the predictable places where pure prompting breaks down. That ends up being a hybrid architecture with markdown doing the guidance work and code doing the structural work — which is exactly the position the debate was supposed to be between.

If you start with a Markdown-maximalist architecture, you're probably going to end up building plenty of narrow, harness-like capabilities – context management, model routing, etc. – to enforce constraints whether you like it or not. The question is just whether you design those hooks intentionally or let the code component grow organically. You should be intentional about the design.

The hybrid isn't a compromise

The teams building serious agents have, largely independently, landed in the same place: Markdown for intent and domain guidance, code for enforcement, tool execution, and anything that must not fail silently. Claude Code works this way. We built RunLLM this way.
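A minimal sketch of that split, assuming a toy setup: Markdown carries the intent, and a small code harness enforces a tool allowlist and a step budget. Nothing here reflects how Claude Code or RunLLM is actually built. `GUIDANCE_MD`, `ALLOWED_TOOLS`, `MAX_STEPS`, and `stub_planner` (a stand-in for a model call) are hypothetical names introduced for illustration.

```python
# Intent and domain guidance live in Markdown (hypothetical content).
GUIDANCE_MD = """\
# Goal
Find why checkout latency rose.

# Constraints
Prefer read-only tools. Explain what you ruled out.
"""

# Enforcement lives in code: these limits hold regardless of what the model proposes.
ALLOWED_TOOLS = {"read_logs", "query_metrics"}
MAX_STEPS = 3

def stub_planner(guidance, history):
    """Stand-in for a model call: proposes the next (tool, argument) pair."""
    proposals = [
        ("read_logs", "checkout"),
        ("restart_service", "checkout"),  # not on the allowlist
        ("query_metrics", "p99"),
        ("read_logs", "db"),              # never reached: step budget
    ]
    return proposals[len(history)] if len(history) < len(proposals) else None

def run_agent(planner=stub_planner):
    """Loop until the planner stops or the step budget is spent,
    recording every proposal and whether the harness allowed it."""
    history = []
    while len(history) < MAX_STEPS:
        proposal = planner(GUIDANCE_MD, history)
        if proposal is None:
            break
        tool, arg = proposal
        status = "executed" if tool in ALLOWED_TOOLS else "blocked"
        history.append((tool, arg, status))
    return history

for step in run_agent():
    print(step)
```

The planner is free to propose anything the guidance suggests, but a blocked call is recorded rather than silently dropped, which is one way to cover the "must not fail silently" requirement.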

It's tempting to read this as an unopinionated compromise. That's the wrong framing. The whole point of agents is that – unlike traditional software – they have an understanding of the problem to be solved and can use the right tools to get there. Code-maximalism compromises on the planning and Markdown-maximalism compromises on execution and learning.

Hybrid architectures are winning because they're the only architectures that support what agents are actually supposed to do. An agent needs reasoning flexibility to handle situations it hasn't seen before, and it needs deterministic guardrails so humans can trust it and intervene when needed. Neither extreme gives you both, which means neither maximalist position gives you a truly flexible agent. It gives you either a workflow with aspirations or a wish with nothing to execute it.

The architectural work is figuring out, for each part of your system, which layer it belongs to. What needs to be expressed as intent and reasoned about? What needs to be enforced and checked? Where does the agent need creativity, and where does it need constraints? This is the hard part, and it's the part that picking a side lets you avoid.

What agent-native actually requires

When you stop treating Python vs. Markdown as the debate, the architectural priorities come into focus. Can your agent evaluate multiple hypotheses in parallel, or does it march down one? Can a human see what the agent tried and why, or do they just get a final answer? Can the agent adapt when the underlying system changes, or does someone need to go edit the harness? Can a user correct the output at the level of granularity they care about, or is it all-or-nothing?

The maximalist debates are a symptom of an industry still thinking about agents as workflow automators — either very rigid ones, or very loose ones. The teams building agent-native products are past that argument, because they've figured out that the argument was never really about Python or Markdown. It was about whether you were willing to do the work to build something that actually behaves like an agent.

White House accuses China of industrial-scale AI model distillation, commits to intelligence sharing with OpenAI, Anthropic, Google (11 minute read)

The White House formally accused China of systematically copying US AI models through mass querying and committed to sharing threat intelligence with OpenAI, Anthropic, and Google to combat the practice.

Deep dive

- OpenAI accused DeepSeek in February of using obfuscated third-party proxies to circumvent access restrictions and extract outputs at scale, violating terms prohibiting creation of "imitation frontier AI models"

- Anthropic provided detailed evidence naming three Chinese labs: DeepSeek (150,000+ exchanges on logic and alignment), MiniMax (13 million exchanges), and Moonshot AI (3.4 million exchanges on agentic reasoning and tool use)

- The fraudulent accounts used jailbreaking techniques to expose proprietary information and commercial proxy services to bypass geographic restrictions

- OpenAI, Anthropic, and Google began sharing distillation threat intelligence through the Frontier Model Forum in early April, modeled on cybersecurity threat-sharing frameworks—notable because these are fierce competitors

- The OSTP memo directs federal agencies to share intelligence with US developers and explore accountability measures, but announces no specific sanctions or enforcement actions yet

- Representative Bill Huizenga's bill (H.R. 8283) would direct the government to identify entities using "improper query-and-copy techniques" and impose Commerce Department blacklist sanctions

- The legal foundation remains uncertain—whether extracted model outputs qualify as trade secrets under the Protecting American Intellectual Property Act (signed January 2023) is an open question

- The shift from hardware-only controls acknowledges that chip export restrictions (in place since October 2022) are being circumvented through smuggling and domestic Chinese chip development

- Open-source models like Meta's Llama complicate the picture—Chinese researchers fine-tuned Llama 13B to create ChatBIT for military intelligence, which Meta cannot prevent once weights are public

- Meta's response was to open Llama to US military and Five Eyes allies while maintaining bans for adversaries—a policy distinction that is "legally meaningful and practically unenforceable"

- Model-level restrictions require different enforcement than chip controls: distillation happens over the internet through API calls that can be routed through any jurisdiction, requiring behavioral analysis rather than customs inspections

- The memo positions AI model protection as both a national security imperative and a negotiating chip for the May 14 Trump-Xi summit in Beijing

- DeepSeek demonstrated that frontier AI performance no longer requires Silicon Valley-scale resources, raising the question of how much efficiency was innovation versus extraction

- The emerging architecture is defense in depth: control the chips, control the models, and track both—with proposals to tag AI chips with unique identifiers as a third layer

Decoder

- Model distillation: A technique where you query an AI model thousands or millions of times with carefully crafted questions, then use those responses to train a cheaper model that approximates the original's capabilities without accessing the underlying model weights

- OSTP: Office of Science and Technology Policy, a White House office that advises on science and technology matters

- Model weights: The numerical parameters that define how a neural network operates—the actual "brain" of an AI model

- Jailbreaking: Techniques to circumvent an AI model's safety restrictions or usage policies to extract information it's designed to withhold

- Geofencing: Geographic restrictions that block access to services from certain countries or regions

- Entity list: The Commerce Department's trade restriction blacklist that prohibits US companies from doing business with listed foreign entities

- Frontier models: The most advanced, capable AI models available at any given time

- Five Eyes: Intelligence alliance between the US, UK, Canada, Australia, and New Zealand

Original article

Summary: The White House OSTP released a policy memo accusing China of "industrial-scale" distillation of US AI models, committing to share intelligence with US AI companies and explore accountability measures. OpenAI accused DeepSeek of distilling its models in February; Anthropic named DeepSeek, MiniMax, and Moonshot AI as having created 24,000 fraudulent accounts generating 16+ million exchanges with Claude. The Deterring American AI Model Theft Act (H.R. 8283) was introduced on 15 April. The memo arrives three weeks before a planned Trump-Xi summit on 14 May.

The White House accused China on Wednesday of conducting "industrial-scale" theft of American artificial intelligence, releasing a policy memorandum that commits the government to sharing intelligence with US AI companies about foreign distillation campaigns and exploring measures to hold the perpetrators accountable. Michael Kratsios, director of the Office of Science and Technology Policy, said the US "has evidence that foreign entities, primarily in China, are running industrial-scale distillation campaigns to steal American AI. We will be taking action to protect American innovation." The memo lands three weeks before a planned Trump-Xi summit in Beijing on 14 May, positioning AI technology protection as both a national security imperative and a negotiating chip.

Distillation is the technique at the centre of the dispute. It does not require stealing model weights or breaking into servers. A distiller feeds thousands or millions of carefully constructed queries to a frontier AI model, collects the responses, and uses those responses to train a cheaper rival model that approximates the original's capabilities at a fraction of the cost. It is, in effect, learning from the teacher's answers rather than the teacher's brain. The legal status of this technique is unsettled. The strategic implications are not.
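The idea of learning from a model's answers without seeing its weights can be shown with a deliberately tiny toy. In this sketch the "teacher" is a black-box function with two hidden parameters, and the "student" is fitted by ordinary least squares on query and response pairs alone. It is an assumption-laden analogy: real distillation trains a neural network on text outputs, and the names `make_teacher` and `distill` are hypothetical.

```python
def make_teacher():
    """Return a black-box function; its parameters are not visible to callers."""
    weight, bias = 3.0, -2.0  # hidden "weights" (toy values)
    def teacher(x):
        return weight * x + bias
    return teacher

def distill(teacher, queries):
    """Fit a student y = slope * x + intercept using only the teacher's
    responses to the queries (closed-form least squares)."""
    responses = [teacher(x) for x in queries]
    n = len(queries)
    mean_x = sum(queries) / n
    mean_y = sum(responses) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(queries, responses))
    var = sum((x - mean_x) ** 2 for x in queries)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

student = distill(make_teacher(), [0.0, 1.0, 2.0, 3.0])
print(student(10.0))  # compare with the teacher's answer for an unseen input
```

The student never reads `weight` or `bias`; it recovers the teacher's behaviour purely from the responses.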

The evidence

The OSTP memo builds on allegations that US AI companies have been making since February. OpenAI sent a formal memo to the House Select Committee on China on 12 February accusing DeepSeek of distilling its models. OpenAI said it had identified accounts associated with DeepSeek employees that developed methods to circumvent access restrictions, routing queries through obfuscated third-party proxies to extract outputs at scale. OpenAI's terms of service explicitly prohibit using outputs to create "imitation frontier AI models." DeepSeek has not publicly responded to the allegations.

Anthropic published more detailed evidence on 23 February, naming three Chinese laboratories. DeepSeek, it said, conducted more than 150,000 exchanges with Claude focused on foundational logic and alignment techniques. MiniMax drove the most traffic, with more than 13 million exchanges. Moonshot AI generated more than 3.4 million exchanges targeting agentic reasoning, tool use, coding, and computer vision. Across the three firms, Anthropic identified approximately 24,000 fraudulent accounts that generated more than 16 million exchanges with Claude. The accounts used jailbreaking techniques to expose proprietary information and circumvented geofencing through commercial proxy services.

By early April, OpenAI, Anthropic, and Google had begun sharing distillation threat intelligence through the Frontier Model Forum, a coalition originally founded in 2023 with Microsoft. The arrangement is modelled on cybersecurity threat-sharing frameworks: when one company detects an attack pattern, it flags it for the others. That three fierce competitors agreed to cooperate on anything is itself a measure of how seriously they take the threat. DeepSeek proved that frontier AI performance no longer requires Silicon Valley-scale resources, and the question the US government is now asking is how much of that efficiency was earned and how much was extracted.

The policy response

The OSTP memo is a policy statement, not an executive order or a binding regulation. It directs federal departments to share intelligence with US AI developers about foreign distillation attempts, help industry strengthen technical defences, and explore accountability measures for foreign actors. No specific sanctions, entity list additions, or enforcement actions were announced on Wednesday. The memo's practical force will depend on what follows it.

Congress is moving in parallel. On 15 April, Representative Bill Huizenga introduced the Deterring American AI Model Theft Act of 2026, co-sponsored by Representative John Moolenaar, who chairs the House Select Committee on China. The bill would direct the government to identify entities using "improper query-and-copy techniques" and impose sanctions through the Commerce Department blacklist. The House Select Committee held a hearing on 16 April titled "China's Campaign to Steal America's AI Edge," with witnesses from Brookings, the Silverado Policy Accelerator, and the America First Policy Institute. The issue has bipartisan support. Roll Call reported that "winning the AI arms race holds appeal for both parties."

The legal theory underpinning prosecution remains unclear. The Protecting American Intellectual Property Act, signed in January 2023, authorises sanctions for trade secret theft, but whether extracted model outputs qualify as trade secrets under existing frameworks is an open question. The South China Morning Post noted that Anthropic's distillation charges "expose an AI training grey area," and legal analysts at Just Security have argued that the case for imposing costs on distillation requires targeted government intervention precisely because existing intellectual property law does not cleanly cover it.

The second line of defence

The shift from hardware controls to model-level protections represents an acknowledgement that the first line of defence is leaking. The US has been restricting China's access to advanced AI chips since October 2022, broadening the rules in October 2023 and again with the AI Diffusion Rule in January 2025. In January 2026, the Bureau of Industry and Security shifted its review of H200 and AMD MI325X exports to China from a presumption of denial to case-by-case review, while the White House simultaneously imposed a 25% tariff on advanced semiconductors. Nvidia was permitted to sell its H20 inference chip; AMD its MI308.

But hardware controls are circumvented in practice. A $2.5 billion scheme to smuggle Nvidia AI chips to China through Super Micro's co-founder was charged in March. Jensen Huang warned that DeepSeek optimising for Huawei chips would be a "horrible outcome" for America, because it would eliminate the hardware chokepoint entirely. If advanced chips can be smuggled despite export controls, and if Chinese chipmakers are closing the gap with domestic alternatives, then preventing access to the models themselves becomes the critical second layer of the technology denial strategy. Proposals to tag AI chips with unique identifiers represent a third layer, tracking hardware flows to prevent diversion. The emerging architecture is defence in depth: control the chips, control the models, and track both.

The open-source complication

Distillation is not the only channel through which US AI technology reaches Chinese laboratories. Meta's Llama models are open source, meaning the weights are publicly available for download. Chinese researchers from PLA-linked institutions fine-tuned Llama 13B on military data to create ChatBIT, a model designed for military intelligence applications. Meta's acceptable use policy prohibits military and espionage applications, but the company has no technical means to enforce that restriction on open-source releases. Once the weights are published, control is relinquished. Meta responded by opening Llama to the US military and Five Eyes allies while maintaining the ban for adversaries, a policy distinction that is legally meaningful and practically unenforceable.

The tension between open-source AI and national security has been building for years but has not produced a coherent policy resolution. Open-source models drive research, attract talent, and create ecosystems that benefit American companies. Restricting them would slow US innovation while pushing Chinese developers toward domestic alternatives. Not restricting them means providing the foundational technology for adversary military applications. The Huizenga bill focuses on distillation, the unauthorised extraction of capability from closed models, rather than on open-source distribution, sidestepping the harder question.

What comes next

The US-China chip war has already drawn allies into the effort, with the Netherlands restricting ASML's lithography exports under American pressure. Model-level restrictions would require a different enforcement architecture. Chips are physical objects that cross borders. Distillation happens over the internet, through API calls that can be routed through any jurisdiction. Detecting it requires the kind of behavioural analysis that Anthropic performed when it identified 24,000 fraudulent accounts, not the kind of customs inspection that catches smuggled hardware.

The Trump-Xi summit on 14 May will test whether the OSTP memo is the beginning of a sustained enforcement campaign or a negotiating position designed to extract concessions. China wants the US to loosen technology controls, remove more than 1,000 Chinese firms from entity lists, and reduce investment restrictions. The US wants China to stop distilling its AI models, stop smuggling its chips, and stop fine-tuning its open-source models for military use. The gap between those positions is wide enough that neither side is likely to get what it wants. What the memo establishes, regardless of the summit's outcome, is that the US now treats AI model protection as a category of national security alongside chip export controls and semiconductor equipment restrictions. The question is no longer whether distillation is a problem. It is whether the government can enforce a border around something that has no physical form.

Google is rolling out AI-powered search summaries in Gmail that answer natural language questions by synthesizing information across multiple email threads.

Decoder

- Gemini for Workspace: Google's AI assistant product for business email and productivity tools

- AI Overviews: Google's feature that uses AI to generate summarized answers from search results or content

- Workspace Intelligence: Google's AI capabilities built into Workspace products

Original article

During its Google Cloud Next conference on Wednesday, the company announced a slew of Workspace-focused updates, including the addition of its AI Overviews feature to Gmail. The feature, which today uses AI to summarize Google Search results, will now do the same for Gmail users in the workplace.

According to Google, this will allow Gmail users to ask questions in search using natural language and then get concise answers without having to open and read different emails.

The company suggests the feature could give straightforward answers to business-related questions about topics typically shared in emails, such as performance improvements, project milestones, invoices, comments on decks, trip details, and more.

The AI Overview will create an instant summary pulled from across multiple emails and conversations.

While not everyone prefers to have AI as their first step to finding an answer, it is rapidly becoming the norm, both within Google's products and elsewhere on the web.

In this case, Google says AI Overviews in Gmail will be on by default if the customer's organization has Gemini for Workspace in Gmail enabled and Workspace Intelligence access to Gmail enabled. (End users must have "Smart features in Gmail, Chat, and Meet" and "Google Workspace smart features" enabled, too.)

The feature was previously available to consumers with Google AI Pro and Ultra subscriptions. Google says it will now also come to business, enterprise, and education customers through the following products:

- Business: Business Starter, Standard, and Plus

- Enterprise: Enterprise Starter, Standard, and Plus

- Consumers: Google AI Pro and Ultra

- Other Editions: Frontline Plus

- AI Add-ons: Google AI Pro for Education

Alongside the launch, Google said it's also making AI Overviews in Drive broadly available to eligible Workspace and Google AI plans. It was previously in beta.

Microsoft to invest $1.8B in Australia to expand AI, cloud, and digital infrastructure (2 minute read)

Microsoft is committing $18 billion to expand AI and cloud infrastructure in Australia by 2029, its largest investment in the country to date.

Decoder

- Azure: Microsoft's cloud computing platform and service offering

- GPU offerings: Graphics processing units optimized for AI and machine learning workloads, increasingly sold as cloud services

- Cloud regions: Geographically distributed data center clusters that provide localized cloud services with lower latency and data residency compliance

Original article

Microsoft is investing $1.8 billion to significantly expand its cloud computing and artificial intelligence infrastructure across Australia.

AI2's OlmoEarth Studio now computes and exports embedding vectors from satellite imagery on demand, enabling similarity search, land-cover mapping, and change detection with minimal training data or compute.

Deep dive

- OlmoEarth Studio computes embeddings on demand rather than serving pre-computed archives, so you can specify exact time ranges (1-12 monthly periods) and capture seasonal dynamics instead of just annual snapshots

- Three encoder variants offer different trade-offs: Nano (128-dim, 1.4M params), Tiny (192-dim, 6.2M params), and Base (768-dim, 89M params), with Tiny delivering strong performance at lower compute and storage cost

- Embeddings are exported as Cloud-Optimized GeoTIFFs with one band per dimension, stored as int8 (-127 to +127) for efficient distribution, then dequantized to floating-point for analysis

- Similarity search works by computing cosine similarity between a query pixel and all other pixels—urban areas cluster together, agricultural parcels form distinct groups, with no labels required

- Few-shot segmentation with a simple logistic regression on 192-dimensional embeddings produced coherent land-cover maps from just 60 labeled pixels (20 per class) with F1=0.84, and accuracy saturated quickly because embeddings do the heavy lifting

- Change detection compares embeddings from two time periods using cosine distance—monthly embeddings from September 2023 vs 2024 immediately highlighted the Park Fire burn scar in California with no training

- PCA reduction to three dimensions creates false-color visualizations where similar embeddings get similar colors automatically, revealing landscape structure like crop parcel boundaries without supervision

- All examples use frozen embeddings with zero task-specific training, showing the foundation model already learned useful representations, though supervised fine-tuning is available for higher-performance applications

- The code is remarkably simple: load the multi-band GeoTIFF with rasterio, reshape to (pixels, dimensions), train sklearn StandardScaler + LogisticRegression on labeled pixels, predict everywhere

- Outputs work with standard geospatial tools (QGIS, GDAL, rasterio) and integrate into existing workflows without specialized infrastructure

- Global visualization of 1.1M samples shows embeddings cluster by season and land type when reduced with PCA and k-means, demonstrating the model learned meaningful Earth surface patterns during pretraining

- Performance depends on input imagery quality—persistent cloud cover, atmospheric artifacts, or missing observations can affect embedding quality, so validation is recommended for each use case

Decoder

- Embeddings: Compact numerical vector representations that encode semantic information about data—similar locations get similar vectors, enabling comparison via simple operations like cosine similarity or clustering

- Foundation model: A large pre-trained neural network trained on broad data that learns general-purpose representations, which can then be adapted to specific tasks with minimal additional training

- COG (Cloud-Optimized GeoTIFF): A standard geospatial raster format optimized for efficient streaming and partial reads over HTTP, widely supported by GIS tools

- Sentinel-2 L2A: Multi-spectral optical imagery from the European Space Agency's Sentinel-2 satellites at 10-60m resolution, with atmospheric correction applied (Level-2A processing)

- Sentinel-1 RTC: ESA radar satellite data processed to Radiometric Terrain Correction, which accounts for topographic effects and provides imagery that works through clouds

- Linear probe: A standard evaluation technique where you freeze a pre-trained model's representations and train only a simple linear classifier on top, measuring how much task-relevant information the representations already contain

- PCA (Principal Component Analysis): Dimensionality reduction technique that finds the directions of maximum variance in high-dimensional data, often used to compress embeddings to 2-3 dimensions for visualization

Original article

Introducing OlmoEarth embeddings: Custom embedding exports from OlmoEarth Studio for downstream analysis

OlmoEarth Studio, our platform for building Earth observation models, now lets you compute and export embedding vectors—compact numerical representations of Earth-observation data produced by our open source OlmoEarth foundation models. The source code and model weights are publicly available alongside the research paper, so the community can inspect exactly how these embeddings are generated.

Embeddings are a fast, cost-effective entry point for leveraging OlmoEarth: they support a wide range of downstream tasks, from similarity search to segmentation to unsupervised exploration. Locations with similar surface characteristics end up with similar vectors; locations that differ land far apart. OlmoEarth embeddings have shown strong performance in our own benchmarking and in independent evaluations. The exported Cloud-Optimized GeoTIFFs (COGs) are lightweight and easy to share. Choose your area of interest, time range, encoder variant, resolution, and imagery sources via the Studio UI or API, and get back a COG you can use however you like. If your application requires higher performance, Studio also supports supervised fine-tuning (SFT).

Custom-computed embeddings are now available for users of OlmoEarth Studio. Reach out if you're interested in gaining access. Instructions for using the publicly available OlmoEarth models to compute your own embeddings are available here.

Computing embeddings in Studio

Computing embeddings follows the same workflow as any other prediction in Studio. First configure a model and run it, and then download the results. Several parameters tailor the output:

- Area of interest: Draw or upload any polygon; Studio handles imagery acquisition and tiling.

- Time span: 1-12 monthly periods.

- Encoder variant: Nano (128-dim, 1.4M params), Tiny (192-dim, 6.2M params), or Base (768-dim, 89M params).

- Spatial resolution: 10 meter, 20 meter, 40 meter, or 80 meter per pixel.

- Imagery sources: Sentinel-2 L2A, Sentinel-1 RTC, or both.

Studio delivers a COG with one band per embedding dimension. Vectors are stored as signed 8-bit integers (int8). Values range from -127 to +127, with -128 reserved for nodata. To recover floating-point vectors, see dequantize_embeddings in olmoearth_pretrain.
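As a rough illustration of the storage layout described above, the sketch below builds a per-pixel validity mask from the reserved nodata value before any further analysis. It does not reproduce dequantize_embeddings, whose exact scaling is not described here. The helper name valid_pixel_mask, the synthetic array, and the rule that a pixel is invalid if any band holds -128 are assumptions made for this example.

```python
import numpy as np

NODATA = -128  # reserved nodata value in the int8 export, per the description above

def valid_pixel_mask(emb_int8: np.ndarray) -> np.ndarray:
    """Return an (H, W) boolean mask that is True where no band holds nodata.

    Assumption: a pixel counts as invalid if ANY band equals NODATA.
    """
    return np.all(emb_int8 != NODATA, axis=0)

# Tiny synthetic stand-in for an exported (bands, H, W) int8 array
emb_int8 = np.array(
    [[[10, -128], [5, 7]],
     [[20, -128], [-127, 127]]],
    dtype=np.int8,
)
mask = valid_pixel_mask(emb_int8)  # False only at row 0, col 1
```

Applying a mask like this before computing similarities or training a classifier keeps nodata pixels from being treated as real embedding values.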

Because everything is computed on demand rather than pulled from a pre-computed global archive, your embeddings reflect exactly the conditions you care about. You can generate monthly embeddings to capture seasonal dynamics, not just annual snapshots.

What you can do with OlmoEarth embeddings

The examples below all use OlmoEarth-v1-Tiny (192-dim) embeddings at 40-meter resolution with Sentinel-2 L2A composites (annual for most examples; monthly for change detection). Tiny is a lightweight encoder but still highly performant; for your own applications, you can swap it for a larger variant at the cost of higher compute and storage.

Similarity search: Finding "more like this"

Pick a query pixel, extract its embedding, and compute cosine similarity against every other pixel. The result is a heatmap showing where the landscape looks most and least like your query pixel.

This query sits near the Merced urban center in California. Urban fabric and road corridors light up coherently while agricultural parcels stay dark. The model distinguishes built-up surfaces from cropland without any labels.

Switching the query to a small agricultural window, we define the query vector as the mean of the embedding vectors over that window, then pull Sentinel-2 imagery at the highest- and lowest-similarity locations to see what the model treats as similar and dissimilar.

The most similar patches (0.89 and above) are all agricultural parcels with irrigated fields. The least similar (around zero) are an airport with surrounding bare ground, a reservoir with dry terrain, and arid rangeland. No training data, no labels, just a dot product in embedding space.
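The similarity search described above can be sketched in a few lines of NumPy. This is a minimal illustration on random data, not the code used to produce the figures; the function name cosine_similarity_map, the array sizes, and the query coordinates are assumptions chosen for the example.

```python
import numpy as np

def cosine_similarity_map(emb: np.ndarray, query: np.ndarray) -> np.ndarray:
    """emb: (C, H, W) float embeddings; query: (C,) vector. Returns an (H, W) heatmap."""
    C, H, W = emb.shape
    X = emb.reshape(C, -1).T  # (H*W, C), one row per pixel
    eps = 1e-12               # guards against division by zero
    X_unit = X / (np.linalg.norm(X, axis=1, keepdims=True) + eps)
    q_unit = query / (np.linalg.norm(query) + eps)
    return (X_unit @ q_unit).reshape(H, W)

# Synthetic stand-in for a dequantized 192-dim embedding raster
rng = np.random.default_rng(0)
emb = rng.normal(size=(192, 8, 8)).astype(np.float32)

# Single-pixel query
row, col = 3, 4
sim_pixel = cosine_similarity_map(emb, emb[:, row, col])

# Window query: mean embedding over a small 3x3 window
window_query = emb[:, 2:5, 3:6].reshape(192, -1).mean(axis=1)
sim_window = cosine_similarity_map(emb, window_query)
```

Normalizing both sides to unit length is what turns the dot product into a cosine similarity, so values fall between -1 and 1 regardless of vector magnitude.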

Few-shot segmentation: Labeling the landscape

Similarity search tells you "where is it like this?" but sometimes you need discrete labels across a region. Because the representations are already rich, a simple linear classifier can produce a wall-to-wall land-cover map from very few labeled pixels.

To test this, we labeled just 60 pixels (20 per class) over Ca Mau, Vietnam, a coastal mangrove region. Using ESA WorldCover 2021 as the label source for three classes (mangrove, water, other), we randomly sampled 20 pixels per class, trained a logistic regression with per-feature standardization, and predicted every pixel in the region.

From 60 labeled pixels, the classifier produces a coherent map with weighted F1 = 0.84. Mangrove stands, tidal channels, and open water are delineated across the entire region. The classifier saturates quickly: increasing from 30 to 300 labels barely changes accuracy, because the embeddings are doing most of the heavy lifting.

The core of the analysis is a few lines of Python:

import rasterio
import numpy as np
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.linear_model import LogisticRegression

# Load the 192-band embedding COG exported from Studio
with rasterio.open("embeddings.tif") as ds:
    emb = ds.read().astype(np.float32)  # (192, H, W)

C, H, W = emb.shape
X = emb.reshape(C, -1).T  # (H*W, 192)

# labels: (H*W,) array of class ids; train_idx: indices of the labeled pixels
# (both are prepared separately and are not defined in this snippet)

# Train on labeled pixels, predict everywhere
clf = make_pipeline(StandardScaler(), LogisticRegression(max_iter=2000))
clf.fit(X[train_idx], labels[train_idx])
prediction = clf.predict(X).reshape(H, W)

This is a linear probe, a standard evaluation for foundation models. The fact that a logistic regression over 192 dimensions recovers land-cover boundaries from so few labels means the Tiny encoder has organized these ecological distinctions during pretraining. Larger variants (Base, 768-dim) encode even richer representations.

If you have ground-truth polygons, field survey points, or a coarse existing map, you can train a similar classifier and produce a wall-to-wall map for your own region of interest.
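The snippet above relies on a labels array and a train_idx array that it does not define. One possible way to build train_idx, assuming labels is a flat array of class ids aligned with the pixel rows, is to draw a fixed number of pixels per class at random. The helper sample_per_class and the synthetic labels are hypothetical and only illustrate the "20 pixels per class" sampling described earlier.

```python
import numpy as np

def sample_per_class(labels: np.ndarray, n_per_class: int, seed: int = 0) -> np.ndarray:
    """Return flat pixel indices with n_per_class random picks for each class id."""
    rng = np.random.default_rng(seed)
    picks = []
    for cls in np.unique(labels):
        candidates = np.flatnonzero(labels == cls)
        # replace=False raises ValueError if a class has fewer than n_per_class pixels
        picks.append(rng.choice(candidates, size=n_per_class, replace=False))
    return np.concatenate(picks)

# Synthetic labels: three classes (e.g. mangrove, water, other), 100 pixels each
labels = np.repeat([0, 1, 2], 100)
train_idx = sample_per_class(labels, n_per_class=20)  # 60 labeled pixels in total
```

Sampling without replacement keeps every training pixel distinct, and fixing the seed makes the draw repeatable when comparing different label budgets.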

Change detection: Spotting what shifted

Because Studio can generate embeddings at any temporal resolution (monthly through annual), you can compare two time periods directly to identify where surface conditions have changed. Below, we computed monthly Sentinel-2 embeddings for the same region in September 2023 and September 2024 and measured per-pixel cosine distance. The Park Fire (July-September 2024) burn scar in Butte County, California lights up immediately.

No labels or training required—just two embedding COGs and a few lines of Python.
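A minimal sketch of the per-pixel comparison, using synthetic arrays in place of the two exported COGs. It assumes both rasters cover the same grid with identical shape and have already been converted to floating point; the function name cosine_distance_map and the simulated change are illustrative only.

```python
import numpy as np

def cosine_distance_map(emb_a: np.ndarray, emb_b: np.ndarray) -> np.ndarray:
    """Per-pixel cosine distance between two (C, H, W) embedding rasters."""
    eps = 1e-12  # guards against division by zero
    dot = np.sum(emb_a * emb_b, axis=0)
    norms = np.linalg.norm(emb_a, axis=0) * np.linalg.norm(emb_b, axis=0)
    return 1.0 - dot / (norms + eps)

# Synthetic "before" and "after" rasters that differ at a single pixel
rng = np.random.default_rng(1)
before = rng.normal(size=(192, 6, 6))
after = before.copy()
after[:, 2, 3] = -before[:, 2, 3]  # simulate a complete change at row 2, col 3

change = cosine_distance_map(before, after)  # near 0 where unchanged, near 2 where flipped
```

On real data the changed area would not be a clean flip; a threshold on the distance map would have to be chosen and validated per use case.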

Unsupervised exploration: Seeing what the model sees

Sometimes you have no query location or reference labels. You just want to understand what structure exists in the embeddings. Principal Component Analysis (PCA) is a clean way to do this: reduce to three dimensions, map to R/G/B, and display as a false-color image. Similar embeddings get similar colors automatically.

Flevoland, in the Netherlands, is a reclaimed polder landscape with a regular grid of agricultural parcels. The PCA false-color image reproduces those boundaries with high fidelity. Different crop types, water bodies, and urban areas each get distinct hues. The embedding has internalized landscape structure without ever being told what a parcel or crop is.

This kind of unsupervised view is a quick way to see what structure the model has picked up across your area of interest.
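The PCA false-color idea can be sketched with NumPy alone. The version below computes the principal axes via SVD and rescales each of the first three components to the 0-1 range; the 2nd-98th percentile stretch is an assumption made for display purposes and is not described in the article, and pca_false_color is a hypothetical helper name.

```python
import numpy as np

def pca_false_color(emb: np.ndarray) -> np.ndarray:
    """Project (C, H, W) embeddings onto 3 principal components as an (H, W, 3) RGB image."""
    C, H, W = emb.shape
    X = emb.reshape(C, -1).T.astype(np.float64)  # (H*W, C)
    X = X - X.mean(axis=0)                       # center each dimension
    # Rows of Vt are the principal axes, ordered by explained variance
    _, _, Vt = np.linalg.svd(X, full_matrices=False)
    comps = X @ Vt[:3].T                         # (H*W, 3)
    # Assumed display stretch: clip each component to its 2nd-98th percentile
    lo = np.percentile(comps, 2, axis=0)
    hi = np.percentile(comps, 98, axis=0)
    rgb = np.clip((comps - lo) / (hi - lo + 1e-12), 0.0, 1.0)
    return rgb.reshape(H, W, 3)

# Synthetic stand-in for a dequantized embedding raster
rng = np.random.default_rng(2)
emb = rng.normal(size=(192, 10, 12))
rgb = pca_false_color(emb)  # ready for display, e.g. with matplotlib's imshow
```

Because the first three components capture the directions of greatest variance, pixels with similar embeddings receive similar colors without any labels.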

From export to insight