Devoured - April 23, 2026

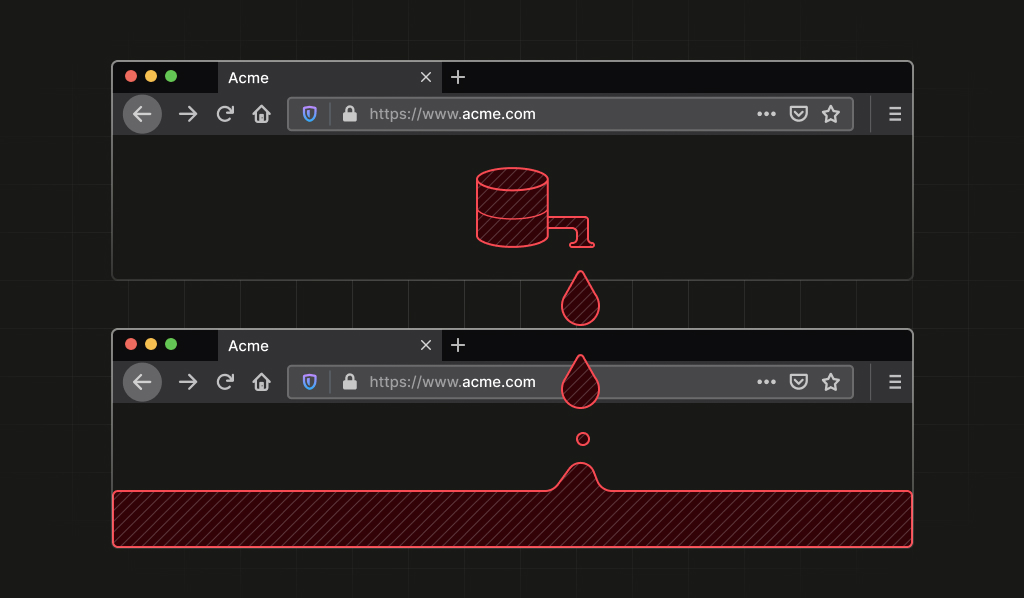

Microsoft is moving all GitHub Copilot subscribers to token-based billing in June, while Anthropic detailed production patterns for MCP-based agents surpassing 300M SDK downloads monthly. On the model side, Qwen3.6-27B delivers flagship-level coding performance in a 16.8GB quantized package, and a critical Firefox vulnerability linking Tor identities via IndexedDB ordering has been patched in Firefox 150.

OpenAI launched workspace agents in ChatGPT, enabling teams to create shared AI assistants that handle complex workflows like code generation, reporting, and communication.

Original article

OpenAI introduced workspace agents in ChatGPT, allowing teams to create shared AI agents for complex tasks and workflows. These agents, powered by Codex, perform tasks like generating reports, writing code, and managing communication, while integrating with various tools like Slack. Workspace agents are currently available in research preview for select ChatGPT plans, aiming to streamline collaboration and improve productivity.

Google debuts Workspace Intelligence for Gemini Workspace (4 minute read)

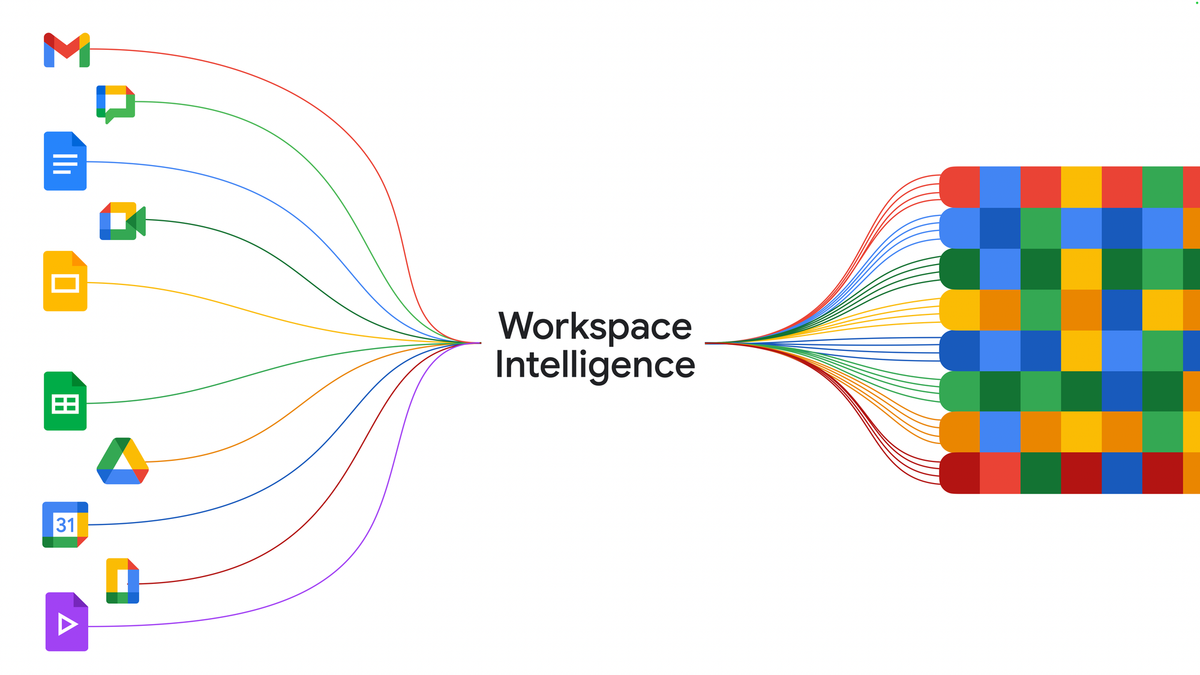

Google launched Workspace Intelligence to give Gemini AI agents cross-application context across all Google Workspace tools, turning the productivity suite into an integrated AI control layer.

Deep dive

- Workspace Intelligence creates a semantic layer that understands relationships between emails, chats, files, collaborators, and active projects across Google Workspace

- Google Chat with Gemini becomes a command-line interface for work, handling daily briefings, file retrieval by description, document generation, and meeting scheduling

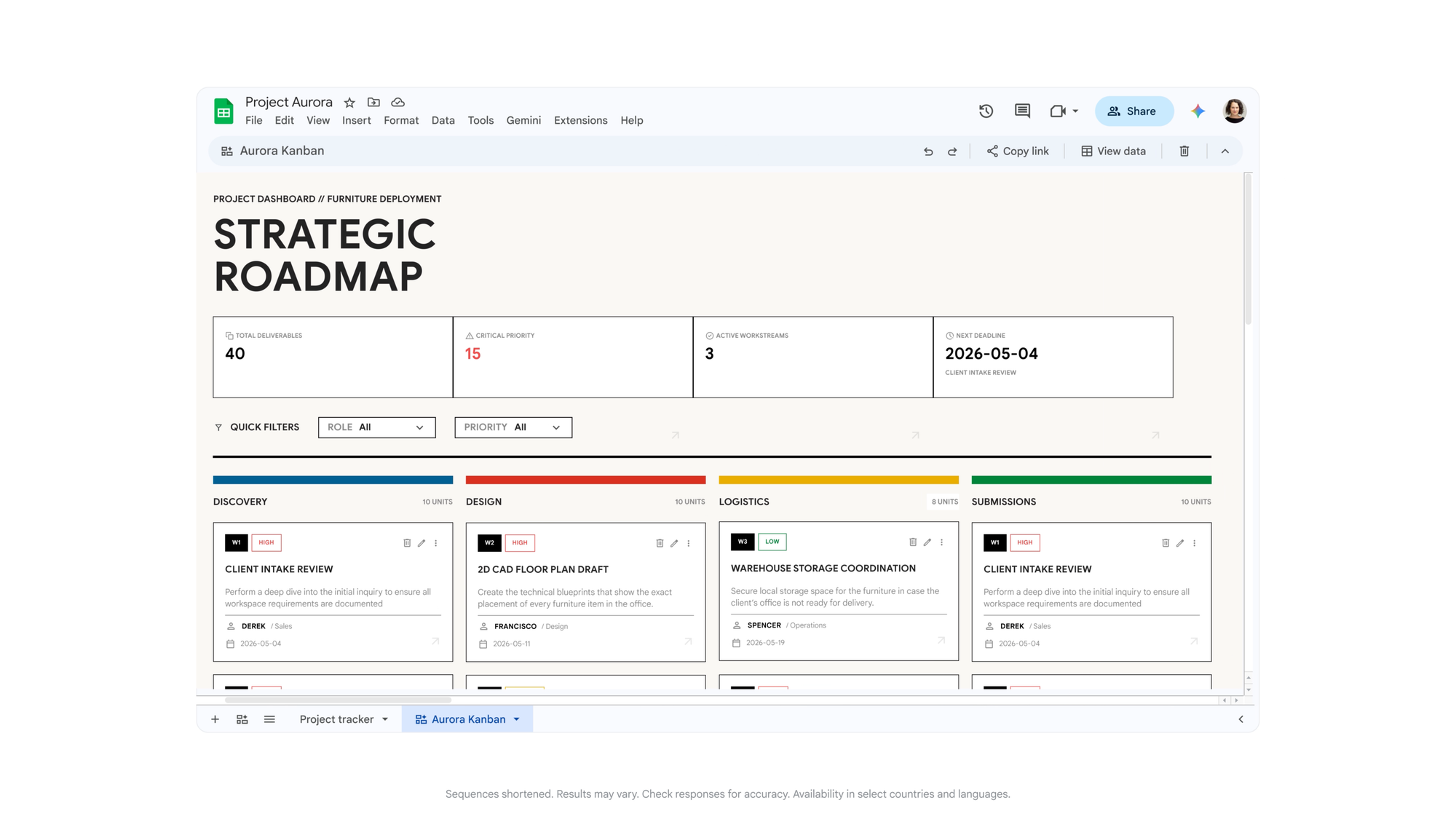

- Sheets gains natural-language spreadsheet creation, third-party imports from HubSpot and Salesforce, and a canvas layer for dashboards and kanban views

- Docs can now generate data-grounded infographics, batch-edit images for consistency, and automate comment triage

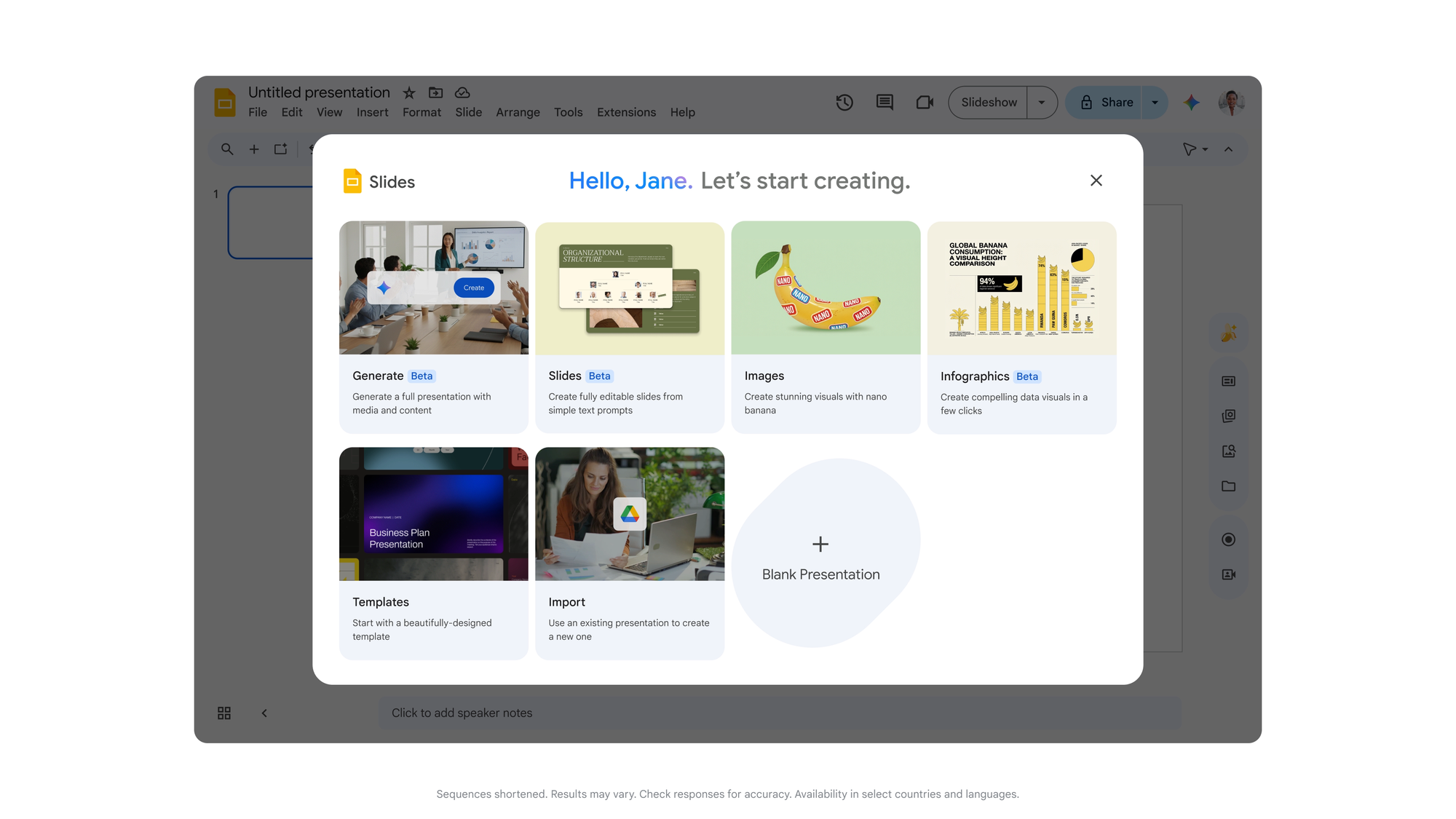

- Slides generates full editable decks in one pass using company templates and visual rules, moving beyond slide-by-slide creation

- Gmail receives AI Inbox and AI Overviews in search to surface relevant information automatically

- Drive Projects launches as a shared context hub that combines files and related emails in one workspace

- Security architecture runs on compliant infrastructure with customer data isolation, no use for ads or unauthorized model training, and admin governance tools

- Sovereign data controls available for US and EU, with Germany and India planned for future expansion

- Workspace MCP Server enables external AI apps and agents to connect to Workspace data and actions

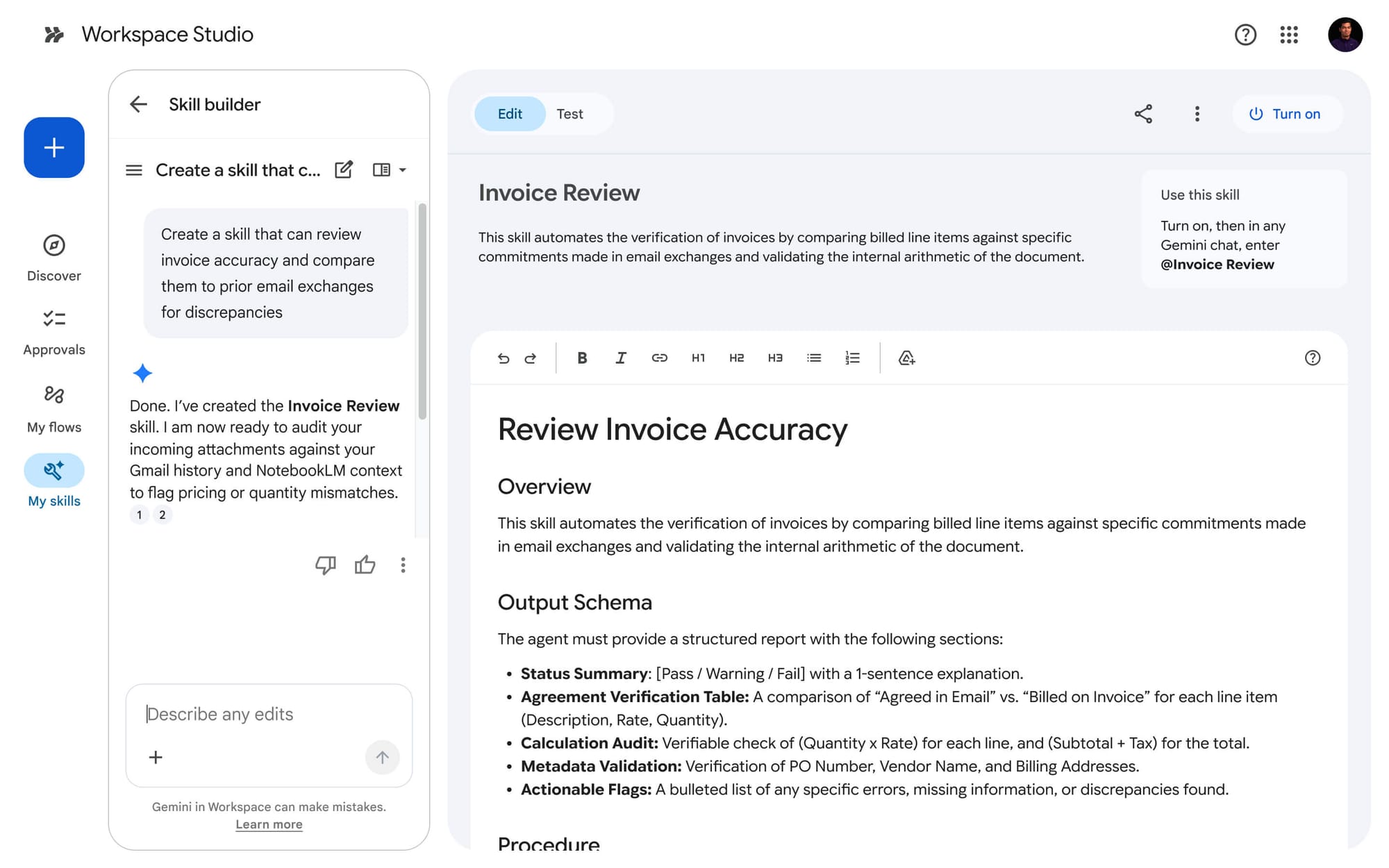

- Builds on Workspace Studio (December 2025 GA) and Personal Intelligence in Gemini app (January 2026)

- Rollout is staged with some features available now, others in preview or private preview including Workspace actions in Gemini Enterprise and auto-browse in Chrome Enterprise

Decoder

- Semantic layer: A data abstraction layer that understands relationships and meaning between different data sources, not just raw data storage

- Workspace Intelligence: Google's system that maps and connects context across all Workspace applications for AI agent use

- MCP Server: Model Context Protocol server that allows external AI applications to access and interact with Workspace data

- Workspace Studio: No-code platform for building and sharing custom AI agents within Google Workspace

- Client-side encryption: Data encryption that happens on the user's device before transmission, keeping keys away from the service provider

- Sovereign data controls: Governance features that ensure data processing and storage comply with specific country regulations and remain within geographic boundaries

Original article

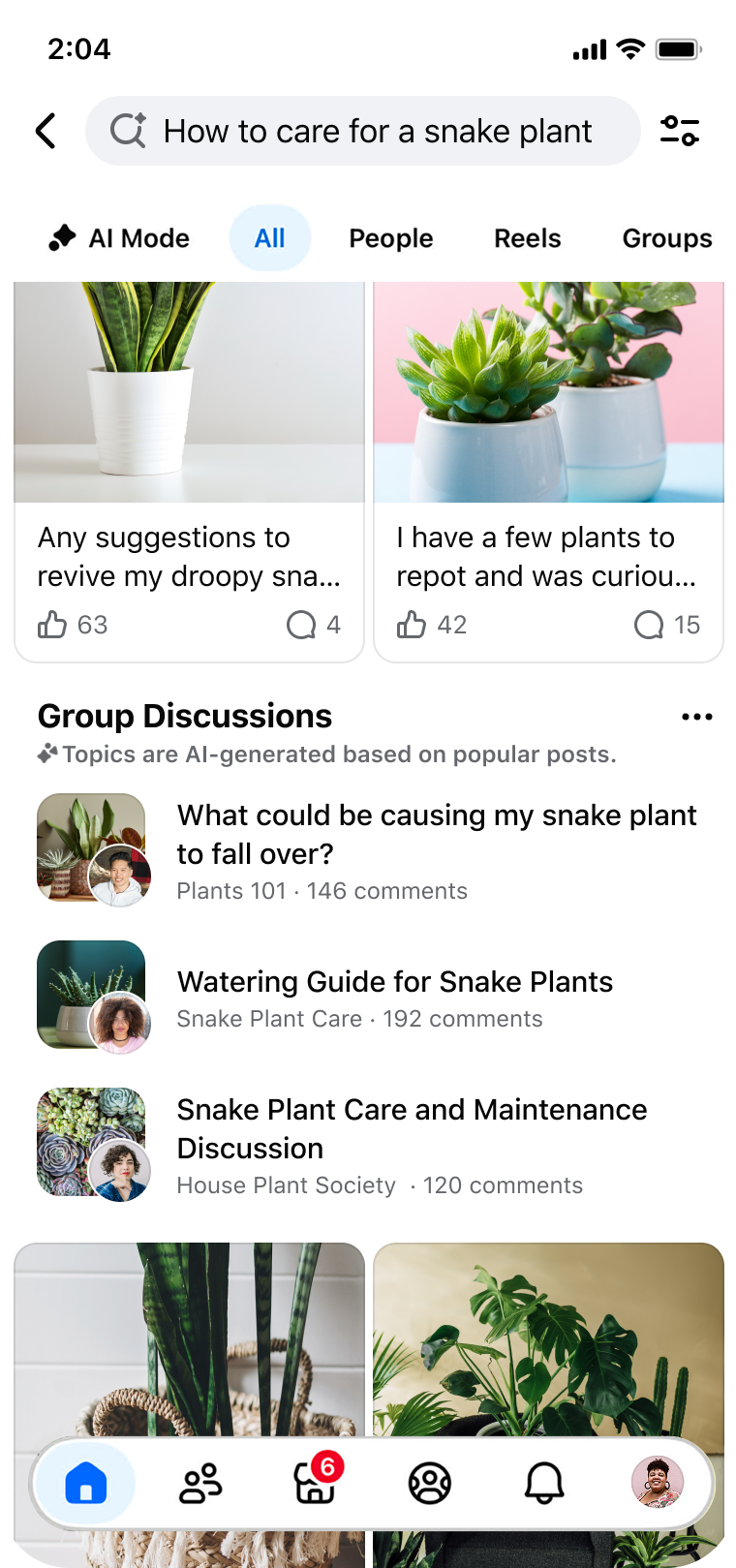

Google used Cloud Next on April 22, 2026, to introduce Workspace Intelligence, a new semantic layer for Google Workspace that maps emails, chats, files, collaborators, and active projects into shared context for Gemini-powered agents. The idea is to shift Workspace from a set of separate productivity apps into a system that can understand what a worker is trying to do, pull together the right context in real time, and act on it across the suite. Cloud Next '26 runs April 22 through April 24 in Las Vegas, and Google had already signaled before the event that connected enterprise workflows, agentic collaboration, and security would be central to its Workspace push.

Workspace Intelligence is aimed at organizations that want AI to do more than draft text. Google says the system can gather relevant material across Workspace, rank priorities, track key stakeholders, and adapt outputs to a user's writing, formatting, and communication patterns. In practice, that gives Google Chat a larger role. Ask Gemini in Chat is being positioned as a command line for work, with daily briefings, file retrieval by description, document and slide generation, and meeting scheduling tied to the rest of a company's Workspace footprint.

Google paired the launch with a wide product update across the suite. Sheets is getting natural-language spreadsheet building, third-party imports from apps such as HubSpot and Salesforce, and a new canvas layer for dashboards, heat maps, and kanban-style views. Docs can generate infographics grounded in business data, edit batches of images for visual consistency, and handle comment triage. Slides can now generate full editable decks in one pass using company templates and visual rules. Gmail is getting AI Inbox and AI Overviews in search, while Drive adds Drive Projects as a shared context hub for files and email.

The move also fits into a broader product arc Google has been building for months. Google Workspace Studio reached general availability in December 2025 as a no-code way to build and share AI agents inside Workspace, and Google introduced Personal Intelligence in the Gemini app in January 2026 to connect Gemini with user data from Google apps. Workspace Intelligence brings that context-first model deeper into the business stack, where Google is now tying it directly to Docs, Sheets, Slides, Drive, Gmail, and Chat.

Security is central to the pitch because Google is asking companies to let AI reason across mail, files, chat, and calendars. Google says Workspace Intelligence runs on the same compliant infrastructure as the rest of Workspace, with customer data not used for ads or for model training outside Workspace without permission. Admin controls, agent governance tools, client-side encryption, and sovereign data controls for the US and EU are part of the rollout, with Germany and India named as future countries for expanded data processing and storage controls. Some companion features are rolling out in the coming weeks, while others remain in preview or private preview, including Workspace actions inside the Gemini Enterprise app, Gemini auto browse in Chrome Enterprise for US customers, and a new Workspace MCP Server for outside AI apps and agents.

For Google, this is another step in turning Workspace into a control layer for everyday business operations rather than a place where documents and messages simply live. The company has spent the past year pushing Gemini deeper into Docs, Drive, Meet, Gmail, and Chat, while using Cloud Next to sell that stack to larger organizations that care about governance, cross-app context, and migration from Microsoft 365. Google Workspace has long ranked among the company's biggest business software products, and this launch shows Google now wants that installed base to serve as the context engine for enterprise agents as well.

Ex-OpenAI researcher Jerry Tworek launches Core Automation to build the most automated AI lab in the world (1 minute read)

A former OpenAI researcher has launched an AI lab focused on automating research itself and developing alternatives to current pre-training and transformer architectures.

Decoder

- Pre-training: The initial phase of training large language models on massive datasets before fine-tuning for specific tasks

- Reinforcement learning: A machine learning approach where models learn by receiving rewards or penalties for their actions

- Transformers: The neural network architecture underlying models like GPT and Claude, which uses attention mechanisms to process sequences

Original article

Jerry Tworek, a former OpenAI researcher, has unveiled his new AI lab, "Core Automation," with the goal of building "the most automated AI lab in the world," starting by automating its own research.

Instead of chasing ever-larger models trained on more data, Core Automation says it's developing new learning algorithms that go beyond pre-training and reinforcement learning, plus architectures designed to scale better than transformers.

The team pulls together experts in frontier models, optimization, and systems engineering. The vision is small teams with capable AI agents doing work that used to take entire organizations.

Tworek left OpenAI in January 2026 after seven years, saying this kind of fundamental research was no longer possible there. In his view, deep learning research "is done."

Core Automation joins a growing list of so-called Neo Labs founded by OpenAI alumni and others, including Thinking Machines Lab (led by the former CTO) and Safe Superintelligence (led by the former chief scientist). They all share the belief that real progress in AI now depends on fundamentally new approaches.

Perplexity details its two-stage training approach, which combines supervised fine-tuning with reinforcement learning to build language models that search effectively while maintaining factual accuracy.

Deep dive

- Perplexity uses a two-stage training pipeline: supervised fine-tuning (SFT) to teach basic search behavior, then reinforcement learning (RL) to optimize for accuracy and efficiency

- The approach deliberately separates compliance training from search capability improvement to maintain safety guardrails while enhancing performance

- Built on Qwen3 base models as the foundation for search-augmented capabilities

- Reinforcement learning phase optimizes for multiple objectives simultaneously: factual accuracy, user preference alignment, and efficient tool usage

- Models showed improved performance on FRAMES and FACTS OPEN benchmarks measuring factual accuracy in open-domain questions

- Achieved lower cost per query compared to baseline models, making the approach more economically viable at scale

- Demonstrates better tool-use efficiency than GPT-5.4, using search capabilities more judiciously

- The separation allows the model to learn when to search versus when to rely on its parametric knowledge

Decoder

- SFT (Supervised Fine-Tuning): Training a model on labeled examples to teach it specific behaviors or capabilities

- RL (Reinforcement Learning): Training approach where models learn by receiving rewards or penalties for their actions

- Search-augmented language models: LLMs that can query external search systems to retrieve current information before generating responses

- FRAMES: Benchmark for evaluating factual accuracy in language model responses

- FACTS OPEN: Open-domain factual accuracy benchmark testing models on verifiable claims

- Qwen3: Base language models from the Qwen series used as Perplexity's starting point

- Tool-use efficiency: How effectively a model decides when and how to use external tools like search

- Guardrails: Safety mechanisms preventing models from generating harmful or inappropriate content

Original article

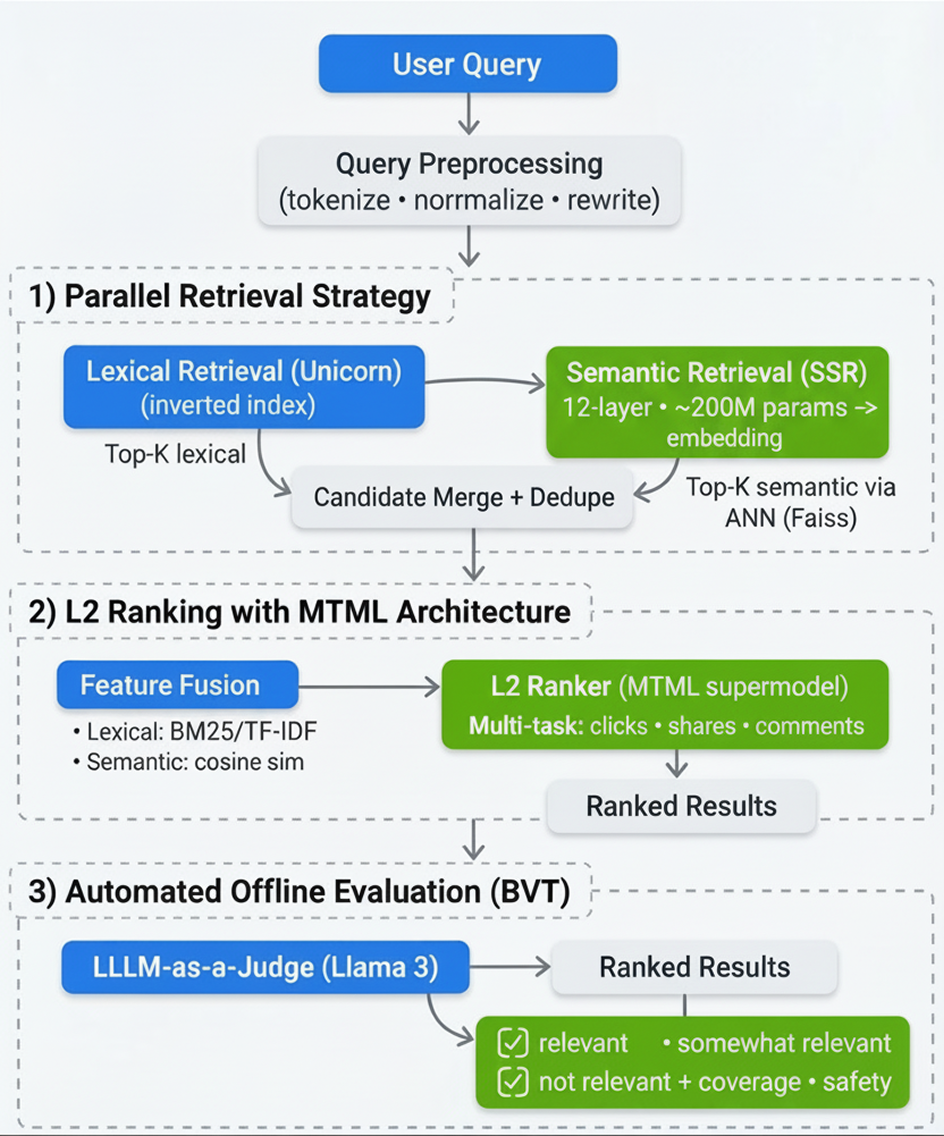

Applied Compute released an open-source benchmark for testing LLM inference engines on agentic workloads, revealing that multi-turn tool-calling agents stress KV cache management far differently than traditional chatbot benchmarks.

Deep dive

- Traditional LLM benchmarks use fixed single-turn patterns like 1k input/8k output tokens, designed for simple chatbot interactions rather than complex agent workflows

- Production agentic workloads average about 20 tool turns per trace but can extend into hundreds, with assistant responses of 200-300 tokens and tool outputs around 500 tokens, both with heavy tails into tens of thousands

- The three released workload profiles cover SWE-Bench coding tasks with heavy tool harnesses, terminal-based repository search for code QA, and office work involving document manipulation

- Metrics that matter depend on deployment context: batch workloads prioritize completion throughput per GPU, background tasks with SLAs need bounded end-to-end trace latency, and interactive agents require low time-to-first-answer-token

- Time to first answer token (TTFAT) is distinct from time to first token (TTFT) because agents complete many intermediate tool-calling turns before producing the final user-visible response

- The benchmarking harness replays real production traces by having each trace occupy one concurrency slot for its full lifetime, issuing each assistant turn as a new completion request

- Benchmarks of vLLM and SGLang running DeepSeek R1 on 8xB200 GPUs show comparable performance between the engines, with both degrading noticeably at high concurrency

- KV cache capacity emerges as the primary bottleneck, with eligible cache hit rates dropping significantly when concurrency is too high, causing evictions and reduced throughput

- Using a mean trace approach (averaging all quantities) overestimates throughput by 10-20% compared to replaying the full workload distribution, due to the convex cost of larger requests

- Recommended optimization directions include KV cache offloading to host RAM or disk, workload-aware routing policies based on cache residency and predicted trace lengths, and shared prefix optimization for post-training rollouts

Decoder

- KV cache: Key-Value cache storing intermediate attention computations to avoid recomputing them across turns

- TTFT: Time to First Token, measuring latency from request to first generated token on a single turn

- TTFAT: Time to First Answer Token, measuring latency from trace start to first token of the final user-visible response

- Prefill: Processing input tokens before generation begins

- Decode: The token generation phase after prefill

- SWE-Bench: Software Engineering Benchmark for testing coding agents on real GitHub issues

- TP/EP: Tensor Parallelism and Expert Parallelism strategies for distributing model computation across GPUs

- MFU: Model FLOPS Utilization, measuring compute efficiency as percentage of theoretical peak

- vLLM/SGLang: Popular open-source inference engines optimized for serving LLMs at scale

Original article

Large language model inference engines are typically benchmarked with prompt-heavy, decode-heavy, or balanced workloads. InferenceX from SemiAnalysis, for example, tests a workload with a fixed number of input and output tokens (e.g. 1,000 tokens in, 8,000 tokens out). Before the advent of agents that could aggressively call tools, most workloads were simple: chatbots that would think while answering a math problem, API calls that would summarize a long body of text, or coding autocomplete that would take in the current file and emit a short suggestion.

Agentic applications today have a very different shape: multi-turn, tool-using workloads that have produced a surge in the demand for inference capacity. These workloads present a new set of challenges compared to the single-turn pattern, including KV cache management from long-running traces, scheduler pressure from a large volume of short output requests, and heavy-tailed token distributions.

In this post, we'll share some learnings from our production post-training runs and deployments on what these traffic patterns look like. We release three distinct workload profiles and an open-source benchmarking harness for replaying them, with the hope that these will help define clearer targets when optimizing inference engines and hardware accelerators. We also clarify a few of the metrics that are important for different deployment contexts including batch, background, and interactive agents.

How modern workloads differ

Inference benchmarking today primarily consists of single-turn, single-request workloads where we send a prompt of P tokens, generate D tokens, and then measure time-to-first-token, tokens-per-second, and completion throughput. Engines are load-tested on a sample of these (P, D) pairs: 1k/8k for decode-heavy, 8k/1k for prefill-heavy, and 1k/1k for balanced. Those workloads are designed to model human-chatbot interactions where the input/output patterns are for short question-answer sessions or multi-turn interactions with relatively short user inputs and negligible latency between user interactions.

In contrast, a session with an agent produces a back-and-forth somewhat resembling a multi-turn user conversation: the model thinks and generates a response, calls a tool, receives the output after waiting for a response from a tool server, and generates again given the tool's output as context. This loop repeats up to hundreds of times and completes when the model no longer needs to call a tool. Each tool call output requires a new round of prefill before appending to the cache built up over previous turns, and between turns the server must decide whether to keep or evict that cache while the tool executes.

Over a hundred of our production multi-turn post-training runs sampled from different deployments, we observe a mean of about twenty tool turns per trace, with a long tail into the hundreds. Assistant responses within a turn are centered around 200-300 tokens while tool outputs are concentrated around 500 tokens, but both have a meaningful tail that extends into the tens of thousands. Input prompts are centered around 10k tokens, mostly from long system prompts that include tool descriptions. Tool-call latencies are short overall, around one second, but can extend past hundreds of seconds in the tail.

We pull three real workloads for our experiments.

- An agentic coding workload involving tasks from SWE-Bench Verified with a heavy tool harness that takes up thousands of tokens.

- A lightweight code QA workload that involves agentic terminal-based search over a repository to answer user questions.

- An office work workload involving document, spreadsheet, and slide deck manipulation over a large filesystem with a heavy tool harness.

Statistics from production deployments

We use the following terminology:

- A single request is the set of input parameters into the HTTP POST for an engine's /completions or /chat/completions endpoint. An 8k-in, 1k-out workload is effectively just one request.

- A trace is a single session with an agent, consisting of all its requests and tool calls. One Claude Code or Codex session, for example, can be considered a trace.

- A workload is a set of traces, typically captured during production inference.

We can model a trace with the following attributes:

- Input prompt length: the token count of the initial request, including system prompt, tool definitions, and user prompt.

- Number of turns: how many tool-call round trips occur. This determines how many completion requests a single conversation generates.

- Assistant output per turn: the number of sampled tokens at each step. This is the generation component of each request that the inference engine runs.

- Tool output per turn: the number of new tokens appended as context between turns from the model executing tools against the environment.

- Tool call latency: the wall-clock delay, in seconds, between receiving the model's response and sending the next request.

While the last point may seem like an implementation detail, it can meaningfully contribute to observed throughput by affecting scheduler and cache eviction decisions. Correspondingly, this affects the optimal concurrency and queries-per-second for an engine.
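The trace attributes listed above can be written down as a small data model. This is a minimal sketch, not the harness's actual schema: the class and field names are hypothetical, and it assumes one completion request per tool-calling turn plus one final request for the user-visible answer.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    """One tool-call round trip (field names are illustrative assumptions)."""
    assistant_tokens: int     # tokens sampled by the model in this turn
    tool_output_tokens: int   # new context appended from the tool result
    tool_latency_s: float     # wall-clock wait before the next request


@dataclass
class Trace:
    """A single agent session: an initial prompt plus its tool-call turns."""
    input_prompt_tokens: int  # system prompt + tool definitions + user prompt
    turns: List[Turn]
    final_response_tokens: int

    def num_requests(self) -> int:
        # Assumption: one request per tool-calling turn, plus the final answer.
        return len(self.turns) + 1

    def prompt_lengths(self) -> List[int]:
        """Prompt length the engine sees at each successive request."""
        lengths = [self.input_prompt_tokens]
        context = self.input_prompt_tokens
        for turn in self.turns:
            # The next request carries everything so far plus the new tool output.
            context += turn.assistant_tokens + turn.tool_output_tokens
            lengths.append(context)
        return lengths
```

The `prompt_lengths` method makes the growth of the accumulated prompt explicit, which is what drives KV cache pressure over a long trace.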

These three workloads are different, but share a few core elements. The number of tool calls is typically in the dozens while assistant output per turn and tool output per turn are usually in the low hundreds of tokens. Most attributes have heavy tails, especially number of turns, assistant tokens per turn, tool output tokens per turn, and tool call latency.

Of the three, the agentic coding use case is relatively shorter-horizon but spikier in tool latency: it averages about 20 tool turns per trace, starts from a smaller prompt, ends with a shorter final response, but has a heavier tail in tool wait time. The office work use case averages about 41 turns per trace, begins with a larger prompt, produces larger tool outputs overall, and ends with much longer final responses. The code QA use case has the most range with the number of tool call turns going up to 200, showing the difficulty of some of the tasks in the workload.

Note that for the purposes of the workload we collapse parallel tool calls into one, so a parallel tool call with t1 and t2 tool call output tokens respectively would be logged in a workload file as one tool call output of size t1 + t2. This doesn't change workload semantics as from the engine's perspective parallel tool call outputs are still just one larger prefill request.

Why capture full workloads?

For simplicity, we could instead compute the mean trace, where each quantity is the average across the workload. For example, for the office work use case, the mean trace would have 41 turns and 8.9k input prompt tokens. We could then replay just this trace repeatedly. Unfortunately, this would overstate engine performance on the real workload because:

- There is more request scheduling and KV allocation pressure with high variance requests.

- LLM inference is "convex": a request with twice the input prompt length is more than twice as expensive. Meanwhile a request with half the input prompt length is less than half as expensive.

We show this ablation in the appendix.
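The convexity argument can be illustrated with a toy cost model. The sketch below is an assumption for illustration only: the cost function (a linear term plus a quadratic, attention-like term), its coefficients, and the sample prompt lengths are all made up and are not measurements from the workloads.

```python
from statistics import mean


def prefill_cost(prompt_tokens: float, linear: float = 1.0, quadratic: float = 1e-4) -> float:
    """Toy convex cost: a linear term plus a quadratic, attention-like term."""
    return linear * prompt_tokens + quadratic * prompt_tokens ** 2


# Made-up heavy-tailed sample of prompt lengths (not real workload data).
prompt_lengths = [2_000, 4_000, 6_000, 8_000, 40_000]

# Cost of replaying the single "mean" request.
cost_of_mean = prefill_cost(mean(prompt_lengths))

# Average cost of replaying the actual distribution.
mean_of_costs = mean(prefill_cost(n) for n in prompt_lengths)

# For any convex cost, the second quantity is at least as large as the first,
# so a mean-only replay understates cost and overstates throughput.
understated_by = mean_of_costs / cost_of_mean
```

Under this toy model the long-tail request dominates the average cost, which is the effect a mean trace hides.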

Metrics for batch, background, and interactive deployments

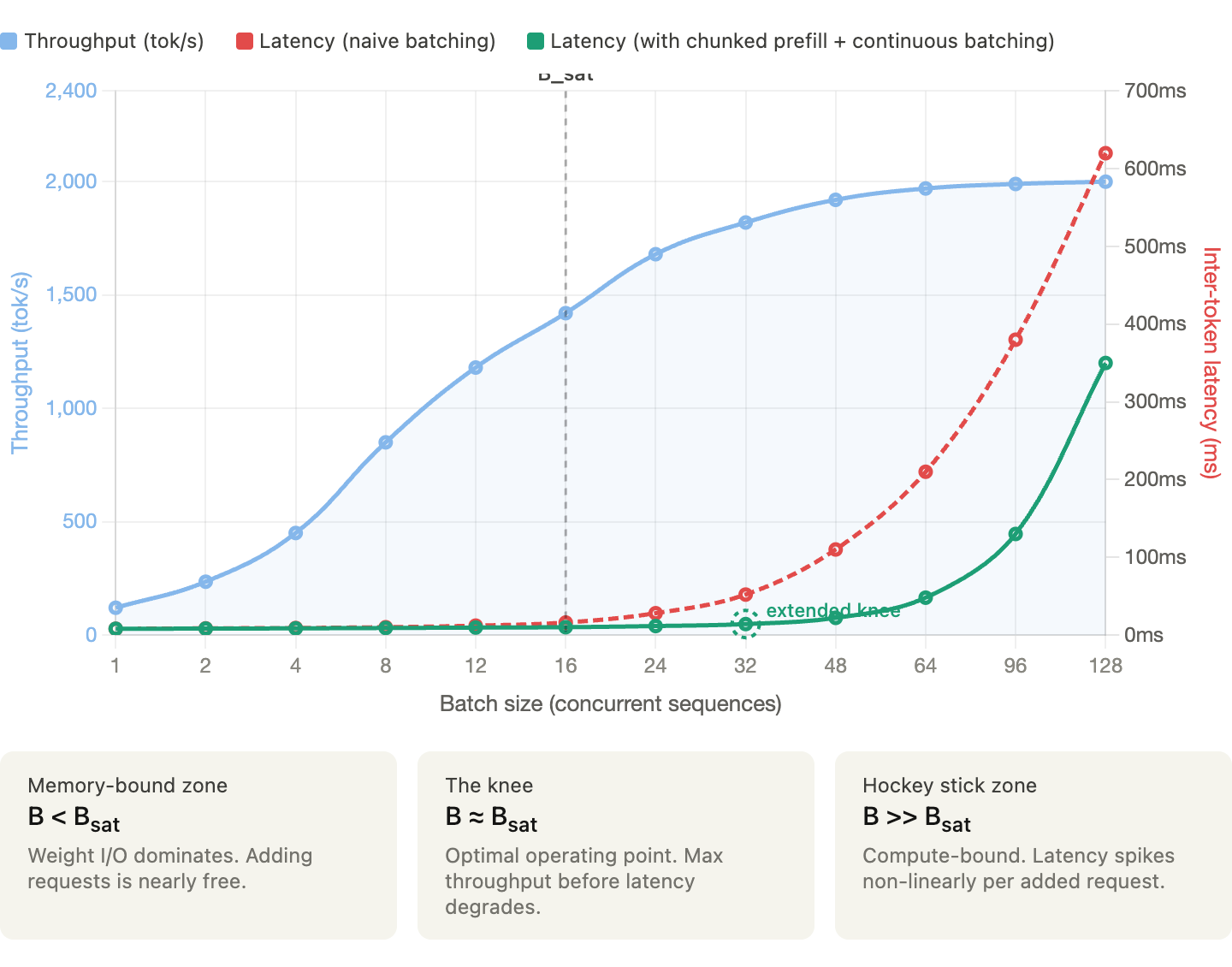

We evaluate engines by replaying the same workload against each endpoint. For each engine configuration, we sweep concurrency at a fixed run duration and report both per-trace latency metrics and per-workload throughput metrics. Which metrics matter most depends on the deployment context. We consider three.

1. Pure throughput (minimizing $/token). For workloads that are not sensitive to latency — asynchronous tasks, synthetic data generation, RL training — the goal is to maximize throughput per dollar. We use completion throughput per GPU as the primary measure of system goodput, since the inference engine's primary task is to sample new tokens. We don't consider pure MFU because a system with poor KV cache management could have very high overall FLOPs utilization from constantly prefilling evicted tokens, but would take much longer to complete the overall workload.

Steady-state throughput is computed from the later portion of the benchmark after excluding the initial warmup period. We build a cumulative completion-token curve over benchmark time, drop the first 20% of benchmark wall-clock time, and report throughput from the remaining portion. This reduces the influence of startup effects such as an initially empty cache and short early sequence lengths. We also report total prompt throughput, cached prompt throughput, uncached prompt throughput, and cache hit rate as diagnostic metrics.

2. Background tasks with an SLA (meeting a time budget). Many agentic workloads fall between pure batch and fully interactive: for example, a coding agent running in CI, a deep research task kicked off in the background, or an enterprise workflow that must be completed before a review in a few hours. The user is not directly working in sync with the agent, but it is still valuable to bound how long the request takes to complete. Here the headline metric is end-to-end trace latency: the time from the first request of a trace to the final token of the final response. Throughput still matters because it determines the cost per task, but individual traces must also finish within the SLA. Tail latency (p90, p99) becomes especially important to track.

3. User-facing agents (streaming to a user). When a user is working in sync with an agent, an important latency metric is time to first answer token (TTFAT): the wall-clock time from the start of a trace to the first streamed token of the final, user-visible assistant response. This is distinct from the standard time to first token (TTFT), which measures only the latency of the first turn's prefill. In a multi-turn agentic workflow, the model may complete many intermediate tool-calling turns before producing the answer the user actually sees. The right metric is partly a product decision: some applications may stream intermediate steps rather than waiting for the final answer. Interactivity, defined as streaming tokens per second per user, also matters here, since a fast first answer token that trickles out slowly can result in a degraded experience.
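The three headline metrics above can be sketched as small helper functions. This is a hedged illustration, not the harness's code: the function names, the input shapes, and the nearest-rank percentile method are assumptions, and the benchmark duration is taken to be the timestamp of the last sample.

```python
import math
from typing import Sequence, Tuple


def steady_state_throughput(
    samples: Sequence[Tuple[float, int]], warmup_fraction: float = 0.2
) -> float:
    """Completion tokens per second after dropping the warmup window.

    `samples` is a cumulative curve of (seconds_since_start, total_completion_tokens),
    sorted by time and assumed to start at t=0.
    """
    end_time, end_tokens = samples[-1]
    cutoff = warmup_fraction * end_time
    # The first sample at or after the cutoff starts the steady-state window.
    start_time, start_tokens = next(s for s in samples if s[0] >= cutoff)
    if end_time <= start_time:
        raise ValueError("need at least two samples after the warmup window")
    return (end_tokens - start_tokens) / (end_time - start_time)


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile, e.g. pct=99 for p99 end-to-end trace latency."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def ttfat(trace_start: float, final_answer_first_token: float) -> float:
    """Time to first answer token: trace start to first token of the final response."""
    return final_answer_first_token - trace_start


def ttft(first_request_sent: float, first_turn_first_token: float) -> float:
    """Time to first token: covers only the first turn of the trace."""
    return first_turn_first_token - first_request_sent
```

For a trace with many tool-calling turns, `ttfat` will typically be far larger than `ttft`, since it includes every intermediate turn and tool wait.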

An open-source harness for replaying agent traces

To study these workloads, we release a small evaluation harness for replaying multi-turn agentic traces against OpenAI-compatible inference endpoints. The harness is lightweight at <1k lines of Python. It replays traces by having each occupy one concurrency slot for its full lifetime, including tool wait time, and each successive assistant turn is issued as a new completion request against the accumulated prompt.
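The replay rule described above (one concurrency slot per trace for its full lifetime, one new completion request per assistant turn) can be sketched with `asyncio`. This is not the released harness: the trace dictionary keys are hypothetical, the `complete` callable stands in for a real HTTP call to an OpenAI-compatible endpoint, and the sketch replays each trace once instead of running for a fixed duration.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

# (prompt_tokens, max_new_tokens) -> awaitable; a stand-in for a real endpoint call.
CompleteFn = Callable[[int, int], Awaitable[None]]


async def replay_trace(trace: Dict, complete: CompleteFn, slots: asyncio.Semaphore) -> int:
    """Replay one trace while holding a single concurrency slot throughout."""
    async with slots:
        context = trace["input_prompt_tokens"]
        requests = 0
        for turn in trace["turns"]:
            # Each assistant turn is a new completion request over the accumulated prompt.
            await complete(context, turn["assistant_tokens"])
            requests += 1
            # The slot stays occupied while the simulated tool call runs.
            await asyncio.sleep(turn["tool_latency_s"])
            context += turn["assistant_tokens"] + turn["tool_output_tokens"]
        # The final request produces the user-visible answer.
        await complete(context, trace["final_response_tokens"])
        return requests + 1


async def replay_workload(traces: List[Dict], complete: CompleteFn, concurrency: int) -> List[int]:
    """Replay all traces with at most `concurrency` traces in flight at once."""
    slots = asyncio.Semaphore(concurrency)
    return await asyncio.gather(*(replay_trace(t, complete, slots) for t in traces))
```

Holding the semaphore across the tool wait is the important design choice: idle-but-alive traces still count against concurrency, which is what forces the engine to decide whether to keep or evict their cache.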

We release three primary workload files: agentic_coding_8k.jsonl, code_qa_8k.jsonl, and office_work_8k.jsonl. These are concrete recorded traces from production workloads. They correspond to the distributions shown above.

The suite reports two classes of metrics. The first are per-trace metrics, which summarize the distribution of end-to-end trace latency, time to first answer token, interactivity, cache hit rate, and eligible cache hit rate across completed traces. Eligible cache hit rate measures hit rate only on the prefix tokens that are expected to be cacheable, excluding tokens from the initial request's input prompt and subsequent requests' most recent tool call output. The second are per-workload metrics, which summarize total prompt throughput, cached prompt throughput, uncached prompt throughput, and completion throughput, each reported as overall, last-30-second, and steady-state values, with an optional per-GPU normalization.
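One possible reading of the eligible cache hit rate is sketched below. The interpretation is an assumption: the first request of a trace contributes no eligible tokens, and for each later request the eligible prefix is the prompt minus the most recent tool output. The tuple layout and function name are hypothetical.

```python
from typing import List, Tuple

# One tuple per request in a trace:
# (prompt_tokens, cached_tokens, newest_tool_output_tokens).
# The third field is ignored for the first request.
RequestStats = Tuple[int, int, int]


def cache_hit_rates(requests: List[RequestStats]) -> Tuple[float, float]:
    """Return (overall hit rate, eligible hit rate) for one trace."""
    total_prompt = sum(prompt for prompt, _, _ in requests)
    total_cached = sum(cached for _, cached, _ in requests)

    eligible = 0
    eligible_hits = 0
    # Skip the first request: its prompt is new to the engine by assumption.
    for prompt, cached, newest_tool in requests[1:]:
        reusable = prompt - newest_tool  # prefix the engine has already processed
        eligible += reusable
        eligible_hits += min(cached, reusable)

    overall = total_cached / total_prompt if total_prompt else 0.0
    eligible_rate = eligible_hits / eligible if eligible else 0.0
    return overall, eligible_rate
```

Under this reading, an eligible rate well below 1.0 indicates that previously computed prefixes were evicted between turns, while the overall rate is always lower because it also counts tokens that could never have been cached.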

To launch a run, a user will specify an endpoint, a model name, a tokenizer, a workload file, a concurrency level, and a run duration.

    uv run python trie \
      workload_path=office_work_8k.jsonl \
      endpoint=<http-endpoint> \
      model=<served-model-name> \
      tokenizer_model=<model-name-or-tokenizer-path> \
      concurrency=24 \
      duration=3600 \
      num_gpus=8

Engine performance for DeepSeek R1

We evaluate all three workloads on vLLM and SGLang, each running a replica of DeepSeek R1 on an 8xB200 node with TP8EP8 for simplicity. For each engine, we mostly use the default configuration except for turning up CUDA graph batch size granularity. Setup commands are given in the appendix.

Each point in the main comparison is a two-hour run at a fixed concurrency, with streaming enabled so that we can measure time to first answer token and interactivity. Unless otherwise noted, the figure below sweeps concurrency in {8, 16, 24, 32, 40, 48}. The figure should be read as a Pareto plot. For the left-hand side, points that are higher and further right are preferable. For the right-hand side, points that are higher and further left are preferable. On these workloads, vLLM and SGLang are comparable. Naturally, these results are workload-, model-, and tuning-specific. Different settings may lead to more substantial differences.

We observe that both engines degrade noticeably once concurrency is turned up too high. This is due to KV cache evictions, which cause the cache hit rate to decrease. The figure below shows the eligible cache hit rate, which under ideal prefix caching conditions would be at or near 100%.

Conclusion

The surge in agentic use cases has increased demand for inference compute with meaningfully different workload characteristics. Knowing the end workload can be a powerful tool for better inference and hardware accelerator optimization. For example, knowing our workloads has motivated us to better tune concurrency, inference serving parallelism, quantization, and load balancer policies. Our tuning is metric-specific; for RL training, in particular, we care primarily about inference throughput. On the workloads above, we've observed that our primary bottleneck is KV capacity.

We hope this benchmark will encourage community efforts to optimize agentic inference workloads. Some further directions include:

KV cache offloading and eviction. Since KV capacity is a primary bottleneck for performance, offloading to host RAM or disk would be helpful. Workload statistics on tool call latency and context lengths could be incorporated into determining which blocks from which traces should be evicted first.

Multi-engine load balancing. Performance here can be measured with the same harness, but with the endpoint exposed by a router rather than a single engine.

Workload-aware routing. A router may benefit from reasoning over workload statistics: current cache residency, expected future turns, recent tool latencies, and predicted remaining trace length. This opens the door to workload-aware routing policies that preserve cache locality and avoid sending sessions to engines that, even if less busy now, will predictably be busy within a few turns.

Shared prefixes across requests. This is especially relevant in post-training where we generate multiple rollouts for a single task. A router policy should be aware of shared prefix overlap and try to route traces with the same prefix to the same engine.

Disaggregated layouts. When doing prefill-decode disaggregation, the optimal ratio of each type of worker depends closely on the ratio between assistant output tokens (decode) and tool output tokens (prefill).
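The shared-prefix direction above can be illustrated with a simple prefix-affinity rule. This is a sketch under stated assumptions, not a policy from the post: the function name and engine identifiers are hypothetical, and the rule ignores load, cache residency, and predicted trace length, which a real workload-aware router would also weigh.

```python
import hashlib
from typing import List, Sequence


def route_by_prefix(prefix_token_ids: Sequence[int], engines: List[str]) -> str:
    """Deterministically map a shared prefix to one engine.

    Traces that share the same prefix (for example, multiple rollouts of one
    task) land on the same engine, so its prefix cache can be reused.
    """
    key = ",".join(map(str, prefix_token_ids)).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(engines)
    return engines[index]
```

A hash-based rule like this keeps rollouts of the same task together without any shared state in the router, at the cost of possible load imbalance when a few prefixes dominate traffic.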

Appendix

1. Mean trace ablation

For the office work workload, we compute the mean trace where we average across all quantities. For any integer values (such as number of tool call turns) we round up. Despite this, we find that replaying the mean trace overestimates throughput by 10-20%, making the mean trace unrepresentative as an optimization target.

2. Engine configurations

For vLLM, we use the 'vllm/vllm-openai:latest' image.

    export VLLM_USE_FLASHINFER_MOE_FP8=1
    vllm serve /tmp/DeepSeek-R1 \
      --tensor-parallel-size 8 \
      --enable-expert-parallel \
      --trust-remote-code \
      --quantization fp8 \
      --enable-prompt-tokens-details \
      --cudagraph-capture-sizes 1 2 4 6 8 10 12 14 16 18 20 22 24 26 28 30 32 34 36 38 40 42 44 46 48 64 96 128 160 192 224 256 288 320 352 384 416 448 480 512 544 576 608 640 672 704 736 768 800 832 864 896 928 960 992 1024 1056 1088 1120 1152 1184 1216 1248 1280 1312 1344 1376 1408 1440 1472 1504 1536 1568 1600 1632 1664 1696 1728 1760 1792 1824 1856 1888 1920 1952 1984 2016 2048

For SGLang, we use the 'lmsysorg/sglang:v0.5.9' image due to CUDA graph instabilities. Also, prefill piecewise CUDA graphs were not enabled for SGLang because of compilation errors inside the 'flashinfer_trtllm' backend.

python -m sglang.launch_server \
  --trust-remote-code \
  --model-path /tmp/DeepSeek-R1 \
  --quantization fp8 \
  --model-loader-extra-config '{"enable_multithread_load": true, "num_threads": 8}' \
  --tp 8 \
  --enable-metrics \
  --enable-cache-report \
  --cuda-graph-max-bs 48 \
  --cuda-graph-bs 1 2 4 6 8 10 12 14 16 18 20 22 24 26 28 30 32 34 36 38 40 42 44 46 48
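The long batch-size lists in both commands follow a regular pattern, which the small helper below regenerates. This is only a reading of the numbers in the commands above (1, then even sizes up to 48, then steps of 32 from 64 to 2048 for vLLM); the function names are made up for illustration.

```python
def sglang_graph_sizes():
    """Batch sizes passed to --cuda-graph-bs: 1, then every even size up to 48."""
    return [1] + list(range(2, 49, 2))


def vllm_capture_sizes():
    """Sizes passed to --cudagraph-capture-sizes: the same small sizes, then 64..2048 in steps of 32."""
    return sglang_graph_sizes() + list(range(64, 2049, 32))


# Space-separated strings, ready to paste after the corresponding flag.
sglang_arg = " ".join(map(str, sglang_graph_sizes()))
vllm_arg = " ".join(map(str, vllm_capture_sizes()))
```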

A good AGENTS.md is a model upgrade. A bad one is worse than no docs at all (11 minute read)

Research shows well-crafted AGENTS.md files can boost AI coding agent quality as much as upgrading from a basic to advanced model, while poorly written ones make output worse than no documentation at all.

Deep dive

- A systematic study using real pull requests as evaluation data found that AGENTS.md quality variance equals multiple model generations—best files matched upgrading from Claude Haiku to Opus, worst files degraded output below baseline

- The same documentation file can have opposite effects on different tasks: one file boosted best practices 25% on a bug fix but dropped completeness 30% on a feature task by causing excessive exploration of reference materials

- Progressive disclosure (100-150 line core files with focused references) consistently outperformed comprehensive documentation, with gains reversing once main files exceeded that length

- Procedural workflows describing tasks as numbered steps were among the strongest patterns: a six-step integration deployment workflow cut the share of PRs with missing wiring files from 40% to 10%

- Decision tables that force upfront choices between similar approaches (e.g., React Query vs Zustand) improved adherence to codebase conventions by 25% by resolving ambiguity before code generation

- Real production code snippets of 3-10 lines improved code reuse by 20%, but more examples caused pattern-matching on the wrong abstractions

- The overexploration trap is the most common failure mode: excessive architecture overviews or long lists of warnings cause agents to read dozens of docs, load 80K+ irrelevant tokens, and produce worse output

- Documentation discovery is heavily skewed: AGENTS.md files are found 100% of the time, direct references 90%, directory READMEs 80%, nested READMEs 40%, and orphan docs in _docs/ folders under 10%

- Warning-only documentation ("don't do X") consistently underperformed when not paired with concrete alternatives ("use Y instead"), causing agents to become overly cautious and exploratory

- Module-level AGENTS.md files for mid-size modules (~100 core files) outperformed repo-root files, but even good docs failed when surrounded by massive documentation sprawl (in one module with 500K characters of specs, removing the AGENTS.md barely changed agent behavior)

- New architectural patterns not yet in the codebase cause agents to follow outdated AGENTS.md guidance—one agent built a polling solution when WebSockets were required because docs only covered existing REST patterns

- Different documentation patterns optimize different metrics: decision tables improve best practices adherence, procedural docs improve completeness, progressive disclosure reduces context rot, and "don't"+"do" pairs improve gotcha handling

Decoder

- AGENTS.md: A documentation file specifically written for AI coding agents rather than human developers, designed to help agents understand codebase patterns and conventions

- Haiku/Opus: Different tiers of Anthropic's Claude models, representing a significant quality gap (Haiku is faster/cheaper, Opus is more capable)

- AuggieBench: An internal evaluation suite that compares AI agent output against high-quality pull requests that were actually merged after senior engineer review

- Progressive disclosure: A documentation pattern that covers common cases at high level while pushing detailed information into separate reference files loaded on demand

- Context rot: When an AI agent loads too much irrelevant documentation into its context window, degrading output quality due to information overload

- Decision tables: Structured tables that help agents choose between similar approaches by mapping specific conditions to recommended solutions

Original article

We pulled dozens of AGENTS.md files from across our monorepo and measured their effect on code generation. The best ones gave our coding agent a quality jump equivalent to upgrading from Haiku to Opus. The worst ones made the output worse than having no AGENTS.md at all.

That gap was surprising enough that we built a systematic study around it.

What we found: most of what people put in AGENTS.md either doesn't help or actively hurts, and the patterns that work are specific and learnable.

The same file can help one task and hurt another by 30%

A single AGENTS.md isn't uniformly good or bad. The same file boosted best_practices by 25% on a routine bug fix and dropped completeness by 30% on a complex feature task in the same module.

On the bug fix, a decision table for choosing between two similar data-fetching approaches helped the agent pick the right pattern immediately and stay within codebase standards. On the feature task, the agent read that same file, got pulled into the reference section, opened dozens of other markdown files trying to verify its approach against every guideline, created unnecessary abstractions, and shipped an incomplete solution.

Different blocks of the document had opposite effects on different tasks.

What follows is which patterns work, which fail, and how to tell which is which for your codebase.

How we measured this

We used AuggieBench, one of our internal eval suites, to evaluate how well agents do our internal dev work. We start with high-quality PRs from a large repo that reflect typical day-to-day agent tasks, set up the environment and prompt, and ask the agent to do the same task. Then we compare its output against the golden PR, the version that actually landed after review by multiple senior engineers. We filtered out PRs with scope creep or known bugs.

For this study, we added two more filters: PRs had to be contained within a single module or app, and the scope had to be one where information in an AGENTS.md might plausibly help. We then ran each task twice, with and without the file, and compared scores.
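The with/without comparison boils down to a per-metric delta. The sketch below assumes the percentages quoted in this post are relative changes against the no-file run, which the post does not state explicitly; the metric names come from the article, while the scores are made up.

```python
def score_delta(with_doc, without_doc):
    """Relative change per metric, in percent, when AGENTS.md is present."""
    return {
        metric: round(100 * (with_doc[metric] - baseline) / baseline, 1)
        for metric, baseline in without_doc.items()
    }


# Hypothetical scores for one task, run once without and once with the file.
without_doc = {"best_practices": 0.60, "completeness": 0.80}
with_doc = {"best_practices": 0.75, "completeness": 0.56}
delta = score_delta(with_doc, without_doc)
```

Looking at deltas per metric and per task, rather than one aggregate score, is what exposes a file that helps one task and hurts another.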

What works

1. Progressive disclosure beats comprehensive coverage

Treat your AGENTS.md like a skill. Cover the common cases and workflows at a high level, then push details into reference files the agent can load on demand. Keep each reference's scope clear so the agent knows when to pull it in.

The 100–150 line AGENTS.md files with a handful of focused reference documents were the top performers in our study, delivering 10–15% improvements across all metrics in mid-size modules of around 100 core files. Once the main file got longer than that, the gains started reversing.

2. Procedural workflows take agents from failing to finishing

Describing a task as a numbered, multi-step workflow was one of the strongest patterns we measured. A well-written workflow can move the agent from unable to complete a task to producing a correct solution on the first try.

One example from our codebase: a six-step workflow for deploying a new integration. The agent followed it step by step. The share of PRs with missing wiring files dropped from 40% to 10%, and the agent finished faster on average. Correctness went up 25%. Completeness went up 20%.

For complex workflows, keep the main file concise and use reference files for branching cases.

3. Decision tables resolve ambiguity before the agent writes code

When your codebase has two or three reasonable ways to do something, decision tables force the choice up front. This is the pattern that most directly improved adherence to codebase conventions.

Example: resolving React Query vs Zustand for state management.

| Question | React Query | Zustand |
|---|---|---|
| Server is the only data source? | ✅ | |
| Multiple code paths mutate this state? | ✅ | |
| Need optimistic updates mixed with local state? | ✅ |

PRs in this area scored 25% higher on best_practices. The table resolved the ambiguity before the agent wrote a single line of code.

4. Examples from the real codebase improve code reuse

Short snippets of 3–10 lines from actual production code improved reuse and pattern adherence. Keep it to a few examples that are most relevant and not duplicative. More than that and the agent starts pattern-matching on the wrong thing.

Example: we included copy-paste templates for Redux Toolkit primitives: createSlice with typed initial state, createAsyncThunk with proper error handling, and the typed useAppSelector hook. code_reuse went up 20%. The agent followed the template instead of inventing its own state management pattern, and the codebase stayed consistent.

5. Domain-specific rules still matter

This is the pattern most people already associate with AGENTS.md: language- or org-specific gotchas.

Example: Use Decimal instead of float for all financial calculations. The agent catches truncation, rounding, and precision issues that it would otherwise miss. best_practices improves whenever the rule is directly relevant to the task.

This works when the rule is specific and enforceable. It stops working when you stack dozens of them. See the overexploration section below.
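To see why a rule like the Decimal one is worth stating, here is a short Python illustration. The `add_tax` helper and the amounts are hypothetical and not taken from the studied codebase.

```python
from decimal import Decimal, ROUND_HALF_UP

# Binary floats cannot represent most decimal fractions exactly.
float_total = 0.1 + 0.2                               # 0.30000000000000004
decimal_total = Decimal("0.10") + Decimal("0.20")     # Decimal('0.30')


def add_tax(amount: Decimal, rate: Decimal) -> Decimal:
    """Apply a tax rate and round to cents with an explicit rounding mode."""
    return (amount * (1 + rate)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


total = add_tax(Decimal("19.99"), Decimal("0.0825"))  # Decimal('21.64')
```

Constructing `Decimal` from strings matters here: `Decimal(0.1)` would inherit the float's representation error.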

6. Pair every "don't" with a "do"

Warning-only documentation consistently underperformed documentation that paired prohibitions with a concrete alternative.

If you add Don't instantiate HTTP clients directly, pair it with Use the shared apiClient from lib/http with the retry middleware.

The first on its own makes the agent cautious and exploratory. The pair tells it what to do and moves on.

AGENTS.md files with 15+ sequential "don'ts" and no "dos" caused the agent to over-explore, stay conservative, and do less work. More on that below.

7. Keep your code modular, and AGENTS.md too

The best-performing agent docs described relatively isolated submodules. Mid-size modules, around 100 core files, with a 100–150 line AGENTS.md and a few reference documents, were where we saw the 10–15% cross-metric gains. Examples: UI components of the client, standalone services.

Huge, cross-cutting AGENTS.md files at the repo root underperformed module-level ones. But the document itself is only part of the story.

In our study, the worst-performing AGENTS.md files were the ones sitting on top of massive surrounding documentation. One module had 37 related docs totaling about 500K characters. Another had 226 docs totaling over 2MB. In both cases, removing just the AGENTS.md barely changed agent behavior. The agent kept finding and reading the surrounding doc sprawl, and the sprawl was the problem.

If your AGENTS.md is good but your module has 500K of specs around it, the specs are what the agent is reading. Fix the documentation environment, not just the entry point.
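A quick way to check whether a module is in this situation is to total up the documentation around it. The sketch below counts markdown files only, which is an assumption; specs in other formats would need their suffixes added.

```python
from pathlib import Path


def doc_sprawl(module_dir, suffixes=(".md",)):
    """Return (file_count, total_characters) for documentation files under module_dir."""
    files = [
        p for p in Path(module_dir).rglob("*")
        if p.is_file() and p.suffix.lower() in suffixes
    ]
    total_chars = sum(len(p.read_text(encoding="utf-8", errors="ignore")) for p in files)
    return len(files), total_chars
```

Calling `doc_sprawl("services/billing")` (a hypothetical path) and seeing several hundred thousand characters would put that module in the same range as the sprawl cases described above.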

Where AGENTS.md falls short

The overexploration trap

This is the most common failure mode we observed, and it's essentially context rot.

Two patterns cause it:

1. Too much architecture overview

The agent gets pulled into reading documentation files, sometimes dozens of them, trying to "better understand the architecture." It loads tens or hundreds of thousands of tokens of context, and the output gets worse.

Example: an AGENTS.md included a full service topology covering the event bus, message queues, API gateway routing, and shared middleware layers, with reasoning for every architectural decision. The task: a two-line config change. The agent read 12 documentation files trying to understand the architecture before touching code, loaded about 80K tokens of irrelevant context, got confused about which service owned the config, and produced an incomplete fix. completeness dropped 25%.

Fix: keep architecture descriptions concise and isolated. Vague descriptions of component responsibilities push the agent into exploration mode. Highlight boundaries. Focus on the what, not the why.

2. Excessive warnings

A big section of "don'ts" without matching "dos" produces a specific failure. The agent reads each instruction, tries to figure out whether it applies to the current task, and starts verifying its solution against every single warning. With 30–50 warnings, that means reading migration scripts, checking API version compatibility, and exploring auth middleware code, even on a task where none of it matters.

Example: an AGENTS.md with 30+ "don't" rules covering database migrations, API versioning, deployment safety, and auth boundaries. The task: a simple CRUD endpoint. The agent checked each warning for relevance and explored code it didn't need to touch. The PR took twice as long and was 20% less complete on average.

Fix: keep the core gotchas in the main file and move the majority into reference files. Pair every "don't" with a "do" whenever possible.

New patterns break old documentation

If you're introducing a pattern that doesn't exist in your codebase yet, AGENTS.md can actively steer the agent in the wrong direction.

Example: the AGENTS.md documented existing REST + polling patterns. The task was to build real-time collaborative editing using WebSockets. The agent followed the docs and built a polling-based solution, technically functional but architecturally wrong. The golden PR used WebSockets with a completely different data flow.

Fix: the fix isn't a better AGENTS.md. It's spec-driven development for net-new architecture.

Know what you're optimizing for

Different patterns move different metrics. Pick the patterns that target the problem you actually have.

| If you want to improve... | Use this pattern |
|---|---|
| Reuse of existing code | Several clear and relevant examples from the prod code |
| Following established practices in the codebase | Decision tables for components and libraries |
| Ensuring proper wiring of big features | Procedural AGENTS.md |
| Handling of gotchas | "Don't" paired with "Do" |
| Context rot | Progressive disclosure of information via reference files |
| Context rot | Clear logical separation of what is in different reference files. Outline in AGENTS.md what exactly is there, but go no deeper |
| Context rot | Obvious advice, but AGENTS.md should only contain guidance relevant to the surrounding code |

How agents actually find your docs

Before deciding how to migrate your existing documentation, it helps to know what the agent actually reads. We traced documentation discovery across hundreds of sessions. The discovery rates are lopsided enough to shape migration priorities.

- AGENTS.md files are discovered automatically in 100% of cases, for every file in the hierarchy from the working directory by most harnesses.

- References out of AGENTS.md are loaded on demand and read in over 90% of sessions when the agent has a reason to pull them in.

- Directory-level README.md files aren't auto-loaded, but the agent reads them in 80%+ of sessions when it's working in that directory.

After that, discovery falls off a cliff.

- Nested READMEs, meaning README files in subdirectories the agent isn't currently working in, get discovered only about 40% of the time.

- Orphan docs in _docs/ folders that nothing references get read in under 10% of sessions. One service in our codebase had 30K of detailed protocol design, throttling rules, and security docs in _docs/. The agent never opened most of them across dozens of sessions.

AGENTS.md is the only documentation location with reliable discovery. If something needs to be seen, it either lives there or is directly referenced from there. Moving the content into a referenced location is usually higher leverage than writing more docs.
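One way to find candidates for that move is to list markdown files that no AGENTS.md links to. This is a rough sketch under stated assumptions: references are written as standard markdown links with relative paths, and the function name is made up. It also flags directory-level READMEs, which agents often find on their own.

```python
import re
from pathlib import Path

# Captures the target of a markdown link, without any #anchor.
LINK = re.compile(r"\[[^\]]*\]\(([^)#\s]+)")


def orphan_docs(module_dir):
    """List markdown files under module_dir that no AGENTS.md links to."""
    root = Path(module_dir).resolve()
    referenced = set()
    for agents in root.rglob("AGENTS.md"):
        for target in LINK.findall(agents.read_text(encoding="utf-8")):
            # Resolve each link relative to the AGENTS.md that contains it.
            referenced.add((agents.parent / target).resolve())
    return sorted(
        p.relative_to(root).as_posix()
        for p in root.rglob("*.md")
        if p.name != "AGENTS.md" and p.resolve() not in referenced
    )
```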

Migrating existing docs

Every company already has READMEs, architecture docs, and design specs scattered across the repo. Here's how to turn that into something an agent can actually use.

Should you just rename your README.md to AGENTS.md?

README.md and AGENTS.md serve different audiences, but they can be reused. Agents are good enough at codebase summarization now that human-oriented docs are less necessary than they used to be. You can either write an agentic doc from scratch, or reuse your README.md. If you reuse it, trim it aggressively. Keep it short, follow the patterns above, and cut any section that's there for humans to skim.

When to keep existing documentation

If the docs are high quality, current, to the point, and have examples, reuse them. Reference them from module- or folder-level AGENTS.md files. Don't put more than 10–15 references in a single AGENTS.md and keep the context lean. And audit the surrounding environment: if the module around your AGENTS.md has dozens of architecture docs and spec files, the agent will find and read them whether you reference them or not. A focused 150-line AGENTS.md sitting on top of 500K of surrounding specs won't save the agent from the specs.
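The size and reference limits mentioned here are easy to check mechanically. The lint below is a hypothetical sketch: the thresholds mirror the numbers in this post (150 lines, 15 references), and it assumes references are markdown links to `.md` files.

```python
import re

# Matches markdown links whose target is a .md file, with an optional #anchor.
MD_LINK = re.compile(r"\]\(([^)\s#]+\.md)(?:#[^)\s]*)?\)")


def lint_agents_md(text, max_lines=150, max_refs=15):
    """Return a list of warnings for an AGENTS.md that is too long or links too much."""
    problems = []
    line_count = len(text.splitlines())
    refs = set(MD_LINK.findall(text))  # count each referenced file once
    if line_count > max_lines:
        problems.append(f"{line_count} lines (limit {max_lines}): move detail into reference files")
    if len(refs) > max_refs:
        problems.append(f"{len(refs)} references (limit {max_refs}): trim or group them")
    return problems
```

Running this in CI against every AGENTS.md in a repo would catch files that drift past the range where the gains were observed.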

AGENTS.md isn't the only path

Agents find reference material through grep and semantic search too. About half of all search-result hits in our traces came from those tools, not from AGENTS.md references. If you're keeping legacy documentation, make sure the docs include relevant code examples and descriptive text that's searchable. A well-structured AGENTS.md gives you more control over what ends up in the context window, but it isn't the only way in.

What this study didn't cover

We focused on one-shot trajectories and the agent's ability to finish coding tasks without human intervention. We didn't look at best practices for maintaining AGENTS.md over time, though we're exploring that now. We also didn't cover operational, interactive, or analytics tasks. Those are coming in future posts.

Qwen's new 27B parameter model reportedly matches flagship-level coding performance in a package 14x smaller than their previous best model.

Deep dive

- Qwen3.6-27B achieves what the team claims is flagship-level coding performance at 55.6GB in full size, down from 807GB for their previous best model

- The previous generation Qwen3.5-397B-A17B was a mixture-of-experts architecture with 397B total parameters and 17B active, while the new model is a 27B dense architecture

- Simon Willison tested a 16.8GB quantized version (Q4_K_M) locally using llama-server and found it delivered impressive results on SVG generation tasks

- The quantized model generated complex SVG images (pelican on bicycle) at 25.57 tokens/s for generation, producing 4,444 tokens in under 3 minutes on consumer hardware

- Testing methodology used specific llama-server configuration with reasoning mode enabled and thinking preservation to maximize coding performance

- The model downloaded to local cache (~17GB) on first run, making it practical for offline use once cached

- Performance remained strong even with aggressive quantization, suggesting the model's capabilities are robust across different compression levels

- SVG generation quality was described as "outstanding" for a 16.8GB local model, demonstrating practical coding assistance capabilities

- The breakthrough suggests that architectural improvements and training advances are enabling smaller dense models to compete with much larger sparse models

Decoder

- Dense model: A model where all parameters are used for every inference, unlike mixture-of-experts which activates only a subset

- MoE (Mixture of Experts): Architecture with many parameter groups where only some are active per request, allowing larger total size with lower active memory

- Quantized: Compressed model using lower precision numbers (like 4-bit instead of 16-bit) to reduce size while preserving most capability

- GGUF: A file format for storing quantized models optimized for efficient inference on CPUs and GPUs

- Agentic coding: AI systems that can autonomously plan, execute, and iterate on programming tasks rather than just generating code snippets

- Q4_K_M: A specific quantization scheme using 4-bit precision with medium-size optimization for balancing quality and size

Original article

Qwen3.6-27B: Flagship-Level Coding in a 27B Dense Model

Big claims from Qwen about their latest open weight model:

Qwen3.6-27B delivers flagship-level agentic coding performance, surpassing the previous-generation open-source flagship Qwen3.5-397B-A17B (397B total / 17B active MoE) across all major coding benchmarks.

On Hugging Face Qwen3.5-397B-A17B is 807GB, this new Qwen3.6-27B is 55.6GB.

I tried it out with the 16.8GB Unsloth Qwen3.6-27B-GGUF:Q4_K_M quantized version and llama-server using this recipe by benob on Hacker News, after first installing llama-server using brew install llama.cpp:

llama-server \
  -hf unsloth/Qwen3.6-27B-GGUF:Q4_K_M \
  --no-mmproj \
  --fit on \
  -np 1 \
  -c 65536 \
  --cache-ram 4096 -ctxcp 2 \
  --jinja \
  --temp 0.6 \
  --top-p 0.95 \
  --top-k 20 \
  --min-p 0.0 \
  --presence-penalty 0.0 \
  --repeat-penalty 1.0 \
  --reasoning on \
  --chat-template-kwargs '{"preserve_thinking": true}'

On first run that saved the ~17GB model to ~/.cache/huggingface/hub/models--unsloth--Qwen3.6-27B-GGUF.
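Once the server is up, it can be queried over its OpenAI-compatible HTTP API. The sketch below is not from the original post. It assumes llama-server is listening on its default address (127.0.0.1:8080) and relies on the sampling settings passed on the command line above. The helper names are made up.

```python
import json
import urllib.request


def build_chat_request(prompt, base_url="http://127.0.0.1:8080"):
    """Build a POST request for llama-server's /v1/chat/completions endpoint."""
    payload = {"messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, base_url="http://127.0.0.1:8080"):
    """Send the prompt to a running server and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, base_url)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

For example, `ask("Generate an SVG of a pelican riding a bicycle")` would send the same prompt used in the test that follows. At the speeds reported below, expect a call like that to take a few minutes.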

Here's the transcript for "Generate an SVG of a pelican riding a bicycle". This is an outstanding result for a 16.8GB local model:

Performance numbers reported by llama-server:

- Reading: 20 tokens, 0.4s, 54.32 tokens/s

- Generation: 4,444 tokens, 2min 53s, 25.57 tokens/s

For good measure, here's Generate an SVG of a NORTH VIRGINIA OPOSSUM ON AN E-SCOOTER (run previously with GLM-5.1):

That one took 6,575 tokens, 4min 25s, 24.74 t/s.

Introducing Gemini Enterprise Agent Platform, powering the next wave of agents (17 minute read)

Google rebrands Vertex AI as Gemini Enterprise Agent Platform, offering a comprehensive suite for building, deploying, and managing autonomous AI agents with enterprise-grade security and governance.

Deep dive

- Google is consolidating its enterprise AI strategy by retiring standalone Vertex AI and making Agent Platform the exclusive path forward for all future AI development services

- The platform processes over six trillion tokens monthly through ADK and supports access to 200+ models including Gemini 3.1 Pro, Gemma 4, and third-party models like Anthropic's Claude family

- Agent Runtime now delivers sub-second cold starts and supports multi-day autonomous workflows, allowing agents to maintain state and context for extended periods unlike traditional request-response AI systems

- Memory Bank enables persistent, long-term context storage with Memory Profiles for low-latency recall, while Custom Session IDs let developers map agent interactions directly to existing CRM and database records

- Agent Studio bridges the gap between low-code and full-code development by allowing visual agent building that can be exported directly to ADK for deeper customization

- Agent Identity assigns cryptographic IDs to every agent creating auditable trails, while Agent Registry provides a centralized catalog of approved tools and Agent Gateway enforces unified security policies across the agent fleet

- Agent Sandbox provides hardened, isolated environments for running model-generated code and browser automation tasks without risking core systems

- Security features include real-time Agent Anomaly Detection using LLM-as-a-judge frameworks, Agent Threat Detection for malicious activity like reverse shells, and a unified security dashboard powered by Security Command Center

- Agent Simulation allows pre-production testing against synthetic user interactions, while Agent Evaluation continuously scores live agents on multi-turn conversations rather than single responses

- Agent Optimizer automatically analyzes production failures and suggests refined system instructions rather than requiring manual log analysis

- Customer deployments span diverse use cases: Color Health's Virtual Cancer Clinic for breast cancer screening, Comcast's Xfinity Assistant for customer support, Gurunavi's UMAME restaurant discovery app claiming 30%+ satisfaction improvements, and PayPal's use of Agent Payment Protocol (AP2) for secure agent-based commerce

- The platform supports multi-agent orchestration with both deterministic and generative patterns, allowing complex workflows where agents delegate tasks while maintaining compliance through well-specified paths

- Native Ecosystem Integrations provide plug-and-play connectivity to internal data and tools, while Batch & Event-driven agents can process asynchronous tasks from BigQuery and Pub/Sub

- Agent Garden offers pre-built templates for code modernization, financial analysis, economic research, invoice processing, and other common enterprise use cases as starting points for multi-agent systems

Decoder

- ADK (Agent Development Kit): Code-first framework for building AI agents with programmatic control over logic, orchestration, and tool integration

- Memory Bank: Persistent storage system that allows agents to retain context and user preferences across sessions spanning multiple days

- Agent Runtime: The execution environment that runs deployed agents in production, handling state management and performance

- Agent Sandbox: Isolated, hardened environment where agents can safely execute code and perform automation tasks without accessing core systems

- MCP (Model Context Protocol): Protocol for securely connecting agents to enterprise data sources and applications

- Model Armor: Security layer that protects against prompt injection attacks and prevents data leakage in agent interactions

- LLM-as-a-judge: Framework where large language models evaluate other AI system outputs to detect anomalies or assess quality

- AP2 (Agent Payment Protocol): Protocol for secure payment transactions initiated by AI agents; PayPal cites it as the foundation for its agent payments on Agent Platform

Original article

Introducing Gemini Enterprise Agent Platform, powering the next wave of agents

In the early days of generative AI, building safe and reliable business tools took massive engineering effort and a high tolerance for trial and error. We helped solve that with Vertex AI, our trusted AI development platform. But today, we're managing a different level of complexity, with agents interacting across multiple systems — and often without security and governance guardrails.

To move toward a truly autonomous enterprise, one where agents can act with the same independence and reliability as a member of your team, you need a foundation that can sustain that level of trust.

What's new: Today, we're launching Gemini Enterprise Agent Platform — our new, comprehensive platform to build, scale, govern, and optimize agents. It's the evolution of Vertex AI, bringing the model selection, model building, and agent building capabilities that customers love, together with new features for agent integration, DevOps, orchestration, and security.

Agent Platform provides a single destination for your technical teams to build agents that can transform your products, services, and operations. These agents can be seamlessly delivered to your employees through the Gemini Enterprise app, all while remaining tightly integrated with your IT operations to help ensure control, governance, and security as you scale.

The platform also provides first-class access to more than 200 of the world's leading models through Model Garden. This includes our latest first-party breakthroughs like Gemini 3.1 Pro, Gemini 3.1 Flash Image, and Lyria 3, alongside our open models like Gemma 4. And, of course, customers have full flexibility to use the best model for the job with support for third-party models like Anthropic's Claude Opus, Sonnet and Haiku.

Moving forward, all Vertex AI services and roadmap evolutions will be delivered exclusively through the Agent Platform, rather than as a standalone service, to power the next generation of agent development.

Why Agent Platform matters for your business: Agent Platform helps you move from managing individual AI tasks to delegating business outcomes with total confidence. You can:

- Build: Choose the right environment for the job — from the low-code, visual interface of the new Agent Studio, to the code-first logic of the upgraded Agent Development Kit (ADK). We've simplified the entire lifecycle with AI-native coding capabilities to help you ship production-grade agents faster.

- Scale: Clear the path to production with the re-engineered Agent Runtime. This supports long-running agents that maintain state for days at a time and are backed by Memory Bank for persistent, long-term context.

- Govern: Establish centralized control with Agent Identity, Agent Registry, and Agent Gateway. These capabilities help ensure every agent — whether built on Agent Platform or sourced from our partner ecosystem — has a trackable identity and operates within enterprise-grade guardrails.

- Optimize: Guarantee quality with Agent Simulation, Agent Evaluation, and Agent Observability. These tools provide full execution traces and a real-time lens into agent reasoning to help ensure your agents always hit their goals.

Get started with Agent Platform: Visit Agent Platform in the Google Cloud console to explore new features and start building today.

Keep reading for a deeper look at our latest releases and how Agent Platform helps you deliver the production-ready agents you can trust at every stage of the journey.

How customers are achieving more with Gemini Enterprise Agent Platform

"Burns & McDonnell uses Agent Platform to transform how organizational knowledge is applied across the enterprise. Using ADK, we are building an AI agent that turns decades of project data into real-time, actionable intelligence. Agent Platform enables this innovation to scale responsibly by combining deterministic business rules with probabilistic reasoning — making AI a trusted operational capability, not just a productivity tool. With Agent Platform, we aren't just managing knowledge; we are activating experience to drive faster, more confident decisions." – Matt Olson, Chief Innovation Officer, Burns & McDonnell

"Color Health uses Agent Platform to power our Virtual Cancer Clinic, delivering end-to-end care. By building our Color Assistant with the Agent Development Kit (ADK) and scaling it via Agent Runtime, we are helping more women get screened for breast cancer. The Color Assistant engages users to check screening eligibility, connects them to clinicians, and helps schedule appointments. The power of the agent lies in the scale it enables — helping us reach more people and respond to individual risk and eligibility in real time." – Jayodita Sanghvi, PhD., Head of AI Platform, Color

"By rebuilding Comcast's Xfinity Assistant with Agent Development Kit (ADK), we've moved beyond simple scripted automation to conversational generative intelligence that delivers personalized troubleshooting and self-service support to our customers. Agent Runtime has been a massive accelerator, allowing us to deploy a sophisticated multi-agent architecture that increases digital containment while ensuring secure, grounded interactions via Gemini. We aren't just reducing repeat interactions by solving customers' issues the first time; we're redefining the customer experience at scale." – Rick Rioboli, Chief Technical Officer, Connectivity & Platforms, Comcast

"Geotab uses Agent Platform to rapidly accelerate our AI Agent Center of Excellence. Google's Agent Development Kit (ADK) provides the flexibility to orchestrate various frameworks under a single, governable path to production, while offering an exceptional developer experience that dramatically speeds up our build-test-deploy cycle. For Geotab, ADK is the foundation that allows us to rapidly and safely scale our agentic AI solutions across the enterprise." – Mike Branch, Vice President, Data & Analytics, Geotab

"Gurunavi uses Agent Platform to power 'UMAME!', an AI restaurant discovery app that leverages Memory Bank to achieve a deep understanding of user context. Unlike conventional prompt-based systems, our agent remembers a user's past actions and preferences to proactively present the best options. This eliminates the need for manual searches and creates a seamless experience that will improve user satisfaction by 30% or more. We view this memory function as a non-negotiable feature for the future of new culinary experiences." – Toshiaki Iwamoto, CTO, Gurunavi

"At L'Oréal, Beauty Tech is not just a support function — it is a powerful catalyst to create the beauty that moves the world. To live up to that ambition, we decided to build our own proprietary Beauty Tech Agentic Platform, powered by Google Cloud. Leveraging Agent Development Kit (ADK), we are leading a fundamental shift: moving from deterministic workflow automation to autonomous, outcome-oriented agent orchestration. Our agents are not locked in a vacuum — through Model Context Protocol (MCP), they are securely connected to our single sources of truth, including our Beauty Tech Data Platform and core operational applications. Google Cloud gives us the resilience, the multi-LLM flexibility, and the enterprise-grade trust framework we need to scale this platform globally, while keeping human oversight at the center." – Etienne BERTIN, Group CIO, L'Oréal

"Payhawk uses Agent Platform to transform our AI agents from simple task executors into genuine financial assistants. By leveraging Memory Bank, we have moved from stateless interactions to long-term context retention. Our agents now act like dedicated team members, autonomously recalling user-specific constraints and history. For example, our Financial Controller Agent now remembers a user's habits to auto-submit expenses, reducing submission time by over 50%. This shift allows our agents to anticipate needs based on past behavior rather than just reacting to prompts." – Diyan Bogdanov, Principal Applied AI Engineer, Payhawk

"PayPal uses Agent Platform to rapidly build and deploy agents in production. Specifically, we use Agent Development Kit (ADK) and visual tools to inspect agent interactions and manage multi-agent workflows. This provides the step-by-step visibility we need to visualize the flow of intent and payment mandates. Finally, Agent Payment Protocol (AP2) on Agent Platform provides the critical foundation for trusted agent payments, helping our ecosystem accelerate the shipping of secure agent-based commerce experiences." – Nitin Sharma, Principal Engineer, AI, PayPal

Build AI agents

Build agents quickly and easily by empowering your developers, business users and everyone in between to build and deploy agents at scale.

Build smarter agents, faster

- A major upgrade to ADK: More than six trillion tokens are processed monthly on Gemini models through ADK. Unlock more powerful reasoning by organizing agents into a network of sub-agents. This new, graph-based framework allows you to define clear, reliable logic for how agents work together to solve complex problems.

- Workspaces are secure-by-design: Give agents a hardened, sandboxed environment to run bash commands and manage files safely, isolated from your core systems.

- Multimodal streaming: Bring human-like stability to real-time interactions with multimodal support for live audio and video cues.
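The graph-based sub-agent idea from the first bullet can be pictured with a small sketch. This is plain Python, not the ADK API: the node names, the `run_graph` helper, and the routing rule are all hypothetical, and each function stands in for what would be a model-backed sub-agent.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical illustration in plain Python; none of these names come from ADK.
State = Dict[str, object]
# Each node is a sub-agent: it updates shared state and names the next node,
# or returns None to end the run.
Node = Callable[[State], Optional[str]]


def classify(state: State) -> Optional[str]:
    # Stand-in for a model-backed router that picks a branch.
    text = str(state["request"]).lower()
    return "billing" if "invoice" in text else "support"


def billing(state: State) -> Optional[str]:
    state["answer"] = "handled by billing sub-agent"
    return "review"


def support(state: State) -> Optional[str]:
    state["answer"] = "handled by support sub-agent"
    return "review"


def review(state: State) -> Optional[str]:
    # Final node: mark the answer as checked and stop.
    state["reviewed"] = True
    return None


GRAPH: Dict[str, Node] = {
    "classify": classify,
    "billing": billing,
    "support": support,
    "review": review,
}


def run_graph(graph: Dict[str, Node], start: str, state: State,
              max_steps: int = 10) -> Tuple[State, List[str]]:
    """Walk the graph from `start`, recording the path of nodes visited."""
    path: List[str] = []
    current: Optional[str] = start
    while current is not None:
        if len(path) >= max_steps:
            raise RuntimeError("step limit reached; possible cycle")
        path.append(current)
        current = graph[current](state)
    return state, path


state, path = run_graph(GRAPH, "classify", {"request": "Where is my invoice?"})
print(path)  # ['classify', 'billing', 'review']
```

Because every hop is an explicit edge, the path an agent took can be logged and checked, which is the reliability argument the bullet makes.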

Connect your agents to the enterprise

- Securely access any system: Use plug-and-play architecture with Native Ecosystem Integrations to connect agents to your internal data and tools without custom coding.

- Automate background operations: Activate your data in BigQuery and Pub/Sub with Batch & Event-driven agents. This way, you can run massive, asynchronous tasks like content evaluation or data analysis in the background.

Go from idea to production in hours

- Enable AI-driven development: A programmatic interface for coding agents to access Google's complete suite of agentic capabilities, allowing them to build, evaluate, and deploy production-ready agents on your behalf.

- Build agents directly in Agent Studio: You can now move seamlessly from building simple prompts to deploying complex agents in Agent Studio. Once you're ready for deep customization, export your logic directly into ADK to continue development in a full-code environment.

- Get a head start with pre-built agents: Access a curated set of agent templates in Agent Garden — including code modernization, financial analysis, economic research, invoice processing, and more — that serve as immediate building blocks for your multi-agent systems.

Scale AI agents

To move from a proof-of-concept to a live environment, you need a platform that can handle the performance, state, and security requirements of real-world work.

Powering high-performance agent execution

- The latest Agent Runtime: Our revamped Agent Runtime delivers sub-second cold starts and allows you to provision new agents in seconds.

- Support for multi-day workflows: You can now deploy long-running agents that run autonomously for days at a time. This allows your agents to manage complex, multi-step workflows and deep reasoning tasks that require extended persistence, like managing a sales prospecting sequence.

- Autonomous action with security-by-design environments: Agent Sandbox provides a hardened environment to safely execute model-generated code and perform computer use tasks like browser-based automation without risk to your host systems.

- Agent-to-agent orchestration: Agents can seamlessly delegate tasks to one another, including through complex orchestration patterns, both generative and deterministic. For critical flows such as compliance, deterministic orchestration ensures your agents follow well-specified paths every time.

Move beyond temporary session data to high-accuracy context

- Personalize interactions: Agent Memory Bank dynamically generates and curates long-term memories from conversations. Using new Memory Profiles, agents can recall high-accuracy details with low latency, ensuring context is never lost.

- Link AI interactions to your existing records: Store and manage history using Agent Sessions. With Custom Session IDs, you can use your own unique identifiers to track sessions and map them directly to your internal database and CRM records.

- Enable real-time, human-like interactions: Using the WebSocket protocol for Bidirectional Streaming, you can help ensure your agents are highly responsive during live customer or employee interactions, processing audio and video without lag.

Govern AI agents

Govern with a secure-by-design architecture that applies enterprise rigor to every agent in your fleet — from the ones you build on Agent Platform to the ones you source from our partner ecosystem.

Manage all of your agents through a single source of truth for identity and access

- Assign every agent a verifiable identity: Agent Identity improves the security posture of your agents by ensuring every agent receives a unique cryptographic ID. This creates a clear, auditable trail for every action an agent takes, mapped back to defined authorization policies.

- Maintain a central library of approved tools: Our new Agent Registry provides a single source of truth for your enterprise. It indexes every internal agent, tool, and skill, simplifying discovery and ensuring only governed, approved assets are available to your users.

- Manage your agent fleet from one control point: Agent Gateway acts as the air traffic control for your agent ecosystem. It provides secure, unified connectivity between agents and tools across any environment, while enforcing consistent security policies and Model Armor protections to safeguard against prompt injection and data leakage.

Use AI-powered insights to detect hidden risks and suspicious behavior before they impact your business

- Detect suspicious behavior in real-time: Agent Anomaly Detection uses statistical models and an LLM-as-a-judge framework to flag unusual reasoning. This works alongside Agent Threat Detection to provide visibility into malicious activity, such as reverse shells or connections to known bad IP addresses.

- Uncover vulnerabilities automatically: A new Agent Security dashboard, powered by Security Command Center, unifies threat detection and risk analysis. It allows your teams to map relationships between agents and models, automate asset discovery, and scan for vulnerabilities in the underlying operating system and language packages.

Optimize AI agents

Agent Platform gives you the visibility needed to understand how your agents are performing, making it easy to refine their logic so they get smarter over time.

Test your agents before they ship

- Simulate realistic conversations: Use Agent Simulation to test agents against human-like synthetic user interactions and virtualized tools in a controlled environment. Agents are automatically scored based on task success and safety across multi-step conversations.

Monitor and improve in production

- Track live performance: Use Agent Evaluation to continuously score agents against live traffic using multi-turn autoraters that can evaluate the logic of an entire conversation, not just a single response. With turnkey dashboards and Agent Observability, you can visually trace complex reasoning to debug issues as they happen.

- Automate agent refinement: Instead of manually digging through logs, Agent Optimizer automatically clusters real-world failures and suggests refined system instructions to improve accuracy.

Detailed technical guides and a full list of updates are available in our updated documentation and release notes. Agent Platform is the new standard for enterprise agent development, built to help you move from experimentation to production-scale impact, starting today.

Anthropic explains why Model Context Protocol (MCP) is becoming the standard for connecting production AI agents to external systems, with SDK downloads growing from 100 million to 300 million per month.

Deep dive

- Production AI agents are increasingly cloud-hosted to enable continuous operation and scale, requiring a standardized way to connect to remote systems where data lives and work happens

- Three integration approaches exist: direct API calls (simple but creates bespoke integrations for each agent-service pair), CLIs (lightweight but limited to local environments with filesystems), and MCP (requires upfront investment but provides portability)

- MCP SDK downloads jumped from 100 million to 300 million per month in early 2026, with millions of daily users and adoption across enterprises and major agentic platforms

- The protocol now underpins Claude Cowork, Claude Managed Agents, and channels in Claude Code, with over 200 servers in Anthropic's directory

- Best practice for MCP servers: build remote servers instead of local ones to reach web, mobile, and cloud-hosted agents across all major clients

- Design tools around user intent rather than mirroring API structure—a single create_issue_from_thread tool outperforms separate get_thread, parse_messages, create_issue, and link_attachment primitives

- For services with hundreds of operations (AWS, Kubernetes, Cloudflare), expose a minimal tool surface that accepts code which runs in a sandbox against your API—Cloudflare's MCP server covers ~2,500 endpoints with just two tools in roughly 1,000 tokens

- MCP Apps extension allows tools to return interactive interfaces like charts, forms, and dashboards rendered inline in chat, driving meaningfully higher adoption and retention than text-only responses

- Elicitation lets servers pause mid-execution to request user input via form mode (for missing parameters or confirmations) or URL mode (for OAuth flows or payment collection) without breaking the user's flow

- OAuth with CIMD (Client ID Metadata Documents) provides fast first-time auth and fewer re-auth prompts, now supported across MCP SDKs, Claude.ai, and Claude Code as the recommended auth approach

- Claude Managed Agents' vault system lets you register OAuth tokens once, reference them by ID at session creation, and automatically handles credential injection and refresh without building a secret store
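The advice above to design tools around user intent can be sketched as follows. This is a minimal illustration that assumes an in-memory backend: `FakeTracker`, its sample thread, and the `Issue` type are hypothetical, and only the tool names come from the article. No MCP SDK is used.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Issue:
    id: int
    title: str
    body: str
    attachments: List[str] = field(default_factory=list)


class FakeTracker:
    """Hypothetical in-memory backend exposing API-shaped primitives."""

    def __init__(self) -> None:
        self.threads: Dict[str, List[dict]] = {
            "T1": [
                {"author": "ana", "text": "Login fails on Safari", "files": ["trace.log"]},
                {"author": "raj", "text": "Repro on 17.4", "files": []},
            ]
        }
        self.issues: Dict[int, Issue] = {}

    def get_thread(self, thread_id: str) -> List[dict]:
        return self.threads[thread_id]

    def create_issue(self, title: str, body: str) -> Issue:
        issue = Issue(id=len(self.issues) + 1, title=title, body=body)
        self.issues[issue.id] = issue
        return issue

    def link_attachment(self, issue_id: int, filename: str) -> None:
        self.issues[issue_id].attachments.append(filename)


def parse_messages(messages: List[dict]) -> str:
    """Flatten a thread into a plain-text issue body."""
    return "\n".join(f'{m["author"]}: {m["text"]}' for m in messages)


def create_issue_from_thread(tracker: FakeTracker, thread_id: str) -> Issue:
    """Intent-level tool: one call from the model replaces four primitive calls."""
    messages = tracker.get_thread(thread_id)
    issue = tracker.create_issue(title=messages[0]["text"], body=parse_messages(messages))
    for message in messages:
        for filename in message["files"]:
            tracker.link_attachment(issue.id, filename)
    return issue


issue = create_issue_from_thread(FakeTracker(), "T1")
print(issue.title, issue.attachments)
```

The primitives still exist, but the server chains them itself, so the model does not have to sequence four calls and carry intermediate results through its context.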

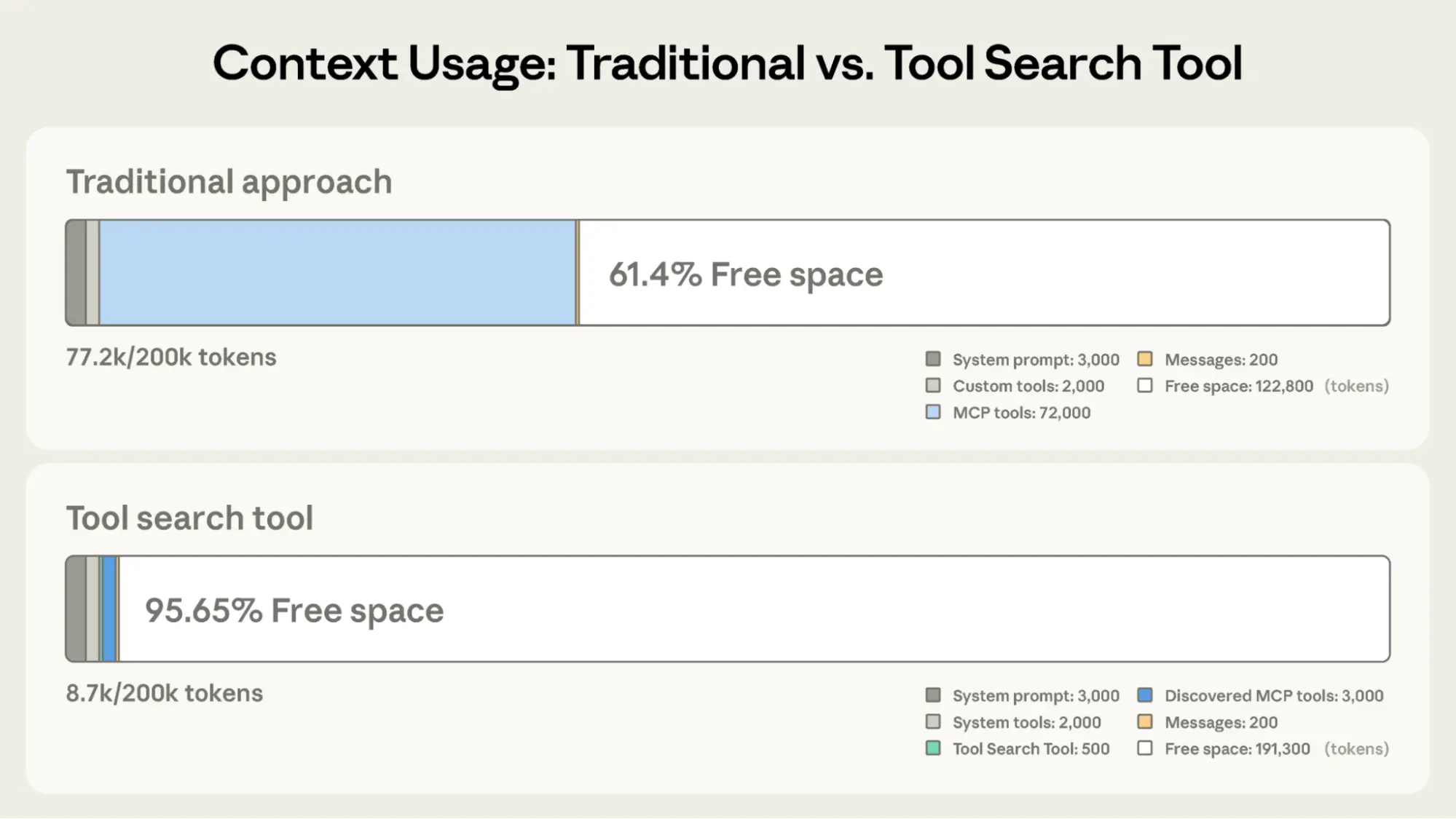

- Tool search pattern loads tool definitions on demand rather than upfront, reducing tool-definition token usage by 85%+ while maintaining selection accuracy

- Programmatic tool calling processes results in code-execution sandboxes instead of returning them raw to the model, cutting token usage by roughly 37% on complex workflows

- Skills complement MCP by teaching agents procedural knowledge of how to use tools—the most capable agents combine both, either bundled as plugins or with skills distributed directly from MCP servers

- MCP compounds over time: as more clients adopt the spec and extensions land, existing servers become more capable automatically without new deployments
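Programmatic tool calling, mentioned in the list above, comes down to reducing a large tool result in code before anything reaches the model. The sketch below assumes a hypothetical `list_orders` tool and uses an ordinary function in place of a real code-execution sandbox. It shows the mechanism only and does not reproduce the savings figure quoted above.

```python
import json
from typing import Any, Dict, List


def list_orders() -> List[Dict[str, Any]]:
    """Hypothetical tool that returns a large raw result set."""
    return [
        {"id": i, "region": "EU" if i % 2 else "US", "total": 10.0 * i}
        for i in range(1, 501)
    ]


def summarize_in_sandbox(orders: List[Dict[str, Any]]) -> Dict[str, float]:
    """Stand-in for model-written code run in a sandbox: aggregate by region."""
    totals: Dict[str, float] = {}
    for order in orders:
        totals[order["region"]] = totals.get(order["region"], 0.0) + order["total"]
    return totals


raw = list_orders()
summary = summarize_in_sandbox(raw)

# Only the summary would be placed in the model's context, not the raw rows.
raw_chars = len(json.dumps(raw))
summary_chars = len(json.dumps(summary))
print(summary, raw_chars, summary_chars)
```

Character counts are used here as a rough proxy for tokens; a real measurement would use the model's tokenizer.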

Decoder

- MCP (Model Context Protocol): A standardized protocol that defines how AI agents (clients) connect to and interact with external tools and data sources (servers), handling auth, discovery, and semantics

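- CIMD (Client ID Metadata Documents): An OAuth mechanism in which a client identifies itself with a URL that points to a document describing it, so servers can recognize the client without a separate registration step; this gives faster first-time auth and fewer re-authorization prompts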

- Tool search: A pattern where agents search a tool catalog at runtime and load only relevant tool definitions into context, rather than loading all tools upfront

- Programmatic tool calling: A technique where tool results are processed in a code-execution sandbox using loops, filters, and aggregation, with only final output sent to the model's context

- Elicitation: A capability that lets MCP servers pause mid-execution to request user input, either via native forms or browser handoff for OAuth/payments

- MCP Apps: A protocol extension allowing tools to return interactive UI components (charts, forms, dashboards) that render inline in the chat interface

- Skills: Procedural knowledge or playbooks that teach agents how to orchestrate tools to accomplish real work, complementing raw tool access

- Remote server: An MCP server accessible over the network (not local-only), enabling use across web, mobile, and cloud-hosted agent platforms
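The tool search entry above can be illustrated with a simple keyword match over a catalog. This is a sketch under stated assumptions: the catalog contents, `search_tools`, and `load_definitions` are hypothetical, and a production system would more likely rank with embeddings or a dedicated search index.

```python
from typing import Dict, List

# Hypothetical tool catalog; only the selected definitions are loaded into context.
CATALOG: Dict[str, Dict[str, str]] = {
    "create_issue_from_thread": {"description": "Create a tracker issue from a chat thread"},
    "send_invoice": {"description": "Send an invoice to a customer"},
    "schedule_meeting": {"description": "Schedule a meeting with attendees"},
    "search_threads": {"description": "Search chat threads by keyword"},
}


def search_tools(query: str, catalog: Dict[str, Dict[str, str]], limit: int = 2) -> List[str]:
    """Rank tools by how many query words appear in the name or description."""
    words = set(query.lower().split())
    scored = []
    for name, meta in catalog.items():
        text = name.replace("_", " ") + " " + meta["description"]
        score = len(words & set(text.lower().split()))
        if score:
            scored.append((score, name))
    # Highest score first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:limit]]


def load_definitions(names: List[str], catalog: Dict[str, Dict[str, str]]) -> Dict[str, Dict[str, str]]:
    """Return only the definitions the agent asked for."""
    return {name: catalog[name] for name in names}


matches = search_tools("create issue from chat thread", CATALOG)
loaded = load_definitions(matches, CATALOG)
print(matches)  # ['create_issue_from_thread', 'search_threads']
```

The context cost then scales with the number of tools matched, not with the size of the catalog.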

Original article

Building agents that reach production systems with MCP