Design Systems are Now Inference Systems (7 minute read)

Design systems are evolving from static component libraries into "Inference Systems" where AI agents dynamically generate interfaces using adaptive parameters instead of fixed patterns.

Deep dive

- Traditional design systems from the 2010s defined fixed patterns (buttons, modals, forms) that designers assembled into screens, assuming stable layouts and fixed input modalities

- AI agents now generate interfaces dynamically mid-conversation, creating surfaces that didn't exist as designed artifacts when the interaction started—breaking the assumptions of traditional design systems

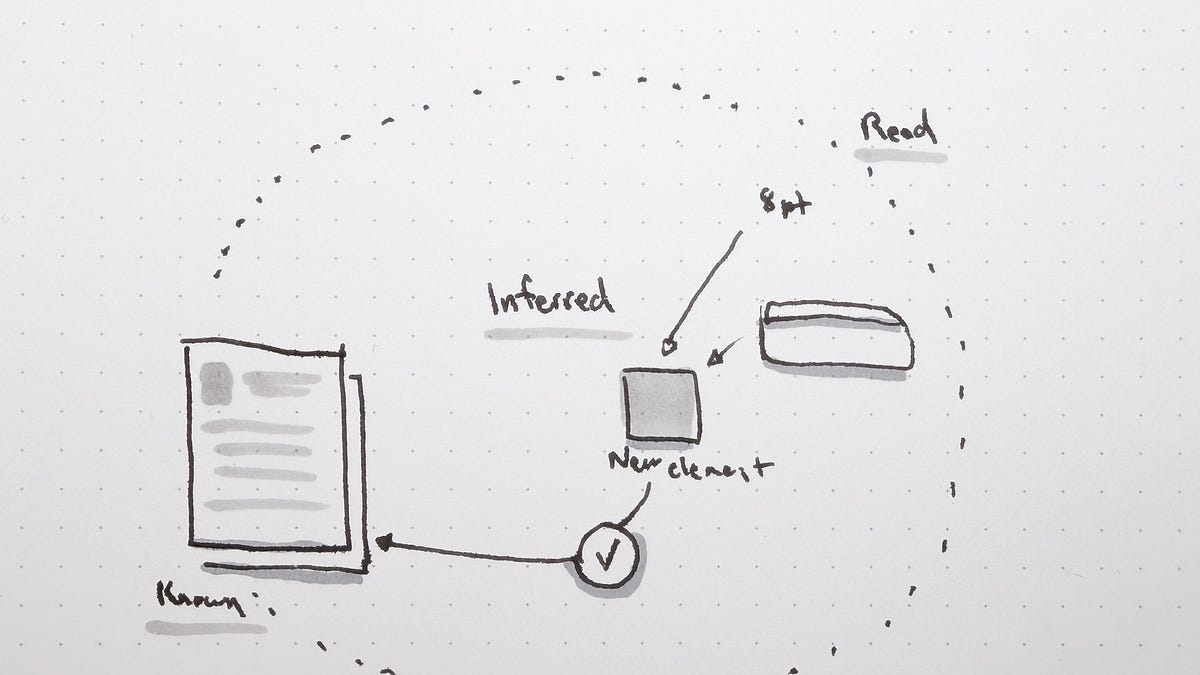

- The shift from patterns to parameters means instead of defining "the modal is 480px wide with 24px padding," you define "the modal expresses focused attention; its width compresses when context is dense and expands for multi-step tasks"

- Parameters give the system behavioral rules it can apply in conditions the original designer never anticipated, allowing it to invent components the library doesn't contain

- Design tokens are evolving from storing values (`--color-primary: #0066FF`) to storing intent (`--color-interactive-primary` with semantic meaning, usage rules, and relationships)

- When models know why to use `interactive-primary` ("the most prominent action in a given context"), they can make defensible choices in unseen layouts rather than just matching colors

- MCP servers and Google Stitch's design.md file exemplify the shift: design artifacts become structured data that both humans and AI can consume as first-class outputs

- Traditional governance through human review (checking if teams used the right component) breaks when AI generates layouts and revises them seconds later—the volume of decisions jumps by orders of magnitude

- Inference systems need to evaluate conformance as work is produced and learn from what gets built, treating the system as a sensor rather than a wall

- A single deviation is likely a mistake, but fourteen teams independently building the same off-system component signals the system is behind its users and needs to evolve

- Airbnb is classifying 150+ components with ML so AI tools can assemble prototypes from user behavior rather than from a designer's blank canvas

- Success metrics must evolve from "adoption" (how many teams use your components) to "adaptation" (how well the system learns from what teams and agents actually build)

- Prototypes become first-class context and signals of what needs to grow, though not every prototype should go to production—the insight is the artifact

- Teams who understand this shift will rebuild their design systems to look less like component catalogs and more like the model's understanding of their product
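
As a rough illustration of the patterns-to-parameters shift described above, the modal rule can be written as a function of context instead of a fixed value. This is a minimal sketch under stated assumptions, not the article's implementation: `ModalContext`, `modal_width`, the 360 to 720 px bounds, and the 60 px-per-step increment are all hypothetical. Only the 480 px baseline comes from the modal example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModalContext:
    """Hypothetical inputs an agent might know at render time."""
    density: float  # 0.0 (sparse surroundings) to 1.0 (dense surroundings)
    steps: int      # number of steps in the task the modal hosts


def modal_width(ctx: ModalContext, base: int = 480,
                min_width: int = 360, max_width: int = 720) -> int:
    """Compress the width as context gets denser; expand it for multi-step tasks."""
    # Clamp density so out-of-range inputs cannot push the width past the bounds.
    density = min(max(ctx.density, 0.0), 1.0)
    # Dense context pulls the width from the baseline toward the minimum.
    compressed = base - density * (base - min_width)
    # Each step beyond the first adds an assumed 60 px of room.
    expanded = compressed + 60 * max(ctx.steps - 1, 0)
    return int(min(max(expanded, min_width), max_width))
```

With these assumed numbers, a sparse single-step context keeps the familiar 480 px, while a dense one shrinks toward 360 px and a long multi-step task grows up to the 720 px cap. The point of the sketch is that the rule still gives an answer for combinations nobody designed explicitly.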

Decoder

- Design System: A collection of reusable UI components, patterns, and guidelines that ensure consistency across a product

- Design Tokens: Variables that store design decisions like colors, spacing, and typography (e.g., `--color-primary: #0066FF`)

- Agentic Experience: Interactions driven by AI agents that dynamically generate and adapt interfaces rather than following predefined flows

- MCP (Model Context Protocol): An open protocol that lets AI models connect to external tools and data sources; in this context, MCP servers expose structured data about design artifacts such as Figma files

- Inference System: A design system that AI models can reason about and use to generate appropriate UI dynamically, rather than just a reference catalog

- Semantic Tokens: Design tokens that capture intent and meaning (why to use something) rather than just values (what it looks like)

- Blitzscaling: A growth strategy that prioritizes speed over efficiency, pursued by many tech companies in the 2010s
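
To make the difference between a value token and a semantic token concrete, the sketch below models both as data and serializes the semantic one into JSON that a model could consume as context. It is illustrative only: the `SemanticToken` fields, the `to_context` helper, the usage rule, and the related token name are hypothetical, and reusing `#0066FF` as the value of `--color-interactive-primary` is an assumption. The token names and the intent string come from the summary above.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SemanticToken:
    """A token that records why it exists, not just what it resolves to."""
    name: str
    value: str
    intent: str
    usage_rules: tuple[str, ...] = ()
    related: tuple[str, ...] = ()


# A value-only token: the model can match the color but not reason about it.
value_token = {"--color-primary": "#0066FF"}

# A semantic token: same kind of value, plus intent, rules, and relationships.
interactive_primary = SemanticToken(
    name="--color-interactive-primary",
    value="#0066FF",  # assumed value, reused from the example token
    intent="the most prominent action in a given context",
    usage_rules=("use for at most one action per surface",),  # hypothetical rule
    related=("--color-interactive-secondary",),  # hypothetical sibling token
)


def to_context(token: SemanticToken) -> str:
    """Serialize a token to JSON so it can be passed to a model as structured context."""
    return json.dumps(asdict(token), indent=2)
```

Calling `to_context(interactive_primary)` yields a JSON object that carries the intent and rules alongside the value, which is the kind of machine-parseable artifact the summary describes.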

Original article

Design systems, built in the 2010s for human-scale processes, are becoming "Inference Systems" as AI agents now assemble interfaces dynamically rather than following prescribed patterns. The shift involves three core changes: static patterns give way to adaptive parameters, human-readable documentation becomes machine-parseable context, and governance evolves from review checkpoints into continuous feedback loops. Success metrics must also evolve — adoption alone is insufficient, and adaptation, meaning how well the system learns from what teams actually build, becomes the defining measure.
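
The idea of governance as a continuous feedback loop can be sketched as a small aggregation over observed off-system components. This is a hedged illustration, not a described implementation: `classify_deviations`, the label strings, and the input shape are hypothetical, and the default threshold of 14 simply echoes the "fourteen teams" example from the summary rather than any stated rule.

```python
from collections import defaultdict
from typing import Iterable


def classify_deviations(deviations: Iterable[tuple[str, str]],
                        evolve_threshold: int = 14) -> dict[str, str]:
    """Label each off-system component by how many distinct teams built it.

    `deviations` is an iterable of (team, component) pairs, one per observed
    use of a component that is not in the design system.
    """
    teams_by_component: dict[str, set[str]] = defaultdict(set)
    for team, component in deviations:
        # A set counts each team once, however many times it repeats the deviation.
        teams_by_component[component].add(team)

    return {
        component: (
            "system-behind-users" if len(teams) >= evolve_threshold
            else "likely-mistake"
        )
        for component, teams in teams_by_component.items()
    }
```

Run continuously over what teams and agents build, a tally like this treats the system as a sensor: isolated deviations get flagged for correction, while widely repeated ones become candidates for the system itself to absorb.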