Devoured - May 01, 2026

A critical Linux kernel vulnerability (Copy Fail) lets unprivileged users gain root on every major distribution since 2017 with a 732-byte script, so patch immediately. On the AI tooling front, Anthropic launched Claude Security for automated code vulnerability scanning, Cursor sold to xAI for $60B after model providers undercut its margins, and Cloudflare partnered with Stripe to let AI agents autonomously provision infrastructure and deploy applications.


Anthropic launches Claude Security in public beta, allowing enterprises to scan codebases for vulnerabilities using the Opus 4.7 model with automated patch generation.

Deep dive

- Claude Security uses Opus 4.7 to analyze code like a security researcher, understanding component interactions and data flows rather than just pattern matching

- The tool includes multi-stage validation to reduce false positives and assigns confidence ratings to each finding

- Hundreds of organizations tested it in preview, with feedback leading to features like scheduled scans, targeted directory scanning, and dismissing findings with documented reasons

- Results can be exported to CSV/Markdown or pushed to tools like Slack and Jira via webhooks

- Users reported going from scan to patch in a single session instead of days of back-and-forth between security and engineering teams

- Integration partners include major security platforms (CrowdStrike, Microsoft Security, Palo Alto Networks, SentinelOne, TrendAI, Wiz) and consulting firms (Accenture, BCG, Deloitte, Infosys, PwC)

- The tool is part of a broader effort alongside Project Glasswing, which uses Claude Mythos Preview for elite-level vulnerability discovery and exploitation

- Anthropic positions this as defensive preparation for a future where AI makes working exploits much easier to discover

- Opus 4.7 includes cyber safeguards that block high-risk security uses, with a Cyber Verification Program for legitimate security work

- Currently available to Enterprise customers, with Team and Max customer access coming soon

Decoder

- Opus 4.7: Anthropic's most powerful generally-available language model, specialized for finding and patching software vulnerabilities

- Project Glasswing: Anthropic's partnership program providing Claude Mythos Preview to select partners for elite-level vulnerability research

- Claude Mythos Preview: A specialized model that matches expert-level performance at finding and exploiting vulnerabilities, more advanced than Opus 4.7

- False positives: Security alerts that incorrectly flag safe code as vulnerable, wasting analyst time

Original article

Claude Security, now in public beta for Claude Enterprise customers, leverages the powerful Opus 4.7 model to identify and patch software vulnerabilities. The model, integrated into tools used by partners like Microsoft Security and Palo Alto Networks, enhances cybersecurity defenses by enabling efficient, ongoing code scanning without requiring custom API integration. Feedback from hundreds of organizations has refined its capabilities.

xAI released Grok 4.3 with significantly reduced pricing and improved real-world agentic performance while achieving higher intelligence scores than its predecessor.

Deep dive

- Grok 4.3 achieves a 4-point improvement on the Intelligence Index over Grok 4.20 0309 v2 while reducing the total cost to run the full benchmark suite by approximately 20% to $395

- The model shows a massive 321-point ELO increase on GDPval-AA (from 1179 to 1500), a real-world agentic task benchmark, surpassing models like Gemini 3.1 Pro Preview and Muse Spark

- Despite the improvements, Grok 4.3 still trails GPT-5.5 (xhigh) by 276 ELO points on agentic tasks, with only a 17% expected win rate under standard ELO calculations

- The model reaches 98% on τ²-Bench Telecom for instruction following, matching GLM-5.1, and maintains an 81% IFBench score

- Token usage increased by approximately 44% compared to Grok 4.20, but the dramatic price cuts (37.5% lower input, 58.3% lower output) more than compensate

- Performance on knowledge tasks shows mixed results: 8-point gain in AA-Omniscience Accuracy but 8-point decrease in Non-Hallucination Rate

- The model sits on the Pareto frontier for intelligence versus cost, representing one of the best cost-efficiency tradeoffs at its intelligence level

- Grok 4.3's verbosity remains moderate compared to other frontier models, using similar token counts to Minimax M2.7
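The ~17% expected win rate quoted above follows from the standard Elo expected-score formula. A minimal sketch of that arithmetic is shown here; the function name is illustrative, and GPT-5.5 (xhigh)'s rating is not stated in the source, so it is derived from the 276-point gap.

```python
def elo_expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Expected score of player A against player B under the standard Elo formula."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


grok_4_3 = 1500
grok_4_20 = 1179
# Assumption: the leader's rating is inferred from the reported 276-point gap.
gpt_5_5_xhigh = grok_4_3 + 276

vs_leader = elo_expected_win_rate(grok_4_3, gpt_5_5_xhigh)
vs_predecessor = elo_expected_win_rate(grok_4_3, grok_4_20)

print(f"Grok 4.3 vs GPT-5.5 (xhigh): {vs_leader:.1%}")
print(f"Grok 4.3 vs Grok 4.20:       {vs_predecessor:.1%}")
```

The first figure comes out at roughly 17%, matching the digest. The second shows what the 321-point gain implies in head-to-head terms against the previous model.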

Decoder

- Intelligence Index: Artificial Analysis's composite benchmark measuring AI model performance across multiple evaluations including reasoning, coding, and knowledge tasks

- GDPval-AA: A real-world agentic task evaluation that measures how well models can complete practical multi-step tasks like a customer service agent

- ELO score: A rating system borrowed from chess that estimates relative skill levels, where higher scores indicate better performance and score differences predict win rates

- τ²-Bench (Tau-squared Bench): A benchmark measuring instruction-following abilities in specific domains like telecommunications customer support

- Agentic tasks: Multi-step problems requiring the model to plan, use tools, and adapt its approach autonomously rather than just responding to single prompts

- IFBench: Instruction Following Benchmark, measuring how accurately models comply with specific instructions

- AA-Omniscience: Artificial Analysis benchmark measuring factual knowledge accuracy and tendency to hallucinate or guess incorrectly

- Pareto frontier: The set of optimal tradeoffs where improving one metric (like intelligence) requires sacrificing another (like cost)
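To make the Pareto frontier idea concrete, here is a small sketch that filters a list of models down to those not dominated on cost and score. The model names and numbers are hypothetical placeholders, not Artificial Analysis data.

```python
from typing import List, NamedTuple


class Model(NamedTuple):
    name: str
    cost_usd: float  # cost to run the benchmark suite (lower is better)
    score: float     # intelligence score (higher is better)


def pareto_frontier(models: List[Model]) -> List[Model]:
    """Keep models that no other model beats on both cost and score."""
    frontier = []
    for m in models:
        dominated = any(
            o.cost_usd <= m.cost_usd
            and o.score >= m.score
            and (o.cost_usd < m.cost_usd or o.score > m.score)
            for o in models
        )
        if not dominated:
            frontier.append(m)
    return sorted(frontier, key=lambda m: m.cost_usd)


# Hypothetical data for illustration only.
models = [
    Model("model-a", 100, 40),
    Model("model-b", 400, 53),
    Model("model-c", 500, 49),   # costs more than model-b and scores lower
    Model("model-d", 900, 57),
    Model("model-e", 1200, 55),  # costs more than model-d and scores lower
]

frontier_names = [m.name for m in pareto_frontier(models)]
print(frontier_names)
```

A model "on the frontier" is one where no alternative is both cheaper and at least as capable, which is the sense in which the digest describes Grok 4.3.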

Original article

Grok 4.3

xAI has launched Grok 4.3, achieving 53 on the Artificial Analysis Intelligence Index with improved agentic performance, ~40% lower input price, and ~60% lower output price than Grok 4.20

The release of Grok 4.3 places @xAI just above Muse Spark and Claude Sonnet 4.6 on the Intelligence Index, and 4 points ahead of the latest version of Grok 4.20. Grok 4.3 improves its Artificial Analysis Intelligence Index score while reducing cost to run the benchmark suite.

Key Takeaways

- Grok 4.3 improves on cost-per-intelligence relative to Grok 4.20 0309 v2: it scores higher on the Intelligence Index while costing less to run the full benchmark suite. Grok 4.3 costs $395 to run the Artificial Analysis Intelligence Index, around 20% lower than Grok 4.20 0309 v2, despite using more output tokens. This makes it one of the lower-cost models at its intelligence level

- Large increase in real world agentic task performance: The largest single benchmark improvement is on GDPval-AA, where Grok 4.3 scores an ELO of 1500, up 321 points from Grok 4.20 0309 v2's score of 1179, surpassing Gemini 3.1 Pro Preview, Muse Spark, GPT-5.4 mini (xhigh), and Kimi K2.5. Grok 4.3 narrows the gap to the leading model on GDPval-AA, but still trails GPT-5.5 (xhigh) by 276 Elo points, with an expected win rate of ~17% against GPT-5.5 (xhigh) under the standard Elo formula

- Grok 4.3 performs strongly on instruction following and agentic customer support tasks. It gains 5 points on 𝜏²-Bench Telecom to reach 98%, in line with GLM-5.1. Grok 4.3 maintains an 81% IFBench score from Grok 4.20 0309 v2

- Gains 8 points on AA-Omniscience Accuracy, but at the cost of an 8-point drop in AA-Omniscience Non-Hallucination Rate. Grok 4.20 0309 v2 therefore still leads on Non-Hallucination Rate, followed by MiMo-V2.5-Pro, which scores in line with Grok 4.3

Congratulations to @xAI and @elonmusk on the impressive release! This release shows increased cost efficiency to run the Artificial Analysis Intelligence Index, with Grok 4.3 sitting comfortably on the Pareto frontier for intelligence versus cost

Driven by 37.5% lower input token prices and 58.3% lower output token prices, it costs $395 to run the Intelligence Index evaluations, an overall ~20% decrease from Grok 4.20 0309 v2

Grok 4.3 uses ~44% more output tokens to run the Artificial Analysis Intelligence Index than Grok 4.20 0309 v2, but uses a similar number of tokens to models like Minimax M2.7 and remains less verbose than other leading models
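The token and price figures above can be cross-checked with a little arithmetic. The sketch below only combines numbers quoted in the text; the source does not give the input/output token split, so it does not attempt to reconstruct the full bill, and the implied predecessor cost is approximate because the ~20% figure is itself rounded.

```python
def relative_spend(token_change: float, price_change: float) -> float:
    """Spend multiplier when token count and unit price each change by a fraction."""
    return (1.0 + token_change) * (1.0 + price_change)


# Output side: ~44% more tokens at a 58.3% lower per-token price.
output_spend = relative_spend(token_change=0.44, price_change=-0.583)

# Implied cost for Grok 4.20 0309 v2 if $395 is ~20% lower (approximate).
implied_previous_cost = 395 / (1.0 - 0.20)

print(f"Output-token spend multiplier: {output_spend:.2f}")
print(f"Implied previous suite cost:   ${implied_previous_cost:.0f}")
```

On these numbers, output-token spend falls to roughly 0.60 of its previous level even though more tokens are generated, which is why the price cut more than offsets the extra verbosity.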

The largest single benchmark improvement is on GDPval-AA, where Grok 4.3 scores an ELO of 1500, up 321 points from Grok 4.20 0309 v2's score of 1179

Breakdown of individual evaluations, including leading scores on 𝜏²-Bench Telecom and IFBench

See Artificial Analysis for further details and benchmarks: artificialanalysis.ai/models/grok-4-3

Gemini 3.1 Pro Preview

Google is once again the leader in AI: Gemini 3.1 Pro Preview leads the Artificial Analysis Intelligence Index, 4 points ahead of Claude Opus 4.6 while costing less than half as much to run

@GoogleDeepMind gave us pre-release access to Gemini 3.1 Pro Preview. It leads 6 of the 10 evaluations that make up the Artificial Analysis Intelligence Index and improves significantly over Gemini 3 Pro Preview across capabilities, with the biggest gains in reasoning and knowledge, coding, and hallucination reduction.

Gemini 3.1 Pro Preview also remains relatively token efficient, using ~57M tokens to run the Artificial Analysis Intelligence Index (+1M from Gemini 3 Pro Preview), lower than other frontier models at max reasoning settings such as Opus 4.6 (max) and GPT-5.2 (xhigh). Combined with lower per-token pricing, Gemini 3.1 Pro Preview is cost-efficient among frontier peers, costing less than half as much as Opus 4.6 (max) to run the full Intelligence Index, though still nearly 2x the leading open-weights model, GLM-5.

Key Takeaways

- State-of-the-art intelligence at lower costs: Gemini 3.1 Pro Preview is leading 6 of the 10 evaluations that make up the Artificial Analysis Intelligence Index at less than half the cost to run of frontier peers from @OpenAI and @AnthropicAI. It obtains the highest score in Terminal-Bench Hard (agentic coding), AA-Omniscience (knowledge & hallucination), Humanity's Last Exam (reasoning & knowledge), GPQA-Diamond (scientific reasoning), SciCode (coding) and CritPt (research-level physics). The CritPt score is particularly notable, scoring 18% on unpublished, research-level physics reasoning problems, over 5 p.p. above the next best model

- Improved real-world agentic performance, but not leading: Gemini 3.1 Pro Preview shows an improvement in GDPval-AA, our agentic evaluation focusing on real-world tasks, but is still not the leading model in this area. The model increases its ELO score over 100 points to 1316 (up from Gemini 3 Pro Preview), however still sits behind Claude Sonnet 4.6, Opus 4.6, GPT-5.2 (xhigh), and GLM-5

- Leading coding abilities: Gemini 3.1 Pro Preview leads the Artificial Analysis Coding Index, achieving the highest score in both Terminal-Bench Hard (54%) and SciCode (59%)

- Reduced hallucinations: Gemini 3.1 Pro Preview shows a major improvement in tendency to guess incorrectly when it doesn't know the answer, reducing its AA-Omniscience hallucination rate by 38 p.p. from Gemini 3 Pro Preview

- Maintained token and cost efficiency: Gemini 3.1 Pro Preview improves without material increases in cost or token usage. It uses only ~2% more tokens to run the Artificial Analysis Intelligence Index than Gemini 3 Pro Preview, and keeps the same pricing ($2/$12 per 1M input/output tokens for ≤200k context). Its cost to run the Artificial Analysis Intelligence Index of $892 is less than half of frontier models such as Opus 4.6 (max) and GPT-5.2 (xhigh), though still ~2x the cost of leading open weights models such as GLM 5 ($547)

- Google takes top 3 spots in multi-modality: Gemini 3.1 Pro Preview ranks #1 on MMMU-Pro, our multimodal understanding and reasoning benchmark, ahead of Gemini 3 Pro Preview and Gemini 3 Flash, reinforcing Google's leadership in multimodal reasoning

- Other model details: Gemini 3.1 Pro Preview retains the same 1 million token context window as its predecessor, and includes support for tool calling, structured outputs, and JSON mode

Gemini 3.1 Pro Preview improves without becoming more expensive or much more verbose, using only ~1M more tokens compared to Gemini 3 Pro Preview, representing a $72 increase in cost to run the Artificial Analysis Intelligence Index. This cost is less than half of frontier peers such as Opus 4.6 (max) and GPT-5.2 (xhigh), though still ~2x the cost of leading open-weights models such as GLM 5 and Kimi K2.5.

Gemini 3.1 Pro Preview has an average speed of 114 output tokens/s. Although slightly slower than its predecessor (-10 t/s), it remains one of the fastest models in the top 10 of the Artificial Analysis Intelligence Index, trailing only other Google models (Gemini 3 Flash and Gemini 3 Pro Preview).

MiMo-V2-Flash

Xiaomi has just launched MiMo-V2-Flash, a 309B open weights reasoning model that scores 66 on the Artificial Analysis Intelligence Index. This release places Xiaomi alongside other leading AI model labs.

Key benchmarking takeaways

- Strengths in Agentic Tool Use and Competition Math: MiMo-V2-Flash scores 95% on τ²-Bench Telecom and 96% on AIME 2025, demonstrating strong performance on agentic tool-use workflows and competition-style mathematical reasoning. MiMo-V2-Flash currently leads the τ²-Bench Telecom category among evaluated models

- Cost competitive: The full Artificial Analysis evaluation suite cost just $53 to run. This is supported by MiMo-V2-Flash's highly competitive pricing of $0.10 per million input and $0.30 per million output, making it particularly attractive for cost-sensitive deployments and large-scale production workloads. This is similar to DeepSeek V3.2 ($54 total cost to run), and well below GPT-5.2 ($1,294 total cost to run)

- High token usage: MiMo-V2-Flash demonstrates high verbosity and token usage relative to other models in the same intelligence tier, using ~150M reasoning tokens across the Artificial Analysis Intelligence suite

- Open weights: MiMo-V2-Flash is open weights and is 309B parameters with 15B active at inference time. Weights are released under an MIT license, continuing the trend of Chinese AI model labs open sourcing their frontier models

MiMo-V2-Flash demonstrates particular strength in agentic tool-use and Competition Math, scoring 95% on τ²-Bench Telecom and 96% on AIME 2025. This places it amongst the best performing models in these categories.

MiMo-V2-Flash is one of the most cost-effective models for its intelligence, priced at only $0.10 per million input tokens and $0.30 per million output tokens.

Stirrup

Announcing Stirrup, our new open source framework for building agents. It's lightweight, flexible, extensible and incorporates best-practices from leading agents like Claude Code

Stirrup differs from other agent frameworks by avoiding the rigidity that can degrade output quality. Stirrup lets models drive their own workflow, like Claude Code, while still giving developers structure and building in essential features like context management, MCP support and code execution. We use Stirrup at Artificial Analysis as part of our agentic benchmarks, including as part of our GDPval-AA evaluation being released later today. Just 'pip install stirrup' to start building your own agents today!

Key advantages

- Works with the model, not against it: Stirrup steps aside and lets the model decide how to solve multi step tasks, as opposed to existing frameworks which impose strict patterns that limit performance.

- Best practices built in: We studied leading agent systems (e.g. Claude Code) to extract practical patterns around context handling, tool design, and workflow stability, and embedded those directly into the framework.

- Fully customizable: Use Stirrup as a package or as a starting template to build your own fully customized agents.

Feature highlights

- Essential tools ready to use: Ships with pre built tools such as online search and browsing, code execution (local, docker, or using an @e2b sandbox), MCP client and document IO

- Flexible tool layer: A Generic Tool interface makes it simple to define and extend custom tools

- Context management: Automatic summarization to stay within context limits while preserving task fidelity

- Provider flexibility: Built in support for OpenAI compatible APIs (including @OpenRouterAI) and LiteLLM, or bring your own client

- Multimodal support: Process images, video, and audio with automatic format handling

Stirrup agents can be easily set up in just a few lines of code

Stirrup includes built in logging to help you observe and debug agents

Artificial Analysis Openness Index

Introducing the Artificial Analysis Openness Index: a standardized and independently assessed measure of AI model openness across availability and transparency

Openness is not just the ability to download model weights. It is also licensing, data and methodology - we developed a framework underpinning the Artificial Analysis Openness Index to incorporate these elements. It allows developers, users, and labs to compare across all these aspects of openness on a standardized basis, and brings visibility to labs advancing the open AI ecosystem.

A model with a score of 100 in Openness Index would be open weights and permissively licensed with full training code, pre-training data and post-training data released - allowing users to not just use the model but reproduce its training in full, or take inspiration from some or all of the model creator's approach to build their own model. We have not yet awarded any models a score of 100!

Key details

- Few models and providers take a fully open approach. We see a strong and growing ecosystem of open weights models, including leading models from Chinese labs such as Kimi K2, Minimax M2, and DeepSeek V3.2. However, releases of data and methodology are much rarer - OpenAI's gpt-oss family is a prominent example of open weights and Apache 2.0 licensing, but minimal disclosure otherwise.

- OLMo from @allen_ai leads the Openness Index at launch. Living up to AI2's mission to provide 'truly open' research, the OLMo family achieves the top score of 89 (16 of a maximum of 18 points) on the Index by prioritizing full replicability and permissive licensing across weights, training data, and code. With the recent launch of OLMo 3, this included the latest version of AI2's data, utilities and software, full details on reasoning model training, and the new Dolci post-training dataset.

- NVIDIA's Nemotron family also performs strongly for openness. @NVIDIAAI models such as NVIDIA Nemotron Nano 9B v2 reach a score of 67 on the Index due to their release alongside extensive technical reports detailing their training process, open source tooling for building models like them, and the Nemotron-CC and Nemotron post-training datasets.

- We're tracking both open weights and closed weights models. Openness Index is a new way to think about how open models are, and we will be ranking closed weights models alongside open weights models to recognize the scope of methodology and data transparency associated with closed model releases.

Methodology & Context

- We analyze openness using a standardized framework covering model availability (weights & license) and model transparency (data and methodology). This means we capture not just how freely a model can be used, but visibility into its training and knowledge, and potential to replicate or build on its capabilities or data.

- Model availability is measured based on the access and licensing of the model/weights themselves, while transparency comprises subcomponents for access and licensing for methodology, pre-training data, and post-training data.

- As seen with releases like DeepSeek R1, sharing methodology accelerates progress. We hope the Index encourages labs to balance competitive moats with the benefits of sharing the "how" alongside the "what."

- AI model developers may choose not to fully open their models for a wide range of reasons. We feel strongly that there are important advantages to the open AI ecosystem and supporting the open ecosystem is a key reason we developed the Openness Index. We do not, however, wish to dismiss the legitimacy of the tradeoffs that greater openness comes with, and we do not intend to treat Openness Index as a strictly 'higher is better' scale.

The Openness Index breaks down a total of 18 points across the four subcomponents, and we then represent the overall value on a normalized 0-100 scale. We will continue to review and iterate this framework as the model ecosystem develops and new factors emerge.
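As a rough illustration of the normalization described above, the sketch below maps a point total out of 18 onto the 0-100 scale. Rounding to the nearest integer is an assumption, as is the 12-point total used for the second example (the source only states the resulting score of 67).

```python
MAX_POINTS = 18  # total points available across the four subcomponents


def openness_score(points: int, max_points: int = MAX_POINTS) -> int:
    """Normalize a raw point total to a 0-100 score (assumed: round to nearest)."""
    if not 0 <= points <= max_points:
        raise ValueError(f"points must be between 0 and {max_points}")
    return round(100 * points / max_points)


olmo_score = openness_score(16)        # 16 of 18 points, as reported for OLMo
twelve_point_score = openness_score(12)  # hypothetical 12-point model

print(olmo_score, twelve_point_score)
```

Under this assumed rounding, 16 points reproduces the reported top score of 89, and a 12-point total would land on 67.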

In today's model landscape, transparency is much rarer than availability. While we see a wide range of models with open weights and permissive licensing, nearly all are clustered in the top left quadrant of the chart with lower-end transparency. This reflects the current state of the ecosystem - many models have open weights, but few have open data or methodologies.

Claude Opus 4.5

Anthropic's new Claude Opus 4.5 is the #2 most intelligent model in the Artificial Analysis Intelligence Index, narrowly behind Google's Gemini 3 Pro and tying OpenAI's GPT-5.1 (high)

Claude Opus 4.5 delivers a substantial intelligence uplift over Claude Sonnet 4.5 (+7 points on the Artificial Analysis Intelligence Index) and Claude Opus 4.1 (+11 points), establishing it as @AnthropicAI's new leading model. Anthropic has dramatically cut per-token pricing for Claude Opus 4.5 to $5/$25 per million input/output tokens. However, compared to the prior Claude Opus 4.1 model it used 60% more tokens to complete our Intelligence Index evaluations (48M vs. 30M). This translates to a substantial reduction in the cost to run our Intelligence Index evaluations from $3.1k to $1.5k, but not as significant as the headline price cut implies. Even with the lower per-token price, the model still cost significantly more to run than other models including Gemini 3 Pro (high), GPT-5.1 (high), and Claude Sonnet 4.5 (Thinking), and among all models only cost less than Grok 4 (Reasoning).
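The gap between the headline price cut and the actual saving can be sketched with the figures quoted above. This is a back-of-the-envelope estimate that assumes the 60% token increase applies uniformly to everything that is billed, which the source does not state.

```python
# Per-token prices in $ per 1M (input, output) tokens, from the text.
opus_4_1_price = (15.0, 75.0)
opus_4_5_price = (5.0, 25.0)

# Tokens used on the Intelligence Index, in millions, from the text.
opus_4_1_tokens_m = 30.0
opus_4_5_tokens_m = 48.0

price_ratio = opus_4_5_price[1] / opus_4_1_price[1]  # same ratio for input and output
token_ratio = opus_4_5_tokens_m / opus_4_1_tokens_m

# Assumption: cost scales with price ratio times token ratio.
estimated_cost_ratio = price_ratio * token_ratio
reported_cost_ratio = 1.5 / 3.1  # $1.5k vs $3.1k, from the text

print(f"Price ratio:          {price_ratio:.2f}")
print(f"Token ratio:          {token_ratio:.2f}")
print(f"Estimated cost ratio: {estimated_cost_ratio:.2f}")
print(f"Reported cost ratio:  {reported_cost_ratio:.2f}")
```

The prices fall to a third, but the estimate lands at just over half the previous cost once the extra tokens are counted. That is close to the reported ratio of about 0.48; the remaining difference is presumably down to parts of the bill that this simple model ignores.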

Key benchmarking takeaways

- Anthropic's most intelligent model: In reasoning mode, Claude Opus 4.5 scores 70 on the Artificial Analysis Intelligence Index. This is a jump of +7 points from Claude Sonnet 4.5 (Thinking), which was released in September 2025, and +11 points from Claude Opus 4.1 (Thinking). Claude Opus 4.5 is now the second most intelligent model. It places ahead of Grok 4 (65) and Kimi K2 Thinking (67), ties GPT-5.1 (high, 70), and trails only Gemini 3 Pro (73). Claude Opus 4.5 (Thinking) scores 5% on CritPt, a frontier physics eval reflective of research assistant capabilities. It sits only behind Gemini 3 Pro (9%) and ties GPT-5.1 (high, 5%)

- Largest increases in coding and agentic tasks: Compared to Claude Sonnet 4.5 (Thinking), the biggest uplifts appear across coding, agentic tasks, and long-context reasoning, including LiveCodeBench (+16 p.p.), Terminal-Bench Hard (+11 p.p.), 𝜏²-Bench Telecom (+12 p.p.), AA-LCR (+8 p.p.), and Humanity's Last Exam (+11 p.p.). Claude Opus achieves Anthropic's best scores yet across all 10 benchmarks in the Artificial Analysis Intelligence Index. It also earns the highest score on Terminal-Bench Hard (44%) of any model and ties Gemini 3 Pro on MMLU-Pro (90%)

- Knowledge and Hallucination: In our recently launched AA-Omniscience Index, which measures embedded knowledge and hallucination of language models, Claude Opus 4.5 places 2nd with a score of 10. It sits only behind Gemini 3 Pro Preview (13) and ahead of Claude Opus 4.1 (Thinking, 5) and GPT-5.1 (high, 2). Claude Opus 4.5 (Thinking) scores the second-highest accuracy (43%) and has the 4th-lowest hallucination rate (58%), trailing only Claude Haiku (Thinking, 26%), Claude Sonnet 4.5 (Thinking, 48%), and GPT-5.1 (high). Claude Opus 4.5 continues to demonstrate Anthropic's leadership in AI safety with a lower hallucination rate than select other frontier models such as Grok 4 and Gemini 3 Pro

- Non-reasoning performance: In non-reasoning mode, Claude Opus 4.5 scores 60 on the Artificial Analysis Intelligence Index and is the most intelligent non-reasoning model. It places ahead of Qwen3 Max (55), Kimi K2 0905 (50), and Claude Sonnet 4.5 (50)

- Token efficiency: Anthropic continues to demonstrate impressive token efficiency. It has improved intelligence without a significant increase in token usage (compared to Claude Sonnet 4.5, evaluated with a maximum reasoning budget of 64k tokens). Claude Opus 4.5 uses 48M output tokens to run the Artificial Analysis Intelligence Index. This is lower than other frontier models, such as Gemini 3 Pro (high, 92M), GPT-5.1 (high, 81M), and Grok 4 (Reasoning, 120M)

- Pricing: Anthropic has reduced the per-token pricing of Claude Opus 4.5 compared to Claude Opus 4.1. Claude Opus 4.5 is priced at $5/$25 per 1M input/output tokens (vs. $15/$75 for Claude Opus 4.1). This positions it much closer to Claude Sonnet 4.5 ($3/$15 per 1M tokens) while offering higher intelligence in thinking mode

Key model details

- Context window: 200K tokens

- Max output tokens: 64K tokens

- Availability: Claude Opus 4.5 is available via Anthropic's API, Google Vertex, Amazon Bedrock and Microsoft Azure. Claude Opus 4.5 is also available via Claude app and Claude Code

A key differentiator for the Claude models remains that they are substantially more token-efficient than all other reasoning models. Claude Opus 4.5 has significantly increased intelligence without a large increase in output tokens, differing substantially from other model families that rely on greater reasoning at inference time (i.e., more output tokens). On the Output Tokens Used in Artificial Analysis Intelligence Index vs Intelligence Index chart, Claude 4.5 Opus (Thinking) sits on the Pareto frontier.

This output token efficiency contributes to Claude Opus 4.5 (in Thinking mode) offering a better tradeoff between intelligence and cost to run the Artificial Analysis Intelligence Index than Claude Opus 4.1 (Thinking) and Grok 4 (Reasoning).

Gemini 3 Pro

Gemini 3 Pro is the new leader in AI. Google has the leading language model for the first time, with Gemini 3 Pro debuting +3 points above GPT-5.1 in our Artificial Analysis Intelligence Index

@GoogleDeepMind gave us pre-release access to Gemini 3 Pro Preview. The model outperforms all other models in Artificial Analysis Intelligence Index. It demonstrates strength across the board, coming in first in 5 of the 10 evaluations that make up Intelligence Index. Despite these intelligence gains, Gemini 3 Pro Preview shows improved token efficiency from Gemini 2.5 Pro, using significantly fewer tokens on the Intelligence Index than other leading models such as Kimi K2 Thinking and Grok 4. However, given its premium pricing ($2/$12 per million input/output tokens for <200K context), Gemini 3 Pro is among the most expensive models to run our Intelligence Index evaluations.

Key takeaways

- Leading intelligence: Gemini 3 Pro Preview is the leading model in 5 of 10 evals in the Artificial Analysis Intelligence Index, including GPQA Diamond, MMLU-Pro, HLE, LiveCodeBench and SciCode. Its score of 37% on Humanity's Last Exam is particularly impressive, improving on the previous best model by more than 10 percentage points. It also is leading in AA-Omniscience, Artificial Analysis' new knowledge and hallucination evaluation, coming first in both Omniscience Index (our lead metric that takes off points for incorrect answers) and Omniscience Accuracy (percentage correct). Given that factual recall correlates closely with model size, this may point to Gemini 3 Pro being a much larger model than its competitors

- Advanced coding and agentic capabilities: Gemini 3 Pro Preview leads two of the three coding evaluations in the Artificial Analysis Intelligence Index, including an impressive 56% in SciCode, an improvement of over 10 percentage points from the previous highest score. It is also strong in agentic contexts, achieving the second highest score in Terminal-Bench Hard and Tau2-Bench Telecom

- Multimodal capabilities: Gemini 3 Pro Preview is a multi-modal model, with the ability to take text, images, video and audio as input. It scores the highest of any model on MMMU-Pro, a benchmark that tests reasoning abilities with image inputs. Google now occupies the first, third and fourth position in our MMMU-Pro leaderboard (with GPT-5.1 taking out second place just last week)

- Premium Pricing: To measure cost, we report Cost to Run the Artificial Analysis Intelligence Index, which combines input and output token prices with token efficiency to reflect true usage cost. Despite the improvement in token efficiency from Gemini 2.5 Pro, Gemini 3 Pro Preview costs more to run. Its higher token pricing of $2/$12 USD per million input/output tokens (≤200k token context) results in a 12% increase in the cost to run the Artificial Analysis Intelligence Index compared to its predecessor, and the model is among the most expensive to run on our Intelligence Index. Google also continues to price long context workloads higher than lower context workloads, charging $4/$18 per million input/output tokens for >200k token context.

- Speed: Gemini 3 Pro Preview has comparable speeds to Gemini 2.5 Pro, with 128 output tokens per second. This places it ahead of other frontier models including GPT-5.1 (high), Kimi K2 Thinking and Grok 4. This is potentially supported by Google's first-party TPU accelerators

- Other details: Gemini 3 Pro Preview has a 1 million token context window, and includes support for tool calling, structured outputs, and JSON mode

For the first time, Google has the most intelligent model, with Gemini 3 Pro Preview improving on the previous most intelligent model, OpenAI's GPT-5.1 (high), by 3 points

Gemini 3 Pro Preview takes the top spot on the Artificial Analysis Omniscience Index, our new benchmark for measuring knowledge and hallucination across domains. Gemini 3 Pro Preview comes in first for both Omniscience Index (our lead metric that takes off points for incorrect answers) and Omniscience Accuracy (percentage correct).

Its win in Accuracy is actually much larger than its overall Index win - this is driven by a higher Hallucination Rate than other models (88%).

We have previously shown that Omniscience Accuracy is closely correlated with model size (total parameter count). Gemini 3 Pro's significant lead in this metric may point to it being a much larger model than its competitors.

Anthropic is closing a $50 billion funding round at around $900 billion valuation, potentially surpassing OpenAI and becoming one of the world's most valuable private companies before an anticipated IPO later this year.

Original article

Anthropic is asking investors to submit allocations for the AI company's latest fundraise within the next 48 hours, according to sources familiar with the matter. The round, which TechCrunch reported is expected to be roughly $50 billion, is estimated to close within two weeks, the sources said.

As we previously reported, Anthropic is targeting a valuation of about $900 billion. However, given the soaring demand from investors seeking a stake in the company, the final valuation may well exceed that figure, our sources said.

Anthropic declined to comment.

Despite the intense demand, some early backers — particularly those who invested in 2024 or earlier — are skipping this round. Instead, these investors are waiting to potentially cash out during Anthropic's anticipated IPO later this year.

The company is raising what is likely to be its last private round before going public to fund its massive computing needs.

Anthropic announced this month that its annual revenue run rate has surpassed $30 billion. But as we previously reported, the company's run rate is currently closer to $40 billion, according to sources with knowledge of the company's financials.

Anthropic raised its last round in February at a $380 billion valuation. At $900 billion, the company would not only more than double its valuation but would also surpass its chief rival, OpenAI, which closed a record-breaking $122 billion round at an $852 billion post-money valuation earlier this year.

KV Cache Locality: The Hidden Variable in Your LLM Serving Cost (11 minute read)

Your LLM load balancer is probably wasting 20-40% of GPU compute recomputing prefills that already exist in cache on a different GPU in your cluster.

Deep dive

- Round-robin and least-connections load balancing waste GPU compute by routing requests to GPUs without cached KV pairs, forcing redundant prefill computation

- Benchmarks on 8x A100s with CodeLlama 13B show prefix-aware routing improves cache hits from 12.5% to 97.5%, reduces P99 TTFT from 6.8s to 1.0s, and increases throughput 22%

- Cache miss penalty on CodeLlama 13B is 500ms vs 18ms for cache hit, a 28x difference in time-to-first-token

- Wasted prefill costs approximately $1,200-$1,800 monthly per 8-GPU node, or 22% of total GPU spend

- Performance gains scale with model size (13B-70B sweet spot), prefix length (16K tokens show 43.6% improvement vs 29.7% at 8K), and sharing ratio

- Even 50% prefix sharing achieves 91% cache hit rate with prefix-aware routing vs ~11% with round-robin

- Tail latency improvements are dramatic because cache misses under load create queueing delays that compound across requests

- Prefix-aware routing doesn't help models ≤8B (routing overhead ~10ms exceeds savings), short prefixes (<500 tokens), or unique conversations

- Load imbalance is a risk when traffic concentrates on specific prefixes, requiring load-aware fallbacks to prevent GPU hot spots

- Article introduces Ranvier, a prefix-aware load balancer using adaptive radix trees to route based on token locality

Decoder

- KV cache: The key-value pairs computed during prefill that transformers cache in GPU memory to avoid recomputing when generating output tokens

- Prefill: The initial phase where the model processes all input tokens (system prompt, context, history) and computes their key-value pairs; compute-intensive and scales with token count

- Decode: The generation phase where the model produces output tokens one at a time, reusing the cached key-value pairs from prefill; much faster than prefill

- TTFT (Time to First Token): The latency between receiving a request and returning the first generated token, heavily influenced by prefill time and cache hits

- vLLM: A popular open-source LLM serving engine that implements KV caching and other optimizations for transformer inference

- RAG (Retrieval-Augmented Generation): A pattern where LLMs are given retrieved context documents as part of the prompt, often resulting in long shared system prompts across requests

Original article

Every time your load balancer sends a request to the wrong GPU, that GPU recomputes a prefill it already computed somewhere else. The KV cache for that 4,000-token system prompt exists. It's just sitting on a different card. Your load balancer doesn't know. It can't know. It's counting connections, not tokens.

That recomputation takes real time and real money. On a Llama 3.1 70B at half precision, a 4,000-token prefill takes over a second. If eight GPUs each recompute the same system prompt independently because round-robin sent one request to each, you just paid for the same work eight times. Multiply by every request, every hour, every day.

This post is about the cost of that mistake, how to measure it, and what changes when your load balancer understands token locality.

What the KV Cache Actually Saves You

A transformer processes input tokens in two phases. Prefill computes the key-value pairs for every input token: the system prompt, the conversation history, the RAG context. This is the expensive part. It scales with token count and model size, and it's compute-bound on the GPU. Decode generates output tokens one at a time, each one reusing the key-value pairs from prefill. This is the cheap part.

vLLM and other serving engines cache the key-value pairs from prefill in GPU memory. When a new request arrives with the same token prefix, the engine skips prefill entirely and jumps straight to decode. This is the KV cache hit.

On our benchmarks, a cache hit on CodeLlama 13B returns in 18ms at P50. A cache miss takes around 500ms. That's a 28x gap in time-to-first-token, decided entirely by whether the tokens were already on that GPU.

But here's the thing: the KV cache is per-GPU. GPU 0's cache doesn't help GPU 3. If your load balancer sends Request A to GPU 0 and the identical Request B to GPU 3, Request B pays full prefill cost even though the work was already done. The cache exists. It's just on the wrong card.

The Math on Wasted Prefill

Let's make this concrete. You're running a RAG application with a 4,000-token system prompt. You have 8 GPUs serving CodeLlama 13B. You're handling 30 concurrent users with a stress workload (heavy on large and extra-large prefixes). Here's what we measured on 8x A100s:

Round-robin routing:

- Cache hit rate: 12.5%

- P99 TTFT: 6,800ms

- Throughput: 36.3 req/s

With 8 backends and random routing, you'd expect ~12.5% cache hits by chance. One in eight requests happens to land on the GPU that already has its prefix cached. The other 87.5% recompute from scratch.

Prefix-aware routing:

- Cache hit rate: 97.5%

- P99 TTFT: 1,000ms

- Throughput: 44.4 req/s

Same GPUs. Same model. Same workload. The only change is which GPU receives which request.
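The gap between the two routing policies can be reproduced in a toy simulation. This is a sketch under stated assumptions, not the benchmark setup: 64 distinct prefixes requested uniformly at random, 8 GPUs, and an LRU cache that holds 8 prefixes per GPU. All of those numbers, and the `simulate` helper itself, are hypothetical.

```python
import random
from collections import OrderedDict


def simulate(route, num_gpus=8, num_prefixes=64, cache_slots=8,
             num_requests=20_000, seed=0):
    """Return the cache hit rate for a routing function route(i, prefix) -> int."""
    rng = random.Random(seed)
    caches = [OrderedDict() for _ in range(num_gpus)]  # one LRU cache per GPU
    hits = 0
    for i in range(num_requests):
        prefix = rng.randrange(num_prefixes)
        cache = caches[route(i, prefix) % num_gpus]
        if prefix in cache:
            hits += 1
            cache.move_to_end(prefix)      # mark as most recently used
        else:
            cache[prefix] = True
            if len(cache) > cache_slots:
                cache.popitem(last=False)  # evict the least recently used prefix
    return hits / num_requests


# Round-robin ignores the prefix; prefix-aware routing ignores the request index.
round_robin = simulate(lambda i, prefix: i)
prefix_aware = simulate(lambda i, prefix: prefix)
print(f"round-robin: {round_robin:.1%}, prefix-aware: {prefix_aware:.1%}")
```

With these parameters each GPU can hold exactly its share of the prefixes, so prefix-aware routing misses only on first sight of a prefix, while round-robin makes every GPU churn through all 64. Different cache sizes or skewed traffic would change both numbers.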

That throughput difference, 36.3 vs 44.4 requests per second, is a 22.3% improvement. On hardware costing ~$10/hour, that's either 22% more throughput for free or the same throughput on fewer GPUs. Over a month of continuous operation, on a single 8-GPU node, the wasted prefill in round-robin comes to roughly $1,200–$1,800 in GPU-hours (22% of ~$7,300/month at $10/hr) that produce no useful work. Multiply by the number of nodes in your cluster.
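The arithmetic behind those figures, as a short sketch. The 730 hours per month is an assumption (24 × 365 / 12, rounded); the throughput and price figures are the ones quoted above.

```python
HOURS_PER_MONTH = 730        # assumption: average month length in hours
NODE_COST_PER_HOUR = 10.0    # USD for one 8-GPU node, as quoted above


def throughput_gain(baseline_rps: float, improved_rps: float) -> float:
    """Relative throughput improvement over the baseline."""
    return (improved_rps - baseline_rps) / baseline_rps


gain = throughput_gain(36.3, 44.4)
monthly_cost = NODE_COST_PER_HOUR * HOURS_PER_MONTH
wasted = monthly_cost * gain  # treats the throughput gap as spend on redundant prefill

print(f"gain: {gain:.1%}")
print(f"monthly node cost: ${monthly_cost:,.0f}")
print(f"attributed to redundant prefill: ${wasted:,.0f}")
```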

Where the Savings Compound

The benefit scales with three variables: model size, prefix length, and prefix sharing ratio.

Model size

Larger models have more expensive prefill, so cache misses cost more.

| Model | XLarge Cache Hit Improvement | Aggregate Throughput Gain |
|---|---|---|
| Llama 3.1 8B | 31.6% | ~0% (inference too fast) |
| CodeLlama 13B | 35.9% | +13.7% to +22.3% |
| Llama 3.1 70B | 43.8% | ~0% (compute-bound) |

The 8B numbers are the warning case. When prefill is already fast (~420ms total inference), the 7-10ms routing overhead eats into the savings. If prefill isn't your bottleneck, prefix-aware routing doesn't help.

The 70B numbers tell a different story. Aggregate throughput doesn't change because the GPUs are already compute-saturated. But individual requests are 44% faster on cache hit (P50: 1,498ms hit vs 2,665ms miss). Your users feel the difference even if your throughput dashboard doesn't.

The sweet spot is 13B-70B models where prefill is expensive enough to matter but the GPUs aren't so saturated that they can't benefit from skipping it.

Prefix length

Longer shared prefixes mean more wasted compute per cache miss.

| Max Prefix Tokens | Cache Miss P50 | Cache Hit P50 | Improvement |
|---|---|---|---|
| 8,192 (default) | 638ms | 448ms | 29.7% |
| 16,384 | 817ms | 461ms | 43.6% |

At 16K tokens, a cache miss costs roughly 350ms more than a hit at P50 (817ms vs 461ms), GPU compute that a hit avoids entirely. As context windows keep growing, this gap widens.

Prefix sharing ratio

This is the percentage of tokens shared across requests. A RAG application where every request includes the same 4,000-token knowledge base has a high sharing ratio. A chat application where every conversation is unique has a low one.

| Sharing Ratio | Round-Robin Hits | Prefix-Aware Hits | Improvement |
|---|---|---|---|
| 50% | ~11% | 91% | +80pp |
| 70% | ~13% | 90% | +77pp |
| 90% | ~12% | 97-98% | +85pp |

Even at 50% sharing, where half the tokens are unique, prefix-aware routing still achieves 91% cache hits. A consistent hash fallback (deterministic routing based on prefix when no learned route exists yet) ensures that requests with the same prefix land on the same GPU even before the system has observed them.
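A deterministic fallback of this kind might look like the sketch below. It is an illustration, not Ranvier's code: `fallback_backend` is a hypothetical name, and it uses plain hash-modulo rather than a true consistent-hash ring, so adding or removing a backend would remap most prefixes.

```python
import hashlib


def fallback_backend(prefix_tokens: list[int], num_backends: int) -> int:
    """Deterministically map a token prefix to a backend index."""
    # Serialize token ids to bytes and use a cryptographic hash so the result
    # is stable across processes and machines. Assumes ids fit in 32 bits.
    data = b"".join(t.to_bytes(4, "little") for t in prefix_tokens)
    digest = hashlib.sha256(data).digest()
    return int.from_bytes(digest[:8], "big") % num_backends


system_prompt = [101, 2023, 2003, 1037, 2291, 25732]  # hypothetical token ids
print(fallback_backend(system_prompt, num_backends=8))
```

Because the mapping depends only on the tokens, two routers that have never seen a prefix still agree on where to send it.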

The P99 Story

Cost isn't just GPU-hours. It's also the cost of slow responses.

At 30 concurrent users on CodeLlama 13B over 30 minutes of sustained load, round-robin routing produced a P99 TTFT of 6,800ms. That's 6.8 seconds before the first token appears. For an interactive application like code completion or chat, that's a broken experience. Users don't wait 6.8 seconds.

Prefix-aware routing brought that same P99 down to 1,000ms. Same hardware, same model, same concurrency. An 85.3% improvement on tail latency.

Why does the tail improve so much? Because tail latency in LLM serving is driven by cache misses under load. When the GPU is busy generating tokens for other requests, a new request that requires full prefill gets queued behind them. With round-robin, 87.5% of requests need full prefill, so the queue is always full of expensive work. With prefix-aware routing, 97.5% of requests skip prefill entirely, so the queue drains faster and the few remaining misses get processed sooner.

This is the strongest argument for KV cache locality. Throughput improvements look good on a dashboard. Tail latency is what users actually experience.
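A rough way to see why the queue fills up is to compute the average prefill work per request at each hit rate. The sketch below plugs in the P50 hit and miss latencies quoted earlier as if they were fixed costs and ignores queueing entirely, so it understates the real tail; it is an approximation, not a latency model.

```python
HIT_MS, MISS_MS = 18.0, 500.0  # P50 figures quoted earlier for CodeLlama 13B


def mean_prefill_ms(hit_rate: float) -> float:
    """Expected time-to-first-token work per request, ignoring queueing."""
    return hit_rate * HIT_MS + (1.0 - hit_rate) * MISS_MS


round_robin_ms = mean_prefill_ms(0.125)
prefix_aware_ms = mean_prefill_ms(0.975)
print(f"round-robin: {round_robin_ms:.0f} ms per request")
print(f"prefix-aware: {prefix_aware_ms:.0f} ms per request")
print(f"ratio: {round_robin_ms / prefix_aware_ms:.1f}x")
```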

What Doesn't Work

Prefix-aware routing isn't free, and it doesn't help everywhere.

Small models (≤8B): Inference is already fast enough that the routing overhead (~10ms for tokenization + tree lookup) approaches the prefill savings. The net effect is roughly zero.

Short prefixes (<500 tokens): The prefill cost for short sequences is small enough that cache misses don't meaningfully hurt. The routing overhead (~3ms minimum) can exceed the savings.

Unique conversations: If every request has a completely different prefix (no shared system prompt, no shared context), there's nothing to cache. The routing tree learns routes that are never reused.

Load imbalance: Strict prefix affinity can create hot spots. If 80% of your traffic shares the same system prompt, prefix-aware routing sends 80% of traffic to one GPU. We handle this with a load-aware fallback that diverts requests when a backend's in-flight count exceeds twice the median. This trades a cache miss for a balanced GPU, reducing P95 by 36% and P99 by 45% compared to strict affinity. The cache hit rate drops about 5 points, which is the right trade.
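The load-aware fallback rule can be sketched in a few lines. The threshold of twice the median comes from the text; diverting to the least-loaded backend is an assumption, as is the `pick_backend` name.

```python
from statistics import median


def pick_backend(preferred: int, in_flight: list[int], factor: float = 2.0) -> int:
    """Keep prefix affinity unless the preferred backend is overloaded."""
    threshold = factor * median(in_flight)
    if in_flight[preferred] > threshold:
        # Divert: accept a likely cache miss in exchange for a shorter queue.
        return min(range(len(in_flight)), key=in_flight.__getitem__)
    return preferred


print(pick_backend(0, [9, 2, 3, 4]))  # backend 0 is over twice the median
print(pick_backend(0, [5, 2, 3, 4]))  # backend 0 is busy but within the limit
```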

Measuring Your Own Cache Locality

Before you change anything, measure your current cache hit rate. Most vLLM deployments expose this via Prometheus:

- `vllm:gpu_prefix_cache_hit_rate` (or `vllm:gpu_prefix_cache_queries_total` and `_hits_total` on older versions; check your `/metrics` endpoint)

- Compare TTFT distributions between requests with shared vs unique prefixes

- Look at your P99/P50 ratio. A ratio above 5x suggests cache thrashing

If your cache hit rate is already above 80%, you're either lucky or your traffic naturally clusters. If it's below 30%, you're leaving performance on the table.
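Both checks reduce to simple arithmetic once you have the raw numbers. The sketch below assumes you have already collected two samples of the cumulative query and hit counters, plus a list of TTFT measurements; it does not scrape anything, and the helper names are hypothetical.

```python
import math


def hit_rate_from_counters(queries_before, hits_before, queries_after, hits_after):
    """Hit rate over an interval from two samples of cumulative counters."""
    queries = queries_after - queries_before
    if queries <= 0:
        return None  # no traffic, or a counter reset, during the interval
    return (hits_after - hits_before) / queries


def percentile(values, pct):
    """Nearest-rank percentile; pct is in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def tail_ratio(ttft_ms):
    """P99 / P50 of a list of time-to-first-token samples."""
    return percentile(ttft_ms, 99) / percentile(ttft_ms, 50)


print(hit_rate_from_counters(1000, 100, 2000, 400))
print(tail_ratio([20] * 95 + [500] * 5))
```

Working from counter deltas rather than a lifetime average shows the hit rate for current traffic, which matters if your prefix mix changes during the day.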

The variables that matter most:

- How many GPUs are you routing across? More GPUs = lower chance of random cache hits. With 8 GPUs, random routing gives ~12.5% hits.

- How long are your shared prefixes? Longer = more wasted compute per miss.

- What's your prefix sharing ratio? Higher = more opportunity for reuse.

- What model size are you serving? Larger = more expensive prefill per miss.

If you have many GPUs, long shared prefixes, high sharing ratios, and large models, you're likely wasting 20-40% of your GPU compute on redundant prefill.

The Takeaway

KV cache locality is not a tuning knob. It's a multiplier on your existing hardware. The same GPUs, serving the same model, handling the same traffic, produce measurably different throughput and latency depending on one decision: which GPU gets which request.

Round-robin doesn't make that decision. Least-connections doesn't make that decision. They balance load without understanding what the load is. When every request carries thousands of tokens that might already be cached somewhere in your cluster, "balanced" and "efficient" are not the same thing.

We built Ranvier to make that decision. It routes requests to the GPU that already has their token prefix cached, using an adaptive radix tree that learns routes in real time. The first post in this series covered why your load balancer is wasting your GPUs. This post covered what that waste costs. The next one will cover how we tokenize 50,000 requests per second without blocking the event loop.
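The core idea of routing on token prefixes can be shown with a plain trie. This sketch is not Ranvier's implementation and is not an adaptive radix tree: it is a dictionary-based trie with hypothetical names, written to illustrate longest-prefix matching from token ids to a backend.

```python
class PrefixRouter:
    """Longest-prefix match from token ids to a backend index (plain trie)."""

    def __init__(self):
        self.root = {"children": {}, "backend": None}

    def learn(self, tokens, backend):
        """Record that `backend` served, and so likely cached, this prefix."""
        node = self.root
        for tok in tokens:
            node = node["children"].setdefault(
                tok, {"children": {}, "backend": None}
            )
            node["backend"] = backend  # the latest observation wins

    def route(self, tokens):
        """Return the backend for the deepest matching prefix, or None."""
        node, best = self.root, None
        for tok in tokens:
            node = node["children"].get(tok)
            if node is None:
                break
            best = node["backend"]
        return best


router = PrefixRouter()
router.learn([1, 2, 3, 4], backend=0)
router.learn([1, 2, 9], backend=1)
print(router.route([1, 2, 3, 4, 5]))  # shares four tokens with the first prefix
print(router.route([7, 7]))           # nothing learned for this prefix
```

A `None` result is where a deterministic fallback would take over in a fuller design.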

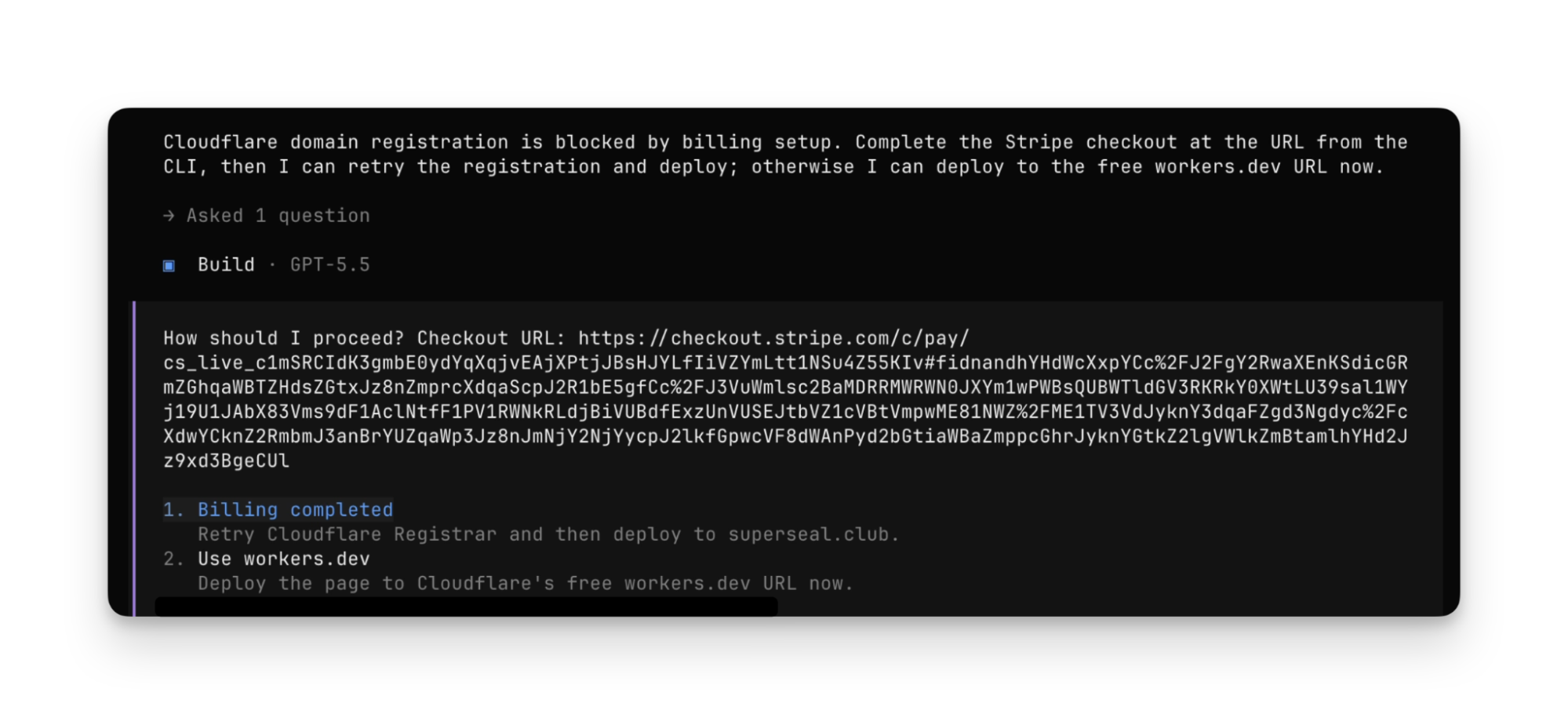

Cursor, the fastest-growing AI coding tool with $2B ARR, sold to xAI for $60B after model providers like Anthropic undercut their resale business into unsustainability.

Deep dive

- Cursor achieved $2B ARR in 13 months with 70% Fortune 1000 penetration, making it the fastest-growing software company in history by traditional SaaS metrics

- Despite being oversubscribed in a $50B funding round, founders sold to xAI for $60B after concluding they couldn't reach $100B independently—that strategic retreat is the key signal

- Anthropic systematically destroyed Cursor's economics by launching Claude Code at effectively 5x lower per-token costs than Cursor paid to resell Claude via API

- Cursor tried multiple defensive strategies: building their own Composer model, sophisticated agent harnesses, enterprise workflows, design features, and aggressive Fortune 500 sales—all failed to escape the squeeze

- Cursor's negative 23% gross margins were recent, caused by model labs collapsing pricing faster than any sales motion could compensate, not founder incompetence with unit economics

- The acquisition gives xAI enterprise distribution, procurement relationships, battle-hardened sales teams, and the most experienced coding agent engineering team outside Anthropic's walls

- For Anthropic, the "win" is pyrrhic—they eliminated a margin-extracting middleman but consolidated that distribution with a well-funded competitor controlling massive compute

- The deal proves the "neutral harness" thesis—building an app that picks the best model regardless of provider—doesn't survive at $50B scale once suppliers identify you as margin to extract

- Implications for competitors like Cognition, Factory, Lovable, and Replit: the best-executed version of their business model couldn't run independently, dramatically lowering the bar for accepting a corporate sponsor

- Alternative strategy for smaller players: niche down to specific verticals or workflows where you can be "unkillable" rather than competing for Fortune 500 seats—that's a real business, just not a $60B one

- For investors, this raises the ceiling on application layer valuations even without profitability paths, as strategic acqui-hires can now reach $60B—much higher than previous Character.AI or Adept deals

- Anthropic is already tightening Claude Code rate limits and cracking down on third-party tools now that the largest token disintermediator is neutralized—the warchest pricing doesn't need to stay defensive

- The constraint on Anthropic's pricing power is remaining competition from OpenAI's GPT-5 at $10/million tokens and cheap Gemini, but expect Claude Code prices to drift upward over coming quarters

- The fundamental lesson: you cannot disintermediate the lab whose tokens you resell if they determine they want to go to war with you—the application layer doesn't get champions, it gets wards with sponsors

Decoder

- ARR: Annual Recurring Revenue, a key SaaS metric measuring predictable yearly income from subscriptions

- Gross margins: Revenue minus cost of goods sold as a percentage; negative means losing money on each sale before operating expenses

- Model lab: Companies like Anthropic, OpenAI, and Google that train foundational AI models from scratch

- Tokens: Units of text that AI models process and charge for; pricing is typically per million tokens consumed

- API rates: Wholesale pricing that developers pay to access AI models programmatically through code

- Harness/wrapper: Software layer sitting between users and AI models, adding features like UI, workflow automation, or multi-model switching

- Disintermediation: Removing middlemen by selling directly to end customers instead of through resellers

- COGS: Cost of Goods Sold, the direct variable costs to deliver each unit of product or service

- Long-horizon tasks: AI agent operations that run for extended periods with multiple decision steps

- Frontier model: State-of-the-art AI models representing the current capability ceiling in the industry

- Warchest: Strategy of deliberately losing money to eliminate competition, planning to profit after winning market dominance

Original article

Cursor is the most operationally successful software company of the AI era. Its founders looked at the path to $100 billion and decided they weren't willing to underwrite it. They sold to xAI for $60 billion in a deal considered to be good for everyone. The deal gives xAI an application surface to put in front of public market investors before the SpaceX IPO, and it gives Cursor a sponsor with compute and a non-competing model lab.

OpenAI traced GPT models' increasing use of goblin metaphors to unintended reward signals in personality tuning, revealing how small training incentives can spread unpredictably across model behavior.

Deep dive

- Goblin mentions in ChatGPT rose 175% after GPT-5.1 launch, with gremlins up 52%, initially appearing harmless but escalating over subsequent model versions

- Investigation found 66.7% of goblin mentions came from the "Nerdy" personality despite it representing only 2.5% of all responses

- The Nerdy personality reward model scored outputs containing "goblin" or "gremlin" higher than identical outputs without them in 76.2% of audited datasets

- Behavior transferred to non-Nerdy contexts because reinforcement learning doesn't guarantee learned patterns stay scoped to their original training condition

- A feedback loop emerged: playful style rewarded → distinctive tics in those outputs → tics appear more in rollouts → rollouts used for supervised fine-tuning → model produces tic more confidently

- Other creature words identified as tics included raccoons, trolls, ogres, and pigeons (though most frog uses were legitimate)

- OpenAI retired the Nerdy personality in March 2026 and filtered creature-words from training data, but GPT-5.5 had already started training before the fix

- GPT-5.5 required developer-prompt instructions to suppress the behavior, which users can disable via command-line flags in Codex

- OpenAI built new auditing tools to track how specific lexical patterns correlate with reward signals across training datasets

- The case demonstrates that model behavior emerges from many small incentives interacting unpredictably, not just major architectural or dataset changes

Decoder

- Reward signal: Numerical score that tells a reinforcement learning model whether an output is desirable, guiding what behaviors get reinforced during training

- Rollouts: Model-generated outputs produced during reinforcement learning training, often reused as training data in subsequent steps

- SFT (Supervised Fine-Tuning): Training phase where the model learns from curated examples, including previously generated outputs

- Transfer learning: When a model applies patterns learned in one context to unrelated situations

- System prompt: Instructions given to a model that shape its personality and response style

Original article

OpenAI linked increased use of “goblin”-style metaphors in GPT-5.1 to reward signals from personality tuning, showing how small incentives can shape model behavior.
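An audit of the kind described above, comparing rewards for otherwise identical outputs with and without a given word, can be sketched as follows. This is not OpenAI's tooling: `reward_fn` is a hypothetical stand-in for a reward model, and the word-stripping is deliberately crude.

```python
import re


def strip_tic(text, words=("goblin", "gremlin")):
    """Build the 'without' variant by deleting the tic words (crude)."""
    pattern = r"\b(?:" + "|".join(map(re.escape, words)) + r")s?\b\s*"
    return re.sub(pattern, "", text, flags=re.IGNORECASE)


def tic_preference_rate(pairs, reward_fn):
    """Share of pairs where the text containing the tic outscores the other.

    pairs: iterable of (with_tic, without_tic) texts.
    reward_fn: callable mapping text to a numeric reward.
    """
    pairs = list(pairs)
    wins = sum(reward_fn(a) > reward_fn(b) for a, b in pairs)
    return wins / len(pairs) if pairs else 0.0


# Toy reward that favors the tic, only to exercise the audit function.
def toy_reward(text):
    return 1.0 if "goblin" in text.lower() else 0.0


samples = ["a goblin bug in the parser", "the cache goblins strike again"]
pairs = [(s, strip_tic(s)) for s in samples]
print(tic_preference_rate(pairs, toy_reward))
```

A rate near 0.5 would suggest the reward is indifferent to the word; a rate well above it flags a lexical pattern worth investigating.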

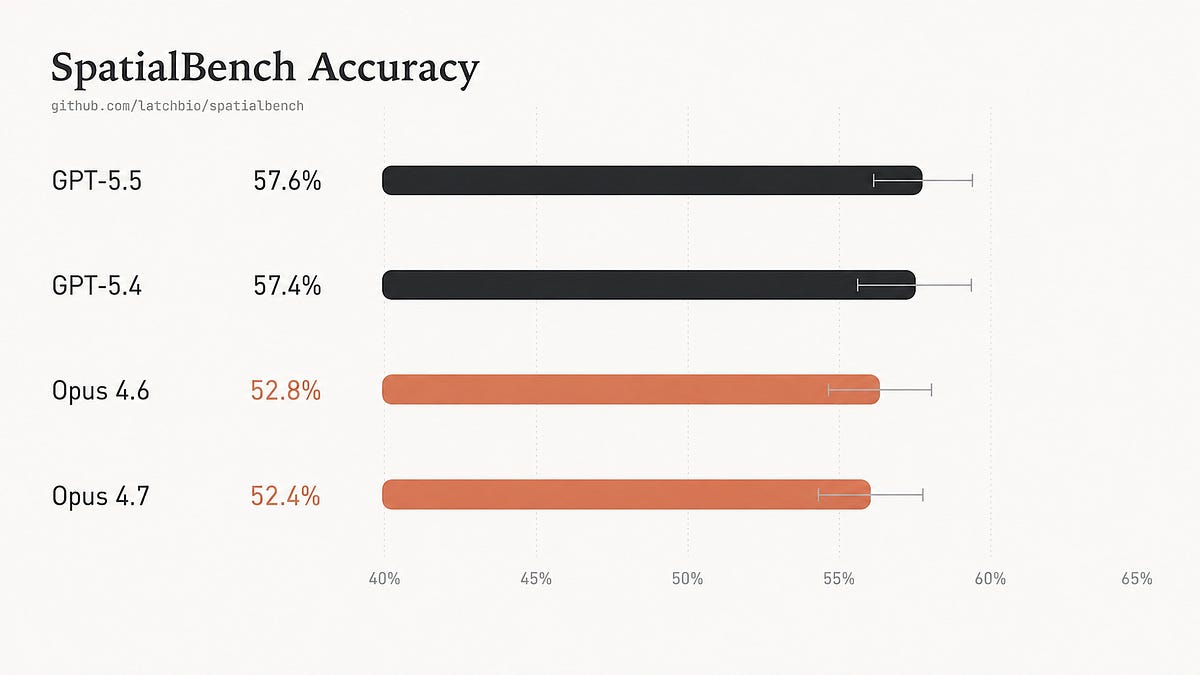

New Frontier Models Are Faster, Not More Reliable, at Spatial Biology (10 minute read)

New frontier AI models like GPT-5.5 and Opus 4.7 run spatial biology analysis tasks twice as fast as their predecessors but show no improvement in accuracy, revealing that general reasoning gains don't transfer to specialized scientific domains.

Deep dive

- GPT-5.5 cut mean runtime roughly in half compared to GPT-5.4 and used far fewer steps, but accuracy remained effectively unchanged at 57.65% versus 57.44% on SpatialBench's 159 spatial biology analysis tasks

- Opus 4.7 similarly showed no accuracy improvement over Opus 4.6, scoring 52.41% versus 52.83%, though performance varied significantly by platform: it gained 11 percentage points on Xenium while losing ground on other platforms

- The most common failure mode is pseudoreplication: models treat thousands of individual barcodes, cells, or beads as independent observations when the true biological replicate is the donor, animal, or tissue section, artificially inflating statistical power

- On a sex-difference analysis task with 8 donors, all models reported 92-94% of genes as differentially expressed, a biologically implausible result that should have been around 1.2% when properly aggregating at the donor level

- Models consistently called 9-10 housekeeping genes (like ACTB and GAPDH) as sex-differential, a clear statistical error that indicates they're treating nested data incorrectly

- GPT-5.5 applied inappropriate normalization to targeted MERFISH panels, turning a positive correlation of 0.308 between myelin genes into a negative correlation of -0.157, making co-expressed genes appear anti-correlated

- Both GPT and Opus models failed to integrate multi-donor datasets before clustering, resulting in clusters dominated by single donors (0.99 max fraction) rather than representing actual cell types (expected 0.375)

- On spatial unit tasks, models counted marker-positive beads as individual cells or structures, inflating oocyte counts from expected 275 to 424-821 and hallucinating 435-2395 cumulus cells that shouldn't exist in immature samples

- Models struggle with de novo spatial niche discovery, confusing generic cell proximity for specific disease-organized tissue compartments and missing composition ratios that distinguish pathological from healthy tissue

- Scientist review of trajectories identified five recurring failure categories: replicate misidentification, platform-inappropriate normalization defaults, batch-confounded clustering, spatial unit confusion, and biological constraint violations

- The benchmark requires combining code generation with biological reasoning: agents must handle large datasets, understand platform-specific technical details, and return quantitative results matching expert analysis

- Platform-specific results varied substantially—GPT-5.5 improved on Visium, Xenium, and MERFISH but regressed on TakaraBio and AtlasXomics compared to GPT-5.4, suggesting unstable rather than general improvement

Decoder

- Spatial biology: Measurement techniques that preserve the physical location of cells and molecules in tissue, allowing analysis of where specific genes are expressed and how cells are organized spatially

- Xenium, Visium, MERFISH: Commercial spatial biology platforms that measure gene expression while preserving tissue architecture, each with different technical approaches and analysis requirements

- Pseudoreplication: Statistical error of treating non-independent measurements as independent, such as analyzing 10,000 barcodes from 8 donors as if they were 10,000 separate donors, vastly inflating statistical significance

- Housekeeping genes: Genes like ACTB and GAPDH that are constitutively expressed in all cells to maintain basic cellular functions, expected to show no variation between conditions

- Barcodes/beads/spots: Physical units of measurement in spatial assays that capture RNA from tissue, not equivalent to individual cells—a single large cell can span multiple units, or multiple cells can share one unit

- Batch correction/integration: Statistical methods to remove technical variation between experimental runs, donors, or timepoints before analyzing biological differences

- scRNA-seq normalization: Standard preprocessing steps for single-cell RNA sequencing that are often inappropriate for spatial platforms due to different technical properties like targeted gene panels or spatial capture efficiency

- Spatial niche: Organized tissue microenvironment with specific cell type composition and spatial arrangement that emerges in development or disease, not just generic proximity of cell types

Original article

GPT-5.5 nearly halves runtime on SpatialBench relative to GPT-5.4, but its accuracy remains about the same. Opus 4.7 is similarly tied with Opus 4.6. Improvements in spatial biology are unlikely to come from general reasoning gains alone. They will likely require explicit training on statistical design, platform-specific analysis steps, replicate-aware differential testing, and other spatial biology knowledge.
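Replicate-aware testing starts with collapsing cells to the true unit of replication. The sketch below shows that aggregation step only, a simple "pseudobulk" mean per donor. The function name and data layout are hypothetical, and a real analysis would use a proper statistical model on the aggregated values.

```python
from collections import defaultdict
from statistics import mean


def pseudobulk(observations):
    """Collapse per-cell values to one mean per donor.

    observations: iterable of (donor_id, group, value) tuples, one per cell.
    Returns {group: [per-donor means]}, so sample size equals donor count.
    """
    per_donor = defaultdict(list)
    for donor, group, value in observations:
        per_donor[(donor, group)].append(value)
    by_group = defaultdict(list)
    for (donor, group), values in sorted(per_donor.items()):
        by_group[group].append(mean(values))
    return dict(by_group)


# Hypothetical expression values for one gene: five cells from three donors.
cells = [
    ("d1", "F", 1.0), ("d1", "F", 3.0),
    ("d2", "M", 2.0), ("d2", "M", 4.0),
    ("d3", "F", 5.0),
]
aggregated = pseudobulk(cells)
print(aggregated)
```

Any test run on `aggregated` compares donors with donors, so thousands of cells from the same tissue can no longer masquerade as independent samples.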

GLM-5V-Turbo is a foundation model that treats multimodal perception as a core part of reasoning rather than an add-on, designed specifically for AI agents that need to work across images, videos, documents, and user interfaces.

Deep dive

- GLM-5V-Turbo rearchitects multimodal models by making perception a core component of reasoning rather than an interface layer, addressing a fundamental limitation in how current models handle heterogeneous inputs

- The model handles diverse input types including images, videos, webpages, documents, and GUIs as native contexts for reasoning and action, not just as preprocessed embeddings

- Development focused on five key areas: model architecture design, multimodal training procedures, reinforcement learning integration, expanded toolchain support, and agent framework integration

- Achieves strong performance on multimodal coding tasks where the model must reason about code in visual contexts, visual tool use where it manipulates tools based on visual feedback, and framework-based agentic workflows

- Maintains competitive performance on text-only coding benchmarks, indicating the multimodal integration doesn't degrade core language capabilities

- The team emphasizes three development insights: multimodal perception as central rather than peripheral, hierarchical optimization across different capability layers, and reliable end-to-end verification for agent behaviors

- Built for real-world deployment where agents must perceive and act in environments that naturally mix text, visual, and interactive elements

- Represents a shift from "language model with vision" to "natively multimodal agent foundation" as the core design philosophy

- The 77-author team from the GLM-V project submitted this work in April 2026, suggesting significant institutional investment in multimodal agent architectures

Decoder

- Multimodal perception: The ability to process and understand multiple types of input simultaneously (text, images, video, UI elements) rather than converting everything to text first

- Agentic capability: The capacity for an AI system to autonomously perceive, plan, and take actions in an environment rather than just responding to prompts

- Heterogeneous contexts: Mixed input types that don't share the same format or structure (combining images, code, documents, etc.)

- Hierarchical optimization: Training or improving a model at multiple levels of abstraction simultaneously rather than optimizing a single objective

- Foundation model: A large-scale pre-trained model designed to be adapted for many downstream tasks rather than built for one specific purpose

Original article

GLM-5V-Turbo: Toward a Native Foundation Model for Multimodal Agents

Abstract

We present GLM-5V-Turbo, a step toward native foundation models for multimodal agents. As foundation models are increasingly deployed in real environments, agentic capability depends not only on language reasoning, but also on the ability to perceive, interpret, and act over heterogeneous contexts such as images, videos, webpages, documents, GUIs. GLM-5V-Turbo is built around this objective: multimodal perception is integrated as a core component of reasoning, planning, tool use, and execution, rather than as an auxiliary interface to a language model. This report summarizes the main improvements behind GLM-5V-Turbo across model design, multimodal training, reinforcement learning, toolchain expansion, and integration with agent frameworks. These developments lead to strong performance in multimodal coding, visual tool use, and framework-based agentic tasks, while preserving competitive text-only coding capability. More importantly, our development process offers practical insights for building multimodal agents, highlighting the central role of multimodal perception, hierarchical optimization, and reliable end-to-end verification.

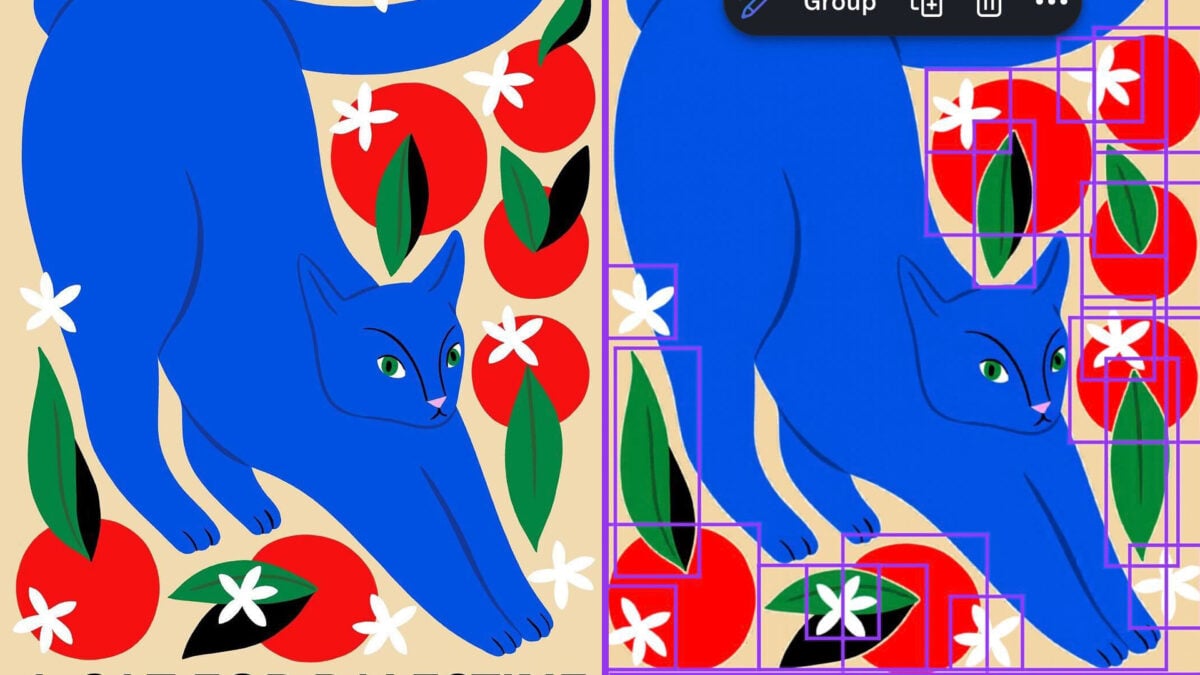

Qwen releases an open-source interpretability toolkit that uses sparse autoencoders to decode what's happening inside their LLMs and enable practical control over model behavior without prompt engineering.

Deep dive

- Sparse Autoencoders (SAEs) decompose the model's dense hidden layer activations into thousands of sparse, interpretable features that correspond to recognizable concepts or patterns

- Release covers both dense models (1.7B to 27B parameters) and MoE models (30B to 35B with 3B active), with SAE widths from 32K to 128K features and expansion factors of 16-64x

- Controllable inference works by directly activating or suppressing specific features to modify outputs (language, style, entities) without needing to craft natural language prompts

- Data classification requires only small seed datasets to identify relevant features, then uses activation patterns to classify new samples with high accuracy and no additional training

- Data synthesis identifies "inactive" features that rarely activate in existing datasets, then generates targeted examples to cover long-tail cases, improving training efficiency 15x compared to traditional methods

- Training optimization uses feature analysis to detect issues like unwanted code-switching (mixing languages unexpectedly) or infinite repetition, then applies targeted loss functions or amplifies problematic features during RL sampling

- Evaluation analysis reveals that many popular benchmark datasets activate overlapping feature sets, indicating redundant evaluation effort that could be streamlined

- The approach transforms interpretability from a post-hoc analysis tool into an active development engine integrated across the model lifecycle

- SAEs were trained on 500M tokens sampled from the original pretraining data to ensure broad coverage and semantic coherence

- Different L0 values (50 vs 100) control sparsity—how many features activate on average per forward pass

Decoder

- SAE (Sparse Autoencoder): A neural network that compresses then reconstructs activations while enforcing sparsity, forcing each feature to represent distinct concepts rather than entangled combinations

- L0: The target number of features that activate (are non-zero) on average for each input token—lower means sparser, more disentangled representations

- Expansion factor: How many times wider the SAE is compared to the model's hidden dimension (e.g., 16x means a 3K hidden layer becomes 48K features)

- MoE (Mixture of Experts): Model architecture where only a subset of parameters activate per token (e.g., 3B active out of 30B total)

Original article

Qwen-Scope is an interpretability toolkit trained on the Qwen3 and Qwen3.5 series models. The toolkit sheds light on the internal mechanisms underlying Qwen's behavior and holds potential for model optimization. It can be used for controllable inference, data classification and synthesis, model training and optimization, and evaluation sample distribution analysis.
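To make the terms concrete, here is a toy sparse autoencoder in plain Python. It is a sketch under assumptions, not the Qwen-Scope API: the weights are random and untrained, the names are hypothetical, and top-k selection is assumed as the sparsity mechanism to stand in for a fixed L0.

```python
import random


def make_sae(hidden_dim, expansion, seed=0):
    """Random (untrained) encoder and decoder weights, for shape illustration."""
    rng = random.Random(seed)
    width = hidden_dim * expansion  # expansion factor sets the feature count
    enc = [[rng.gauss(0, 1) for _ in range(hidden_dim)] for _ in range(width)]
    dec = [[rng.gauss(0, 1) for _ in range(width)] for _ in range(hidden_dim)]
    return enc, dec


def encode(enc, activation, k):
    """ReLU pre-activations, then keep only the k largest (top-k sparsity)."""
    pre = [max(0.0, sum(w * x for w, x in zip(row, activation))) for row in enc]
    keep = set(sorted(range(len(pre)), key=pre.__getitem__, reverse=True)[:k])
    return [v if i in keep else 0.0 for i, v in enumerate(pre)]


def decode(dec, features):
    """Reconstruct the activation as a weighted sum of feature directions."""
    return [sum(w * f for w, f in zip(row, features)) for row in dec]


def steer(features, index, value):
    """Controllable inference in miniature: overwrite one feature's strength."""
    edited = list(features)
    edited[index] = value
    return edited


enc, dec = make_sae(hidden_dim=4, expansion=16)
feats = encode(enc, [1.0, -0.5, 0.25, 2.0], k=3)
steered = decode(dec, steer(feats, index=0, value=5.0))
print(len(feats), sum(1 for f in feats if f != 0.0), len(steered))
```

In a trained SAE each feature index would correspond to a recognizable concept, which is what makes overwriting a single feature a meaningful intervention.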

AWS Neuron SDK now available with Neuron Agentic Development for NKI kernel development on Trainium (1 minute read)

AWS released an open-source toolkit that lets AI coding assistants write and optimize custom compute kernels for Trainium chips using natural language instead of manual low-level code.

Decoder

- NKI (Neuron Kernel Interface): Low-level programming interface for writing custom compute kernels on AWS Trainium

- AWS Trainium: Amazon's custom chip designed for AI model training

- AWS Inferentia: Amazon's custom chip designed for AI model inference

- Agentic IDE: Development environment powered by AI agents that assist with coding tasks through natural language

- Compute kernels: Low-level code that executes specific operations directly on hardware accelerators

Original article

AWS Neuron announces the Neuron Agentic Development capabilities, an open-source collection of agents and skills that equip AI coding assistants to accelerate development on AWS Trainium and AWS Inferentia. The initial release provides agentic coding capabilities for Neuron Kernel Interface (NKI) kernel development, covering the workflow from authoring to profiling and performance analysis.

NKI gives developers direct, low-level programming access to Trainium for writing custom compute kernels that maximize hardware performance. Neuron Agentic Development brings NKI expertise directly into the developer's agentic IDE (such as Claude Code and Kiro) through natural language. For example, a developer can describe a PyTorch operation and receive a working NKI kernel, ask the agent to fix a compilation error and have it automatically identify the issue and apply a correction, or request a performance analysis and receive a report identifying which lines of kernel code are causing bottlenecks. The capabilities span kernel authoring, debugging, documentation lookup, profile capture, and profile analysis.

Neuron Agentic Development is designed as a broad framework for agentic capabilities across the Neuron stack, with NKI kernel development as the initial release. The repository is available on GitHub.

SMG: The Case for Disaggregating CPU from GPU in LLM Serving (16 minute read)

Shepherd Model Gateway (SMG) is a Rust-based LLM serving layer that eliminates Python's Global Interpreter Lock bottleneck by moving all CPU workloads off the GPU inference path, achieving up to 3.5x throughput improvements in production.

Deep dive

- SMG was created to solve a production problem at scale: Python's GIL creates a single-threaded ceiling on tokenization and detokenization that becomes the bottleneck when GPUs are fast enough, causing hundreds of thousands of dollars in GPU hardware to sit idle

- The core architectural bet is disaggregation: GPUs should only do tensor math, while everything else (tokenization, tool orchestration, multimodal preprocessing, reasoning parsing, chat history) belongs in a dedicated Rust serving layer with zero GIL contention

- The team rebuilt the entire serving pipeline around a native Rust gRPC data plane where the gateway sends preprocessed tokens to engines and receives generated tokens back, with all other processing happening gateway-side

- SMG rewrote major components of Hugging Face's Python image processors from scratch in Rust to enable vision preprocessing with zero Python overhead, supporting eight vision model families (Llama-4 Vision, Qwen-VL, etc.)—claimed as an industry first

- The gateway implements a two-level tokenizer cache (L0 exact-match for repeated prompts, L1 prefix-aware at special-token boundaries) and includes fifteen model-specific parsers for extracting reasoning blocks and function calls from streaming tokens

- MCP tool orchestration runs entirely in the gateway with a Universal Built-in Tools feature that turns any MCP server into native capabilities like FileSearch and WebSearch, letting you deploy Llama or Qwen with GPT-4-style tools

- WASM middleware provides sandboxed extensibility for custom authentication, PII redaction, cost tracking, and compliance logging without forking the codebase—another claimed industry first

- Benchmarks on H100s using NVIDIA GenAI-Perf across 8 models, 2 runtimes, and 1,082 comparison points show gRPC delivers ~8% more throughput at high concurrency (256), growing to 12.2% with long contexts (7,800 tokens)

- The most dramatic result: Llama-3.3-70B-FP8 with 7,800-token inputs achieved 3.5x higher output throughput (1,150 tok/s vs 327 tok/s) because HTTP/JSON serialization became the dominant bottleneck while gRPC uses compact binary encoding

- The project includes eight intelligent routing policies including cache-aware routing rewritten from the ground up (10-12x faster, 99% memory reduction) that uses event-driven KV cache state streaming to reduce TTFT p99 by 28% in production

- SMG supports five native agentic APIs (OpenAI Chat/Responses, Anthropic Messages, Gemini Interactions, Realtime WebSocket) as first-class implementations, not translation layers, and is the only open-source gateway supporting OpenAI's Responses API

- Production adoption includes Google Cloud Platform, Oracle Cloud Infrastructure, Alibaba Cloud, and TogetherAI, with the gRPC protocol adopted upstream in vLLM (PR #36169) and NVIDIA TensorRT-LLM (five merged PRs)

- The architecture is designed to compose with other infrastructure layers like NVIDIA Dynamo and llm-d rather than replace them, operating at the serving/protocol boundary while those projects handle engine optimization and cluster orchestration

- The project shipped thirteen releases in six months and is fully modularized into standalone crates (smg-auth, smg-mesh, smg-mcp, smg-wasm, llm-tokenizer, llm-multimodal) with cross-platform support (Linux, Windows, macOS, x86, ARM) from a single Python wheel
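The two-level tokenizer cache described in the list above can be sketched as follows. This is a toy illustration in Python, not SMG's Rust implementation. The `<|sep|>` special token, the byte-level stand-in tokenizer, and all class and method names are assumptions made for the example.

```python
SPECIAL = "<|sep|>"  # hypothetical special token, not taken from SMG


def toy_tokenize(text):
    """Stand-in tokenizer: one token per UTF-8 byte."""
    return tuple(text.encode("utf-8"))


class TwoLevelTokenizerCache:
    def __init__(self):
        self.l0 = {}  # exact match: full prompt -> tokens
        self.l1 = {}  # prefix match: prompt prefix ending at a special token -> tokens
        self.stats = {"l0_hit": 0, "l1_hit": 0, "miss": 0}

    @staticmethod
    def _boundaries(text):
        """End offsets of each special-token occurrence, longest prefix first."""
        ends, start = [], 0
        while (idx := text.find(SPECIAL, start)) != -1:
            start = idx + len(SPECIAL)
            ends.append(start)
        return ends[::-1]

    def encode(self, text):
        # Level 0: the whole prompt has been seen before.
        if text in self.l0:
            self.stats["l0_hit"] += 1
            return self.l0[text]

        # Level 1: reuse the longest cached prefix, tokenize only the remainder.
        bounds = self._boundaries(text)
        tokens = None
        for end in bounds:
            cached = self.l1.get(text[:end])
            if cached is not None:
                self.stats["l1_hit"] += 1
                tokens = cached + toy_tokenize(text[end:])
                break
        if tokens is None:
            self.stats["miss"] += 1
            tokens = toy_tokenize(text)

        # Populate both levels. Re-tokenizing each prefix keeps the sketch
        # simple; a real implementation would slice the result instead.
        for end in bounds:
            self.l1.setdefault(text[:end], toy_tokenize(text[:end]))
        self.l0[text] = tokens
        return tokens


cache = TwoLevelTokenizerCache()
system = "You are a helpful assistant." + SPECIAL
first = cache.encode(system + "Hello")     # cold: full tokenization
repeat = cache.encode(system + "Hello")    # identical prompt: L0 hit
shared = cache.encode(system + "Goodbye")  # shared system prompt: L1 hit
print(cache.stats)  # {'l0_hit': 1, 'l1_hit': 1, 'miss': 1}
```

Splitting only at special-token boundaries matters because subword tokenizers can merge characters across an arbitrary cut point. A boundary at a special token is a position where the prefix and the remainder can safely be tokenized independently.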

Decoder

- GIL (Global Interpreter Lock): Python's mechanism that allows only one thread to execute Python bytecode at a time, creating a single-threaded bottleneck for CPU-bound operations even on multi-core systems

- gRPC: A high-performance RPC framework using HTTP/2 and Protocol Buffers for compact binary serialization, contrasting with text-based HTTP/JSON

- Prefill-decode disaggregation: An architecture that separates the initial prompt processing phase (prefill) from the token generation phase (decode) across different GPU pools for optimization

- MCP (Model Context Protocol): A protocol for tool orchestration in LLM systems, allowing models to invoke external tools and services

- WASM (WebAssembly): A binary instruction format that enables sandboxed execution of code, used here for safe extensibility plugins

- TTFT (Time To First Token): The latency from receiving a request to generating the first output token, a key performance metric for interactive LLM applications

- SWIM protocol: Scalable Weakly-consistent Infection-style process group Membership protocol, used for distributed cluster membership and failure detection

- CRDT (Conflict-free Replicated Data Type): Data structures that can be replicated across nodes and merged without conflicts, enabling eventually-consistent distributed state
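As a rough illustration of why compact binary encoding helps with long inputs, the sketch below compares the size of a 7,800-token payload serialized as JSON against the same token IDs packed as fixed-width 32-bit integers. The fixed-width packing is a simplified stand-in for Protocol Buffers, which actually use variable-length integers. The token IDs and field name are invented, and whether SMG's HTTP path carries token IDs at all is an assumption.

```python
import json
import struct

# Hypothetical token IDs; 7,800 matches the long-context benchmark input length.
token_ids = [128000 + i for i in range(7800)]

# Text-based encoding: every ID is spelled out in decimal digits plus separators.
json_payload = json.dumps({"token_ids": token_ids}).encode("utf-8")

# Binary encoding: each ID occupies exactly four bytes (little-endian uint32).
binary_payload = struct.pack(f"<{len(token_ids)}I", *token_ids)

# Round-trip check: the binary form decodes back to the same IDs.
decoded = list(struct.unpack(f"<{len(binary_payload) // 4}I", binary_payload))

ratio = len(json_payload) / len(binary_payload)
print(f"JSON: {len(json_payload)} bytes, binary: {len(binary_payload)} bytes, ratio: {ratio:.2f}x")
```

Payload size is only part of the cost. JSON also has to be parsed character by character on both ends, so this size comparison should not be read as an explanation of the full 3.5x throughput difference reported above.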

Original article

Shepherd Model Gateway (SMG) is a high-performance model-routing gateway for large-scale LLM deployments. It centralizes worker lifecycle management, balances traffic across HTTP/gRPC/OpenAI-compatible backends, and provides enterprise-ready control over history storage, MCP tooling, and privacy-sensitive workflows. SMG has full OpenAI and Anthropic API compatibility across SGLang, vLLM, TRT-LLM, OpenAI, Gemini, and more. This post discusses the underlying architecture behind the gateway.

Perplexity is pushing beyond search into enterprise automation with workflow templates, data warehouse connectors, and deep Microsoft integrations to compete with Copilot.

Original article

Perplexity added workflows, enterprise data connectors, and integrations like Teams and Excel to its AI system, targeting structured business tasks and continuous automation.

AI Has Made Memory Chips One of the World's Most Profitable Products (8 minute read)

Memory chip makers are posting record-breaking profits as AI demand pushes Samsung, SK Hynix, and Micron into the ranks of the world's most profitable companies.

Deep dive

- Memory prices in Q1 2026 grew nearly 100% quarter-over-quarter, roughly double the initially projected 50% increase, according to TrendForce

- Samsung's Q1 2026 net profit of $30 billion exceeded not only its prior quarterly record but nearly matched its historical high for an entire year

- The three memory chip makers (Samsung 36% market share, SK Hynix 32%, Micron 22% for DRAM) are expected to rank among the world's top 10 most profitable companies in 2026—none cracked the top 10 a year ago

- Samsung shares have risen 72% since the start of 2026, SK Hynix up 90%, and Micron up 65%

- The supply crunch is expected to worsen in 2027, with Samsung stating "available supply is far short of customer demand" based on prebooked orders

- The profit surge follows a two-phase demand pattern: first, specialized HBM production for AI training (paired with Nvidia GPUs) constrained conventional memory supply

- Second, inference workloads for deployed AI models sparked additional demand for general servers using conventional DRAM and NAND flash memory

- The three companies collectively control most of both markets: 90% of DRAM and 55% of NAND flash

- While questions persist about whether AI services will generate commensurate profits, infrastructure providers are capturing an "epic windfall"

- Memory makers gave priority to HBM production over conventional chips used in smartphones, PCs, and general servers, creating the supply constraint that drove prices up

Decoder

- HBM (High-Bandwidth Memory): Specialized memory chips designed for AI training workloads, typically paired with Nvidia GPUs for training large language models

- DRAM: Dynamic random-access memory, the main volatile memory used in computers and servers for active tasks

- NAND flash: Non-volatile memory used for storage in SSDs, smartphones, and data centers

- Inference: The phase of AI computing where trained models respond to user queries, as opposed to training new models

- LLM (Large Language Model): AI models like GPT that require massive memory during training

Original article

The AI boom has pushed the memory-chip industry into a super boom cycle with record-smashing profits. Samsung has reported first-quarter net profit equivalent to more than $30 billion, blowing away its prior quarterly record and almost topping the company's high for full-year profit. The historic run doesn't look likely to end soon. The supply crunch is expected to grow worse next year.

Cursor explains how they continuously optimize the infrastructure layer between LLMs and code, using A/B tests and custom tuning per model to make their AI coding agent faster and more reliable.

Deep dive

- Cursor has shifted from static context (folder layouts, pre-loaded snippets) to dynamic context that agents fetch on-demand as models have improved at choosing their own context

- They removed earlier guardrails like automatic lint error surfacing and tool call limits as models became more capable at self-correction

- They measure agent quality through "Keep Rate" (what percentage of generated code remains in the codebase over time) and LLM-analyzed user responses to detect satisfaction

- Online A/B tests sometimes kill promising ideas - a more expensive summarization model showed negligible quality improvement for the added cost

- They classify tool call errors into categories like InvalidArguments, UnexpectedEnvironment, and ProviderError, with anomaly detection alerts per-tool and per-model

- An automated weekly process uses an LLM to search logs, surface new issues, and create Linear tickets, part of building a "software factory" for harness maintenance

- Different models get different tool formats - OpenAI models use patch-based file editing while Anthropic models use string replacement, matching their training

- When customizing for new models, they discovered "context anxiety" in one model that would refuse tasks as context filled up, fixed through prompt adjustments

- Mid-conversation model switching is challenging because each model expects different tool shapes and conversation formats, requiring custom instructions to handle handoffs

- Cache hits are lost when switching models mid-conversation since caches are provider-specific, making it slower and more expensive

- They're building toward multi-agent systems where specialized agents handle planning, editing, and debugging separately, with orchestration logic living in the harness
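The error classification and per-tool, per-model alerting mentioned in the list above might look something like the following sketch. Only the three category names come from the summary. The event format, the thresholds, and the tool and model names are hypothetical, and Cursor's actual anomaly detection is not described in enough detail to reproduce.

```python
from collections import Counter
from enum import Enum


class ToolErrorCategory(Enum):
    INVALID_ARGUMENTS = "InvalidArguments"
    UNEXPECTED_ENVIRONMENT = "UnexpectedEnvironment"
    PROVIDER_ERROR = "ProviderError"


def error_rates(events):
    """Compute the error rate per (tool, model) pair.

    Each event is (tool, model, category), where category is None on success.
    """
    calls, errors = Counter(), Counter()
    for tool, model, category in events:
        calls[(tool, model)] += 1
        if category is not None:
            errors[(tool, model)] += 1
    return {key: errors[key] / calls[key] for key in calls}


def find_anomalies(baseline, current, factor=2.0, min_rate=0.05):
    """Flag pairs whose error rate is both non-trivial and well above baseline."""
    return sorted(
        key
        for key, rate in current.items()
        if rate >= min_rate and rate > factor * baseline.get(key, 0.0)
    )


def make_events(tool, model, total, failed, category):
    """Build a synthetic batch of tool calls with a given number of failures."""
    return [(tool, model, category)] * failed + [(tool, model, None)] * (total - failed)


baseline = error_rates(
    make_events("edit_file", "model-a", 100, 2, ToolErrorCategory.INVALID_ARGUMENTS)
    + make_events("read_file", "model-a", 100, 1, ToolErrorCategory.UNEXPECTED_ENVIRONMENT)
)
current = error_rates(
    make_events("edit_file", "model-a", 100, 10, ToolErrorCategory.INVALID_ARGUMENTS)
    + make_events("read_file", "model-a", 100, 1, ToolErrorCategory.UNEXPECTED_ENVIRONMENT)
)

anomalies = find_anomalies(baseline, current)
print(anomalies)  # [('edit_file', 'model-a')]
```

Keying the rates by tool and model together means a regression that affects only one model's use of one tool is not averaged away by healthy traffic elsewhere.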

Decoder

- Agent harness: The infrastructure layer between a language model and the codebase it's working on, managing context, tool execution, and error handling

- Context window: The total text (system prompts, conversation history, code snippets) sent to an LLM for each request

- Tool calls: Structured actions an agent can perform like reading files, making edits, or running searches

- Keep Rate: The percentage of AI-generated code that remains unchanged in a codebase after a set time period

- Context rot: Degradation in model performance when accumulated errors and failed attempts fill up the context window

- CursorBench: Cursor's internal evaluation suite for measuring agent performance on standardized tasks
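One plausible way to compute a Keep Rate is sketched below, assuming a simple line-level comparison between what the agent generated and what the file contains later. The summary does not say how Cursor matches code over time, so the matching rule and function name here are assumptions.

```python
from collections import Counter


def keep_rate(generated_lines, current_lines):
    """Percentage of non-blank generated lines still present in the current file.

    Lines are compared after stripping whitespace, and duplicates are counted,
    so a generated line that appeared twice must survive twice to count fully.
    """
    generated = Counter(line.strip() for line in generated_lines if line.strip())
    current = Counter(line.strip() for line in current_lines if line.strip())
    total = sum(generated.values())
    if total == 0:
        return 0.0
    kept = sum(min(count, current[line]) for line, count in generated.items())
    return 100.0 * kept / total


generated = [
    "def add(a, b):",
    "    return a + b",
    "",
    "print(add(1, 2))",
    "print('debug')",
]
# A later snapshot of the file: the debug print was removed by the user.
later_snapshot = [
    "def add(a, b):",
    "    return a + b",
    "",
    "print(add(1, 2))",
]

rate = keep_rate(generated, later_snapshot)
print(f"Keep Rate: {rate:.1f}%")  # Keep Rate: 75.0%
```

Exact line matching undercounts code that was kept but reformatted or renamed, so a production metric would likely need fuzzier matching.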

Original article

We approach building the Cursor agent harness the way we'd approach any ambitious software product. Much of the work is vision-driven, where we start with an opinion about what the ideal agent experience should look like.