Devoured - April 30, 2026

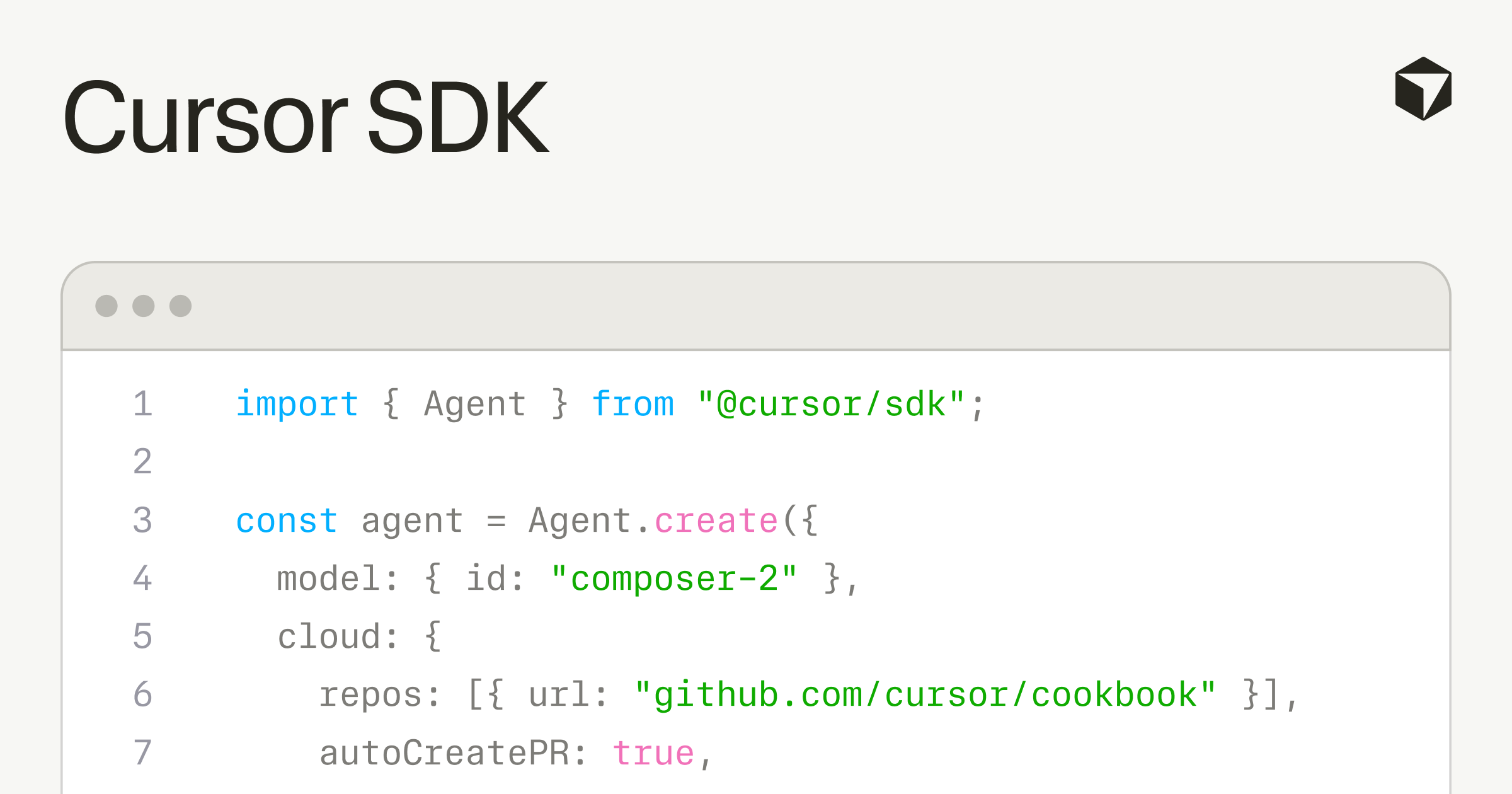

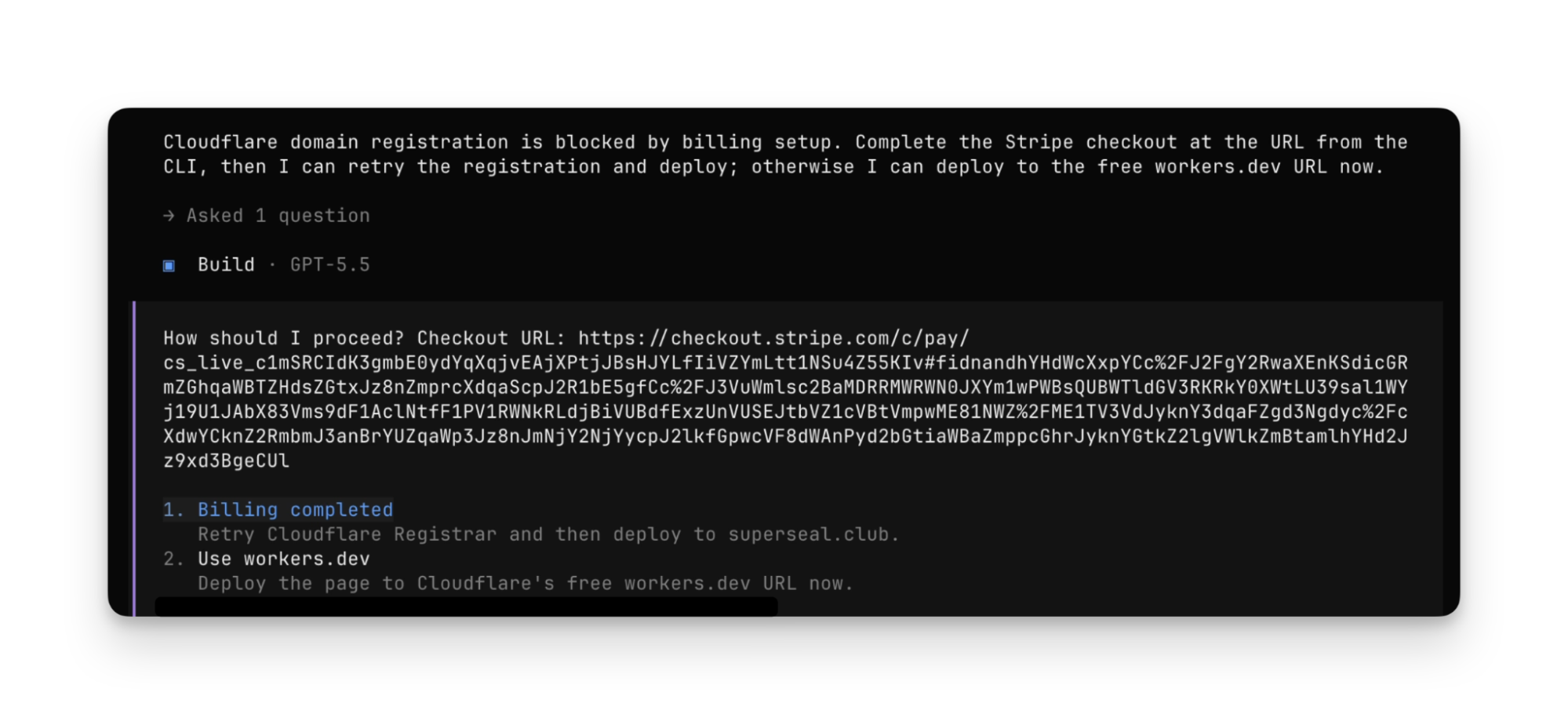

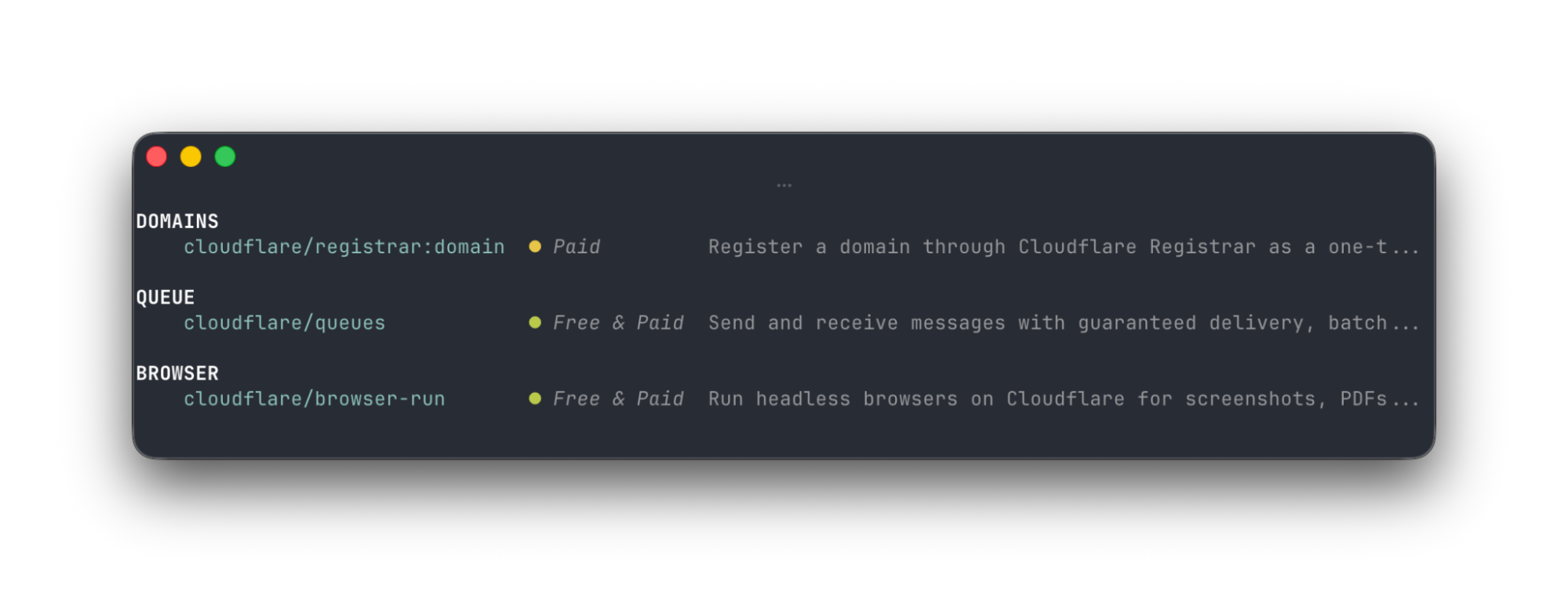

Cursor released an SDK that lets developers programmatically deploy coding agents into CI/CD pipelines and products, while Cloudflare and Stripe launched a protocol enabling AI agents to autonomously create accounts, buy domains, and deploy applications without manual setup. On the infrastructure side, Linux 7.0's scheduling change cut PostgreSQL performance in half (fixed by enabling huge pages), and an AI-powered reverse engineering tool discovered a critical GitHub remote code execution vulnerability in under 48 hours.

OpenAI has effectively abandoned first-party Stargate data centers in favor of more flexible deals (5 minute read)

OpenAI has abandoned plans to build its own data centers through the Stargate joint venture, opting instead to lease compute capacity as cash flow concerns mount.

Original article

In early 2025, OpenAI announced Stargate, a joint venture with Oracle and SoftBank, which aimed to invest $500 billion in AI data centers in the United States. But after more than a year of challenges and disagreements, it seems that the startup has abandoned the original idea of directly owning infrastructure alongside its two partners. According to the Financial Times, OpenAI now prefers to rely on third-party providers and lease capacity in the long term.

This is a sensible idea for the startup, which is burning through cash and has reportedly missed internal revenue targets in recent months. But it has also caused chaos among its partners and called its reliability into question. According to the report, OpenAI has "in practice... abandoned the joint venture," choosing instead to strike large bilateral deals with Oracle and others. One person involved with Stargate reportedly said the company had "sidelined first-party data centres," while OpenAI itself admitted that Stargate is merely an "umbrella for our compute strategy."

Stargate's initial goal was to build 20 data centers, with the first project at Abilene, Texas, already operational. However, the three partners reportedly squabbled among themselves for months as they could not agree on who would have ultimate control of the planned data centers. In the end, SoftBank agreed to own and develop the Texas data center, while OpenAI would design and operate it on a long-term lease.

Stargate projects elsewhere have also been hit by uncertainty. The UK government signed a deal with OpenAI, among other partners, to build a data center in the UK, but the startup put it on hold earlier this month. It cited "restrictive regulations" and "high energy costs" as the reasons behind the move, but UK AI Minister Kanishka Narayan told the Financial Times that the "only thing that has changed [since] the moment of those commitments…has been the financing environment for OpenAI."

OpenAI has done the same with another Stargate project in Narvik, Norway, where Microsoft has stepped in to take over the lease for the site. OpenAI will then lease compute capacity from Microsoft instead of getting it directly from Nscale, the British company that developed the site and also worked on the canceled UK project.

All these changes have got some partners "feeling let down and misled by OpenAI," a person familiar with Microsoft's decision said. Thankfully, the software giant has stepped in on some of the projects that the startup has supposedly abandoned. One source told the publication that money is not unlimited, no matter what Sam Altman might say, while another said that they prefer Microsoft over OpenAI as a tenant, as "they are more creditworthy."

Even though OpenAI has made a name for itself in AI, the startup has not turned a profit since it was founded in 2015. Many institutions believe in its potential, though, with the firm securing $110 billion in its latest funding round — the biggest amount secured in Silicon Valley history and $10 billion more than what the company initially targeted. Still, some analysts estimate that it could run out of cash by mid-2027 with the massive amounts of money it's been throwing around to secure more compute.

Anthropic CEO Dario Amodei criticized moves like this, saying that some of his company's rivals are pushing infrastructure investments too far. However, OpenAI says that it's ahead of the exponential compute curve, allowing it to have an advantage over everyone else. For example, Anthropic has had to limit access to some features on its various products due to limited resources, and Amodei has had to spend more on securing capacity to satisfy the increasing demand.

The biggest difference between startups, like OpenAI and Anthropic, and their more established rivals, like Microsoft, Google, Meta, and Amazon, is cash flow. The startups still rely on external funding to fuel their growth, while the big tech companies have billion-dollar revenue that they can rely on to pour into expensive hardware and infrastructure projects.

Google to sell TPU chips to 'select' customers in latest shot at Nvidia (2 minute read)

Google is shifting from renting cloud TPU access to selling its custom AI chips directly to select customers for their own data centers, intensifying competition with Nvidia.

Decoder

- TPU: Tensor Processing Unit, Google's custom-designed chips optimized specifically for machine learning workloads

- Inferencing: Running trained AI models to make predictions, as opposed to training, which creates the models

- Gigawatt agreement: A commitment sized by the electrical power capacity of the data centers where the chips are deployed (1 gigawatt powers roughly 700,000 homes)

Original article

Google parent Alphabet (GOOG, GOOGL) on Wednesday said that it plans to sell its custom Tensor Processing Units (TPUs) to select customers who will install the chips in their own data centers.

The move is a change from Google's prior strategy, which saw it rent out TPU capacity to customers from its own data centers — and is yet another strike at AI chip king Nvidia (NVDA).

The announcement, during the company's Q1 earnings call, comes a week after Alphabet announced two new TPUs: its TPU 8t for AI training and TPU 8i for inferencing.

"As TPU demand grows from AI labs, capital markets firms, and high-performance computing applications, we'll begin to deliver TPUs to a select group of customers in their own data centers in a hardware configuration to expand our addressable market opportunity," Alphabet CEO Sundar Pichai said during the company's first quarter earnings call.

Alphabet didn't disclose potential customers, but it signed a multiple-gigawatt agreement for next-generation TPUs with Anthropic (ANTH.PVT) earlier this month, with chips expected to begin coming online in 2027.

And according to The Information, Alphabet has also entered into a multibillion-dollar chip deal with Meta (META).

Alphabet's TPU maneuvers put it into ever greater competition with Nvidia, which has largely dismissed any fears that Alphabet's offerings will erode its lead in the space, saying that its chips offer greater flexibility for AI developers.

Google isn't the only company moving in on Nvidia's turf. Amazon (AMZN) is also offering up its own chips to customers.

In his annual shareholder letter, Amazon CEO Andy Jassy said that the company's chip business, which includes its Graviton, Trainium, and Nitro processors, has an annual revenue run rate of greater than $20 billion.

But because Amazon only monetizes its chips through its AWS EC2 (Elastic Compute Cloud) service, the CEO explained that $20 billion is likely an understatement and that it would probably be closer to $50 billion.

Like Google, Amazon signed a new agreement for 5 gigawatts of AI chip capacity with Anthropic, but also inked a deal for 2 gigawatts of chips with OpenAI.

On the CPU side, Amazon said it will deploy its AWS Graviton chips for Meta (META) to use across its agentic AI workloads.

Mistral releases Medium 3.5, a 128-billion parameter open-weight model that powers cloud-based coding agents to run long asynchronous tasks independently.

Deep dive

- Mistral Medium 3.5 merges instruction-following, reasoning, and coding capabilities into a single 128B dense model with a 256k context window, marking Mistral's first flagship merged model

- The model achieves 77.6% on SWE-Bench Verified, ahead of Devstral 2 and Qwen3.5 397B A17B, and scores 91.4 on τ³-Telecom for agentic capabilities

- Self-hosting is practical on as few as four GPUs, making it accessible for organizations wanting to run their own infrastructure rather than relying on API calls

- Reasoning effort is configurable per request, allowing the same model to handle quick chat responses or complex multi-step agentic workflows without reloading

- The vision encoder was trained from scratch to handle variable image sizes and aspect ratios, rather than forcing images into fixed dimensions

- Vibe remote agents move coding sessions to the cloud where they run independently, in parallel, and notify developers when complete, eliminating the need to keep local terminals open

- Developers can "teleport" ongoing local CLI sessions to the cloud mid-task, preserving session history, task state, and approval settings for seamless continuation

- Each coding session runs in an isolated sandbox supporting broad edits and installs, with integration into GitHub, Linear, Jira, Sentry, Slack, and Teams for pull requests and notifications

- Work mode in Le Chat uses the new model to execute complex multi-step tasks like cross-tool workflows, research synthesis, and inbox triage with visible tool calls and approval gates for sensitive actions

- The model is priced at $1.5 per million input tokens and $7.5 per million output tokens via API, with open weights available on Hugging Face under a modified MIT license

- Mistral built Vibe originally for internal use, then for enterprise customers, and is now opening it to all developers for launching coding tasks from the web without local terminal dependencies

- The system is designed for high-volume, well-defined work like module refactors, test generation, dependency upgrades, and CI investigations that take developer time but not judgment

Decoder

- Dense model: A neural network architecture where all parameters are used for every inference, as opposed to sparse or mixture-of-experts models that activate only subsets of parameters

- SWE-Bench Verified: A benchmark measuring how well AI models can solve real-world software engineering tasks from GitHub issues, with the "Verified" version being a curated subset with confirmed correct solutions

- Context window: The maximum amount of text (measured in tokens) that a model can process at once, including both input and output; 256k tokens is roughly 190,000 words

- τ³-Telecom: A benchmark for measuring agentic capabilities, specifically how well models can perform multi-step tasks with tool usage

- Open weights: The trained model parameters are released publicly, allowing anyone to download and run the model; this differs from fully "open source," which would also include training code and data

- NVIDIA NIM: NVIDIA Inference Microservice, a containerized solution for deploying AI models at scale on NVIDIA GPUs

Original article

Remote agents in Vibe. Powered by Mistral Medium 3.5.

Introducing Mistral Medium 3.5, remote coding agents in Vibe, plus new Work mode in Le Chat for complex tasks.

Coding agents have mostly lived on your laptop. Today we're moving them to the cloud, where they run on their own, in parallel, and notify you when they're done. You can start them from the Mistral Vibe CLI or directly in Le Chat, offloading a coding task without leaving the conversation.

Powering this is Mistral Medium 3.5 in public preview, our new default model in Mistral Vibe and Le Chat, built to run for long stretches on coding and productivity work. The new Work mode in Le Chat (Preview) extends this with a powerful agent for complex, multi-step tasks like research, analysis, and cross-tool actions.

Highlights.

- Mistral Medium 3.5, a new flagship model that merges instruction-following, reasoning, and coding into a single 128B dense model. Released as open weights, under a modified MIT license.

- Strong real-world performance at a size that runs self-hosted on as few as four GPUs.

- Mistral Vibe remote agents for async coding: sessions run in the cloud, can be spawned from the CLI or Le Chat, and a local CLI session can be teleported up to the cloud.

- Start Mistral Vibe coding tasks in Le Chat. Sessions run on the same remote runtime and keep going while you step away.

- Work mode in Le Chat runs on a new agent, powered by Mistral Medium 3.5, that works through multi-step tasks, calling tools in parallel until the job is done.

Mistral Medium 3.5.

Mistral Medium 3.5 is our first flagship merged model, available in public preview. It is a dense 128B model with a 256k context window, handling instruction-following, reasoning, and coding in a single set of weights. It performs strongly in real-world use, with self-hosting possible on as few as four GPUs. Reasoning effort is now configurable per request, so the same model can answer a quick chat reply or work through a complex agentic run. We trained the vision encoder from scratch to handle variable image sizes and aspect ratios.

Mistral Medium 3.5 scores 77.6% on SWE-Bench Verified, ahead of Devstral 2 and models like Qwen3.5 397B A17B. It also has strong agentic capabilities and scores 91.4 on τ³-Telecom.

The model was built for long-horizon tasks, calling multiple tools reliably, and producing structured output that downstream code can consume. It is the model that made async cloud agents in Vibe practical to ship.

Mistral Medium 3.5 becomes the default model in Le Chat. It also replaces Devstral 2 in our coding agent, Vibe CLI.

Vibe remote agents.

From today, coding sessions can work through long tasks while you're away. Many can run in parallel, and you stop being the bottleneck on every step the agent takes.

You can start the cloud agents from the Mistral Vibe CLI or from Le Chat. While they run, you can inspect what the agent is doing, with file diffs, tool calls, progress states, and questions surfaced as you go. Ongoing local CLI sessions can be teleported up to the cloud when you want to leave them running, with session history, task state, and approvals carrying across.

Vibe sits between the systems engineering teams already use, with humans in the loop wherever they're needed. It plugs into GitHub for code and pull requests, Linear and Jira for issues, Sentry for incidents, and apps like Slack or Teams for reporting.

Each coding session runs in an isolated sandbox that supports broad edits and installs. When the work is done, the agent can open a pull request on GitHub and notify you, so you review the result instead of every keystroke that produced it.

It fits the high-volume, well-defined work that takes a developer's time without taking their judgment: module refactors, test generation, dependency upgrades, CI investigations, as well as bug fixes.

We use Workflows orchestrated in Mistral Studio to bring Mistral Vibe into Le Chat. We originally built this for our own in-house coding environment, then for our enterprise customers. Today the capability opens up to everyone, who can now launch coding tasks from the web. And without being tied to a local terminal, a developer can run several in parallel.

You can start coding sessions directly in Le Chat, so a task described in chat runs on the same remote runtime as the CLI and the web, and comes back later as a finished branch or a draft PR.

New Work mode in Le Chat (Preview).

Work mode is a powerful new agentic mode for complex tasks in Le Chat, powered by a new harness and Mistral Medium 3.5. The agent becomes the execution backend for the assistant itself, so Le Chat can read and write, use several tools at once, and work through multi-step projects until it completes what you've asked.

Here's what Work mode enables you to do today.

- Cross-tool workflows: catch up across email, messages, and calendar in a single run; prepare for a meeting with attendee context, latest news, and talking points pulled from your sources.

- Research and synthesis: dive into a topic across the web, internal docs, and connected tools, then produce a structured brief or report you can edit before exporting or sending.

- Triage your inbox and draft replies; create issues in Jira from your team and customer discussions; send a summary to your team on Slack.

Sessions persist longer than a typical chat reply, so an agent can keep going across many turns, through trial-and-error, and through to completion. In Work mode, connectors are on by default rather than chosen manually, which lets the agent reach into documents, mailboxes, calendars, and other systems for the rich context it needs to take correct action.

Every action the agent takes is visible: you see each tool call and the thinking rationale. Le Chat will ask for explicit approval—based on your permissions—before proceeding with sensitive tasks like sending a message, writing a document, or modifying data.

Get started.

Mistral Medium 3.5 is available today in Mistral Vibe and Le Chat, and powers remote coding agents and Work mode in Le Chat on the Pro, Team, and Enterprise plans.

Through API, it's priced at $1.5 per million input tokens and $7.5 per million output tokens. Open weights are on Hugging Face under a modified MIT license.
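To make the per-token pricing concrete, here is a minimal sketch that turns the two quoted rates into a dollar estimate. The function name and the example token counts are hypothetical and chosen only for illustration; they do not come from Mistral.

```python
# Prices quoted in the announcement, in USD per million tokens.
INPUT_USD_PER_MTOK = 1.5
OUTPUT_USD_PER_MTOK = 7.5


def api_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the API bill for a given number of input and output tokens."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


# Hypothetical long agent session: 2M tokens read, 200k tokens generated.
session_cost = api_cost_usd(2_000_000, 200_000)
print(f"${session_cost:.2f}")
```

Under these assumed token counts the input side dominates the bill even though output tokens cost five times more each, which is typical of agent sessions that reread large contexts.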

It is also available for prototyping, hosted on NVIDIA GPU-accelerated endpoints on build.nvidia.com and as a scalable containerized inference microservice, NVIDIA NIM.

IBM's Granite 4.1 demonstrates that an 8 billion parameter dense model can match the performance of a 32 billion parameter mixture-of-experts model through better training data and techniques.

Decoder

- Dense architecture: A neural network in which every parameter is used for every input, as opposed to mixture-of-experts (MoE) models that route each input to a subset of specialized sub-networks

- Decoder-only architecture: A transformer model that generates text by predicting the next token based on previous tokens, similar to GPT models

- Parameters (B): The number of trainable weights in a neural network, measured in billions; generally more parameters mean more model capacity

- Reinforcement learning pipeline: A training process where the model learns by receiving feedback on its outputs rather than just predicting the next word

Original article

Granite 4.1 LLMs utilize a dense, decoder-only architecture with models of 3B, 8B, and 30B parameters, trained on 15 trillion tokens and using a five-phase pre-training approach. The 8B model matches the performance of the previous 32B Mixture-of-Experts model through a multi-stage reinforcement learning pipeline focused on data quality. These models, designed for efficient, reliable enterprise use, demonstrate competitive instruction-following and tool performance while maintaining cost efficiency and stable usage.

AI evaluation costs have exploded to tens of thousands of dollars per benchmark run, creating an accountability barrier that limits who can independently validate frontier AI systems.

Deep dive

- The Holistic Agent Leaderboard spent approximately $40,000 to run 21,730 agent rollouts across 9 models and 9 benchmarks, with independent reproduction arriving at $46,000, establishing a new cost threshold for comprehensive agent evaluation

- Individual benchmark costs vary by four orders of magnitude across tasks and three orders within single benchmarks, with a single GAIA run on frontier models costing $2,829 before caching and some configurations exceeding $1,600 per run

- Scaffold choice—the framework wrapping the model—emerges as a first-order cost driver with 33× cost spreads on identical tasks, and higher spending does not reliably improve accuracy (9× cost difference for two-percentage-point accuracy gains observed)

- Static LLM benchmarks like HELM originally cost roughly $100,000 in aggregate and compression techniques like Flash-HELM, tinyBenchmarks, and Anchor Points achieved 100–200× reductions while preserving model rankings, but these methods fail on agent tasks

- Agent benchmarks compress only 2–3.5× using mid-difficulty filtering (tasks with 30–70% historical pass rates), far below static benchmark gains, because each item is a multi-turn rollout with inherent variance rather than a single prediction

- Training-in-the-loop benchmarks like The Well (960 H100-hours per architecture, 3,840 for full sweep), PaperBench ($9,500 per evaluation), and MLE-Bench ($5,500 per seed) resist compression entirely because the unit being evaluated is the trained model itself

- For small scientific ML models, evaluation compute can exceed training compute by two orders of magnitude, reversing the traditional deep learning cost model where training dominated

- Reliability measurement multiplies all costs: moving from single-run accuracy to 8-run consistency would take HAL from $40,000 to roughly $320,000, and agent performance can drop from 60% on single runs to 25% under consistency tests

- The field pays redundantly for the same evaluations because results are reported as single accuracy numbers in PDFs or leaderboard entries rather than shared instance-level outputs in reusable formats, with frontier labs, academic groups, auditors, and journalists each paying retail for overlapping measurements

- Academic groups now hit budget constraints before technical ones when attempting independent validation, with a single GAIA run exceeding typical graduate student travel budgets and three-seed comparisons of six models pushing above $150,000

- Cost-blind leaderboards reward waste by ranking raw accuracy without cost reporting, while Pareto-front analysis reveals that accuracy-optimal configurations cost 4.4–10.8× more than Pareto-efficient alternatives with comparable real-world performance

- HAL's log analysis revealed that agents violated explicit benchmark instructions over 60% of the time on failed tasks, experienced environmental errors in roughly 40% of runs on some benchmarks, and a "do-nothing" agent passed 38% of one benchmark's tasks under original construction

- The concentration of evaluation capability in well-funded labs undermines external validation and creates a dynamic where "whoever can pay for the evaluation gets to write the leaderboard," with implications for AI governance and accountability

- Standardized documentation and data reuse represent the highest-leverage cost reduction available, potentially offering 2× savings that would exceed gains from all compression techniques combined by allowing subsequent research to build on rather than repeat baseline measurements

- The EvalEval Coalition's Every Eval Ever project provides metadata schema, validators, and converters from popular harnesses (HELM, lm-eval-harness, Inspect AI) to enable one-step transformation of evaluation logs into shared formats hosted on Hugging Face

Decoder

- Scaffold: The framework or harness code that wraps an AI model to enable it to use tools, interact with environments, or follow multi-step reasoning patterns; scaffold choice can change costs by 33× on identical tasks

- H100-hours: A unit of compute equal to one NVIDIA H100 GPU running for one hour, used to size training or evaluation jobs; typically converted to dollars at $2.50 per hour in this article's accounting

- Rollout: A complete execution of an agent attempting a task from start to finish, including all tool calls, reasoning steps, and environment interactions

- Training-in-the-loop: Evaluation protocols that require training a model from scratch as part of the benchmark, such as training neural operators on scientific datasets or ML agents training pipelines on Kaggle competitions

- Pass^k consistency: The percentage of tasks an agent solves correctly in every one of k repeated runs, measuring reliability rather than single-attempt accuracy; pass^8 can be far lower than pass^1

- Item Response Theory (IRT): A statistical framework from psychometrics used to identify which test items carry the most information about model differences, enabling aggressive compression of static benchmarks

- Pareto frontier: The set of configurations where no alternative offers both lower cost and higher accuracy simultaneously, used to identify efficient agent configurations versus wasteful ones

Original article

AI evals are becoming the new compute bottleneck

Summary. AI evaluation has crossed a cost threshold that changes who can do it. The Holistic Agent Leaderboard (HAL) recently spent about $40,000 to run 21,730 agent rollouts across 9 models and 9 benchmarks. A single GAIA run on a frontier model can cost $2,829 before caching. Exgentic's $22,000 sweep across agent configurations found a 33× cost spread on identical tasks, isolating scaffold choice as a first-order cost driver, and UK-AISI recently scaled agentic steps into the millions to study inference-time compute. In scientific ML, The Well costs about 960 H100-hours to evaluate one new architecture and 3,840 H100-hours for a full four-baseline sweep. While compression techniques have been proposed for static benchmarks, new agent benchmarks are noisy, scaffold-sensitive, and only partly compressible. Training-in-the-loop benchmarks are expensive by construction, and when you try to add reliability to these evals, repeated runs further multiply the cost.

Making static LLM benchmarks cheaper

The cost problem started before agents. When Stanford's CRFM released HELM in 2022, the paper's own per-model accounting showed API costs ranging from $85 for OpenAI's code-cushman-001 to $10,926 for AI21's J1-Jumbo (178B), and 540 to 4,200 GPU-hours for the open models, with BLOOM (176B) and OPT (175B) at the top end. Perlitz et al. (2023) restate the larger HELM cost pattern, and IBM Research notes that putting Granite-13B through HELM "can consume as many as 1,000 GPU hours." Across HELM's 30 models and 42 scenarios, the aggregate of reported costs and GPU compute came to roughly $100,000.

Another shocking observation came from Perlitz et al.'s analysis of EleutherAI's Pythia checkpoints: developers pay for evaluation repeatedly during model development. Pythia released 154 checkpoints for each of 16 models spanning 8 sizes, or 2,464 checkpoints if each model checkpoint is counted separately, so the community could study training dynamics. Running the LM Evaluation Harness across all those checkpoints turns eval into a multiplier on training: Perlitz et al. (2024) noted that evaluation costs "may even surpass those of pretraining when evaluating checkpoints." For small models, evaluation becomes the dominant compute line item across the whole development cycle. When we scale inference-time compute, we scale evaluation costs.

Perlitz et al. then asked how much of HELM actually carried the rankings. The result was striking: a 100× to 200× reduction in compute preserved nearly the same ordering, with larger reductions still useful for coarse grouping under the paper's tiered analysis. Flash-HELM turned that finding into a coarse-to-fine procedure: run cheap evaluations first, then spend high-resolution compute only on the top candidates. Much of HELM's compute was confirming rankings that the field could have inferred much more cheaply.
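The coarse-to-fine idea can be sketched in a few lines. This is a generic two-stage illustration, not Flash-HELM's actual procedure: the function name, the toy scoring function, and the pass rates are all hypothetical.

```python
import random
from typing import Callable, Sequence


def coarse_to_fine(
    models: Sequence[str],
    items: Sequence[int],
    score_item: Callable[[str, int], float],
    coarse_size: int,
    top_k: int,
    seed: int = 0,
) -> dict[str, float]:
    """Score every model on a small sample, then rescore only the top_k on all items."""
    rng = random.Random(seed)
    coarse_items = rng.sample(list(items), coarse_size)

    def mean_score(model: str, subset: Sequence[int]) -> float:
        return sum(score_item(model, i) for i in subset) / len(subset)

    coarse = {m: mean_score(m, coarse_items) for m in models}
    finalists = sorted(models, key=lambda m: coarse[m], reverse=True)[:top_k]
    # Only finalists pay for the full benchmark; the rest keep their coarse estimate.
    return {m: mean_score(m, items) if m in finalists else coarse[m] for m in models}


# Toy setup (all values hypothetical): per-model pass rate in percent.
SKILL_PCT = {"model_a": 90, "model_b": 70, "model_c": 50}
CALLS = {"count": 0}


def toy_score(model: str, item: int) -> float:
    """Deterministic stand-in for one paid benchmark query."""
    CALLS["count"] += 1
    return 1.0 if (item * 37) % 100 < SKILL_PCT[model] else 0.0


scores = coarse_to_fine(list(SKILL_PCT), range(1000), toy_score, coarse_size=50, top_k=1)
print(scores["model_a"], CALLS["count"])
```

In this toy run the procedure issues 1,150 scoring calls (3 models × 50 coarse items, plus 1,000 items for the single finalist) instead of the 3,000 a full evaluation of all three models would need. The savings grow as the pool of candidates gets larger relative to `top_k`.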

Other work reached the same conclusion from different angles. tinyBenchmarks compressed MMLU from 14,000 items to 100 anchor items at about 2% error using Item Response Theory. The Open LLM Leaderboard collapsed from 29,000 examples to 180. Anchor Points showed that as few as 1 to 30 examples could rank-order 87 language-model/prompt pairs on GLUE, and others followed, reducing dataset sizes by 90%. Static benchmarks had a weakness you could exploit: model differences often concentrate in a small subset of items, so ranking can survive aggressive subsampling.

That trick weakened sharply once benchmarks moved from static predictions to agents.

Agent evals are messier

A very nice public accounting of agent evaluation comes from the Holistic Agent Leaderboard (Kapoor et al., ICLR 2026). HAL runs standardized agent harnesses across nine benchmarks covering coding, web navigation, science tasks, and customer service, with shared scaffolds and centralized cost tracking. The headline cost: $40,000 for 21,730 rollouts across 9 models and 9 benchmarks. By April 2026, the leaderboard had grown to 26,597 rollouts. Ndzomga's independent reproduction arrives at almost the same number: $46,000 across 242 agent runs.

Behind that aggregate, the cost of a single benchmark run varies by four orders of magnitude across HAL tasks, and by three orders within some individual benchmarks.

Figure 1. Each bar shows the minimum-to-maximum cost across HAL configurations on a single benchmark. Highlighted bars cross the round $1,000-per-run threshold. A "run" is one full agent evaluation across all tasks. Within-benchmark spread reflects the model × scaffold × token-budget product.

Behind these numbers is a blunt pricing fact. Claude Opus 4.1 charges $15 per million input tokens and $75 per million output. Gemini 2.0 Flash charges $0.10 and $0.40, a two-order-of-magnitude spread on input alone. Agent benchmarks rarely benchmark "the model" in isolation. They benchmark a model × scaffold × token-budget product, and small scaffold choices can multiply costs 10×.
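A rough sketch of how list prices turn into a per-run bill follows. The prices are the ones quoted above; the workload (100 tasks, 500k input and 20k output tokens per task) is a hypothetical stand-in for whatever a given scaffold and token budget actually produce.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_usd_per_mtok: float
    output_usd_per_mtok: float


# List prices as quoted in the text above (USD per million tokens).
CLAUDE_OPUS_4_1 = Pricing(15.0, 75.0)
GEMINI_2_0_FLASH = Pricing(0.10, 0.40)


def run_cost_usd(p: Pricing, tasks: int, in_tok_per_task: int, out_tok_per_task: int) -> float:
    """Cost of one full benchmark run: tasks x (input cost + output cost) per task."""
    per_task = (in_tok_per_task * p.input_usd_per_mtok
                + out_tok_per_task * p.output_usd_per_mtok) / 1_000_000
    return tasks * per_task


# Hypothetical workload; a heavier scaffold would raise the per-task token counts.
TASKS, IN_TOK, OUT_TOK = 100, 500_000, 20_000
opus_cost = run_cost_usd(CLAUDE_OPUS_4_1, TASKS, IN_TOK, OUT_TOK)
flash_cost = run_cost_usd(GEMINI_2_0_FLASH, TASKS, IN_TOK, OUT_TOK)
print(f"Opus 4.1: ${opus_cost:,.2f}  Flash: ${flash_cost:,.2f}")
```

Because cost is linear in tokens per task, a scaffold that consumes ten times the tokens multiplies either figure by ten, independent of which model is underneath.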

Worse, higher spend does not reliably buy better results. On Online Mind2Web, Browser-Use with Claude Sonnet 4 cost $1,577 for 40% accuracy. SeeAct with GPT-5 Medium hit 42% for $171. The HAL paper notes "a 9× difference in cost despite just a two-percentage-point difference in accuracy." On GAIA, a HAL Generalist with o3 Medium cost $2,829 for 28.5% accuracy, while a different agent hit 57.6% for $1,686. CLEAR finds across 6 SOTA agents on 300 enterprise tasks that "accuracy-optimal configurations cost 4.4 to 10.8× more than Pareto-efficient alternatives" with comparable real-world performance.
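Pareto efficiency over (cost, accuracy) pairs is simple to check. The sketch below applies it to the two Online Mind2Web figures quoted above; the `Config` type and `pareto_front` helper are illustrative names, not part of HAL or CLEAR.

```python
from typing import NamedTuple


class Config(NamedTuple):
    name: str
    cost_usd: float
    accuracy: float


def pareto_front(configs: list[Config]) -> list[Config]:
    """Keep configs that no other config matches or beats on both axes at once."""
    def dominated(c: Config) -> bool:
        return any(
            o.cost_usd <= c.cost_usd and o.accuracy >= c.accuracy
            and (o.cost_usd < c.cost_usd or o.accuracy > c.accuracy)
            for o in configs
        )
    return [c for c in configs if not dominated(c)]


# Online Mind2Web figures quoted in the text above.
mind2web = [
    Config("Browser-Use + Claude Sonnet 4", 1577.0, 0.40),
    Config("SeeAct + GPT-5 Medium", 171.0, 0.42),
]
front = pareto_front(mind2web)
cost_ratio = mind2web[0].cost_usd / mind2web[1].cost_usd
print([c.name for c in front], round(cost_ratio, 1))
```

Here the cheaper configuration is also the more accurate one, so it is the only point on the front. A leaderboard that reports accuracy alone would show the two as near-equals and hide the roughly 9× cost gap.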

The static-era toolkit should have helped, but it has only gone so far. Ndzomga's mid-difficulty filter, which selects tasks with 30 to 70% historical pass rates, achieves a 2× to 3.5× reduction while preserving rank fidelity under scaffold and temporal shifts. That is useful, but it falls far short of the 100× to 200× gains available for static benchmarks. When each item is a multi-turn rollout with its own variance, the unavoidable long trajectory per single question becomes the expensive object.
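A mid-difficulty filter of this kind can be sketched as below. The 30 to 70% band comes from the text; treating the bounds as inclusive, the function name, and the example pass rates are assumptions made for illustration.

```python
def mid_difficulty_tasks(
    pass_rates: dict[str, float], low: float = 0.30, high: float = 0.70
) -> list[str]:
    """Return task ids whose historical pass rate falls inside [low, high]."""
    return [task for task, rate in pass_rates.items() if low <= rate <= high]


# Hypothetical historical pass rates across previously evaluated agents.
history = {
    "t1": 0.05, "t2": 0.10, "t3": 0.35, "t4": 0.50,
    "t5": 0.65, "t6": 0.80, "t7": 0.90, "t8": 0.95,
}
kept = mid_difficulty_tasks(history)
reduction = len(history) / len(kept)
print(kept, f"{reduction:.2f}x fewer rollouts")
```

Tasks that nearly every agent passes or fails contribute little to separating agents, so dropping them removes rollouts without moving the ranking much. The reduction is bounded by how many tasks sit in the middle band, which is why the gain stays modest.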

Some evals are just training

Some benchmarks escape the API-cost framing altogether because their evaluation protocol trains models from scratch.

The Well gives a very interesting example of this. It bundles 16 scientific machine-learning datasets spanning biological systems, fluid dynamics, magnetohydrodynamics, supernova explosions, viscoelastic instability, and active matter, totaling 15 TB. Using the paper's headline 16-dataset grid, the protocol leaves little room to economize: train each baseline model for 12 hours on a single H100, try five learning rates per (model, dataset) pair, repeat across four architectures and 16 datasets. That headline-grid sweep consumes 3,840 H100-hours, or roughly $9,600 under the conversion assumptions below. A single new architecture still costs about 960 H100-hours, or about $2,400.

Training one neural operator can take a single 12-hour H100 run, while evaluating it across the benchmark requires 80 such trainings. That asymmetry is what makes The Well important. In this corner of ML, evaluation compute exceeds training compute by roughly two orders of magnitude, reversing the old deep-learning mental model.
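The arithmetic behind these figures is short enough to write out. The grid sizes come from the text; the $2.50 per H100-hour rate is the conversion implied by the dollar figures quoted (3,840 H100-hours for roughly $9,600).

```python
HOURS_PER_TRAINING = 12      # one H100 for 12 hours
LEARNING_RATES = 5           # tried per (model, dataset) pair
ARCHITECTURES = 4            # baselines in the headline grid
DATASETS = 16
USD_PER_H100_HOUR = 2.50     # conversion rate implied by the quoted dollar figures

# Evaluating one architecture means training it once per learning rate per dataset.
trainings_per_architecture = LEARNING_RATES * DATASETS
hours_per_architecture = trainings_per_architecture * HOURS_PER_TRAINING
hours_full_sweep = hours_per_architecture * ARCHITECTURES

print(trainings_per_architecture)                                        # 80 trainings
print(hours_per_architecture, hours_per_architecture * USD_PER_H100_HOUR)  # 960 h, $2,400
print(hours_full_sweep, hours_full_sweep * USD_PER_H100_HOUR)              # 3,840 h, $9,600
```

The factor of 80 between a single training run and a full evaluation of one architecture is the asymmetry the paragraph above describes.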

The same pattern recurs across SciML. PDEBench covers 11 PDE families and reports per-epoch timing tables across datasets and model families, but a clean per-architecture dollar figure depends on the chosen training protocol and hardware. MLE-Bench (OpenAI) sits between agent and training regimes. Each agent attempt at one of 75 Kaggle competitions runs 24 hours on a single A10 GPU, training real ML pipelines. The paper is explicit: "A single run of our main experiment setup of 24 hours per competition attempt requires 24 hours × 75 competitions = 1,800 GPU hours of compute," plus o1-preview consuming 127.5M input and 15M output tokens per seed. At $1.50 per A10-hour, the GPU floor alone is $2,700; adding o1-preview API usage brings a one-seed run to roughly $5,500. Three seeds × six models would therefore land near $100,000 before any additional grading or retry overhead.
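The MLE-Bench estimate can be reproduced as follows. GPU hours, the A10 rate, and token counts come from the text; the o1-preview prices of $15 per million input tokens and $60 per million output tokens are an assumption here (its list prices at launch), since the text does not state them.

```python
COMPETITIONS = 75
HOURS_PER_ATTEMPT = 24
USD_PER_A10_HOUR = 1.50
INPUT_MTOK_PER_SEED = 127.5   # millions of input tokens per seed
OUTPUT_MTOK_PER_SEED = 15.0   # millions of output tokens per seed

# Assumption: o1-preview list prices, not stated in the text above.
O1_PREVIEW_INPUT_USD_PER_MTOK = 15.0
O1_PREVIEW_OUTPUT_USD_PER_MTOK = 60.0

gpu_hours = COMPETITIONS * HOURS_PER_ATTEMPT                  # 1,800 GPU-hours
gpu_cost = gpu_hours * USD_PER_A10_HOUR                       # $2,700
api_cost = (INPUT_MTOK_PER_SEED * O1_PREVIEW_INPUT_USD_PER_MTOK
            + OUTPUT_MTOK_PER_SEED * O1_PREVIEW_OUTPUT_USD_PER_MTOK)  # $2,812.50
seed_cost = gpu_cost + api_cost                               # $5,512.50
sweep_cost = 3 * 6 * seed_cost                                # 3 seeds x 6 models

print(f"one seed: ${seed_cost:,.2f}  3 seeds x 6 models: ${sweep_cost:,.2f}")
```

Under these assumed prices the API bill and the GPU bill are nearly equal, so neither cheaper tokens nor cheaper GPUs alone would halve the cost of a seed.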

METR's RE-Bench caps each of seven research engineering environments at 8 hours on 1 to 6 H100s. A single pass across the suite is therefore 56 to 336 H100-hours before adding repeated attempts, multiple seeds, or multiple agents; the human baseline, with 71 expert attempts, raises the implicit budget much further. Because the benchmark gives agents and humans the same wall-clock compute, a real-time training process sets the cost floor. A token budget no longer bounds it from above.
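For time-capped benchmarks like these, the GPU floor is just units × wall-clock cap × GPUs × hourly rate. The helper below is a hypothetical sketch (the function name is ours) that reproduces the MLE-Bench and RE-Bench floors from the numbers in the text, using the post's $1.50/A10-hour and $2.50/H100-hour conversions.

```python
def gpu_floor_usd(units: int, hours_per_unit: float, gpus: int,
                  usd_per_gpu_hour: float) -> float:
    """GPU cost of one pass when every unit runs for its full wall-clock cap."""
    return units * hours_per_unit * gpus * usd_per_gpu_hour

# MLE-Bench: 75 competitions x 24 h on one A10.
mle_bench = gpu_floor_usd(75, 24, 1, 1.50)

# RE-Bench: 7 environments x 8 h on 1 to 6 H100s.
re_bench_low = gpu_floor_usd(7, 8, 1, 2.50)
re_bench_high = gpu_floor_usd(7, 8, 6, 2.50)

print(f"MLE-Bench GPU floor: ${mle_bench:,.0f}")
print(f"RE-Bench single pass: ${re_bench_low:,.0f} to ${re_bench_high:,.0f}")
```

Neither figure includes API tokens, repeated attempts, or extra seeds, which is why the text treats them as floors.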

ResearchGym (ICLR 2026) makes the agent run actual ML research. It comprises five test tasks (39 sub-tasks) drawn from ACL, ICLR, and ICML papers, including ACL Highlights, ICML Spotlight, ICLR Spotlight, and ICLR Oral categories, with the proposed methods withheld. The agent has to propose hypotheses, train models, and beat the original authors' baselines. The budget is tight: $10 in API plus 12 to 24 hours on a single GPU under 24 GB per task. A full pass (5 tasks × 24h × 3 seeds) consumes about 360 GPU-hours per agent.

The cost picture turns brutal in PaperBench. Twenty ICML 2024 Spotlight or Oral papers must be replicated from scratch, graded against rubric trees with 8,316 leaf-node criteria. Each rollout uses an A10 GPU for 12 hours, and the per-paper math is straightforward:

- $400 in API per o1 IterativeAgent rollout, times 20 papers, comes to about $8,000 per evaluation.

- Grading runs $66 per paper with the o3-mini judge, or $1,320 for the full benchmark.

- Using o1 as judge would push grading to about $830 per paper.

PaperBench Code-Dev drops execution on purpose. That choice halves rollout cost to about $4,000 and cuts grading to $10 per paper (85% lower). OpenAI built the variant because many groups cannot afford the full benchmark.
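The PaperBench figures above combine as follows. This is a rough sketch using only the per-paper numbers quoted in the text; the $200 per-paper Code-Dev rollout is derived here from the "about $4,000" total rather than stated in the source, and the function name is illustrative.

```python
PAPERS = 20

def paperbench_cost(rollout_per_paper: float, grading_per_paper: float) -> dict:
    """Total API cost of one agent across all papers, split by component."""
    rollout = rollout_per_paper * PAPERS
    grading = grading_per_paper * PAPERS
    return {"rollout": rollout, "grading": grading, "total": rollout + grading}

full = paperbench_cost(400, 66)        # o1 IterativeAgent rollout, o3-mini judge
o1_judge = paperbench_cost(400, 830)   # same rollout, o1 as judge
code_dev = paperbench_cost(200, 10)    # derived: about $4,000 / 20 papers

grading_reduction = 1 - 10 / 66        # Code-Dev grading vs. full grading

for name, cost in [("full", full), ("o1 judge", o1_judge), ("Code-Dev", code_dev)]:
    print(f"{name}: rollout ${cost['rollout']:,.0f} + grading "
          f"${cost['grading']:,.0f} = ${cost['total']:,.0f}")
print(f"Code-Dev grading reduction: {grading_reduction:.0%}")
```

The rollout and o3-mini grading lines sum to $9,320, which the post rounds to about $9,500 per evaluation.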

The historical precedent is NAS-Bench-101, whose tabular construction required over 100 TPU-years of training. Without that one-time investment, every NAS algorithm comparison would have cost 1 to 100+ GPU-hours per run, which would have made comparison pricier than the algorithms themselves.

Figure 2. All values in USD per single evaluation of one model or agent through the full benchmark protocol. GPU costs converted at $2.50/H100-hr, $1.50/A10-hr; API and grading costs included where applicable. Highlighted bars denote benchmarks costing at least $5,000 per evaluation. The most expensive of these match the most expensive agent benchmarks (Figure 1) and require GPU compute that has no API substitute.

As benchmarks move closer to real work, compression gets harder: static prediction leaves room for large savings, agent rollouts leave less, and in-the-loop training leaves almost none.

Figure 3. The toolkit for compressing evaluation does not transfer as benchmarks become more complex. Bars show the maximum measured compression that preserves model-rank fidelity; labels give the published range. The highlighted bar flags the ~1× baseline where no general compression method exists. Static benchmarks routinely compress 100–200× without losing rankings. Agent benchmarks compress 2–3.5× at best. Training-in-the-loop benchmarks resist subsampling because the unit being evaluated is the trained model.

Reliability is the expensive part

Most of the costs above buy only single-run measurements with limited statistical power. When you measure reliability across repeated runs, static benchmarks, agent benchmarks, and training-in-the-loop benchmarks all become more expensive.

Agent reliability can fall hard when you stop treating one run as evidence. The best-known example comes from Yao et al.'s τ-bench, later reframed in CLEAR (Mehta, 2025): performance can drop from 60% on a single run to 25% under 8-run consistency. Kapoor et al.'s "AI Agents That Matter" found that simple baseline agents Pareto-dominate complex SOTA agents (Reflexion, LDB, LATS) on HumanEval at 50× lower cost. Their holdout analysis found that 7 of 17 benchmarks had no holdout set; among the 10 that did, only 5 held out tasks at the appropriate level of generality, so 12 of 17 failed their holdout criterion overall. The HAL paper notes that a "do-nothing" agent passes 38% of τ-bench airline tasks under the original construction. HAL's own log analysis revealed data leakage in the TAU-bench Few Shot scaffold, forcing its removal in December 2025.
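To make the single-run versus k-run gap concrete, here is a minimal sketch of a consistency estimate. It assumes the combinatorial estimator usually associated with pass^k (for a task with c successes in n trials, the fraction of k-run subsets that are all successes); the trial outcomes are invented for illustration and are not results from any benchmark.

```python
from math import comb

def pass_hat_k(successes_per_task: list[int], n_trials: int, k: int) -> float:
    """Estimate the chance that k independent runs of a task ALL succeed.

    For a task with c successes in n trials, C(c, k) / C(n, k) is the share
    of k-run subsets containing only successes; the result averages that
    share over tasks.
    """
    if not 1 <= k <= n_trials:
        raise ValueError("k must be between 1 and n_trials")
    per_task = [comb(c, k) / comb(n_trials, k) for c in successes_per_task]
    return sum(per_task) / len(per_task)

# Hypothetical outcomes: successes out of 8 trials for five tasks.
successes = [8, 7, 5, 4, 0]

single_run = pass_hat_k(successes, n_trials=8, k=1)  # plain average success rate
all_eight = pass_hat_k(successes, n_trials=8, k=8)   # only never-failing tasks count

print(f"k=1: {single_run:.2f}, k=8: {all_eight:.2f}")
```

With k = 1 the estimate is the ordinary success rate; as k grows, any task that fails even occasionally stops contributing, which is how a respectable single-run score can collapse under a consistency requirement.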

Another recent reliability accounting comes from Rabanser, Kapoor et al.'s "Towards a Science of AI Agent Reliability", which proposes twelve metrics across consistency, robustness, predictability, and safety. Their finding: "recent capability gains have only yielded small improvements in reliability." HAL's internal analysis shows how much fragility hides behind aggregate accuracy. On SciCode and CORE-Bench, agents almost never completed a run without a tool-calling failure. On AssistantBench and CORE-Bench, environmental errors occurred in roughly 40% of runs. Agents violated explicit benchmark instructions in their final answer over 60% of the time on failed tasks.

A statistically credible HAL-style evaluation with k = 8 reruns per cell takes the $40K aggregate to roughly $320K. The same multiplier on PaperBench's $9,500-per-run cost pushes a single agent's evaluation past $75K, and on The Well, a multi-seed protocol takes the per-architecture cost from ~960 H100-hours to several thousand. Reliability acts as a multiplier on every cost category above.
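The multiplier is simple enough to check directly. In this sketch the HAL and PaperBench inputs come from the text; the three-seed count for The Well is an assumption made here for illustration, since the text only says "multi-seed".

```python
def with_reruns(single_run_cost: float, k: int) -> float:
    """Cost of repeating the same evaluation k times."""
    return single_run_cost * k

hal_usd = with_reruns(40_000, 8)        # k = 8 reruns per cell
paperbench_usd = with_reruns(9_500, 8)  # same multiplier on one agent
well_h100_hours = with_reruns(960, 3)   # ASSUMED 3 seeds, not from the source

print(f"HAL: ${hal_usd:,.0f}")
print(f"PaperBench: ${paperbench_usd:,.0f}")
print(f"The Well, per architecture: {well_h100_hours:,.0f} H100-hours")
```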

HAL has paused new model evaluations to focus on reliability: the field's headline numbers still carry too much noise, and reducing that noise costs real money. And the figures above are lower bounds; many evaluators are already priced out.

What this means for ML as a field

Eval cost is now an accountability barrier

Academic groups, AI Safety Institutes, and journalists now hit the budget constraint before the technical one when they try to evaluate frontier agents independently. A single GAIA run can exceed an annual graduate student travel budget. A single PaperBench evaluation, including the LLM judge, runs about $9,500. Three-seed comparisons of six models, the kind of study one might publish, push above $150,000. The established practice of "running a benchmark once and reporting the accuracy number" has roughly the rigor of crash-testing one car in perfect weather. Moving past it requires money the academic system does not currently allocate as research compute.

The compute divide now includes evaluation

Ahmed, Wahed and Thompson (Science 2023) documented that industry models in 2021 were 29× larger than academic ones by parameter count, and that about 70% of AI PhDs went to industry in 2020 versus 21% in 2004. The original "compute divide" story mostly ignored evaluation because evaluation used to look cheap next to training. Many benchmarks have reversed that relationship. A lab that can fine-tune a 7B model can no longer assume it can afford the benchmarks the field takes seriously.

Cost-blind leaderboards reward waste

When leaderboards report raw accuracy and omit cost, researchers can rationally pour tokens into a problem until the number ticks up. The HAL paper finds that higher reasoning effort actually reduces accuracy in the majority of runs: extra inference compute does not reliably improve even the metric it is supposed to optimize. Pareto frontiers fix the comparison by ranking accuracy against cost. HAL implements them, but most leaderboards still do not.
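A cost-aware ranking of this kind can be sketched as follows. This is not HAL's implementation; the entry names and numbers are invented, and the only thing the code encodes is the standard definition of a Pareto frontier over (cost, accuracy).

```python
from typing import NamedTuple

class Entry(NamedTuple):
    name: str
    cost_usd: float
    accuracy: float

def pareto_frontier(entries: list[Entry]) -> list[Entry]:
    """Keep entries that no other entry beats on both cost and accuracy."""
    frontier = []
    best_accuracy = float("-inf")
    # Walk from cheapest to most expensive; at equal cost, try the more
    # accurate entry first. An entry survives only if it is strictly more
    # accurate than everything at or below its cost.
    for entry in sorted(entries, key=lambda e: (e.cost_usd, -e.accuracy)):
        if entry.accuracy > best_accuracy:
            frontier.append(entry)
            best_accuracy = entry.accuracy
    return frontier

# Hypothetical leaderboard rows.
entries = [
    Entry("simple-baseline", 2.0, 0.55),
    Entry("agent-a", 40.0, 0.52),    # costs more and scores lower: dominated
    Entry("agent-b", 120.0, 0.61),
    Entry("agent-c", 600.0, 0.60),   # costs more than agent-b, scores lower
]

frontier = pareto_frontier(entries)
print([e.name for e in frontier])
```

A raw-accuracy leaderboard would list all four rows in score order; the frontier view drops the two that spend more without buying accuracy.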

If only frontier-lab compute budgets can produce statistically reliable benchmark numbers on the highest-cost agentic and scientific benchmarks, the social process of evaluating AI systems becomes concentrated inside the same labs that build them, rendering external validation partial, and sometimes absent, unless someone subsidizes the cost directly.

Cost summary across benchmark types

| Benchmark | Type | USD per single evaluation | What "one evaluation" means |
|---|---|---|---|
| HELM (per LLM, 2022) | Static LLM | $85 – $10,926 API; 540 – 4,200 GPU-hrs open | One LLM through 42 scenarios; per-model table in HELM §6 p. 43 |
| ScienceAgentBench | Agentic, science | $0.19 – $77 | One agent config across 102 tasks |
| TAU-bench Airline | Agentic | $0.31 – $180 | One agent across all airline tasks |
| SciCode | Agentic, science | $0.12 – $625 | One agent across 338 sub-problems |
| CORE-Bench Hard | Agentic, replication | $2 – $510 | One agent across 45 papers |
| SWE-bench Verified Mini | Agentic, coding | $4 – $1,600 | One agent across 50 issues |
| Online Mind2Web | Agentic, web | $5 – $1,610 | One agent across 300 web tasks |
| GAIA | Agentic, multimodal | $7.80 – $2,829 | One agent across GAIA tasks |
| ResearchGym (full pass) | ML research, training | $540 – $1,260 | 5 tasks × 24h × 3 seeds (~360 GPU-hrs) + API |
| RE-Bench (single pass) | ML R&D, training | $140 – $840 | 7 environments × 8h × 1–6 H100s |
| The Well (per architecture) | SciML, training | ~$2,400 | Headline 16-dataset grid: 5 LRs × 16 datasets × 12h H100 |
| MLE-Bench (1 seed) | ML R&D, training | ~$5,500 | 75 Kaggle competitions × 24h on A10 + o1-preview API |
| PaperBench Code-Dev | Scientific, code only | ~$4,200 | One agent across 20 papers, no execution |
| The Well (full sweep) | SciML, training | ~$9,600 | 4 architectures under the headline 16-dataset grid |
| PaperBench (full) | Scientific | ~$9,500 | One agent across 20 papers, full protocol |
| HAL aggregate | 9 benchmarks × 9 models | ~$40,000 | All 81 cells, single seed each |

All figures normalized to USD per single evaluation. GPU compute converted at $2.50/H100-hour, $1.50/A10-hour; API and grading costs included where applicable. Pythia ("eval can exceed pretraining"), PDEBench (per-architecture cost depends on the selected training protocol and hardware), and NAS-Bench-101's 100 TPU-year construction cost are excluded because they do not normalize cleanly to a per-evaluation USD figure.

Stop paying twice for the same eval

One reason these numbers stay high is that the field keeps re-running the same evaluations. A frontier lab pays for a HAL sweep, an academic group pays again for a partial reproduction, an audit organization pays a third time for the model versions it cares about, and a journalist pays a fourth to spot-check the leaderboard. Most of those runs cover overlapping models on overlapping benchmarks. Almost none of the underlying instance-level outputs end up in a place where the next team can build on them, because results get reported as a single accuracy number in a PDF, in a model card table, or in a leaderboard entry that hides scaffold, prompt, and seed. The cost figures above are large in part because the field is paying retail every time, on artifacts the rest of the community could not reuse if it wanted to.

Standardized documentation is the cheapest lever available here, and it is the one reliability work needs anyway. If a $9,500 PaperBench rollout exports its full grading trace in a shared schema, the next group studying the same papers can spend its budget on new perturbations instead of repeating the baseline. If a multi-seed HAL run publishes per-trajectory tool-call logs, agent reliability research can answer questions that a single accuracy number cannot. The saving compounds: even a 2× reuse rate on the high-cost benchmarks would put more money back in the ecosystem than every compression technique combined.

Sharing Eval Data. The EvalEval Coalition's Every Eval Ever project is the standardized format we use for this. It bundles a metadata schema, validators, and converters from popular harnesses such as HELM, lm-eval-harness, and Inspect AI, so existing eval logs can be transformed into a shared format in one step. The community repository on Hugging Face already hosts results from dozens of contributors, with an open Shared Task for adding more. If you ran one of the costly evaluations in this post, depositing the artifacts in a unified, transparent, verifiable and reproducible manner is the highest-leverage cost-reduction move available to the rest of the field. If your benchmark is on Hugging Face, you can also expose your results on hub leaderboards and model pages via Community Evals!
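As a rough illustration of what instance-level sharing involves, the sketch below writes and reads per-instance results as JSON Lines. The record layout is hypothetical and is not the Every Eval Ever schema, which should be taken from the project itself; the point is only that scaffold, seed, and per-instance scores travel with the result instead of being collapsed into one accuracy number.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class InstanceResult:
    # Hypothetical fields chosen for illustration only.
    benchmark: str
    model: str
    scaffold: str
    seed: int
    instance_id: str
    score: float
    cost_usd: float

def to_jsonl(results: list[InstanceResult]) -> str:
    """Serialize one record per line so later readers can stream or filter."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in results)

def from_jsonl(text: str) -> list[InstanceResult]:
    """Rebuild records from JSON Lines, skipping blank lines."""
    return [InstanceResult(**json.loads(line))
            for line in text.splitlines() if line.strip()]

results = [
    InstanceResult("example-bench", "model-x", "react-v1", 0, "task-001", 1.0, 0.42),
    InstanceResult("example-bench", "model-x", "react-v1", 0, "task-002", 0.0, 0.57),
]
restored = from_jsonl(to_jsonl(results))
print(len(restored), "records round-tripped")
```

A second team can then compute its own aggregates, or rerun only the instances it wants to perturb, without paying for the baseline again.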

Where this leaves us

The economics have changed. Not long ago, training was expensive and evaluation was cheap. For frontier LLMs trained at $50 million to $100 million, evaluation still looks like a rounding error, but that rounding error now costs tens of thousands of dollars per benchmark run and often leaves noisy results behind. For neural operators, ML research agents, and replication benchmarks, the ratio has flipped: a credible evaluation can cost more than training the candidate model.

We already know how to make static evaluation cheaper. Flash-HELM, tinyBenchmarks, and Anchor Points work. Agent evaluation has only partial fixes: mid-difficulty filtering helps, and Pareto-front leaderboards help, but the toolkit remains thin. Training-in-the-loop evaluation has no general compression method; tabular precomputation and tight budget caps can reduce cost only by narrowing what the benchmark measures. Reliability adds another layer because repeated runs raise the price of every protocol.

The field still talks as if capability sets the main constraint, but evaluation points to reliability as the tighter one. Governance institutions should want to measure the gap between single-run accuracy and pass^k consistency, yet that gap costs the most to measure. Static-benchmark compression does not transfer to agent or training-in-the-loop benchmarks, and mid-difficulty filtering remains the only credible partial substitute. Cost-blind leaderboards now mislead by design, because they reward extra spending without reporting what that spending bought.

Evaluation now has its own compute budgets, statistical methods, and failure modes. Its price also shapes who gets to evaluate powerful systems in the first place. Whoever can pay for the evaluation gets to write the leaderboard.

Sources

- Ying et al. (2019). NAS-Bench-101: Towards Reproducible Neural Architecture Search. arXiv:1902.09635.

- Liang et al. (2022). Holistic Evaluation of Language Models. arXiv:2211.09110.

- Takamoto et al. (2022). PDEBench: An Extensive Benchmark for Scientific Machine Learning. arXiv:2210.07182.

- Ahmed, Wahed and Thompson (2023). The growing influence of industry in AI research. Science 379(6635).

- Biderman et al. (2023). Pythia: A Suite for Analyzing Large Language Models Across Training and Scaling. arXiv:2304.01373.

- IBM Research (2023). Efficient LLM Benchmarking. research.ibm.com.

- Perlitz et al. (2023). Efficient Benchmarking of Language Models. arXiv:2308.11696.

- Vivek et al. (2023). Anchor Points: Benchmarking Models with Much Fewer Examples. arXiv:2309.08638.

- Chan et al. (2024). MLE-bench: Evaluating Machine Learning Agents on Machine Learning Engineering. arXiv:2410.07095.

- Chen et al. (2024). ScienceAgentBench: Toward Rigorous Assessment of Language Agents for Data-Driven Scientific Discovery. arXiv:2410.05080.

- Kapoor et al. (2024). AI Agents That Matter. arXiv:2407.01502.

- Wijk et al. (METR, 2024). RE-Bench: Evaluating Frontier AI R&D Capabilities of Language Model Agents Against Human Experts. arXiv:2411.15114.

- Ohana et al. (2024). The Well: a Large-Scale Collection of Diverse Physics Simulations for Machine Learning. arXiv:2412.00568.

- Polo et al. (2024). tinyBenchmarks: evaluating LLMs with fewer examples. arXiv:2402.14992.

- Siegel et al. (2024). CORE-Bench: Fostering the Credibility of Published Research Through a Computational Reproducibility Agent Benchmark. arXiv:2409.11363.

- Tian et al. (2024). SciCode: A Research Coding Benchmark Curated by Scientists. arXiv:2407.13168.

- Kapoor et al. (2025). Holistic Agent Leaderboard: The Missing Infrastructure for AI Agent Evaluation. arXiv:2510.11977.

- Li et al. (2025). Adaptive Testing for LLM Evaluation: A Psychometric Alternative to Static Benchmarks. arXiv:2511.04689.

- Mehta (2025). Beyond Accuracy: A Multi-Dimensional Framework for Evaluating Enterprise Agentic AI Systems. arXiv:2511.14136.

- Starace et al. (2025). PaperBench: Evaluating AI's Ability to Replicate AI Research. arXiv:2504.01848.

- UK AISI (2025). Evidence for inference scaling in AI cyber tasks: increased evaluation budgets reveal higher success rates. aisi.gov.uk.

- Bandel et al. (2026). General Agent Evaluation. arXiv:2602.22953.

- Garikaparthi et al. (2026). ResearchGym: Evaluating Language Model Agents on Real-World AI Research. arXiv:2602.15112.

- Ndzomga (2026). Efficient Benchmarking of AI Agents. arXiv:2603.23749.

- Rabanser et al. (2026). Towards a Science of AI Agent Reliability. arXiv:2602.16666.

- Holistic Agent Leaderboard (live). hal.cs.princeton.edu.

Citation

    @misc{ghosh2026evalbottleneck,
      author = {Ghosh, Avijit and Mai, Yifan and Channing, Georgia and Choshen, Leshem},
      title = {{AI} evals are becoming the new compute bottleneck},
      year = {2026},
      month = apr,
      howpublished = {EvalEval Coalition Blog},
      url = {https://evalevalai.com/research/2026/04/29/eval-costs-bottleneck/}
    }

AutoSP is a compiler that automatically converts standard transformer training code into sequence-parallel code, making it vastly easier to train LLMs on extremely long contexts (100k+ tokens) across multiple GPUs.

Deep dive

- AutoSP implements DeepSpeed-Ulysses as its sequence parallelism strategy because communication overhead remains constant with increasing GPU counts on NVLink or fat-tree networks, though it's limited to scaling SP-size up to the number of attention heads in the model (32 for 7-8B models)

- The tool introduces Sequence-aware Activation Checkpointing (SAC), a custom strategy that exploits unique long-context FLOP dynamics and is less conservative than PyTorch 2.0's automated max-flow min-cut approach, releasing intermediate activations of cheap-to-compute operators to save memory

- Built within DeepCompile (a compiler ecosystem in DeepSpeed), AutoSP performs program analysis to automatically insert communication collectives, partition input contexts and intermediate activations, and overlap communication with computation for both forward and backward passes

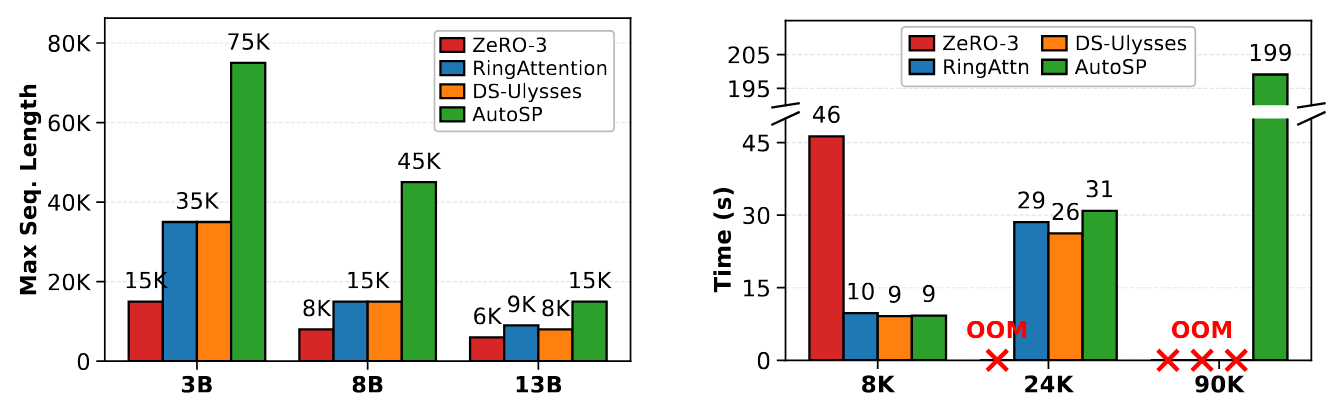

- Benchmarks on Llama 3.1 models using 8 A100-80GB GPUs show AutoSP increases maximum trainable sequence length while maintaining runtime performance comparable to hand-written baselines of RingFlashAttention, DeepSpeed-Ulysses, and ZeRO-3

- The tool composes automatically with ZeRO stage 0/1 out of the box, combining parameter sharding with sequence parallelism through simple config flags

- Performance portability is a key advantage: embedding sequence parallelism in the compiler means highly performant implementations can be realized on diverse hardware without vendor-specific engineering

- SAC marginally reduces training throughput when enabled but can be selectively activated only for configurations that would otherwise cause out-of-memory errors

- Two main limitations: the entire transformer must be compiled as a single artifact (no stitching together individually compiled functions), and graph breaks in compilable artifacts are disallowed as they complicate information propagation analysis

Decoder

- Sequence parallelism (SP): Partitioning input tokens across multiple devices to enable training on longer contexts, distributing the memory burden across GPUs rather than fitting everything on one device

- DeepSpeed: Microsoft's open-source deep learning optimization library that provides memory and speed optimizations for training large models

- ZeRO/FSDP: Zero Redundancy Optimizer and Fully Sharded Data Parallel - techniques that shard model parameters, gradients, and optimizer states across GPUs to reduce memory usage

- Activation checkpointing: Trading compute for memory by discarding intermediate activations during the forward pass and recomputing them as needed during the backward pass

- DeepSpeed-Ulysses: A specific sequence parallelism strategy that uses all-to-all communication patterns to distribute attention computation across GPUs

- Context length/window: The number of tokens an LLM can process at once - longer contexts enable models to consider more information but require more memory

Original article

TL;DR: AutoSP automatically converts standard transformer training code into sequence-parallel code for long-context LLM training across multiple GPUs. Integrated with DeepSpeed, it increases maximum trainable context length with little runtime overhead versus hand-written baselines.

Increasingly, large language models (LLMs) are being trained for extremely long-context tasks, where token counts can exceed 100k. At these token counts, out-of-memory (OOM) issues start to surface, even when scaling device counts using conventional training techniques such as ZeRO/FSDP. To circumvent these issues, a commonly used parallel training technique is sequence parallelism (SP), which partitions the input tokens across devices to enable long-context training with increasing GPU counts.

However, implementing SP is notoriously difficult, requiring invasive code changes to existing libraries such as DeepSpeed or HuggingFace. These code changes often involve partitioning input token contexts (and intermediate activations), inserting communication collectives, and overlapping communication with computation, all of which must be done for both the forward and backward passes. As a result, researchers who want to experiment with long-context capabilities spend significant effort engineering the systems stack, and must repeat that effort for different hardware vendors.

To avoid this complexity, we introduce AutoSP: a fully automated compiler-based solution that automatically converts easy-to-write training code to multi-GPU sequence parallel code that efficiently uses GPUs to train on longer input contexts while composing with existing parallel strategies (such as ZeRO). This avoids the cumbersome need for developers to repeatedly modify training pipelines for long-context training. Users can now simply import AutoSP and compile arbitrary models using the AutoSP backend, giving the power of long-context training to anyone. Moreover, by embedding this technology into the compiler, our approach is performance-portable: highly performant SP can be realised on diverse hardware.

We structure this post as follows: (1) AutoSP and how model scientists can use it to enable long-context training, (2) Key design decisions of AutoSP, (3) key AutoSP results, demonstrating its ease-of-use and impact, (4) some limitations and things AutoSP cannot do.

AutoSP Usage

A key design goal of AutoSP is simplicity: it hides most of the complexity of programming multiple GPUs from users. To do this, we implement AutoSP within DeepCompile: a compiler ecosystem within DeepSpeed to programmatically enable diverse optimisations for deep neural network training. With this, any user who uses DeepSpeed can automatically enable Sequence Parallelism with almost zero hassle. We take a look at an example next.

    import deepspeed

    # We instantiate a DeepSpeed config.
    # Assume 8 GPUs with 2 DP ranks and 4 SP ranks.
    config = {
        "train_micro_batch_size_per_gpu": 1,
        "train_batch_size": 2,
        "steps_per_print": 1,
        "optimizer": {  # DeepSpeed's config key uses the "optimizer" spelling.
            "type": "Adam",
            "params": {
                "lr": 1e-4
            }
        },
        "zero_optimization": {
            "stage": 1,  # AutoSP interoperates with ZeRO 0/1.
        },
        # Simply turn on deepcompile and set
        # the AutoSP pass to be triggered on.
        "compile": {
            "deepcompile": True,
            "passes": ["autosp"]
        },
        "sequence_parallel_size": 4,
        "gradient_clipping": 1.0,
    }

    # Initialise deepspeed with model. `model` and `train_loader` are assumed
    # to come from the existing single-device training script.
    # deepspeed.initialize returns (engine, optimizer, dataloader, lr_scheduler).
    model, _, _, _ = deepspeed.initialize(config=config, model=model)

    # Compiles model and automatically applies AutoSP passes.
    model.compile(compile_kwargs={"dynamic": True})

    for idx, batch in enumerate(train_loader):
        # Custom function that we expose within:
        # deepspeed/compile/passes/sp_compile.
        inputs, labels, positions, mask = prepare_auto_sp_inputs(batch)
        loss = model(
            input_ids=inputs,
            labels=labels,
            position_ids=positions,
            attention_mask=mask
        )
        ...  # Backwards pass, optimiser step etc...

As seen in the example above, users take existing training code that runs on a single device and do the following: (1) use the prepare_auto_sp_inputs utility function shown in the example (exposed in DeepSpeed) for lightweight tagging of input tokens, attention masks and position ids for use in program analysis within AutoSP; (2) adjust the DeepSpeed config to turn DeepCompile on, setting the "passes" flag to "autosp". The rest is handled through the AutoSP compiler passes, called when compiling the model, which automatically enable sequence parallelism alongside other long-context training optimisations. AutoSP also composes with ZeRO stage 1 out of the box: simply set the ZeRO-1 flag in DeepSpeed alongside the AutoSP flags to combine both strategies.
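One detail in the config that is easy to get wrong is the global batch size once some GPUs are used for sequence parallelism. The helper below is a hypothetical sanity check, not part of DeepSpeed: it assumes DeepSpeed's usual relation (global batch = micro-batch per GPU × gradient accumulation steps × data-parallel ranks) and assumes that the number of data-parallel ranks is the world size divided by the SP size, which matches the "8 GPUs with 2 DP ranks and 4 SP ranks" comment in the example.

```python
def expected_train_batch_size(world_size: int, sp_size: int,
                              micro_batch_per_gpu: int,
                              grad_accum_steps: int = 1) -> int:
    """Global batch size implied by the GPU layout (hypothetical helper)."""
    if world_size % sp_size != 0:
        raise ValueError("world_size must be a multiple of sequence_parallel_size")
    # Ranks in one SP group work on slices of the same sequences, so only
    # the remaining factor counts as data parallelism (assumption, see above).
    dp_ranks = world_size // sp_size
    return micro_batch_per_gpu * grad_accum_steps * dp_ranks

# Layout from the example config: 8 GPUs, SP size 4, micro-batch 1.
batch = expected_train_batch_size(world_size=8, sp_size=4, micro_batch_per_gpu=1)
print(batch)
```

For the example layout this gives 2, which agrees with the "train_batch_size": 2 entry in the config.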

AutoSP Compiler Passes

Since AutoSP transforms user code to enable longer-context training, we briefly cover, for transparency, the key design points of AutoSP, its code transformations, and their consequences for users.

Sequence Parallelism Code Transformations. AutoSP automatically converts single-GPU code to multi-GPU sequence parallel (SP) code. The specific SP strategy AutoSP converts code into is DeepSpeed-Ulysses. We specifically focus on DeepSpeed-Ulysses over other strategies (e.g. RingAttention) as its communication overhead stays constant with increasing GPU counts on NVLink network topologies or fat-tree networks. However, DeepSpeed-Ulysses only enables scaling the SP-size to the number of heads in a model (32 in 7-8B models).
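The head-count limit follows from how a Ulysses-style layout change works: before attention, an all-to-all turns "each rank holds some tokens for all heads" into "each rank holds all tokens for some heads". The toy simulation below tracks only which (token, head) pairs each rank holds. It is a sketch of the data movement, not DeepSpeed's implementation, and the requirements that the SP size divide both the head count and the token count are simplifying assumptions of this sketch.

```python
def shard_by_sequence(num_tokens: int, num_heads: int, sp_size: int) -> list[set]:
    """Rank r holds a contiguous slice of tokens, with every head."""
    if num_tokens % sp_size != 0:
        raise ValueError("this sketch assumes tokens split evenly across ranks")
    chunk = num_tokens // sp_size
    return [
        {(t, h) for t in range(r * chunk, (r + 1) * chunk) for h in range(num_heads)}
        for r in range(sp_size)
    ]

def all_to_all_seq_to_head(shards: list[set], num_heads: int) -> list[set]:
    """Simulated all-to-all: every rank sends head group j of its tokens to rank j."""
    sp_size = len(shards)
    if sp_size > num_heads or num_heads % sp_size != 0:
        raise ValueError("SP size must not exceed, and must divide, the head count")
    heads_per_rank = num_heads // sp_size
    received = [set() for _ in range(sp_size)]
    for shard in shards:
        for token, head in shard:
            received[head // heads_per_rank].add((token, head))
    return received

before = shard_by_sequence(num_tokens=8, num_heads=4, sp_size=2)
after = all_to_all_seq_to_head(before, num_heads=4)

print(sorted({t for t, _ in before[0]}), sorted({h for _, h in before[0]}))
print(sorted({t for t, _ in after[0]}), sorted({h for _, h in after[0]}))
```

After the exchange each rank sees the full sequence for its own heads, so attention can run locally; with more ranks than heads, some rank would have no head to own, which is the scaling limit described above.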

Activation Checkpointing for longer-context training. AutoSP additionally applies a custom activation-checkpointing (AC) strategy curated for long-context modelling. AC releases intermediate activations of cheap-to-compute operators, recomputing them in the backward pass as required to compute relevant gradients. PyTorch 2.0 introduced an automated max-flow min-cut based AC formulation, but we find this to be overly conservative for long-context modelling. We accordingly introduce a novel AC strategy targeted at long-context training: Sequence-aware AC (SAC), which exploits unique long-context FLOP dynamics. When enabled (the default setting in AutoSP), SAC marginally reduces training throughput. However, without it, training on longer contexts is infeasible, so the user can choose to turn this pass on only for configurations that would otherwise OOM.

Evaluating AutoSP on Real Models

To demonstrate AutoSP's viability, we evaluate its performance on models of varying sizes on NVIDIA GPUs to show that its ease of use comes at little to no cost to runtime performance. We benchmark different Llama 3.1 models on a node with 8 A100-80GB SXM GPUs. We use PyTorch 2.7 with CUDA 12.8, comparing AutoSP to torch-compiled hand-written baselines of: RingFlashAttention, DeepSpeed-Ulysses, and ZeRO-3. We summarise key results in the figure below:

Not only can AutoSP increase the maximum trainable sequence length given the same resources (left figure – higher is better), but also these benefits come at little cost to runtime performance (right figure – lower is better).

Limitations

There are two key limitations of AutoSP. First, we require that the user compile the transformer as a single compilable artifact. Occasionally, PyTorch users compile many functions individually and stitch them together into one model. This is disallowed in AutoSP, as we need to compile and see the entire model to correctly shard input sequences and propagate this information throughout the entire graph. Second, we disallow any graph breaks in compilable artifacts. Graph breaks complicate analysis and propagation of information, and we leave extending AutoSP to be graph-break resilient to future research.

Conclusion

AutoSP enables users to easily extend arbitrary transformer training code to enable Sequence Parallelism, with a custom AC strategy for enhanced long-context training. Integration with DeepSpeed allows users to easily use existing DeepSpeed training code to train on longer contexts by simply changing a config file. We have prepared end-to-end examples for users to play around with on real model workloads (e.g. Llama 3.1 8B) here. Give it a try to see how easy long context training has become.

A practical guide to designing MCP servers that guide AI models through multi-step workflows by embedding breadcrumbs rather than expecting models to plan ahead.

Deep dive

- Models don't have hidden planners—they scan available tools and pick whatever seems most probable based on conversation context, so servers must make the next call blindingly obvious at every step

- The author's Office server exposes 100+ tools but funnels models toward 8 core verbs through instructions, treating specialized tools as fallback/diagnostic options to prevent five-call detours for one-call jobs

- Consistent naming exploits probability: all Word tools are `word_*`, Excel tools `excel_*`, unified tools `office_*`; models that just called `office_inspect` will naturally reach for `office_patch` next because the prefix matches

- Every tool response should include a breadcrumb dictionary with `next_tools` and `usage` hints showing exact call syntax; smaller models will copy these verbatim because it's the most likely token sequence

- Discovery should be a callable tool like `office_help(goal=...)` that returns structured recommendations with rationale and next steps, not prose documentation; called with no arguments it returns the catalogue, with unknown input it returns the supported set instead of erroring

- Use stable addressing like anchors, IDs, or structured paths instead of byte offsets or natural language descriptions that models lose between calls; if you return data the model has to describe back in natural language, your chain will misfire

- Collapse similar tools into mode parameters (`dry_run`, `best_effort`, `safe`, `strict`) rather than separate tools; discovery cost scales with tool count not mode count, and models figure out escalation chains like dry_run → safe → strict on their own

- Return standardized diagnostic envelopes with named fields like `matched_targets` and `unmatched_targets` that create branching points and recovery loops without forcing the model to re-read entire context

- Always provide read-only introspection tools so confused models can "look again" without destructive consequences; the penalty becomes one extra round-trip instead of breaking files

- The design checklist includes: pick 5-10 core verbs and name them in instructions, use consistent prefixes, embed forward breadcrumbs in responses, provide stable addresses, give mutation tools mode enums, cache recovery loop calls, make repeat calls safe, and reject unknown arguments strictly
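The breadcrumb idea from the list above can be sketched as a small response wrapper. The tool names come from the article; the wrapper function, the payload, and the argument names inside the usage string (`path`, `anchor`, `mode`) are hypothetical and only show the shape of such a response.

```python
def with_breadcrumbs(result: dict, next_tools: list[str],
                     usage: dict[str, str]) -> dict:
    """Wrap a tool result so the response itself names the likely next call."""
    stray = [tool for tool in usage if tool not in next_tools]
    if stray:
        raise ValueError(f"usage hints for tools not in next_tools: {stray}")
    return {
        "result": result,
        "breadcrumbs": {"next_tools": list(next_tools), "usage": dict(usage)},
    }

# Hypothetical response from an inspect-style tool.
response = with_breadcrumbs(
    result={"anchors": ["sec-1", "sec-2"]},
    next_tools=["office_patch", "office_audit"],
    usage={
        # Illustrative call syntax; the real server's parameters may differ.
        "office_patch": 'office_patch(path="report.docx", anchor="sec-2", mode="dry_run")',
    },
)
print(response["breadcrumbs"]["next_tools"])
```

Putting the exact call string in the response is the point: a model that copies the most likely continuation ends up making the call the server wanted.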

Decoder

- MCP (Model Context Protocol): A protocol for exposing tools and functions that AI models can call to interact with external systems and data sources

- Activation sets: The subset of available tools that are surfaced to the model at any given time, keeping the visible tool list small while maintaining access to a larger set

- Breadcrumbs: Structured hints embedded in tool responses that guide the model toward the next appropriate tool call in a workflow chain

Original article

Lessons on Building MCP Servers

I've been building MCP servers for a while now–I wrote about the general approach last year, started out by creating umcp, and I've recently opened up an Office server that's been battered by enough models against enough real documents that the patterns have settled.

I'm still not a fan of MCP, but what follows is what I've learned about making tool chains actually work, condensed from swearing at logs rather than reading papers.

Disclaimer: This is a condensed version of `CHAINING.md`, which was itself stapled together from a bunch of notes in my Obsidian vault. The full version has more code examples and a techniques inventory table that Opus just _had_ to add, and I've since beaten that out of it and restored most of the original text (minus typos).

The short version: the MCP servers I design do most of the work, while the model walks breadcrumbs.

Models don't plan

They look at the conversation, scan the tool list, and grab whatever looks most probable. That's it. There is no hidden planner. If you want chains that finish somewhere sensible, the server has to make the next call blindingly obvious at every step.

After a year or so, I have pared down my approach into these three things, roughly in order of how much pain they save you:

- A small named core verb set covering most intents

- Output that suggests the next call

- An addressing scheme that survives between calls–anchors, IDs, paths, anything but line numbers.

Core verbs beat surface area

The Office server exposes over 100 tools. Its get_instructions() funnels models toward eight:

…start with office_help, then prefer office_read, office_inspect, office_patch, office_table, office_template, office_audit, and word_insert_at_anchor. Treat specialised tools as fallback, diagnostic, legacy-compatibility, or expert tools when the core flow is insufficient.

That single sentence does an outsized amount of work–it tells the model there is a recommended path, that the path is verb-shaped (help -> read -> inspect -> patch -> audit), and that everything else is opt-in.

Without it, models cheerfully reach for word_parse_sow_template when office_read would do, and you end up with five-call detours for one-call jobs.

So I quickly realized that I needed to be ruthless about which tools to surface and when. The specialised ones still ship–hidden under a "for experts" framing, and a handful of legacy ones filtered out of tools/list entirely.

I also make liberal use of activation sets–the surface the model sees is small; the surface it can reach is large.
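As a rough illustration of "small visible surface, large reachable surface", here is a minimal sketch of how a tools/list handler could filter by activation set. The tool names come from the post, but the set names (`core`, `word_expert`), the registry layout, and both functions are assumptions made up for this example, not the Office server's actual code.

```python
# Hypothetical registry: tool name -> activation set it belongs to.
# Set names are invented for illustration.
REGISTRY = {
    "office_help": "core",
    "office_read": "core",
    "office_inspect": "core",
    "office_patch": "core",
    "word_parse_sow_template": "word_expert",
    "word_patch_with_track_changes": "word_expert",
}


def list_tools(active_sets=frozenset()):
    """Return only the tools the model should see right now."""
    visible = {"core"} | set(active_sets)
    return sorted(name for name, tool_set in REGISTRY.items() if tool_set in visible)


def is_callable(name):
    """Hidden tools remain reachable: visibility and reachability are separate."""
    return name in REGISTRY


default_view = list_tools()
expert_view = list_tools({"word_expert"})
```

By default the model sees four tools; activating `word_expert` surfaces the specialised ones without them ever having competed for attention in the default list.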

Naming is the chain

Again, models chain whatever is most likely (or rhymes), and the most effective tactic, for me, has been taking advantage of that.

All Word tools are word_*, all Excel excel_*, all unified office_*. A model that just called office_inspect will reach for office_patch next, not word_patch_with_track_changes, because the prefix matches.

This particular server also makes liberal use of annotations and a little intent/inferrer hack that reads those prefixes to assign readOnlyHint/destructiveHint automatically, so naming discipline turns into safety metadata for free.
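The post doesn't show the inferrer itself, so the following is only a sketch of the idea: split a `<surface>_<verb>` tool name and derive the two annotation hints from the verb. The verb table and the "anything not known to be read-only is treated as potentially destructive" default are assumptions for illustration.

```python
# Hypothetical verb table; the real server's rules are not shown in the post.
READ_ONLY_VERBS = {"help", "read", "inspect", "audit"}


def infer_annotations(tool_name):
    """Derive annotation hints from a `<surface>_<verb>[_...]` tool name."""
    _surface, _, rest = tool_name.partition("_")
    verb = rest.split("_", 1)[0]
    read_only = verb in READ_ONLY_VERBS
    # Conservative assumption: unknown verbs are treated as potentially destructive.
    return {"readOnlyHint": read_only, "destructiveHint": not read_only}


hints_read = infer_annotations("office_read")
hints_patch = infer_annotations("office_patch")
```

The point is that a naming convention enforced at review time can double as the source of safety metadata, so the two never drift apart.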

The prefix is the plan. The verb is the step. If you take one thing from this entire post, I'd suggest this notion…

Every response nominates the next call

This was the single change that made things behave on smaller models. The big ones will plan a chain from a tool list and a goal; the wee ones won't–they grab the first plausible tool and stop.

The fix is stupid simple: every response ends with a breadcrumb dictionary of hints to follow. At minimum next_tools: [...], plus usage: "<exact call>" whenever the current tool produced a value the next one needs.

A model that can't assemble arguments from a schema can copy the usage string verbatim. In fact, they will copy it, because it is still the most likely outcome as it fills in tokens, and thus those usage hints funnel the path the model takes.
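A minimal sketch of the breadcrumb idea, assuming a plain dictionary response. The `next_tools` and `usage` field names are from the post; the helper function, the anchor value, and the argument names in the usage string are hypothetical.

```python
from typing import Optional


def with_breadcrumbs(result: dict, next_tools: list, usage: Optional[str] = None) -> dict:
    """Return a copy of `result` with forward hints appended."""
    response = dict(result)
    response["next_tools"] = list(next_tools)
    if usage is not None:
        # An exact, copyable call for models that cannot assemble arguments from a schema.
        response["usage"] = usage
    return response


# Hypothetical inspect result: the anchor value is invented for illustration.
anchor = "heading:Introduction"
response = with_breadcrumbs(
    {"status": "ok", "anchors": [anchor]},
    next_tools=["office_patch", "office_audit"],
    usage=f'word_insert_at_anchor(anchor="{anchor}", text="...")',
)
```

Because the usage string already contains the value the previous tool produced, the model only has to copy it rather than reconstruct it.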

Discovery as a tool, not documentation

Another thing I hit upon was that signposting needed to be curated.

Borrowing a page from intent mapping, office_help(goal=...) returns a structured record–recommended chain with rationale, fallbacks, diagnostic strings to watch for, one imperative next_step sentence. Not prose. Not a README, not skills. Data the model can act on without reading comprehension.

Called with no arguments, it returns the catalogue. Called with an unknown goal, it returns the supported set rather than an error, which turns a potential workflow-stopping error into an actual useful catalogue.
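The three behaviours described above (known goal, no argument, unknown goal) can be sketched as follows. The goal name, the record fields, and the status strings are assumptions for illustration; only `office_help(goal=...)` and the tool names come from the post.

```python
# Hypothetical goal catalogue; the goal name and record contents are invented.
GOALS = {
    "edit_document": {
        "chain": ["office_read", "office_inspect", "office_patch", "office_audit"],
        "rationale": "Read and inspect first so the patch targets real anchors.",
        "fallbacks": ["word_insert_at_anchor"],
        "watch_for": ["unmatched_targets"],
        "next_step": "Call office_read on the document.",
    },
}


def office_help(goal=None):
    """Return recommendations as data; never fail on an unknown goal."""
    if goal is None:
        return {"status": "ok", "goals": sorted(GOALS)}
    record = GOALS.get(goal)
    if record is None:
        # Unknown input returns the supported set instead of an error.
        return {
            "status": "unknown_goal",
            "supported_goals": sorted(GOALS),
            "next_step": "Call office_help with one of supported_goals.",
        }
    return {"status": "ok", "goal": goal, **record}
```

Every branch returns something the model can act on, so a bad guess costs one round-trip instead of ending the chain.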

Addressing: anchors, not offsets

The biggest reason simple models can't follow chains is the model losing the thread between calls. "Insert a paragraph after the introduction" is fine in English but catastrophic if you expect it to remember a byte offset across three tool calls.

In this particular scenario, I cheated: since most Office documents have headings (or cells, or internal structured paths inside OOXML), I used either verbatim text from the document or immovable coordinates (which was particularly hard in PowerPoint, by the way).

So besides suggestions and hints, return identifiers your tools will later accept as input. If you find yourself returning data the model has to describe back to you in natural language, you've made a chain that will misfire on a Tuesday afternoon when you're not watching.
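To make the anchor idea concrete, here is a toy sketch in which a document is a list of paragraphs and heading text serves as the address. Both functions and the document model are hypothetical; the envelope field names follow the ones used in this post.

```python
def map_anchors(paragraphs):
    """Return stable addresses for headings: the heading text, not an offset."""
    return [p["text"] for p in paragraphs if p["kind"] == "heading"]


def insert_after_anchor(paragraphs, anchor, text):
    """Insert a body paragraph after the heading whose text equals `anchor`."""
    for index, paragraph in enumerate(paragraphs):
        if paragraph["kind"] == "heading" and paragraph["text"] == anchor:
            paragraphs.insert(index + 1, {"kind": "body", "text": text})
            return {"status": "ok", "matched_targets": [anchor], "unmatched_targets": []}
    return {
        "status": "no_match",
        "matched_targets": [],
        "unmatched_targets": [anchor],
        "next_tools": ["map_anchors"],
    }


doc = [
    {"kind": "heading", "text": "Introduction"},
    {"kind": "body", "text": "Hello."},
    {"kind": "heading", "text": "Scope"},
]
anchors = map_anchors(doc)
first = insert_after_anchor(doc, "Introduction", "New intro paragraph.")
# "Scope" has shifted from index 2 to index 3, but its anchor is still valid.
second = insert_after_anchor(doc, "Scope", "Scope paragraph.")
```

An offset returned before the first insert would have been wrong by the second call; the anchor is not.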

Modes turn one tool into four

I started out with individual editing tools per format, which were very easy to write automated tests for but incredibly wasteful of context. So at one point I decided to make things much simpler for initial discovery and, since I needed to make all outputs auditable, I tagged the available sub-operations risk-wise.

office_patch is the same code path whether you ask for dry_run, best_effort, safe, or strict. One tool, four modes, one entry in tools/list.

Discovery cost scales with tool count, not mode count. And dry_run -> safe -> strict is an escalation chain the model figures out on its own without being told.

If you have N tools that differ only in how cautious they are, collapse them. You're wasting everyone's context budget.
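A sketch of one tool with a mode enum, using string replacement as a stand-in for document patching. The four mode names are from the post, but the post doesn't define what each one does, so the semantics below (and the function itself) are assumptions: `dry_run` never writes, `best_effort` applies whatever matches, `safe` refuses if any target is missing, and `strict` additionally refuses ambiguous targets.

```python
from enum import Enum


class Mode(str, Enum):
    DRY_RUN = "dry_run"
    BEST_EFFORT = "best_effort"
    SAFE = "safe"
    STRICT = "strict"


def patch_text(text, replacements, mode="dry_run"):
    """One code path, four caution levels. Returns (new_text, envelope)."""
    mode = Mode(mode)  # raises ValueError for an unknown mode
    matched = [old for old in replacements if old in text]
    unmatched = [old for old in replacements if old not in text]
    ambiguous = [old for old in matched if text.count(old) > 1]
    envelope = {
        "mode": mode.value,
        "matched_targets": matched,
        "unmatched_targets": unmatched,
    }
    if mode is Mode.DRY_RUN:
        return text, {**envelope, "status": "preview"}
    blocked = (mode in (Mode.SAFE, Mode.STRICT) and bool(unmatched)) or (
        mode is Mode.STRICT and bool(ambiguous)
    )
    if blocked:
        return text, {**envelope, "status": "rejected"}
    for old in matched:
        text = text.replace(old, replacements[old])
    return text, {**envelope, "status": "ok"}
```

Adding a fifth caution level here would mean one more enum member, not one more entry in tools/list.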

Diagnostics as the back-edge

Linear chains are easy. Real chains have loops, and loops only happen when the server invites the model back in. Every mutating tool returns a standard envelope with status, matched_targets, unmatched_targets, and next_tools.

The model then branches on a small subset of options "locally" without needing to go over the entire context, and if you name the diagnostic fields with exact strings the model will see again in your instructions, it will just reinforce them.

In this particular case, again, I cheated. I figured out that the models were starting to call tools at random because they couldn't introspect the document well enough and ended up breaking files, so I always gave them at least one read-only tool, so the penalty for "I'm confused, let me look again" is one extra round-trip, not a destructive cock-up.
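The back-edge can be sketched as an envelope builder that nominates a read-only recovery tool whenever something failed to match. The field names are from the post; the status strings, the helper, and the choice of `office_inspect` as the recovery tool are assumptions for illustration.

```python
def mutation_envelope(requested, applied):
    """Build the standard envelope for a mutating tool.

    `requested` is the list of targets the model asked to change;
    `applied` is the collection of targets the server actually changed.
    """
    matched = [target for target in requested if target in applied]
    unmatched = [target for target in requested if target not in applied]
    if unmatched:
        # Back-edge: send the model to a read-only tool before it retries.
        status = "partial" if matched else "no_match"
        next_tools = ["office_inspect", "office_patch"]
    else:
        status = "ok"
        next_tools = ["office_audit"]
    return {
        "status": status,
        "matched_targets": matched,
        "unmatched_targets": unmatched,
        "next_tools": next_tools,
    }


clean = mutation_envelope(["Introduction", "Scope"], {"Introduction", "Scope"})
partial = mutation_envelope(["Introduction", "Appendix"], {"Introduction"})
```

The model only needs to branch on `status` and `unmatched_targets`, which is a much smaller decision than re-reading the whole conversation.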

My MCP Design Checklist

- Pick five to ten core verbs and name them in get_instructions() or your local equivalent

- Use consistent prefixes by surface

- Provide a discovery tool that returns recommendations as data, not prose

- Make the discovery tool browseable–no-arg returns the catalogue, unknown input returns the supported set

- Embed forward breadcrumbs in every tool response

- Provide a map/anchors tool so addresses survive between calls

- Give every mutating tool a mode enum including dry_run

- Return named diagnostic fields and cite the recovery tools

- Standardise the mutation envelope. If one tool changes something in a specific way, make sure the others are consistent (arguments, semantics, etc.)

- Reject unknown arguments strictly (this is much easier in some runtimes than others)

- Provide an audit tool so the model has somewhere to land

- Cache anything the recovery loop calls more than once, because, well, it will get called dozens of times even if you carefully curate paths through your tooling with hints.

- Make repeat calls safe–models retry, and they should be allowed to (idempotence is hard, and often impossible).

Do the boring work in the schema and the descriptions. The model will happily do the clever bit if you stop making it guess.
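Two of the checklist items, strict argument rejection and caching the recovery loop, are easy to sketch with the standard library. Everything here is hypothetical (function names, argument names, the fake inspection result); it only shows the shape, not how the Office server does it.

```python
from functools import lru_cache

# Hypothetical argument whitelist for one tool.
ALLOWED_ARGUMENTS = {"path", "mode"}


def validate_arguments(arguments, allowed):
    """Return an error envelope for unknown arguments, or None if all are known."""
    unknown = sorted(set(arguments) - set(allowed))
    if unknown:
        return {
            "status": "invalid_arguments",
            "unknown_arguments": unknown,
            "allowed_arguments": sorted(allowed),
        }
    return None


CALLS = {"count": 0}  # counts real (uncached) inspections, for demonstration only


@lru_cache(maxsize=128)
def inspect_document(path):
    """Stand-in for an expensive read-only inspection; the result is invented."""
    CALLS["count"] += 1
    return ("heading:Introduction", "heading:Scope")


error = validate_arguments({"path": "report.docx", "mod": "safe"}, ALLOWED_ARGUMENTS)
inspect_document("report.docx")
inspect_document("report.docx")  # served from the cache
```

A cache like this has to be cleared after any mutation (for example with `inspect_document.cache_clear()`), otherwise the recovery loop will be looking at a stale document.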

A new framework uses diffusion models to help language models reason better by allowing them to revise their thinking process holistically instead of generating responses token-by-token.

Deep dive

- LaDiR addresses a fundamental limitation of autoregressive LLMs: they generate chain-of-thought reasoning token-by-token without ability to holistically revise earlier steps

- The framework uses a Variational Autoencoder (VAE) to create a structured latent reasoning space that encodes text reasoning steps into compact "blocks of thought tokens"

- These latent representations preserve semantic information and interpretability while being more expressive than discrete tokens

- A latent diffusion model learns to denoise blocks of latent thought tokens using blockwise bidirectional attention masks

- This architecture enables parallel generation of multiple diverse reasoning trajectories instead of sequential generation

- The iterative refinement process allows for adaptive test-time compute allocation

- Models can plan and revise the reasoning process holistically rather than committing to each token immediately

- Evaluated on mathematical reasoning and planning benchmarks

- Results show consistent improvements in accuracy, diversity, and interpretability compared to autoregressive, diffusion-based, and latent reasoning baselines

- Represents a paradigm shift from next-token prediction to iterative latent reasoning refinement

Decoder

- Chain-of-thought (CoT): A technique where LLMs show their reasoning process step-by-step in text form

- Autoregressive decoding: Generating text one token at a time, where each token depends on previous tokens

- Latent representation: A compressed, continuous numerical encoding of information in a hidden space

- Variational Autoencoder (VAE): A neural network that learns to encode data into a compact latent space and decode it back

- Diffusion model: A generative model that learns to iteratively denoise random noise into structured outputs

- Bidirectional attention: Attention mechanism that can look at both past and future context, unlike autoregressive models

Original article

LaDiR: Latent Diffusion Enhances LLMs for Text Reasoning

Large Language Models (LLMs) demonstrate their reasoning ability through chain-of-thought (CoT) generation. However, LLM's autoregressive decoding may limit the ability to revisit and refine earlier tokens in a holistic manner, which can also lead to inefficient exploration for diverse solutions. In this paper, we propose LaDiR (Latent Diffusion Reasoner), a novel reasoning framework that unifies the expressiveness of continuous latent representation with the iterative refinement capabilities of latent diffusion models for an existing LLM. We first construct a structured latent reasoning space using a Variational Autoencoder (VAE) that encodes text reasoning steps into blocks of thought tokens, preserving semantic information and interpretability while offering compact but expressive representations. Subsequently, we utilize a latent diffusion model that learns to denoise a block of latent thought tokens with a blockwise bidirectional attention mask, enabling longer horizon and iterative refinement with adaptive test-time compute. This design allows efficient parallel generation of diverse reasoning trajectories, allowing the model to plan and revise the reasoning process holistically. We conduct evaluations on a suite of mathematical reasoning and planning benchmarks. Empirical results show that LaDiR consistently improves accuracy, diversity, and interpretability over existing autoregressive, diffusion-based, and latent reasoning methods, revealing a new paradigm for text reasoning with latent diffusion.
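The abstract doesn't spell out the mask, so the following is only one plausible reading of "blockwise bidirectional attention": tokens attend freely in both directions inside their own block and causally to all earlier blocks. The function name and the block-causal assumption are illustrative, not taken from the paper.

```python
def blockwise_mask(num_blocks, block_size):
    """Return an n x n boolean matrix; mask[i][j] is True if token i may attend to token j.

    Assumption: attention is bidirectional within a block and causal across blocks.
    """
    n = num_blocks * block_size
    return [
        [(j // block_size) <= (i // block_size) for j in range(n)]
        for i in range(n)
    ]


mask = blockwise_mask(num_blocks=2, block_size=2)
```

Under this reading, token 0 can see token 1 (same block, later position) but not token 2 (a later block), which is what distinguishes it from an ordinary causal mask.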

Microsoft released World-R1, a reinforcement learning framework that improves 3D spatial consistency in AI-generated videos without requiring changes to underlying video generation models.

Decoder

- 3D consistency: The property of maintaining accurate spatial relationships and object geometry as viewpoint changes in generated video, preventing warping or impossible perspectives

- Vision-language models: AI systems that understand both visual content and text descriptions, used here to evaluate whether generated videos match their prompts

- Reinforcement learning framework: A training approach where the model learns by receiving rewards or penalties based on how well its outputs meet certain criteria

Original article

World-R1 is a reinforcement learning framework that improves 3D consistency in video generation by leveraging feedback from 3D and vision-language models without modifying the base architecture.

Researchers developed DataPRM, a process reward model that makes AI data analysis agents more reliable by detecting silent errors that produce incorrect results without triggering exceptions.

Deep dive

- General-domain process reward models trained on static tasks like math proofs fundamentally fail when applied to data analysis agents, struggling with the dynamic, exploratory nature of the domain

- Silent errors represent a critical failure mode where code executes without exceptions but produces logically incorrect results—something traditional PRMs cannot detect without environment interaction

- DataPRM functions as an active verifier that probes intermediate execution states by interacting with the environment, rather than passively evaluating reasoning traces