From Vibe Coder to Product Builder (6 minute read)

Product managers can now build real, shippable products with coding agents like Cursor and Claude Code by learning engineering basics, moving beyond prototype-only tools to become better collaborators and more critical evaluators of AI output.

Deep dive

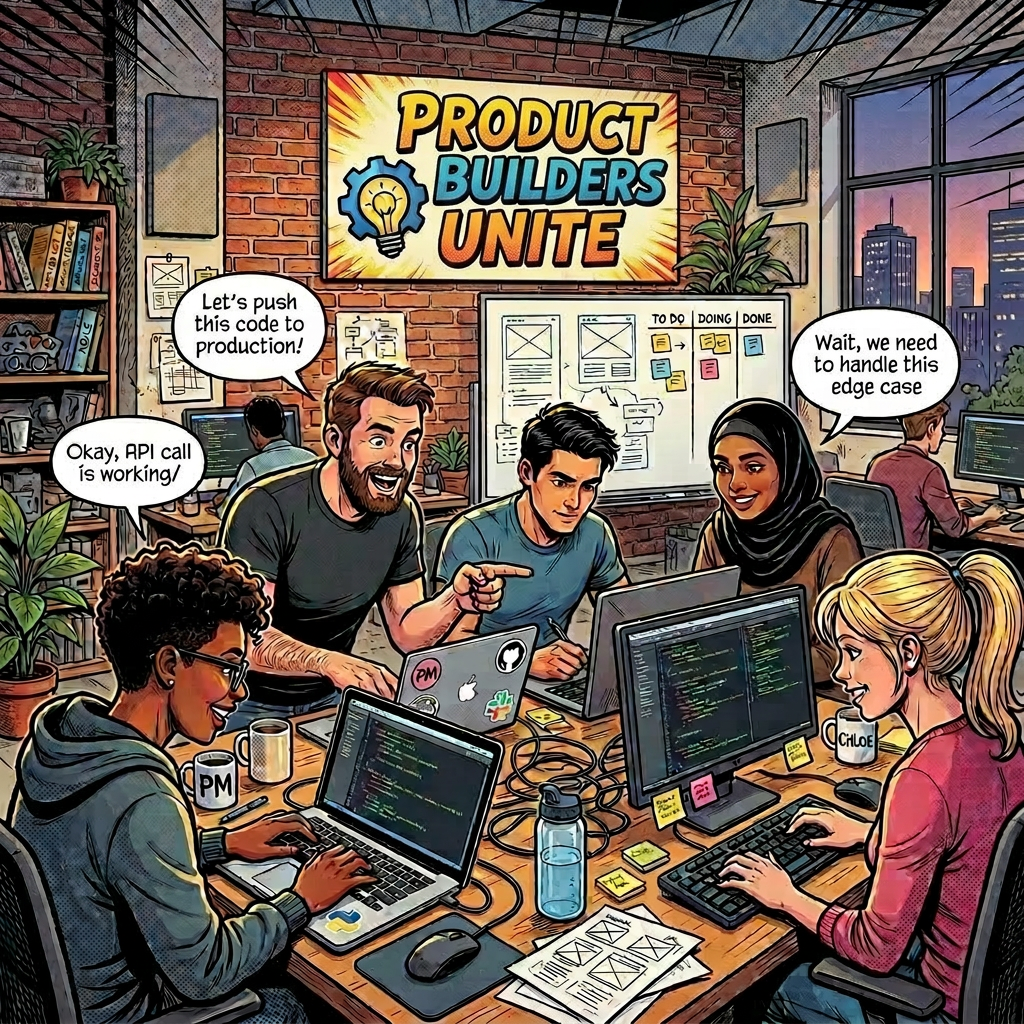

- The "vibe coding spectrum" ranges from no-code tools like Lovable and Bolt (good for visualizing ideas) to using coding agents like Cursor and Claude Code (for building real products)

- Key insight: understanding engineering basics doesn't mean becoming an engineer, but rather becoming a better collaborator who can interrogate AI output instead of blindly trusting it

- Planning still matters: despite AI's ability to generate prototypes from single prompts, starting with a structured PRD and having the AI ask clarifying questions about tech/UX tradeoffs produces better results

- Understanding the tech stack (client, server, database relationships) changes how you evaluate architectural decisions proposed by coding agents rather than just accepting them

- Error-driven development (EDD) reframes errors as instructions rather than failures—reading server and browser logs helps direct the agent toward solutions

- Debugging workflow: ask the agent to diagnose before fixing, understand the proposed change, and optionally run a second agent as a sense-check for complex fixes

- Incremental iteration applies to building with AI just as it does to product releases—make one change at a time, test, understand, then move forward

- The trap: easier building creates risk of jumping to solutions before validating the problem, its commercial impact, and whether it's worth solving at all

- Foundational PM discipline remains critical: who has this problem badly enough to care about a solution, and what outcome are we creating

- The real value isn't writing code from scratch but being able to read it, understand what the agent did, and spot when something looks off

Decoder

- PRD: Product Requirements Document, a structured plan outlining what to build and why before writing code

- Error-driven development (EDD): Treating error messages as instructions that guide the next action rather than as failures to avoid

- Tech stack: The combination of programming languages, frameworks, and systems (client, server, database) that make up an application's architecture

- Node.js: A runtime that executes JavaScript outside the browser, so the same language can be used for both front-end and back-end development

- Functions: Reusable blocks of code that take inputs and return outputs

- Objects: Data structures that organize related information and behavior into a single unit

- Vibe coding: Colloquial term for using AI-assisted coding tools to build quickly based on prompts and intuition

Original article

From Vibe Coder to Product Builder

The Vibe Coding spectrum

The lines between product management and software engineering are becoming increasingly blurred. As product managers, we can now show rather than tell; build rather than write. There's a spectrum here, which is nicely captured by product management consultant and trainer Dan Olsen:

A lot of product managers stop at Bolt or Lovable – and that's fine for visualising an idea. But I believe there's a meaningful difference between visualising a product and actually building one. My take is that there are different degrees of product building, and if you want to move from prototyping ideas to shipping real products, you need to start using coding agents and get comfortable with some engineering basics. Not to become an engineer, but to get the most out of the tools.

Why bother going further?

For the past few months I've been going beyond Lovable and Bolt myself. I won't pretend it's been smooth – this is how I felt at first, and still feel at times:

I have no ambition to become an engineer, and no illusion that my code is immediately production-ready. But moving beyond prototyping matters for a few concrete reasons. Using coding agents gives you more control over both the front and back-end of what you're building. It means you can produce something real that engineers can actually build on – especially when they share relevant code with you – and have far more grounded conversations with your engineering team as a result. Most importantly, it makes you better at reviewing and challenging AI output. You can't critique what you don't understand.

From visualising to building

From the moment I started building with Cursor and Claude Code, I had to get comfortable with breaking things. Database migrations failing, bugs that made no immediate sense, outputs that weren't what I'd asked for. It has felt equal parts daunting and empowering; I'm out of my comfort zone, but I'm also able to work through problems as they emerge. That's a new feeling for a PM without an engineering background, and an instructive one.

What I had to learn

Getting to grips with coding agents meant familiarising myself with some engineering basics – commands, functions, debugging – while also leaning on product fundamentals like critical thinking and planning. Here are my main lessons learned so far.

The value of planning

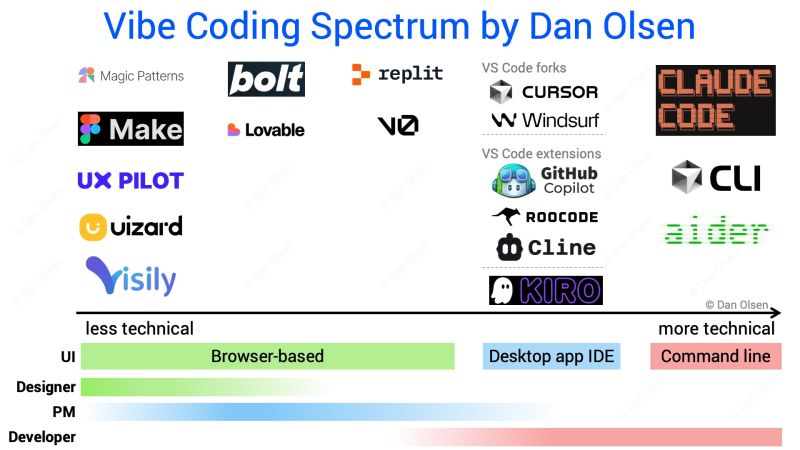

I love a good plan, and despite being able to generate a first prototype from a single prompt, I still believe in the importance of thinking upfront about the why and what of your product idea – including key UX and tech choices. I typically start with a few pointers and prompt the AI to turn them into a PRD.md file.

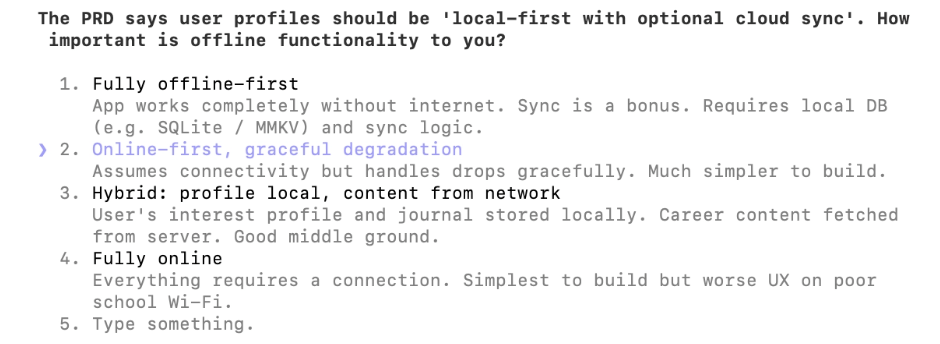

I'll then prompt Claude Code, using its AskUserQuestion tool, to ask me questions about anything related to tech, UX, tradeoffs and data concerns before it writes a single line of code. This planning step directly shapes the quality of what the agent builds. A well-structured PRD means fewer wrong turns, less backtracking, and a clear reference point to check that the code actually reflects what you had in mind.

Thinking about the tech stack

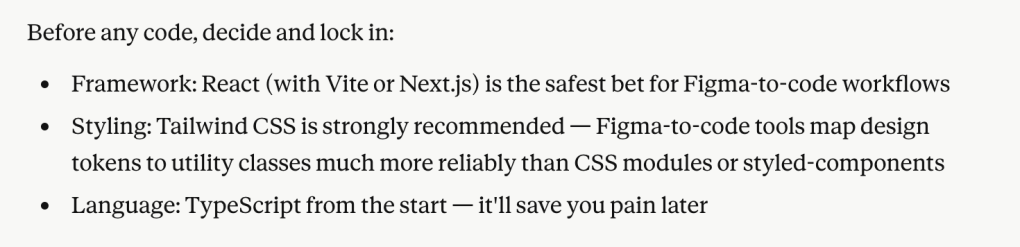

Building things forces you to make real architectural decisions: what programming language to use, how the client, server and database relate to each other, and what tradeoffs come with different choices. Node.js has felt like the right entry point for me, given its flexibility across front and back-end. More importantly, having a working mental model of the stack changes how you interact with a coding agent. When the agent proposes a structural change, you can evaluate it rather than just accept it. Without that mental model, you're trusting output you can't interrogate. This is fine for a prototype, but not for something you intend to ship or hand to engineers to build on.
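To make the client, server and database relationship a little more concrete, here is a minimal sketch in plain JavaScript. It is not taken from any real project: the names `database`, `handleRequest` and `renderToolList`, the `/tools` path and the sample data are all assumptions made for illustration, and an in-memory `Map` stands in for a real database.

```javascript
// Illustrative three-layer sketch. Every name and value here is hypothetical.

// "Database": stores the data. A Map stands in for a real database.
const database = new Map([
  [1, { id: 1, name: "Roadmap planner" }],
  [2, { id: 2, name: "Interview notes" }],
]);

// "Server": receives a request, reads from the database, returns a response.
function handleRequest(request) {
  if (request.method === "GET" && request.path === "/tools") {
    return { status: 200, body: Array.from(database.values()) };
  }
  return { status: 404, body: { error: "Not found" } };
}

// "Client": asks the server for data and decides how to present it.
// It never touches the database directly.
function renderToolList() {
  const response = handleRequest({ method: "GET", path: "/tools" });
  if (response.status !== 200) {
    return "Something went wrong";
  }
  return response.body.map((tool) => `- ${tool.name}`).join("\n");
}

console.log(renderToolList());
```

In a real application the client and server would talk over HTTP rather than through a direct function call, but the division of responsibilities is the same. If an agent proposed having the client read from the database directly, this is the kind of boundary you would want to ask it about.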

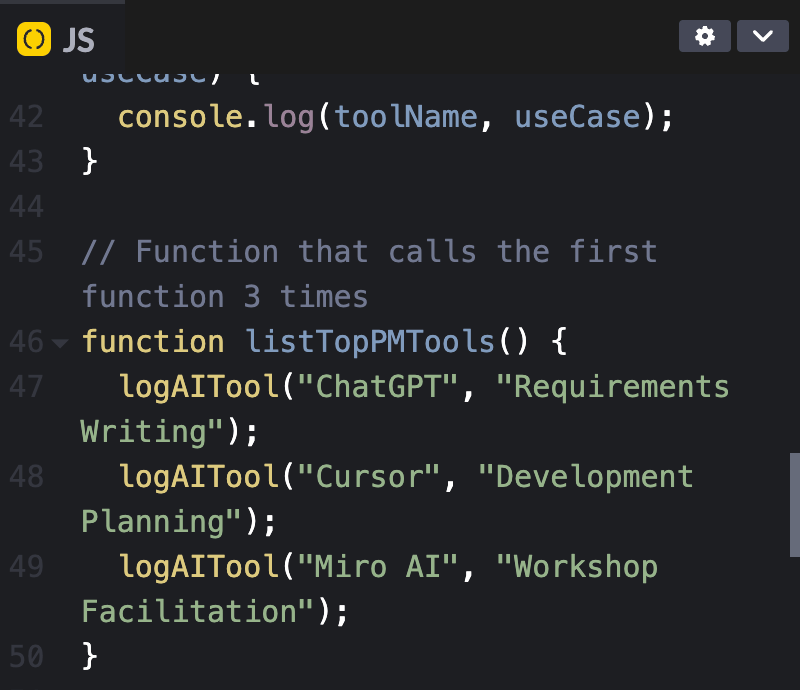

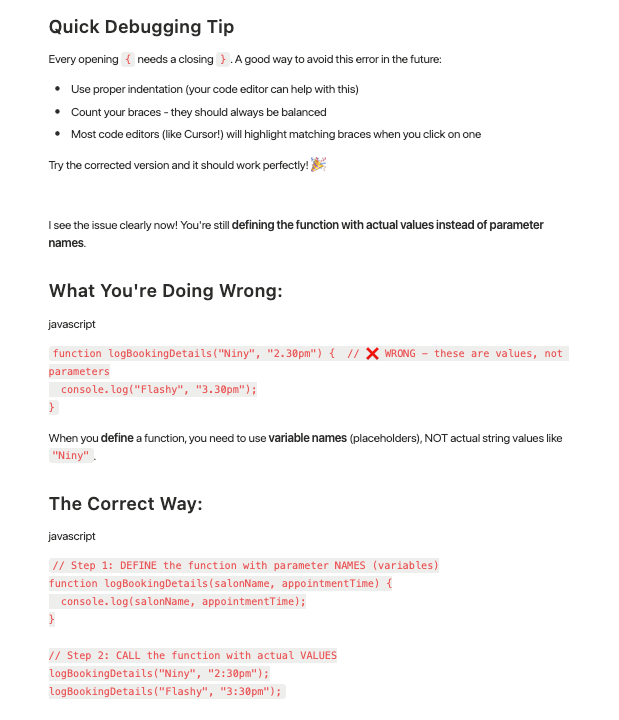

Functions and objects

Two concepts that confused me early on. A function is a reusable block of code that takes an input and returns an output. An object organises related data and behaviour into a single unit. When I built a personal landing page in Cursor, I wrote a function to return a list of my recommended product management tools — straightforward in retrospect, but understanding why it was structured that way helped me prompt more precisely and catch it when the agent implemented something differently to what I'd intended. The practical value isn't being able to write these from scratch; it's being able to read them, understand what the agent has done, and spot when something looks off.
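As a rough illustration of the two concepts, the sketch below shows an object that groups data with one piece of behaviour, and a function that takes an input and returns an output. The tool names, the `toolkit` object and the `getRecommendedTools` function are placeholders invented for this example, not the code from the landing page described above.

```javascript
// An object groups related data and behaviour into a single unit.
// All names and values are placeholders for illustration.
const toolkit = {
  owner: "Example PM",
  tools: [
    { name: "Tool A", category: "research" },
    { name: "Tool B", category: "roadmapping" },
    { name: "Tool C", category: "research" },
  ],
  // Behaviour attached to the object: count the tools it holds.
  count() {
    return this.tools.length;
  },
};

// A function takes inputs (a list of tools and a category) and returns
// an output (the names of the tools in that category).
function getRecommendedTools(allTools, category) {
  return allTools
    .filter((tool) => tool.category === category)
    .map((tool) => tool.name);
}

console.log(getRecommendedTools(toolkit.tools, "research")); // [ 'Tool A', 'Tool C' ]
console.log(toolkit.count()); // 3
```

Being able to read a block like this is enough to notice when an agent has, say, hard-coded the list inside the function instead of passing it in.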

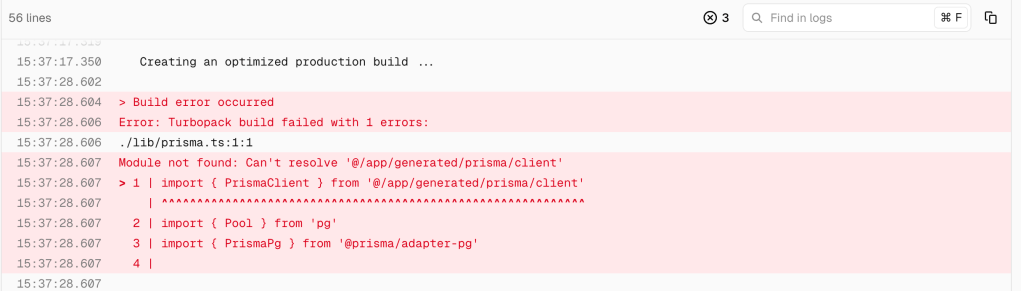

Error-driven development

In my hunt for perfection, every error the coding agent threw used to stop me in my tracks. Until Shawn, who has very patiently helped me learn, introduced me to error-driven development (EDD). The insight is simple but powerful: errors aren't failures, they're instructions. They're designed to prompt a next action. I've learned to look at server and browser logs for clues about what's broken and why, which has made me a much better collaborator with the agent — I can point it in the right direction rather than just asking it to "fix the error."
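One way to picture "errors as instructions" is to pull out the parts of an error that say what went wrong and where. The sketch below is an assumption-laden example rather than a prescribed EDD technique: `greetUser` is a deliberately buggy hypothetical function, and `summariseError` is a made-up helper that collects the error type, message and first stack line, which is roughly what you would read in a server log before briefing the agent.

```javascript
// Hypothetical function with a bug: it assumes every request carries a user.
function greetUser(request) {
  return `Hello, ${request.user.name}`;
}

// Collect the facts worth passing on to the agent: what kind of error it
// is, what it says, and where it was thrown (first stack line, if any).
function summariseError(error) {
  const stackLines = (error.stack || "").split("\n");
  return {
    type: error.name,
    message: error.message,
    location: stackLines.length > 1 ? stackLines[1].trim() : "unknown",
  };
}

try {
  greetUser({}); // the request has no user, so reading user.name throws
} catch (error) {
  console.log(summariseError(error));
}
```

Here the error type and message point at a missing `user` on the request, so the next prompt can be specific ("handle requests without a user in `greetUser`") rather than a generic "fix the error".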

Debugging

Related to EDD but distinct: when I hit a bug, I now ask the agent to diagnose before it fixes. I want to understand what the issue is and what change is being proposed before anything gets implemented. Claude Code has a built-in debug skill which is prompt-based and allows it to run a detailed, structured debugging process. I'll sometimes run a second agent on the same problem to see if it reaches the same diagnosis. I've found this to be a useful sense-check when the proposed fix seems overly complex or touches parts of the codebase I wasn't expecting.

Iterating, one thing at a time

This is where product instincts transfer most directly. Just as you wouldn't ship a full product rebuild without testing incrementally, you shouldn't try to build everything in a single prompt. I've learned to make one change at a time, test it, understand it, then move on. It keeps the codebase manageable, makes errors easier to isolate, and – honestly – makes the whole process feel less overwhelming. The PM habit of releasing small and learning early applies just as well to building with coding agents as it does to shipping product.

What this changes for PMs

PMs who understand these basics become genuinely better at writing prompts, reviewing agent output critically, and working through problems when things go wrong. Using coding agents gives you real control over what you're building — and real accountability for it. With tools like Lovable or Bolt, it's easy to fall into a pattern of prompting and hoping for the best. Coding agents demand more from you, and in return give you more back.

What doesn't change for PMs

The fact that it has become much easier to build doesn't automatically mean that you should – and that's a trap worth naming explicitly. The lower barrier to building creates a real risk of PMs jumping straight into a PRD and opening up a coding agent before they've properly validated the problem they're solving and its commercial impact. The foundational questions still apply: is this problem worth solving? Who has it, and badly enough to care about a solution? What outcome are we actually trying to create? Even with my own side projects, I make a point of working through the 'why' and the 'who' before writing a single line of a PRD. The tools have changed. The thinking hasn't, and if anything, it matters more now that building has become so fast and so easy.

Main learning point: The shift from vibe coding to product building isn't about learning to code. It's about learning enough to be a better builder. PMs who get comfortable with engineering basics don't just get more out of coding agents; they become more credible collaborators with their engineering teams, more rigorous reviewers of AI output, and more capable of shipping things that go beyond a demo. The tools are already there. The question is how far along the vibe coding spectrum you're willing to go.

Related links for further learning

- https://navonsanjuni178.medium.com/oop-concepts-with-real-world-examples-b8eb8f09b623

- https://code.claude.com/docs/en/common-workflows

- https://cursorai.notion.site/Building-with-Cursor-public-273da74ef0458051bf22e86a1a0a5c7d

- https://testrigor.com/blog/what-is-error-driven-development/

- https://www.producttalk.org/vibe-coding-best-practices/

- https://medium.com/singularity-energy/error-driven-development-8ef893b90b19

- https://www.geeksforgeeks.org/dsa/functions-programming/

- https://www.romanpichler.com/blog/product-managers-product-builders/