Devoured - May 05, 2026

GPT-5.5 doubled API prices with real cost increases of 49-92% depending on prompt length, Vercel open sourced deepsec for AI-powered security scanning, and research shows that matching AI models to the right tool harness matters as much as model selection itself. Stripe quietly assembled a full stablecoin payments stack through acquisitions and a new blockchain, while Ethereum developers finalized parameters for the Glamsterdam upgrade and Brazil banned stablecoins from regulated cross-border payment rails.

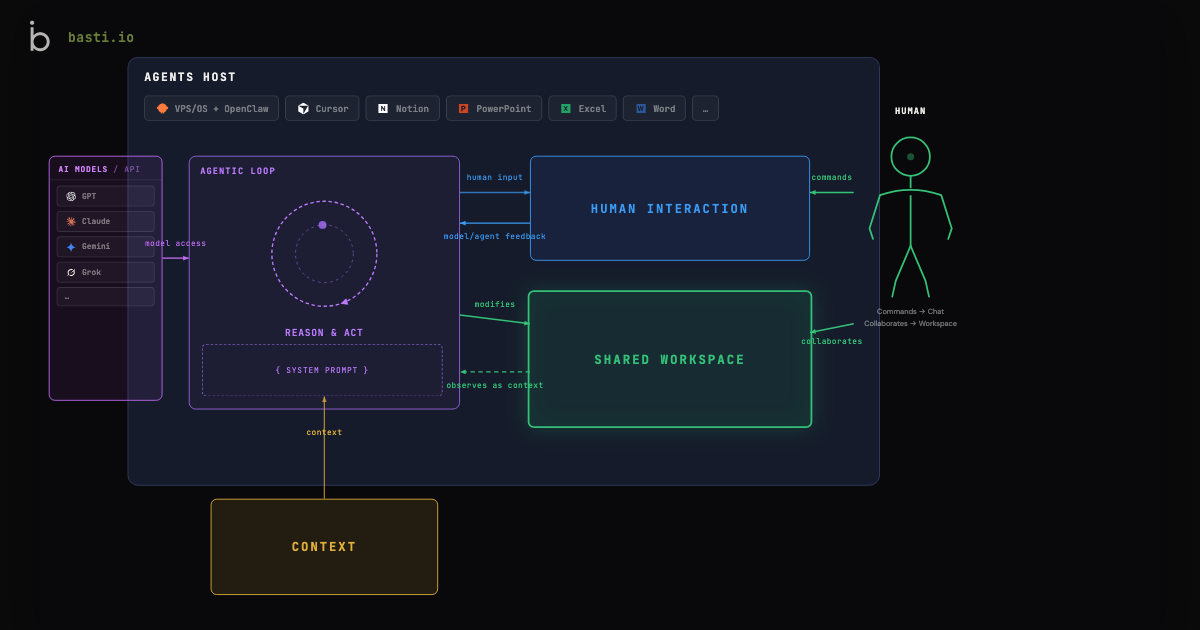

Anthropic and OpenAI are each launching separate enterprise AI joint ventures backed by major financial firms to deploy engineers directly at portfolio companies.

Decoder

- Forward-deployed engineer (FDE): Engineering model popularized by Palantir where engineers work onsite with customers to build custom solutions integrated into their specific workflows, rather than selling standardized products.

Original article

On Monday, Anthropic announced a joint venture focusing on deploying enterprise AI services. Blackstone, Hellman & Friedman, and Goldman Sachs will be founding partners in the new venture, which is backed by a group of VCs, hedge funds, and private equity firms, including Apollo Global Management, General Atlantic, GIC, Leonard Green, and Sequoia Capital.

The Wall Street Journal, which first reported news of the partnership, said the new venture was valued at $1.5 billion, which includes a $300 million commitment each from Anthropic, Blackstone, and Hellman & Friedman.

The announcement comes just as Anthropic's chief rival is preparing to make a similar move. Mere hours before the Anthropic announcement, Bloomberg reported that OpenAI was raising funds for a new venture called The Development Company, along very similar lines. OpenAI's venture would operate at a larger scale, raising $4 billion from 19 investors against a $10 billion valuation. Named investors include TPG, Brookfield Asset Management, Advent, and Bain Capital, with no apparent overlap between the investors in the OpenAI venture and those in Anthropic's competing venture.

The overall logic of the two ventures is the same: raising money from alternative asset managers to create new channels for enterprise AI deals. The ventures will presumably get preferred sales access to their investors' portfolio companies, while the investors will capture more value from any resulting contracts.

The new capital will also allow more engineering resources to be devoted to each individual customer, embracing the forward-deployed engineer (FDE) model popularized by Palantir.

As Anthropic put it in its announcement:

An engagement might begin with the company's engineering team sitting down with clinicians and IT staff to build tools that fit into the workflows that staff already use… Engagements like this will run across mid-sized companies across industries, each shaped by the people closest to the work.

The new ventures come as both AI labs fundraise at a blistering pace, while circling possible IPOs. OpenAI announced $122 billion in new funding at the end of March, against a valuation of $852 billion. TechCrunch reported last week that Anthropic is in the final stages of its own funding round, seeking $50 billion of new funding against a $900 billion valuation.

Anthropic is working on Orbit, its upcoming proactive assistant (2 minute read)

Anthropic is preparing to launch Orbit, a proactive AI briefing tool that automatically generates personalized insights from developer and productivity tools like GitHub, Figma, Slack, and Gmail.

Decoder

- Claude Cowork: Anthropic's collaboration-focused version of Claude designed for team productivity and work tools

- Proactive assistant: An AI that generates insights and briefings automatically on a schedule rather than waiting for explicit user prompts

- Connectors: Integrations that allow Claude to access and analyze data from external services like Gmail or GitHub

Original article

Anthropic appears to be lining up a new proactive assistant called Orbit, with evidence pointing to an upcoming release. Across recent web and mobile builds, more references and supporting scaffolding have surfaced, though for now the tool only manifests as a toggle in the settings panel, a typical pattern for a feature being staged before a broader rollout.

Based on the descriptions found in code, Orbit is positioned as a proactive briefing and insights system spanning both Claude and Claude Code. The setup would be opt-in and time zone-aware, producing personalized briefings with actionable insights drawn from connected work tools. The initial connector list reads like a knowledge worker's daily stack: Gmail, Slack, GitHub, Calendar, Drive, and Figma.

Your deployed Orbit apps. Pin favorites for quick access.

Anthropic's Code with Claude developer conference kicks off in San Francisco on May 6, with London and Tokyo dates following on May 19 and June 10. Whether Orbit lands as a quiet rollout or gets formally unveiled on stage remains uncertain, but the build activity is consistent with a feature in late preparation rather than early experimentation.

Claude Cowork will get its own proactive assistant called "Orbit".

Users will get personalized insights from Gmail, Slack, GitHub, Calendar, Drive, Figma, and other apps, which Claude will generate proactively.

There are also mentions of "Orbit" apps.

The broader context matters too. OpenAI shipped ChatGPT Pulse last September as its first proactive, asynchronous assistant, generating overnight briefings stitched from chats, memory, and Gmail and Calendar connectors. Similar groundwork has been spotted inside Google's Gemini and Perplexity, suggesting proactive briefing layers are becoming table stakes across major AI assistants.

Anthropic's twist seems to be the explicit inclusion of GitHub and Figma alongside the standard productivity suite, fitting its growing positioning around developer and creative workflows. Paired with the Claude Code integration, Orbit looks less like a Pulse clone and more like a workflow-aware briefing surface aimed at people who ship things, not just read email.

The New Yorker quoted Paul Graham on Sam Altman's trustworthiness without disclosing YC's $5 billion OpenAI stake.

Original article

OpenAI was seeded by an offshoot of Y Combinator called YC Research in 2016, when Altman was running YC. Y Combinator owns about 0.6% of OpenAI. At OpenAI's current valuation, that stake is worth over $5 billion.

A cost analysis of GPT-5.5 reveals the 2x sticker price increase translates to actual cost increases of 49-92% depending on prompt length, with shorter outputs only benefiting longer prompts.

Deep dive

- GPT-5.5's nominal pricing doubled from GPT-5.4: input tokens went from $2.50/M to $5.00/M and output tokens from $15/M to $30/M

- OpenRouter analyzed real-world usage by tracking users who switched from GPT-5.4 to GPT-5.5 to measure actual cost impact

- For prompts over 10K tokens, GPT-5.5 generates 19-34% fewer completion tokens, partially offsetting the price increase

- For prompts under 10K tokens, completions stay the same length or get longer (up to 52% longer in the 2K-10K range)

- Actual cost increases ranged from 49% (for 50K-128K token prompts) to 92% (for prompts under 2K tokens)

- Users with prompts of 10K-128K tokens saw cost increases of 49-62%, benefiting from shorter outputs; the 128K+ bucket still rose 85% despite 34% shorter completions

- Users with shorter prompts (under 10K tokens) saw cost increases of 69-92%, getting little to no offset from verbosity reduction

- The analysis used OpenRouter's independent token counting to provide a consistent baseline across model versions

- Sample data came from April 21-23 for GPT-5.4 and April 25-28 for GPT-5.5, excluding the launch day itself

Decoder

- GPT-5.4 / GPT-5.5: Sequential versions of OpenAI's GPT language model, with 5.5 being the latest release

- OpenRouter: API aggregator that provides access to multiple LLM providers and tracks usage metrics

- Token: Fundamental unit of text processed by LLMs, roughly 3-4 characters or 0.75 words

- Input/Prompt tokens: Tokens in the request sent to the model

- Output/Completion tokens: Tokens in the response generated by the model

- Switcher cohort: Users who primarily used GPT-5.4 before the launch and switched to GPT-5.5 after

- M: Million (as in $5.00/M = $5.00 per million tokens)

Original article

GPT-5.5 Price Increase: What It Actually Costs

We repeated our Opus cost analysis for the new GPT-5.5 model. GPT-5.5 launched with a 2x price increase over GPT-5.4: input tokens increased from $2.50/M to $5.00/M and output tokens from $15/M to $30/M. OpenAI has also noted that the model is less verbose, producing shorter completions for the same tasks. Just as we did with Opus 4.7, we wanted to know the net impact on users' costs, so we analyzed usage that shifted from GPT-5.4 to GPT-5.5.

We observed cost increases of 49-92%. The price increase is mitigated by the model generating 19-34% fewer completion tokens for longer prompts.

Methodology: Same Switcher Cohort Approach

We used the same approach as our Opus 4.7 analysis. We identified users whose top model by request count was GPT-5.4 prior to the 5.5 launch, who then switched to GPT-5.5 as their top model. This "switcher cohort" gives us a controlled before-and-after comparison of the same user base across model versions.
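The cohort selection described above can be sketched in a few lines. This is a minimal illustration, not OpenRouter's actual pipeline: the `(user, model)` log shape, the model identifier strings, and the function names `top_model_by_user` and `switcher_cohort` are all assumptions made for the example.

```python
from collections import Counter, defaultdict

def top_model_by_user(requests):
    """Map each user to the model they sent the most requests to."""
    counts = defaultdict(Counter)
    for user, model in requests:
        counts[user][model] += 1
    # most_common(1) returns [(model, count)]; ties go to the model seen first.
    return {user: c.most_common(1)[0][0] for user, c in counts.items()}

def switcher_cohort(before, after, old="gpt-5.4", new="gpt-5.5"):
    """Users whose top model was `old` before launch and `new` after it."""
    top_before = top_model_by_user(before)
    top_after = top_model_by_user(after)
    return {u for u, m in top_before.items() if m == old and top_after.get(u) == new}

# Hypothetical (user, model) request logs for the pre- and post-launch windows.
before = [("a", "gpt-5.4"), ("a", "gpt-5.4"), ("a", "other"),
          ("b", "gpt-5.4"), ("c", "other")]
after = [("a", "gpt-5.5"), ("a", "gpt-5.5"), ("b", "gpt-5.4"), ("c", "gpt-5.5")]

cohort = switcher_cohort(before, after)
print(sorted(cohort))
```

In this toy data only user `a` qualifies: `b` stayed on the old model and `c` never had it as a top model, so neither gives a before-and-after comparison.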

Since GPT-5.4 and 5.5 use the same tokenizer family, we don't need to control for tokenizer differences. The comparison is direct: same users, same workflows, different model version.

GPT-5.5 Is Less Verbose, But Only for Longer Prompts

Using OpenRouter's consistent token counts, we measured how completion lengths changed between models:

| Prompt Size | Median Completion (5.4) | Median Completion (5.5) | Change |
|---|---|---|---|
| < 2K tokens | 121 | 129 | +7% |
| 2K – 10K | 140 | 213 | +52% |
| 10K – 25K | 211 | 143 | -32% |
| 25K – 50K | 185 | 150 | -19% |
| 50K – 128K | 188 | 136 | -28% |
| 128K+ | 215 | 143 | -34% |

For prompts above 10K tokens, GPT-5.5 produces 19-34% fewer tokens. For shorter prompts, the pattern reverses: under 2K tokens completions are roughly the same length, and in the 2K-10K range they are 52% longer.
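As a quick sanity check, the Change column can be recomputed from the published medians. The sketch below assumes the change is a plain percentage difference between the two medians. Under that assumption every row matches to within one percentage point; the 128K+ row comes out at -33% rather than -34%, presumably because the published medians are themselves rounded.

```python
# Median completion tokens per prompt-size bucket, copied from the table above:
# bucket -> (GPT-5.4 median, GPT-5.5 median, published change in %).
medians = {
    "< 2K": (121, 129, 7),
    "2K-10K": (140, 213, 52),
    "10K-25K": (211, 143, -32),
    "25K-50K": (185, 150, -19),
    "50K-128K": (188, 136, -28),
    "128K+": (215, 143, -34),
}

def pct_change(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100

changes = {b: round(pct_change(old, new)) for b, (old, new, _) in medians.items()}
for bucket, change in changes.items():
    published = medians[bucket][2]
    print(f"{bucket:>9}: recomputed {change:+d}%, published {published:+d}%")
```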

Actual Cost Impact

Using billed costs from requests in the switcher cohort, we calculated the average cost per million OpenRouter tokens. This normalizes for prompt length, allowing a direct comparison of cost efficiency.

| Prompt Size | Avg $/M OR Tokens (5.4) | Avg $/M OR Tokens (5.5) | Change |
|---|---|---|---|
| < 2K tokens | $4.89 | $9.37 | +92% |
| 2K – 10K | $2.25 | $3.81 | +69% |
| 10K – 25K | $1.42 | $2.15 | +51% |
| 25K – 50K | $1.02 | $1.65 | +62% |
| 50K – 128K | $0.74 | $1.10 | +49% |
| 128K+ | $0.71 | $1.31 | +85% |

Our analysis shows that actual GPT-5.5 costs increased by 49% to 92%. For longer prompts (over 10K tokens), shorter completions partially offset the price increase. Shorter prompts (under 10K tokens) saw higher cost increases because completions did not get shorter.

Methodology

- Source: OpenRouter's request logs

- Cohort: Users whose top model by request count was GPT-5.4, who then switched to GPT-5.5 as their top model

- Sample size: Text-only, non-cancelled requests split across 5.4 and 5.5

- Windows: GPT-5.4: April 21-23, 2026 (pre-launch); GPT-5.5: April 25-28, 2026 (post-launch, launch day excluded)

- Normalization: Cost per million OpenRouter tokens, bucketed by prompt token count. OpenRouter counts tokens independently from OpenAI, providing a consistent baseline across model versions.

- Controls: Excluded media (images, files, audio, video), cancelled requests, and zero-token requests
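The normalization step above can be sketched as follows. This is a rough illustration under stated assumptions, not the authors' code: it pools cost and tokens within each bucket (total cost divided by total tokens), counts prompt plus completion tokens, and puts a prompt that sits exactly on a boundary into the higher bucket. The source does not spell out any of these choices, and the request tuples are hypothetical.

```python
from bisect import bisect_right
from collections import defaultdict

# Bucket edges follow the prompt-size tables; labels are for display only.
EDGES = [2_000, 10_000, 25_000, 50_000, 128_000]
LABELS = ["< 2K", "2K-10K", "10K-25K", "25K-50K", "50K-128K", "128K+"]

def bucket_for(prompt_tokens):
    """Return the prompt-size bucket label for a request."""
    return LABELS[bisect_right(EDGES, prompt_tokens)]

def cost_per_million(requests):
    """Dollars per million tokens in each bucket: total cost / total tokens * 1e6."""
    cost = defaultdict(float)
    tokens = defaultdict(int)
    for prompt_tokens, completion_tokens, billed_usd in requests:
        b = bucket_for(prompt_tokens)
        cost[b] += billed_usd
        tokens[b] += prompt_tokens + completion_tokens
    return {b: cost[b] / tokens[b] * 1_000_000 for b in cost}

# Hypothetical requests: (prompt tokens, completion tokens, billed cost in USD).
requests = [
    (1_000, 100, 0.01),
    (500, 400, 0.01),
    (20_000, 200, 0.05),
]
result = cost_per_million(requests)
print(result)
```

Running the same function over the GPT-5.4 window and the GPT-5.5 window, then comparing bucket by bucket, would give a table shaped like the one in the article.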

OpenAI published technical details on their redesigned WebRTC infrastructure that uses a split relay and transceiver architecture to deliver low-latency voice AI interactions globally.

Decoder

- WebRTC: Web Real-Time Communication, a framework for peer-to-peer audio/video streaming in browsers and applications

- Relay: A server component that forwards network traffic between clients when direct connections aren't possible

- Transceiver: A component that handles both transmission and reception of media streams

Original article

OpenAI detailed a redesigned WebRTC architecture using a split relay and transceiver model to maintain low-latency, real-time voice interactions at global scale.

AI systems are approaching the ability to autonomously conduct their own R&D and train successor models, with a 60% chance of full automation by 2028 according to analysis of public benchmarks and capabilities.

Deep dive

- Coding benchmarks show near-saturation: SWE-Bench scores jumped from ~2% (Claude 2, late 2023) to 93.9% (Claude Mythos Preview, 2026), indicating AI can now solve real-world GitHub issues as well as humans

- Task time horizons expanded dramatically: AI systems went from handling 30-second tasks (GPT-3.5, 2022) to 12-hour tasks (Opus 4.6, 2026), with expectations of 100-hour capability by end of 2026

- Scientific competency accelerating across AI-relevant domains: CORE-Bench (paper reproduction) went from 21.5% to 95.5% in 15 months; MLE-Bench (Kaggle competitions) rose from 16.9% to 64.4% in 16 months

- Kernel optimization increasingly automated: Multiple research efforts show AI systems can now write and optimize GPU kernels, a critical bottleneck in AI training and inference efficiency

- PostTrainBench shows AI achieves 50% of human performance at fine-tuning models, using the production instruct-tuned models from frontier labs as challenging human baselines

- Anthropic's internal LLM training optimization task shows 52× speedup with Claude Mythos (April 2026), up from 2.9× with Opus 4 (May 2025)—humans typically achieve 4× in 4-8 hours

- Proof-of-concept automated alignment research demonstrated: Anthropic showed AI agent teams can autonomously improve on human baselines for scalable oversight problems

- Meta-capabilities emerging: AI systems now manage other AI systems in production (Claude Code, OpenCode), enabling parallel multi-specialist workflows under single AI director

- Early signs of creative scientific contribution: AI assisted in solving Erdős math problems and co-authoring novel proofs, though still unclear if this represents true creativity or advanced pattern matching

- Major labs explicitly pursuing automated R&D: OpenAI targets automated AI research intern by September 2026; Anthropic publishing on automated alignment; startups like Recursive Superintelligence ($500M raised) focused entirely on automating AI research

- Alignment compounds under recursion: Even 99.9% accurate alignment degrades to 60.5% after 500 generations, creating existential risk as systems become smarter than their supervisors

- Capital-heavy machine economy forming: AI R&D automation signals broader shift toward corporations with high compute costs but minimal human labor, creating a parallel "machine economy" that increasingly trades with itself

- Critical question remains: Is AI research more like engineering (brick-by-brick optimization that AI excels at) or paradigm shifts (transformer architecture, mixture-of-experts) requiring human creativity? Most AI progress comes from methodical scaling and debugging rather than radical insights

- Timeline estimate: 30% chance of automated frontier model training by end of 2027, 60% by end of 2028—failure to achieve this would reveal fundamental limitations in current paradigm requiring human invention
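The compounding-alignment bullet above can be illustrated with one line of arithmetic. The sketch assumes each generation independently preserves the same fraction of alignment, so fidelity multiplies across generations; that model is an assumption of this example, and the function name is hypothetical. Under it, 99.9% per generation leaves roughly 60.6% after 500 generations, close to the 60.5% figure quoted.

```python
def retained_alignment(per_generation, generations):
    """Fidelity left after repeated hand-offs, assuming independent multiplicative loss."""
    return per_generation ** generations

for n in (1, 50, 500):
    print(f"{n:>3} generations: {retained_alignment(0.999, n):.1%}")
```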

Decoder

- SWE-Bench: Benchmark evaluating AI systems' ability to solve real-world GitHub software issues, testing practical coding competency on production codebases

- METR task horizons: Measurement of the longest time period (in hours) over which AI systems can reliably complete tasks a skilled human would perform, tracking autonomous work capability

- Kernel design: Writing and optimizing low-level code that maps AI operations (like matrix multiplication) to hardware, critical for training and inference efficiency

- PostTrainBench: Benchmark testing whether AI systems can fine-tune smaller open-weight models, compared against production instruct-tuned versions created by expert human teams

- Alignment: Ensuring AI systems behave safely and as intended, particularly challenging when systems become smarter than the humans or AI supervisors training them

- Recursive self-improvement: AI systems autonomously improving their own capabilities and training successor versions, potentially creating exponential capability growth

- CORE-Bench: Computational Reproducibility Agent Benchmark testing AI's ability to reproduce scientific paper results from code repositories

- MLE-Bench: Benchmark where AI systems compete in Kaggle machine learning competitions across diverse domains like NLP and computer vision

- Centaur configuration: Humans and AI working in close collaboration, combining their complementary strengths on complex problems

Original article

Import AI 455: Automating AI Research

AI systems are about to start building themselves. What does that mean?

I'm writing this post because when I look at all the publicly available information I reluctantly come to the view that there's a likely chance (60%+) that no-human-involved AI R&D – an AI system powerful enough that it could plausibly autonomously build its own successor – happens by the end of 2028. This is a big deal. I don't know how to wrap my head around it. It's a reluctant view because the implications are so large that I feel dwarfed by them, and I'm not sure society is ready for the kinds of changes implied by achieving automated AI R&D. I now believe we are living in the time that AI research will be end-to-end automated. If that happens, we will cross a Rubicon into a nearly-impossible-to-forecast future. More on this later.

The purpose of this essay is to enumerate why I think the takeoff towards fully automated AI R&D is happening. I'll discuss some of the consequences of this, but mostly I expect to spend the majority of this essay discussing the evidence for this belief, and will spend most of 2026 working through the implications.

In terms of timing, I don't expect this to happen in 2026. But I think we could see an example of a "model end-to-end trains its successor" within a year or two – certainly a proof-of-concept at the non-frontier model stage, though frontier models may be harder (they're a lot more expensive and are the product of a lot of humans working extremely hard). My reasoning for this stems primarily from public information: papers on arXiv, bioRxiv, and NBER, as well as observing the products being deployed into the world by the frontier companies. From this data I arrive at the conclusion that all the pieces are in place for automating the production of today's AI systems – the engineering components of AI development. And if scaling trends continue, we should prepare for models to get creative enough that they may be able to substitute for human researchers at having creative ideas for novel research paths, thus pushing forward the frontier themselves, as well as refining what is already known.

Upfront caveat

For much of this piece I'm going to try to assemble a mosaic view of AI progress out of things that have happened with many individual benchmarks. As anyone who studies benchmarks knows, all benchmarks have some idiosyncratic flaws. The important thing to me is the aggregate trend which emerges through looking at all of these datapoints together, and you should assume that I am aware of the drawbacks of each individual datapoint.

Now, let's go through some of the evidence together.

The coding singularity – capabilities over time

AI systems are instantiated via software and software is made out of code.

AI systems have revolutionized the production of code. This has happened due to two related trends: AI systems have gotten better at writing complicated real-world code, and AI systems have gotten much better at chaining together many linear coding tasks (e.g., writing code, then testing it) independent of human oversight.

Two things that exemplify this trend are SWE-Bench and the METR time horizons plot.

Solving real-world software engineering problems

SWE-Bench is a widely used coding test which evaluates how well AI systems can solve real-world GitHub issues. When SWE-Bench launched in late 2023, the best-scoring model was Claude 2, which had an overall success rate of ~2%. Claude Mythos Preview gets 93.9%, effectively saturating the benchmark. (All benchmarks have some amount of noise inherent to them, so there's usually a point where you score high enough that you are running into the limitations of the benchmark itself rather than your method – for instance, about 6% of the labels in the ImageNet validation set are wrong or ambiguous.)

SWE-Bench is a reliable proxy for the general issue of coding competency and the impact of AI on software engineering. The vast majority of people I meet at frontier labs and around Silicon Valley now code entirely through AI systems. Increasingly, they use AI systems to write the tests and check the code as well. In other words, AI systems have gotten good enough to automate a major component of AI R&D, speeding up all the humans that work on it.

Measuring an AI system's ability to complete tasks that take people a long time

METR makes a plot that tells us about the complexity of tasks AIs can complete, measured by how many hours a skilled human would take to do them. The key measure here is one which tells you the rough time horizon over which AI systems can be 50% reliable at a basket of tasks.

Here, progress has been extremely striking: In 2022, GPT 3.5 could do tasks that might take a person about 30 seconds. In 2023, this rose to 4 minutes with GPT-4. In 2024, this rose to 40 minutes (o1). In 2025, it reached ~6 hours (GPT 5.2 (High)). In 2026, it has already risen to ~12 hours (Opus 4.6). Ajeya Cotra, a longtime AI forecaster who works at METR, thinks it isn't unreasonable to expect AI systems to do tasks that take ~100 hours by the end of 2026 (#448).
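To get a feel for the pace these figures imply, one can back out an average doubling time. The sketch below is a rough calculation, not METR's methodology: it treats the quoted horizons as data points spaced exactly one year apart, which is an assumption (the 2026 figure was reached early in the year, so the true pace may be faster). Under that assumption the horizon doubled roughly every four to five months between 2022 and 2026.

```python
import math

# Approximate 50%-reliability time horizons quoted above, in minutes.
horizons = {2022: 0.5, 2023: 4, 2024: 40, 2025: 6 * 60, 2026: 12 * 60}

def doubling_time_months(start_year, end_year):
    """Average doubling time, assuming the data points are whole years apart."""
    growth = horizons[end_year] / horizons[start_year]
    doublings = math.log2(growth)
    return (end_year - start_year) * 12 / doublings

print(f"{doubling_time_months(2022, 2026):.1f} months per doubling")
```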

This significant rise in the length of time that AI systems can work independently correlates neatly with the explosion in agentic coding tools – this is the productization of AI systems which do work on behalf of people, acting independently for significant periods of time. It also loops back to AI R&D, where if you look closely at the work of many AI researchers, a lot of their tasks boil down to things that might take a person a few hours to do – cleaning data, reading data, launching experiments, etc. All of this kind of work now sits inside the time horizon scope of modern systems.

The more skilled AI systems get and the better they get at working independently of us, the more they can help automate chunks of AI R&D

Key ingredients in delegation are a) confidence in the skills of the person, and b) confidence in their ability to work independently of you in a way that is aligned with your intentions.

When we look at the competency of AI at coding, it seems that AI systems are getting far more skilled and also able to work independently of people for longer and longer periods before needing re-calibration. This correlates with what we see around us – engineers and researchers are now delegating larger and larger chunks of their work to AI systems, and as capabilities rise, so too does the complexity and importance of the work being delegated.

AI is getting good at core science skills essential to AI R&D

Think about modern science – a huge amount of it is about specifying a direction where you want to generate some empirical information, running experiments to generate that information, then sanity-checking the results of the experiment. Advances in coding over time, combined with the general world modeling capabilities of LLMs, have yielded tools that are already helping to speed up human scientists and partially automate aspects of R&D broadly.

Here, we can look at the rate of AI progress in a few key scientific skills which are inherent to AI research itself: Replicating research results, chaining together machine learning techniques and other approaches to solve technical problems, and optimizing AI systems themselves.

Implementing entire scientific papers and doing the experiments

One core job of AI research is reading scientific papers and reproducing their results. Here, there has been dramatic progress on a wide range of benchmarks.

One good example is CORE-Bench, the Computational Reproducibility Agent Benchmark. This benchmark challenges AI systems to "reproduce the results of a research paper given its repository. The agent must install libraries, packages, and dependencies and run the code. If the code runs successfully, the agent needs to search through all outputs to answer the task questions." CORE-Bench was introduced in September 2024 and the best scoring system at the time was a GPT-4o model in a scaffold called CORE-Agent which scored ~21.5% on the hardest set of tasks in the benchmark.

In December 2025 one of the authors of CORE-Bench declared the benchmark 'solved', with an Opus 4.5 model achieving 95.5%.

Building entire machine learning systems to solve Kaggle competitions

MLE-Bench is an OpenAI-built benchmark which examines how well AI systems can compete (offline) in "75 diverse Kaggle competitions across a variety of domains, including natural language processing, computer vision, and signal processing." At launch in October 2024, the top scoring system (an o1 model inside an agent scaffold) got 16.9%. As of February 2026, the best scoring system (Gemini 3 inside an agent harness with search) gets 64.4%.

Kernel design

One of the harder tasks in AI development is kernel optimization, where you write and refine the code that maps specific operations, like matrix multiplication, to the underlying hardware. Kernel optimization is core to AI development because it defines the efficiency of both training and inference – how much compute you can effectively utilize to develop an AI system, and once you've trained a model, how efficiently you can convert that compute into inference.

In recent years, AI for kernel design has gone from a curiosity to a competitive area of research and several benchmarks have emerged. None of these benchmarks are especially popular, so we can't easily model progress over time. On the other hand, we can look at some of the research being done to get a feel for the progress.

Some of the types of work include:

- Using DeepSeek's models to try to build better GPU kernels (#400)

- Automating the conversion of PyTorch modules to CUDA code (#401)

- Meta using LLMs to automate the generation of optimized Triton kernels for use within its infrastructure (#439)

- Using LLMs to help write kernels for non-standard hardware like Huawei's Ascend chips ("AscendCraft" #444)

- Fine-tuning open weight models for GPU kernel design ("Cuda Agent", #448)

One caveat here is that kernel design does have some properties that make it unusually amenable to AI-driven R&D, like having easily verifiable rewards.

Fine-tuning language models via PostTrainBench

A harder version of this kind of test is PostTrainBench (#449), which sees how well different frontier models can take smaller open weight models and fine-tune them to improve performance on some benchmark. The nice feature of this benchmark is that we have extremely good human baselines – the existing 'instruct-tuned' versions of these models, which were developed by talented researchers and engineers at frontier labs and deployed into the world, so they represent a very challenging human baseline to overcome.

As of March 2026, AI systems are able to post-train models to get about half as much of the uplift as ones trained by humans.

The specific eval scores are derived as follows: a "weighted average is taken across all post-trained LLMs (Qwen 3 1.7B, Qwen 3 4B, SmolLM3-3B, Gemma 3 4B) and benchmarks (AIME 2025, Arena Hard, BFCL, GPQA Main, GSM8K, HealthBench, HumanEval). For each run, we ask a CLI agent to maximize the performance of a specific base LLM on a specific benchmark."

The top-scoring systems as of April get 25%-28% (Opus 4.6, and GPT 5.4), compared to a human score of 51%. This is already quite meaningful.

Optimizing language model training

For the last year Anthropic has reported how well its systems do at an LLM training task which is described as tasking its models to "optimize a CPU-only small language model training implementation to run as fast as possible". The score is the average speedup over the unmodified starting code and progress has been striking: Claude Opus 4 achieved a 2.9× mean speedup in May 2025; this rose to 16.5× with Opus 4.5 in November 2025, 30× with Opus 4.6 in February 2026, and 52× with Claude Mythos Preview in April 2026. To calibrate on what these numbers mean, it is expected to take a human researcher 4 to 8 hours of work to achieve a 4x speedup on this task.

Conducting AI alignment research

Another Anthropic result is a proof-of-concept of Automated Alignment Research (#454); here, an Anthropic researcher primes a team of individual AI agents with a research direction, then they autonomously go and try to get a better score than a human baseline on an AI safety research problem (specifically, scalable oversight). The approach works, with the AI agents coming up with techniques that beat the Anthropic-designed baseline. However, this is done at a relatively small scale and doesn't (yet) generalize to a production model. Nonetheless, it's proof that you can apply today's AI systems to contemporary cutting-edge research problems and we already see meaningful signs of life. All of the above mentioned benchmarks once looked like this, too, and then after a few months or at most a year, AI systems got dramatically better at whatever the benchmarks were testing.

Meta-skills: management

AI systems are also learning to manage other AI systems. This is visible in broadly deployed products like Claude Code or OpenCode, where a single agent can end up supervising multiple sub-agents. This allows AI systems to work on large-scale projects that require multiple individual 'workers', each with different specialisms, that work in parallel, typically under the direction of a single manager (which, here, is an AI system).

Is AI research more like discovering general relativity or Lego?

Can AI invent new ideas that help it improve itself, or are these systems best equipped for the unglamorous, brick-by-brick work required for research? This is an important question for figuring out the extent to which AI systems can end-to-end automate AI research itself. My sense is that AI cannot yet invent radical new ideas – but the technology may not need to for it to automate its own development.

As a field, AI moves forward on the basis of doing ever larger experiments that utilize more and more inputs (e.g., data and compute). Every so often, humans come up with some paradigm-shifting idea which can make it dramatically more resource efficient to do things – a good example here is the transformer architecture and another is the idea of mixture-of-experts models. But mostly the field of AI moves forward through humans methodically going through some loop of taking a well performing system, scaling up some aspect of it (e.g., the amount of data and compute it is trained on), seeing what breaks when you scale it up, figuring out the engineering fix to allow it to scale, then scaling it again. Very little of this requires extremely out-of-left-field insights and a lot of it seems more like unglamorous 'meat and potatoes' engineering work.

Similarly, a lot of AI research is about running variations of existing experiments where you explore the outcomes of using different parameters. Though research intuitions can help pick the most fruitful parameters to vary, you can also automate this and have the AI figure out which parameters to vary (an early version of this was neural architecture search).

Thomas Edison said that "genius is 1% inspiration and 99% perspiration". Even 150 years later, this feels right. Very occasionally new insights come along which transform a field. But mostly, the field has moved forward through humans sweating a lot of pain out on the schlep of improving and debugging various systems.

As the public data above shows, AI has got extremely good at performing many of the essential schlep components of AI development. Along with this, the meta-trend of basic capabilities like coding combined with an ever-expanding time horizon means AI systems are able to chain together more and more of these tasks into complex sequences of work. This means even if AI systems are relatively uncreative, it feels safe to bet they can push themselves forward – albeit at a slower rate than if they're able to generate novel insights. But if you look at the public data, here too there are tantalizing signs that AI systems may be able to be creative in a way that lets them advance themselves in more impressive ways.

Pushing forward the frontier of science

We have some very preliminary signs that general-purpose AI systems can push forward the frontiers of human science, though this has so far only happened in a couple of domains – primarily computer science and mathematics – and often it happens less through AI systems acting alone and more through them acting in partnership with humans in a centaur configuration.

Nonetheless, it's worth observing the trends:

- Erdos Problems: A team of mathematicians worked with a Gemini model to see how well it could tackle some Erdos math problems. After directing the system to attack around 700 problems they came up with 13 solutions. Of these solutions, 1 was deemed by them to be interesting: "We tentatively believe Aletheia's solution to Erdős-1051 represents an early example of an AI system autonomously resolving a slightly non-trivial open Erdős problem of somewhat broader (mild) mathematical interest, for which there exists past literature on closely-related problems," they wrote. (#444).

- Centaur math discovery: Researchers with the University of British Columbia, University of New South Wales, Stanford University, and Google DeepMind published a new math proof which was built in close collaboration with some AI-based math tools built at Google. "The proofs of the main results were discovered with very substantial input from Google Gemini and related tools," they wrote. (#441).

If you squint, you could argue that this is a sign that AI systems are developing some of the field-advancing creative intuitions that humans have. But you could just as easily say that math and CS could be unusual domains that are oddly amenable to AI-driven invention, and might end up being exceptions that prove a larger rule. Another example here is Move 37, though I'd contend that the fact it's been ten years since the AlphaGo result and that Move 37 hasn't been replaced by some incredibly impressive more modern flash of insight is another weakly bearish signal here.

Putting it all together

Putting all of the above evidence together, I end up with the following picture:

- AI systems are capable of writing code for pretty much any program and these AI systems can be trusted to independently work on tasks that'd take a human tens of hours of concentrated labor to do.

- AI systems are increasingly good at tasks that are core to AI development, ranging from fine-tuning to kernel design.

- AI systems can manage other AI systems, effectively forming synthetic teams which can fan out and attack complex problems, with some AI systems taking on the roles of directors and critics and editors and others taking on the role of engineers.

- AI systems can sometimes out-compete humans on hard engineering and science tasks, though it's hard to know whether to attribute this to inventiveness or mastery of rote learning.

To me, this makes a very convincing case that AI can today automate vast swaths, perhaps the entirety, of AI engineering. It is not yet clear how much of AI research it can automate, given that some aspects of research may be distinct from the engineering skills. Regardless, it all feels to me like a clear sign that AI is today massively speeding up the humans that work on AI development, allowing them to scale themselves through pairing with innumerable synthetic colleagues.

Finally, the AI industry is literally saying that AI R&D is its goal

OpenAI wants to build an "automated AI research intern by September of 2026". Anthropic is publishing work on building automated alignment researchers. DeepMind appears to be the most circumspect of the big three, but still says "automation of alignment research should be done when feasible". Automating AI R&D is also the goal of numerous startups: Recursive Superintelligence just raised $500m with the goal of automating AI research, and another neolab, Mirendil, has the goal of "building systems that excel at AI R&D."

In other words, hundreds of billions of dollars of existing and new capital are being sunk into entities that have the goal of automating AI R&D. We should surely expect at least some progress in this direction as a consequence.

Why this matters

The implications of this are profound and under-discussed in popular media coverage of AI R&D. I'll list a few here. This isn't a comprehensive list, but it gestures at the enormity of the challenges AI R&D introduces.

- We have to get alignment right: Alignment techniques that work today may break under recursive self-improvement as the AI systems become much smarter than the people or systems that supervise them. This is a very well covered area, so I'll just briefly highlight some of the issues:

- Training AI systems to not lie and cheat is surprisingly subtle (e.g., despite trying very hard to build good tests for environments, it's sometimes the case that the best way for an AI to solve one is to cheat, thus teaching it that cheating is good)

- AI systems might be able to 'fake alignment' by producing outputs that make us think they behave a certain way while actually hiding their true intentions. (In general, AI systems are already aware of when they are being tested.)

- As AI systems start to contribute more of the foundational research agenda for their own training, we might end up substantially changing the overall way AI systems get trained and not have good intuitions or intellectual foundations for understanding what this means.

- There are very basic "compounding error" problems whenever you put something in a recursive loop that likely hits on all of the above and other problems: unless your alignment approach is "100% accurate" and has a theoretical basis for continuing to be accurate with smarter systems, things can go wrong quite quickly. For example, if your technique is 99.9% accurate per generation, that becomes about 95.12% accurate after 50 generations, and about 60.6% accurate after 500 generations. Uh oh!

- Everything that AI touches gets a massive productivity multiplier: In the same way AI is dramatically improving the productivity of software engineers, we should expect the same thing to happen for everything else that AI touches. This introduces a couple of issues we'll have to contend with: 1) inequality of access: assuming that demand for AI continues to outstrip compute supply, we'll have to figure out where to allocate AI to maximize social upside. By default, I am skeptical that market incentives guarantee us the best societal upside from limited AI compute. Figuring out how to allocate the acceleratory capabilities conferred by AI R&D will be a politically charged problem. 2) 'Amdahl's Law' for the economy: as AI flows into the economy, we'll discover places where things break or slow under the increased volume, and we'll need to figure out how to fix those weak links in the chain. This may be especially pronounced in areas where you have to reconcile the fast-moving digital world with the slow-moving physical world, like drug trials for new medical therapies.

- The formation of a capital-heavy, human-light economy: All of the above evidence for AI R&D also points to the increasing capabilities of AI systems to autonomously run businesses as well. This means we should expect an increasing chunk of the economy to get colonized by a new generation of companies which are either capital-heavy (because they own a lot of computers), or opex-heavy (because they spend a lot of money on AI services which they build value on top of), and relatively light on labor compared to today's corporations – because the marginal value of spending more on AI versus human labor will be constantly growing as a consequence of the sustained capability expansion of the AI systems. In practice, this will look like the emergence of a "machine economy" that grows within the larger "human economy", though we might expect that over time the machine economy will interact more and more with itself as AI-run corporations begin to trade with one another. This will do profoundly weird things to the economy and will invite all sorts of questions around inequality and redistribution. Eventually, it may be possible to see the emergence of fully autonomous corporations that are run by AI systems themselves, which would exacerbate all of the above issues, while also posing many novel governance challenges.
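The compounding-error arithmetic in the alignment point above can be checked with a short sketch. It assumes the simplest possible model, which is my assumption rather than anything stated in the essay: each generation independently preserves correctness with the same probability, so accuracy after n generations is that probability raised to the power n. Under that assumption, 99.9% per generation decays to roughly 95% after 50 generations and about 61% after 500.

```python
def compounded_accuracy(per_generation: float, generations: int) -> float:
    """Accuracy remaining after `generations` steps.

    Assumes every generation independently preserves correctness with
    probability `per_generation` (a simplifying assumption).
    """
    return per_generation ** generations


for n in (1, 50, 500):
    print(f"{n:>3} generations: {compounded_accuracy(0.999, n):.2%}")
```

The decay is exponential, which is why a technique that looks nearly perfect for one step offers little reassurance across hundreds of recursive steps.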

Staring into the black hole

Given all of this, I think there's a ~60% chance we see automated AI R&D (where a frontier model is able to autonomously train a successor version of itself) by the end of 2028. Based on the above analysis, you might ask why I don't expect this in 2027? The answer is that I think AI research contains some requirement for creativity and heterodox insights to move forward – so far, AI systems haven't yet displayed this in a transformative and major way (though some of the results on accelerating math research are suggestive of this). If you had to push me for a 2027 probability, I'd say 30%. If we don't see it by the end of 2028, then I think we will have revealed some fundamental deficiency within the current technological paradigm and it'll require human invention to move things forward.

I have written this essay in an attempt to coldly and analytically wrestle with something that for decades has seemed like a science fiction ghost story. Upon looking at the publicly available data, I've found myself persuaded that what can seem to many like a fanciful story may instead be a real trend. If this trend continues, we may be about to witness a profound change in how the world works.

Thanks to Andrew Sullivan, Andy Jones, Holden Karnofsky, Marina Favaro, Sarah Pollack, Francesco Mosconi, Chris Painter, and Avital Balwit, for feedback on this essay.

Thanks for reading!

Vercel open sourced deepsec, an AI agent-powered security scanner that uses Claude and GPT models to autonomously investigate codebases for complex vulnerabilities with a 10-20% false positive rate.

Deep dive

- Deepsec runs entirely on your own infrastructure (locally or on Vercel Sandboxes) so privileged source code never leaves your control, using your existing Claude or GPT API subscriptions

- The five-stage workflow starts with regex-based static analysis to identify security-sensitive files, then dispatches AI agents to investigate each candidate individually

- Investigation agents trace data flows through the codebase and check for security mitigations, producing findings with severity ratings

- A separate revalidation step uses a second agent run to filter false positives and reclassify severity levels, bringing the false positive rate to 10-20%

- Scans of Vercel's own codebases routinely scale to 1,000+ concurrent sandboxes running in parallel; single-machine scans can take multiple days for large repositories

- Production testing on dub.co (an open source marketing attribution platform with auth, database, and backend services) surfaced actionable security issues that impressed the founder

- Vercel used deepsec findings on their own monorepos to develop custom scanner plugins covering every authentication path in their code

- The plugin system allows custom regex matchers tuned to your specific authentication model, data layer, or team conventions

- Deepsec includes a classifier that detects model refusals after each research step, though refusals are reportedly a non-issue with current models

- The tool works with standard Claude Opus 4.7 and GPT 5.5 models without requiring special "cyber" fine-tuned versions, though it supports those too

- Best suited for applications and services rather than libraries or frameworks, which would likely need custom prompts and scanners

- The enrichment step uses git metadata and optional services to identify which developers should fix each discovered issue

- The export step formats findings as instructions that can become tickets for both human developers and coding agents to remediate
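The first stage of the workflow above, regex-based triage of security-sensitive files, can be illustrated with a small sketch. This is not deepsec's code or its plugin API: the pattern names, the regexes, and the `find_candidates` helper are all hypothetical, chosen only to show how a cheap pattern pass can narrow a codebase before more expensive agent investigation.

```python
import re

# Hypothetical matchers; a real scanner would tune these to the codebase.
SENSITIVE_PATTERNS = {
    "sql_string_building": re.compile(
        r"execute\(\s*f?[\"'].*(SELECT|INSERT|UPDATE|DELETE)", re.I
    ),
    "auth_check": re.compile(r"\b(is_admin|verify_token|check_permission)\b"),
    "shell_exec": re.compile(r"\bsubprocess\.(run|Popen|call)\b|\bos\.system\b"),
}


def find_candidates(files: dict) -> dict:
    """Map each file path to the names of the patterns it matches.

    Files with no matches are dropped, so later (expensive) stages
    only see security-sensitive candidates.
    """
    candidates = {}
    for path, source in files.items():
        hits = [name for name, rx in SENSITIVE_PATTERNS.items() if rx.search(source)]
        if hits:
            candidates[path] = hits
    return candidates


# Toy in-memory "repository" standing in for files read from disk.
repo = {
    "api/users.py": 'cursor.execute(f"SELECT * FROM users WHERE id = {user_id}")',
    "api/admin.py": "if not is_admin(request.user): raise Forbidden()",
    "utils/format.py": "def title_case(s): return s.title()",
}
candidates = find_candidates(repo)
print(candidates)
```

Regex triage is deliberately over-inclusive: it only decides which files deserve a closer look, and leaves the judgment about whether something is actually exploitable to the later investigation and revalidation steps.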

Decoder

- Opus 4.7: Anthropic's Claude model version referenced in the article

- GPT 5.5: OpenAI model version referenced in the article, with an "xhigh reasoning" capability

- Static analysis: Code examination technique that analyzes source code without executing it, typically using pattern matching

- Sandboxes: Isolated execution environments that run code safely without affecting production systems

- False positive: A security alert for an issue that doesn't actually exist or isn't exploitable

- Data flow tracing: Following how data moves through a codebase to identify where user input might reach sensitive operations without proper validation

- Monorepo: A single repository containing multiple projects or services, common at large companies like Vercel

- Cyber model: Fine-tuned AI models specifically trained to perform security research tasks that base models might refuse

Original article

Deepsec is an agent-driven security tool that scans large codebases locally or in parallel cloud sandboxes to uncover complex vulnerabilities.

Reduce friction and latency for long-running jobs with Webhooks in Gemini API (3 minute read)

The Gemini API now supports webhooks for long-running jobs, eliminating the need for polling.


Meta's Tuna-2 shows that direct pixel embeddings outperform complex vision encoders for unified image understanding and generation tasks.

Deep dive

- Tuna-2 progressively simplifies the Tuna architecture by removing visual encoding components while improving performance on multimodal benchmarks

- The original Tuna used a VAE alongside a representation encoder; Tuna-R removed the VAE to rely only on the representation encoder; Tuna-2 removes both in favor of direct pixel patch embeddings

- The model supports text-to-image generation and image editing tasks at various resolutions including 512px and 1024px classes

- Available in 7B and 2B parameter sizes depending on variant, with inference handled through a unified script

- Meta is releasing foundation checkpoints with some LLM backbone and diffusion head layers removed due to organizational policy constraints

- All other components including vision encoder, projections, and embeddings are fully preserved in the release

- Video generation training and inference code is included but the video model itself cannot be released due to policy restrictions

- The removed layers can be re-learned through short fine-tuning on user data, and Meta plans to release fully restored weights fine-tuned on external data

- Research published in collaboration with University of Hong Kong and University of Waterloo, with paper accepted to CVPR 2026

Decoder

- Pixel embeddings: Direct encoding of image patches into numerical representations without intermediate compression or feature extraction layers

- VAE (Variational Autoencoder): Neural network that compresses images into compact latent representations before processing

- Diffusion head: Component that generates images through iterative denoising process

- Unified multimodal model (UMM): Single model architecture that handles both understanding and generating multiple data types like text and images

- Representation encoder: Intermediate layer that transforms visual inputs into feature representations for downstream tasks

- Patch embedding: Breaking an image into fixed-size patches and converting each to a vector representation

Original article

TUNA-2: Pixel Embeddings Beat Vision Encoders for Unified Understanding and Generation

Overview

We simplify Tuna by progressively stripping away its visual encoding components. By removing the VAE, we first derive Tuna-R, a pixel-space unified multimodal model (UMM) that relies solely on a representation encoder. Tuna-2 further streamlines the design by bypassing the representation encoder entirely, utilizing direct patch embedding layers for raw image inputs. Tuna-2 using pixel embeddings outperforms both Tuna-R and Tuna across a diverse suite of multimodal benchmarks.
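As a rough illustration of what a "direct patch embedding layer" does, here is a minimal, dependency-free sketch of the generic technique: cut the image into fixed-size patches, flatten each one, and apply a linear projection. It is not Tuna-2's implementation. The patch size, embedding width, and random weights below are illustrative assumptions (in a trained model the projection is learned), and the README does not state the actual values.

```python
import random


def patchify(image, patch):
    """Split an H x W x C image (nested lists) into flattened patch vectors."""
    height, width = len(image), len(image[0])
    assert height % patch == 0 and width % patch == 0, "image must tile evenly"
    patches = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            flat = [
                channel
                for row in image[top:top + patch]
                for pixel in row[left:left + patch]
                for channel in pixel
            ]
            patches.append(flat)
    return patches


def embed(patches, weights):
    """Linearly project each flattened patch, giving one token per patch."""
    return [
        [sum(x * w for x, w in zip(vec, column)) for column in weights]
        for vec in patches
    ]


# Toy 8x8 RGB image, 4x4 patches, embedding width 6 (all sizes illustrative).
rng = random.Random(0)
image = [[[rng.random() for _ in range(3)] for _ in range(8)] for _ in range(8)]
patches = patchify(image, patch=4)
patch_dim = 4 * 4 * 3
weights = [[rng.gauss(0, 0.02) for _ in range(patch_dim)] for _ in range(6)]
tokens = embed(patches, weights)
print(len(tokens), len(tokens[0]))
```

The contrast with a VAE or a pretrained representation encoder is that nothing here compresses or interprets the image beforehand: raw pixel values go straight into a single projection.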

Generation Results

Installation

git clone https://github.com/facebookresearch/tuna-2.git

cd tuna-2

bash scripts/setup_uv.sh # creates .venv with all dependencies

source .venv/bin/activate

Manual setup (if you prefer to drive uv yourself)

curl -LsSf https://astral.sh/uv/install.sh | sh

uv sync

uv pip install torch torchvision --index-url https://download.pytorch.org/whl/cu121

uv pip install -e .

source .venv/bin/activate

Inference

All inference is done through a single unified script:

bash scripts/launch/predict.sh --ckpt <PATH> --prompt <TEXT> [OPTIONS]

Options

| Flag | Values | Default | Description |
|---|---|---|---|
| --ckpt | path | (required) | Path to the model checkpoint |
| --prompt | text | (required) | Text prompt (t2i) or editing instruction (edit) |
| --task | t2i, edit | t2i | Inference task |
| --variant | none_encoder, siglip_pixel, vae | none_encoder | Model variant: Tuna-2, Tuna-R, or Tuna |
| --size | 7b, 2b | 7b | Model size (2b only available for --variant vae) |
| --resolution | See table below | 512x512 | Output resolution (HxW) |
| --gpu | int | 0 | GPU device index |
| --image | path | (none) | Source image (required for --task edit) |
| --steps | int | 50 | Number of diffusion steps |
| --guidance | float | (from config) | Classifier-free guidance scale |
| --seed | int | 42 | Random seed |
| --negative | text | (from config) | Negative prompt |

Supported Resolutions

| 512-class | 1024-class |
|---|---|
| 512x512 | 1024x1024 |
| 448x576 | 896x1152 |
| 576x448 | 1152x896 |
| 384x672 | 768x1344 |
| 672x384 | 1344x768 |
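The constraints listed in the options and resolution tables (2b only with the vae variant, a source image required for editing, a fixed set of resolutions) can be checked before launching a run. The sketch below is a hypothetical wrapper, not part of the repository: `build_predict_command` is an invented name, and it assumes `--resolution` takes the `HxW` strings exactly as they appear in the table.

```python
import shlex

# Values copied from the options and resolution tables above.
VARIANTS = {"none_encoder", "siglip_pixel", "vae"}
RESOLUTIONS = {
    "512x512", "448x576", "576x448", "384x672", "672x384",
    "1024x1024", "896x1152", "1152x896", "768x1344", "1344x768",
}


def build_predict_command(ckpt, prompt, task="t2i", variant="none_encoder",
                          size="7b", resolution="512x512", image=None):
    """Hypothetical helper: validate options, then build the predict.sh call."""
    if task not in {"t2i", "edit"}:
        raise ValueError(f"unknown task: {task}")
    if variant not in VARIANTS:
        raise ValueError(f"unknown variant: {variant}")
    if size not in {"7b", "2b"}:
        raise ValueError(f"unknown size: {size}")
    if size == "2b" and variant != "vae":
        raise ValueError("size 2b is only available for --variant vae")
    if resolution not in RESOLUTIONS:
        raise ValueError(f"unsupported resolution: {resolution}")
    if task == "edit" and image is None:
        raise ValueError("--image is required for --task edit")
    args = [
        "bash", "scripts/launch/predict.sh",
        "--ckpt", ckpt, "--prompt", prompt,
        "--task", task, "--variant", variant,
        "--size", size, "--resolution", resolution,
    ]
    if image is not None:
        args += ["--image", image]
    # shlex.join quotes each argument so the prompt survives the shell intact.
    return shlex.join(args)


command = build_predict_command(
    "/path/to/tuna_2b.pt", "a red bicycle", variant="vae", size="2b"
)
print(command)
```

Building the argument list first and quoting it in one place avoids the usual problems with long prompts that contain quotes or commas.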

Examples

See assets/prompts.txt for sample prompts.

# Tuna-2 (7B, no encoder, 512px)
bash scripts/launch/predict.sh \
  --ckpt /path/to/tuna_2_pixel_7b.pt \
  --prompt "A highly realistic beauty portrait in extreme close-up, showing the face of a young woman from just above the eyebrows down to the lips. Her skin is natural, luminous, and textured, with visible pores, fine facial hairs, subtle unevenness, and a slightly dewy finish, without heavy retouching or artificial smoothing."

# Tuna (2B, VAE latent, 512px)
bash scripts/launch/predict.sh \
  --variant vae --size 2b \
  --ckpt /path/to/tuna_2b.pt \
  --prompt "A brutally realistic cinematic close-up inside a real space station cupola, side profile of a blonde female astronaut floating in zero gravity beside the window, her loose braid drifting naturally, looking out at Earth in silence."

Video

Due to policy constraints, we are unable to release the video generation model at this time. However, we provide the complete video training and inference codebase. If you are interested in training your own video model, this is a ready-to-use starting point — see configs/train/video_t2v.yaml for training configuration and configs/predict/t2v_2b.yaml for inference.

TODO

- Release some of the Tuna-2 model weights.

- Release some of the Tuna model weights.

- Release the fully restored model weights (fine-tuned on external data to recover the missing layers).

A Note on Model Release

Due to organizational policy constraints, we are unable to release the full production-trained model weights. To support the research community, we plan to release a foundation checkpoint with a small number of layers removed from both the LLM backbone and the diffusion head (flow head). The remaining layers and all other components (vision encoder, projections, embeddings, etc.) are fully preserved. With a short fine-tuning pass on your own data, the removed layers can be quickly re-learned and the model restored to full quality.

For detailed fine-tuning instructions, please refer to the training guide.

Meanwhile, we are also actively working on fine-tuning the removed layers using external data, and plan to release the complete weights as soon as possible.

Citation

@article{tuna2,
  title={TUNA-2: Pixel Embeddings Beat Vision Encoders for Unified Understanding and Generation},
  author={Liu, Zhiheng and Ren, Weiming and Huang, Xiaoke and Chen, Shoufa and Li, Tianhong and Chen, Mengzhao and Ji, Yatai and He, Sen and Schult, Jonas and Xiang, Tao and Chen, Wenhu and Luo, Ping and Zettlemoyer, Luke and Cong, Yuren},
  journal={arXiv preprint arXiv:2604.24763},
  year={2026}
}

@article{liu2025tuna,
  title={Tuna: Taming unified visual representations for native unified multimodal models},
  author={Liu, Zhiheng and Ren, Weiming and Liu, Haozhe and Zhou, Zijian and Chen, Shoufa and Qiu, Haonan and Huang, Xiaoke and An, Zhaochong and Yang, Fanny and Patel, Aditya and others},
  journal={CVPR2026},
  year={2026}
}

License

This project is licensed under the Apache License 2.0. See LICENSE for details.

Consumer AI products like ChatGPT struggle with monetization despite viral growth because users cap out at $20/month subscriptions while enterprise AI revenue expands through higher per-user spending.

Decoder

- ARPU: Average Revenue Per User, a key business metric measuring how much revenue each customer generates

- Retention curve: A graph showing what percentage of users continue using a product over time; ChatGPT's "smile" curve indicated high sustained engagement

- Gross vs. net retention: Gross retention tracks whether users stay; net retention includes revenue expansion from existing users spending more over time

Original article

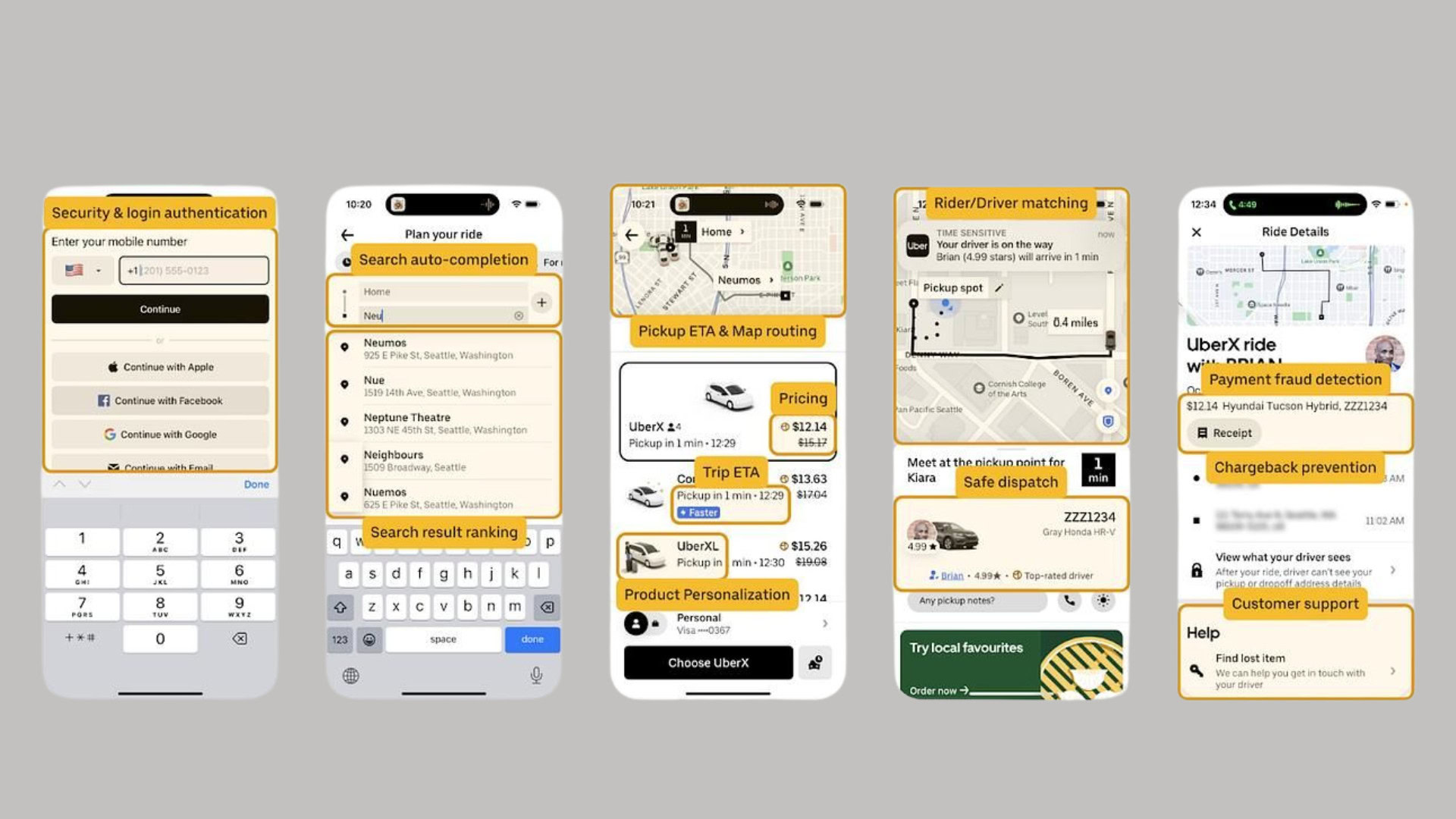

Research shows frontier AI models are post-trained for specific tool harnesses: Claude Opus 4.6 scored 4.5 percentage points higher with the right framework, and harness choice alone moved Cursor from the top 30 to the top 5 in benchmarks.

Deep dive

- Terminal-Bench 2.0 data demonstrates measurable impact: Claude Opus 4.6 achieved 79.8% accuracy with ForgeCode versus 75.3% with Capy, a 4.5 percentage point difference from harness choice alone

- Cursor's jump from top 30 to top 5 ranking was achieved solely by changing the harness, without any model improvements

- OpenAI models default to patch-based file editing approaches while Anthropic models prefer string replacement methods

- Harness mismatches force models to spend reasoning tokens adapting their output format instead of solving the actual problem

- Post-training against specific harnesses embeds tool names, schemas, citation tag formats, memory rituals, and system prompt structures directly into model weights

- This suggests frontier labs are optimizing models for their own tooling ecosystems, creating lock-in effects

- The research covers three major CLI tools: Codex CLI, Claude Code, and GitHub Copilot CLI

- Harness design choices include how tools are invoked, how context is structured, and how outputs are formatted

- The findings challenge the assumption that models are general-purpose and perform consistently across different integration layers

- For developers, this means harness selection is a first-order concern, not just an implementation detail
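To make the difference between the two editing styles mentioned above concrete, here is a minimal sketch of each. These are simplified illustrations, not the actual tool schemas used by Codex CLI, Claude Code, or any other harness: `apply_str_replace`, `apply_line_patch`, and the hunk format are hypothetical.

```python
def apply_str_replace(text: str, old: str, new: str) -> str:
    """String-replacement edit: `old` must match exactly once."""
    count = text.count(old)
    if count != 1:
        raise ValueError(f"expected exactly one match for old string, found {count}")
    return text.replace(old, new, 1)


def apply_line_patch(text: str, hunks: list) -> str:
    """Patch-style edit: each hunk is (start_line, old_lines, new_lines), 1-indexed.

    Hunks are applied bottom-up so earlier line numbers stay valid.
    """
    lines = text.split("\n")
    for start, old_lines, new_lines in sorted(hunks, key=lambda h: h[0], reverse=True):
        begin = start - 1
        if lines[begin:begin + len(old_lines)] != old_lines:
            raise ValueError(f"hunk at line {start} does not match the file")
        lines[begin:begin + len(old_lines)] = new_lines
    return "\n".join(lines)


source = "def area(r):\n    return 3.14 * r * r\n"

# The same change expressed in each style.
via_replace = apply_str_replace(source, "3.14", "math.pi")
via_patch = apply_line_patch(
    source,
    [(2, ["    return 3.14 * r * r"], ["    return math.pi * r * r"])],
)
print(via_replace == via_patch)
```

Both produce the same file, but they ask the model for very different output: a unique snippet to find and swap in one case, and line positions plus exact surrounding context in the other. A model trained mostly on one format has to spend effort translating when the harness expects the other.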

Decoder

- Harness: The integration layer or framework that wraps an AI model, defining how it receives inputs, formats outputs, and interacts with tools

- Post-training: Additional training applied to base models to optimize them for specific use cases, tools, or formats after initial pre-training

- Terminal-Bench: A benchmark for evaluating AI models' performance on command-line and terminal-based coding tasks

- ForgeCode/Capy: Different harness frameworks used in the benchmark comparisons

- Reasoning tokens: The computational budget models spend on internal processing and problem-solving, which can be wasted on format adaptation instead of the core task

- Citation tags: Specific markup formats models use to reference sources or indicate tool usage in their outputs

- Memory rituals: Patterns for how models maintain and reference context across interactions


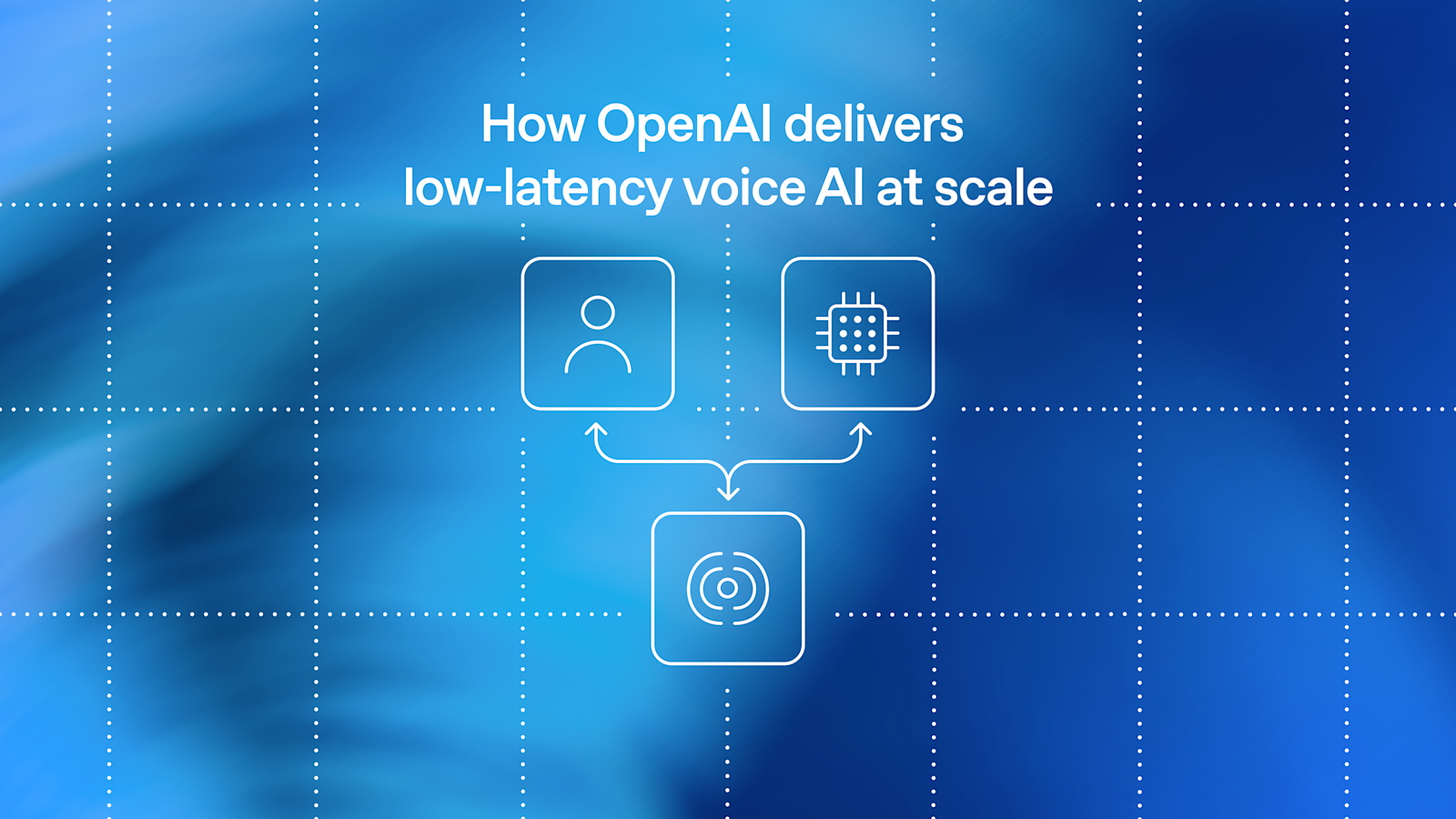

Powering the Inference Era: Inside the DigitalOcean AI-Native Cloud (7 minute read)

DigitalOcean launched an AI-Native Cloud platform with five integrated layers from silicon to agent runtime, designed specifically for inference and agentic workloads rather than traditional SaaS applications.

Deep dive

- DigitalOcean's five-layer stack integrates Infrastructure (owned data centers and GPUs), Core Cloud (compute and networking), Inference Engine (model serving), Data & Learning (databases and vector stores), and Managed Agents (production runtime for agentic workloads) into a single platform

- The Inference Router is a preference-aware control plane that automatically selects the optimal model for each request based on cost, latency, and quality metrics, running on a small language model that resolves intent in 200ms. One customer (Celiums.AI) shifted 83% of traffic to open models and cut per-token costs by 61%

- DigitalOcean owns its silicon infrastructure across 19 data centers with NVIDIA HGX B300 and AMD Instinct MI350X GPUs, plus liquid-cooled racks for high-density workloads, rather than reselling hyperscaler capacity

- Achieved fastest inference for Qwen 3.5 and DeepSeek V3.2 in independent Artificial Analysis benchmarks through kernel-level co-engineering with NVIDIA and AMD

- MicroVM Droplets based on Firecracker start in roughly 200 milliseconds and are designed for agent sandboxes that need quick burst capacity for code execution between GPU inference calls

- Managed Agents runtime separates agent orchestration from business logic with five primitives: open harness support (LangGraph, CrewAI, OpenCode), E2B-compatible sandboxes, durable state management, the Plano orchestration framework (Apache 2.0), and Model Context Protocol integration

- Knowledge Bases feature automatically exposes every managed retrieval system as an MCP tool by default, enabling agents to query data sources with grounded, cited answers

- Batch Inference for asynchronous workloads like document processing and eval runs costs roughly 50% of peak serverless pricing

- PostgreSQL and MySQL Advanced Editions now scale to 50 TiB capacity with 1 TiB increments, proxy-based failover in seconds, and 100+ observability metrics

- Production customer examples include Workato running a trillion automation tasks at 67% lower cost, Character.AI handling over a billion queries daily at 2x throughput, and Hippocratic AI powering 20M+ patient interactions with 40% lower latency

- Platform runs on open-source foundation including PostgreSQL, MySQL, MongoDB, Valkey, OpenSearch, Kafka, Weaviate, vLLM, and SGLang rather than proprietary services

- The integrated stack eliminates egress costs and margin stacking from using multiple vendors, as inference, data, compute, and agents run in the same VPC on the same silicon

- Announced 15 products at Deploy 2026 with features ranging from general availability (Serverless Inference, BYOM, Managed Agents) to public preview (Inference Router, Evaluations, Advanced Edition databases) to private preview (Burstable CPU, Managed Weaviate)
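The idea of preference-aware routing described above, choosing a model per request by trading off cost, latency, and quality, can be sketched as a weighted score. This is only an illustration of the general technique and not DigitalOcean's Inference Router, which is described as running on a small language model. The model names, prices, latencies, quality scores, and the `route` function are all made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost_per_mtok: float  # USD per million tokens (made-up figure)
    latency_ms: float     # typical time to first token (made-up figure)
    quality: float        # 0..1 score from some offline eval (made-up figure)


def route(models, weights, min_quality=0.0):
    """Pick the model with the best weighted score.

    Cost and latency are normalized against the worst eligible candidate so
    all three terms fall in 0..1; lower cost/latency and higher quality win.
    """
    eligible = [m for m in models if m.quality >= min_quality]
    if not eligible:
        raise ValueError("no model meets the quality floor")
    max_cost = max(m.cost_per_mtok for m in eligible)
    max_latency = max(m.latency_ms for m in eligible)

    def score(m):
        return (
            weights["cost"] * (1 - m.cost_per_mtok / max_cost)
            + weights["latency"] * (1 - m.latency_ms / max_latency)
            + weights["quality"] * m.quality
        )

    return max(eligible, key=score)


catalog = [
    ModelProfile("open-small", cost_per_mtok=0.2, latency_ms=150, quality=0.70),
    ModelProfile("open-large", cost_per_mtok=1.0, latency_ms=400, quality=0.85),
    ModelProfile("frontier", cost_per_mtok=10.0, latency_ms=800, quality=0.95),
]

# A cost-sensitive caller versus one that insists on a quality floor.
cheap = route(catalog, {"cost": 0.6, "latency": 0.3, "quality": 0.1})
strict = route(catalog, {"cost": 0.1, "latency": 0.1, "quality": 0.8}, min_quality=0.9)
print(cheap.name, strict.name)
```

Expressing preferences as weights plus a hard quality floor keeps the policy outside application code, which is the property the "no code changes" claim depends on: callers change preferences, not which endpoint they call.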

Decoder

- RDMA: Remote Direct Memory Access, a networking technology that allows high-speed data transfer between servers with minimal CPU involvement, critical for GPU cluster communication

- vLLM: an open-source library for fast large language model inference and serving, optimized for throughput

- SGLang: Structured Generation Language, a framework for efficient LLM serving with structured outputs

- MCP (Model Context Protocol): a standard protocol that allows AI agents to access external data sources and tools in a consistent way

- Firecracker: a lightweight virtual machine manager from Amazon used to create secure, fast-starting microVMs for containerized workloads

- Vector store: a specialized database that stores high-dimensional embeddings for semantic search and retrieval-augmented generation (RAG)

- Knowledge Bases: managed systems that combine document storage, embedding generation, and retrieval to provide LLMs with domain-specific context

- Agentic workloads: AI systems that operate in loops, making decisions, taking actions, and adjusting based on feedback rather than processing single request-response cycles

Original article

Powering the Inference Era: Inside the DigitalOcean AI-Native Cloud

I've spent the last fifteen years building cloud services: early days of AWS building S3 and EBS, helping launch Oracle Cloud Infrastructure from inception, and now building the agentic cloud at DigitalOcean for AI-natives. Every cloud I've worked on was designed for the workloads of its era. Those clouds were built for human-centric SaaS applications: a few users, a handful of requests per session, predictable data flows.

AI workloads break every one of those assumptions.

AI runs in loops. Agents think, then act, then think again. A single user task can span hundreds of thousands of tokens, traverse half a dozen tools, hit a knowledge base, write code, execute it, and persist state, all before returning an answer. The clouds we have weren't built for this. Hyperscalers give you hundreds of services built for yesterday's applications, and leave the integration to you. Inference-only providers sit on someone else's compute and stack their margin on top. GPU rental shops (frequently referred to as "Neoclouds") give you silicon, but not a system.

This week at Deploy 2026, we launched the DigitalOcean AI-Native Cloud, a purpose-built platform for the inference and agentic era that integrates five layers from silicon to agents into a single open stack.

We shipped fifteen products on Tuesday. Here's what's inside.

The shape of the stack

Our AI-Native Cloud is composed of five layers, each addressing a real workload pattern we've watched our customers wrestle with.

They're independently useful and beautifully integrated:

- Managed Agents: production runtime for agents, with sandboxes, durable state, and a universal data plane

- Data & Learning: managed databases, vector stores, knowledge bases, and feedback loops

- Inference Engine: every open and frontier model on one endpoint, optimized at the kernel

- Core Cloud: compute, networking, and storage primitives, tuned for AI

- Infrastructure: DigitalOcean-owned silicon and facilities, co-engineered with the industry's best

Open source isn't an add-on at any of these layers. It's the foundation: PostgreSQL, MySQL, MongoDB, Valkey, OpenSearch, Kafka, Weaviate, vLLM, SGLang, OpenCode, LangGraph, CrewAI. Open all the way down. You bring your weights, your harness, your tools. We provide the runtime.

Let me walk through it, from the ground up.

Infrastructure: own the silicon, own the economics

Our global footprint now spans 19 data centers and 200+ network points of presence, with future capacity coming online in Kansas City and Memphis. That includes our first liquid-cooled racks, purpose-built for next-generation high-density GPU workloads.

Our Richmond data center is now generally available, with NVIDIA HGX™ B300 and AMD Instinct™ MI350X GPUs available alongside the H100, H200, and MI300/MI325 silicon already running across our fleet. We co-engineer at the kernel level with both NVIDIA and AMD. We don't rent capacity. We own it. That's why your unit economics improve as you scale on us, instead of getting worse.

Core Cloud: the foundation under every agent

Hundreds of thousands of customers already run on our core cloud every day: Droplets, Kubernetes (DOKS), VPC networking, and object/block/network file storage. We've extended it for AI workloads with a non-blocking RDMA fabric, RDMA-enabled NFS, and VPC-native inference out of the box.

At Deploy we announced Burstable CPU and MicroVM Droplets, currently in Private Preview. These are Firecracker-based instances that start in roughly 200 milliseconds, ideal for agent sandboxes and lightweight, spiky workloads. Agents need GPUs for thinking and CPUs for doing. We have both, and now they're sized for how agents actually behave.

Inference Engine: every model, one endpoint

This is the layer we've rebuilt from the ground up. We co-developed it with design partners like Hippocratic AI, and the result is one of the highest-performing inference engines on the market today: fastest inference for Qwen 3.5 and DeepSeek V3.2 in independent Artificial Analysis benchmarks for token throughput.

Here's what's new:

- Inference Router (Public Preview): a preference-aware control plane that picks the right model for each request, balancing cost, latency, and quality with no code changes

- Dedicated Inference (General Availability): reserved capacity with predictable performance and economics for production workloads

- Bring Your Own Model (BYOM) (General Availability): host your fine-tunes on our serving stack and inherit the kernel-level optimizations

- Multi-modal model support (General Availability): text, vision, audio, and video on a single API

- Batch Inference (General Availability): purpose-built for asynchronous workloads (document processing, eval runs, synthetic data generation) at roughly 50% of peak serverless pricing

- Content Safety Guardrails (General Availability): policy controls integrated at the inference layer

- Serverless Inference with multi-modal support (General Availability): single API, scale to zero, pay only for tokens consumed

- Evaluations (Public Preview): automated scoring against golden datasets or built-in judge models, so you can swap models without flying blind
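To make the Evaluations item concrete, here is a minimal sketch of scoring two models against a golden dataset before swapping one for the other. It is not DigitalOcean's API: the dataset, the two stub models, the exact-match metric, and the `max_regression` threshold are all hypothetical, chosen only to show the shape of the check.

```python
def exact_match_score(model, golden):
    """Fraction of golden examples the model answers exactly (case-insensitive)."""
    hits = 0
    for prompt, expected in golden:
        if model(prompt).strip().lower() == expected.strip().lower():
            hits += 1
    return hits / len(golden)


# Hypothetical golden dataset: (prompt, expected answer) pairs.
GOLDEN = [
    ("capital of France?", "Paris"),
    ("2 + 2?", "4"),
    ("opposite of hot?", "cold"),
    ("largest planet?", "Jupiter"),
]


# Stand-ins for real model calls; a real harness would call an inference API here.
def current_model(prompt):
    answers = {"capital of France?": "Paris", "2 + 2?": "4",
               "opposite of hot?": "cold", "largest planet?": "Jupiter"}
    return answers.get(prompt, "")


def candidate_model(prompt):
    answers = {"capital of France?": "paris", "2 + 2?": "4",
               "opposite of hot?": "cold", "largest planet?": "Saturn"}
    return answers.get(prompt, "")


def safe_to_swap(current, candidate, golden, max_regression=0.05):
    """Allow the swap only if the candidate loses at most max_regression accuracy."""
    baseline = exact_match_score(current, golden)
    return exact_match_score(candidate, golden) >= baseline - max_regression


print(exact_match_score(current_model, GOLDEN))
print(exact_match_score(candidate_model, GOLDEN))
print(safe_to_swap(current_model, candidate_model, GOLDEN))
```

Exact match is the simplest possible scorer. A judge-model scorer would replace `exact_match_score` and leave the swap gate unchanged.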

The Router deserves a closer look. It's a preference-aware control plane that picks the best model for each request, balancing cost, latency, and quality without touching application code. Unlike static routing rules, it runs on a purpose-built small language model that resolves intent in 200 milliseconds and ranks candidates against live cost and latency data, so the right model wins at 2am and at 2pm. Most AI builders start on a single frontier model. Then product-market fit happens, the bill scales linearly with usage, and the unit economics get painful fast. Most successful AI natives we work with run three or more models in production. The leading edge is running twenty or more. The Router makes that possible without a rewrite.
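The ranking step described above can be sketched as a weighted score over cost, latency, and quality. This is an illustration only, not the Router's implementation: the real Router uses a small language model to resolve intent, which is not modeled here, and the model names, prices, latencies, quality scores, and preference weights below are all made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str               # hypothetical model identifier
    cost_per_mtok: float    # USD per million tokens (made-up number)
    p95_latency_ms: float   # p95 latency in milliseconds (made-up number)
    quality: float          # offline quality score in [0, 1] (made-up number)


@dataclass(frozen=True)
class Preference:
    cost: float
    latency: float
    quality: float


def _normalize(values):
    """Scale values to [0, 1]; a constant list maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def rank(candidates, pref):
    """Return candidates sorted best-first by a weighted preference score."""
    cost = _normalize([c.cost_per_mtok for c in candidates])
    latency = _normalize([c.p95_latency_ms for c in candidates])
    quality = _normalize([c.quality for c in candidates])
    scored = []
    for i, c in enumerate(candidates):
        # Lower cost and latency are better, so those terms are inverted.
        score = (pref.cost * (1.0 - cost[i])
                 + pref.latency * (1.0 - latency[i])
                 + pref.quality * quality[i])
        scored.append((score, c))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored]


CANDIDATES = [
    Candidate("frontier-large", cost_per_mtok=10.0, p95_latency_ms=900.0, quality=0.95),
    Candidate("open-medium", cost_per_mtok=1.0, p95_latency_ms=350.0, quality=0.85),
    Candidate("open-small", cost_per_mtok=0.2, p95_latency_ms=150.0, quality=0.60),
]

cost_sensitive = Preference(cost=0.5, latency=0.3, quality=0.2)
quality_first = Preference(cost=0.05, latency=0.05, quality=0.9)

print(rank(CANDIDATES, cost_sensitive)[0].name)
print(rank(CANDIDATES, quality_first)[0].name)
```

With these numbers the cost-sensitive preference picks the mid-sized open model and the quality-first preference picks the frontier model. Because the scores are recomputed from whatever cost and latency figures are current, the winner can change as those figures move.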

Take Celiums.AI: across 29.2M tokens processed through the Inference Router, 83% of their traffic now lands on open-source models, up from zero.

"Our AI Ethics Engine was built with open-source AI, so running it on closed-source models felt backwards. DigitalOcean's Inference Router closed the loop: we swapped frontier closed-source models for open alternatives and cut per-token cost by 61% while pulling p95 latency under 400ms. Same API. Zero code changes. The Router routes to the optimal model on every request. We just build."

— Mario Gutiérrez CTO at Unity Financial Network and Founder of Celiums.AI

We also expanded the Model Catalog with over 25 new models, including:

- NVIDIA Nemotron 3 Nano Omni

- DeepSeek V3.2

- Llama 3.3 70B

- Qwen 3.5

- MiniMax-M2.5

Data & Learning: AI-ready data, no rebuild required

Stateful agents need context, memory, and the ability to learn from what happens in production. The Data & Learning layer is built on the managed services tens of thousands of customers already trust, extended for how AI systems actually run.

What's new:

- Knowledge Bases (General Availability): managed retrieval with grounded, cited answers; every knowledge base is exposed as an MCP tool by default

- Learning & Feedback Loops (General Availability): capture production signals and route them back into model improvement, without a separate data pipeline

- Managed Weaviate (Private Preview): open-source vector store, fully managed

- PostgreSQL Advanced Edition and MySQL Advanced Edition (Public Preview): capacity to 50 TiB, 1 TiB scaling in minutes, proxy-based failover in seconds, and 100+ observability metrics

Transactional databases remain the foundation for AI. We made them production-grade for the agentic era.

Managed Agents: a production runtime, not a monolith

This is the newest layer of the stack, and the one where we've spent the most time listening. We've watched customers deploy tens of thousands of agents on App Platform as containers. We've also watched them hit a wall when the agent loop, tool calls, state, observability, and code execution all live tangled together inside a single monolith.

So we asked a simple question: what would help you actually move faster? The answer became Managed Agents: five primitives that separate the plumbing from the business logic of your agent.

What's new:

- Managed Agents (General Availability): the production runtime

- Open Harness (General Availability): bring your own agent framework, including OpenCode, LangGraph, CrewAI, or any other harness

- Managed Sandboxes (General Availability): E2B-compatible, Firecracker-based, sub-second cold start for safe execution of model-generated code

- Durable State Management (General Availability): checkpoints and memory primitives the harness can rely on

- Plano (General Availability): our orchestration framework and data plane for agents, released under Apache 2.0

- Launchpad (General Availability): go from prototype to deployed agent in clicks

- Model Context Protocol (MCP) (General Availability): expanded support across the platform

- ToolBox (Coming Soon): 3,000+ tool connectors so your agents can act on the systems your business actually runs on
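To show what checkpoint-based durable state buys an agent loop, here is a minimal sketch in which a run crashes partway through and a second run resumes from the last checkpoint without repeating finished steps. It is not the Managed Agents API: the JSON file format, the function names, and the simulated crash are assumptions made for the example.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    """Write state atomically: write a temp file, then rename over the target."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)


def load_checkpoint(path):
    """Return the saved state, or a fresh one if no checkpoint exists."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "results": []}


def run_agent(path, steps, crash_at=None):
    """Run steps in order, checkpointing after each; resume from the last one."""
    state = load_checkpoint(path)
    while state["step"] < len(steps):
        if crash_at is not None and state["step"] == crash_at:
            raise RuntimeError("simulated crash")
        state["results"].append(steps[state["step"]]())
        state["step"] += 1
        save_checkpoint(path, state)
    return state["results"]


calls = []  # records which steps actually executed


def make_step(name):
    def step():
        calls.append(name)
        return name.upper()
    return step


STEPS = [make_step("plan"), make_step("search"), make_step("answer")]

workdir = tempfile.mkdtemp()
ckpt = os.path.join(workdir, "agent.json")
try:
    run_agent(ckpt, STEPS, crash_at=2)  # first run dies before the third step
except RuntimeError:
    pass
results = run_agent(ckpt, STEPS)        # second run resumes at the third step
print(results, calls)
```

Each step runs exactly once across both runs, which is what you want when a step is an expensive model call or a tool invocation with side effects.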

The compounding effect of the full stack

Any single layer of this stack is useful on its own. The reason to run them together is that the optimization compounds.

When your agents, your inference, your data, and your compute live in the same VPC, on the same silicon, billed on the same invoice, you eliminate the egress taxes, the margin stacking, and the integration debt that come from stitching across three vendors and three bills.

We've seen Workato run a trillion automation tasks at 67% lower cost, Character.AI handle over a billion queries a day at 2x inference throughput, and LawVo cut inference costs 42% with no code changes by routing through us. Hippocratic AI is powering 20M+ patient interactions with 40% lower latency. None of these are demos. They're production workloads at scale.

Start here. Scale here.

If you're an AI builder, whether you're writing your first line of code or accelerating past product-market fit, this stack is for you. You don't need to wait in a hyperscaler queue behind a frontier lab. You don't need to glue together a Neocloud, an inference wrapper, and a vector database vendor. You don't need to compromise on openness, on economics, or on developer experience.

Welcome to the AI-Native Cloud. Let's build.

Researchers achieve state-of-the-art image generation results by training the image tokenizer and generator jointly instead of separately.

Deep dive

- Autoregressive image models compress images into latent representations using visual tokenizers before generation, but traditional two-stage pipelines train tokenizers and generators separately

- This work proposes end-to-end joint optimization of both reconstruction quality and generation performance, enabling direct supervision signals from generation results to flow back to the tokenizer

- The approach leverages vision foundation models to improve 1D tokenizers specifically for autoregressive modeling tasks

- Achieved state-of-the-art FID score of 1.48 without guidance on ImageNet 256x256 generation benchmark

- The joint training contrasts with prior work where tokenizers were optimized purely for reconstruction without considering downstream generation performance

- Accepted to ICML 2026 as a Spotlight presentation, indicating significant contribution to the field

- The method addresses a key limitation where separately-trained tokenizers may learn representations that reconstruct well but don't support optimal generation

Decoder

- Autoregressive: A modeling approach that generates data sequentially, predicting each element based on previously generated elements

- Tokenizer: A component that converts images into discrete tokens or compact representations processable by generative models

- FID (Fréchet Inception Distance): A metric for evaluating image generation quality by comparing distributions of generated versus real images (lower is better)

- ImageNet: A large-scale image dataset commonly used as a benchmark for computer vision tasks

- Vision foundation models: Large pre-trained models like CLIP or DINOv2 that learn general visual representations from massive datasets

- 1D tokenizer: A tokenizer that converts 2D images into a one-dimensional sequence of tokens for sequential processing
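For reference, the standard definition of FID fits Gaussians to Inception-v3 features of real and generated images and measures the Fréchet distance between them. The symbols below are the usual ones and are not taken from the paper's abstract: $\mu_r, \Sigma_r$ are the mean and covariance of features from real images, and $\mu_g, \Sigma_g$ are those of generated images.

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left(\Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2}\right)
$$

The score is zero when the two feature distributions have identical means and covariances, which is why lower is better.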

Original article

End-to-End Autoregressive Image Generation with 1D Semantic Tokenizer

Authors: Wenda Chu, Bingliang Zhang, Jiaqi Han, Yizhuo Li, Linjie Yang, Yisong Yue, Qiushan Guo

Autoregressive image modeling relies on visual tokenizers to compress images into compact latent representations. We design an end-to-end training pipeline that jointly optimizes reconstruction and generation, enabling direct supervision from generation results to the tokenizer. This contrasts with prior two-stage approaches that train tokenizers and generative models separately. We further investigate leveraging vision foundation models to improve 1D tokenizers for autoregressive modeling. Our autoregressive generative model achieves strong empirical results, including a state-of-the-art FID score of 1.48 without guidance on ImageNet 256x256 generation.

Researchers from UC Berkeley, Google DeepMind, and other institutions investigate how large language models are subtly changing written language with potential effects on cultural institutions.

Original article

How LLMs Distort Our Written Language

White House Considers Vetting AI Models Before They Are Released (10 minute read)

The Trump administration is exploring an executive order that could require AI models to undergo government vetting before public release.

Original article

The Trump administration is discussing a potential executive order to create an AI working group that would bring together tech executives and government officials to examine potential oversight procedures.

Elon Musk Megatrial Kicks Off Second Week With Scrutiny of OpenAI Exec's Finances (8 minute read)

The Elon Musk versus OpenAI trial continued with OpenAI president Greg Brockman testifying about settlement talks where Musk threatened reputational damage.

Original article

OpenAI president Greg Brockman took the stand on Monday. Two days before the trial, Elon Musk had messaged Brockman to gauge his interest in settling the case. Brockman suggested that both sides drop their claims, but Musk responded by saying that Brockman and Sam Altman would be the most hated men in America by the end of the week. Musk's lawyers are attempting to paint Brockman as motivated by money at the expense of OpenAI's nonprofit mission.

Amazon Built a Massive Supply Chain for Itself. Now It's for Hire (7 minute read)

Amazon is opening its logistics infrastructure as a service, aiming to replicate AWS's success in the $1.3 trillion third-party logistics market.

Original article

Amazon has launched Amazon Supply Chain Services, a centralized place for companies to hire Amazon for services such as fulfillment, ocean and air shipping, and truck transportation. The offering puts Amazon in competition with transportation and warehousing giants such as DSV and DHL. The global market for third-party logistics is estimated to be more than $1.3 trillion. The move is a bet that Amazon can do for logistics what Amazon Web Services did for cloud computing.