Cursor and SpaceX: In search of a complete loop (13 minute read)

Cursor and SpaceX announced a co-development deal where SpaceX can acquire the coding AI company for $60B or pay $10B to continue collaboration, combining Cursor's product expertise with SpaceX's massive compute resources to compete with frontier labs.

What: Cursor (an AI coding assistant with a $2B revenue run rate) and SpaceX/xAI entered an agreement to jointly develop coding and knowledge agent models, with SpaceX holding a call option to acquire Cursor for $60B later this year or pay $10B instead. The deal pairs Cursor's proven coding product and research team with SpaceX's underutilized datacenter capacity and compute resources.

Why it matters: This represents the first credible attempt by two sub-frontier AI labs to combine into a frontier-tier competitor, validating the thesis that leading AI labs must own both the product layer (to gather training data and user feedback) and the compute layer (to train state-of-the-art models). Cursor was losing ground to Anthropic's Claude Code and OpenAI's Codex despite strong revenue, while xAI lost its research leadership after merging into SpaceX and couldn't bootstrap a coding product from scratch.

Takeaway: Watch whether this call-option acquisition structure becomes a template for future AI company acquisitions, as it solves the problem of keeping acquired teams motivated while giving both parties time to prove their valuations before closing.

Deep dive

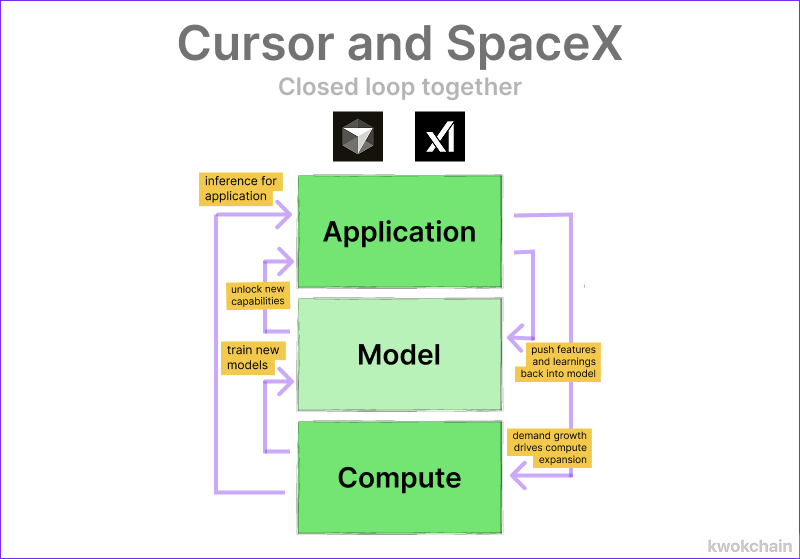

- The core thesis is that top AI labs need both model training capabilities (compute, capital) and product surfaces (user data, feedback loops) to compound improvements, and neither Cursor nor SpaceX has both alone

- Cursor pioneered AI coding assistants and reached a $2B revenue run rate, but Anthropic's Claude Code and OpenAI's Codex have now overtaken it because those labs control their own model training

- Cursor attempted to build its own models (Composer 1 with post-training, Composer 2 with extended pre-training, now pre-training from scratch) but lacks the billions needed for state-of-the-art compute

- xAI merged into SpaceX but has seen complete turnover of research leadership and lacks direction despite having perhaps the cheapest cost of compute in the industry from building massive underutilized datacenters

- The deal is structured as a call option rather than immediate acquisition primarily to avoid complicating SpaceX's ongoing IPO process, which would require folding the acquisition into registration documents

- The structure also allows both sides to vindicate their valuations over the year: SpaceX believes it will double in value post-IPO, and Cursor believes it will prove it can train state-of-the-art models with SpaceX resources

- The delayed acquisition timeline solves a problem with AI acquihires where teams often coast or leave once they're liquid, since Cursor must keep performing as an independent company that might not be acquired

- Even if the acquisition doesn't happen, Cursor gets $10B dilution-free plus access to compute to train at a scale it couldn't otherwise afford, a better outcome than raising $2B at a $50B valuation

- SpaceX gets immediate access to a proven coding product and research team for $10B, cheaper than the combined cost of recruiting top talent and spending compute to bootstrap from scratch

- Coding is seen as the critical path to general agents because it creates a compounding loop where product complexity today becomes model simplicity tomorrow through training on real usage patterns

- The article notes OpenAI would have been the natural partner for Cursor (shared investor in Thrive) but didn't appreciate coding agents' importance soon enough to pay what Cursor wanted
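The comparison between the $10B dilution-free payment and a $2B raise at a $50B valuation can be made concrete with a little arithmetic. The sketch below is only illustrative: it assumes the $50B figure is a post-money valuation (the summary does not say), and the function name `dilution_from_raise` is a hypothetical helper, not something from the article.

```python
def dilution_from_raise(amount_raised: float, post_money_valuation: float) -> float:
    """Fraction of the company sold to new investors in a priced equity round."""
    return amount_raised / post_money_valuation


# Hypothetical equity round from the comparison: $2B at a $50B valuation.
# Assumption: $50B is post-money; the source does not specify.
raise_dilution = dilution_from_raise(2e9, 50e9)

# The $10B payment is described as dilution-free, so no new shares are issued.
payment_dilution = 0.0

print(f"Equity raise:   $2B for {raise_dilution:.1%} of the company")
print(f"Option payment: $10B for {payment_dilution:.1%} of the company")
```

Under that assumption, the equity round would bring in one fifth of the cash while giving up roughly 4% of the company, which is the sense in which the summary calls the $10B outcome better.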

Decoder

- Frontier lab: The top tier of AI research organizations (OpenAI, Anthropic, DeepMind, etc.) with the resources and capability to train state-of-the-art models from scratch

- Harness: The product infrastructure and tooling that wraps around a model to make it useful, collecting data on failures and edge cases to improve future model versions

- Post-training: Fine-tuning an existing pre-trained model on specific tasks or data, cheaper and faster than training from scratch

- Pre-training: Training a model from scratch on massive datasets, requiring billions in compute and taking months

- SOTA: State of the art, the current best performance in a given domain

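- HALO: Short for "hire and license out," a deal in which a buyer hires a startup's key employees and licenses its technology instead of acquiring the company outright, a structure focused on getting the team rather than the product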

- Inference scaling: Using more compute at runtime to improve model outputs, as opposed to training-time compute

- Codex: OpenAI's AI coding product/assistant

- Claude Code: Anthropic's AI coding product; Anthropic was the first major lab to recognize the importance of owning both the model and product layers in coding

Original article

Being the top lab in coding means owning both the compute to train new models and capabilities and the product to recursively inform that process. Combined, Cursor and SpaceX can complete that loop. That's why they entered into an agreement to co-develop coding and knowledge agent models. This is the first deal where two sub-frontier labs plausibly combine into a frontier contender.